Bayesian Multi-Objective Optimization of Reaction Conditions: A Modern Framework for Accelerating Drug Discovery and Process Chemistry


Abstract

This article provides a comprehensive guide to Bayesian multi-objective optimization (MOBO) for reaction condition screening, tailored for researchers in drug development and synthetic chemistry. We first establish the foundational principles, explaining why traditional one-factor-at-a-time methods fail for complex, competing objectives like yield, purity, cost, and sustainability. Next, we detail the core methodological workflow, from defining the design space and acquisition functions to implementing algorithms like Expected Hypervolume Improvement (EHVI). We then address common experimental and computational challenges, offering troubleshooting strategies for noisy data, constraint handling, and computational cost. Finally, we validate the approach through comparative analysis against alternative optimization methods, showcasing its superior efficiency in real-world case studies from pharmaceutical development. The conclusion synthesizes key takeaways and discusses future implications for high-throughput experimentation and autonomous laboratories.

Beyond Trial-and-Error: Foundational Principles of Bayesian MOBO for Complex Reaction Optimization

In the optimization of chemical reactions, particularly in pharmaceutical development, traditional One-Factor-At-a-Time (OFAT) and classical Design of Experiments (DoE) approaches are increasingly inadequate for modern multi-objective problems. These problems require simultaneous optimization of yield, purity, cost, environmental impact, and throughput, objectives that are often in direct conflict. Bayesian multi-objective optimization provides a probabilistic framework for efficiently navigating complex trade-off spaces, which makes it a compelling paradigm for reaction condition research.

Limitations of Traditional Methods: A Quantitative Analysis

Table 1: Comparative Performance in a Simulated Reaction Optimization

| Method | Number of Experiments to Reach 90% Optimal Yield | Purity at Optimal Yield (%) | Estimated Cost of Experimental Campaign ($K) | Probability of Finding True Pareto Front |
|---|---|---|---|---|
| OFAT | 145 | 88.5 | 72.5 | <10% |
| Classical DoE (Central Composite) | 62 | 92.1 | 31.0 | ~35% |
| Bayesian Multi-Objective | 28 | 94.7 | 14.0 | >85% |

Note: Simulated data for a model Suzuki-Miyaura cross-coupling with three objectives: maximize yield, maximize purity (minimize side-products), and minimize catalyst loading. The Bayesian method uses Expected Hypervolume Improvement (EHVI) as the acquisition function.

Table 2: Real-World Case Study - API Step Optimization

| Optimization Aspect | OFAT Result | DoE (Response Surface) Result | Bayesian Multi-Objective Result |
|---|---|---|---|
| Final Yield | 76% | 82% | 89% |
| Number of Impurities >0.1% | 3 | 2 | 1 |
| Process Mass Intensity (PMI) | 58 | 42 | 29 |
| Total Optimization Runs | 96 | 45 | 32 |
| Identified Critical Interactions | None | 2 (Temp × Time) | 4 (including non-linear catalyst-solvent) |

Bayesian Multi-Objective Optimization: Core Protocol

Protocol 3.1: Defining the Multi-Objective Problem Space

- Objective Selection: Define 2-4 primary objectives (e.g., Yield, Purity, Cost, E-factor). Formulate each as a mathematical function f_i(x), where x is the vector of reaction parameters.

- Parameter Bounds: Define feasible ranges for all continuous (e.g., temperature: 25-150°C) and discrete (e.g., solvent: {THF, DMSO, MeCN}) variables.

- Constraint Specification: Define hard constraints (e.g., pressure < 10 bar, exclusion of genotoxic solvents) and soft constraints to be handled through penalty functions.

- Pareto Front Initialization: Conduct a space-filling design (e.g., Latin Hypercube) of 5-10 initial experiments to seed the model.

Protocol 3.2: Building the Probabilistic Surrogate Model

- Model Choice: Select Gaussian Process (GP) regression for continuous objectives. Use a Matérn 5/2 kernel for its flexibility.

- Training: For n initial data points D_n = {(x_i, y_i)}, i = 1, …, n, train an independent GP model for each objective j. Its posterior prediction at x is Gaussian: f_j(x) ~ N(μ_j(x), σ_j²(x)).

- Hyperparameter Tuning: Optimize the kernel length scales and noise variance either by maximizing the log marginal likelihood or by sampling them with Markov Chain Monte Carlo (MCMC).
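Below is a minimal NumPy sketch of the GP step. It makes several simplifying assumptions: inputs are already scaled to [0, 1], each objective is centered, and one length scale is shared across inputs. A coarse grid search over a few hypothetical length scales stands in for full marginal-likelihood optimization, and the toy objectives are invented for illustration.

```python
import numpy as np

def matern52(A, B, lengthscale=1.0, variance=1.0):
    """Matérn 5/2 kernel between point sets A (n x d) and B (m x d)."""
    diff = (A[:, None, :] - B[None, :, :]) / lengthscale
    s = np.sqrt(5.0) * np.sqrt(np.sum(diff**2, axis=-1))  # sqrt(5) * r / lengthscale
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def _cholesky_solve(X, y, lengthscale, variance, noise):
    """Cholesky factor L of K + noise*I and alpha = (K + noise*I)^{-1} y."""
    K = matern52(X, X, lengthscale, variance) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return L, alpha

def gp_posterior(X, y, X_new, lengthscale=1.0, variance=1.0, noise=1e-2):
    """Posterior mean and latent-function variance of a zero-mean GP at X_new."""
    L, alpha = _cholesky_solve(X, y, lengthscale, variance, noise)
    K_s = matern52(X, X_new, lengthscale, variance)       # n x m cross-covariance
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = variance - np.sum(v**2, axis=0)                 # k(x*, x*) = variance for Matérn
    return mean, np.maximum(var, 0.0)

def log_marginal_likelihood(X, y, lengthscale, variance=1.0, noise=1e-2):
    """log p(y | X) = -1/2 y^T K^{-1} y - 1/2 log|K| - n/2 log(2 pi)."""
    L, alpha = _cholesky_solve(X, y, lengthscale, variance, noise)
    return (-0.5 * y @ alpha - np.sum(np.log(np.diag(L)))
            - 0.5 * len(X) * np.log(2.0 * np.pi))

# Hypothetical data: 8 scaled reaction conditions, two toy objectives
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(8, 2))
Y = np.column_stack([np.sin(3.0 * X[:, 0]) + X[:, 1], np.cos(2.0 * X[:, 1])])
X_new = rng.uniform(0.0, 1.0, size=(5, 2))

predictions = []
for j in range(Y.shape[1]):                               # one independent GP per objective
    y_c = Y[:, j] - Y[:, j].mean()                        # center so the zero-mean prior is sensible
    ls = max([0.1, 0.3, 1.0, 3.0], key=lambda ell: log_marginal_likelihood(X, y_c, ell))
    mu, var = gp_posterior(X, y_c, X_new, lengthscale=ls)
    predictions.append((mu + Y[:, j].mean(), var))
```

The returned variance is for the latent function. Adding the noise variance gives the predictive variance of a new measurement. Libraries such as GPyTorch and BoTorch typically also fit per-dimension length scales and the noise level by gradient-based likelihood maximization.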

Protocol 3.3: Iterative Optimization Loop Using an Acquisition Function

- Calculate Pareto Front: From the current data D_n, identify the non-dominated solutions P_n.

- Evaluate Acquisition Function: Compute Expected Hypervolume Improvement (EHVI) across the parameter space X. EHVI measures the expected gain in the hypervolume dominated by P_n, where the hypervolume is measured relative to a fixed reference point in objective space:

> EHVI(x) = ∫ [H(P_n ∪ {y}) − H(P_n)] · p(y | x, D_n) dy

  Here H is the hypervolume and y is the predicted objective vector at x.

- Select Next Experiment: Find x* = argmax_{x ∈ X} EHVI(x) using a global optimizer (e.g., CMA-ES).

- Execute Experiment & Update: Run the reaction at conditions x*, measure the objectives y*, and augment the dataset: D_{n+1} = D_n ∪ {(x*, y*)}.

- Convergence Check: Terminate when the maximum EHVI falls below a threshold (e.g., <1% of the initial hypervolume) or when a predefined experimental budget is exhausted.
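The Pareto front, hypervolume and EHVI in this loop can be illustrated for two objectives that are both maximized. The sketch below is a simplification built on several assumptions:

- It uses the exact 2D hypervolume.
- It estimates EHVI by Monte Carlo under independent Gaussian predictions, rather than with the closed-form or batched estimators in dedicated libraries.
- It uses a user-chosen reference point.
- It omits the outer optimizer over x (e.g., CMA-ES).

```python
import numpy as np

def pareto_mask(Y):
    """Boolean mask of non-dominated rows of Y (all objectives maximized)."""
    mask = np.ones(len(Y), dtype=bool)
    for i in range(len(Y)):
        # Row i is dominated if another row is >= everywhere and > somewhere
        dominated = np.all(Y >= Y[i], axis=1) & np.any(Y > Y[i], axis=1)
        mask[i] = not dominated.any()
    return mask

def hypervolume_2d(Y, ref):
    """Area dominated by the points in Y (2 maximized objectives), bounded below by ref."""
    P = Y[np.all(Y > ref, axis=1)]
    if len(P) == 0:
        return 0.0
    P = P[pareto_mask(P)]
    P = P[np.argsort(-P[:, 0])]          # objective 1 descending, so objective 2 ascends
    hv, prev_y2 = 0.0, ref[1]
    for y1, y2 in P:                     # add one staircase slab per front point
        hv += (y1 - ref[0]) * (y2 - prev_y2)
        prev_y2 = y2
    return float(hv)

def mc_ehvi(mean, std, Y_obs, ref, n_samples=2000, rng=None):
    """Monte Carlo EHVI for one candidate with independent Gaussian predictions."""
    rng = np.random.default_rng(rng)
    base = hypervolume_2d(Y_obs, ref)
    samples = mean + std * rng.standard_normal((n_samples, len(mean)))
    gains = [hypervolume_2d(np.vstack([Y_obs, s]), ref) - base for s in samples]
    return float(np.mean(gains))

# Hypothetical observed (yield, purity) pairs scaled to [0, 1]; reference point at the origin
Y_obs = np.array([[0.62, 0.91], [0.80, 0.75], [0.88, 0.55]])
ref = np.array([0.0, 0.0])
score = mc_ehvi(np.array([0.85, 0.80]), np.array([0.05, 0.05]), Y_obs, ref, rng=0)
```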

Application Note: Amide Coupling Reaction Optimization

Aim: Simultaneously maximize yield and minimize residual palladium in a palladium-catalyzed amidation.

Research Reagent Solutions & Key Materials:

| Item | Function/Justification |
|---|---|
| Pd PEPPSI-IPr Catalyst | Robust, air-stable pre-catalyst for C-N coupling. |
| BrettPhos Ligand | Bulky biarylphosphine ligand favoring reductive elimination. |
| Cs2CO3 Base | Relatively mild inorganic base, more soluble in organic solvents than other alkali-metal carbonates. |
| Anhydrous 1,4-Dioxane | High-boiling, inert solvent for high-temperature reactions. |
| ICP-MS Standard Solution | For precise quantification of residual Pd. |
| Automated Liquid Handler | For precise, reproducible reagent dispensing in high-throughput screens. |
| UPLC-MS with PDA | For simultaneous yield determination (PDA) and impurity profiling (MS). |

Procedure:

- Design Space: Variables: Catalyst loading (0.5-2.5 mol%), Ligand ratio (1.0-2.5 eq. to Pd), Temperature (80-120°C), Time (2-18 h).

- Initial Design: 12 experiments via Latin Hypercube Sampling.

- Bayesian Loop: Run 20 iterative experiments guided by EHVI, targeting Max(Yield) and Min([Pd] in product).

- Analysis: Identify the Pareto-optimal conditions. A selected condition from the front was 1.2 mol% Pd, 1.8 eq. ligand, 105°C and 8 h, giving 94% yield and 78 ppm residual Pd.

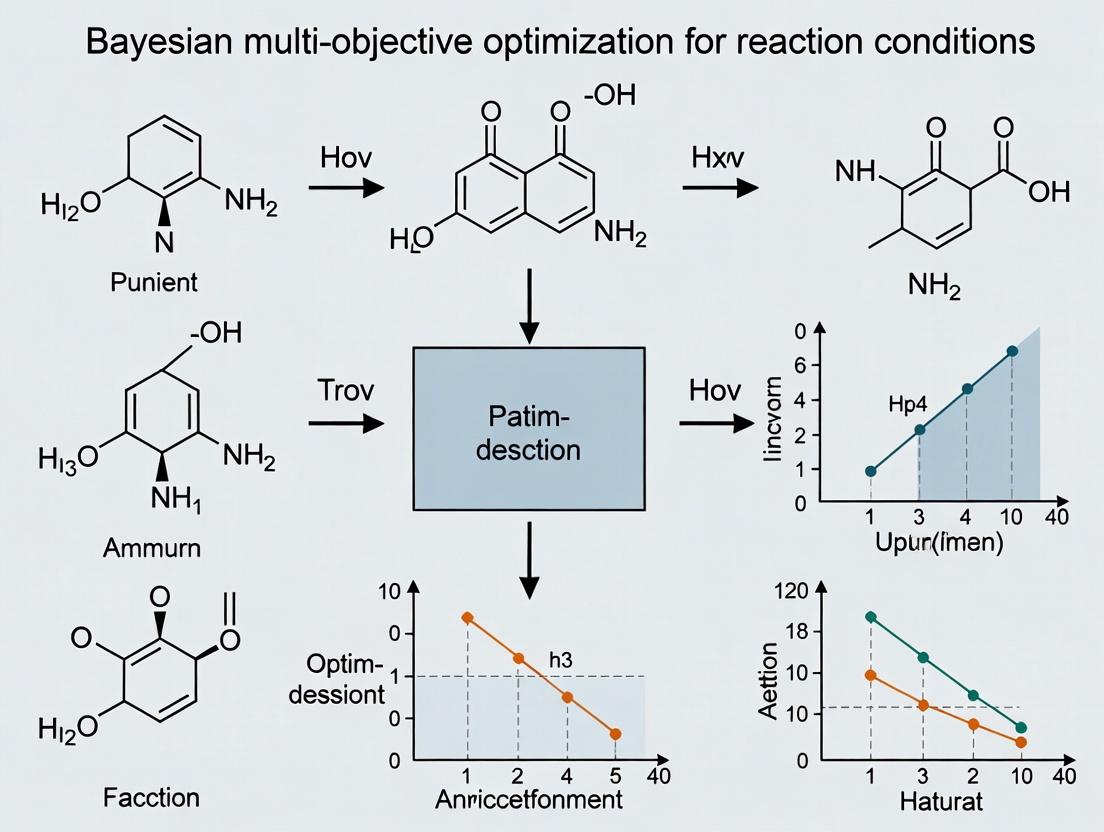

Visualizing the Workflow and Outcome

Bayesian Multi-Objective Optimization Cycle

Paradigm Shift Driven by Multi-Objective Complexity

1. Introduction and Thesis Context

In modern synthetic chemistry, particularly within pharmaceutical development, reaction optimization is a multi-dimensional problem. The traditional focus on maximizing yield is insufficient, as it often conflicts with other critical objectives such as product purity, economic cost, and environmental impact (quantified by the E-factor). This creates a complex trade-off landscape. Bayesian multi-objective optimization (MOBO) provides a powerful computational framework for navigating this landscape efficiently. By using probabilistic models to predict reaction outcomes from limited experimental data, MOBO can iteratively suggest reaction conditions that optimally balance these competing objectives, accelerating the development of sustainable and economically viable synthetic routes.

2. Quantitative Data on Competing Objectives

The table below summarizes typical target ranges and antagonistic relationships between key objectives in API synthesis.

Table 1: Key Objectives in Reaction Optimization and Their Interdependencies

| Objective | Typical Target (API Synthesis) | Primary Metric | Common Antagonism With | Rationale for Conflict |
|---|---|---|---|---|
| Yield | > 85% | Isolated Yield (%) | Purity, E-factor | High-yielding conditions may promote side reactions, complicating purification (↓ purity) and requiring more materials (↑ E-factor). |
| Purity | > 98% (HPLC Area %) | Chromatographic Purity | Yield, Cost | Stringent purification to achieve high purity often results in yield loss and increases solvent/waste (↑ cost, ↑ E-factor). |
| Cost | Minimized | $/kg of product | Purity, E-factor | Cheap reagents/solvents may be less selective or more hazardous, affecting purity and waste. High purity demands expensive materials. |
| E-Factor | < 50 (Pharma Fine Chem) | kg waste / kg product | Yield, Cost | Reducing waste often requires expensive catalysts/solvents or lower-yielding, atom-economic pathways. |

3. Bayesian Multi-Objective Optimization: Protocol & Workflow

Protocol: Iterative Bayesian Optimization for Reaction Screening

Objective: To identify Pareto-optimal reaction conditions balancing Yield, Purity, and E-factor for a model C-N cross-coupling reaction.

Materials & Computational Tools:

- Reaction Substrates: Aryl halide, amine, base, solvent, catalyst.

- Analysis: UPLC/MS for conversion and purity analysis.

- Software: Python with the libraries scikit-learn or GPyTorch (Gaussian Processes) and Optuna or BoTorch (Bayesian optimization frameworks).

Procedure:

- Design of Experiment (Initial Set): Perform a space-filling design (e.g., 10-15 experiments) varying key parameters: catalyst loading (0.5-2 mol%), temperature (60-120°C), reaction time (2-24 h), solvent ratio (aqueous/organic mix).

- Data Collection: Run reactions, quantify yield (isolated mass), purity (HPLC area%), and calculate E-factor for each condition.

- Model Training: Train separate Gaussian Process (GP) surrogate models for each objective (Yield, Purity, inverse E-factor) using the initial data.

- Acquisition Function Optimization: Use an acquisition function (e.g., Expected Hypervolume Improvement - EHVI) to calculate the next most informative reaction conditions to test. This function balances exploring uncertain regions of parameter space and exploiting conditions predicted to improve the Pareto front.

- Iterative Loop: Run the suggested experiment(s), add the new data to the training set, and re-train the GP models. Repeat the model-training, acquisition, and experiment steps for 5-10 iterations.

- Pareto Front Analysis: After the final iteration, analyze the set of non-dominated solutions and select the optimal condition based on project priorities. A solution is non-dominated when no other tested condition is at least as good on every objective and strictly better on one, so improving one objective requires worsening another.
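E-factor and PMI are simple mass ratios, so they can be computed for each run and fed to the model as objectives. The minimal sketch below assumes that all non-product input mass counts as waste; under that convention E-factor = PMI − 1. The masses are hypothetical.

```python
def process_metrics(input_masses_kg, product_mass_kg):
    """Return (PMI, E-factor) for one reaction condition.

    Assumption: every input mass that does not end up as isolated product
    counts as waste (solvents and water included), so E-factor = PMI - 1.
    """
    if product_mass_kg <= 0:
        raise ValueError("product mass must be positive")
    total_in = sum(input_masses_kg.values())
    pmi = total_in / product_mass_kg                            # kg input / kg product
    e_factor = (total_in - product_mass_kg) / product_mass_kg   # kg waste / kg product
    return pmi, e_factor

# Hypothetical masses (kg) for one C-N coupling run, including work-up solvent
masses = {"aryl_halide": 0.010, "amine": 0.008, "base": 0.020,
          "catalyst": 0.0005, "solvent": 0.200, "workup_solvent": 0.300}
pmi, e_factor = process_metrics(masses, product_mass_kg=0.012)
objective = -e_factor  # negated so that every GP objective is maximized
```

Negating the E-factor is one way to read the "inverse E-factor" objective above. Taking the reciprocal, 1/E-factor, is another.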

4. Visualization of the Optimization Workflow

Diagram Title: Bayesian MOBO Workflow for Reaction Optimization

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Multi-Objective Optimization Studies

| Item / Category | Example / Specification | Function in Optimization |
|---|---|---|
| Catalyst Kits | Pd-PEPPSI-type precatalyst kit, Buchwald ligand kit. | Enables rapid screening of steric/electronic effects on yield, purity, and catalyst loading (cost, E-factor). |
| Green Solvent Kits | 2-MethylTHF, Cyclopentyl methyl ether (CPME), bio-based solvents. | Directly screens for reduced environmental impact (E-factor) and potential cost savings while maintaining performance. |
| High-Throughput Experimentation (HTE) Plates | 96-well glass-coated or polymer plates. | Facilitates parallel synthesis of initial DoE and iterative suggestions, generating necessary data density for Bayesian models. |
| Automated Purification Systems | Flash chromatography or prep-HPLC with fraction collectors. | Provides consistent, rapid purification for isolated yield and purity data, critical for accurate objective quantification. |
| Process Mass Intensity (PMI) Calculators | Custom spreadsheet or dedicated software (e.g., DOE.Ki). | Automates calculation of E-factor/PMI from reagent masses, enabling its inclusion as a live objective in the optimization loop. |
| Bayesian Optimization Software | BoTorch (PyTorch-based) or commercial platforms. | Core computational engine for building surrogate models and calculating the next best experiment via acquisition functions. |

This application note details the implementation of Bayesian reasoning for multi-objective optimization (MOO) of chemical reactions, a core methodology within the broader thesis "Adaptive Experimentation for the Pareto-Efficient Discovery of Pharmaceutical Leads." The thesis posits that an iterative Bayesian workflow is essential for navigating high-dimensional chemical space, where objectives such as reaction yield, enantioselectivity, and impurity profile are often in trade-off. This protocol provides a foundational guide to transitioning from prior belief to informed posterior probability, enabling the data-efficient identification of Pareto-optimal reaction conditions.

Core Bayesian Framework: Protocol

Protocol: Formulating the Prior Probability Distribution

Objective: To encode existing knowledge or assumptions about chemical system parameters before new experimental data is observed.

- Define the Parameter Space (Θ): Identify the continuous (e.g., temperature, concentration) and categorical (e.g., catalyst identity, solvent class) variables to be optimized.

- Select Prior Distribution Type:

- For unknown continuous parameters with bounded ranges (e.g., pH 3-10), use a Uniform Prior.

- For parameters where a literature value or expert estimate (μ) and associated uncertainty (σ) exist, use a Gaussian (Normal) Prior.

- For categorical choices (e.g., ligand A, B, or C) with no initial preference, use a Dirichlet Prior (or a flat categorical distribution).

- Document Prior Hyperparameters: Record the chosen distribution and its parameters (e.g., Uniform(min=20, max=150) for temperature in °C; Normal(μ=100, σ=20) for a literature-reported yield expectation).

Table 1: Example Prior Distributions for a Catalytic Cross-Coupling Reaction

| Parameter | Type | Suggested Prior Distribution | Hyperparameters (Example) | Rationale |
|---|---|---|---|---|
| Reaction Temp. | Continuous | Uniform | min=25°C, max=150°C | Wide, uninformative range for screening. |
| Catalyst Loading | Continuous | Log-Uniform | min=0.1 mol%, max=5.0 mol% | Covers orders of magnitude, common for catalysts. |
| Base Equivalents | Continuous | Normal | μ=2.0 eq, σ=0.5 eq | Literature suggestion with moderate uncertainty. |
| Solvent | Categorical | Dirichlet | concentration=[1,1,1] for [Toluene, DMSO, MeCN] | Equal probability for three candidate solvents. |
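To see what the priors in Table 1 imply, one can draw samples from them. The minimal NumPy sketch below uses the hyperparameters listed in the table. For the solvent, it draws a probability vector from the Dirichlet and then samples solvents from that vector, which is one way to use this prior.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 1000

temp_C = rng.uniform(25.0, 150.0, n)                             # Uniform(25, 150) °C
cat_mol_pct = np.exp(rng.uniform(np.log(0.1), np.log(5.0), n))   # Log-Uniform(0.1, 5.0) mol%
base_eq = rng.normal(2.0, 0.5, n)                                # Normal(μ=2.0, σ=0.5) eq

solvents = np.array(["Toluene", "DMSO", "MeCN"])
solvent_probs = rng.dirichlet([1.0, 1.0, 1.0])                   # Dirichlet(1, 1, 1) over solvent probabilities
solvent = rng.choice(solvents, size=n, p=solvent_probs)
```

The Normal prior on base equivalents places a small amount of mass on negative, physically meaningless values. Truncating it at zero is a common practical adjustment.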

Protocol: Designing Experiments with an Acquisition Function

Objective: To select the most informative next experiment(s) by balancing exploration (testing uncertain regions) and exploitation (improving known good conditions).

- Choose an Acquisition Function for MOO:

- q-Expected Hypervolume Improvement (qEHVI): A widely used default for MOO. It quantifies the expected gain in the dominated volume of objective space (Pareto front improvement). It is computationally intensive but typically needs few experiments.

- q-ParEGO: A scalarization-based approach, often faster to compute than qEHVI, suitable for initial sweeps.

- Integrate with a Probabilistic Model: The acquisition function is calculated from a Gaussian Process (GP) model that provides a posterior predictive distribution (mean and variance) for each objective across Θ.

- Optimize the Function: Using an optimizer (e.g., L-BFGS-B), find the set of conditions x_next that maximizes the acquisition function. This point is the recommendation for the next experiment.
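The scalarization idea behind ParEGO-style methods, together with the multi-start L-BFGS-B step, can be sketched as below. The sketch rests on several assumptions:

- Objectives are normalized and maximized.
- The augmented Chebyshev form is used with ρ = 0.05.
- Weights are drawn uniformly from the simplex.
- `predicted_objectives` is a hypothetical stand-in for GP posterior means.

The full ParEGO method instead fits a model to the scalarized values and maximizes an expected-improvement criterion, which is not shown here.

```python
import numpy as np
from scipy.optimize import minimize

def chebyshev_scalarize(Y, weights, rho=0.05):
    """Augmented Chebyshev scalarization for maximized, normalized objectives Y (..., m)."""
    WY = np.asarray(Y) * np.asarray(weights)
    return WY.min(axis=-1) + rho * WY.sum(axis=-1)

def predicted_objectives(x):
    """Hypothetical stand-in for GP posterior means of two normalized objectives, x in [0, 1]^2."""
    yield_n = 1.0 - (x[0] - 0.7) ** 2 - (x[1] - 0.3) ** 2
    purity_n = 1.0 - (x[0] - 0.3) ** 2 - (x[1] - 0.6) ** 2
    return np.array([yield_n, purity_n])

def maximize_multistart(fun, bounds, n_starts=10, rng=None):
    """Maximize fun over a box using L-BFGS-B from several random starting points."""
    rng = np.random.default_rng(rng)
    lo, hi = np.array(bounds, dtype=float).T
    best_x, best_f = None, -np.inf
    for x0 in rng.uniform(lo, hi, size=(n_starts, len(lo))):
        res = minimize(lambda x: -fun(x), x0, method="L-BFGS-B", bounds=bounds)
        if -res.fun > best_f:
            best_x, best_f = res.x, -res.fun
    return best_x, best_f

rng = np.random.default_rng(0)
w = rng.dirichlet(np.ones(2))  # fresh random weights each iteration, uniform on the simplex
x_next, s_best = maximize_multistart(
    lambda x: chebyshev_scalarize(predicted_objectives(x), w), [(0.0, 1.0), (0.0, 1.0)], rng=rng)
```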

Protocol: Updating to the Posterior Distribution

Objective: To formally combine prior beliefs with new experimental data to obtain a refined probabilistic model of the chemical system.

- Conduct Experiment: Run the reaction at the suggested conditions x_next and measure all relevant objective values y_next.

- Append to Dataset: Update the master dataset D ← D ∪ {(x_next, y_next)}.

- Compute Posterior via Bayes' Theorem: The posterior probability of the model parameters given the data is proportional to the likelihood times the prior.

> P(Θ | D) ∝ P(D | Θ) · P(Θ)

- P(Θ): The prior distribution (from the prior-formulation protocol above).

- P(D | Θ): The likelihood, model-specific (e.g., Gaussian noise for a GP).

- P(Θ | D): The updated posterior distribution.

- Refit the Probabilistic Model: Re-train the GP (or other surrogate model) on the updated dataset D. The model's predictions now represent the posterior predictive distribution, with reduced uncertainty near sampled points.
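Bayes' theorem above can be made concrete with the simplest conjugate case: a Normal prior on an unknown mean yield, plus Normal measurement noise of known size. This is an illustrative analogue of the update, not the GP update itself. The prior and data below are hypothetical.

```python
import numpy as np

def normal_posterior(mu0, tau0, y, sigma):
    """Posterior (mean, sd) of an unknown mean under a N(mu0, tau0^2) prior,
    given observations y_i ~ N(mean, sigma^2) with known noise sd sigma."""
    y = np.asarray(y, dtype=float)
    post_var = 1.0 / (1.0 / tau0**2 + len(y) / sigma**2)    # precisions add
    post_mean = post_var * (mu0 / tau0**2 + y.sum() / sigma**2)
    return post_mean, np.sqrt(post_var)

# Hypothetical: prior belief of ~70% yield (sd 15), three replicate runs, assay noise sd 5
m, s = normal_posterior(70.0, 15.0, [82.0, 79.0, 85.0], 5.0)
```

The posterior mean here is about 81.6%, and the posterior standard deviation is about 2.8, far narrower than the prior's 15. Three measurements with a noise standard deviation of 5 carry much more precision than the broad prior.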

Visualization of the Bayesian MOO Workflow

Title: Bayesian MOO Cycle for Reaction Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Bayesian Reaction Optimization

| Item | Function in Bayesian Workflow |
|---|---|
| Bayesian Optimization Software (BoTorch/Ax) | Open-source Python frameworks for implementing GP models, MOO acquisition functions (qEHVI), and managing iterative loops. |
| Laboratory Automation Platform | Enables precise execution of the suggested experiment (x_next), often via robotic liquid handlers and reactor blocks (e.g., Chemspeed, Unchained Labs). |
| High-Throughput Analytics (UPLC/HPLC-MS) | Provides rapid, quantitative y_next data (yield, ee, purity) required for fast model updating. Essential for maintaining cycle tempo. |
| Chemical Space Library | Curated sets of diverse reagents (catalysts, ligands, substrates) and solvents, formatted for digital search and robotic dispensing. |
| Data Lake/ELN Integration | Centralized repository linking experimental conditions (x), analytical results (y), and model predictions, ensuring traceability and dataset D integrity. |

Advanced Application: Multi-Fidelity Optimization Protocol

Objective: To incorporate low-cost, low-fidelity data (e.g., computational predictions, crude yield estimates) to guide expensive high-fidelity experiments (e.g., isolated yield with full characterization).

- Define Fidelity Parameters: Assign a fidelity parameter z (e.g., z = 0 for DFT-predicted yield, z = 0.5 for HPLC yield of the crude reaction, z = 1.0 for isolated, purified yield).

- Build a Multi-Fidelity GP Model: Use a model architecture (e.g., Linear Coregionalization Model) that learns the correlation between fidelities.

- Use a Cost-Aware Acquisition Function: Modify qEHVI to account for the cost of experimentation at each fidelity, so that it maximizes expected hypervolume improvement per unit cost.

- Iterate: The algorithm proposes a mixture of low- and high-fidelity experiments to map the Pareto front with minimal total resource expenditure.
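A cost-aware choice of fidelity can be sketched as the ratio of acquisition value to cost. This helper is deliberately simplified and hypothetical, and its fidelity levels and costs are invented. A proper multi-fidelity acquisition values a cheap run by how much it reduces uncertainty about the high-fidelity objective. This raw ratio, by contrast, will tend to favor cheap fidelities.

```python
def select_fidelity(acq_by_fidelity, cost_by_fidelity):
    """Return the fidelity z with the largest acquisition value per unit cost, and that ratio.

    acq_by_fidelity[z]: acquisition value (e.g., expected hypervolume improvement) of the
    best candidate at fidelity z; cost_by_fidelity[z]: assumed cost of one run at z.
    """
    ratios = {z: acq_by_fidelity[z] / cost_by_fidelity[z] for z in acq_by_fidelity}
    best = max(ratios, key=ratios.get)
    return best, ratios[best]

# Hypothetical values: DFT (z=0), crude HPLC (z=0.5), isolated yield (z=1.0); costs in arbitrary units
acq = {0.0: 0.02, 0.5: 0.05, 1.0: 0.12}
cost = {0.0: 0.1, 0.5: 1.0, 1.0: 10.0}
z_next, value_per_cost = select_fidelity(acq, cost)
```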

Title: Multi-Fidelity Bayesian Optimization Flow

Within the framework of Bayesian multi-objective optimization (MOBO) for reaction conditions research, a core challenge is to navigate vast, multidimensional chemical spaces efficiently with minimal experimental trials. Conventional high-throughput experimentation (HTE) can be resource-intensive. This Application Note details the implementation of Gaussian Process (GP) surrogate models as a data-efficient alternative to exhaustive screening. GPs predict reaction outcomes, such as yield, enantioselectivity, or purity, from sparse initial datasets. They provide not only predictions but also quantifiable uncertainty, which acquisition functions in MOBO use directly to select the most informative next experiments, accelerating the discovery of optimal reaction conditions.

Theoretical Foundation: Gaussian Process Regression

A Gaussian Process is a non-parametric Bayesian model defining a distribution over functions. It is fully specified by a mean function m(x) and a covariance (kernel) function k(x, x'). Consider a dataset with inputs X (e.g., reaction parameters) and outputs y (e.g., yield), and take a zero mean function (e.g., after centering the outputs). The GP prior is then f | X ~ N(0, K(X, X)), where K is the covariance matrix with entries k(xᵢ, xⱼ). The kernel choice encodes assumptions about function smoothness and periodicity. The posterior predictive distribution for a new input x* is Gaussian, with mean and variance given by closed-form equations, enabling prediction with uncertainty.
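For reference, the closed-form posterior predictive mentioned above is given below. It assumes a zero prior mean and independent Gaussian observation noise with variance σ_n² (notation introduced here).

$$
\mu(\mathbf{x}_*) = \mathbf{k}_*^{\top}\left(K + \sigma_n^{2} I\right)^{-1}\mathbf{y},
\qquad
\sigma^{2}(\mathbf{x}_*) = k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^{\top}\left(K + \sigma_n^{2} I\right)^{-1}\mathbf{k}_*
$$

Here K = K(X, X), and k_* = [k(x₁, x*), …, k(xₙ, x*)]ᵀ is the vector of covariances between the new input x* and the training inputs. σ²(x*) is the variance of the latent function. Adding σ_n² gives the predictive variance of a new measurement.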

Application Protocol: Building a GP Surrogate for Reaction Optimization

Protocol 3.1: Initial Experimental Design & Data Acquisition

Objective: Generate an initial sparse, informative dataset to seed the GP model.

Materials: See the Scientist's Toolkit (Table 3).

Procedure:

- Define Optimization Objectives: Precisely define primary (e.g., yield) and secondary (e.g., ee%) objectives. Determine constraints (e.g., cost, safety).

- Define Parameter Space: List all continuous (e.g., temperature, concentration) and categorical (e.g., catalyst, solvent class) variables with plausible ranges/levels.

- Design Initial Experiment Set: Use a space-filling design (e.g., Latin Hypercube Sampling) for continuous variables, combined with factorial design for categorical variables. For a 5-7 dimensional space, 10-20 initial experiments are typically sufficient.

- Execute & Characterize: Perform reactions under the designed conditions. Quantify all relevant outcomes with analytical standards (HPLC, NMR, etc.).

- Data Curation: Assemble data into a structured table (see Table 1).
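The design step above can be sketched as a Latin Hypercube over the continuous variables, with the full factorial of categorical levels cycled across runs. This is a minimal NumPy sketch, and the helper names, ranges and levels are hypothetical. When the run count is not a multiple of the number of categorical combinations (here, 16 runs vs. 6 combinations), some combinations appear once more than others.

```python
import itertools
import numpy as np

def latin_hypercube(n, d, rng):
    """n points in [0, 1)^d with exactly one point in each of the n strata per dimension."""
    strata = np.column_stack([rng.permutation(n) for _ in range(d)])
    return (strata + rng.uniform(size=(n, d))) / n

def initial_design(n, continuous, categorical, seed=0):
    """LHS over continuous variables; full factorial of categorical levels cycled over runs."""
    rng = np.random.default_rng(seed)
    names = list(continuous)
    lo = np.array([continuous[k][0] for k in names], dtype=float)
    hi = np.array([continuous[k][1] for k in names], dtype=float)
    X = lo + (hi - lo) * latin_hypercube(n, len(names), rng)
    combos = list(itertools.product(*categorical.values()))
    order = rng.permutation(len(combos))            # random assignment of combinations
    runs = []
    for i in range(n):
        run = dict(zip(categorical, combos[order[i % len(combos)]]))
        run.update({k: float(v) for k, v in zip(names, X[i])})
        runs.append(run)
    return runs

# Hypothetical ranges and levels, loosely following the example dataset in Table 1
design = initial_design(
    16,
    continuous={"temp_C": (60.0, 100.0), "time_h": (6.0, 24.0), "conc_M": (0.05, 0.2)},
    categorical={"catalyst": ["Pd1", "Pd2"], "ligand": ["L1", "L2", "L3"]},
)
```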

Table 1: Example Sparse Initial Dataset for a Catalytic Cross-Coupling Reaction

| Exp ID | Catalyst | Ligand | Temp (°C) | Time (h) | Conc (M) | Yield (%) | ee (%) |
|---|---|---|---|---|---|---|---|
| 1 | Pd1 | L1 | 80 | 12 | 0.1 | 45 | 10 |
| 2 | Pd2 | L2 | 100 | 6 | 0.05 | 78 | 95 |
| 3 | Pd1 | L3 | 60 | 24 | 0.2 | 15 | 5 |
| ... | ... | ... | ... | ... | ... | ... | ... |
| 16 | Pd2 | L1 | 90 | 18 | 0.15 | 62 | 80 |

Protocol 3.2: GP Model Training & Validation

Objective: Construct a calibrated GP surrogate model from the initial data.

Procedure:

- Data Preprocessing: Scale continuous inputs to zero mean and unit variance. One-hot encode categorical variables. Scale output(s) if needed.

- Kernel Selection: For mixed parameter types, use a composite kernel: kernel = (Matérn kernel for continuous vars) × (Hamming kernel for categorical vars).

- Model Training: Maximize the log marginal likelihood to optimize kernel hyperparameters (length-scales, noise variance). This balances model fit and complexity.

- Cross-Validation: Perform leave-one-out or k-fold cross-validation. Calculate performance metrics (see Table 2).

- Model Diagnostics: Assess residuals for patterns. Calibration plots should show predicted uncertainty aligned with actual error.
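The preprocessing, composite kernel and cross-validation steps can be sketched in NumPy under the following assumptions:

- The categorical kernel takes the form exp(−h/ℓ), where h is the number of differing categorical variables. This is one common Hamming-type choice.
- Hyperparameters are fixed rather than fitted.
- Categories are compared directly instead of one-hot encoded.

Leave-one-out predictions use the standard closed-form GP identity, so no refitting is needed. Only the four rows shown in Table 1 are used, so the numbers are purely illustrative.

```python
import numpy as np

def matern52(A, B, lengthscales):
    """Matérn 5/2 correlation with one length scale per continuous input."""
    s = np.sqrt(5.0) * np.sqrt(np.sum(((A[:, None, :] - B[None, :, :]) / lengthscales) ** 2, axis=-1))
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)

def hamming_kernel(A, B, lengthscale):
    """exp(-h / lengthscale), where h counts the categorical variables that differ."""
    h = np.sum(A[:, None, :] != B[None, :, :], axis=-1)
    return np.exp(-h / lengthscale)

def mixed_kernel(Ac, Ak, Bc, Bk, ls_cont, ls_cat, variance=1.0):
    # Product kernel: two runs are similar only if continuous settings AND categories agree
    return variance * matern52(Ac, Bc, ls_cont) * hamming_kernel(Ak, Bk, ls_cat)

def loo_predictions(K, y, noise):
    """Closed-form leave-one-out predictive means and variances (fixed hyperparameters)."""
    K_inv = np.linalg.inv(K + noise * np.eye(len(y)))
    diag = np.diag(K_inv)
    return y - (K_inv @ y) / diag, 1.0 / diag

# Exp IDs 1-3 and 16 from Table 1 (the other rows are elided there); far too few for a real model
Xc = np.array([[80, 12, 0.10], [100, 6, 0.05], [60, 24, 0.20], [90, 18, 0.15]], dtype=float)
Xk = np.array([["Pd1", "L1"], ["Pd2", "L2"], ["Pd1", "L3"], ["Pd2", "L1"]])
y = np.array([45.0, 78.0, 15.0, 62.0])                     # yield (%)

Xc_s = (Xc - Xc.mean(axis=0)) / Xc.std(axis=0)             # zero mean, unit variance inputs
y_s = (y - y.mean()) / y.std()                              # standardized output
K = mixed_kernel(Xc_s, Xk, Xc_s, Xk, ls_cont=np.ones(3), ls_cat=1.0)
mu_loo, var_loo = loo_predictions(K, y_s, noise=0.1)
rmse_cv = np.sqrt(np.mean((mu_loo - y_s) ** 2)) * y.std()   # LOO RMSE back on the yield scale
```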

Table 2: GP Model Performance Metrics on Cross-Validation of Sparse Data (Hypothetical)

| Objective | RMSE (CV) | R² (CV) | Mean Standardized Log Loss (MSLL) |
|---|---|---|---|
| Yield | 5.8% | 0.91 | -0.42 |
| Enantiomeric Excess | 7.2% | 0.87 | -0.38 |

MSLL < 0 indicates the model outperforms a naive model using only the data mean and variance.
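MSLL can be computed directly from cross-validated predictive means and variances. The minimal sketch below follows the standard definition (e.g., Rasmussen and Williams, Gaussian Processes for Machine Learning, Section 2.5), in which the trivial baseline is a Gaussian with the training-set mean and variance.

```python
import numpy as np

def msll(y_test, mu, var, y_train):
    """Mean standardized log loss.

    mu, var: predictive mean and variance (including noise) for each test target.
    Negative values mean the model beats a Gaussian with the training mean/variance.
    """
    y_test, mu, var = map(np.asarray, (y_test, mu, var))
    nll_model = 0.5 * np.log(2 * np.pi * var) + (y_test - mu) ** 2 / (2 * var)
    m0, v0 = np.mean(y_train), np.var(y_train)
    nll_trivial = 0.5 * np.log(2 * np.pi * v0) + (y_test - m0) ** 2 / (2 * v0)
    return float(np.mean(nll_model - nll_trivial))
```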

Protocol 3.3: Bayesian Multi-Objective Optimization Loop

Objective: Use the GP surrogate with an acquisition function to iteratively select experiments that Pareto-optimize multiple objectives.

Procedure:

- Define Acquisition Function: For MOBO, use Expected Hypervolume Improvement (EHVI). EHVI quantifies the expected gain in the dominated region of the objective space (Pareto front).

- Maximize Acquisition: Find the next experiment x_next that maximizes EHVI. This is a numerical optimization problem on the GP surrogate.

- Execute & Update: Perform the experiment at x_next, measure outcomes, and add the new data point to the training set.

- Re-train & Iterate: Update the GP model with the expanded dataset. Repeat steps 2-4 for a predefined budget (e.g., 10-20 iterations) or until convergence of the Pareto front.

- Final Analysis: Identify the set of non-dominated optimal conditions (Pareto-optimal set) from all experiments conducted.

Visualizing the Bayesian MOBO Workflow

Title: Bayesian MOBO Workflow Using a Gaussian Process Surrogate

Case Study: Asymmetric Catalysis Optimization

- Scenario: Optimization of a chiral phosphoric acid-catalyzed Friedel–Crafts reaction for maximal yield and enantioselectivity.

- Sparse Initial Data: 18 experiments varying catalyst (4 types), solvent (3 types), temperature (40-80°C), and concentration.

- GP Setup: Composite kernel (Matérn 5/2 for continuous, Hamming for categorical variables); independent GPs for yield and ee.

- MOBO Result: After 12 EHVI-guided iterations, the algorithm identified a Pareto front revealing a trade-off: conditions giving >90% yield gave ~85% ee, while conditions pushing ee above 95% capped yield at ~82%.

Visualizing the GP Prediction & Acquisition Logic

Title: GP Prediction Informs Acquisition Function in MOBO

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for GP-MOBO Implementation

| Item | Function/Description | Example/Note |
|---|---|---|
| Chemical Libraries | Source of varied catalysts, ligands, reagents for categorical exploration. | Commercially available screening kits (e.g., for Pd catalysis, organocatalysts). |
| Automated Liquid Handling | Enables precise, reproducible preparation of reaction arrays from digital designs. | Chemspeed, Unchained Labs, or Flow Chemistry systems. |
| High-Throughput Analytics | Rapid quantification of reaction outcomes. | UPLC-MS with automated sampling, chiral HPLC, or inline FTIR/ReactIR. |
| GP Software Libraries | Pre-built modules for GP regression and BO. | Python: GPyTorch, scikit-learn, BoTorch. Commercial: SIGMA by Merck, MATLAB Statistics & ML Toolbox. |
| BO/MOBO Frameworks | Libraries implementing acquisition function optimization. | BoTorch (PyTorch-based, supports EHVI), Dragonfly, OpenBox. |
| High-Performance Computing | Speeds up GP hyperparameter tuning and acquisition function maximization. | Local GPU clusters or cloud computing (AWS, GCP) for complex, high-dimensional models. |

In Bayesian multi-objective optimization (MOBO) for reaction condition research, the Pareto Frontier represents the set of optimal solutions where improving one objective (e.g., reaction yield) necessitates worsening another (e.g., cost, impurity profile). This framework is critical for rational decision-making in drug development, where trade-offs between efficacy, safety, and scalability are inherent.

Key Principles & Quantitative Benchmarks

Table 1: Common Objectives & Metrics in Reaction Optimization

| Objective | Typical Metric | Desired Direction | Industry Benchmark (Small Molecule API) |
|---|---|---|---|
| Chemical Yield | Area Percentage (HPLC) | Maximize | >85% for key step |
| Selectivity | Ratio of Desired:Undesired Isomers | Maximize | >20:1 |
| Cost | $/kg of Starting Material | Minimize | <$500/kg for intermediate |
| Process Safety | Adiabatic Decomposition Onset (°C) | Maximize | >100°C |
| Environmental Impact | Process Mass Intensity (PMI) | Minimize | <50 kg/kg API |
| Reaction Time | Time to >95% Completion (hr) | Minimize | <24 hr |

Table 2: Pareto Frontier Analysis Outcomes from Recent Studies

| Study (Year) | Reaction Type | No. of Objectives | Pareto Solutions Found | Dominant Algorithm |
|---|---|---|---|---|
| Doyle et al. (2023) | Pd-catalyzed C–N Cross-Coupling | 4 (Yield, Cost, E-factor, Throughput) | 12 | qNEHVI |
| Chen & Schmidt (2024) | Asymmetric Organocatalysis | 3 (ee, Yield, Conc.) | 8 | MOBO-Turbo |
| PharmaScale Inc. (2024) | Peptide Coupling | 5 (Yield, Purity, Cost, Time, Waste) | 15 | ParEGO |

Application Notes for Bayesian MOBO

Pre-Optimization Experimental Design

- Define Objective Space: Quantify all critical reaction outputs. For a catalytic reaction, this typically includes: Yield (HPLC), Selectivity (dr/ee via chiral HPLC or SFC), Product Purity (UV area % at 254 nm), and Catalyst Loading (mol%).

- Establish Constraints: Define hard constraints (e.g., impurity X ≤ 0.15%, temperature ≤ 100°C for solvent stability).

- Initial DoE: Perform a space-filling design (e.g., Sobol sequence) across continuous variables (Temperature, Concentration, Equivalents) and categorical variables (Solvent class, Catalyst type). A minimum of 10 × d experiments, where d is the number of input dimensions, is recommended for initial model training.

Protocol: Iterative Bayesian Optimization Loop

Title: MOBO Workflow for Reaction Screening

Procedure:

- Train Surrogate Model: Using the accumulated experimental data, train a Gaussian Process Regression (GPR) model for each objective.

- Calculate Acquisition: Compute the multi-objective acquisition function. q-Noisy Expected Hypervolume Improvement (qNEHVI) is currently preferred for its batch efficiency and noise handling.

- Optimize Acquisition: Solve the inner optimization problem to find the candidate conditions x* that maximize the acquisition function, using multi-start gradient-based optimization.

- Execute Experiments: Run reactions at the proposed conditions x* in parallel.

- Update Data & Model: Append new results to the dataset and retrain the surrogate models.

- Convergence Check: Terminate when the hypervolume improvement ratio is <5% over three consecutive iterations, or a predefined experimental budget is exhausted.
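The stopping rule can be written as a small function over the hypervolume history. This sketch reads "hypervolume improvement ratio" as the relative gain (HV_t − HV_{t−1}) / HV_{t−1} between consecutive iterations. That reading, the function name and the history format (entry 0 is the hypervolume after the initial design) are assumptions.

```python
def should_stop(hv_history, tol=0.05, patience=3, budget=None):
    """Stop when the relative hypervolume gain stayed below tol for `patience`
    consecutive iterations, or when the iteration budget is used up."""
    if budget is not None and len(hv_history) - 1 >= budget:
        return True
    if len(hv_history) <= patience:
        return False
    recent = hv_history[-(patience + 1):]
    gains = [(b - a) / a if a > 0 else float("inf") for a, b in zip(recent, recent[1:])]
    return all(g < tol for g in gains)
```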

Protocol: Post-Optimization Pareto Analysis

Title: Pareto Frontier Analysis Protocol

Procedure:

- Extract all non-dominated solutions from the final dataset.

- Perform k-means clustering on the Pareto set in parameter space to identify distinct regimes of conditions (e.g., high-temp/low-catalyst vs. low-temp/high-catalyst clusters).

- Apply a simple Multi-Criteria Decision Analysis (MCDA) tool, such as the Weighted Sum Method, incorporating stakeholder preferences (e.g., "Yield weight = 0.6, Cost weight = 0.4").

- Select top-ranked conditions from different clusters for robustness.

- Execute confirmatory runs (n≥3) to establish reproducibility and estimate uncertainty at the selected optimum.
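The weighted-sum step can be sketched as follows, assuming min-max normalization over the Pareto set and flipping of minimized objectives. The Pareto set and weights are hypothetical.

```python
import numpy as np

def weighted_sum_rank(Y, weights, maximize):
    """Rank Pareto-optimal conditions by a weighted sum of min-max normalized objectives.

    Y: (n, m) objective values; weights: (m,) stakeholder weights summing to 1;
    maximize: (m,) booleans, False for objectives to minimize (e.g., cost).
    Returns row indices from best to worst, and the scores.
    """
    Y = np.asarray(Y, dtype=float)
    span = Y.max(axis=0) - Y.min(axis=0)
    span[span == 0] = 1.0                      # constant objective: avoid divide-by-zero
    Z = (Y - Y.min(axis=0)) / span             # 0 = lowest observed value, 1 = highest
    Z = np.where(maximize, Z, 1.0 - Z)         # flip minimized objectives so 1 is always best
    scores = Z @ np.asarray(weights, dtype=float)
    return np.argsort(-scores), scores

# Hypothetical Pareto set: columns = yield (%), cost ($/g)
pareto = np.array([[92.0, 40.0], [85.0, 22.0], [78.0, 15.0]])
order, scores = weighted_sum_rank(pareto, weights=[0.6, 0.4], maximize=[True, False])
```

Whatever the weights, a weighted sum cannot select points in non-convex regions of the Pareto front. This is one reason to also inspect the top candidates from each cluster.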

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for MOBO Reaction Studies

| Item | Function & Specification | Example Vendor/Product |
|---|---|---|
| High-Throughput Experimentation (HTE) Kit | Pre-weighed, arrayed substrates/catalysts in plates for parallel reaction set-up. | Merck-Sigma Aldrich "Snapware"; Chemglass "HTE Reaction Blocks" |
| Automated Liquid Handling System | Precise dispensing of solvents, reagents, and catalysts for reproducibility. | Hamilton ML STAR; Opentrons OT-2 |
| Multi-Channel Reactor with Inline Analytics | Parallel reaction execution with real-time monitoring (e.g., FTIR, Raman). | Mettler Toledo OptiMax; Unchained Labs "Junior" |
| UPLC/HPLC with Automated Injector | High-throughput analysis of yield and selectivity. | Waters Acquity; Agilent InfinityLab |
| Chiral Stationary Phase Columns | Essential for determining enantiomeric excess (ee) in asymmetric synthesis. | Daicel CHIRALPAK (IA, IC, ID); Phenomenex Lux |
| Process Mass Intensity (PMI) Calculator | Software to calculate green chemistry metrics from reaction parameters. | ACS PMI Calculator; myGreenLab "GEC" |
| MOBO Software Platform | Open-source or commercial packages for designing experiments and modeling. | BoTorch (PyTorch); "MOE" from Chemical Computing Group; "modeFRONTIER" |

Application Notes

Within Bayesian multi-objective optimization (MOBO) for reaction condition research, three advantages enable rapid, informed, and scalable discovery. This is critical in pharmaceutical development, where objectives such as yield, enantioselectivity, and cost often compete, and experimental material (e.g., rare substrates, catalyst libraries) is limited.

1. Sample Efficiency: Bayesian MOBO builds a probabilistic surrogate of the reaction landscape, primarily with Gaussian Processes (GPs). Acquisition functions (e.g., Expected Hypervolume Improvement) then steer experiments toward conditions predicted to improve multiple objectives simultaneously. This can substantially reduce the number of required experiments compared with grid search or one-factor-at-a-time methods.

2. Uncertainty Quantification: The GP model provides a posterior distribution for each predicted outcome (mean and variance). This quantifies the confidence in predictions across the condition space. Researchers can explicitly balance exploration (testing high-uncertainty regions) against exploitation (refining known high-performance regions), mitigating the risk of overlooking optimal conditions.

3. Parallelizability: Batch acquisition functions (e.g., q-EHVI, q-NParEGO) propose several diverse experimental conditions per iteration for parallel evaluation. This matches the capacity of high-throughput experimentation platforms (e.g., parallel reactor blocks) while keeping every batch model-guided rather than random.
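To make the uncertainty-quantification point concrete, the sketch below computes an exact GP posterior (mean and standard deviation) for a single normalized variable with a Matern 5/2 kernel, using only NumPy. The data points, length-scale, noise level, and the upper-confidence-bound (UCB) rule used to pick the next point are illustrative assumptions; UCB is a single-objective rule shown only to illustrate the exploration/exploitation balance, not one of the multi-objective acquisition functions discussed in this article.

```python
import numpy as np


def matern52(a, b, lengthscale=0.2, variance=1.0):
    """Matern 5/2 covariance between two 1-D arrays of inputs."""
    d = np.abs(a[:, None] - b[None, :]) / lengthscale
    s5 = np.sqrt(5.0) * d
    return variance * (1.0 + s5 + 5.0 * d**2 / 3.0) * np.exp(-s5)


def gp_posterior(x_train, y_train, x_test, noise=1e-4, lengthscale=0.2, variance=1.0):
    """Exact GP posterior mean and standard deviation (zero prior mean)."""
    K = matern52(x_train, x_train, lengthscale, variance) + noise * np.eye(len(x_train))
    K_s = matern52(x_train, x_test, lengthscale, variance)
    L = np.linalg.cholesky(K)                                   # K = L @ L.T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))   # K^-1 y
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(variance - np.sum(v**2, axis=0), 0.0, None)   # prior var minus explained part
    return mean, np.sqrt(var)


# Hypothetical data: normalized temperature vs. normalized yield
x_train = np.array([0.1, 0.4, 0.9])
y_train = np.array([0.35, 0.72, 0.50])
x_grid = np.linspace(0.0, 1.0, 101)
mean, std = gp_posterior(x_train, y_train, x_grid)

beta = 2.0                      # larger beta puts more weight on uncertain regions
ucb = mean + beta * std
next_x = x_grid[np.argmax(ucb)]
```

Near the measured points the standard deviation shrinks toward the noise level, while between and beyond them it grows, so increasing `beta` pushes the next proposal toward less-explored temperatures.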

Table 1: Comparison of Optimization Performance in a Simulated Pd-Catalyzed Cross-Coupling Screen

| Optimization Method | Experiments to Reach Target Hypervolume | Final Hypervolume | Avg. Parallel Utilization (Expts/Batch) |
|---|---|---|---|
| Bayesian MOBO (q-EHVI) | 42 | 0.87 | 4 |
| Random Search | 118 | 0.81 | 4 |
| Single-Objective BO (Yield only) | 60* | 0.79 | 1 |
| Full Factorial Design | 256 (exhaustive) | 0.85 | N/A |

*Yield-optimized path ignored selectivity objective. Hypervolume measured relative to normalized objectives: Yield (0-100%), Selectivity (0-100%), Cost (inverted scale). Target hypervolume set at 95% of maximum found.

Table 2: Impact of Uncertainty-Guided Exploration on Outcome Robustness

| Strategy (Acquisition Function) | Probability of Finding True Pareto Front (%) | Max Performance Drop on Validation (%) |
|---|---|---|
| EHVI (Exploit + Explore) | 98 | 5.2 |
| Pure Exploitation | 65 | 15.7 |
| Pure Exploration | 92 | 8.1 |

Results based on 50 simulated runs of a 5-objective reaction optimization problem.

Experimental Protocols

Protocol 1: Setting Up a Bayesian MOBO Workflow for High-Throughput Reaction Screening

Objective: To identify Pareto-optimal conditions for a catalytic reaction maximizing yield and enantiomeric excess (ee).

Materials:

- Automated liquid handling station.

- Parallel reaction block (e.g., 24- or 96-well).

- Pre-prepared stock solutions of catalyst, ligands, substrates, bases, and solvents.

- UPLC/MS system for rapid analysis.

Procedure:

- Define Design Space: Specify continuous (temperature, concentration) and categorical (catalyst identity, solvent type) variables. Normalize the continuous ranges to [0, 1].

- Define Objectives: Specify primary (e.g., Yield) and secondary (e.g., ee) objectives. Determine direction (maximize/minimize).

- Initial Design: Perform a space-filling initial design (e.g., Sobol sequence, 10-20 points) covering the variable space. Execute these experiments in parallel.

- Model Initialization: For each objective, fit a GP model with a composite kernel (e.g., Matern for continuous, Hamming for categorical variables) to the initial data.

- Iterative Optimization Loop: a. Acquisition: Using the fitted models, compute the q-EHVI acquisition function to select the next batch (e.g., 4) of candidate conditions. b. Parallel Execution: Physically set up and run the batch of proposed reactions simultaneously. c. Analysis: Quantify yield and ee for all reactions in the batch. d. Model Update: Augment the dataset with the new results and refit the GP models.

- Termination: Halt after a predefined budget (e.g., 80 experiments) or convergence criterion (e.g., improvement in hypervolume < 2% over 3 iterations).

- Pareto Front Analysis: Identify the set of non-dominated optimal conditions from the final dataset for downstream validation.
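The Pareto Front Analysis and Termination steps can be sketched in plain Python as follows, assuming every objective has been oriented so that larger is better (e.g., yield and ee). The stopping rule is one possible reading of "improvement in hypervolume < 2% over 3 iterations": the relative hypervolume gain between the current iteration and three iterations earlier. The hypervolume values themselves are assumed to come from elsewhere in the workflow, and the example data are hypothetical.

```python
def dominates(a, b):
    """True if a Pareto-dominates b (all objectives maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points):
    """Indices of the non-dominated points in a list of objective vectors."""
    return [
        i for i, p in enumerate(points)
        if not any(dominates(q, p) for j, q in enumerate(points) if j != i)
    ]


def should_stop(hv_history, n_evaluated, budget=80, rel_tol=0.02, window=3):
    """Stop when the experiment budget is spent, or when the hypervolume grew by
    less than rel_tol (relative) over the last `window` iterations."""
    if n_evaluated >= budget:
        return True
    if len(hv_history) <= window:
        return False                      # not enough iterations to judge
    old, new = hv_history[-1 - window], hv_history[-1]
    return old > 0 and (new - old) / old < rel_tol


# Hypothetical (yield %, ee %) results
results = [(72, 88), (85, 80), (60, 95), (70, 85)]
front = pareto_front(results)             # (70, 85) is dominated by (72, 88)
stop = should_stop([0.50, 0.61, 0.66, 0.665, 0.668, 0.670], n_evaluated=40)
```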

Protocol 2: Validating Uncertainty Estimates via Hold-Out Experiments

Objective: To assess the calibration of the GP model's uncertainty predictions.

Procedure:

- After completing the MOBO run, randomly withhold 20% of the experimental data as a test set.

- Train the final GP model on the remaining 80% of data.

- Use the trained model to predict the mean (μ) and standard deviation (σ) for each point in the test set.

- For each test point, calculate the Z-score: (Actual_Value - μ) / σ.

- Assess calibration: The distribution of Z-scores across the test set should approximate a standard normal distribution (mean=0, std=1). Systematic deviations indicate poorly quantified uncertainty.

- Refine the GP kernel or likelihood function based on this analysis to improve future model reliability.
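The Z-score check in Protocol 2 reduces to a few lines of Python. Alongside the mean and standard deviation of the Z-scores, the sketch below reports the fraction of test points within ±1.96σ, which should be close to 95% for a well-calibrated Gaussian model; that extra coverage statistic and the example values are illustrative assumptions.

```python
import statistics


def calibration_summary(actual, mu, sigma):
    """Summarize Z-scores (actual - mu) / sigma for a held-out test set."""
    z = [(a - m) / s for a, m, s in zip(actual, mu, sigma)]
    return {
        "z_mean": statistics.fmean(z),     # ~0 if predictions are unbiased
        "z_std": statistics.stdev(z),      # >1: over-confident, <1: under-confident
        "coverage_95": sum(abs(v) <= 1.96 for v in z) / len(z),
    }


# Hypothetical hold-out yields (%) with GP predictions
summary = calibration_summary(
    actual=[80, 72, 90, 65],
    mu=[78, 75, 88, 66],
    sigma=[2, 3, 2, 1],
)
```

With only a 20% hold-out, the test set may contain just a handful of points, so these statistics are noisy; a `z_std` well above 1 indicates that the model understates its uncertainty.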

Visualizations

Bayesian MOBO Workflow for Reaction Optimization

Uncertainty Quantification Informs Experiment Selection

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Bayesian MOBO-Driven Reaction Optimization

| Item | Function in Bayesian MOBO Context |
|---|---|
| High-Throughput Experimentation (HTE) Kit | Pre-weighed, standardized vials of diverse catalyst/ligand libraries, substrates, and additives. Enables rapid, parallel assembly of the condition batches proposed by the algorithm. |
| Automated Liquid Handler | Precisely dispenses microliter volumes from stock solutions. Critical for reliably and reproducibly executing the discrete conditions proposed by the optimization algorithm. |
| Parallel Pressure Reactor | A block of multiple miniature reactors allowing simultaneous execution of reactions under inert atmosphere and controlled heating/stirring. Maximizes parallelizability. |
| Rapid UPLC-MS/Chiral Station | Provides fast quantitative analysis of yield and conversion and, with chiral columns, of ee and selectivity, matching the high sample turnover required by iterative BO loops. |
| BO Software Platform (e.g., BoTorch, Ax) | Open-source or commercial libraries that implement GP models, multi-objective acquisition functions (EHVI), and offer APIs to integrate with lab automation. |
| Chemical Data Management System | A structured database (e.g., ELN/LIMS) to log all experimental conditions (features) and outcomes (objectives), creating the essential dataset for model training and iteration. |

A Step-by-Step Workflow: Implementing Bayesian MOBO in Your Lab for Reaction Screening

Within a Bayesian multi-objective optimization (MOBO) framework for chemical reaction development, the precise definition of the initial search space is paramount. This step directly influences the efficiency of the optimization algorithm in navigating the complex parameter landscape towards Pareto-optimal conditions, balancing objectives such as yield, enantioselectivity, cost, and sustainability. This application note details the methodology for defining the critical parameter space for a model Suzuki-Miyaura cross-coupling reaction, a workhorse transformation in pharmaceutical synthesis.

Critical Parameter Selection & Rationale

For a generic aryl halide – boronic acid cross-coupling, four parameters are identified as most influential:

- Catalyst: Determines reaction feasibility, rate, and potential for side reactions.

- Solvent: Impacts catalyst solubility, stability, and reaction mechanism.

- Temperature: Governs reaction kinetics and thermodynamics.

- Time: Ensures reaction completion while minimizing decomposition.

Defined Search Space Ranges

Based on a survey of recent literature (2023-2024) and chemical feasibility, the following discrete and continuous ranges are proposed for initial Bayesian optimization.

Table 1: Defined Parameter Space for Suzuki-Miyaura Optimization

| Parameter | Type | Levels / Range | Rationale |
|---|---|---|---|
| Catalyst | Categorical | Pd(PPh3)4, Pd(dppf)Cl2, SPhos Pd G2, XPhos Pd G3 | Common, commercially available catalysts with varied steric/electronic properties. |
| Solvent | Categorical | 1,4-Dioxane, Toluene, DMF, EtOH/H2O (4:1) | Covers a range of polarities, coordinating abilities, and green chemistry considerations. |
| Temperature | Continuous | 50 °C – 120 °C | Below 50 °C, rates may be impractically slow; above 120 °C, solvent boiling and decomposition become a risk. |
| Time | Continuous | 1 – 24 hours | Practical range for standard laboratory operation. |
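One simple way to hand this mixed search space to a GP is to one-hot encode the two categorical parameters and min-max scale the two continuous ones to [0, 1]. The sketch below assumes that encoding (kernels that act directly on categorical levels, such as Hamming-distance kernels, are an alternative); the level names mirror Table 1, and the function names are hypothetical.

```python
CATALYSTS = ["Pd(PPh3)4", "Pd(dppf)Cl2", "SPhos Pd G2", "XPhos Pd G3"]
SOLVENTS = ["1,4-Dioxane", "Toluene", "DMF", "EtOH/H2O (4:1)"]
BOUNDS = {"temperature_C": (50.0, 120.0), "time_h": (1.0, 24.0)}


def one_hot(value, levels):
    """Indicator vector for a categorical level."""
    if value not in levels:
        raise ValueError(f"unknown level: {value!r}")
    return [1.0 if value == level else 0.0 for level in levels]


def scale(value, low, high):
    """Min-max scale a continuous value into [0, 1]."""
    if not low <= value <= high:
        raise ValueError(f"{value} outside [{low}, {high}]")
    return (value - low) / (high - low)


def encode(catalyst, solvent, temperature_C, time_h):
    """Feature vector: 4 catalyst bits + 4 solvent bits + 2 scaled continuous values."""
    return (
        one_hot(catalyst, CATALYSTS)
        + one_hot(solvent, SOLVENTS)
        + [scale(temperature_C, *BOUNDS["temperature_C"]),
           scale(time_h, *BOUNDS["time_h"])]
    )


x = encode("SPhos Pd G2", "Toluene", 85.0, 12.5)
```

The 4 × 4 categorical grid alone gives 16 catalyst/solvent combinations before temperature and time are considered, which is why a model-guided search pays off even for this compact space.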

Experimental Protocol: High-Throughput Initial Condition Screening

This protocol supports the generation of initial data points for the MOBO model.

Materials & Equipment

- Reactants: Aryl halide (1.0 equiv; 0.1 mmol per well), boronic acid (1.5 equiv), base (e.g., K2CO3, 2.0 equiv).

- Catalyst Stock Solutions: 10 mM in appropriate anhydrous solvent.

- Solvents: Anhydrous and degassed 1,4-Dioxane, Toluene, DMF. EtOH/H2O mixture.

- Hardware: 24-well glass reaction block, aluminum heating block with stirring, inert atmosphere (N2/Ar) manifold.

Procedure

- Preparation: Under an inert atmosphere, prepare separate stock solutions of the aryl halide and boronic acid in each candidate solvent (0.1 M concentration).

- Dispensing: To each well of the reaction block, add: 1.0 mL aryl halide stock (0.1 mmol), 1.5 mL boronic acid stock (0.15 mmol), solid base (0.2 mmol).

- Catalyst Addition: Add 100 µL of the appropriate catalyst stock solution (0.001 mmol, 1 mol% Pd relative to the aryl halide).

- Reaction Initiation: Seal the block, place in a pre-heated aluminum block at the target temperature (±1 °C), and initiate stirring (700 rpm).

- Quenching: At the predetermined time, remove the block and quench each well with 1 mL of saturated aqueous NH4Cl.

- Analysis: Extract with ethyl acetate, dry over MgSO4, and analyze by quantitative GC-FID or UPLC-MS using an internal standard. Calculate yield and, if applicable, enantiomeric excess (ee) via chiral stationary phase HPLC.
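The dispensing arithmetic behind this procedure can be checked with a small helper. It assumes stock concentrations in mol/L (numerically equal to mmol/mL) and the 0.1 mmol per-well scale stated above; with a 10 mM catalyst stock, 1 mol% Pd then corresponds to 0.1 mL (100 µL) of stock. The function and parameter names are hypothetical.

```python
def volume_mL(mmol_needed, stock_M):
    """Volume of stock (mL) delivering mmol_needed; 1 M == 1 mmol/mL."""
    return mmol_needed / stock_M


def plan_well(limiting_mmol=0.1, boronic_equiv=1.5, base_equiv=2.0,
              cat_mol_pct=1.0, substrate_stock_M=0.1, catalyst_stock_M=0.010):
    """Per-well amounts for one Suzuki-Miyaura screening well (hypothetical helper)."""
    return {
        "aryl_halide_mL": volume_mL(limiting_mmol, substrate_stock_M),
        "boronic_acid_mL": volume_mL(limiting_mmol * boronic_equiv, substrate_stock_M),
        "catalyst_mL": volume_mL(limiting_mmol * cat_mol_pct / 100.0, catalyst_stock_M),
        "base_mmol": limiting_mmol * base_equiv,   # base is added as a solid
    }


well = plan_well()
```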

Table 2: Key Research Reagent Solutions

| Item | Function | Example/Specification |
|---|---|---|
| Pd Precatalyst Stock Solutions | Provides consistent, accurate catalyst dispensing. | 10 mM SPhos Pd G2 in anhydrous THF, stored under argon. |
| Degassed Solvents | Prevents catalyst oxidation/deactivation. | Solvents sparged with Ar for 30 min prior to use. |
| Internal Standard Solution | Enables accurate quantitative yield analysis. | 0.05 M dimethyl terephthalate in ethyl acetate. |
| Quench Solution | Stops the reaction uniformly for all samples. | Saturated aqueous ammonium chloride (aq. NH4Cl). |

Bayesian Optimization Workflow Diagram

Diagram Title: Bayesian Optimization Loop for Reaction Screening

Within the thesis on Bayesian multi-objective optimization (MOBO) for reaction conditions research in pharmaceutical development, selecting the appropriate acquisition function is a critical methodological step. This choice dictates how the algorithm balances exploration of the design space against exploitation of known high-performing regions across multiple, often competing, objectives (e.g., reaction yield, enantiomeric excess, cost, safety). The application notes below cover three prominent functions: Expected Hypervolume Improvement (EHVI), ParEGO, and Multi-Objective Expected Improvement (MOEI).

The table below provides a structured comparison to guide selection based on research goals.

Table 1: Quantitative and Qualitative Comparison of MOBO Acquisition Functions

| Feature | Expected Hypervolume Improvement (EHVI) | ParEGO | Multi-Objective Expected Improvement (MOEI) |
|---|---|---|---|
| Core Principle | Directly maximizes the increase in dominated hypervolume. | Scalarizes objectives via random weights, applies single-objective EI. | Extends EI via maximin improvement or random scalarization. |
| Primary Goal | Convergence & Diversity. Find a Pareto front that maximizes overall coverage. | Convergence-focused. Efficiently approach a region of the Pareto front. | Exploration-focused. Good for initial search; can find diverse solutions. |
| Scalability (Objectives) | Computationally expensive beyond ~4 objectives (HV calculation scales roughly as O(n^(k/2)) for n points and k objectives). | Scales well to many objectives (≥4). | Moderate, depends on implementation. |
| Parameter Sensitivity | Low. Hypervolume reference point is main parameter. | Medium. Sensitive to the distribution of random weights and scalarization function (e.g., Tchebycheff). | Medium. May require tuning of the scalarization or improvement metric parameters. |
| Computational Cost | High per iteration; often estimated by Monte Carlo integration. | Very Low. Uses fast single-objective optimization. | Moderate. Typically lower than EHVI. |
| Ideal Use Case in Drug Dev. | Final-stage optimization of ≤4 key reaction metrics (e.g., yield, purity, throughput). | High-dimensional objective space (e.g., optimizing yield against multiple impurity profiles). | Early-phase screening where broad exploration of reaction condition space is paramount. |

Application Protocols

Protocol 3.1: Implementing EHVI for Pareto Front Refinement

Objective: To precisely refine the Pareto-optimal set for 2-4 critical reaction objectives after initial screening. Materials: Gaussian Process (GP) surrogate models for each objective, historical experimental data. Procedure:

- Define Reference Point (z_ref): Set to a vector of "worst acceptable" values for each objective (e.g., [min yield, min ee]) based on domain knowledge. This is critical for EHVI performance.

- Model Training: Train independent GP models on all available reaction data for each objective.

- Monte Carlo EHVI Calculation: a. Draw joint posterior samples from the GPs across a candidate set of reaction conditions. b. For each sample, compute the hypervolume improvement over the current best Pareto set. c. Average the improvement across all samples to estimate EHVI.

- Select Next Experiment: Choose the reaction condition (e.g., catalyst, solvent, temperature) maximizing the EHVI value.

- Iterate: Run the experiment, update the dataset, refit the GP models, and repeat from the Monte Carlo EHVI calculation.
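A minimal sketch of the Monte Carlo EHVI estimate for two maximized objectives (e.g., normalized yield and ee) follows. It assumes independent GPs, so each candidate's prediction is summarized by a per-objective mean and standard deviation and sampled from independent normals; this is adequate for scoring one candidate at a time, whereas batch (q-EHVI) selection needs joint samples across candidates. The front, reference point, and predictions are hypothetical.

```python
import numpy as np


def hypervolume_2d(front, ref):
    """Area dominated by a 2-objective front (both maximized) and bounded by ref."""
    pts = sorted((p for p in front if p[0] > ref[0] and p[1] > ref[1]),
                 key=lambda p: p[0], reverse=True)
    hv, best_y = 0.0, ref[1]
    for x, y in pts:                # sweep from largest to smallest first objective
        if y > best_y:              # only points raising the second objective add area
            hv += (x - ref[0]) * (y - best_y)
            best_y = y
    return hv


def mc_ehvi(mean, std, front, ref, n_samples=2000, seed=0):
    """Monte Carlo EHVI of one candidate with independent Gaussian predictions."""
    rng = np.random.default_rng(seed)
    base = hypervolume_2d(front, ref)
    samples = rng.normal(mean, std, size=(n_samples, 2))
    gains = [hypervolume_2d(front + [tuple(s)], ref) - base for s in samples]
    return float(np.mean(gains))


front = [(0.80, 0.60), (0.65, 0.85)]   # current Pareto set (normalized)
ref = (0.5, 0.5)                        # "worst acceptable" reference point
ehvi_a = mc_ehvi(np.array([0.78, 0.78]), np.array([0.05, 0.05]), front, ref)
ehvi_b = mc_ehvi(np.array([0.60, 0.55]), np.array([0.02, 0.02]), front, ref)
```

Candidate A, predicted beyond the current front, receives a clearly positive EHVI, while candidate B, predicted inside the dominated region, scores essentially zero.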

Protocol 3.2: Implementing ParEGO for Many-Objective Optimization

Objective: To efficiently drive optimization when considering ≥4 reaction performance metrics. Materials: GP models, random weight generator. Procedure:

- Scalarization: At each iteration, generate a random weight vector (λ) from a Dirichlet distribution.

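- Create Scalarized Objective: Normalize each objective to [0, 1], then transform the objective values of each data point with the augmented Tchebycheff function: f_scalar = max_i[ λ_i * |y_i - z_i| ] + ρ * Σ_i (λ_i * |y_i - z_i| ), where z_i is the ideal point and ρ = 0.05. Lower f_scalar is better, so the subsequent EI step treats it as a minimization target.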

- Build Single GP: Train a single GP model on the scalarized objective values.

- Maximize Expected Improvement (EI): Use standard, efficient EI to select the next reaction condition to evaluate.

- Iterate: Run experiment, add result to dataset, repeat from step 1 with a new random weight vector.
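The scalarization step of Protocol 3.2 can be sketched in plain Python. Dirichlet(1, ..., 1) weights are drawn by normalizing independent Gamma(1, 1) variates, and the augmented Tchebycheff value follows the formula above with ρ = 0.05. The sketch assumes objectives already normalized to [0, 1] with larger values better and an ideal point of all ones; the example data are hypothetical, and fitting the single GP and maximizing EI are not shown.

```python
import random


def dirichlet_weights(k, rng):
    """Uniform random weight vector on the simplex, i.e. Dirichlet(1, ..., 1)."""
    g = [rng.gammavariate(1.0, 1.0) for _ in range(k)]
    total = sum(g)
    return [x / total for x in g]


def augmented_tchebycheff(y, ideal, lam, rho=0.05):
    """ParEGO-style scalarization of a normalized objective vector (lower is better)."""
    terms = [w * abs(yi - zi) for w, yi, zi in zip(lam, y, ideal)]
    return max(terms) + rho * sum(terms)


rng = random.Random(7)
lam = dirichlet_weights(4, rng)                         # fresh weights each iteration
Y_norm = [[0.9, 0.7, 0.4, 0.8], [0.6, 0.9, 0.8, 0.5]]   # hypothetical normalized data
ideal = [1.0, 1.0, 1.0, 1.0]
f_scalar = [augmented_tchebycheff(y, ideal, lam) for y in Y_norm]  # single-GP targets
```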

Protocol 3.3: Implementing MOEI for Exploratory Screening

Objective: To broadly explore a new reaction's condition space before focused optimization. Materials: GP models. Procedure:

- Model Training: Train independent GPs for each objective.

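- Calculate Maximin Improvement: a. For each candidate condition, draw posterior samples. b. For each sample and each point on the current Pareto front, take the largest per-objective gain of the sample over that point; the smallest of these values across the front, clipped at zero, is the maximin improvement (zero whenever the sample is dominated). c. The MOEI value is the expected value of this maximin improvement.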

- Alternative: Random Scalarization EI: Similar to ParEGO but often with fixed or fewer weight vectors aimed at exploration.

- Select Next Experiment: Choose the condition with the highest MOEI value.

- Iterate: Experiment, update, and repeat. Typically used for the first 10-20 iterations before switching to EHVI or ParEGO.
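For the maximin form of MOEI, a Monte Carlo estimate can be sketched with NumPy as below, assuming all objectives are maximized and each candidate's prediction is sampled from independent normals (one GP per objective). The function names and example numbers are hypothetical.

```python
import numpy as np


def maximin_improvement(y, front):
    """For each Pareto point take y's largest per-objective gain, keep the smallest
    of those, and clip at zero (zero when y is dominated by some Pareto point)."""
    gains = np.max(y[None, :] - front, axis=1)   # one value per Pareto point
    return max(0.0, float(np.min(gains)))


def mc_moei(mean, std, front, n_samples=4000, seed=0):
    """Expected maximin improvement under independent Gaussian predictions."""
    rng = np.random.default_rng(seed)
    samples = rng.normal(mean, std, size=(n_samples, len(mean)))
    return float(np.mean([maximin_improvement(s, front) for s in samples]))


front = np.array([[0.80, 0.60], [0.65, 0.85]])   # current Pareto front (normalized)
score = mc_moei(np.array([0.78, 0.78]), np.array([0.05, 0.05]), front)
```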

Visualizations

Title: Acquisition Function Selection Decision Tree

Title: MOBO Workflow with Acquisition Function Step

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Materials for Bayesian Optimization of Reaction Conditions

| Item | Function in MOBO Research |
|---|---|
| Automated Reactor Platform (e.g., Chemspeed, Unchained Labs) | Enables high-throughput, reproducible execution of candidate reaction conditions generated by the BO algorithm. |
| Online Analytical Instrumentation (e.g., UPLC, GC-MS, FTIR) | Provides rapid, quantitative multi-objective data (conversion, purity, selectivity) for immediate feedback into the BO loop. |
| GPy / BoTorch (Python Libraries) | Core software for building Gaussian Process models and implementing acquisition functions (EHVI, ParEGO, MOEI). |
| Dirichlet Distribution Sampler | Crucial for generating the random weight vectors in ParEGO to ensure effective exploration of the many-objective space. |
| Hypervolume Calculation Library (e.g., pygmo, deap) | Required for evaluating EHVI and benchmarking the performance of the final Pareto front. |

Application Notes

This protocol outlines the critical third step in a Bayesian multi-objective optimization (MOBO) framework for pharmaceutical reaction optimization. The objective is to transition from a prior model to an informed posterior by collecting a minimal, high-value initial dataset. This dataset bootstraps the active learning cycle, enabling efficient navigation of the complex trade-off space between reaction yield, enantioselectivity (e.r.), and cost/safety objectives.

A strategically designed Design of Experiments (DoE) is employed for this initial data collection, moving beyond traditional one-factor-at-a-time approaches. The data feeds a Gaussian Process (GP) surrogate model, which forms the core of the Bayesian optimizer. The quality of this initial design directly impacts the convergence rate and resource efficiency of the entire MOBO campaign.

Experimental Protocol: Initial DoE Execution and Data Collection

Objective

To execute a pre-defined experimental design (e.g., Latin Hypercube Sample, Sobol Sequence) for the catalyzed asymmetric reaction under study, collecting precise data on primary (Yield, e.r.) and secondary (Cost, Safety Index) objectives to populate the initial training set for the Bayesian MOBO model.

Pre-Experiment Requirements

- Completed Steps: Step 1 (Parameter Space Definition) and Step 2 (Prior Model & DoE Generation).

- Validated Design: A computer-generated design of 12-20 unique reaction condition sets within the defined parameter bounds.

- Materials: All reagents, catalysts, and solvents, as specified in the "Research Reagent Solutions" table, pre-characterized for quality.

Safety & Preparation

- Review all relevant Material Safety Data Sheets (MSDS).

- Perform all manipulations in an appropriately ventilated fume hood.

- Prepare and label individual vials or reaction vessels for each design point.

Detailed Procedure

Part A: Parallelized Reaction Setup

- Parameter Translation: Map each coded design point (e.g., values between -1 and 1) to actual physical conditions using the scaling equations defined in Step 1.

- Master Stock Solutions: Prepare stock solutions of substrate, catalyst, and any ligands in the specified dry solvent to ensure consistency across variable concentration conditions.

- Aliquoting: Using a calibrated automated liquid handler or positive displacement pipettes, transfer the specified volumes of stock solutions or neat reagents into each reaction vessel according to the design matrix. The order of addition should be standardized (e.g., solvent, substrate, catalyst, additive).

- Environment Control: Place all sealed reaction vessels onto a pre-equilibrated parallel stirring/heating block. Confirm each vessel reaches and maintains its designated temperature (±1°C).
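The Parameter Translation step is a linear map from coded levels in [-1, 1] to physical units. The sketch below assumes that form (center plus coded level times half-range); the actual scaling equations come from Step 1, and the bounds used here are hypothetical.

```python
def coded_to_physical(coded, low, high):
    """Map a coded level in [-1, 1] onto the physical range [low, high]."""
    if not -1.0 <= coded <= 1.0:
        raise ValueError("coded level must lie in [-1, 1]")
    center, half_range = (high + low) / 2.0, (high - low) / 2.0
    return center + coded * half_range


# Hypothetical bounds for two continuous factors
BOUNDS = {"catalyst_mol_pct": (1.0, 5.0), "temperature_C": (30.0, 70.0)}
design_row = {"catalyst_mol_pct": -0.25, "temperature_C": 0.5}   # one coded design point
physical = {name: coded_to_physical(v, *BOUNDS[name]) for name, v in design_row.items()}
```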

Part B: Reaction Monitoring & Quenching

- Time Points: For reactions with uncertain kinetics, remove a small aliquot (e.g., 10 µL) from designated "kinetic probe" reactions at t = 30 min, 1 h, 2 h, 4 h, and 8 h for immediate analysis.

- Standard Quench: At the designated reaction time, simultaneously quench all reactions by injecting a pre-calculated volume of a standardized quenching agent (e.g., a 1:1 mixture of ethyl acetate and saturated aqueous NH₄Cl) using a programmable syringe pump.

Part C: Product Analysis & Data Extraction

- Sample Workup: Extract each quenched reaction mixture with a predefined volume of ethyl acetate (3 x 1 mL). Combine organic layers, dry over anhydrous MgSO₄, filter, and concentrate under reduced pressure.

- Yield Determination:

- Dissolve the crude residue in a known volume of a deuterated solvent containing a precise concentration of an internal standard (e.g., 1,3,5-trimethoxybenzene).

- Acquire ¹H NMR spectrum.

- Calculate yield by integrating the characteristic product peak(s) against the internal standard peak.

- Enantioselectivity Determination:

- Dilute a portion of the crude sample for chiral HPLC or SFC analysis.

- Use a validated chiral stationary phase (e.g., Chiralpak AD-H, OD-H).

- Calculate enantiomeric ratio (e.r.) from the integrated peak areas of the two enantiomers.

- Objective Calculation: For each reaction i, compute the objective vector yᵢ:

- Objective 1 (Maximize): Yield (%) = NMR yield.

- Objective 2 (Maximize): Selectivity = ln(e.r.), the natural logarithm of the enantiomer ratio, which places the ratio on a symmetric log scale (a racemic 50:50 outcome gives 0).

- Objective 3 (Minimize): Cost Index = Σ (amount of each reagent in mmol × its price per mmol).

- Objective 4 (Minimize): Safety Index = Σ (assigned penalty scores for solvent and reagent hazards).
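The objective calculations above can be expressed as small helper functions. The qNMR yield assumes each integral is divided by its number of contributing protons (e.g., the three equivalent aromatic protons of 1,3,5-trimethoxybenzene), and the cost helper assumes a price per mmol is available for each reagent; all names and example values are hypothetical.

```python
import math


def nmr_yield_pct(i_prod, n_h_prod, i_std, n_h_std, mmol_std, mmol_limiting):
    """qNMR yield: product moles from per-proton integrals relative to the internal standard."""
    mmol_prod = (i_prod / n_h_prod) / (i_std / n_h_std) * mmol_std
    return 100.0 * mmol_prod / mmol_limiting


def ln_er(major, minor):
    """Natural log of the enantiomeric ratio (0 for a racemate)."""
    return math.log(major / minor)


def cost_index(mmol_by_reagent, price_per_mmol):
    """Sum over reagents of amount used (mmol) times price per mmol."""
    return sum(mmol * price_per_mmol[name] for name, mmol in mmol_by_reagent.items())


yield_pct = nmr_yield_pct(i_prod=2.0, n_h_prod=3, i_std=3.0, n_h_std=3,
                          mmol_std=0.10, mmol_limiting=0.10)
selectivity = ln_er(88, 12)
cost = cost_index({"substrate": 0.10, "catalyst": 0.005},
                  {"substrate": 40.0, "catalyst": 500.0})
```

For example, ln(92/8) ≈ 2.44, consistent with the natural-log values in the log(e.r.) column of Table 1.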

Data Recording & Curation

Record all raw and calculated data in a structured table (see Table 1). This table constitutes the initial training data D = {X, Y} for the GP model.

Data Presentation

Table 1: Initial DoE Data for Model Bootstrapping (Example Subset)

| Exp ID | Catalyst (mol%) | Temp (°C) | Time (h) | [Sub] (M) | Solvent | Yield (%) | e.r. | log(e.r.) | Cost Index | Safety Index |
|---|---|---|---|---|---|---|---|---|---|---|
| D01 | 2.5 | 30 | 18 | 0.10 | Toluene | 45 | 88:12 | 1.99 | 12.5 | 15 |
| D02 | 5.0 | 50 | 6 | 0.05 | DCM | 78 | 92:8 | 2.44 | 18.7 | 18 |
| D03 | 1.0 | 70 | 12 | 0.15 | MeCN | 15 | 80:20 | 1.39 | 8.9 | 12 |
| D04 | 3.5 | 40 | 24 | 0.08 | THF | 92 | 95:5 | 2.94 | 22.1 | 16 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| D16 | 4.5 | 35 | 8 | 0.12 | EtOAc | 85 | 90:10 | 2.20 | 20.5 | 10 |

Visualization: Experimental and Computational Workflow

Diagram 1: Step 3 workflow for bootstrapping Bayesian MOBO.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for High-Throughput Reaction Optimization

| Item | Function / Rationale |
|---|---|
| Automated Liquid Handler | Ensures precise, reproducible dispensing of variable reagent volumes across dozens of experiments, critical for DoE fidelity. |
| Parallel Reaction Block | Enables simultaneous execution of all DoE points under controlled temperature and stirring, eliminating temporal bias. |
| Dry, Degassed Solvents | Contributes to reproducibility, especially for air/moisture-sensitive organometallic catalysts. |
| Internal Standard (e.g., 1,3,5-Trimethoxybenzene) | Allows for rapid, quantitative yield determination via ¹H NMR without need for purification or calibration curves. |
| Validated Chiral HPLC/SFC Column | Provides accurate and reproducible enantiomeric ratio measurement, the key metric for asymmetric catalysis. |
| Quench Solution Stock | Standardized quenching solution allows for simultaneous, automated termination of all reactions. |
| Electronic Lab Notebook (ELN) with API | Facilitates structured, machine-readable data capture directly from instruments, minimizing transcription errors. |
| Hazard Scoring Database (e.g., CHEM21) | Provides consistent penalty scores for calculating the Safety Index objective function. |

Application Notes

In Bayesian multi-objective optimization (MOBO) for reaction condition research, the optimization loop is the iterative engine driving discovery. Each cycle refits the surrogate model with new experimental data, uses an acquisition function to select the most informative candidates for subsequent testing, and runs them as parallel experiments to maximize the knowledge gained per cycle. The goal is typically to map the Pareto-optimal trade-offs between yield, selectivity, cost, and sustainability metrics.

Table 1: Common Multi-Objective Acquisition Functions & Performance Metrics

| Function Name | Mathematical Focus | Key Advantage | Common Use-Case in Reaction Optimization |
|---|---|---|---|
| Expected Hypervolume Improvement (EHVI) | Maximizes dominated hypervolume. | Directly targets Pareto front. | High-fidelity optimization with 2-4 objectives. |
| ParEGO | Scalarizes objectives via random weights. | Computational efficiency. | Screening phases with >4 objectives. |
| q-Noisy Expected Hypervolume Improvement (qNEHVI) | Batched, noise-aware EHVI. | Balances exploration/exploitation across a batch; tolerates noisy measurements. | Parallel experimentation on robotic platforms. |
| Predictive Entropy Search for Multi-Objective Optimization (PESMO) | Maximizes information gain about Pareto set. | Reduces model uncertainty efficiently. | When experimental budget is severely limited. |

Table 2: Representative Parallel Experimentation Batch Results (Hypothetical Suzuki-Miyaura Cross-Coupling)

| Experiment ID | Ligand (mol%) | Base | Temp (°C) | Yield (%) | Selectivity (A:B) | Process Mass Intensity (kg/kg) | Predicted EHVI |
|---|---|---|---|---|---|---|---|
| B-1 | SPhos (2.0) | K₃PO₄ | 80 | 92 | 99:1 | 12.4 | 0.154 |
| B-2 | RuPhos (1.5) | Cs₂CO₃ | 100 | 87 | 95:5 | 18.7 | 0.142 |
| B-3 | XPhos (3.0) | K₂CO₃ | 60 | 95 | 99:1 | 10.8 | 0.161 |
| B-4 | None | t-BuONa | 120 | 45 | 70:30 | 45.2 | 0.003 |

Experimental Protocols

Protocol 1: Iterative Model Update and Candidate Selection Workflow

Objective: To refine a Gaussian Process (GP) model and select the next batch of reaction conditions for experimental validation.

Materials: Historical dataset (min. 20 data points), MOBO software (e.g., BoTorch, Dragonfly), computational environment.

Procedure:

- Model Initialization: Train independent GP models for each objective (e.g., yield, enantiomeric excess) using a Matern 5/2 kernel on the normalized historical dataset.

- Hyperparameter Optimization: Maximize the log marginal likelihood of each GP model to optimize length-scales and noise parameters.

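- Sampling: Depending on the acquisition function, either draw random scalarization weights from a Dirichlet distribution (ParEGO) or evaluate EHVI by direct integration or, for batch variants such as qNEHVI, by Monte Carlo samples from the GP posterior.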

- Acquisition Optimization: Using a quasi-Newton method (e.g., L-BFGS-B), maximize the acquisition function (e.g., qNEHVI) over the continuous reaction parameter space (e.g., concentration, temperature, time).

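- Candidate Selection: Select a batch of q points (q = batch size, e.g., 4-8). Batch acquisition functions such as qNEHVI optimize the q points jointly, which already discourages near-duplicate candidates; when candidates are instead scored one at a time, keep only top-scoring points that are well separated in parameter space to ensure diversity.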

- Output: Generate a machine-readable table (CSV/JSON) of the q selected reaction condition sets for the experimental platform.
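When candidates are scored one at a time rather than optimized jointly as a batch, the diversity requirement can be approximated by a greedy filter: take candidates in order of acquisition value and keep one only if it lies at least a minimum distance from every candidate already kept. The sketch below assumes normalized parameters, a Euclidean distance threshold of 0.15, and hypothetical column names for the JSON hand-off; it is a heuristic, not what batch acquisition functions such as qNEHVI do internally.

```python
import json
import math


def select_diverse_batch(candidates, scores, q=4, min_dist=0.15):
    """Greedy top-q selection with a minimum pairwise distance (normalized units)."""
    order = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    chosen = []
    for i in order:
        if all(math.dist(candidates[i], candidates[j]) >= min_dist for j in chosen):
            chosen.append(i)
        if len(chosen) == q:
            break
    return chosen


# Hypothetical normalized (temperature, concentration, time) candidates and scores
candidates = [(0.50, 0.50, 0.50), (0.52, 0.50, 0.50), (0.10, 0.90, 0.20),
              (0.90, 0.10, 0.80), (0.30, 0.30, 0.30)]
scores = [0.90, 0.85, 0.60, 0.50, 0.40]
batch = select_diverse_batch(candidates, scores, q=3)
payload = json.dumps([dict(zip(("temperature", "concentration", "time"), candidates[i]))
                      for i in batch])
```

The second-best candidate is skipped because it nearly duplicates the best one, so the batch spends its slots on distinct regions of the space.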

Protocol 2: Parallelized Robotic Experimental Validation

Objective: To execute the batch of selected reaction conditions in parallel using automated liquid handling.

Materials: Automated synthesis platform (e.g., Chemspeed, Unchained Labs), stock solutions of reagents, catalysts, and solvents, HPLC/LCMS for analysis.

Procedure:

- Platform Preparation: Prime all fluidic lines with appropriate solvents. Load stock solutions into designated vials on the platform's deck.

- Method Programming: Translate the candidate table into a robotic execution method. Define aspirate/dispense steps for substrates, catalyst, ligand, base, and solvent.

- Reaction Execution: The platform dispenses components, sequentially or in parallel, into reaction vials (e.g., 8 mL screw-top vials). The reactor module then seals the vials, purges them with N₂/Ar, and heats and stirs the whole batch simultaneously.

- Quenching & Sampling: At reaction completion, the platform automatically cools the vials and injects a predefined aliquot into a prepared HPLC vial containing quenching solvent (e.g., acetonitrile with internal standard).

- Analysis: The batch of HPLC vials is transferred (manually or via robot) to an autosampler for sequential UPLC/LCMS analysis.

- Data Processing: Analytical results (peak area, conversion, yield via calibration) are automatically parsed and appended to the master dataset, completing one optimization cycle.
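For the "yield via calibration" step, a common internal-standard calculation uses a relative response factor (RRF) measured on authentic product. The sketch below assumes RRF = (A_product/A_IS)/(n_product/n_IS), a known amount of internal standard in each HPLC vial, and that the sampled aliquot is a known fraction of the reaction mixture; the function name and numbers are hypothetical.

```python
def hplc_yield_pct(area_prod, area_is, rrf, mmol_is_in_vial,
                   aliquot_fraction, mmol_limiting):
    """Reaction yield (%) from an internal-standard HPLC measurement of one aliquot."""
    mmol_prod_in_vial = (area_prod / area_is) / rrf * mmol_is_in_vial
    mmol_prod_total = mmol_prod_in_vial / aliquot_fraction   # scale aliquot back up
    return 100.0 * mmol_prod_total / mmol_limiting


# e.g. a 20 uL aliquot of a 2 mL reaction (fraction 0.01) at 0.1 mmol scale
y = hplc_yield_pct(area_prod=1200.0, area_is=1000.0, rrf=1.5,
                   mmol_is_in_vial=0.001, aliquot_fraction=0.01, mmol_limiting=0.10)
```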

Visualizations

Title: Bayesian MOBO Iterative Workflow for Reaction Optimization

Title: Multi-Objective Candidate Selection from Parameter to Objective Space

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Bayesian Optimization Studies

| Item | Function in MOBO Workflow | Example/Note |
|---|---|---|
| Automated Synthesis Reactor | Enables precise, reproducible execution of parallel reaction batches. | Chemspeed SWING, Unchained Labs Junior. |
| Liquid Handling Robot | Prepares stock solutions, reaction aliquots, and dilution series for analysis. | Gilson Pipetmax, Hamilton Microlab STAR. |
| Integrated Analysis Module | Provides on-line or at-line reaction monitoring (e.g., HPLC, FTIR). | ReactIR, EasySampler coupled to UPLC. |
| MOBO Software Library | Provides algorithms for surrogate modeling, acquisition, and optimization. | BoTorch (PyTorch-based), Dragonfly. |
| Chemical Inventory Database | Tracks stock concentrations, locations, and metadata for automated liquid handling. | CSDS (Chemspeed), CAT (MCEC). |
| Internal Standard Solution | Enables robust quantitative analysis by correcting for injection volume variability. | Stable, inert compound not present in reaction mixture. |

Within the framework of Bayesian multi-objective optimization (MOBO) for reaction condition screening in drug development, Step 5 represents the critical decision-making phase. After the iterative optimization loop converges, a set of non-dominated optimal solutions—the Pareto front—is generated. This section provides protocols for analyzing this front and selecting a single, final set of conditions for scale-up or further development, balancing objectives such as yield, purity, cost, and environmental impact.

Quantitative Analysis of a Representative Pareto Front

The following table summarizes quantitative data from a hypothetical MOBO study optimizing a palladium-catalyzed cross-coupling reaction, with objectives to maximize Yield (%) and minimize Estimated Process Mass Intensity (PMI, kg/kg).

Table 1: Pareto Front Solutions from a Bayesian MOBO Study of a Cross-Coupling Reaction

| Solution ID | Catalyst Loading (mol%) | Temperature (°C) | Residence Time (min) | Solvent Ratio (Water:MeCN) | Yield (%) | PMI (kg/kg) | Purity (Area%) |
|---|---|---|---|---|---|---|---|
| PF-1 | 0.5 | 70 | 10 | 90:10 | 78 | 12 | 98.5 |
| PF-2 | 1.0 | 80 | 15 | 80:20 | 89 | 25 | 99.2 |
| PF-3 | 0.8 | 75 | 12 | 85:15 | 85 | 18 | 98.9 |
| PF-4 | 1.5 | 90 | 20 | 70:30 | 92 | 45 | 99.0 |
| PF-5 | 0.3 | 65 | 8 | 95:5 | 65 | 8 | 97.0 |

Protocols for Pareto Front Analysis and Selection

Protocol 3.1: Visualization and Clustering of the Pareto Front

Objective: To visually identify trade-offs and cluster similar solutions. Materials: Data table of Pareto-optimal solutions (e.g., Table 1), statistical software (e.g., Python with Matplotlib/Pandas, R, JMP). Procedure:

- Create a 2D/3D scatter plot of the primary objectives (e.g., Yield vs. PMI). Color-code points by a third key variable (e.g., catalyst loading).

- Perform principal component analysis (PCA) on all objective values and critical process parameters to reduce dimensionality.

- Apply a clustering algorithm (e.g., k-means, DBSCAN) to the PCA scores to identify groups of solutions with similar performance profiles.

- Overlay clustering results on the 2D scatter plot to contextualize the groups within the objective trade-off space. Deliverable: An annotated Pareto plot with clustered solutions, highlighting the "knee" region and outlier solutions.
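One common heuristic for locating the "knee" of a two-objective front is to min-max normalize both objectives so that 1 is best, then pick the solution farthest from the straight line joining the two extreme solutions. On a two-objective Pareto front the extremes map to (1, 0) and (0, 1), so that distance is (x + y - 1)/√2. Applied to the yield/PMI data in Table 1, the sketch below (function name hypothetical) selects PF-3.

```python
import math

# Table 1: solution -> (yield %, PMI kg/kg)
FRONT = {"PF-1": (78, 12), "PF-2": (89, 25), "PF-3": (85, 18),
         "PF-4": (92, 45), "PF-5": (65, 8)}


def knee_point(front):
    """Solution farthest from the line joining the extremes in normalized space."""
    yields = [v[0] for v in front.values()]
    pmis = [v[1] for v in front.values()]

    def distance(y, p):
        x_norm = (y - min(yields)) / (max(yields) - min(yields))   # 1 = highest yield
        y_norm = (max(pmis) - p) / (max(pmis) - min(pmis))         # 1 = lowest PMI
        return (x_norm + y_norm - 1.0) / math.sqrt(2.0)

    return max(front, key=lambda k: distance(*front[k]))


knee = knee_point(FRONT)
```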

Protocol 3.2: Decision-Making Using Scalable Criteria

Objective: To apply project-specific weights and constraints to select a final condition. Materials: Pareto front data, project requirement definitions (e.g., minimum yield, maximum allowable cost). Procedure:

- Define Constraints: Eliminate solutions that fail hard constraints (e.g., Yield < 80%, Purity < 98%, PMI > 30).

- Assign Weights: In consultation with project stakeholders, assign quantitative weights (w) to each objective reflecting strategic priorities (e.g., Yield: 0.6, PMI: 0.3, Purity: 0.1; Σw=1).

- Normalize Objectives: Min-max scale each objective column to a 0-1 range over the Pareto front, so that 1 is the best value and 0 the worst. For minimization objectives such as PMI, invert the scale: Norm = (max − x) / (max − min).

- Calculate Score: For each solution, compute a weighted-sum score:

Score = (w_Yield × Norm_Yield) + (w_PMI × Norm_PMI) + (w_Purity × Norm_Purity)

- Rank and Select: Rank solutions by the composite score. The top-ranked solution is the proposed final condition.

Deliverable: A ranked table of constrained solutions with composite scores, leading to a recommended selection.
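Applied to Table 1, the steps of Protocol 3.2 reduce to a few lines of standard-library Python. This is a sketch with two assumptions: the normalization ranges are taken over all five Pareto solutions (so a solution that survives the constraints need not score exactly 1 on any objective), and the weights and hard limits are the example values given in the protocol.

```python
# Pareto-optimal solutions from Table 1: (yield %, PMI kg/kg, purity area%)
front = {
    "PF-1": (78, 12, 98.5),
    "PF-2": (89, 25, 99.2),
    "PF-3": (85, 18, 98.9),
    "PF-4": (92, 45, 99.0),
    "PF-5": (65, 8, 97.0),
}

def minmax(value, lo, hi, minimize=False):
    """Scale to [0, 1] so that 1 is the best value on the front."""
    if hi == lo:
        return 1.0
    return (hi - value) / (hi - lo) if minimize else (value - lo) / (hi - lo)

def rank_conditions(front, weights, min_yield=80.0, min_purity=98.0, max_pmi=30.0):
    """Drop solutions that violate hard constraints, then rank by weighted-sum score."""
    # Normalization ranges over the whole front (assumption, see lead-in)
    (y_lo, y_hi), (p_lo, p_hi), (u_lo, u_hi) = [(min(c), max(c)) for c in zip(*front.values())]
    scores = {}
    for sid, (yld, pmi, pur) in front.items():
        if yld < min_yield or pur < min_purity or pmi > max_pmi:
            continue  # fails a hard constraint
        scores[sid] = (weights["yield"] * minmax(yld, y_lo, y_hi)
                       + weights["pmi"] * minmax(pmi, p_lo, p_hi, minimize=True)
                       + weights["purity"] * minmax(pur, u_lo, u_hi))
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

for sid, score in rank_conditions(front, {"yield": 0.6, "pmi": 0.3, "purity": 0.1}):
    print(f"{sid}: {score:.3f}")
# PF-2: 0.795
# PF-3: 0.750
```

Only PF-2 and PF-3 pass the example constraints. Under these weights PF-2 ranks first, but shifting weight toward PMI (e.g., Yield 0.3, PMI 0.6, Purity 0.1) puts PF-3 on top, which shows how strongly the final choice depends on the stakeholder weights.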

Protocol 3.3: Robustness Verification of Selected Conditions

Objective: To experimentally confirm the performance of the selected condition under expected operational variability.

Materials: Reagents and equipment for the selected reaction setup.

Procedure:

- Prepare reaction setup according to the selected optimal conditions (e.g., Solution PF-3 from Table 1).

- Execute the reaction in triplicate to establish a baseline mean and standard deviation for key objectives.

- Design and execute a focused variation study around the selected conditions (e.g., ±2 °C in temperature, ±1 min in time).

- Measure outcomes (Yield, Purity) for each varied condition. Calculate the mean and variance across all runs.

- Compare the performance distribution from the robustness study against the project's success criteria. A robust solution will have all runs within acceptable limits.

Deliverable: A verification report including comparative performance data and a conclusion on robustness.
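A sketch of the variation study in Protocol 3.3, assuming a 3 × 3 full-factorial layout (center point included) around PF-3 and using only the Python standard library. The `summarize` helper is hypothetical: it reports the mean and sample standard deviation (the square root of the variance) of yield and purity, and flags the condition as robust only if every run meets the minimum limits you supply.

```python
import itertools
import statistics

def robustness_design(temp_c, time_min, d_temp=2.0, d_time=1.0):
    """3 x 3 full factorial (center point included) around the selected condition."""
    temps = (temp_c - d_temp, temp_c, temp_c + d_temp)
    times = (time_min - d_time, time_min, time_min + d_time)
    return list(itertools.product(temps, times))

def summarize(runs, min_yield, min_purity):
    """runs: list of (yield %, purity area%) pairs measured in the variation study."""
    yields = [y for y, _ in runs]
    purities = [p for _, p in runs]
    return {
        "yield_mean": statistics.mean(yields),
        "yield_sd": statistics.stdev(yields),
        "purity_mean": statistics.mean(purities),
        "purity_sd": statistics.stdev(purities),
        # Robust only if every single run meets the project limits
        "robust": all(y >= min_yield and p >= min_purity for y, p in runs),
    }

# PF-3 from Table 1: 75 °C, 12 min residence time
for temp, minutes in robustness_design(75, 12):
    print(f"{temp:.0f} °C, {minutes:.0f} min")
```

The measured (yield, purity) pairs from all nine runs would then be passed to `summarize` together with the project limits, for example the 80% yield and 98% purity thresholds used in Protocol 3.2.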

Visualizing the Selection Workflow

Figure: Workflow for Pareto Analysis and Final Selection

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for MOBO Reaction Screening & Analysis

| Item | Function in MOBO Context | Example/Notes |
|---|---|---|
| Bayesian Optimization Software | Core platform for designing experiments, updating surrogate models, and identifying the Pareto front. | Custom Python scripts with libraries like BoTorch, GPyOpt, or SciPy; commercial DOE software with MOBO capabilities. |
| High-Throughput Experimentation (HTE) Robotic Platform | Enables rapid, parallel execution of hundreds of reaction condition variations generated by the MOBO algorithm. | Chemspeed, Unchained Labs, or customized liquid handling systems integrated with microreactors. |
| Automated Analytical System | Provides rapid, quantitative analysis of reaction outcomes (yield, purity), which is essential for fast Bayesian model updates. | UPLC/HPLC systems with autosamplers (e.g., Agilent, Waters) coupled to mass spectrometry or diode array detectors. |
| Chemoinformatics & Data Analysis Suite | Processes analytical data, calculates derived objectives (e.g., PMI, cost), and performs statistical analysis. | KNIME, Spotfire, or Python/R environments with pandas, scikit-learn. |
| Model Reaction Substrate & Catalyst Library | A chemically diverse but relevant set of starting materials to validate the generalizability of optimized conditions. | Commercially available fragment libraries; in-house collections of common pharmacophores and privileged catalysts (e.g., Pd, Ni, organocatalysts). |
| Green Chemistry Solvent Kit | A pre-selected set of sustainable solvents (e.g., 2-MeTHF, Cyrene, water) for evaluating environmental impact objectives. | Solvent selection guides (e.g., ACS GCI, CHEM21) compiled into a standardized HTE kit. |

Application Notes: Tools for Bayesian Multi-Objective Optimization

This overview details key software tools for implementing Bayesian Optimization (BO) in multi-objective reaction condition research. The objective is to efficiently navigate high-dimensional chemical spaces (e.g., catalyst, solvent, temperature, concentration) to simultaneously optimize yield, enantioselectivity, and cost.

Table 1: Quantitative Comparison of BO Frameworks

| Feature / Framework | BoTorch | Trieste | Summit | Custom Python |
|---|---|---|---|---|
| Primary Language | Python (PyTorch) | Python (TensorFlow) | Python | Python (NumPy, SciPy) |
| Core Strength | Flexible, research-oriented, modular | Robust, probabilistic, integrates w/ GPflow | Domain-specific (chemistry), user-friendly | Complete control, minimal dependencies |
| MOBO Acquisitions | qNEHVI, qNParEGO | EHVI, PES | Expected Improvement (EI) based | User-defined (e.g., EHVI, UCB) |
| Surrogate Model | GP, Multi-task GP | GP, Sparse GP, Deep GP | Random Forest, GP | GP (via GPyTorch/scikit-learn) |
| Automated Constraints | Via penalties/constrained BO | Yes | Yes | Manual implementation |
| Experimental Noise | Handled via heterogeneous noise GPs | Integrated | Additive noise assumption | Model-dependent |
| Learning Curve | Steep | Moderate | Gentle | Very Steep |
| Best For | Novel algorithm research | Production-ready robust BO | Chemists with limited coding | Specific, tailored research needs |

Table 2: Typical Performance Metrics in Reaction Optimization (Benchmark Example)

| Optimization Method | Avg. Iterations to Pareto Front* | Hypervolume Increase vs. Grid Search (%)* | Computational Cost per Iteration (CPU-s)* |
|---|---|---|---|
| Grid Search | 100+ | Baseline (0) | Low (1-5) |
| Summit (Random Forest) | 25-35 | ~45 | Medium (10-30) |
| BoTorch (qNEHVI) | 15-25 | ~65 | High (30-60) |
| Trieste (EHVI) | 20-30 | ~60 | Medium-High (20-50) |

(*) Illustrative data from a simulated benchmark (e.g., Branin-Currin). In real chemistry campaigns the iteration budget is usually smaller, and wall-clock time is dominated by reaction execution and analysis rather than by computation.

Experimental Protocols

Protocol 1: Setting Up a Multi-Objective Optimization Experiment Using Summit

Objective: To optimize a Pd-catalyzed cross-coupling reaction for both yield and enantiomeric excess (ee) using Summit's Python interface.

- Define Variables: In Summit, create continuous variables (e.g., Temperature: 25-100 °C, Catalyst Loading: 0.5-5.0 mol%) and categorical variables (e.g., Solvent: [THF, Dioxane, Toluene], Ligand: [L1, L2, L3]).

- Define Objectives: Create two objectives, `yield` (maximize) and `ee` (maximize).

- Select Strategy: Choose Summit's multi-objective Bayesian optimization strategy, TSEMO, which fits a Gaussian-process surrogate to each objective and selects batches by hypervolume improvement using Thompson sampling.

- Initial Design: Specify an initial Latin hypercube design of 5-10 experiments.

- Run Iteratively: Execute the initial experiments and return the measured `yield` and `ee` values to the strategy. Request a batch of 3-5 new conditions with the strategy's `suggest_experiments` method. Repeat for 4-8 cycles.

- Analysis: Plot the 2D Pareto front of yield vs. ee from the accumulated results.
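Summit can generate the initial design for you; to show what a Latin hypercube design actually does, here is an independent NumPy sketch (not Summit's API) for the two continuous variables of Protocol 1. Each variable range is split into n equal strata and every stratum is sampled exactly once. Assigning the categorical levels (solvent, ligand) by balanced random shuffling is an assumption of this sketch and only one of several reasonable options.

```python
import numpy as np

def latin_hypercube(n, bounds, rng):
    """Return an (n, d) Latin hypercube sample: each variable's range is split into
    n equal strata and every stratum is used exactly once."""
    d = len(bounds)
    strata = np.column_stack([rng.permutation(n) for _ in range(d)])  # stratum index per cell
    u = (strata + rng.random((n, d))) / n                              # jitter inside each stratum
    lo, hi = np.array(bounds, dtype=float).T
    return lo + u * (hi - lo)

rng = np.random.default_rng(seed=0)
n = 8
# Continuous ranges from Protocol 1: temperature (°C), catalyst loading (mol%)
design = latin_hypercube(n, bounds=[(25.0, 100.0), (0.5, 5.0)], rng=rng)
# Balanced random assignment of categorical levels (assumption of this sketch)
solvents = rng.permutation(np.resize(["THF", "Dioxane", "Toluene"], n))
ligands = rng.permutation(np.resize(["L1", "L2", "L3"], n))
for (temp, cat), solvent, ligand in zip(design, solvents, ligands):
    print(f"{temp:6.1f} °C  {cat:4.2f} mol%  {solvent:<8} {ligand}")
```

Compared with purely random sampling, the stratification guarantees that a small initial batch still spans the full range of every continuous variable.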

Protocol 2: Implementing a Custom qNEHVI Loop with BoTorch

Objective: To implement state-of-the-art multi-objective batch optimization for a high-throughput experimentation campaign.

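- Environment Setup: Install `botorch`, `gpytorch`, and `ax-platform`. Initialize a `SingleTaskGP` model with a `MaternKernel`. To account for experimental noise that varies between conditions, pass per-observation noise variances estimated from replicates (the `train_Yvar` argument in recent BoTorch versions) or use a heteroskedastic GP variant if your BoTorch version provides one.

- Data Formatting: Scale input variables to the unit cube and standardize objective values (zero mean, unit variance); BoTorch's `Normalize` input transform and `Standardize` outcome transform do this inside the model. Express every objective as a quantity to maximize (e.g., negate PMI or impurity area%).

- Acquisition Function: Define the `qNoisyExpectedHypervolumeImprovement` acquisition function. Set the reference point, in the original objective units, slightly worse than the least acceptable value of each objective.

- Optimization Loop: Fit the GP hyperparameters by maximizing the marginal log-likelihood, optimize the acquisition function over the variable bounds to obtain a batch of q candidate conditions, run and analyze the batch, append the results to the training data, and repeat until the experimental budget is spent or the hypervolume stops improving.

- Posterior Analysis: Compute the Pareto front from the final GP posterior mean and calculate the dominated hypervolume, comparing it against a baseline such as the hypervolume of the initial design.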
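For the posterior-analysis step, a NumPy sketch of Pareto filtering and two-objective hypervolume. It assumes every objective is maximized (negate impurity or PMI first) and accepts any array of objective values, whether observed data or the GP posterior mean at candidate conditions. BoTorch ships its own utilities for this (e.g., `is_non_dominated` in `botorch.utils.multi_objective.pareto`); this version only makes the computation explicit, and the objective values in the usage lines are illustrative, not campaign data.

```python
import numpy as np

def pareto_mask(Y):
    """Boolean mask of non-dominated rows of Y (every objective is maximized)."""
    Y = np.asarray(Y, dtype=float)
    mask = np.ones(len(Y), dtype=bool)
    for i, y in enumerate(Y):
        # Row j dominates row i if it is >= on every objective and > on at least one
        dominated_by = np.all(Y >= y, axis=1) & np.any(Y > y, axis=1)
        mask[i] = not dominated_by.any()
    return mask

def hypervolume_2d(Y, ref):
    """Area dominated by the points of Y and bounded below by the reference point."""
    Y = np.asarray(Y, dtype=float)
    ref = np.asarray(ref, dtype=float)
    Y = Y[np.all(Y > ref, axis=1)]            # points not better than ref add nothing
    front = Y[pareto_mask(Y)]
    front = front[np.argsort(-front[:, 0])]   # objective 1 descending => objective 2 ascending
    area, prev_f2 = 0.0, ref[1]
    for f1, f2 in front:
        area += (f1 - ref[0]) * (f2 - prev_f2)  # horizontal strip added by this point
        prev_f2 = f2
    return area

# Illustrative normalized objective values (not from a real campaign)
initial = np.array([[0.5, 0.5], [0.2, 0.3]])
final = np.vstack([initial, [[0.9, 0.2], [0.6, 0.6], [0.2, 0.9]]])
hv0 = hypervolume_2d(initial, [0.0, 0.0])
hv1 = hypervolume_2d(final, [0.0, 0.0])
print(f"HV initial {hv0:.3f}, final {hv1:.3f}, increase {100 * (hv1 - hv0) / hv0:.0f}%")
# HV initial 0.250, final 0.480, increase 92%
```

The sweep works because, on a two-objective front sorted by the first objective, the second objective increases monotonically, so the dominated region decomposes into non-overlapping rectangles. For three or more objectives an exact hypervolume needs a more elaborate algorithm.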

Visualizations

Figure: MOBO Workflow for Reaction Optimization

Figure: Decision Tree for Selecting a Bayesian Optimization Tool

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital & Experimental Materials for Bayesian MOBO in Chemistry

| Item | Function in MOBO Reaction Research |
|---|---|
| High-Throughput Experimentation (HTE) Platform (e.g., automated liquid handler, parallel reactor blocks) | Enables rapid, precise, and reproducible execution of the candidate reaction conditions suggested by the BO algorithm. |
| Online Analytics (e.g., UPLC/MS, SFC, inline IR/ReactIR) | Provides rapid quantification of objective functions (yield, ee, conversion) for immediate feedback into the BO loop, minimizing iteration time. |
| Domain-Knowledge Informed Search Space | A deliberately constrained set of plausible reagents, solvents, and conditions (e.g., solvent dielectric range, catalyst family) defined by the chemist to guide the algorithm and prevent nonsensical experiments. |
| Reference Catalysts & Control Reactions | Included in each experimental batch to calibrate and validate the consistency of the HTE platform and analytical methods over time. |
| Computational Environment (Python 3.9+, JupyterLab, containerization with Docker) | Ensures reproducibility of the BO algorithm's numerical results, model training, and candidate selection across different hardware setups. |
| Benchmark Reaction Dataset (e.g., a known reaction with a mapped Pareto front) | Used to validate and tune the performance of a new BO implementation before applying it to a novel, unknown chemical system. |

1. Introduction and Thesis Context

Within the broader thesis on Bayesian multi-objective optimization (MOBO) for chemical reaction research, this case study applies the method to a critical pharmaceutical development challenge: the Suzuki-Miyaura cross-coupling reaction. MOBO is a machine learning framework well suited to experimental landscapes in which multiple, often competing, objectives must be balanced. Here, we simultaneously maximize the yield of the desired biaryl product P1 and minimize the formation of a critical homocoupling impurity, ImpA, derived from the aryl bromide reactant.

2. Reaction Scheme and Optimization Objectives

- Reaction: Aryl Bromide R1 + Aryl Boronic Acid R2 → Biaryl Product P1 (Target) + Homocoupling Impurity ImpA (Primary Byproduct).

- Decision Variables (Inputs): Catalyst loading (mol%), Ligand loading (mol%), Base concentration (equiv.), Temperature (°C), Reaction time (h).

- Objectives (Outputs): Maximize Yield(P1)%, Minimize Area% of ImpA by UPLC.

3. Bayesian Multi-Objective Optimization Workflow

Figure: Bayesian MOBO Workflow for Reaction Optimization

4. Experimental Data Summary

Table 1: Representative Experimental Data from Iterative Optimization

| Experiment Cycle | Catalyst (mol%) | Ligand (mol%) | Base (equiv.) | Temp. (°C) | Time (h) | Yield(P1)% | ImpA Area% |
|---|---|---|---|---|---|---|---|
| DoE-1 | 1.0 | 2.0 | 2.0 | 70 | 16 | 78 | 5.2 |
| DoE-2 | 2.0 | 4.0 | 3.0 | 90 | 8 | 85 | 12.1 |
| ... | ... | ... | ... | ... | ... | ... | ... |
| MOBO-5 | 0.8 | 1.6 | 2.5 | 75 | 12 | 94 | 1.8 |
| MOBO-6 | 1.5 | 2.5 | 2.2 | 82 | 10 | 91 | 0.9 |

Table 2: Final Pareto-Optimal Conditions Identified

| Condition Set | Catalyst | Ligand | Base | Temp. | Time | Trade-off Focus |
|---|---|---|---|---|---|---|
| A (High Yield) | 1.2 mol% | 2.2 mol% | 2.8 equiv. | 85 °C | 10 h | Max yield (95%), accepts ImpA (2.5%) |
| B (Balanced) | 0.8 mol% | 1.6 mol% | 2.5 equiv. | 75 °C | 12 h | Yield 94%, ImpA 1.8% |
| C (High Purity) | 1.0 mol% | 2.0 mol% | 2.5 equiv. | 80 °C | 11 h | Min ImpA (1.2%), yield 93% |

5. Detailed Experimental Protocols

Protocol 5.1: General Procedure for Suzuki-Miyaura Cross-Coupling Screening

- Preparation: In a nitrogen-filled glovebox, charge a 2-dram vial with a magnetic stir bar.

- Catalyst/Precatalyst System: Weigh and add palladium precatalyst (e.g., Pd(OAc)₂, PdCl₂(dppf)) and selected ligand (e.g., SPhos, XPhos, BrettPhos) according to specified mol% loading relative to R1.

- Reagents: Add aryl bromide R1 (0.1 mmol, 1.0 equiv.) and aryl boronic acid R2 (1.2-1.5 equiv.).

- Solvent and Base: Add degassed solvent (1.0 M with respect to R1; e.g., toluene/water 4:1 or dioxane/water), followed by the base (e.g., K₃PO₄ or Cs₂CO₃; 2.0-3.0 equiv.).

- Reaction: Seal the vial with a PTFE-lined cap, remove from glovebox, and place in a pre-heated aluminum block stirrer at the target temperature (e.g., 70-90°C). Stir for the designated time.

- Quenching: Cool the vial to room temperature. Dilute the reaction mixture with ethyl acetate (2 mL) and a saturated aqueous NH₄Cl solution (1 mL).
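As a worked example of the charges in Protocol 5.1 at the 0.1 mmol scale, the sketch below converts loadings into amounts. The molecular weights of R1 and R2 are hypothetical placeholders; Pd(OAc)₂ (224.51 g/mol), SPhos (410.53 g/mol) and K₃PO₄ (212.27 g/mol) are one combination taken from the protocol's examples. The condition shown is set C from Table 2 with 1.2 equiv. of R2.

```python
# Molecular weights in g/mol. R1 and R2 values are HYPOTHETICAL placeholders.
MW = {"R1": 250.0, "R2": 180.0, "Pd(OAc)2": 224.51, "SPhos": 410.53, "K3PO4": 212.27}

def charge_list(scale_mmol, r2_equiv, cat_mol_pct, lig_mol_pct, base_equiv, conc_M):
    """Return (mmol, mg, solvent volume in mL); all loadings are relative to R1."""
    mmol = {
        "R1": scale_mmol,
        "R2": scale_mmol * r2_equiv,
        "Pd(OAc)2": scale_mmol * cat_mol_pct / 100.0,
        "SPhos": scale_mmol * lig_mol_pct / 100.0,
        "K3PO4": scale_mmol * base_equiv,
    }
    mg = {name: n * MW[name] for name, n in mmol.items()}  # mmol x g/mol = mg
    solvent_mL = scale_mmol / conc_M                         # mmol / (mmol/mL) = mL
    return mmol, mg, solvent_mL

mmol, mg, solvent_mL = charge_list(0.1, r2_equiv=1.2, cat_mol_pct=1.0,
                                   lig_mol_pct=2.0, base_equiv=2.5, conc_M=1.0)
for name in mmol:
    print(f"{name:9s} {mmol[name]:.4f} mmol  {mg[name]:7.2f} mg")
print(f"solvent   {solvent_mL:.2f} mL")
```

The catalyst and ligand charges come out below 1 mg (about 0.22 mg of Pd(OAc)₂ and 0.82 mg of SPhos), which is why HTE workflows at this scale commonly dose them from stock solutions rather than weighing them directly.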

Protocol 5.2: UPLC Analysis for Yield and Impurity Quantification

- Sample Preparation: Transfer 100 µL of the organic (upper) layer of the quenched mixture to a vial and dilute with 900 µL of acetonitrile (10-fold dilution). Filter through a 0.45 µm PTFE syringe filter into an HPLC vial.

- UPLC Conditions:

- Column: C18 reverse-phase (e.g., 50 x 2.1 mm, 1.7 µm).

- Mobile Phase A: Water with 0.1% formic acid.

- Mobile Phase B: Acetonitrile with 0.1% formic acid.

- Gradient: 5% B to 95% B over 3.5 minutes, hold 1 minute.

- Flow Rate: 0.6 mL/min.

- Detection: UV at 254 nm.
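A sketch of how the two objectives might be computed from integrated UPLC peak areas. Assumptions not stated in the protocol: ImpA is reported as uncorrected area% of all integrated peaks, and the yield of P1 comes from an external calibration line prepared with authentic P1 (area = slope × concentration + intercept). The calibration constants, the volume of the sampled phase, and the peak areas below are hypothetical inputs; the 10-fold dilution follows from the 100 µL + 900 µL sample preparation.

```python
def area_percent(areas, peak):
    """Uncorrected area% of one peak relative to all integrated peaks."""
    return 100.0 * areas[peak] / sum(areas.values())

def assay_yield(area_p1, slope, intercept, dilution, phase_volume_mL, scale_mmol):
    """Yield (%) of P1 from an external calibration: area = slope * c_mM + intercept."""
    conc_mM = (area_p1 - intercept) / slope                     # mmol/L in the UPLC vial
    found_mmol = conc_mM * dilution * phase_volume_mL / 1000.0  # mmol/L x mL / 1000 = mmol
    return 100.0 * found_mmol / scale_mmol

# Hypothetical peak areas and calibration for illustration only
areas = {"P1": 4600.0, "ImpA": 50.0, "R1": 350.0}
print(f"ImpA area%: {area_percent(areas, 'ImpA'):.1f}")
print(f"Yield(P1): {assay_yield(areas['P1'], slope=1000.0, intercept=0.0, dilution=10, phase_volume_mL=2.0, scale_mmol=0.1):.1f}%")
```

Area% is fast but assumes similar UV response at 254 nm for every peak; if ImpA and P1 absorb differently, a relative response factor would be needed for an accurate impurity level.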