Bayesian Optimization for Reaction Conditions: A Machine Learning Guide for Accelerated Drug Discovery


Abstract

This article provides a comprehensive guide to Bayesian Optimization (BO) for automating and accelerating the discovery of optimal chemical reaction conditions. We explore the foundational principles of BO as an efficient global optimization strategy for expensive-to-evaluate black-box functions, such as reaction yield or selectivity. The methodological section details practical implementation, including surrogate model selection (e.g., Gaussian Processes), acquisition functions (EI, UCB, PI), and experimental design. We address common pitfalls, parallelization strategies (batch BO), and constraint handling. Finally, we validate BO's effectiveness through comparative analysis with traditional optimization methods like Design of Experiments (DoE) and grid search, highlighting its transformative potential in reducing experimental cost and time in pharmaceutical R&D.

What is Bayesian Optimization? Core Principles for Reaction Optimization

In synthetic chemistry and drug development, optimizing reaction conditions (e.g., catalyst, ligand, solvent, temperature, concentration) is a multidimensional challenge traditionally addressed through costly, time-consuming trial-and-error or one-variable-at-a-time (OVAT) experimentation. This application note frames the problem within the thesis that Bayesian Optimization (BO) guided by machine learning (ML) provides a superior, data-driven framework for reaction optimization. We detail protocols and data demonstrating how BO-ML systematically navigates complex chemical space to discover optimal conditions with minimal experimental iterations.

Quantitative Data: Traditional vs. BO-ML Approaches

Data sourced from recent literature on reaction optimization via Bayesian Optimization.

Table 1: Comparative Performance of Optimization Methods for a Palladium-Catalyzed C-N Cross-Coupling Reaction

| Optimization Method | Initial Experiments | Total Experiments to >90% Yield | Total Resource Cost (Estimated) | Optimal Conditions Found |

|---|---|---|---|---|

| Traditional OVAT | 1 (baseline) | 96 | 100% (Baseline) | Yes |

| Human Design-of-Experiments (DoE) | 24 | 48 | 60% | Yes |

| Bayesian Optimization (ML-Guided) | 12 | 24 | 30% | Yes |

Table 2: Key Parameters & Bounds for BO-ML Optimization of C-N Coupling

| Parameter | Symbol | Range/Bounds | Role in Optimization |

|---|---|---|---|

| Catalyst Loading | Cat | 0.5 - 2.0 mol% | Continuous Variable |

| Ligand Equivalents | Lig | 1.0 - 3.0 eq. | Continuous Variable |

| Base Concentration | Base | 1.0 - 3.0 eq. | Continuous Variable |

| Reaction Temperature | Temp | 60 - 120 °C | Continuous Variable |

| Solvent Dielectric | Solv | 4.0 - 25.0 (ε) | Categorical (Transformed) |

| Reaction Yield | Yield | 0-100% | Objective Function |

Experimental Protocol: Bayesian Optimization for Reaction Screening

Protocol 1: Setting Up a Bayesian Optimization Loop for Chemical Reactions

Objective: To maximize the yield (or other metric) of a target chemical reaction by iteratively selecting experiments via a Bayesian surrogate model.

I. Pre-Optimization Phase

- Define Search Space: Precisely specify continuous (e.g., temperature) and categorical (e.g., solvent type) variables and their bounds (See Table 2).

- Choose Objective Function: Define the primary outcome to optimize (e.g., NMR yield). Optionally, include penalties for cost or undesired byproducts.

- Select Initial Design: Perform a small set (n=8-12) of initial experiments using a space-filling design (e.g., Latin Hypercube Sampling) to gather baseline data for the model.
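The initial space-filling design can be sketched in a few lines. The example below is a minimal Latin Hypercube sampler over the four continuous parameters of Table 2; the function name `latin_hypercube`, the dictionary keys, and the choice of 12 points are illustrative assumptions, not part of a specific library.

```python
import numpy as np

# Bounds copied from Table 2 (continuous parameters only); key names are illustrative.
BOUNDS = {
    "cat_mol_pct": (0.5, 2.0),
    "ligand_eq": (1.0, 3.0),
    "base_eq": (1.0, 3.0),
    "temp_c": (60.0, 120.0),
}

def latin_hypercube(n_samples, bounds, seed=0):
    """Return an (n_samples, n_dims) array with one point per stratum in each dimension."""
    rng = np.random.default_rng(seed)
    n_dims = len(bounds)
    # One random point inside each of n_samples equal-width strata on [0, 1).
    unit = (np.arange(n_samples)[:, None] + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle the strata independently per dimension so the columns are decorrelated.
    for j in range(n_dims):
        unit[:, j] = rng.permutation(unit[:, j])
    lows = np.array([low for low, _ in bounds.values()])
    highs = np.array([high for _, high in bounds.values()])
    return lows + unit * (highs - lows)

initial_design = latin_hypercube(12, BOUNDS)  # 12 conditions x 4 parameters
```

Each row of `initial_design` is one reaction to run; every parameter range is covered evenly because each of the 12 strata per dimension is used exactly once.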

II. Core Optimization Loop

- Model Training: Train a Gaussian Process (GP) regression model on all accumulated data (Yield = f(Cat, Lig, Base, Temp, Solv)).

- Acquisition Function Maximization: Use an acquisition function (e.g., Expected Improvement, EI) to calculate the next most promising experimental conditions. EI balances exploitation (high predicted yield) and exploration (high uncertainty).

- Experiment Execution: Perform the reaction(s) suggested by the acquisition function in the laboratory.

- Data Augmentation: Add the new experimental result (yield) to the training dataset.

- Iteration: Repeat steps 1-4 until a yield threshold is met or the iteration budget is exhausted (typically 20-30 total experiments).

III. Post-Optimization Analysis

- Validate the top predicted conditions with triplicate experiments.

- Analyze the model's partial dependence plots to understand critical parameter interactions.

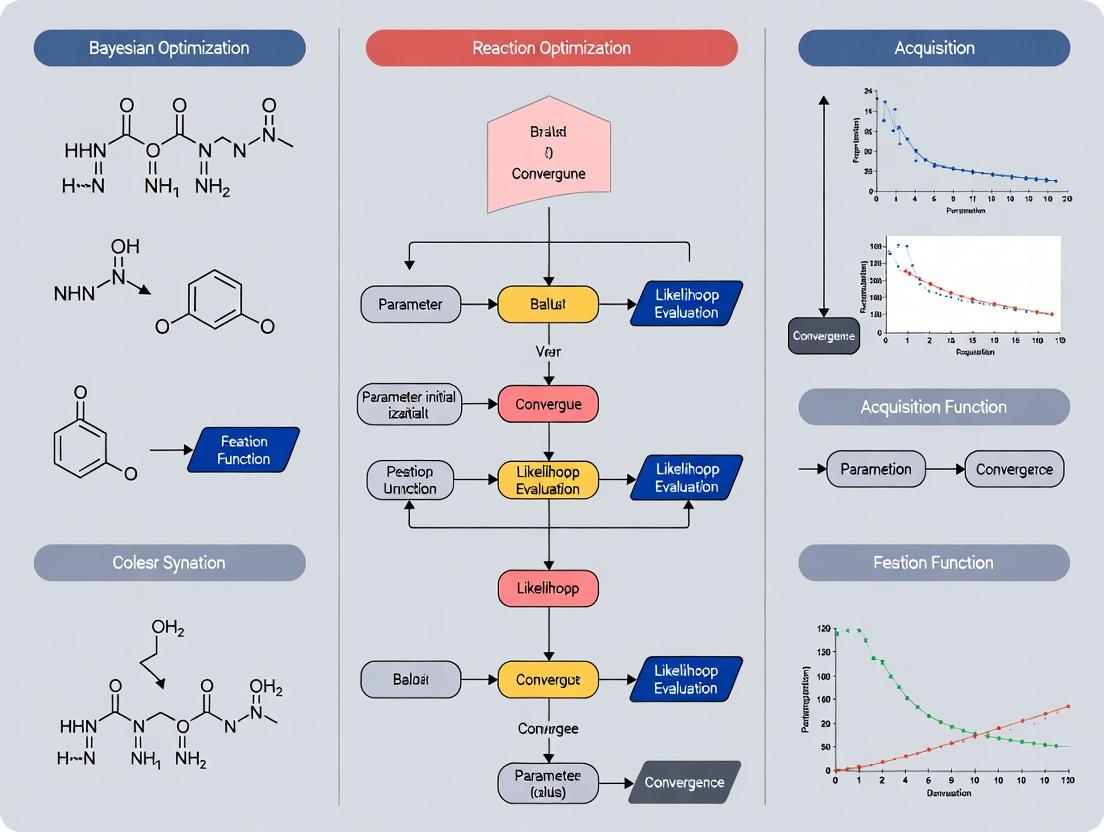

Visualization: BO-ML Workflow and Chemical Space Navigation

Title: Bayesian Optimization Loop for Chemistry

Title: Navigation Strategies in Chemical Space

The Scientist's Toolkit: Key Reagents & Materials for BO-ML-Driven Optimization

Table 3: Research Reagent Solutions for AI-Guided Reaction Screening

| Item | Function in BO-ML Workflow | Example/Notes |

|---|---|---|

| High-Throughput Experimentation (HTE) Kit | Enables rapid parallel execution of the initial design and suggested experiments. | 96-well microtiter plates with pre-weighed catalysts/ligands in vials. |

| Liquid Handling Robot | Automates reagent dispensing for reproducibility and scalability of the experimental loop. | Critical for ensuring data quality for model training. |

| In-line/Automated Analysis | Provides rapid quantification of reaction outcomes (yield, conversion). | UPLC-MS, HPLC with autosampler, or FTIR reaction monitoring. |

| BO-ML Software Platform | Hosts the algorithm for Gaussian Process modeling and acquisition function calculation. | Python libraries (scikit-learn, GPyTorch, BoTorch) or commercial platforms (e.g., Schrödinger). |

| Chemical Database | Provides prior knowledge for feature generation (e.g., solvent parameters) or initial model pretraining. | PubChem, Reaxys, or internal electronic lab notebooks (ELN). |

Bayesian optimization (BO) is a powerful, sample-efficient strategy for optimizing expensive-to-evaluate "black-box" functions. In the context of machine learning for reaction condition optimization in drug development, it provides a principled mathematical framework for iteratively probing chemical space to rapidly converge on optimal conditions (e.g., yield, selectivity) with minimal experimental runs.

Core Principles and Application Notes

BO operates through a two-step iterative cycle:

- Surrogate Modeling: A probabilistic model, typically a Gaussian Process (GP), is trained on all data from previous experiments. It provides a prediction (mean) and an uncertainty estimate (variance) for all unexplored conditions.

- Acquisition Function Maximization: An acquisition function, using the surrogate's predictions, quantifies the utility of testing a new point. It balances exploitation (probing near high-performing known conditions) and exploration (probing regions of high uncertainty). The next experiment is selected by maximizing this function.
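To make the surrogate step concrete, here is a minimal sketch of a zero-mean GP posterior with a squared-exponential kernel, written directly in NumPy. The kernel choice, the fixed `length_scale`, and the toy data are assumptions for illustration; in practice a library such as scikit-learn or BoTorch would also fit the hyperparameters.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential covariance between two sets of 1-D inputs."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-6, length_scale=1.0):
    """Posterior mean and standard deviation of a zero-mean GP at x_query."""
    k_tt = rbf_kernel(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query, length_scale)
    solved = np.linalg.solve(k_tt, k_tq)            # K_tt^{-1} K_tq
    mean = solved.T @ y_train
    # The prior variance of this kernel is 1; subtract what the data explain.
    var = 1.0 - np.sum(k_tq * solved, axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

# Hypothetical data: one scaled input (e.g., temperature on [0, 1]) and centered yields.
x_obs = np.array([0.1, 0.4, 0.9])
y_obs = np.array([-0.5, 0.8, 0.1])
x_new = np.array([0.4, 0.65, 2.5])
mu, sigma = gp_posterior(x_obs, y_obs, x_new, length_scale=0.2)
```

The uncertainty `sigma` is close to zero at the already-tested input 0.4, larger between observations, and returns to the prior value far from any data. This is the quantity the acquisition function trades off against the mean.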

Key advantages for reaction optimization include handling noisy data, integrating prior knowledge, and optimizing over continuous, discrete, or categorical variables (e.g., catalyst, solvent, temperature).

Table 1: Comparison of Common Acquisition Functions in Bayesian Optimization

| Acquisition Function | Key Formula/Principle | Exploration-Exploitation Balance | Best For |

|---|---|---|---|

| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x*), 0)] | Moderate, tunable via parameter ξ | General-purpose, robust |

| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + κ * σ(x) | Explicitly controlled by κ | Controlled exploration; theoretical guarantees |

| Probability of Improvement (PI) | P(f(x) > f(x*) + ξ) | Can be overly greedy | Rapid initial improvement, simple objectives |
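The three acquisition functions in the table have closed forms when the surrogate prediction at a point is Gaussian with mean μ and standard deviation σ. The sketch below assumes maximization and uses only the standard library; the function names and the two example candidates are illustrative.

```python
import math

def _norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.01):
    """E[max(f - best - xi, 0)] for f ~ N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _norm_cdf(z) + sigma * _norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """mu + kappa * sigma; larger kappa favors exploration."""
    return mu + kappa * sigma

def probability_of_improvement(mu, sigma, best, xi=0.01):
    """P(f > best + xi) for f ~ N(mu, sigma^2)."""
    if sigma <= 0.0:
        return 1.0 if mu > best + xi else 0.0
    return _norm_cdf((mu - best - xi) / sigma)

# Hypothetical candidates: (predicted yield %, predictive std), current best yield 85%.
best = 85.0
safe = (88.0, 1.0)     # slightly better than the best, well understood
risky = (80.0, 10.0)   # worse on average, but highly uncertain
```

With these numbers, PI strongly prefers the `safe` candidate while UCB with κ=2 prefers the `risky` one (100 vs. 90), which illustrates the "overly greedy" versus "explicitly controlled" entries in the table.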

Table 2: Illustrative BO Performance vs. Traditional Methods in Reaction Yield Optimization

| Optimization Method | Avg. Experiments to Reach >90% Yield | Best Yield Found (%) | Key Limitation |

|---|---|---|---|

| Bayesian Optimization (GP-UCB) | 18 ± 3 | 95.2 | Computationally intensive surrogate fitting |

| Grid Search | 45 (full factorial) | 94.8 | Exponentially scales with parameters |

| Random Search | 35 ± 8 | 92.1 | No information gain between experiments |

| One-Variable-at-a-Time (OVAT) | 28 ± 5 | 88.5 | Fails to capture parameter interactions |

Experimental Protocols

Protocol 1: Bayesian Optimization for Pd-Catalyzed Cross-Coupling Reaction

Objective: Maximize reaction yield by optimizing four continuous variables: Temperature (30-100°C), Catalyst Loading (0.5-5.0 mol%), Reaction Time (1-24 h), and Equiv. of Base (1.0-3.0).

Materials: See The Scientist's Toolkit below.

Pre-optimization:

- Define parameter bounds and objective (HPLC yield).

- Select an initial experimental design (e.g., 6 points via Latin Hypercube Sampling) and execute.

- Initialize BO algorithm with data from step 2. Standardize all input variables.

Iterative Optimization Cycle (Repeat until convergence or budget exhausted):

- Train Surrogate Model: Fit a Gaussian Process (GP) with a Matérn kernel to all collected (input, yield) data. Use maximum likelihood estimation for kernel hyperparameters.

- Propose Next Experiment: Calculate the Upper Confidence Bound (UCB, κ=2.0) across a dense grid of the parameter space. Identify the set of conditions (T, Cat, t, Base) that maximizes UCB.

- Conduct Experiment: Perform the reaction under the proposed conditions in triplicate. Quench, work up, and analyze by HPLC using a calibrated internal standard.

- Update Dataset: Record the average yield. Append the new data point to the historical dataset.

- Check Stopping Criterion: Proceed if the iteration count is <50 AND the improvement in best yield over the last 10 iterations is >2%. Otherwise, terminate.
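The stopping rule in the last step can be written as a small predicate. This sketch assumes that "improvement >2%" means a gain of more than 2 percentage points in the best observed yield over the last 10 iterations, and that the loop always continues until 10 iterations of history exist; both readings, and the function name, are assumptions.

```python
def should_continue(best_yield_history, max_iterations=50, window=10, min_gain=2.0):
    """Decide whether to run another BO iteration.

    best_yield_history[i] is the best yield (%) observed after iteration i + 1.
    """
    n_done = len(best_yield_history)
    if n_done >= max_iterations:
        return False                      # iteration budget exhausted
    if n_done <= window:
        return True                       # not enough history to judge stagnation
    gain = best_yield_history[-1] - best_yield_history[-1 - window]
    return gain > min_gain                # stop once progress has stalled
```

For example, a campaign whose best yield has stayed at 70% for the last 10 iterations returns `False`, while one that gained 10 points over the same window returns `True`.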

Protocol 2: Multi-Objective BO for Selective Inhibition

Objective: Optimize reaction conditions to maximize yield of a kinase inhibitor analog while minimizing the formation of a toxic regioisomer byproduct.

- Define a vector objective: [Yield(%), Isomer(%)]. Aim to maximize Yield and minimize Isomer.

- Use a GP surrogate model for each objective.

- Employ the Expected Hypervolume Improvement (EHVI) acquisition function to propose experiments that expand the Pareto-optimal front.

- Follow a workflow similar to Protocol 1, but selecting conditions based on EHVI and analyzing outcomes for both objectives.
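The Pareto-optimal front that EHVI tries to expand can be extracted from completed experiments with a simple dominance check. The sketch below covers only this post-hoc filtering step, not the EHVI acquisition itself; the function name and the example results are hypothetical.

```python
def pareto_front(results):
    """Return the non-dominated (yield %, isomer %) pairs.

    Yield is maximized and isomer content is minimized. A result is dominated
    if another result is at least as good on both objectives and strictly
    better on at least one.
    """
    front = []
    for i, (y_i, iso_i) in enumerate(results):
        dominated = any(
            (y_j >= y_i and iso_j <= iso_i) and (y_j > y_i or iso_j < iso_i)
            for j, (y_j, iso_j) in enumerate(results)
            if j != i
        )
        if not dominated:
            front.append((y_i, iso_i))
    return sorted(front)

# Hypothetical outcomes of five reactions: (yield %, regioisomer %).
runs = [(72, 4.0), (85, 9.0), (80, 3.5), (85, 6.0), (60, 1.0)]
front = pareto_front(runs)
```

Here `(72, 4.0)` is dominated by `(80, 3.5)` and `(85, 9.0)` by `(85, 6.0)`, so three trade-off points remain on the front.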

Visualizations

[Figure: Bayesian Optimization Iterative Cycle]

[Figure: Gaussian Process Prior and Posterior]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for BO-Guided Reaction Optimization

| Reagent / Material | Function / Role in BO Workflow |

|---|---|

| Automated Parallel Reactor (e.g., Chemspeed, Unchained Labs) | Enables high-throughput execution of proposed condition arrays from the BO algorithm, ensuring reproducibility and speed. |

| HPLC-MS with Automated Sampler | Provides quantitative yield/purity data (the objective function) for each reaction, essential for updating the BO dataset. |

| Bayesian Optimization Software (e.g., BoTorch, GPyOpt, custom Python) | Core computational engine for building surrogate models and calculating acquisition functions to propose next experiments. |

| Chemical Libraries (Solvents, Catalysts, Reagents) | Broad stock of categorical variables for the BO algorithm to select from, defining the search space for reaction components. |

| Electronic Lab Notebook (ELN) with API | Critical for structured data logging, linking experimental results (yield) to precise input conditions, enabling automated data pipelining to the BO platform. |

Within a thesis on Bayesian optimization (BO) for reaction condition optimization in drug discovery, understanding the three core components (the surrogate model, the acquisition function, and the objective) is essential. Together they automate the search for conditions that optimize an outcome (e.g., yield, enantioselectivity) by intelligently balancing exploration and exploitation, drastically reducing costly experimental iterations.

Core Components: Definitions and Current Research

The Surrogate Model

The surrogate model is a probabilistic model that approximates the expensive, black-box objective function (e.g., chemical reaction yield). It provides a posterior distribution (mean and uncertainty) over the objective given observed data.

Current Trends (2024-2025): Gaussian Processes (GPs) remain the gold standard for low-dimensional problems (<20 variables). For high-dimensional chemical spaces (e.g., mixed continuous/categorical variables), advanced models are gaining traction:

- Deep Kernel Learning (DKL): Combines neural networks' feature extraction with GPs' uncertainty quantification.

- Sparse Gaussian Processes: Address scalability issues for large datasets.

- Bayesian Neural Networks (BNNs): Offer flexibility for complex, high-dimensional data but can be computationally intensive.

Table 1: Quantitative Comparison of Surrogate Model Performance

| Model Type | Best For Dimensionality | Uncertainty Estimation | Training Scalability | Typical Use in Reaction Optimization |

|---|---|---|---|---|

| Standard Gaussian Process | Low (<20) | Excellent | Poor (>500 data points) | Solvent, catalyst, temperature screening |

| Sparse Variational GP | Medium (10-50) | Good | Good | Multi-step reaction condition optimization |

| Deep Kernel Learning | High (50-500+) | Good | Medium | High-throughput experimentation (HTE) data |

| Bayesian Neural Network | Very High (100+) | Moderate | Poor | Complex biochemical or pharmacokinetic objectives |

The Acquisition Function

The acquisition function uses the surrogate's posterior to decide the next point(s) to evaluate by balancing predicted performance (exploitation) and model uncertainty (exploration).

Leading Acquisition Functions:

- Expected Improvement (EI): The most widely used function. Measures the expected gain over the current best observation.

- Upper Confidence Bound (UCB): Adds a parameter (κ) to control the exploration-exploitation trade-off explicitly: UCB(x) = μ(x) + κ * σ(x).

- Knowledge Gradient (KG): Considers the value of information after the next evaluation, beneficial in batch settings.

- q-EI / q-UCB: Extensions for parallel or batch evaluation, critical for modern lab automation.

Table 2: Key Metrics of Popular Acquisition Functions

| Function | Parallelizable | Hyperparameter Sensitive | Computationally Efficient | Dominant Use Case |

|---|---|---|---|---|

| Expected Improvement (EI) | No (requires q-EI) | Low | High | Sequential optimization of single reactions |

| Upper Confidence Bound (UCB) | Yes | Moderate (κ) | High | Highly automated platforms with clear trade-off needs |

| Knowledge Gradient (KG) | Yes (q-KG) | Low | Low (complex) | Expensive batch experiments (e.g., biologics development) |

| Thompson Sampling | Yes | Low | Medium | Very large search spaces (e.g., polymer discovery) |

The Objective

The objective function is the costly experiment to be optimized. In reaction optimization, it is often a composite function balancing multiple outcomes.

Common Objectives in Drug Development:

- Primary: Reaction yield, enantiomeric excess (ee), purity.

- Composite: Weighted sum of yield and cost, or multi-objective optimization (Pareto fronts) for yield vs. environmental factor (e.g., E-factor).

- Constrained: Maximize yield subject to impurity being below a threshold.
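Composite and constrained objectives are often reduced to a single number before being passed to the optimizer. The sketch below shows two common scalarizations, a weighted sum and a soft penalty; the weights, the impurity limit, and the penalty factor are placeholder values that would need to be chosen for a real campaign.

```python
def weighted_objective(yield_pct, cost_per_gram, w_yield=1.0, w_cost=0.1):
    """Weighted sum: reward yield, penalize reagent cost (weights are assumptions)."""
    return w_yield * yield_pct - w_cost * cost_per_gram

def constrained_objective(yield_pct, impurity_pct, impurity_limit=0.5, penalty=10.0):
    """Yield with a linear penalty for each % of impurity above the limit."""
    excess = max(impurity_pct - impurity_limit, 0.0)
    return yield_pct - penalty * excess
```

A reaction with 90% yield and 0.3% impurity keeps its full score of 90, whereas the same yield with 1.5% impurity is scored as 80. A soft penalty like this is a simplification; dedicated constrained-BO methods model the constraint with its own surrogate.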

Experimental Protocol: A Standard Bayesian Optimization Loop for Reaction Screening

Aim: To autonomously optimize the yield of a Pd-catalyzed cross-coupling reaction.

Protocol Steps:

- Define Search Space: Specify bounds/choices for continuous (temperature: 25-100°C, time: 1-24 h) and categorical (solvent: DMF, toluene, dioxane; ligand: L1-L4) variables.

- Initial Design: Perform a space-filling design (e.g., Latin Hypercube Sampling) for n=8 initial experiments. Execute reactions in parallel, purify, and quantify yield (HPLC analysis).

- Surrogate Model Training: Standardize input variables. Train a GP model with a Matérn kernel (ν=2.5) using the n input-condition → output-yield pairs. Optimize kernel hyperparameters via marginal likelihood maximization.

- Acquisition Optimization: Using the trained GP, compute the Expected Improvement (EI) across the search space. Identify the condition set x_next that maximizes EI. For parallel execution, optimize q-EI for a batch of 4 suggestions.

- Experiment & Update: Execute the reaction(s) at the suggested condition(s) x_next. Measure the objective (yield). Append the new data (x_next, y_next) to the existing dataset.

- Iteration: Repeat steps 3-5 for a predefined budget (e.g., 40 total experiments) or until a performance threshold (e.g., >90% yield) is met.

- Validation: Conduct triplicate experiments at the predicted optimal conditions to confirm reproducibility.
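The protocol steps above can be sketched end to end on a simulated reaction. This is a simplified illustration under several assumptions: the "experiment" is a made-up smooth function of two scaled inputs, the initial design is uniform random rather than Latin Hypercube, the Matérn length scale is fixed instead of fitted by marginal likelihood, EI is maximized over a grid rather than with a numerical optimizer, and suggestions are sequential (no q-EI batches).

```python
import math
import numpy as np

def matern52(a, b, length_scale=0.3):
    """Matérn nu=5/2 covariance between the rows of a and the rows of b."""
    d = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)) / length_scale
    return (1.0 + math.sqrt(5.0) * d + 5.0 * d**2 / 3.0) * np.exp(-math.sqrt(5.0) * d)

def gp_predict(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and std of a GP fitted to standardized yields."""
    y_mean, y_std = y_train.mean(), y_train.std() + 1e-12
    y_norm = (y_train - y_mean) / y_std
    k_tt = matern52(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = matern52(x_train, x_query)
    solved = np.linalg.solve(k_tt, k_tq)
    mean = solved.T @ y_norm
    var = np.clip(1.0 - (k_tq * solved).sum(0), 1e-12, None)
    return mean * y_std + y_mean, np.sqrt(var) * y_std

def expected_improvement(mean, std, best):
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def simulated_yield(x):
    """Hypothetical stand-in for the lab experiment; inputs are scaled to [0, 1]."""
    temp, hours = x
    return 95.0 * math.exp(-((temp - 0.7) ** 2 + (hours - 0.4) ** 2) / 0.08)

rng = np.random.default_rng(0)
grid = np.array([[t, h] for t in np.linspace(0, 1, 21) for h in np.linspace(0, 1, 21)])
x_obs = rng.random((8, 2))                        # initial design (n=8)
y_obs = np.array([simulated_yield(x) for x in x_obs])

for _ in range(32):                               # budget: 40 experiments in total
    mean, std = gp_predict(x_obs, y_obs, grid)    # surrogate model training
    x_next = grid[np.argmax(expected_improvement(mean, std, y_obs.max()))]
    x_obs = np.vstack([x_obs, x_next])            # experiment and update
    y_obs = np.append(y_obs, simulated_yield(x_next))
    if y_obs.max() > 90.0:                        # performance threshold
        break

best_conditions, best_yield = x_obs[np.argmax(y_obs)], y_obs.max()
```

In a real campaign `simulated_yield` is replaced by running and analyzing the reaction, and categorical variables such as solvent or ligand need an encoding before they can enter the kernel.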

Visualization: Bayesian Optimization Workflow

[Figure: Bayesian Optimization Closed-Loop for Reaction Screening]

The Scientist's Toolkit: Key Reagent Solutions for BO-Driven Reaction Optimization

Table 3: Essential Research Reagents and Materials

| Item | Function in BO Workflow | Example/Note |

|---|---|---|

| Automated Liquid Handling System | Enables precise, reproducible dispensing of reagents for initial design and iterative experiments. | Hamilton STAR, Labcyte Echo. Critical for high-throughput data generation. |

| Parallel Reactor Platform | Allows simultaneous execution of multiple reaction conditions under controlled environments (T, stirring). | HEL FlowCAT, Unchained Labs Junior. Provides the experimental throughput. |

| Online Analytical Instrument | Rapid, in-line quantification of reaction outcomes (yield, conversion). | Mettler Toledo ReactIR, HPLC/MS with autosampler. Accelerates the data collection step. |

| BO Software Library | Provides implemented algorithms for surrogate modeling and acquisition optimization. | BoTorch (PyTorch-based), Scikit-Optimize, GPyOpt. The computational core. |

| Chemical Variable Library | Pre-curated sets of solvents, catalysts, ligands, and reagents defining the categorical search space. | Solvents: varied polarity & proticity. Ligands: diverse steric/electronic profiles. |

| Standard Substrate Pair | Well-characterized starting materials for method development and BO algorithm benchmarking. | E.g., Boronic acid & aryl halide for Suzuki coupling optimization studies. |

Why Gaussian Processes Are the Go-To Surrogate for Chemical Spaces

Within Bayesian optimization (BO) frameworks for reaction condition screening and molecular property prediction, selecting a surrogate model is critical. Gaussian Processes (GPs) have become the predominant surrogate model for navigating chemical spaces due to their principled quantification of uncertainty and natural ability to model complex, non-linear relationships from sparse data.

Core Advantages in Chemical Space Applications

Table 1: Quantitative Comparison of Surrogate Models for Chemical Space

| Model Feature | Gaussian Process | Random Forest | Neural Network | Support Vector Machine |

|---|---|---|---|---|

| Intrinsic Uncertainty Quantification | Native (via predictive variance) | Via ensemble methods (e.g., jackknife) | Requires Bayesian or ensemble variants | Limited; typically point estimates |

| Data Efficiency | High (effective with <1000 samples) | Moderate | Low (requires large datasets) | Moderate |

| Handling of Sparse, Noisy Data | Excellent (via kernel & likelihood) | Good | Poor (prone to overfitting) | Moderate |

| Model Interpretability | Moderate (via kernel analysis) | High (feature importance) | Low | Moderate (support vectors) |

| Typical Optimization Overhead | O(n³) for training | O(n·trees) | Variable, often high | O(n² to n³) |

| Common Use in BO for Chemistry | >70% of published studies (est.) | ~15% | ~10% | <5% |

The cornerstone of a GP is its kernel (covariance) function, which dictates the similarity between molecular descriptors or fingerprints. For chemical spaces, the Matérn kernel (particularly ν=5/2) and composite kernels are standards.
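For reference, the Matérn kernel with ν=5/2 has the following standard closed form. The symbols are conventional notation rather than taken from this article: r is the distance between two inputs, ℓ is the length scale, and σ_f² is the signal variance.

```latex
k_{5/2}(x, x') = \sigma_f^2 \left( 1 + \frac{\sqrt{5}\, r}{\ell} + \frac{5 r^2}{3 \ell^2} \right)
\exp\!\left( -\frac{\sqrt{5}\, r}{\ell} \right),
\qquad r = \lVert x - x' \rVert
```

Functions drawn from this kernel are twice differentiable, which is smoother than ν=3/2 but less rigid than the infinitely smooth squared-exponential kernel.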

Application Notes: GP-Guided Reaction Optimization

Protocol 3.1: Setting Up a GP Surrogate for Reaction Yield Prediction

Objective: Build a GP model to predict reaction yield based on continuous (temperature, concentration) and categorical (catalyst, solvent) condition variables.

- Feature Representation: Encode continuous variables via min-max scaling. Encode categorical variables (e.g., 15 solvent choices) using a one-hot or learned embedding.

- Kernel Selection: Construct a composite kernel: (Matérn(ν=5/2) on continuous vars) + (WhiteKernel for noise). For categorical variables, use a separate Matérn kernel on their embeddings.

- Model Initialization: Use GaussianProcessRegressor (scikit-learn) or SingleTaskGP (BoTorch/GPyTorch). Set the likelihood to GaussianLikelihood (GPyTorch) to model homoscedastic noise.

- Training: Maximize the marginal log-likelihood using the L-BFGS-B optimizer. Typical convergence is achieved in <100 iterations for datasets of ~100 points.

- Validation: Perform 5-fold cross-validation. A well-specified GP should achieve a Q² > 0.6 and the predictive variance should correlate with absolute error.
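The feature representation step of this protocol can be sketched without any ML library. The bounds and the three-solvent shortlist below are hypothetical values chosen for illustration; a learned embedding would replace `one_hot` for larger categorical sets.

```python
SOLVENTS = ["DMF", "toluene", "dioxane"]  # hypothetical shortlist

def min_max_scale(value, low, high):
    """Map a continuous variable from [low, high] to [0, 1]."""
    return (value - low) / (high - low)

def one_hot(choice, choices):
    """Encode a categorical choice as a 0/1 vector with a single 1."""
    if choice not in choices:
        raise ValueError(f"unknown category: {choice!r}")
    return [1.0 if choice == c else 0.0 for c in choices]

def encode_conditions(temp_c, conc_m, solvent):
    """Build the GP input vector: two scaled continuous features plus solvent one-hot."""
    return [
        min_max_scale(temp_c, 25.0, 100.0),   # assumed temperature bounds, °C
        min_max_scale(conc_m, 0.05, 0.5),     # assumed concentration bounds, M
        *one_hot(solvent, SOLVENTS),
    ]

features = encode_conditions(100.0, 0.05, "toluene")
```

The resulting vector has one entry per continuous variable plus one per solvent, so the input dimension grows with the number of categorical choices. That growth is one reason embeddings are preferred when there are many options.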

The Scientist's Toolkit: Key Reagents for GP-Based Chemical BO

| Item | Function & Rationale |

|---|---|

| RDKit or Mordred | Generates molecular fingerprints (e.g., Morgan) or 2D/3D descriptors as input features for the GP. |

| scikit-learn / GPyTorch | Provides core GP regression implementations, optimizers, and kernel functions. |

| BoTorch or GPflow | Frameworks for scalable, high-level BO, integrating GP surrogates with acquisition functions. |

| Dragonfly or Sherpa | Alternative platforms for hyperparameter tuning and experimental design using GPs. |

| Custom Composite Kernels | Kernels combining linear, periodic, and Matérn components to model complex chemical relationships. |

Experimental Protocols

Protocol 4.1: Iterative Bayesian Optimization Loop for Catalyst Discovery

Objective: Identify a high-performance catalyst from a library of 500 candidates within 50 experimental cycles.

- Initial Design: Select an initial diverse set of 10 catalysts using the MaxMin diversity algorithm on molecular fingerprint space.

- Experimental Run: Perform reaction with each catalyst under standardized conditions; measure yield and selectivity.

- GP Model Update: Train a GP on the accumulated data, using a Tanimoto kernel on Morgan fingerprints to model catalyst similarity.

- Acquisition Function: Calculate Expected Improvement (EI) over the entire catalyst library. EI balances predicted high yield (exploitation) and high uncertainty (exploration).

- Next Experiment Selection: Choose the catalyst with the maximum EI score.

- Iteration: Repeat steps 2-5 until a yield >85% is achieved or the cycle limit is reached.

- Analysis: The final GP model provides a predictive landscape of catalyst performance, identifying structural features correlated with high yield.
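Two building blocks of this protocol, Tanimoto similarity and MaxMin diversity selection, can be illustrated with fingerprints represented as plain Python sets of on-bit indices. The toy library below is invented; real fingerprints would come from a cheminformatics toolkit such as RDKit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def maxmin_select(fingerprints, n_pick, start=0):
    """Greedy MaxMin: repeatedly add the candidate farthest from the picked set."""
    picked = [start]
    while len(picked) < min(n_pick, len(fingerprints)):
        def min_distance(idx):
            # Distance to the closest already-picked item (1 - similarity).
            return min(1.0 - tanimoto(fingerprints[idx], fingerprints[p]) for p in picked)
        remaining = [i for i in range(len(fingerprints)) if i not in picked]
        picked.append(max(remaining, key=min_distance))
    return picked

# Hypothetical 5-member catalyst library (sets of on-bit indices).
library = [{1, 2, 3, 4}, {1, 2, 3, 5}, {10, 11, 12}, {1, 2, 10, 11}, {20, 21}]
initial_set = maxmin_select(library, n_pick=3)
```

Starting from catalyst 0, the selection skips the near-duplicate catalyst 1 and picks the two structurally unrelated entries. The same `tanimoto` function, evaluated pairwise, gives the kernel matrix used by the GP in the model update step.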

Protocol 4.2: Uncertainty-Calibrated Virtual Screening

Objective: Prioritize 50,000 virtual compounds for synthesis and testing against a target protein, focusing on predicted high activity and reliable predictions.

- Data Preparation: Use a curated set of 200 known active/inactive compounds with pIC50 values.

- GP Model Training: Train a GP using an ensemble of kernels (e.g., RBF on MACCS keys + linear kernel on physicochemical descriptors).

- Prediction & Uncertainty Estimation: Predict mean (μ) and predictive variance (σ²) for all 50,000 virtual compounds.

- Ranking Strategy: Rank compounds not just by μ, but by a lower confidence bound (LCB) score: LCB = μ - κ * σ, where κ=1.5 (balances optimism with uncertainty). This penalizes compounds with high uncertainty.

- Synthesis Priority List: Select the top 100 compounds ranked by LCB for further consideration.
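The LCB ranking is a one-line computation once the GP predictions are available. The sketch below assumes predictions arrive as (identifier, mean pIC50, standard deviation) tuples; the compound names and values are invented.

```python
def rank_by_lcb(predictions, kappa=1.5, top_n=100):
    """Rank compounds by LCB = mu - kappa * sigma, highest score first.

    predictions: iterable of (compound_id, mean_pic50, std_pic50).
    """
    scored = [(cid, mu - kappa * sigma) for cid, mu, sigma in predictions]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]

# Hypothetical GP outputs for three virtual compounds.
virtual_hits = [("cpd-A", 7.8, 1.2), ("cpd-B", 7.2, 0.2), ("cpd-C", 6.5, 0.1)]
ranking = [cid for cid, _ in rank_by_lcb(virtual_hits)]
```

Although `cpd-A` has the highest predicted activity, its large uncertainty drops it to last place (LCB 6.0), behind `cpd-B` (6.9) and `cpd-C` (6.35).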

Visualizations

[Figure: Bayesian Optimization Loop with GP Surrogate]

[Figure: GP Kernel Composition for Chemical Features]

The Exploration vs. Exploitation Trade-Off in Experiment Design

In Bayesian optimization (BO) for reaction condition screening in drug development, the exploration-exploitation trade-off is central. The algorithm must decide between exploring uncertain regions of the chemical space (potentially finding superior conditions) and exploiting known high-performing regions to optimize the objective function. This document provides application notes and protocols for implementing this trade-off in machine learning-guided experimentation.

Quantitative Comparison of Acquisition Functions

The core of managing the trade-off lies in the choice of acquisition function. The table below summarizes key functions, their parameters, and trade-off characteristics.

Table 1: Acquisition Functions for Managing Exploration/Exploitation

| Acquisition Function | Key Parameter(s) | Exploitation Bias | Exploration Bias | Primary Use Case |

|---|---|---|---|---|

| Expected Improvement (EI) | ξ (xi) | High (ξ=0.01) | Adjustable (ξ=0.1+) | General-purpose optimization |

| Upper Confidence Bound (UCB) | κ (kappa) | Low (κ=1.0) | High (κ=2.0+) | Directed exploration |

| Probability of Improvement (PI) | ξ (xi) | Very High | Low | Refining known optima |

| Thompson Sampling | Random sample from posterior | Balanced | Balanced | Stochastic parallelization |

| Entropy Search/Predictive Entropy Search | - | Information-theoretic | Maximizes information gain | Global mapping |

Data sourced from current literature (2024-2025) on Bayesian optimization benchmarks in chemical reaction space.

Experimental Protocol: Iterative BO Cycle for Reaction Optimization

This protocol details a standard cycle for optimizing a catalytic cross-coupling reaction using a BO framework.

Protocol 3.1: Iterative Bayesian Optimization Loop

Objective: Maximize reaction yield over a multidimensional condition space (e.g., catalyst loading, ligand, temperature, concentration, solvent).

Materials: See "Scientist's Toolkit" (Section 6).

Procedure:

Initial Design (Pure Exploration):

- Define parameter bounds and constraints (e.g., temperature: 25-100°C, catalyst: 0.5-5 mol%).

- Using a space-filling design (e.g., Latin Hypercube Sampling), run n initial experiments (n=8-16) to seed the model.

- Analyze yields (HPLC/LCMS) and record data.

Model Training (Gaussian Process):

- Normalize input features (e.g., min-max scale each to 0-1).

- Train a Gaussian Process (GP) regression model with a Matérn kernel. The GP provides a surrogate model of the reaction landscape: a mean prediction and uncertainty (variance) for any unobserved condition set.

Acquisition Function Optimization (Trade-off Decision):

- Select an acquisition function α(x) (e.g., EI with ξ=0.05).

- Maximize α(x) over the defined parameter space using a numerical optimizer (e.g., L-BFGS-B) to propose the next experiment's conditions. This step automatically balances exploring high-uncertainty regions and exploiting predicted high-yield regions.

Experiment Execution & Model Update:

- Perform the reaction at the proposed conditions.

- Quantify the yield.

- Append the new {conditions, yield} data pair to the training set.

- Retrain/update the GP model.

Iteration & Termination:

- Repeat steps 3-4 for a predefined number of iterations (e.g., 20-40) or until yield/convergence criteria are met (e.g., no improvement in max yield over 5 iterations).

- Analyze the final model to identify optimal conditions and interpret variable importance.

Protocol: Benchmarking Acquisition Functions

To empirically determine the best strategy for a specific reaction class, a benchmarking study is recommended.

Protocol 4.1: Benchmarking the Trade-off

- Select a known reaction system with a published or internally mapped yield landscape.

- Define a standardized initial design (same for all benchmarks).

- Run parallel, simulated BO campaigns using different acquisition functions (EI, UCB, PI) and multiple parameter settings (e.g., κ=1.0, 2.0, 3.0 for UCB).

- Track key metrics over iterations: Best Found Yield, Cumulative Regret, and Model Uncertainty Reduction.

- Compare the convergence rates and final outcomes to recommend a function for similar reaction spaces.
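Two of the tracked metrics, best found yield and cumulative regret, are simple running statistics over the sequence of observed yields. The sketch below assumes the true optimum of the benchmark landscape is known, which is only the case for simulated or fully mapped systems; the example numbers are invented.

```python
def best_so_far(yields):
    """Running maximum of the observed yields."""
    best, trace = float("-inf"), []
    for y in yields:
        best = max(best, y)
        trace.append(best)
    return trace

def cumulative_regret(yields, optimum):
    """Running sum of (optimum - observed yield) over the campaign."""
    total, trace = 0.0, []
    for y in yields:
        total += optimum - y
        trace.append(total)
    return trace

# Hypothetical campaign of four experiments on a landscape whose optimum is 95%.
observed = [45, 60, 55, 78]
best_trace = best_so_far(observed)
regret_trace = cumulative_regret(observed, optimum=95)
```

A strategy that converges quickly shows a flattening cumulative-regret curve; one that keeps exploring poor regions shows regret growing roughly linearly.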

Table 2: Sample Benchmark Results (Simulated Suzuki-Miyaura Optimization)

| Iteration | EI (ξ=0.05) Best Yield | UCB (κ=2.0) Best Yield | PI (ξ=0.01) Best Yield | Random Search Best Yield |

|---|---|---|---|---|

| 0 (Init) | 45% | 45% | 45% | 45% |

| 5 | 78% | 72% | 85% | 65% |

| 10 | 92% | 88% | 90% | 78% |

| 15 | 95% | 95% | 92% | 82% |

| 20 | 98% | 97% | 93% | 85% |

Simulated data based on recent publications comparing BO strategies in high-throughput experimentation.

Visualizations

[Figure: BO Workflow for Reaction Optimization]

[Figure: Acquisition Functions Balance Trade-Off]

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Toolkit for ML-Guided Reaction Optimization

| Item | Function & Relevance to BO |

|---|---|

| High-Throughput Experimentation (HTE) Plate/Block | Enables parallel execution of initial design or batch proposals, drastically reducing cycle time per iteration. |

| Automated Liquid Handling System | Provides precise, reproducible dispensing of reagents (catalysts, ligands, substrates) across multidimensional condition arrays. Critical for reliable data generation. |

| Online/At-line Analytical (HPLC, UPLC-MS, GC) | Rapid yield/selectivity quantification to close the BO loop quickly. Integration with data pipelines is ideal. |

| Chemical Inventory & ELN | Structured data on reagent properties (e.g., pKa, steric volume) for feature engineering, enhancing the GP model's predictive power. |

| BO Software Library (e.g., BoTorch, Ax, GPyOpt) | Provides implemented acquisition functions, GP models, and optimization routines to build the experimental workflow. |

| Cloud/High-Performance Computing (HPC) | Resources for training GP models and optimizing acquisition functions over high-dimensional spaces, which is computationally intensive. |

The optimization of reaction conditions in chemical synthesis and drug development is a fundamental challenge. This document, framed within a thesis on Bayesian Optimization (BO) for machine learning-driven research, compares two traditional experimental design methods, One-Factor-at-a-Time (OFAT, also called one-variable-at-a-time or OVAT) and Full Factorial Design (FFD), with the emerging approach of Bayesian Optimization. The objective is to provide application notes and detailed protocols for researchers aiming to efficiently navigate complex experimental spaces, such as reaction condition optimization, where factors like temperature, catalyst loading, pH, and solvent composition interact non-linearly.

Methodological Comparison: Core Principles

One-Factor-at-a-Time (OFAT): An iterative, sequential approach where one variable is changed while all others are held constant at a baseline. It is simple to execute and interpret but fails to detect interactions between factors, often leading to suboptimal results.

Full Factorial Design (FFD): A structured approach that experiments with all possible combinations of levels for all factors. It captures all main effects and interactions but becomes prohibitively expensive (experimentally) as the number of factors or levels increases (experiments = L^k, where L is levels and k is factors).

Bayesian Optimization (BO): A machine learning framework for global optimization of expensive black-box functions. It builds a probabilistic surrogate model (e.g., Gaussian Process) of the objective (e.g., reaction yield) and uses an acquisition function (e.g., Expected Improvement) to guide the selection of the next most promising experiment. It is highly sample-efficient, actively manages the trade-off between exploration and exploitation, and naturally handles noise.
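The L^k growth of a full factorial design, compared with the roughly linear growth of OFAT, is easy to verify by enumeration. The factor names and levels below are illustrative assumptions.

```python
from itertools import product

# Hypothetical design: 4 factors at 3 levels each.
levels = {
    "temp_c": [60, 80, 100],
    "cat_mol_pct": [1.0, 2.0, 3.0],
    "base_eq": [1.0, 2.0, 3.0],
    "solvent": ["A", "B", "C"],
}

# Full factorial: every combination of levels, L^k = 3^4 runs.
full_factorial = list(product(*levels.values()))

# OFAT: one baseline run plus the non-baseline levels of each factor in turn.
ofat_runs = 1 + sum(len(options) - 1 for options in levels.values())
```

Here the full factorial needs 81 runs and OFAT needs 9. Adding a fifth three-level factor raises the full factorial to 243 runs but OFAT only to 11, which is why neither extreme scales well: one is too expensive, the other too blind to interactions.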

Table 1: High-Level Method Comparison

| Feature | OFAT | Full Factorial (2-Level) | Bayesian Optimization |

|---|---|---|---|

| Experimental Efficiency | Low | Very Low (exponential growth) | Very High |

| Ability to Find Global Optimum | Low | High (within design space) | Very High |

| Handling of Factor Interactions | None | Complete | Model-Dependent |

| Number of Experiments for k factors | Linear (~k*L) | Exponential (2^k) | Sub-linear (Typically < 50) |

| Ease of Implementation | Very High | Medium | Medium (requires ML expertise) |

| Adaptivity | None | None | High |

| Best Use Case | Preliminary screening, very few factors | Small factor sets (k<5), where interactions are critical | Expensive experiments, >4 factors, non-linear responses |

Table 2: Simulated Optimization of a Palladium-Catalyzed Cross-Coupling Reaction (4 factors). Target: maximize yield. Baseline OFAT yield: 65%. Theoretical maximum: 95%.

| Method | Avg. Experiments to Reach >90% Yield | Total Expts. for Full Evaluation | Max Yield Found | Key Interaction Identified? |

|---|---|---|---|---|

| OFAT | Not Reached (plateau at ~78%) | 16 | 78% | No |

| Full Factorial (2^4) | 16 (all required) | 16 | 92% | Yes |

| Bayesian Optimization | 11 (± 3) | 20 (stopping point) | 94% | Yes (via model) |

Experimental Protocols

Protocol 4.1: OFAT for Preliminary Reaction Scoping

Objective: Identify rough trends for individual factors. Materials: See Scientist's Toolkit. Procedure:

- Establish Baseline: Run reaction with pre-defined standard conditions (e.g., 80°C, 2 mol% Catalyst, 1.5 eq. Base, Solvent A).

- Vary Temperature: Perform reactions at 60, 70, 80, 90, 100°C, holding all other factors at baseline.

- Analyze: Plot yield vs. temperature. Select the best level (e.g., 90°C).

- Iterate: Using the new best temperature (90°C), vary Catalyst Loading (1, 1.5, 2, 2.5 mol%) while holding others. Continue sequentially for all factors.

- Final Condition: The combination of individually optimal levels is declared the optimum.
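The OFAT procedure above can be sketched in a few lines. This is a minimal illustration, not a validated model: `toy_yield`, its ridge-shaped interaction between temperature and catalyst loading, and the 1.0 mol% baseline are hypothetical choices made only to show how a sequential search can stall when factors interact.

```python
import itertools

def toy_yield(temp_c, cat_mol_pct):
    """Hypothetical yield surface: the best catalyst loading shifts with temperature."""
    ridge = (temp_c - 40.0) / 30.0
    return 90.0 - 30.0 * (cat_mol_pct - ridge) ** 2 - 0.3 * abs(temp_c - 100.0)

def ofat(levels, baseline, objective):
    """Vary one factor at a time, freezing the best level before moving to the next."""
    current = dict(baseline)
    for factor, candidates in levels.items():
        scores = {}
        for value in candidates:
            trial = dict(current, **{factor: value})
            scores[value] = objective(**trial)
        current[factor] = max(scores, key=scores.get)
    return current, objective(**current)

levels = {
    "temp_c": [60, 70, 80, 90, 100],
    "cat_mol_pct": [1.0, 1.5, 2.0, 2.5],
}
baseline = {"temp_c": 80, "cat_mol_pct": 1.0}  # assumed starting point

ofat_best, ofat_yield = ofat(levels, baseline, toy_yield)

# Exhaustive search over the same grid, for comparison only.
grid_yield, grid_best = max(
    (toy_yield(t, c), {"temp_c": t, "cat_mol_pct": c})
    for t, c in itertools.product(levels["temp_c"], levels["cat_mol_pct"])
)

print("OFAT:", ofat_best, round(ofat_yield, 1))
print("Grid:", grid_best, round(grid_yield, 1))
```

On this toy surface the sequential search settles on a condition that the exhaustive grid shows is not the best available, because the favorable catalyst loading depends on temperature.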

Protocol 4.2: Full Factorial Design (2-Level) for Interaction Analysis

Objective: Quantify main effects and all two-factor interactions. Design: 2^4 design for factors A (Temp: Low/High), B (Catalyst: Low/High), C (BaseEq: Low/High), D (Solvent: Type1/Type2). Procedure:

- Define Levels: Set realistic high/low levels for each factor (e.g., Temp: 70°C / 110°C).

- Generate Design Matrix: List all 16 unique combinations.

- Randomize Order: Randomize run order to minimize bias.

- Execute Experiments: Perform each reaction in the randomized order.

- Statistical Analysis: Use multiple linear regression (Yield = β0 + β1A + β2B + β3C + β4D + β12AB + ...) to calculate effect sizes and p-values. A significant interaction term (e.g., A*B) indicates the effect of temperature depends on catalyst loading.
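As a rough sketch of the design-matrix and analysis steps, the snippet below builds the 2^4 design in coded units (-1 = low, +1 = high), randomizes the run order, and estimates effects by simple contrasts. The factor names and the noise-free `yields` are hypothetical. In coded units, a contrast effect equals twice the corresponding regression coefficient, so this is a shortcut to the regression described above rather than a replacement for it (no p-values are computed here).

```python
import itertools
import random

factors = ["A_temp", "B_catalyst", "C_base_eq", "D_solvent"]

# All 2^4 = 16 combinations of coded levels.
design = [dict(zip(factors, combo))
          for combo in itertools.product([-1, 1], repeat=len(factors))]

# Randomized run order; a fixed seed keeps the plan reproducible.
run_order = list(range(len(design)))
random.Random(0).shuffle(run_order)

def effect(design, yields, *names):
    """Mean yield where the product of the named coded levels is +1,
    minus the mean where it is -1 (main effect or interaction contrast)."""
    plus, minus = [], []
    for row, y in zip(design, yields):
        sign = 1
        for name in names:
            sign *= row[name]
        (plus if sign > 0 else minus).append(y)
    return sum(plus) / len(plus) - sum(minus) / len(minus)

# Hypothetical noise-free responses with a temperature x catalyst interaction.
yields = [70 + 5 * r["A_temp"] + 3 * r["B_catalyst"]
          + 4 * r["A_temp"] * r["B_catalyst"] for r in design]

print("A effect:", effect(design, yields, "A_temp"))
print("A*B interaction:", effect(design, yields, "A_temp", "B_catalyst"))
```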

Protocol 4.3: Bayesian Optimization for Efficient Optimization

Objective: Maximize reaction yield with a budget of 20 experiments. Procedure:

- Define Search Space: Specify continuous ranges for each factor (e.g., Temp: [50, 120]°C).

- Initial Design: Perform 4-5 initial experiments using a space-filling design (e.g., Latin Hypercube) to seed the model.

- Model & Iterate: For each iteration: a. Surrogate Modeling: Fit a Gaussian Process (GP) model to all data collected so far. b. Acquisition Maximization: Calculate the Expected Improvement (EI) across the search space. Select the factor combination that maximizes EI. c. Experiment: Run the reaction at the proposed conditions. d. Update: Add the new (input, yield) data point to the dataset.

- Termination: Stop after 20 experiments or when yield improvement plateaus. The best observed condition is the recommended optimum.
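The loop in Protocol 4.3 can be sketched end to end with only the Python standard library. Everything specific here is an assumption made for illustration: a single factor (temperature), a fixed-hyperparameter squared-exponential kernel instead of a fitted one, EI maximized over a grid, and `run_reaction` as a hypothetical stand-in for the real experiment. A production workflow would normally rely on a library such as BoTorch or scikit-learn.

```python
import math
import random

def rbf(a, b, ls=0.2):
    """Squared-exponential kernel on a normalized 1-D input (unit signal variance)."""
    return math.exp(-0.5 * ((a - b) / ls) ** 2)

def cholesky(A):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def forward_sub(L, b):
    """Solve L z = b for lower-triangular L."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z

def predict(X, L, w, x_star):
    """GP posterior mean and standard deviation at x_star (zero prior mean)."""
    v = forward_sub(L, [rbf(x, x_star) for x in X])  # v = L^-1 k*
    mu = sum(a * b for a, b in zip(v, w))            # k*^T K^-1 y
    var = max(1.0 - sum(a * a for a in v), 1e-12)    # k** - k*^T K^-1 k*
    return mu, math.sqrt(var)

def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best) * cdf + sigma * pdf

def run_reaction(temp_c):
    """Hypothetical experiment: yield (%) peaks near 95 C."""
    return 90.0 * math.exp(-((temp_c - 95.0) / 25.0) ** 2)

T_LO, T_HI = 50.0, 120.0  # search space from the protocol

def to_temp(u):
    return T_LO + u * (T_HI - T_LO)

rng = random.Random(0)
X = [(i + rng.random()) / 4 for i in range(4)]  # 4 stratified seed points
Y = [run_reaction(to_temp(u)) for u in X]
grid = [i / 200 for i in range(201)]

while len(Y) < 20:  # budget of 20 experiments
    mean_y, span = sum(Y) / len(Y), max(Y) - min(Y)
    y_std = [(v - mean_y) / span for v in Y]  # center and rescale yields
    K = [[rbf(a, b) + (1e-4 if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]             # small jitter for stability
    L = cholesky(K)
    w = forward_sub(L, y_std)                  # w = L^-1 y
    best = max(y_std)
    x_next = max(grid, key=lambda u: expected_improvement(*predict(X, L, w, u), best))
    X.append(x_next)
    Y.append(run_reaction(to_temp(x_next)))

best_idx = max(range(len(Y)), key=Y.__getitem__)
print(f"best: {to_temp(X[best_idx]):.1f} C, yield {Y[best_idx]:.1f}%")
```

Grid search over the acquisition function is only practical in one or two dimensions; with more factors, a gradient-based or multi-start optimizer is the usual choice.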

Visual Workflows

Diagram 1: Sequential OFAT Workflow

Diagram 2: Full Factorial Design Process

Diagram 3: Bayesian Optimization Iterative Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Reaction Optimization Studies

| Reagent/Material | Function/Explanation | Example in Cross-Coupling |

|---|---|---|

| Precatalyst Systems | Source of active metal center; choice influences rate, selectivity, and functional group tolerance. | Pd(PPh3)4, Pd2(dba)3, XPhos Pd G3 |

| Ligand Libraries | Modulate catalyst properties (sterics, electronics); critical for optimization. | Phosphine (SPhos), N-Heterocyclic Carbene (IPr·HCl) ligands |

| Base Solutions | Scavenge acids, facilitate transmetalation; type and equivalence are key variables. | K2CO3 (aqueous), Cs2CO3, organic bases (DIPEA) |

| Anhydrous Solvents | Reaction medium; affects solubility, stability, and mechanism. | Toluene, 1,4-Dioxane, DMF, MeCN (sparged with N2) |

| Quenching Agents | Safely terminate reactions for analysis. | Aqueous NH4Cl, silica gel plugs |

| Internal Standards | For accurate yield determination via chromatographic analysis. | Trifluoromethylbenzene, tetradecane (GC); 1,3,5-trimethoxybenzene (NMR) |

| Analytical Standards | Pure samples for calibration and product identification. | Authentic sample of target product for HPLC/GC retention time and NMR comparison |

Implementing Bayesian Optimization: A Step-by-Step Workflow for Chemists

Within Bayesian optimization (BO) for reaction condition optimization, the initial and most critical step is the rigorous definition of the search space. This space is a multidimensional parameter domain where each axis represents a continuous or categorical reaction variable. A well-constructed search space bounds the BO algorithm's exploration, improving convergence efficiency and the practical relevance of discovered optima. This protocol details the systematic definition of search spaces for four fundamental parameters (catalysts, temperatures, solvents, and reagent equivalents), framing them as input variables for machine learning models.

Quantitative Parameter Ranges & Data Types

The following table summarizes typical ranges and data handling strategies for key parameters, based on current literature in automated synthesis and high-throughput experimentation (HTE).

Table 1: Search Space Parameter Specifications for Bayesian Optimization

| Parameter | Typical Type in BO | Recommended Range / Options | Data Encoding | Justification & Constraints |

|---|---|---|---|---|

| Catalyst | Categorical | E.g., Pd(PPh₃)₄, Pd(dba)₂, XPhos Pd G2, Ni(acac)₂, None | One-Hot or Label | Selection guided by reaction chemistry. Include a "no catalyst" option. |

| Temperature (°C) | Continuous (or Ordinal) | -78 to 250 (or solvent boiling point) | Normalized [0,1] | Lower bound set by cryogenic cooling; upper bound by solvent/reagent stability. |

| Solvent | Categorical | E.g., DMF, THF, Toluene, MeOH, ACN, DMSO, Water | One-Hot or SMILES | Prioritize solvents with diverse polarity, dielectric constant, and protic/aprotic nature. |

| Reagent Equivalents | Continuous | 0.5 to 3.0 (relative to limiting reagent) | Normalized [0,1] | Prevents large excesses that waste material or cause side reactions. |

| Reaction Time (hr) | Continuous | 0.5 to 48 | Log-scale normalization | Covers a broad dynamic range from fast to slow kinetics. |

| Concentration (M) | Continuous | 0.01 to 0.50 | Normalized [0,1] | Avoids overly dilute or viscous conditions. |
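One way to turn a row of Table 1 into a model input is sketched below: categorical parameters are one-hot encoded, continuous ones are min-max scaled to [0, 1], and reaction time is scaled on a log axis. The dictionary layout, key names, and the `encode` helper are illustrative assumptions, not the format of any particular BO library.

```python
import math

SPACE = {
    "catalyst": {"type": "categorical",
                 "options": ["Pd(PPh3)4", "Pd(dba)2", "XPhos Pd G2", "Ni(acac)2", "None"]},
    "temperature_c": {"type": "continuous", "bounds": (-78.0, 250.0)},
    "solvent": {"type": "categorical",
                "options": ["DMF", "THF", "Toluene", "MeOH", "ACN", "DMSO", "Water"]},
    "equivalents": {"type": "continuous", "bounds": (0.5, 3.0)},
    "time_hr": {"type": "continuous", "bounds": (0.5, 48.0), "log": True},
    "concentration_m": {"type": "continuous", "bounds": (0.01, 0.50)},
}

def encode(condition, space=SPACE):
    """Map one reaction condition (dict) to a flat numeric feature vector."""
    vec = []
    for name, spec in space.items():
        value = condition[name]
        if spec["type"] == "categorical":
            # One-hot: a 1.0 in the slot of the chosen option, 0.0 elsewhere.
            vec.extend(1.0 if value == opt else 0.0 for opt in spec["options"])
        else:
            lo, hi = spec["bounds"]
            if spec.get("log"):
                lo, hi, value = math.log(lo), math.log(hi), math.log(value)
            vec.append((value - lo) / (hi - lo))
    return vec

condition = {
    "catalyst": "XPhos Pd G2", "temperature_c": 86.0, "solvent": "THF",
    "equivalents": 1.75, "time_hr": 48.0, "concentration_m": 0.255,
}
vec = encode(condition)
print(len(vec), vec)
```

One-hot encoding treats all catalysts or solvents as equally dissimilar; descriptor-based encodings are an alternative when chemical similarity should inform the model.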

Experimental Protocol: High-Throughput Search Space Validation

This protocol describes the generation of a small, space-filling initial dataset (e.g., via Latin Hypercube Sampling) to validate the defined search space before full BO campaign initiation.

Materials & Reagents

Table 2: Research Reagent Solutions & Essential Materials

| Item | Function / Specification |

|---|---|

| Liquid Handling Robot | For precise, automated dispensing of catalysts, solvents, and reagents in microliter volumes. |

| HTE Reaction Blocks | 96-well or 384-well plates compatible with heating, stirring, and inert atmosphere. |

| Catalyst Stock Solutions | 0.1 M solutions in appropriate dry solvent (e.g., THF, Toluene), stored under argon. |

| Anhydrous Solvents | Stored over molecular sieves under inert gas to exclude moisture from hydrolysis-sensitive reactions. |

| Internal Standard Solution | Stock solution of a pre-weighed, inert reference compound, dispensed at a fixed amount per well for HPLC/GC-MS quantification. |

| Automated LC-MS/GC-MS System | High-throughput analytical system for rapid yield/conversion analysis. |

Step-by-Step Procedure

- Algorithmic Design: Use a Latin Hypercube Sampling (LHS) algorithm to select 20-30 distinct reaction condition sets from the defined multidimensional search space (Table 1). Ensure non-collapsing projections for each parameter.

- Plate Map Generation: Translate the LHS output into a robotic dispensing instruction file. Assign each condition to a specific well, including positive (known high-yielding condition) and negative (no catalyst, no heat) controls.

- Automated Dispensing: a. Purge the HTE reaction block with inert gas (N₂ or Ar). b. Using the liquid handler, first dispense the specified volumes of solvent to each well. c. Dispense the stock solutions of the substrate(s) and internal standard. d. Dispense the specified volume of catalyst stock solution. For "no catalyst" wells, dispense pure solvent. e. Finally, dispense the reagent stock solution to initiate the reaction.

- Reaction Execution: Seal the reaction block, initiate stirring, and transfer it to a pre-equilibrated heating block set to the specified temperature for each well (using a gradient thermal cycler if available). Run for the designated time.

- Quenching & Analysis: Automatically inject a quenching agent (e.g., a defined volume of acid or scavenger resin solution) into each well. Dilute an aliquot from each well with a standard analysis solvent.

- High-Throughput Analysis: Inject samples via an autosampler into the LC-MS/GC-MS. Quantify yield or conversion relative to the internal standard using calibrated curves or direct UV/ELSD response.

- Data Aggregation: Compile results (Yield/Conversion %) into a table matching the initial LHS design matrix. This forms the initial dataset for the BO algorithm.
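The sampling step of this procedure can be sketched with a small Latin Hypercube routine. It places exactly one sample in each of n equal-width strata per dimension, which is the non-collapsing property mentioned above. The three continuous parameters and the 25-100 °C temperature window are assumed for illustration; categorical parameters (catalyst, solvent) would need separate handling, for example balanced random assignment.

```python
import random

def latin_hypercube(n_samples, n_dims, seed=0):
    """Return n_samples points in [0, 1)^n_dims with one point per stratum per dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One random point inside each of the n_samples strata...
        col = [(i + rng.random()) / n_samples for i in range(n_samples)]
        # ...then shuffle so strata are paired randomly across dimensions.
        rng.shuffle(col)
        columns.append(col)
    return [list(row) for row in zip(*columns)]

# Assumed continuous ranges for the illustration.
bounds = {
    "temperature_c": (25.0, 100.0),
    "equivalents": (0.5, 3.0),
    "concentration_m": (0.01, 0.50),
}

unit = latin_hypercube(24, len(bounds))
design = [
    {name: lo + u * (hi - lo) for u, (name, (lo, hi)) in zip(row, bounds.items())}
    for row in unit
]

for condition in design[:3]:
    print({k: round(v, 3) for k, v in condition.items()})
```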

Bayesian Optimization Workflow Integration

Diagram 1: BO Loop for Reaction Optimization

Parameter Interaction Diagram

Diagram 2: Key Parameter Interactions Affecting Outcome

In Bayesian Optimization (BO) for chemical reaction optimization, the objective function is the critical bridge between experimental outcomes and algorithmic learning. It quantitatively encodes the chemist's primary goal—maximizing yield, enhancing selectivity, or minimizing cost—into a single, computable metric. The formulation of this function directly dictates the efficiency and practical relevance of the optimization campaign. Within a broader machine learning research thesis, this step represents the translation of chemical intuition into a landscape that the BO algorithm can navigate.

Quantitative Data: Common Objective Function Formulations

Table 1: Standard Objective Function Components for Reaction Optimization

| Primary Objective | Typical Mathematical Formulation | Key Variables | Advantages | Limitations |

|---|---|---|---|---|

| Maximizing Yield | f(x) = Yield(%) | x: Reaction parameters (e.g., temp., conc.) | Simple, direct, high throughput compatible. | Ignores impurities, cost, and sustainability. |

| Enhancing Selectivity | f(x) = Selectivity Index = [Product] / [Byproduct] or f(x) = -[Byproduct] | x: Parameters influencing pathway kinetics. | Drives towards cleaner reactions, reduces purification burden. | May compromise absolute yield. Requires analytical differentiation (e.g., GC, HPLC). |

| Minimizing Cost | f(x) = -[α*(Material Cost) + β*(Processing Cost) + γ*(Time Cost)] | α, β, γ: Weighting coefficients; Cost factors. | Promotes economically viable and scalable conditions. | Requires accurate cost models and weighting decisions. |

| Multi-Objective Composite | f(x) = w₁*Yield + w₂*Selectivity - w₃*Cost | w₁, w₂, w₃: Normalized weighting factors summing to 1. | Balances multiple, often competing, priorities. | Weight selection is subjective; requires domain expertise or Pareto front analysis. |

Table 2: Reported Performance of Different Objective Functions in BO Studies

| Study (Representative) | Reaction Type | Objective Function Chosen | BO Algorithm | Key Outcome | Reference Year* |

|---|---|---|---|---|---|

| Organic Synthesis | Pd-catalyzed C-N coupling | Yield (%) | Gaussian Process (GP)-BO | Achieved >95% yield in <15 experiments. | 2022 |

| Photoredox Catalysis | Alkene functionalization | Selectivity (Area% of desired isomer) | GP-BO | Improved regio-selectivity from 3:1 to >20:1. | 2023 |

| API Development | Multi-step sequence | Composite (0.7*Yield - 0.3*Cost) | Tree-structured Parzen Estimator (TPE) | Reduced estimated cost by 35% vs. baseline. | 2023 |

| Biocatalysis | Enzyme-mediated reduction | Yield * Enzyme Turnover Number | Batch BO | Optimized for both efficiency and catalyst stability. | 2024 |

Note: Information sourced from recent literature searches.

Experimental Protocols

Protocol 3.1: Establishing a Baseline and Defining a Composite Objective Function

Aim: To initiate a BO campaign for a novel Suzuki-Miyaura cross-coupling reaction with considerations for yield, selectivity (against homo-coupling), and reagent cost.

Materials: (See Scientist's Toolkit) Procedure:

- Initial Design of Experiment (DoE): Perform 6-8 initial reactions using a space-filling design (e.g., Latin Hypercube) across the defined parameter space (Catalyst Loading: 0.5-2.5 mol%; Temperature: 25-80°C; Equiv. of Base: 1.0-3.0).

- Analytical Quantification:

- Quench reactions and dilute for analysis.

- Analyze via UPLC with UV detection at 254 nm.

- Quantify Yield using a calibrated external standard of the target product.

- Quantify Selectivity as [Product Area] / ([Product Area] + [Homo-coupling Byproduct Area]).

- Cost Assignment: Calculate a normalized Cost Index for each condition using current catalog prices for catalysts, ligands, and reagents. Set the cheapest possible condition in the design space to an index of 1.0.

- Objective Function Calculation: For each experiment i, compute: Objective_i = (0.50 * Normalized_Yield_i) + (0.35 * Selectivity_i) + (0.15 * (1 / Cost_Index_i)). Normalization scales Yield and (1/Cost) from 0 to 1 relative to the initial dataset.

- Data Submission: Input the parameter sets and corresponding Objective values into the BO software platform as the training data.
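The composite score from this protocol can be computed as in the sketch below. The three example experiments are hypothetical, and the choice to return 0.0 when all values in a column are identical is an assumption made here to avoid division by zero. Selectivity is already a fraction between 0 and 1, so it is used without rescaling, as in the protocol.

```python
def min_max(values):
    """Scale values to [0, 1] relative to the dataset; constant columns map to 0.0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def composite_objective(yields_pct, selectivities, cost_indices,
                        weights=(0.50, 0.35, 0.15)):
    """Weighted sum of normalized yield, selectivity, and normalized 1/cost."""
    w_yield, w_sel, w_cost = weights
    norm_yield = min_max(yields_pct)
    norm_inv_cost = min_max([1.0 / c for c in cost_indices])
    return [w_yield * y + w_sel * s + w_cost * c
            for y, s, c in zip(norm_yield, selectivities, norm_inv_cost)]

# Hypothetical initial dataset of three experiments.
yields_pct = [40.0, 70.0, 85.0]
selectivities = [0.80, 0.90, 0.95]
cost_indices = [1.0, 1.5, 2.0]  # 1.0 = cheapest condition in the design space

scores = composite_objective(yields_pct, selectivities, cost_indices)
print([round(s, 4) for s in scores])
```

Because the normalization is relative to the dataset, scores computed on the initial experiments shift as new data arrive; fixing the scaling bounds up front is one way to keep scores comparable across iterations.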

Protocol 3.2: Iterative BO Loop for Objective Function Maximization

Aim: To execute the automated cycle of suggestion, experimentation, and learning. Procedure:

- Model Training: The BO algorithm (e.g., GP) models the relationship between reaction parameters and the composite objective score using the existing data.

- Acquisition Function Optimization: The algorithm's acquisition function (e.g., Expected Improvement) proposes the next 3-5 reaction conditions that balance exploration and exploitation.

- Robotic Execution: Program an automated liquid handling platform to prepare reactions in parallel according to the proposed conditions.

- Inline/Online Analysis: Transfer reaction aliquots to an inline HPLC or ReactIR for rapid analysis. Automate data processing to compute the objective score.

- Data Augmentation & Iteration: Append the new (parameters, objective) results to the training set. Return to Step 1. Continue until convergence (e.g., <5% improvement over 3 consecutive iterations) or a resource limit is reached.
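The convergence rule in the last step admits more than one reading. The sketch below takes it to mean that the running best objective improved by less than 5% (relative) in each of the last three iterations. The function names and the handling of non-positive scores are assumptions made for illustration.

```python
def running_best(scores):
    """Best objective value seen up to and including each iteration."""
    best, out = float("-inf"), []
    for s in scores:
        best = max(best, s)
        out.append(best)
    return out

def has_converged(best_so_far, rel_tol=0.05, patience=3):
    """True if each of the last `patience` iterations improved the running
    best by less than rel_tol (relative to the previous best)."""
    if len(best_so_far) < patience + 1:
        return False
    recent = best_so_far[-(patience + 1):]
    for prev, curr in zip(recent, recent[1:]):
        # Relative improvement is undefined for non-positive scores;
        # treat that case as "not converged" (an assumption).
        if prev <= 0 or (curr - prev) / prev >= rel_tol:
            return False
    return True

history = [0.40, 0.60, 0.61, 0.60, 0.62, 0.62]  # hypothetical objective scores
print(has_converged(running_best(history)))
```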

Visual Workflows

Title: Workflow for Formulating the BO Objective Function

Title: Data Fusion into a Single Objective Score

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in Objective Function Development | Example/Note |

|---|---|---|

| Automated Synthesis Platform (e.g., Chemspeed, HEL Flowcat) | Enables high-fidelity, reproducible execution of the reaction conditions proposed by the BO algorithm. Critical for gathering consistent data. | Flowcat systems allow precise control of continuous variables (temp, flow rate). |

| Inline/Online Analytical (e.g., ReactIR, HPLC-SFC) | Provides rapid, quantitative data (yield, conversion, selectivity) for immediate objective function calculation without manual workup. | ReactIR monitors functional group conversion in real-time. |

| Chemical Cost Database (Internal or Commercial) | Supplies up-to-date reagent, catalyst, and solvent pricing for calculating the economic component of a cost-informed objective function. | Can be integrated via API into the data processing pipeline. |

| Data Management Software (e.g., CDD Vault, Benchling) | Centralizes experimental parameters, analytical results, and calculated objective scores, ensuring traceability and easy data export for BO. | |

| BO Software Library (e.g., BoTorch, Ax Platform) | Provides the algorithmic backbone for modeling the objective function landscape and suggesting new experiments. | Ax offers user-friendly interfaces for composite metric definition. |

| Normalization Scripts (Python/R) | Custom code to scale disparate metrics (%, ratio, $) to a common range (e.g., 0-1) before weighted summation, preventing unit bias. | Essential for robust composite functions. |

Within a Bayesian optimization (BO) framework for reaction condition optimization, the surrogate model approximates the unknown objective function (e.g., reaction yield, enantiomeric excess). The Gaussian Process (GP) is the predominant choice due to its inherent uncertainty quantification. The kernel (or covariance function) is the core of the GP, defining its prior over functions and profoundly impacting BO performance. This protocol details the selection and tuning of GP kernels for chemical applications.

Kernel Selection: A Comparative Analysis

Kernels encode assumptions about function properties like smoothness, periodicity, and trends. The table below summarizes key kernels for chemical optimization.

Table 1: Common GP Kernels and Their Applicability in Chemical Optimization

| Kernel Name & Mathematical Form | Hyperparameters (θ) | Function Properties | Best For Chemical Use-Case | Key Reference |

|---|---|---|---|---|

| Radial Basis Function (RBF) / Squared Exponential: k(x,x') = σ² exp(-0.5 ‖x-x'‖² / l²) | Signal variance (σ²), Length-scale (l) | Infinitely differentiable, very smooth. | Default choice for smoothly varying, continuous reaction landscapes (e.g., yield vs. temperature, concentration). | Rasmussen & Williams (2006), Gaussian Processes for Machine Learning |

| Matérn (ν=3/2): k(x,x') = σ² (1 + √3 r/l) exp(-√3 r/l), where r = ‖x-x'‖ | Signal variance (σ²), Length-scale (l) | Once differentiable, less smooth than RBF. | Realistic physical/chemical processes where response is not infinitely smooth. More robust to noise. | Shields et al. (2021), Nature (reaction optimization benchmark) |

| Matérn (ν=5/2): k(x,x') = σ² (1 + √5 r/l + 5r²/(3l²)) exp(-√5 r/l), where r = ‖x-x'‖ | Signal variance (σ²), Length-scale (l) | Twice differentiable. | A balanced, often recommended default for chemical data. | Reizman et al. (2016), React. Chem. Eng. (flow chemistry BO) |

| Rational Quadratic (RQ): k(x,x') = σ² (1 + ‖x-x'‖² / (2α l²))^(-α) | Signal variance (σ²), Length-scale (l), Scale mixture (α) | Flexible, can model multi-scale variations. | Complex landscapes with variations at different length-scales (e.g., mixed catalytic systems). | Hase et al. (2019), Trends Chem. (autonomous platforms) |

| Linear: k(x,x') = σb² + σv² (x·x') | Bias variance (σb²), Variance (σv²) | Models linear trends. | Often combined with others to capture global linear trends in data. | N/A (standard kernel) |

| Periodic: k(x,x') = σ² exp(-2 sin²(π‖x-x'‖/p) / l²) | Signal variance (σ²), Length-scale (l), Period (p) | Strictly periodic functions. | Rare for standard conditions. Potential for oscillatory phenomena in sequential reactions. | N/A (standard kernel) |

Note: Composite kernels (sums and products of the above) are frequently used to model complex structure.
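To make the formulas in Table 1 concrete, the sketch below implements the stationary kernels as plain functions of the distance r = ‖x-x'‖, plus a helper for per-dimension (ARD) length-scales. The function names are illustrative and do not correspond to any library API.

```python
import math

def rbf(r, ls=1.0, var=1.0):
    return var * math.exp(-0.5 * (r / ls) ** 2)

def matern32(r, ls=1.0, var=1.0):
    a = math.sqrt(3.0) * r / ls
    return var * (1.0 + a) * math.exp(-a)

def matern52(r, ls=1.0, var=1.0):
    a = math.sqrt(5.0) * r / ls
    return var * (1.0 + a + a * a / 3.0) * math.exp(-a)  # a^2/3 = 5 r^2 / (3 l^2)

def rational_quadratic(r, ls=1.0, var=1.0, alpha=1.0):
    return var * (1.0 + r * r / (2.0 * alpha * ls * ls)) ** (-alpha)

def ard_distance(x, x2, lengthscales):
    """Euclidean distance after dividing each dimension by its own length-scale.
    Pass the result to a kernel with ls=1.0."""
    return math.sqrt(sum(((a - b) / l) ** 2 for a, b, l in zip(x, x2, lengthscales)))

# Correlation at unit distance: smoother kernels decay more slowly here.
for name, k in [("RBF", rbf), ("Matern-5/2", matern52), ("Matern-3/2", matern32)]:
    print(f"{name:11s} k(r=1) = {k(1.0):.4f}")

# A composite (sum) kernel is simply the sum of two kernel values.
r = ard_distance([0.2, 0.5, 0.1], [0.4, 0.5, 0.9], lengthscales=[0.3, 1.0, 0.5])
print("RBF + Matern-5/2 at ARD distance:", round(rbf(r) + matern52(r), 4))
```

As α grows, the Rational Quadratic kernel approaches the RBF kernel, which is why it is often described as a scale mixture of RBF kernels.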

Title: Decision Flow for GP Kernel Selection in Chemistry

Experimental Protocol: Kernel Implementation & Tuning for a Reaction Yield BO

This protocol outlines steps for a BO campaign optimizing a Pd-catalyzed cross-coupling reaction yield over three continuous variables.

Protocol 3.1: Initial Kernel Selection and Model Setup

- Define Search Space: For example: Catalyst loading (0.5-2.0 mol%), Temperature (50-120 °C), Reaction time (1-24 hours). Normalize all dimensions to [0, 1].

- Acquire Initial Data: Using a space-filling design (e.g., Latin Hypercube), conduct 5-10 initial experiments. Record yields (y).

- Standardize Data: Center yields to zero mean: y_standardized = y - mean(y).

- Select Initial Kernel: Based on Table 1 and the decision flow, start with a Matérn (ν=5/2) kernel. Assume a separate length-scale for each dimension (ARD=True).

- Construct GP Model: Use a GP implementation (e.g., GPyTorch, scikit-learn). Use a ZeroMean function and a GaussianLikelihood (to model homoscedastic noise). The full kernel is: Kernel = Matérn-5/2 (lengthscales=[l_cat, l_temp, l_time]).

- Set Hyperparameter Priors (Bayesian Tuning): Apply weakly informative priors to regularize optimization:

- For length-scales: Set a GammaPrior(concentration=2.0, rate=0.5). This discourages extremely small or large values.

- For output scale (σ²): Set a GammaPrior(concentration=2.0, rate=0.1).

- For noise variance (σ_n²): Set a GammaPrior(concentration=1.5, rate=5.0).
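To see what these priors imply, the sketch below evaluates the Gamma log-density directly and reports each prior's mode, (concentration - 1) / rate, and mean, concentration / rate. During MAP-style tuning, the summed log-prior would be added to the marginal log likelihood. The `PRIORS` dictionary and helper names are illustrative; in GPyTorch the equivalent objects are typically created with `gpytorch.priors.GammaPrior`.

```python
import math

def gamma_log_pdf(x, concentration, rate):
    """Log-density of a Gamma(concentration, rate) distribution at x > 0."""
    return (concentration * math.log(rate) - math.lgamma(concentration)
            + (concentration - 1.0) * math.log(x) - rate * x)

# (concentration, rate) pairs from the protocol above.
PRIORS = {
    "lengthscale": (2.0, 0.5),
    "outputscale": (2.0, 0.1),
    "noise": (1.5, 5.0),
}

def log_prior(hypers):
    """Sum of log-prior densities; add this to the MLL for a MAP objective."""
    return sum(gamma_log_pdf(hypers[name], *PRIORS[name]) for name in PRIORS)

for name, (conc, rate) in PRIORS.items():
    mode = (conc - 1.0) / rate
    mean = conc / rate
    print(f"{name:12s} mode = {mode:.2f}, mean = {mean:.2f}")

print("log-prior at the modes:",
      round(log_prior({"lengthscale": 2.0, "outputscale": 10.0, "noise": 0.1}), 3))
```

Checking the modes against the scale of the data is a useful sanity step: a length-scale prior centered far from the normalized input range, for instance, will pull the fit toward overly smooth or overly wiggly models.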

Protocol 3.2: Hyperparameter Optimization & Model Training

- Objective: Maximize the Marginal Log Likelihood (MLL) of the data given the hyperparameters: log p(y | X, θ).

- Procedure: a. Initialize hyperparameters (e.g., all length-scales = 1.0). b. Using an optimizer (e.g., L-BFGS-B, Adam), perform gradient ascent on the MLL for 100-200 iterations. c. For a more robust search, perform this from 5-10 different random initializations and select the hyperparameter set with the highest MLL.

- Diagnostics: Check convergence (MLL curve plateauing). Examine learned length-scales: a very long length-scale implies low sensitivity; a very short one implies high sensitivity/non-stationarity.

Protocol 3.3: Iterative Refinement During BO Loop

- After each new experiment (or batch), update the GP by re-running Protocol 3.2.

- Monitor predictive performance on held-out initial data (e.g., via standardized mean squared error).

- If BO performance is poor (e.g., slow convergence, bad predictions):

a. Switch Kernel: Change from Matérn-5/2 to Matérn-3/2 if the landscape appears rough.

b. Add a Linear Kernel: Form a new composite: Linear() + Matérn-5/2() if a global drift is observed.

c. Use a Different Likelihood: For non-Gaussian noise (e.g., bounded yield data), consider a BetaLikelihood.

Title: GP Kernel Tuning and BO Iteration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for GP Kernel Implementation in Chemical BO

| Item / Software | Function in Kernel Tuning | Example/Note |

|---|---|---|

| GPyTorch Library | Flexible, GPU-accelerated GP framework. Enables custom kernel design and modern optimizer use. | Preferred for research due to modularity. |

| scikit-learn GaussianProcessRegressor | Robust, user-friendly API for standard kernels and MLL optimization. | Ideal for rapid prototyping. |

| BoTorch Library | Built on GPyTorch, provides state-of-the-art BO loops, batch acquisition functions, and composite kernel support. | Recommended for full BO integration. |

| Gamma Prior Distributions | Regularizes hyperparameter optimization, preventing overfitting to small initial datasets. | Use gpytorch.priors.GammaPrior in GPyTorch. |

| L-BFGS-B Optimizer | Quasi-Newton method for efficient, deterministic MLL maximization. | Standard for low-dimensional hyperparameter spaces. |

| Adam Optimizer | Stochastic gradient descent variant. Useful for large models or many random restarts. | Exposed through BoTorch (not GPyTorch itself) as fit_gpytorch_torch in older releases and fit_gpytorch_mll_torch in newer ones. |

| ARD (Automatic Relevance Determination) | Uses a separate length-scale per input dimension. Identifies irrelevant variables. | Critical for high-dimensional chemical spaces. |

| Composite Kernel (Sum) | Models superposition of different effects (e.g., Linear + Periodic). | ScaleKernel(Linear()) + ScaleKernel(RBF()). |

| Composite Kernel (Product) | Models interaction between different effects. | RBF(active_dims=[0]) * Periodic(active_dims=[1]). |

Within a Bayesian optimization (BO) framework for chemical reaction optimization, the acquisition function is the decision-making engine. It balances exploration (probing uncertain regions of the parameter space) and exploitation (refining known high-performing regions) to propose the next experiment. This protocol details the application and selection of two predominant functions—Expected Improvement (EI) and Upper Confidence Bound (UCB)—within drug development research, specifically for reaction condition optimization.

Quantitative Comparison of Acquisition Functions

Table 1: Core Characteristics of EI and UCB for Reaction Optimization

| Feature | Expected Improvement (EI) | Upper Confidence Bound (UCB) |

|---|---|---|

| Mathematical Formulation | EI(x) = E[max(0, f(x) - f(x*) - ξ)], where f(x*) is the best observed value | UCB(x) = μ(x) + κ * σ(x) |

| Key Parameter | ξ (Exploration-exploitation trade-off) | κ (Exploration weight) |

| Primary Strength | Directly targets improvement over best-observed. Provably convergent. | Explicit, tunable balance via κ. Intuitive interpretation. |

| Primary Weakness | Can be overly greedy with small ξ; sensitive to posterior mean scaling. | Requires careful manual or heuristic scheduling of κ. |

| Best Suited For | Final-stage optimization, constrained experimental budgets, maximizing yield quickly. | Early-stage screening, when broad exploration is paramount, multi-fidelity settings. |

| Common Defaults in Chemistry | ξ = 0.01 (low noise) to 0.1 (higher noise) | κ decreasing schedule (e.g., from 2.0 to 0.1) or fixed at 2.0-3.0. |

Table 2: Performance Metrics from Recent Studies (2023-2024)

| Study (Focus) | Acquisition Functions Tested | Key Finding (Mean ± Std Dev) |

|---|---|---|

| Palladium-Catalyzed Cross-Coupling (Yield Max.) | EI, UCB, Probability of Improvement | EI (ξ=0.05) found optimal conditions in 14 ± 3 iterations, vs. UCB (κ=2) in 18 ± 4 iterations. |

| Enzymatic Asymmetric Synthesis (Enantioselectivity) | EI, UCB, Thompson Sampling | UCB (κ=2.5) identified >99% ee in 22 ± 5 runs, outperforming EI which converged to local optimum (95% ee). |

| Flow Chemistry Reaction (Space-Time Yield) | EI, GP-UCB, Random | GP-UCB (decaying κ) achieved 90% of max STY in 30% fewer experiments than standard EI. |

Experimental Protocol: Implementing EI vs. UCB in a Reaction Optimization Loop

Protocol 1: Setting Up the Bayesian Optimization Experiment

- Objective: Maximize reaction yield (%) of a novel small-molecule kinase inhibitor intermediate.

- Parameters: 3 continuous variables (Temperature: 25-100°C, Catalyst Loading: 0.5-5.0 mol%, Reaction Time: 1-24 hours).

- Initial Design: 12 experiments via Latin Hypercube Sampling (LHS).

- Surrogate Model: Gaussian Process (GP) with Matérn 5/2 kernel.

- Acquisition Function Comparison Arm A: Expected Improvement (ξ = 0.05).

- Acquisition Function Comparison Arm B: Upper Confidence Bound (κ = 2.0).

- Budget: 40 total experiments per arm (including initial 12).

- Tools: Python with BoTorch or GPyOpt library; automated reactor platform.

Protocol 2: Iterative Experimentation and Evaluation Cycle

- Initialization: Run the 12 LHS-designed reactions, record yields.

- Model Training: Train separate GP models on cumulative data for Arm A and Arm B.

- Acquisition Maximization:

- For Arm A (EI): Compute EI(x) over the parameter space. Identify x_next = argmax(EI(x)).

- For Arm B (UCB): Compute UCB(x) = μ(x) + 2.0 * σ(x). Identify x_next = argmax(UCB(x)).

- Experiment Execution: Execute each arm's proposed reaction x_next in parallel using an automated reactor array.

- Data Augmentation: Append the new (x_next, y_next) result to the respective dataset.

- Iteration: Repeat steps 2-5 until the total experiment budget (40) is reached.

- Analysis: Compare the convergence rate (yield vs. iteration) and final best yield achieved by each arm.
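Both acquisition functions have closed forms once the GP posterior mean μ(x) and standard deviation σ(x) are known. The sketch below uses the standard Gaussian-posterior expression for EI with the ξ offset and the UCB formula from the protocol. The two candidate conditions and their posterior values are hypothetical, chosen to show that the two arms can rank the same candidates differently. Note that ξ is expressed in the same units as the modeled target, so its effect depends on how yields are scaled.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.05):
    """Closed-form EI for maximization under a Gaussian posterior."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * normal_cdf(z) + sigma * normal_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma

best_yield = 80.0  # best yield observed so far (hypothetical)
candidates = {
    "A (near current best)": (82.0, 1.0),  # (posterior mean, posterior std)
    "B (unexplored region)": (78.0, 6.0),
}

for name, (mu, sigma) in candidates.items():
    ei = expected_improvement(mu, sigma, best_yield)
    ucb = upper_confidence_bound(mu, sigma)
    print(f"{name}: EI = {ei:.3f}, UCB = {ucb:.1f}")
```

With these numbers EI favors candidate A, whose mean already exceeds the incumbent, while UCB with κ = 2.0 favors the more uncertain candidate B.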

Visual Workflows

Title: Bayesian Optimization Loop for Reaction Screening

Title: How EI and UCB Use the GP Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bayesian Optimization-Driven Reaction Screening

| Item | Function in the Workflow | Example/Notes |

|---|---|---|

| Automated Parallel Reactor | Enables high-throughput execution of proposed experiments from the BO loop. | Chemspeed, Unchained Labs, or homemade array systems. |

| Liquid Handling Robot | For precise, reproducible dispensing of catalysts, ligands, and substrates. | Integrates with reactor platform for closed-loop automation. |

| Online Analytical | Provides immediate feedback (yield, conversion) for data augmentation. | HPLC, UPLC, or ReactIR coupled to the reaction array. |

| Bayesian Optimization | Core software for GP modeling and acquisition function computation. | BoTorch (PyTorch-based), GPyOpt, or custom Python scripts. |

| Chemical Databases | Informs prior distributions for GP models or initial design space. | Reaxys, SciFinder; used to set plausible parameter ranges. |

| Standard Substrate/Catalyst Kits | Ensures consistency and reproducibility across numerous experimental runs. | Commercially available diversity-oriented screening libraries. |

Within the broader thesis on Bayesian Optimization (BO) for reaction condition optimization in machine learning-driven research, Step 5 represents the core iterative engine. This step encapsulates the closed-loop cycle where theoretical models interface with empirical laboratory science. For drug development professionals, this phase is critical for accelerating the discovery of optimal synthetic routes, catalyst formulations, or bioprocessing conditions while minimizing costly and time-consuming experimentation. The BO loop systematically balances exploration of uncharted condition spaces with exploitation of known promising regions, a paradigm shift from traditional one-factor-at-a-time (OFAT) or statistical design of experiments (DoE) approaches.

The BO Loop: Detailed Components

Run Experiment

The first action in the loop is the execution of a physical or in silico experiment at a condition proposed by the acquisition function (from Step 4). The outcome, typically a yield, selectivity, or other performance metric, is measured with high fidelity.

Protocol 2.1.1: Executing a Chemical Reaction for BO Input

- Objective: To reliably generate the target response variable (e.g., reaction yield) for a given set of condition parameters (e.g., temperature, concentration, catalyst loading).

- Materials: See "The Scientist's Toolkit" (Section 5).

- Procedure:

- Condition Setup: In a controlled environment (e.g., glovebox for air-sensitive reactions), prepare the reaction vessel according to the specified parameters from the BO algorithm (e.g., set reactor temperature to 85°C).

- Reagent Addition: Sequentially add reagents following the order specified in the generic reaction scheme. Use precise analytical balances and calibrated pipettes.

- Reaction Monitoring: Initiate the reaction (e.g., by stirring). Monitor progress over time using an appropriate analytical method (e.g., in-situ FTIR, periodic sampling for UPLC analysis).

- Quenching & Work-up: At the predetermined reaction time, quench the reaction using a specified method (e.g., rapid cooling, addition of a quenching agent).

- Product Isolation & Analysis: Perform standard work-up (extraction, filtration) and purification (e.g., preparatory HPLC or flash chromatography) as required. Analyze the purified product via quantitative NMR (qNMR) or UPLC with diode array detection (DAD) against a calibrated standard to determine exact yield and purity.

- Data Recording: Document all raw analytical data (chromatograms, spectra) and calculate the final performance metric. Record any observed anomalies.

Update Model

The new experimental datum (condition x_new, outcome y_new) is appended to the historical dataset: D ← D ∪ {(x_new, y_new)}. The Gaussian Process (GP) surrogate model is then retrained on this expanded dataset.

Protocol 2.2.1: Retraining the Gaussian Process Surrogate Model

- Objective: To update the probabilistic model of the objective function f(x), incorporating the latest experimental result.

- Inputs: Historical dataset D (now updated), choice of kernel function k(x, x'), prior mean function (often zero).

- Software Tools: Python libraries (GPyTorch, scikit-learn, BoTorch) or commercial platforms (Siemens PSE gPROMS, Synthia).

- Procedure:

- Data Preprocessing: Normalize the updated inputs X and target values y to zero mean and unit variance to improve model numerical stability.

- Kernel Hyperparameter Optimization: Maximize the log marginal likelihood of the GP with respect to the kernel hyperparameters (e.g., length scales, output variance). This is typically done via gradient-based optimizers (e.g., L-BFGS-B).

- Equation: log p(y|X) = -½ y^T K_y^{-1} y - ½ log |K_y| - (n/2) log(2π), where K_y = K(X, X) + σ_n²I.

- Model Re-instantiation: Recompute the posterior distribution of f using the optimized hyperparameters. The posterior at any point x* is Gaussian with updated mean μ(x*) and variance σ²(x*).

- Output: A refreshed GP model that now reflects information from all experiments conducted to date.
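The retraining steps in Protocol 2.2.1 can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used by any of the libraries named above: the temperature and yield values are hypothetical, a squared-exponential kernel stands in for whichever kernel is chosen, the signal and noise variances are fixed by assumption, and a plain grid search over the length scale replaces the gradient-based optimizer (e.g., L-BFGS-B) purely to keep the example short.

```python
import numpy as np

def rbf_kernel(A, B, length_scale, signal_var):
    """Squared-exponential kernel k(x, x') between rows of A and rows of B."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return signal_var * np.exp(-0.5 * sq_dist / length_scale**2)

def log_marginal_likelihood(X, y, length_scale, signal_var, noise_var):
    """log p(y|X) = -1/2 y^T K_y^-1 y - 1/2 log|K_y| - (n/2) log(2 pi)."""
    n = len(y)
    K_y = rbf_kernel(X, X, length_scale, signal_var) + noise_var * np.eye(n)
    L = np.linalg.cholesky(K_y)                           # K_y = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))   # K_y^-1 y
    log_det = 2.0 * np.log(np.diag(L)).sum()              # log|K_y|
    return -0.5 * y @ alpha - 0.5 * log_det - 0.5 * n * np.log(2.0 * np.pi)

def posterior(X, y, X_star, length_scale, signal_var, noise_var):
    """Posterior mean and variance of f at the rows of X_star (zero prior mean)."""
    K_y = rbf_kernel(X, X, length_scale, signal_var) + noise_var * np.eye(len(y))
    K_s = rbf_kernel(X, X_star, length_scale, signal_var)
    mean = K_s.T @ np.linalg.solve(K_y, y)
    var = signal_var - np.sum(K_s * np.linalg.solve(K_y, K_s), axis=0)
    return mean, np.maximum(var, 0.0)  # clip tiny negative values from round-off

# Hypothetical data: temperature (deg C) -> yield (%), including the newest point.
X_raw = np.array([[60.0], [70.0], [80.0], [90.0], [100.0]])
y_raw = np.array([35.0, 58.0, 74.0, 69.0, 41.0])

# Step 1: normalize inputs and targets to zero mean and unit variance.
x_mean, x_std = X_raw.mean(axis=0), X_raw.std(axis=0)
y_mean, y_std = y_raw.mean(), y_raw.std()
X, y = (X_raw - x_mean) / x_std, (y_raw - y_mean) / y_std

# Step 2: pick the length scale that maximizes the log marginal likelihood.
SIGNAL_VAR, NOISE_VAR = 1.0, 1e-2   # fixed by assumption, not fitted
length_scales = np.logspace(-1, 1, 25)
lml = np.array([log_marginal_likelihood(X, y, ls, SIGNAL_VAR, NOISE_VAR)
                for ls in length_scales])
best_ls = float(length_scales[np.argmax(lml)])

# Step 3: recompute the posterior at a new condition and undo the normalization.
X_star = (np.array([[85.0]]) - x_mean) / x_std
mu_std, var_std = posterior(X, y, X_star, best_ls, SIGNAL_VAR, NOISE_VAR)
mu = mu_std * y_std + y_mean
sigma = np.sqrt(var_std) * y_std
print(f"length scale = {best_ls:.3f}, mu(85 C) = {mu[0]:.1f} %, sigma = {sigma[0]:.1f} %")
```

The Cholesky factorization is used instead of an explicit matrix inverse because it yields both K_y^{-1} y and log |K_y| from one decomposition. In practice, a library such as scikit-learn or BoTorch would typically handle the hyperparameter fit.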

Recommend Next Condition

The updated GP model's posterior distribution is used by the acquisition function α(x) to compute the utility of sampling each point in the design space. The point maximizing α(x) is selected as the next condition to test.

Protocol 2.3.1: Maximizing the Acquisition Function for Next Experiment Selection

- Objective: To identify the single most informative condition x_next to evaluate in the subsequent iteration.

- Inputs: Updated GP model (mean μ(x) and variance σ²(x) functions), choice of acquisition function (e.g., Expected Improvement - EI), search space constraints.

- Procedure:

- Acquisition Function Calculation: Evaluate α(x) over the entire bounded search space. For EI:

- Equation: EI(x) = (μ(x) - f(x^+) - ξ) Φ(Z) + σ(x) φ(Z), where Z = (μ(x) - f(x^+) - ξ) / σ(x), f(x^+) is the best observed value, Φ and φ are the CDF and PDF of the standard normal distribution, and ξ is a small exploration parameter.

- Global Optimization: Solve x_next = argmax_x α(x). This is performed using an internal optimizer (e.g., multi-start gradient-based optimization, DIRECT), which is affordable because α(x) is cheap to evaluate.

- Constraint Validation: Ensure x_next satisfies all practical and safety constraints (e.g., solvent boiling points, equipment limits).

- Output: A vector x_next specifying the recommended condition for the next experiment, which is then fed back to "2.1. Run Experiment."
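The EI formula and the argmax step of Protocol 2.3.1 can be illustrated with the standard library alone. The sketch below is an assumption-laden example rather than a production optimizer: the candidate conditions, their posterior means and standard deviations, the `is_feasible` helper, and the 100 °C limit are all hypothetical, and a finite candidate list stands in for the continuous search an internal optimizer would perform.

```python
import math

def expected_improvement(mu, sigma, f_best, xi=0.01):
    """EI(x) = (mu - f_best - xi) * Phi(Z) + sigma * phi(Z), for maximization."""
    improvement = mu - f_best - xi
    if sigma <= 0.0:
        # Limit of the formula as sigma -> 0: no uncertainty is left.
        return max(improvement, 0.0)
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))          # Phi(Z)
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)   # phi(Z)
    return improvement * cdf + sigma * pdf

def is_feasible(candidate, max_temp_c=100.0):
    """Hypothetical safety constraint: stay at or below a temperature limit."""
    return candidate["temperature_c"] <= max_temp_c

# Hypothetical GP posterior (mean and standard deviation, in % yield) at three conditions.
candidates = [
    {"temperature_c": 70.0, "mu": 80.0, "sigma": 2.0},
    {"temperature_c": 85.0, "mu": 88.0, "sigma": 6.0},
    {"temperature_c": 110.0, "mu": 95.0, "sigma": 8.0},  # violates the limit
]
f_best = 91.0  # best observed yield so far

# Filter by the constraints, then pick the feasible candidate with the highest EI.
feasible = [c for c in candidates if is_feasible(c)]
x_next = max(feasible, key=lambda c: expected_improvement(c["mu"], c["sigma"], f_best))
print("next temperature (deg C):", x_next["temperature_c"])
```

Here the constraints are applied before the argmax rather than validated afterwards. Both orderings are possible, but filtering first avoids recommending a point that must then be discarded. Note also that the 85 °C candidate is selected even though its mean lies below the best observed yield, because its larger uncertainty leaves room for improvement.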

Data Presentation: Representative BO Loop Iteration Data

Table 3.1: Iterative Data from a BO Campaign for a Pd-Catalyzed Cross-Coupling Yield Optimization

| Iteration | Temperature (°C) | Catalyst Loading (mol%) | Base (equiv) | Ligand Type | Observed Yield (%) | Acquisition Value (EI) | Best Yield to Date (%) |
|---|---|---|---|---|---|---|---|
| 0 (Seed) | 80 | 2.0 | 2.0 | Biarylphosphine | 45 | - | 45 |
| 1 | 95 | 1.5 | 1.5 | N-Heterocyclic Carbene | 12 | 0.15 | 45 |
| 2 | 105 | 0.5 | 3.0 | Monophosphine | 78 | 0.82 | 78 |
| 3 | 70 | 2.5 | 2.5 | Biarylphosphine | 65 | 0.04 | 78 |
| 4 | 90 | 1.0 | 2.0 | N-Heterocyclic Carbene | 91 | 0.91 | 91 |
| 5 | 85 | 0.8 | 2.2 | N-Heterocyclic Carbene | 89 | 0.01 | 91 |

Note: The best yield to date improves at Iterations 2 and 4. The acquisition value drops to 0.01 after Iteration 4, suggesting convergence near the optimum.
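The "Best Yield to Date" column of Table 3.1 is simply a running maximum of the observed yields. The short sketch below recomputes it from the table's yield column and lists the iterations at which the incumbent improved; the variable names are illustrative only.

```python
from itertools import accumulate

iterations = [0, 1, 2, 3, 4, 5]
observed_yield = [45, 12, 78, 65, 91, 89]   # "Observed Yield (%)" column of Table 3.1

# Running maximum reproduces the "Best Yield to Date (%)" column.
best_to_date = list(accumulate(observed_yield, max))

# An iteration improves the incumbent when its yield beats the previous best.
improving = [
    it
    for it, y, previous_best in zip(iterations[1:], observed_yield[1:], best_to_date[:-1])
    if y > previous_best
]
print(best_to_date, improving)
```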

Visualizations

Diagram: BO Loop High-Level Workflow

Diagram: Model Update & Next Point Selection

The Scientist's Toolkit: Key Research Reagent Solutions

Table 5.1: Essential Materials for BO-Driven Reaction Optimization

| Item | Function & Relevance to BO | Example Product/Catalog Number |
|---|---|---|
| Automated Parallel Reactor | Enables high-throughput, simultaneous execution of multiple reaction conditions (x_next candidates) with precise control over temperature, stirring, and pressure. Critical for rapid BO iteration. | Chemspeed Swing, Unchained Labs Big Kahuna |
| Liquid Handling Robot | Automates precise dispensing of variable reagent amounts (catalyst, ligand, base) as dictated by BO-suggested continuous parameters, minimizing human error. | Hamilton MICROLAB STAR, Opentrons OT-2 |
| In-situ Reaction Monitor | Provides real-time kinetic data (y vs. time), allowing for dynamic termination or richer data (e.g., initial rate) as the objective function for the BO loop. | Mettler Toledo ReactIR, ASI RoboSynth ATR-FTIR |
| High-Throughput UPLC/MS | Rapidly quantifies yield and identifies byproducts for multiple reaction samples in parallel, generating the y_new for the data set. | Waters Acquity UPLC H-Class, Agilent InfinityLab LC/MSD |
| GP/BO Software Platform | Provides the algorithmic backbone for model updating and next-point recommendation, often integrated with laboratory hardware. | BoTorch (Python), gPROMS (Siemens), Seeq |
| Chemical Inventory Database | Tracks stock levels and metadata for all reagents, enabling automated planning and preventing failed experiments due to material shortages. | Benchling ELN, Titian Mosaic |
| Parameter Constraint Library | A digital list of hard bounds (e.g., solvent boiling points, catalyst solubility) to ensure BO only recommends physically plausible conditions. | Custom SQL/Python database integrated with the BO algorithm |

This application note details a case study on the machine learning (ML)-guided optimization of a Suzuki-Miyaura cross-coupling reaction, a pivotal step in synthesizing a key intermediate for a Bruton’s Tyrosine Kinase (BTK) inhibitor candidate. The work is situated within a broader thesis employing Bayesian optimization (BO) for the autonomous discovery of complex pharmaceutical reaction conditions. The primary challenge addressed is the simultaneous maximization of yield and minimization of a critical aryl boronic acid homocoupling side product.

Bayesian Optimization Framework and Experimental Design

The BO loop was designed to optimize four continuous variables: catalyst loading (PdCl2(dppf)), ligand-to-palladium ratio, base equivalents (K3PO4), and reaction temperature. The objective function was a custom composite score that penalizes homocoupling five-fold relative to yield: Score = Yield (%) - 5 × Homocoupling Area Percent (%).

A Gaussian Process (GP) surrogate model with a Matérn kernel was used to model the reaction landscape. For each iteration, the Expected Improvement (EI) acquisition function proposed the next set of conditions for experimental validation.

Table 1: Key Experimental Results from BO-Guided Optimization Campaign

| Experiment | Pd Loading (mol%) | L:Pd Ratio | Base (equiv) | Temp (°C) | Yield (%) | Homocoupling (%) | Composite Score |
|---|---|---|---|---|---|---|---|
| Initial DoE (Avg) | 1.0 | 2.0 | 2.0 | 80 | 65.2 | 8.5 | 22.7 |
| BO Iteration 5 | 0.75 | 1.5 | 2.5 | 70 | 78.5 | 4.2 | 57.5 |
| BO Iteration 12 (Optimal) | 0.5 | 1.2 | 3.0 | 65 | 92.1 | 1.8 | 83.1 |
| Final Validation | 0.5 | 1.2 | 3.0 | 65 | 91.8 | 1.7 | 83.3 |
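As a quick consistency check, the composite score defined for this campaign (Score = Yield - 5 × Homocoupling) can be recomputed from the yield and homocoupling columns of Table 1. The function name and the tolerance of 0.05 (half of the last reported digit) are choices made for this sketch.

```python
import math

def composite_score(yield_pct, homocoupling_pct, penalty=5.0):
    """Score = Yield (%) - penalty x Homocoupling Area Percent (%)."""
    return yield_pct - penalty * homocoupling_pct

# (yield %, homocoupling %, reported composite score), taken from Table 1.
table_1 = {
    "Initial DoE (Avg)": (65.2, 8.5, 22.7),
    "BO Iteration 5": (78.5, 4.2, 57.5),
    "BO Iteration 12 (Optimal)": (92.1, 1.8, 83.1),
    "Final Validation": (91.8, 1.7, 83.3),
}

for name, (yield_pct, homo_pct, reported) in table_1.items():
    score = composite_score(yield_pct, homo_pct)
    status = "matches" if math.isclose(score, reported, abs_tol=0.05) else "differs from"
    print(f"{name}: computed {score:.1f}, {status} reported {reported}")
```

Because of the five-fold penalty, a one-point reduction in homocoupling is worth as much to the optimizer as a five-point gain in yield. This is why the final validation run scores slightly higher than Iteration 12 despite a marginally lower yield.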

Table 2: Comparison of Optimization Methods for Final Reaction Conditions

| Optimization Method | Avg. Yield (%) | Avg. Homocoupling (%) | Number of Experiments Required |
|---|---|---|---|
| Traditional OFAT | 85.3 | 3.5 | 32+ |
| Full Factorial DoE | 88.5 | 2.8 | 81 |
| Bayesian Optimization | 92.1 | 1.8 | 15 |

Detailed Experimental Protocols

Protocol 1: General Procedure for ML-Guided Suzuki-Miyaura Cross-Coupling

Materials: See Scientist's Toolkit below.

Procedure:

- Under a nitrogen atmosphere, charge a microwave vial with the aryl bromide substrate (1.0 equiv, 0.2 mmol scale), aryl boronic acid (1.3 equiv), and PdCl2(dppf) (X mol%, as per BO suggestion).

- Add the ligand (dppf, Y equiv relative to Pd, as per BO suggestion) and K3PO4 (Z equiv, as per BO suggestion).

- Evacuate and backfill with N2 (3x). Add degassed solvent mixture (1,4-dioxane/H2O, 4:1 v/v, 0.1 M concentration) via syringe.

- Seal the vial and place it in a pre-heated aluminum block at the target temperature (T °C, as per BO suggestion) with stirring for 18 hours.

- Cool to room temperature. Quench with saturated aqueous NH4Cl. Extract with ethyl acetate (3 x 5 mL).

- Dry the combined organic layers over anhydrous MgSO4, filter, and concentrate in vacuo.
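The placeholders X, Y, Z in Protocol 1 have to be converted into physical amounts before each run. The sketch below shows that arithmetic for the 0.2 mmol scale stated in the protocol. It rests on several assumptions not spelled out in the procedure: the 0.1 M concentration is taken relative to the aryl bromide, Y is interpreted as added free dppf per mole of Pd, and the 4:1 v/v solvent volumes are treated as additive. The function name and dictionary keys are illustrative.

```python
def reaction_charges(pd_mol_pct, ligand_equiv_to_pd, base_equiv,
                     scale_mmol=0.2, boronic_acid_equiv=1.3,
                     concentration_molar=0.1, dioxane_parts=4, water_parts=1):
    """Convert BO-suggested parameters into amounts for one vial (mmol and mL)."""
    pd_mmol = scale_mmol * pd_mol_pct / 100.0
    total_solvent_ml = scale_mmol / concentration_molar   # mmol / (mmol per mL)
    parts = dioxane_parts + water_parts
    return {
        "aryl_bromide_mmol": scale_mmol,
        "boronic_acid_mmol": scale_mmol * boronic_acid_equiv,
        "pd_precatalyst_mmol": pd_mmol,
        "added_dppf_mmol": pd_mmol * ligand_equiv_to_pd,   # assumed relative to Pd
        "k3po4_mmol": scale_mmol * base_equiv,
        "dioxane_ml": total_solvent_ml * dioxane_parts / parts,
        "water_ml": total_solvent_ml * water_parts / parts,
    }

# Conditions of BO Iteration 12 in Table 1: 0.5 mol% Pd, L:Pd = 1.2, 3.0 equiv base.
charges = reaction_charges(pd_mol_pct=0.5, ligand_equiv_to_pd=1.2, base_equiv=3.0)
for name, amount in charges.items():
    print(f"{name}: {amount:.4g}")
```

At this scale the catalyst charge is on the order of 0.001 mmol, which is one reason stock solutions and automated liquid handling are commonly preferred over weighing solids directly.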

Protocol 2: Quantitative Analysis by UPLC-MS

- Redissolve a precise aliquot of the crude residue in acetonitrile to a known concentration (~1 mg/mL).

- Inject onto a C18 reversed-phase UPLC column (1.7 µm, 2.1 x 50 mm).

- Employ a gradient from 5% to 95% acetonitrile in water (both containing 0.1% formic acid) over 3.5 minutes at 0.6 mL/min.

- Detect via diode array (UV at 254 nm) and mass spectrometry (ESI+).

- Calculate yield using an internal standard (dibenzyl ether) calibration curve. Quantify the homocoupling side product using its isolated standard.
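The internal-standard calculation in the last step can be sketched as follows. This is one common way to set up such a calibration, not necessarily the exact procedure used here: it assumes a linear response through the origin, assumes a known amount of internal standard was added to the entire crude mixture before sampling, and uses hypothetical calibration points and peak areas throughout.

```python
def response_factor(amount_ratios, area_ratios):
    """Least-squares slope through the origin: area_ratio = RF * amount_ratio."""
    numerator = sum(a * r for a, r in zip(amount_ratios, area_ratios))
    denominator = sum(a * a for a in amount_ratios)
    return numerator / denominator

def yield_percent(area_product, area_standard, standard_mmol, rf, theoretical_mmol):
    """Yield from the product / internal-standard peak-area ratio."""
    product_mmol = (area_product / area_standard) / rf * standard_mmol
    return 100.0 * product_mmol / theoretical_mmol

# Hypothetical calibration: n(product)/n(standard) vs. A(product)/A(standard).
amount_ratios = [0.25, 0.5, 1.0, 2.0]
area_ratios = [0.30, 0.60, 1.20, 2.40]
rf = response_factor(amount_ratios, area_ratios)

# Hypothetical sample: 0.1 mmol dibenzyl ether added to a 0.2 mmol scale reaction.
sample_yield = yield_percent(area_product=2000.0, area_standard=1000.0,
                             standard_mmol=0.1, rf=rf, theoretical_mmol=0.2)
print(f"RF = {rf:.2f}, yield = {sample_yield:.1f} %")
```

Because only the area ratio enters the calculation, the result does not depend on the exact aliquot size or injection volume, which is the main reason to use an internal standard in a high-throughput setting.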

Visualizations

Diagram 1: Bayesian Optimization Workflow for Reaction Screening

Diagram 2: Target API Synthesis Pathway with Key Coupling

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for High-Throughput Cross-Coupling Optimization

| Item | Function/Application |
|---|---|
| PdCl2(dppf) | Air-stable Pd(II) pre-catalyst; reduced in situ to the active Pd(0) species for Suzuki couplings. |
| 1,1'-Bis(diphenylphosphino)ferrocene (dppf) | Bidentate phosphine ligand; stabilizes Pd, modulates reactivity and selectivity. |
| Potassium Phosphate Tribasic (K3PO4) | Strong, non-nucleophilic base; essential for transmetalation step in Suzuki mechanism. |
| Anhydrous 1,4-Dioxane | Common, high-boiling ethereal solvent for Pd-catalyzed cross-couplings. |
| Inert Atmosphere Glovebox | For oxygen/moisture-sensitive reagent handling and vial setup. |
| Automated Liquid Handling System | Enables precise, reproducible reagent dispensing for high-throughput experimentation. |
| UPLC-MS with PDA Detector | Provides rapid, quantitative analysis of reaction conversion and impurity profile. |
| Multi-Position Parallel Reactor | Allows simultaneous execution of multiple condition variations under controlled heating/stirring. |

Integration with Robotic Flow Reactors and High-Throughput Experimentation (HTE)

Application Notes

The integration of robotic flow reactors with High-Throughput Experimentation (HTE) platforms, guided by Bayesian optimization (BO), creates a closed-loop system for autonomous reaction discovery and optimization. This synergy accelerates the exploration of chemical space for drug development by efficiently navigating multivariate parameter landscapes (e.g., temperature, residence time, stoichiometry, catalyst loading) with minimal human intervention. The robotic flow system executes experiments, HTE analytics provide rapid feedback, and a BO algorithm proposes the most informative subsequent experiments to maximize an objective (e.g., yield, selectivity).

Key Applications in Drug Development

- Rapid Screening of Cross-Coupling Conditions: Optimization of Pd-catalyzed reactions (Suzuki, Buchwald-Hartwig) for constructing complex pharmaceutical intermediates.

- Photoredox and Electrochemistry: Safe exploration of reactive intermediates and precise control of electrochemical parameters in flow.

- Heterogeneous Catalysis: Studying packed-bed reactors with online analysis to deconvolute catalyst activity and stability.

- Pharmaceutical Process Development: Accelerated route scouting and identification of optimal, scalable conditions for API synthesis.

- Biocatalysis in Flow: High-throughput optimization of enzyme-mediated transformations under continuous conditions.

Bayesian Optimization Integration