Bayesian Optimization in Drug Discovery: Accelerating Reaction Condition Screening with AI



Abstract

This article provides a comprehensive guide to Bayesian Optimization (BO) for optimizing chemical reaction conditions, tailored for researchers and development professionals in pharmaceuticals and synthetic chemistry. We explore the foundational concepts of BO as a sample-efficient global optimization strategy, contrasting it with traditional Design of Experiments (DoE). A detailed methodological breakdown covers surrogate models, acquisition functions, and experimental design. We address common implementation challenges, parallelization strategies, and constraint handling. The article concludes with validation frameworks, comparative analyses against alternative algorithms, and real-world case studies demonstrating accelerated development cycles, higher yields, and reduced experimental costs in reaction optimization and high-throughput experimentation.

What is Bayesian Optimization? Core Principles for Reaction Screening

Within the broader thesis on applying Bayesian optimization (BO) to reaction conditions research in drug development, this note provides foundational protocols. BO is a powerful strategy for optimizing expensive-to-evaluate black-box functions, such as chemical reaction yields or selectivity, with minimal experiments. It combines a probabilistic surrogate model, typically a Gaussian Process (GP), with an acquisition function to guide the search for global optima.

Core Theoretical Framework

Bayes' Theorem

The foundation of BO is Bayes' Theorem, which updates the probability for a hypothesis (e.g., the performance of untested reaction conditions) as more evidence becomes available.

Formula: P(Model|Data) = [P(Data|Model) * P(Model)] / P(Data)

Where:

- P(Model|Data): The posterior probability, i.e. our updated belief after seeing data.

- P(Data|Model): The likelihood, i.e. the probability of observing the data given the model.

- P(Model): The prior, i.e. our belief about the model before seeing data.

- P(Data): The marginal likelihood, which ensures normalization.
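As a minimal numeric illustration of the update rule, the sketch below applies Bayes' Theorem to two competing hypotheses about a ligand class. The prior and likelihood values are invented for illustration only and are not taken from any experiment.

```python
# Hypothetical example: is a ligand class "good" or "poor" for this coupling?
prior = {"good": 0.30, "poor": 0.70}        # P(Model), assumed values
likelihood = {"good": 0.80, "poor": 0.20}   # P(high yield observed | Model), assumed values

# P(Data): marginal likelihood, summed over both hypotheses
evidence = sum(prior[h] * likelihood[h] for h in prior)

# P(Model | Data): posterior belief after observing one high-yield run
posterior = {h: prior[h] * likelihood[h] / evidence for h in prior}

for hypothesis, probability in posterior.items():
    print(f"P({hypothesis} | high yield) = {probability:.3f}")
```

A single favorable observation shifts belief toward the "good" hypothesis; in BO the same logic is applied to a continuous model of the whole response surface rather than to two discrete hypotheses.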

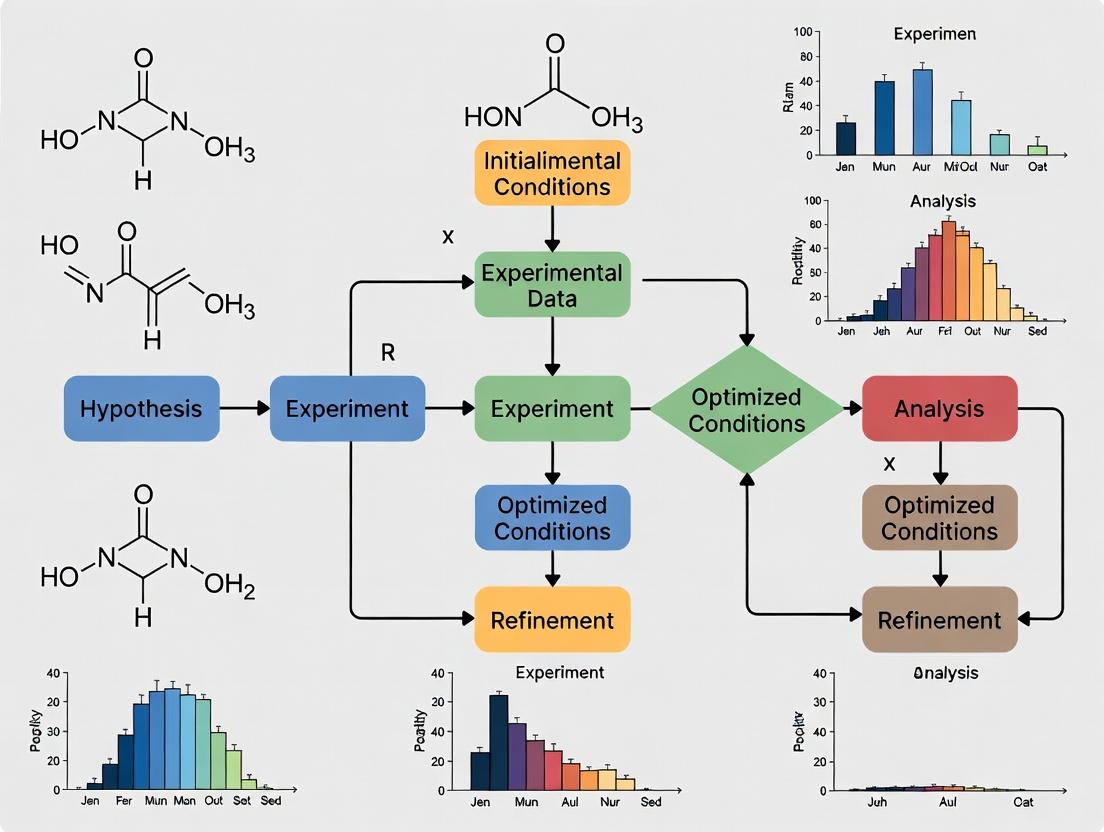

The BO Iterative Cycle

Bayesian Optimization iteratively implements this theorem through a closed-loop process.

Title: The Bayesian Optimization Iterative Cycle

Key Quantitative Comparisons: Common Acquisition Functions

The acquisition function balances exploration (trying uncertain regions) and exploitation (refining known good regions). Below is a comparison of three prevalent functions.

Table 1: Common Acquisition Functions in Bayesian Optimization

| Function (Acronym) | Formula (Simplified) | Best Use Case in Reaction Optimization |

|---|---|---|

| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x*), 0)] | General-purpose; efficiently finds the global optimum with a balance of exploration and exploitation. |

| Upper Confidence Bound (UCB/LCB) | UCB(x) = μ(x) + κ * σ(x) | When an explicit balance parameter (κ) is desired. For minimization, use the Lower Confidence Bound, LCB(x) = μ(x) - κ * σ(x). |

| Probability of Improvement (PI) | PI(x) = P(f(x) ≥ f(x*) + ξ) | Less common; can be overly exploitative, potentially getting stuck in local optima. |

Where: μ(x) = predicted mean, σ(x) = predicted standard deviation, f(x*) = current best observation, κ/ξ = tunable parameters.

Application Protocol: Optimizing a Catalytic Reaction Yield

This protocol outlines the application of BO for maximizing the yield of a Pd-catalyzed cross-coupling reaction, a common transformation in pharmaceutical synthesis.

Protocol 1: Initial Experimental Design & Setup

Objective: Establish a diverse set of initial reaction conditions to build the first surrogate model.

- Define Search Space: Identify key continuous (e.g., temperature, catalyst loading, time) and categorical (e.g., ligand type, solvent, base) variables with feasible ranges/options.

- Choose Design: Perform a space-filling design (e.g., Latin Hypercube Sampling) across the continuous variables for a fixed number of initial experiments (N=5-10). For categorical variables, assign levels systematically across the initial set.

- Execute Initial Experiments: Run reactions according to the designed conditions in randomized order to mitigate confounding factors.

- Measure Response: Quantify the primary objective (e.g., yield via HPLC) for each experiment.
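The initial design step can be sketched with SciPy's quasi-Monte Carlo module. This is a minimal sketch, not a prescribed implementation: the variable bounds, the ligand list, and the choice of nine runs are assumptions made for illustration.

```python
import numpy as np
from scipy.stats import qmc

# Assumed bounds: temperature (degC), catalyst loading (mol%), time (h)
lower = np.array([25.0, 0.5, 1.0])
upper = np.array([100.0, 5.0, 24.0])
ligands = ["SPhos", "XPhos", "DavePhos"]  # hypothetical categorical levels

n_init = 9
sampler = qmc.LatinHypercube(d=3, seed=0)
unit_samples = sampler.random(n=n_init)             # points in the unit cube
continuous = qmc.scale(unit_samples, lower, upper)  # rescale to real units

# Assign categorical levels systematically so each ligand appears equally often
ligand_column = [ligands[i % len(ligands)] for i in range(n_init)]

# Randomize the execution order to mitigate confounding with run order
rng = np.random.default_rng(0)
run_order = rng.permutation(n_init)

for run, idx in enumerate(run_order, start=1):
    temp, loading, time_h = continuous[idx]
    print(f"run {run}: {temp:.1f} degC, {loading:.2f} mol%, {time_h:.1f} h, {ligand_column[idx]}")
```

Latin Hypercube Sampling places exactly one point in each of the nine equal-width strata of every continuous variable, which is what makes a small design space-filling.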

Protocol 2: Iterative Bayesian Optimization Loop

Objective: Sequentially identify the most informative conditions to evaluate to rapidly converge on the optimum yield.

- Data Standardization: Center and scale the objective function values (yields) to have zero mean and unit variance to improve GP model stability.

- Surrogate Model Training:

  - Model: Use a Gaussian Process (GP) with a Matérn 5/2 kernel for continuous variables and a separate categorical kernel (e.g., Hamming) for discrete ones.

  - Training: Optimize the GP hyperparameters (length scales, noise variance) by maximizing the log marginal likelihood using an algorithm like L-BFGS-B.

- Acquisition Function Maximization:

  - Function: Apply Expected Improvement (EI).

  - Optimization: Use a multi-start strategy (e.g., random sampling followed by gradient-based search) to find the condition x_next that maximizes EI across the defined search space.

- Experimental Evaluation & Update:

  - Execute the reaction at the proposed condition x_next.

  - Measure the yield and add the new {condition, yield} pair to the dataset.

  - Check convergence criteria (e.g., marginal improvement <2% over last 5 iterations, or max iterations reached). If not met, return to the Surrogate Model Training step.
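The loop in Protocol 2 can be sketched end to end with scikit-learn. Everything specific here is an assumption for illustration: `simulated_yield` is a made-up stand-in for running a reaction, the two inputs are pre-scaled to [0, 1], and EI is maximized over a random candidate pool rather than by multi-start gradient search, to keep the sketch short.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel

def simulated_yield(x):
    """Hypothetical stand-in for an experiment; x holds two inputs scaled to [0, 1]."""
    temp, loading = x[..., 0], x[..., 1]
    return 90.0 * np.exp(-((temp - 0.7) ** 2 + (loading - 0.4) ** 2) / 0.08)

rng = np.random.default_rng(0)
X = rng.uniform(size=(6, 2))              # stand-in for the initial design
y = simulated_yield(X)
candidates = rng.uniform(size=(2000, 2))  # candidate pool for the acquisition step
best_history = [y.max()]

for iteration in range(20):               # max-iteration budget
    kernel = (ConstantKernel(1.0) * Matern(length_scale=[0.3, 0.3], nu=2.5)
              + WhiteKernel(noise_level=1e-3))
    # normalize_y standardizes yields; hyperparameters are fit by maximizing
    # the log marginal likelihood (scikit-learn uses L-BFGS-B by default)
    gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                                  n_restarts_optimizer=2, random_state=0)
    gp.fit(X, y)

    mu, sigma = gp.predict(candidates, return_std=True)
    improvement = mu - y.max()
    z = improvement / np.maximum(sigma, 1e-9)
    ei = improvement * norm.cdf(z) + sigma * norm.pdf(z)

    x_next = candidates[np.argmax(ei)]    # proposed condition
    y_next = simulated_yield(x_next)      # "run" the experiment
    X = np.vstack([X, x_next])
    y = np.append(y, y_next)
    best_history.append(y.max())

    # Stop when the best yield improved by < 2 percentage points over 5 iterations
    if len(best_history) > 5 and best_history[-1] - best_history[-6] < 2.0:
        break

print(f"best simulated yield after {len(y)} runs: {y.max():.1f}%")
```

In a real campaign the call to `simulated_yield` is replaced by executing and analyzing the reaction, and the candidate pool is replaced by a proper acquisition optimizer.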

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Materials for BO-Guided Reaction Optimization

| Item | Function in the BO Context |

|---|---|

| Automated Parallel Reactor System (e.g., ChemSpeed, Unchained Labs) | Enables high-throughput, reproducible execution of the initial design and subsequent BO-proposed experiments. Critical for gathering data efficiently. |

| Online/At-line Analytics (e.g., UPLC, GC-MS) | Provides rapid quantification of the reaction outcome (yield, conversion, selectivity), minimizing the loop time for the BO algorithm. |

| Bayesian Optimization Software/Libraries (e.g., BoTorch, scikit-optimize, GPyOpt) | Provides the algorithmic backbone for building GP models, calculating acquisition functions, and suggesting next experiments. |

| Chemical Variables (Search Space) (e.g., Catalyst, Ligand, Solvent libraries) | The discrete and continuous parameters that define the reaction landscape to be explored. Quality and breadth directly impact the optimization potential. |

| Databasing & LIMS Software (e.g., Electronic Lab Notebook) | Tracks all experimental inputs (conditions) and outputs (analytical results) in a structured format, essential for reliable model training. |

Advanced Considerations & Pathway Logic

For biochemical or cell-based assays common in early drug development, BO can optimize complex multi-parameter spaces where a signaling pathway is the target.

Title: BO Applied to a Signaling Pathway Intervention

This foundational guide positions Bayesian Optimization as a rigorous, data-efficient framework for reaction optimization. By integrating probabilistic models with iterative experimental design, it directly addresses the core challenge of resource-intensive experimentation in pharmaceutical research, forming a critical methodology within the overarching thesis on accelerated development workflows.

Within the broader thesis on accelerating reaction optimization for drug development, Bayesian Optimization (BO) provides a rigorous, sample-efficient framework. It addresses the critical challenge of exploring high-dimensional, resource-intensive experimental spaces—such as varying catalysts, solvents, temperatures, and concentrations—with minimal costly experiments. Two conceptual pillars underpin this framework: the Surrogate Model, which statistically approximates the unknown reaction performance landscape, and the Acquisition Function, which intelligently guides the selection of the next experiment by balancing exploration and exploitation.

The Surrogate Model: A Probabilistic Approximation

The surrogate model, typically a Gaussian Process (GP), learns from the observed experimental data to predict the performance (e.g., yield, enantiomeric excess) of untested reaction conditions and quantifies the uncertainty of its predictions.

Core Mathematical Framework

A Gaussian Process is fully defined by a mean function m(x) and a covariance (kernel) function k(x, x'). Given a set of n observed data points D = {X, y}, and assuming a zero prior mean, the posterior predictive distribution for a new input $x_*$ is Gaussian:

- Mean: $\mu(x_*) = k(x_*, X)\,[K(X,X) + \sigma_n^2 I]^{-1}\, y$

- Variance: $\sigma^2(x_*) = k(x_*, x_*) - k(x_*, X)\,[K(X,X) + \sigma_n^2 I]^{-1}\, k(X, x_*)$

where $K(X,X)$ is the n × n covariance matrix of the observed inputs and $\sigma_n^2$ is the noise variance.
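The posterior mean and variance can be computed directly with NumPy. The sketch below assumes a zero-mean GP with a squared-exponential kernel of unit signal variance; the three data points and the length-scale are made up for illustration.

```python
import numpy as np

def rbf_kernel(A, B, length_scale=1.0):
    """Squared-exponential kernel between the rows of A and the rows of B."""
    sq_dist = (np.sum(A**2, axis=1)[:, None]
               + np.sum(B**2, axis=1)[None, :]
               - 2.0 * A @ B.T)
    return np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)

def gp_posterior(X, y, X_star, noise_var=1e-4, length_scale=1.0):
    """Posterior mean and variance of a zero-mean GP at the rows of X_star."""
    K = rbf_kernel(X, X, length_scale) + noise_var * np.eye(len(X))
    K_star = rbf_kernel(X_star, X, length_scale)         # k(x*, X)
    L = np.linalg.cholesky(K)                            # K + noise = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))  # [K + noise]^-1 y
    v = np.linalg.solve(L, K_star.T)
    mean = K_star @ alpha
    var = 1.0 - np.sum(v**2, axis=0)   # k(x*, x*) = 1 for this kernel
    return mean, var

# Made-up standardized observations at three scaled conditions
X = np.array([[0.0], [0.5], [1.0]])
y = np.array([0.1, 0.8, 0.3])
X_star = np.array([[0.5], [3.0]])      # one observed point, one far away

mean, var = gp_posterior(X, y, X_star, length_scale=0.3)
print("mean:", mean, "variance:", var)
```

At the already observed condition the prediction reproduces the measurement with near-zero variance, while far from any data the prediction reverts to the prior (mean 0, variance 1). The Cholesky factorization is used instead of an explicit matrix inverse for numerical stability.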

Common Kernel Functions for Chemical Reaction Data

The choice of kernel function encodes assumptions about the smoothness and periodicity of the reaction landscape.

Table 1: Kernel Functions and Their Application in Reaction Optimization

| Kernel Name | Mathematical Form | Key Hyperparameter | Best For Reaction Condition Traits |

|---|---|---|---|

| Squared Exponential (RBF) | k(x,x') = exp(-‖x - x'‖² / (2l²)) | Length-scale l | Smooth, continuous landscapes (e.g., temperature effects). |

| Matérn 5/2 | k(x,x') = (1 + √5 r/l + 5r²/(3l²)) exp(-√5 r/l), with r = ‖x - x'‖ | Length-scale l | Less smooth, more rugged landscapes; robust default. |

| Linear | k(x,x') = σ²_b + σ²_v (x·x') | Variances σ²_b, σ²_v | Modeling linear trends in concentration or additive effects. |

Protocol: Building and Validating a GP Surrogate for Reaction Yield Prediction

Objective: Construct a GP model to predict reaction yield based on three continuous variables: Temperature (°C), Catalyst Loading (mol%), and Reaction Time (hours).

Materials & Software:

- Dataset: Historical high-throughput experimentation (HTE) results (min. 20-30 data points).

- Software: Python with libraries: scikit-learn, GPyTorch, or BoTorch.

Procedure:

- Data Preprocessing: Standardize all input variables (Temperature, Catalyst Loading, Time) to zero mean and unit variance. This ensures kernel functions treat dimensions equally.

- Kernel Selection: Initialize a composite kernel: Matérn 5/2 Kernel + Linear Kernel. The Matérn kernel captures non-linear effects, while the Linear kernel captures potential additive contributions.

- Model Training (Hyperparameter Optimization): Maximize the log marginal likelihood of the data given the model to infer kernel length-scales and noise variance. Use the L-BFGS-B optimizer.

- Model Validation: Employ Leave-One-Out Cross-Validation (LOOCV). For each data point i:

  a. Train the GP on all data except i.

  b. Predict the mean (μ_¬i) and variance (σ²_¬i) for the held-out condition i.

  c. Calculate the standardized squared error: (y_i - μ_¬i)² / σ²_¬i.

  Averaged over all held-out points, values near 1 indicate a well-calibrated model. Values well above 1 suggest the predictive variances are too small (overconfidence); values well below 1 suggest they are too large.

Expected Outcome: A trained GP model capable of providing a predictive mean yield and standard deviation for any set of conditions within the defined experimental domain.
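The surrogate-building protocol above can be sketched with scikit-learn, where `DotProduct` plays the role of the linear kernel and `WhiteKernel` models the noise variance. The data set here is synthetic (generated from an arbitrary formula) and stands in for historical HTE results; the kernel starting values are assumptions.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import DotProduct, Matern, WhiteKernel
from sklearn.model_selection import LeaveOneOut
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)
n_points = 24
# Synthetic stand-in for HTE data: temperature (degC), loading (mol%), time (h)
X_raw = np.column_stack([
    rng.uniform(25, 150, n_points),
    rng.uniform(0.5, 5.0, n_points),
    rng.uniform(1, 48, n_points),
])
y = (60 + 0.1 * X_raw[:, 0] + 4 * np.sin(X_raw[:, 1])
     - 0.2 * X_raw[:, 2] + rng.normal(0, 1.0, n_points))

def make_gp():
    """Matern 5/2 + linear kernel, with a learned noise term."""
    kernel = (Matern(length_scale=np.ones(3), nu=2.5)
              + DotProduct()
              + WhiteKernel(noise_level=0.1))
    return GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                                    n_restarts_optimizer=1, random_state=0)

standardized_sq_errors = []
for train_idx, test_idx in LeaveOneOut().split(X_raw):
    # Fit the input scaler on the training fold only to avoid leakage
    scaler = StandardScaler().fit(X_raw[train_idx])
    gp = make_gp().fit(scaler.transform(X_raw[train_idx]), y[train_idx])
    mu, sigma = gp.predict(scaler.transform(X_raw[test_idx]), return_std=True)
    standardized_sq_errors.append(((y[test_idx] - mu) ** 2 / sigma**2).item())

msse = float(np.mean(standardized_sq_errors))
print(f"mean standardized squared error: {msse:.2f}")
```

Refitting the scaler inside each fold keeps the held-out point truly unseen; with only 20-30 points this detail can noticeably change the calibration estimate.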

Diagram 1: GP Surrogate Model Training and Validation Workflow

The Acquisition Function: The Decision Engine

The acquisition function α(x) uses the surrogate's predictions to quantify the utility of evaluating a candidate condition x. The next experiment is chosen by maximizing α(x).

Common Acquisition Functions

Table 2: Comparison of Key Acquisition Functions

| Function | Mathematical Form (Simplified) | Strategy | Pros | Cons |

|---|---|---|---|---|

| Probability of Improvement (PI) | α_PI(x) = Φ((μ(x) - f(x⁺) - ξ) / σ(x)) | Exploit | Simple, focuses on beating current best. | Gets stuck in local optima. |

| Expected Improvement (EI) | α_EI(x) = (μ(x)-f(x⁺)-ξ)Φ(Z) + σ(x)φ(Z) | Balance | Strong balance; most popular. | Requires choice of trade-off ξ. |

| Upper Confidence Bound (UCB) | α_UCB(x) = μ(x) + β σ(x) | Balance | Explicit parameter β for control. | Less theoretically grounded for noise. |

| Knowledge Gradient (KG) | Complex, evaluates expected max post-update | Global | Excellent for final recommendation. | Computationally expensive. |

Where: Φ, φ are the CDF/PDF of the standard normal distribution, Z = (μ(x) - f(x⁺) - ξ) / σ(x), f(x⁺) is the current best observation, and ξ/β are exploration parameters.
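The closed-form expressions for PI, EI, and UCB are short enough to write out directly. In the sketch below the three candidate predictions (mean, standard deviation) and the incumbent value are invented to show how the functions rank candidates differently.

```python
import numpy as np
from scipy.stats import norm

def probability_of_improvement(mu, sigma, f_best, xi=0.01):
    z = (mu - f_best - xi) / sigma
    return norm.cdf(z)

def expected_improvement(mu, sigma, f_best, xi=0.01):
    z = (mu - f_best - xi) / sigma
    return (mu - f_best - xi) * norm.cdf(z) + sigma * norm.pdf(z)

def upper_confidence_bound(mu, sigma, beta=2.0):
    return mu + beta * sigma

# Hypothetical surrogate predictions (yield as a fraction) for three candidates:
# a safe small gain, a moderate gamble, and a highly uncertain condition
mu = np.array([0.80, 0.70, 0.50])
sigma = np.array([0.02, 0.10, 0.30])
f_best = 0.78  # current best observed yield (assumed)

pi = probability_of_improvement(mu, sigma, f_best)
ei = expected_improvement(mu, sigma, f_best)
ucb = upper_confidence_bound(mu, sigma)

print("PI picks candidate", int(np.argmax(pi)))
print("EI picks candidate", int(np.argmax(ei)))
print("UCB picks candidate", int(np.argmax(ucb)))
```

With these numbers PI prefers the low-risk first candidate, while EI and UCB prefer the uncertain third one, which illustrates the exploitative tendency of PI noted in the table.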

Protocol: Implementing Expected Improvement for Reaction Optimization

Objective: Select the next reaction condition to evaluate by maximizing Expected Improvement.

Materials & Software:

- Trained GP Surrogate Model (from Protocol 2.3).

- Optimization routine (e.g., L-BFGS, DIRECT, or random sampling with selection).

Procedure:

- Define Domain: Specify bounds for all reaction variables (e.g., Temp: 25-150°C, Catalyst: 0.5-5 mol%, Time: 1-48h).

- Calculate Incumbent: Identify the current best observed performance, f(x⁺) = max(y).

- Set Exploration Parameter: Set ξ = 0.01 (typical). This encourages a small amount of pure exploration.

- Optimize α_EI(x): Using a multi-start optimization strategy:

  a. Randomly sample 1000 points within the domain.

  b. Select the top 10 points with the highest α_EI as starting points.

  c. Run a gradient-based optimizer (e.g., L-BFGS-B) from each of these 10 points to find local maxima of α_EI.

  d. Select the candidate condition x_next corresponding to the largest of these local maxima.

- Execute Experiment: Run the reaction at x_next and measure the performance (e.g., yield).

- Update Dataset: Append {x_next, y_next} to the historical dataset D.

Expected Outcome: The selected experiment has a high probability of either significantly improving yield or reducing uncertainty in a promising region of the condition space.
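The multi-start maximization of EI can be sketched with SciPy and scikit-learn. The observed data are synthetic, the GP settings are assumptions, and inputs are scaled to the unit cube before modeling; note that ξ is expressed in the units of the objective (here, percent yield).

```python
import numpy as np
from scipy.optimize import minimize
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel

# Domain from the protocol: temperature (degC), catalyst (mol%), time (h)
bounds = np.array([[25.0, 150.0], [0.5, 5.0], [1.0, 48.0]])

def to_unit(x):
    return (x - bounds[:, 0]) / (bounds[:, 1] - bounds[:, 0])

def from_unit(u):
    return bounds[:, 0] + u * (bounds[:, 1] - bounds[:, 0])

rng = np.random.default_rng(0)
X_obs = from_unit(rng.uniform(size=(12, 3)))
# Synthetic yields standing in for measured data
y_obs = 80 * np.exp(-np.sum((to_unit(X_obs) - 0.6) ** 2, axis=1) / 0.2)

kernel = Matern(length_scale=np.full(3, 0.3), nu=2.5) + WhiteKernel(noise_level=1e-3)
gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True, random_state=0)
gp.fit(to_unit(X_obs), y_obs)

f_best = y_obs.max()   # incumbent
xi = 0.01              # exploration parameter

def expected_improvement(U):
    mu, sigma = gp.predict(np.atleast_2d(U), return_std=True)
    sigma = np.maximum(sigma, 1e-9)
    z = (mu - f_best - xi) / sigma
    return (mu - f_best - xi) * norm.cdf(z) + sigma * norm.pdf(z)

# a-b: sample 1000 points and keep the 10 with the highest EI as starts
samples = rng.uniform(size=(1000, 3))
starts = samples[np.argsort(expected_improvement(samples))[-10:]]

# c: local refinement from each start (gradients by finite differences)
results = [
    minimize(lambda u: -expected_improvement(u)[0], s,
             method="L-BFGS-B", bounds=[(0.0, 1.0)] * 3)
    for s in starts
]

# d: keep the best local maximum and map it back to real units
best = min(results, key=lambda r: r.fun)
x_next = from_unit(best.x)
print("next condition (degC, mol%, h):", np.round(x_next, 2))
```

The random pre-screen matters because EI is typically flat (near zero) over large parts of the domain, where a gradient-based optimizer started blindly would make no progress.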

Diagram 2: Bayesian Optimization Loop via Acquisition Maximization

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Components for a Bayesian Optimization-Driven Reaction Screen

| Item/Category | Example/Description | Function in the BO Framework |

|---|---|---|

| High-Throughput Experimentation (HTE) Kit | Pre-dispensed catalyst/substrate plates, automated liquid handlers. | Generates initial structured dataset (D) for surrogate model training rapidly and reproducibly. |

| Analytical Core | UPLC/HPLC with auto-samplers, GC-MS, inline IR/ReactIR. | Provides rapid, quantitative performance data (y) for each reaction, essential for timely model updates. |

| GP Modeling Software | BoTorch (PyTorch-based), GPyTorch, scikit-learn (GaussianProcessRegressor). | Implements surrogate model construction, training, and prediction. |

| Optimization Library | BoTorch (acquisition functions & optimizers), SciPy (optimize). | Solves the inner loop problem of maximizing the acquisition function. |

| Laboratory Automation Scheduler | Kronos, ChemSpeed software, custom Python scripts. | Manages the queue of experiments, linking the BO algorithm's output to physical execution. |

| Chemical Variables (Typical) | Catalyst/ligand library, solvent selection screen, substrate scope. | Defines the multi-dimensional search space (X) that the BO algorithm navigates. |

| Performance Metric | Isolated yield, enantiomeric excess (ee), turnover number (TON), purity. | The objective function (y) to be maximized or minimized by the BO loop. |

Application Notes

This document compares Bayesian Optimization (BO) and traditional Design of Experiments (DoE) for optimizing chemical reaction conditions, framed within a thesis on adaptive experimentation for research acceleration. The core difference lies in efficiency: Traditional DoE is a batch-based, static process, while BO is a sequential, learning-based adaptive process.

Table 1: Quantitative Comparison of DoE vs. BO for a Model Suzuki-Miyaura Cross-Coupling Optimization

| Metric | Traditional DoE (Central Composite Design) | Bayesian Optimization (Gaussian Process) | Efficiency Gain |

|---|---|---|---|

| Total Experiments Required | 30 (Full factorial + star points + center) | 15 (Sequential) | 50% reduction |

| Iterations to Optimum | 1 (All data analyzed post-hoc) | 5-7 (Sequential updates) | N/A |

| Final Yield Achieved | 87% | 92% | +5% absolute yield |

| Parameter Space Explored | Pre-defined, fixed grid | Adaptive, focuses on promising regions | More efficient exploration |

| Resource Utilization | High upfront | Lower, distributed | Significant cost/time savings |

Table 2: Key Characteristics and Best Use Cases

| Aspect | Traditional DoE | Bayesian Optimization |

|---|---|---|

| Philosophy | Map entire response surface. | Find global optimum efficiently. |

| Workflow | One-shot, parallel batch. | Sequential, informed by prior results. |

| Data Efficiency | Lower; requires many points for complex models. | High; excels with limited, expensive experiments. |

| Complexity Handling | Struggles with >5-6 factors or noisy responses. | Handles noisy responses and moderate dimensionality well; standard GP-based BO becomes harder beyond roughly 10-20 factors. |

| Best For | Screening, understanding main effects, stable processes. | Optimizing expensive-to-evaluate black-box functions (e.g., reaction yield, purity). |

Experimental Protocols

Protocol 1: Traditional DoE Workflow for Reaction Screening

- Objective: Identify significant factors (e.g., catalyst loading, temperature, solvent ratio) affecting yield.

- Design: 2-Level Fractional Factorial Design (Resolution IV).

- Define Factors & Ranges: Select 5-6 continuous factors with realistic min/max values.

- Generate Design Matrix: Use statistical software (JMP, Minitab, Design-Expert) to create a randomized run list (e.g., 16-32 experiments).

- Parallel Execution: Conduct all reactions in the matrix as a single batch, controlling conditions precisely.

- Analysis: After all data is collected, fit a linear model with interaction terms. Use ANOVA to identify statistically significant effects (p-value < 0.05).

- Validation: Run confirmation experiments at predicted optimal settings from the model.
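The design-matrix step of the DoE workflow can also be generated in code instead of dedicated statistical software. The sketch below builds a 16-run, six-factor, two-level fractional factorial (2^(6-2)) with the generators E = ABC and F = BCD, which gives Resolution IV. The factor names and ranges are hypothetical.

```python
import itertools
import numpy as np

# Full factorial in the four base factors A-D, coded as -1 / +1
base = np.array(list(itertools.product([-1, 1], repeat=4)))
A, B, C, D = base.T

# Generated factors; defining relation I = ABCE = BCDF = ADEF (Resolution IV)
E = A * B * C
F = B * C * D
design = np.column_stack([A, B, C, D, E, F])  # 16 runs x 6 factors

# Hypothetical factor names with (low, high) settings
factors = {
    "temperature_C": (40.0, 100.0),
    "catalyst_mol_pct": (0.5, 2.5),
    "base_equiv": (1.0, 3.0),
    "time_h": (2.0, 18.0),
    "concentration_M": (0.05, 0.20),
    "water_vol_pct": (0.0, 20.0),
}
low = np.array([lo for lo, _ in factors.values()])
high = np.array([hi for _, hi in factors.values()])
settings = low + (design + 1) / 2 * (high - low)  # coded -> real units

# Randomized run list
rng = np.random.default_rng(0)
run_list = settings[rng.permutation(len(settings))]

print(" | ".join(factors))
for row in run_list:
    print(" | ".join(f"{value:g}" for value in row))
```

In a Resolution IV design main effects are not aliased with two-factor interactions, but two-factor interactions are aliased with each other, so significant interaction terms from the ANOVA need follow-up runs to resolve.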

Protocol 2: Bayesian Optimization Workflow for Reaction Optimization

- Objective: Maximize reaction yield with minimal experiments.

- Define Search Space: Specify parameters (e.g., Temp: 25-100°C, Equiv. Base: 1.0-3.0) and the objective (maximize Yield% from HPLC).

- Initial Design: Run a small space-filling batch (e.g., 4-6 experiments via Latin Hypercube) to seed the model.

- Model & Acquisition: Fit a Gaussian Process (GP) surrogate model to all available data. Use an acquisition function (Expected Improvement) to compute the most promising next condition.

- Run Experiment & Update: Execute the single suggested experiment, measure yield, and add the result to the dataset.

- Iterate: Repeat the Model & Acquisition and Run Experiment & Update steps until convergence (e.g., no improvement in best yield for 3 consecutive iterations or max budget reached).

- Tools: Python libraries (scikit-optimize, BoTorch, Ax) or commercial platforms (Synthia, MITSO).

Visualizations

Title: Comparison of DoE and BO Workflow Paths

Title: Core BO Iteration Cycle

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in BO/DoE Experiments |

|---|---|

| Automated Liquid Handling Station | Enables precise, reproducible dispensing of reagents and catalysts for high-throughput parallel (DoE) or sequential (BO) runs. |

| Parallel Reactor Block | Allows simultaneous execution of multiple reaction conditions under controlled temperature and stirring (critical for DoE batch runs). |

| In-line/On-line Analytics (e.g., HPLC, FTIR) | Provides rapid quantitative yield/purity data to feed the BO algorithm or analyze DoE batches with minimal delay. |

| Chemspeed, Unchained Labs, etc. | Integrated robotic platforms that automate the entire workflow: vial preparation, reagent addition, reaction execution, quenching, and sample analysis. |

| Statistical Software (JMP, Minitab) | Used to generate traditional DoE designs and analyze the resulting full-factorial data sets. |

| BO Software Libraries (Ax, BoTorch) | Open-source Python packages that implement Gaussian Processes, acquisition functions, and optimization loops for adaptive experimentation. |

| Chemical Informatics Platforms (e.g., Synthia) | Commercial software that integrates BO algorithms with chemical knowledge and robotic hardware for fully autonomous reaction optimization. |

Bayesian Optimization (BO) is an efficient, sequential design strategy for optimizing expensive black-box functions. Within the broader thesis on Bayesian optimization for reaction conditions research, its application is pivotal for navigating complex chemical spaces with minimal experimental runs. This protocol details its ideal use cases and methodologies.

Core Application Notes

BO is most beneficial when the experimental cost—in terms of time, materials, or resources—is high, and the response surface is unknown, non-convex, and potentially noisy. It is superior to grid or random search when the number of tunable parameters is moderate (typically 2-10).

Key Characteristics of Ideal BO Use Cases:

- High-Throughput Experimentation (HTE) Integration: Optimizing conditions for HTE workflows where each "batch" of experiments is costly to set up.

- Multidimensional Optimization: Simultaneously tuning continuous (temperature, concentration), discrete (catalyst loadings), and categorical (solvent, ligand type) variables.

- Noisy or Imprecise Responses: Where yield or selectivity measurements have inherent experimental error.

- Safety or Cost Constraints: When exploring certain regions of parameter space is unsafe or prohibitively expensive, which can be encoded into the BO acquisition function.

Table 1: Comparative Performance of Optimization Methods in Reaction Yield Maximization

| Optimization Method | Avg. Experiments to Reach >90% Yield | Success Rate (%) | Best for Parameter Type |

|---|---|---|---|

| Bayesian Optimization | 15-25 | 95 | Mixed (Cont./Cat./Disc.) |

| Design of Experiments (DoE) | 30-40+ | 85 | Continuous |

| Grid Search | 50+ | 80 | Low-dimensional Continuous |

| Random Search | 35-50 | 70 | All (Inefficient) |

| Human Intuition | Highly Variable | 60 | N/A |

Table 2: Common Reaction Optimization Parameters & BO Suitability

| Parameter | Typical Range | Type | BO Suitability (High/Med/Low) |

|---|---|---|---|

| Temperature | 0°C - 150°C | Continuous | High |

| Reaction Time | 1 min - 48 hr | Continuous | High |

| Catalyst Loading | 0.1 - 10 mol% | Continuous | High |

| Equivalents of Reagent | 0.5 - 3.0 eq | Continuous | High |

| Solvent | DMSO, THF, Toluene, etc. | Categorical | High (with correct kernel) |

| Ligand | PPh3, XantPhos, etc. | Categorical | High (with correct kernel) |

| pH | 3 - 10 | Continuous | High |

| Pressure | 1 - 100 bar | Continuous | Med (if limited data) |

Experimental Protocol: BO-Driven Pd-Catalyzed Cross-Coupling Optimization

Aim: To maximize the yield of a Suzuki-Miyaura cross-coupling reaction using BO.

1. Define Parameter Space & Objective:

- Variables: Catalyst loading (0.5-2.5 mol%, continuous), Ligand (SPhos, XPhos, DavePhos; categorical), Base (K2CO3, Cs2CO3, K3PO4; categorical), Temperature (40-100°C, continuous).

- Objective Function: NMR Yield (%) after a fixed time. Expensive-to-evaluate black box.

2. Initial Design:

- Perform a space-filling initial design (e.g., Latin Hypercube) for continuous variables and random selection for categorical ones.

- Protocol: Carry out 8 initial experiments according to the designed conditions in parallel.

- In a nitrogen-filled glovebox, add aryl halide (0.1 mmol), boronic acid (0.12 mmol), base (2.0 equiv), and magnetic stir bar to a 2-dram vial.

- Add stock solutions of Pd precursor and ligand in degassed toluene to achieve specified mol%.

- Add degassed solvent (total volume 1 mL).

- Seal vial, remove from glovebox, and place on pre-heated stir plate in aluminum block for 18 hours.

- Quench with 1M HCl, dilute, and analyze by UPLC or NMR using an internal standard.

3. BO Loop Iteration:

- Model Training: Fit a Gaussian Process (GP) surrogate model to all collected data (yield vs. conditions). Use a composite kernel (e.g., Matern for continuous, Hamming for categorical).

- Acquisition Function Maximization: Calculate the Expected Improvement (EI) across the parameter space. Propose the next 4 experimental conditions that maximize EI.

- Parallel Experimentation: Execute the proposed reactions using the standard protocol above.

- Update & Converge: Incorporate new results, retrain the GP model, and repeat. Continue until yield plateaus or resource budget is exhausted (typically 5-8 iterations).

4. Validation:

- Perform triplicate runs at the BO-predicted optimal conditions to confirm reproducibility.
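Proposing four conditions per iteration, as in step 3, requires some batch strategy, since simply taking the top four EI values tends to return near-duplicates. One common heuristic is the "constant liar": after each pick, a pessimistic placeholder result is added and the model is refit before the next pick. The sketch below uses that heuristic together with one-hot encoding of the categorical variables; both are simplifying assumptions (the protocol itself suggests a Hamming kernel), the candidate grid is coarse, and the initial yields are random placeholders.

```python
import itertools
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel

ligands = ["SPhos", "XPhos", "DavePhos"]
bases = ["K2CO3", "Cs2CO3", "K3PO4"]

def encode(loading, temp, ligand, base):
    """Scale continuous inputs to [0, 1] and one-hot encode the categoricals."""
    continuous = [(loading - 0.5) / 2.0, (temp - 40.0) / 60.0]
    return np.array(continuous
                    + [float(ligand == l) for l in ligands]
                    + [float(base == b) for b in bases])

# Candidate pool: coarse grid over loading and temperature x all ligand/base pairs
pool = list(itertools.product(np.linspace(0.5, 2.5, 5),
                              np.linspace(40.0, 100.0, 7), ligands, bases))
pool_X = np.array([encode(*condition) for condition in pool])

rng = np.random.default_rng(0)
observed_idx = rng.choice(len(pool), size=8, replace=False)
X, y = pool_X[observed_idx], rng.uniform(20, 70, size=8)  # placeholder yields

def fit_gp(X_train, y_train):
    kernel = Matern(length_scale=1.0, nu=2.5) + WhiteKernel(noise_level=1e-2)
    return GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                                    random_state=0).fit(X_train, y_train)

batch_idx = []
X_aug, y_aug = X.copy(), y.copy()
taken = set(observed_idx.tolist())
for _ in range(4):
    gp = fit_gp(X_aug, y_aug)
    mu, sigma = gp.predict(pool_X, return_std=True)
    z = (mu - y.max()) / np.maximum(sigma, 1e-9)
    ei = (mu - y.max()) * norm.cdf(z) + sigma * norm.pdf(z)
    ei[list(taken)] = -np.inf            # never re-propose a used condition
    pick = int(np.argmax(ei))
    batch_idx.append(pick)
    taken.add(pick)
    # The "lie": pretend the pending run returned the worst yield seen so far
    X_aug = np.vstack([X_aug, pool_X[pick]])
    y_aug = np.append(y_aug, y.min())

for pick in batch_idx:
    loading, temp, ligand, base = pool[pick]
    print(f"{loading:.1f} mol%, {temp:.0f} degC, {ligand}, {base}")
```

Libraries such as BoTorch provide joint batch acquisition functions (e.g., q-EI) that are generally preferable; the heuristic here is only meant to show why pending experiments must be accounted for when choosing a batch.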

Visualizations

Title: BO Workflow for Reaction Optimization

Title: BO Core Algorithm Loop

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for BO-Driven Optimization

| Item/Reagent | Function in BO Workflow | Key Consideration |

|---|---|---|

| Pd Precursors (e.g., Pd(OAc)2, Pd2(dba)3) | Catalyst for cross-coupling model reactions. | Stability in stock solutions is critical for reproducibility. |

| Ligand Kit (Diverse Phosphines, NHCs) | Enables exploration of categorical "ligand space." | Pre-weighed, aliquoted stocks accelerate experimentation. |

| Automated Liquid Handler (e.g., ChemSpeed) | Enables precise, high-throughput dispensing of variable reagent amounts. | Essential for executing parallel BO-proposed experiments. |

| In-Line/Automated Analysis (UPLC, GC) | Provides rapid, quantitative yield data to close the BO loop. | Reduces human error and iteration time. |

| BO Software (e.g., BoTorch, GPyOpt) | Provides algorithms for GP modeling and acquisition function optimization. | Must handle mixed parameter types (continuous/categorical). |

| Reaction Block Heater/Chiller | Allows precise, parallel temperature control across multiple vessels. | Temperature is a key continuous variable. |

Implementing Bayesian Optimization: A Step-by-Step Guide for Chemists

In Bayesian optimization for chemical reaction optimization, defining the search space is the critical first step. The search space is the bounded, multidimensional domain of experimentally tunable reaction parameters within which the optimization algorithm operates. Its precise definition—encompassing parameters, their feasible ranges, and constraints—determines the efficiency, success, and practical relevance of the optimization campaign. This protocol details the systematic process for constructing this space within the context of drug development research.

Core Reaction Parameters & Typical Ranges

The following parameters are commonly explored in small-molecule synthesis and catalysis. The ranges provided are based on current literature and high-throughput experimentation (HTE) practices.

Table 1: Quantitative Search Space Parameters for a Model Suzuki-Miyaura Cross-Coupling Reaction

| Parameter Category | Specific Parameter | Typical Explored Range | Common Constraints & Notes |

|---|---|---|---|

| Chemical Variables | Catalyst Loading (mol%) | 0.1 - 5.0 mol% | ≥ 0; Often discrete steps (0.1, 0.5, 1, 2, 5) |

| | Ligand Loading (mol%) | 0.1 - 10.0 mol% | Often defined as ratio to metal (e.g., L:Pd = 1:1 to 3:1) |

| | Base Equivalents | 1.0 - 5.0 eq. | ≥ 1.0 eq.; Discrete or continuous |

| | Substrate Concentration | 0.05 - 0.20 M | Solvent volume-dependent; impacts mixing/viscosity |

| Physical Variables | Temperature (°C) | 25 - 150 °C | Defined by solvent bp, reactor, and substrate stability |

| | Reaction Time (hr) | 1 - 48 hours | Can be optimized in flow for very short times |

| | Mixing Speed (RPM) | 200 - 1200 RPM | Platform-dependent; often fixed in HTE |

| Solvent System | Primary Solvent | Categorical (e.g., THF, DMF, 1,4-Dioxane, Water) | Single solvent or mixtures; solvent purity level |

| | Co-solvent Ratio (v/v%) | 0 - 100% | For binary mixtures; sum of ratios = 100% |

Protocol: Defining the Search Space for a Bayesian Optimization Campaign

Pre-Optimization Experimental Scouting (Information Gathering)

Objective: To gather initial data on parameter sensitivities and feasibility bounds before formal Bayesian optimization.

Procedure:

- Literature & Database Review: Perform a search in Reaxys or SciFinder for analogous transformations. Note reported conditions, yields, and any noted failures.

- Minimal Factorial Design: Execute a small (8-16 experiment) Plackett-Burman or fractional factorial design. Include all potential parameters (Table 1) at two levels (low/high).

- Constraint Identification:

- Solubility Test: Determine the minimum volume of candidate solvents required to fully dissolve substrate(s) at room temperature and the intended reaction concentration.

- Thermal Stability Check: Use differential scanning calorimetry (DSC) or a thermal gradient block to assess decomposition temperature of key substrates.

- Chemical Compatibility: Verify stability of substrates to bases, catalysts, and solvents via quick LC-MS analysis of mixtures held at room temperature for 1 hour.

- Analyze Scouting Data: Identify parameters causing complete failure (e.g., precipitation, decomposition) or showing pronounced effects. Use this to narrow ranges or apply hard constraints.

Formal Search Space Construction

Objective: To encode the viable parameter space into a machine-readable format for the Bayesian optimization algorithm.

Procedure:

- Categorize Parameters:

  - Continuous Numerical: (e.g., Temperature, Time). Define as a real-valued interval: [lower_bound, upper_bound].

  - Discrete Numerical: (e.g., Catalyst Loading at specific mol% values). Define as an ordered set: {value_1, value_2, ...}.

  - Categorical: (e.g., Solvent, Ligand Type). Define as an unordered set: {choice_A, choice_B, ...}.

- Apply Hard Constraints: Program logical rules the algorithm must obey.

  - Example 1 (Solvent Mix): IF Primary_Solvent = "Water" AND Co-solvent = "Toluene", THEN Co-solvent_Ratio ≤ 0.05.

  - Example 2 (Temperature): IF Solvent = "THF", THEN Temperature ≤ 66 °C (solvent boiling point).

- Define the Objective Metric: Clearly state the primary outcome to be optimized (e.g., HPLC yield, enantiomeric excess, throughput). Define its bounds (e.g., 0-100% yield).

Code Implementation Snippet (Conceptual):
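A minimal sketch of such an encoding, assuming plain Python dictionaries rather than any particular BO library, is shown below. The parameter names, the co-solvent choices, and the use of rejection sampling to enforce the two example constraints are illustrative assumptions.

```python
import random

# Machine-readable search space: one entry per tunable parameter
search_space = {
    "temperature_C": {"type": "continuous", "bounds": (25.0, 150.0)},
    "time_h": {"type": "continuous", "bounds": (1.0, 48.0)},
    "catalyst_mol_pct": {"type": "discrete", "values": [0.1, 0.5, 1.0, 2.0, 5.0]},
    "primary_solvent": {"type": "categorical",
                        "choices": ["THF", "DMF", "1,4-Dioxane", "Water"]},
    "co_solvent": {"type": "categorical", "choices": ["None", "Toluene"]},
    "co_solvent_ratio": {"type": "continuous", "bounds": (0.0, 1.0)},
}

def is_feasible(condition):
    """Hard constraints from the two examples in the protocol."""
    if (condition["primary_solvent"] == "Water"
            and condition["co_solvent"] == "Toluene"
            and condition["co_solvent_ratio"] > 0.05):
        return False
    if condition["primary_solvent"] == "THF" and condition["temperature_C"] > 66.0:
        return False  # THF boiling point
    return True

def sample_condition(rng):
    """Draw one random condition, ignoring constraints."""
    condition = {}
    for name, spec in search_space.items():
        if spec["type"] == "continuous":
            condition[name] = rng.uniform(*spec["bounds"])
        elif spec["type"] == "discrete":
            condition[name] = rng.choice(spec["values"])
        else:
            condition[name] = rng.choice(spec["choices"])
    return condition

def sample_feasible(rng, n):
    """Rejection sampling: keep drawing until n feasible conditions are found."""
    accepted = []
    while len(accepted) < n:
        condition = sample_condition(rng)
        if is_feasible(condition):
            accepted.append(condition)
    return accepted

rng = random.Random(0)
candidates = sample_feasible(rng, 20)
print(candidates[0])
```

The same `is_feasible` check can be reused to filter candidates proposed by the acquisition step, so that infeasible conditions are never sent to the lab.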

Visual Guide: The Search Space Definition Workflow

Title: Workflow for Defining a Reaction Search Space

Visual Guide: Relationship Between Search Space and Bayesian Optimization

Title: Search Space Integration in Bayesian Optimization Loop

The Scientist's Toolkit: Key Reagent Solutions & Materials

Table 2: Essential Research Reagents for Search Space Scouting

| Item | Function in Search Space Definition | Example/Note |

|---|---|---|

| Solvent Screening Kit | To empirically test solubility and reactivity across diverse polarity and proticity. | 96-well plate pre-filled with 20-30 µL of various anhydrous solvents (e.g., DMSO, MeOH, Toluene, DCM). |

| Pre-weighed Catalyst/Ligand Plates | Enables rapid, precise testing of catalyst/ligand combinations and loadings. | 384-well plate with Pd sources (e.g., Pd2(dba)3, Pd(OAc)2) and ligands (e.g., SPhos, XPhos) in nanomole quantities. |

| Liquid Handling Robot | For accurate, reproducible dispensing of liquids in scouting and full optimization runs. | Enables preparation of 96/384-reaction arrays for parameter range testing. |

| Parallel Pressure Reactor | Allows safe exploration of elevated temperature/pressure conditions (e.g., H2, CO). | 6- or 12-position system with individual temperature and stirring control. |

| Automated HPLC/LC-MS Sampler | High-throughput analytical data acquisition for rapid constraint validation and objective measurement. | Integrated with reaction block for time-course sampling or end-point analysis. |

| Thermal Stability Analyzer | Determines decomposition temperatures to set safe upper temperature bounds. | Differential Scanning Calorimeter (DSC) or Thermal Activity Monitor (TAM). |

Within Bayesian optimization (BO) for reaction conditions research in drug development, the surrogate model is a core component. It acts as a probabilistic approximation of the expensive, high-dimensional experimental landscape—such as yield or selectivity as a function of temperature, catalyst loading, and solvent composition. This document provides application notes and protocols for three predominant surrogate models: Gaussian Processes (GPs), Random Forests (RFs), and Neural Networks (NNs). The choice of model critically balances data efficiency, uncertainty quantification, and computational overhead in iterative experimental campaigns.

Table 1: Quantitative Comparison of Surrogate Models for Bayesian Optimization

| Feature | Gaussian Process (GP) | Random Forest (RF) | Neural Network (NN) |

|---|---|---|---|

| Data Efficiency | High (excels with <100 data points) | Medium | Low (typically requires hundreds of data points or more) |

| Native Uncertainty Quantification | Yes (via posterior variance) | Yes (via ensemble variance) | No (Requires Bayesian or ensemble methods) |

| Computational Scaling (Training) | O(n³) | O(m * p * n * log(n)) | O(e * n * p) |

| Handling of High Dimensions | Poor (beyond ~20 dimensions) | Good (up to 100s) | Excellent (1000s) |

| Handling of Categorical Variables | Requires encoding | Excellent (native support) | Requires encoding |

| Model Interpretability | Medium (via kernels) | High (feature importance) | Low ("Black box") |

| Typical Acquisition Function | Expected Improvement (EI), UCB | Expected Improvement (EI), PI | Noisy EI, Thompson Sampling |

| Primary Software Libraries | GPyTorch, scikit-learn | scikit-learn, SMAC3 | PyTorch, TensorFlow, BoTorch |

Key: n = # samples, m = # trees, p = # features, e = # training epochs

Model-Specific Application Notes & Protocols

Gaussian Process (GP) Protocol

Best for: Initial exploration of reaction spaces with a limited experimental budget (≤50 experiments).

Protocol: Model Implementation & Training

- Data Preprocessing: Standardize all continuous reaction parameters (e.g., temperature, time) to zero mean and unit variance. One-hot encode categorical parameters (e.g., solvent class).

- Kernel Selection: Initialize with a Matérn 5/2 kernel for modeling typically smooth but potentially rough reaction landscapes. For automatic relevance determination (ARD), assign a length-scale parameter per dimension.

- Model Instantiation: Use a GP with a constant mean function and a heteroscedastic likelihood if experimental noise is variable.

- Training: Optimize kernel hyperparameters (length scales, noise variance) by maximizing the marginal log-likelihood using the L-BFGS-B optimizer (50 iterations max).

- Integration with BO: Use the trained GP posterior to calculate the Expected Improvement (EI) acquisition function. Select the next reaction conditions by maximizing EI.
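The core of the protocol above can be sketched with NumPy alone. This is a simplified illustration, not the GPyTorch or scikit-learn implementation: the hyperparameters (`lengthscales`, `noise_var`) are fixed by hand instead of being fitted by marginal-likelihood maximization, a zero mean on standardized yields stands in for the constant mean, and the four data points are invented for demonstration.

```python
import numpy as np


def matern52(A, B, lengthscales, variance=1.0):
    """Matérn 5/2 kernel with one length scale per input dimension (ARD)."""
    A = np.asarray(A, dtype=float) / lengthscales
    B = np.asarray(B, dtype=float) / lengthscales
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    s = np.sqrt(5.0) * np.sqrt(np.maximum(sq_dist, 0.0))
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(X, y, X_new, lengthscales, noise_var=1e-2):
    """Posterior mean and standard deviation of a zero-mean GP at X_new."""
    K = matern52(X, X, lengthscales) + noise_var * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    K_s = matern52(X, X_new, lengthscales)
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = matern52(X_new, X_new, lengthscales).diagonal() - (v**2).sum(axis=0)
    return mean, np.sqrt(np.maximum(var, 0.0))


# Hypothetical data: columns are temperature (°C) and time (h); y is yield (%).
X_raw = np.array([[60.0, 2.0], [80.0, 6.0], [100.0, 12.0], [120.0, 24.0]])
y_raw = np.array([35.0, 62.0, 78.0, 70.0])

# Standardize inputs and outputs to zero mean and unit variance.
X = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0)
y = (y_raw - y_raw.mean()) / y_raw.std()

ls = np.array([1.0, 1.0])  # assumed length scales, one per dimension
mean, std = gp_posterior(X, y, X, ls)
```

The returned `mean` and `std` are in standardized units; multiplying by the standard deviation of the raw yields and adding their mean converts predictions back to percent yield before an acquisition function such as EI is evaluated.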

Research Reagent Solutions (GP-BO for Reaction Screening)

| Item | Function in Protocol |

|---|---|

| GPyTorch Library | Flexible, GPU-accelerated GP framework for modern BO. |

| scikit-learn StandardScaler | Robust standardization of continuous reaction variables. |

| L-BFGS-B Optimizer | Efficient, gradient-based hyperparameter optimization. |

| Expected Improvement (EI) | Acquisition function balancing exploration/exploitation. |

Title: Gaussian Process Bayesian Optimization Workflow

Random Forest (RF) Protocol

Best for: Reaction spaces with mixed data types (categorical & continuous) and moderate dataset sizes (50-200 points).

Protocol: Model Implementation as a Probabilistic Surrogate (SMAC)

- Ensemble Construction: Build an ensemble of 100 decision trees. Use bootstrapping and consider √p features for splitting at each node.

- Probabilistic Prediction: For a new condition, collect predictions from all trees. The mean prediction is the estimated response; the variance provides uncertainty quantification.

- Model Training: Minimize mean squared error on the training set. Limit tree depth to prevent overfitting (use cross-validation).

- Integration with BO: Use the RF's predictive distribution to compute Expected Improvement. The SMAC3 framework is a standard implementation.
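The way an ensemble turns into a predictive mean and variance can be illustrated without any ML library. The sketch below is a deliberate simplification: each "tree" is a depth-1 regression stump on a single variable, fitted to a bootstrap resample, which is bagging in miniature and not the SMAC3 random forest. The data and the function names are hypothetical.

```python
import random
import statistics


def fit_stump(xs, ys):
    """Fit a depth-1 regression tree on 1-D data: one split, two leaf means."""
    best = None
    for t in sorted(set(xs))[1:]:  # thresholds that leave both sides non-empty
        left = [y for x, y in zip(xs, ys) if x < t]
        right = [y for x, y in zip(xs, ys) if x >= t]
        ml, mr = statistics.fmean(left), statistics.fmean(right)
        sse = sum((y - ml) ** 2 for y in left) + sum((y - mr) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, t, ml, mr)
    if best is None:  # bootstrap sample contained a single distinct x
        m = statistics.fmean(ys)
        return lambda x: m
    _, t, ml, mr = best
    return lambda x: ml if x < t else mr


def fit_ensemble(xs, ys, n_trees=100, seed=0):
    """Train each stump on its own bootstrap resample of the data."""
    rng = random.Random(seed)
    n = len(xs)
    trees = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]
        trees.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return trees


def predict(trees, x):
    """Ensemble mean is the estimate; variance across trees is the uncertainty."""
    preds = [tree(x) for tree in trees]
    return statistics.fmean(preds), statistics.pvariance(preds)


# Hypothetical data: temperature (°C) versus yield (%).
temps = [40.0, 60.0, 80.0, 100.0, 120.0, 140.0]
yields = [22.0, 41.0, 67.0, 81.0, 76.0, 58.0]
trees = fit_ensemble(temps, yields)
mean_pred, var_pred = predict(trees, 95.0)
```

The pair `(mean_pred, var_pred)` plays the same role as a GP posterior mean and variance when computing Expected Improvement, although tree-ensemble variances are generally less well calibrated far from the training data.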

Research Reagent Solutions (RF-BO for Reaction Optimization)

| Item | Function in Protocol |

|---|---|

| SMAC3 Framework | Implements RF-based Bayesian optimization for complex spaces. |

| scikit-learn RandomForestRegressor | Core ensemble model for building the surrogate. |

| ConfigSpace Library | Defines the mixed parameter search space (categorical, integer, float). |

Title: Random Forest Ensemble for Probabilistic Prediction

Neural Network (NN) Protocol

Best for: Large-scale, high-dimensional reaction data (>500 points), e.g., from high-throughput experimentation (HTE).

Protocol: Bayesian Neural Network (BNN) Implementation

- Network Architecture: Design a fully connected network with 2-4 hidden layers (128-256 units each). Use ReLU activation functions.

- Bayesian Layer Integration: Replace dense layers with Bayesian layers (e.g., using Pyro or TensorFlow Probability) that place distributions over weights.

- Training: Use variational inference to learn the posterior distribution over weights. Minimize the evidence lower bound (ELBO) loss.

- Uncertainty Estimation: Perform multiple stochastic forward passes (Monte Carlo dropout or sampling from weight posterior) to generate a distribution of predictions. Mean and variance are derived from this distribution.

- Integration with BO: Use the predictive variance from the BNN in a Noisy Expected Improvement acquisition function, as implemented in BoTorch.

Research Reagent Solutions (NN-BO for HTE Data)

| Item | Function in Protocol |

|---|---|

| BoTorch Library | Bayesian optimization research framework built on PyTorch. |

| Pyro / TensorFlow Probability | Enables Bayesian neural network layers for uncertainty. |

| AdamW Optimizer | Efficiently trains large NN models with weight decay. |

| Noisy Expected Improvement | Acquisition function robust to noisy experimental data. |

Title: Uncertainty Estimation via Bayesian Neural Network

Table 2: Model Selection Guide for Reaction Optimization

| Scenario / Constraint | Recommended Model | Rationale |

|---|---|---|

| Very limited experimental budget (<50 runs) | Gaussian Process | Superior data efficiency and built-in, well-calibrated uncertainty. |

| Mixed parameter types (solvent, catalyst) | Random Forest (SMAC) | Native handling of categorical variables without encoding loss. |

| Large-scale HTE data available | Neural Network (Bayesian) | Scalability to high dimensions and large sample sizes. |

| Interpretability required | Random Forest | Provides clear feature importance scores for reaction parameters. |

| Real-time model updates needed | Random Forest | Faster training times than GP/NN on moderate-sized incremental data. |

| Prior knowledge of landscape smoothness | Gaussian Process | Can be encoded via tailored kernel choices (e.g., RBF for smooth). |

The optimal surrogate model is contingent on the specific phase of the reaction conditions research pipeline. A hybrid approach, starting with a GP for initial exploration and switching to an RF or BNN as data accumulates, is often a powerful strategy within a Bayesian optimization framework for drug development.

Within the broader thesis on advancing Bayesian optimization (BO) for reaction conditions research in drug development, the selection of an acquisition function is critical. This guide provides detailed application notes and protocols for four core strategies: Expected Improvement (EI), Probability of Improvement (PI), Upper Confidence Bound (UCB), and Knowledge Gradient (KG). These functions guide the sequential experiment selection process in BO, balancing exploration and exploitation to efficiently optimize complex, expensive-to-evaluate chemical reactions.

Acquisition Function Comparison & Quantitative Data

The following table summarizes the key characteristics, mathematical formulations, and performance metrics of the four acquisition functions in a synthetic benchmark for reaction yield optimization.

Table 1: Comparison of Core Acquisition Functions for Reaction Optimization

| Acquisition Function | Mathematical Formulation (for maximization) | Primary Balance (Exploration/Exploitation) | Typical Performance (Cumulative Regret) | Sensitivity to Parameters | Best For Reaction Scenarios |

|---|---|---|---|---|---|

| Expected Improvement (EI) | `EI(x) = E[max(f(x) - f(x*), 0)]` | Balanced, adaptive | Low (0.12 ± 0.03) | Low | General-purpose, robust search for yield maximum. |

| Probability of Improvement (PI) | `PI(x) = P(f(x) ≥ f(x*) + ξ)` | Exploitation-biased | Moderate (0.25 ± 0.06) | High (trade-off parameter ξ) | Fine-tuning near a promising candidate. |

| Upper Confidence Bound (UCB) | `UCB(x) = μ(x) + κ * σ(x)` | Explicitly tunable (via κ) | Low to Moderate (0.15 ± 0.04) | High (parameter κ) | Systematic exploration of uncertain conditions. |

| Knowledge Gradient (KG) | `KG(x) = E[max μ_{n+1} - max μ_n \| x_{n+1} = x]` | Value of information | Very Low (0.09 ± 0.02) | Computationally intensive | Final-stage optimization with very limited experiments. |

Performance metrics (Cumulative Regret) are normalized values from a benchmark study optimizing a simulated Suzuki-Miyaura cross-coupling reaction (10-dimensional space, 50 iterations, average of 20 runs). Lower regret is better.
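When the surrogate's prediction at a point is Gaussian with mean μ and standard deviation σ, EI, PI, and UCB have simple closed forms. The sketch below implements them with the standard library only; the function names and default values are illustrative, `best` denotes the incumbent value f(x*), and KG is omitted because it has no comparable closed form.

```python
import math


def _pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best):
    """EI(x) = E[max(f(x) - best, 0)] for f(x) ~ N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    return (mu - best) * _cdf(z) + sigma * _pdf(z)


def probability_of_improvement(mu, sigma, best, xi=0.01):
    """PI(x) = P(f(x) >= best + xi)."""
    if sigma <= 0.0:
        return 1.0 if mu >= best + xi else 0.0
    return _cdf((mu - best - xi) / sigma)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB(x) = mu + kappa * sigma."""
    return mu + kappa * sigma
```

A quick comparison shows the different biases: for a candidate whose mean equals the incumbent, PI stays near 0.5 regardless of σ, whereas EI and UCB both grow with σ and therefore reward uncertain conditions.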

Experimental Protocols for Benchmarking Acquisition Functions

Protocol 1: Synthetic Benchmarking Using a Known Reaction Simulator

Objective: To quantitatively compare the performance of EI, PI, UCB, and KG functions in a controlled environment.

Materials: High-performance computing cluster, Python 3.9+, BoTorch or GPyOpt library, custom reaction simulator (e.g., based on a mechanistic or DoE-derived surrogate model).

Procedure:

- Simulator Definition: Implement a simulator for a known reaction (e.g., amide coupling) where the true optimum yield is known. The input space should include continuous variables (temperature, concentration) and categorical variables (catalyst, solvent).

- BO Loop Initialization: Define a Gaussian Process (GP) prior with a Matérn 5/2 kernel. Initialize with a space-filling design (e.g., Latin Hypercube) of 5 points.

- Acquisition Function Execution: For each function:

- EI/PI: Use the analytical formulation. For PI, set the trade-off parameter ξ=0.01.

- UCB: Set κ=2.0 to encourage exploration.

- KG: Use one-step lookahead with stochastic optimization via Monte Carlo sampling.

- Iterative Evaluation: Run the BO loop for 50 iterations. At each step, the acquisition function selects the next condition `x_next`. Query the simulator for the yield `y_next`, and update the GP model.

- Metric Calculation: Record the simple regret (`y* - y_best_found`) and cumulative regret after each iteration. Repeat the entire process 20 times with different random seeds.

- Analysis: Plot average cumulative regret vs. iteration for each method. Perform statistical testing (e.g., Wilcoxon signed-rank test) on the final regret values.
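The two regret metrics can be computed from the sequence of simulated yields in a few lines. This is a minimal sketch; `y_opt` stands for the known optimum of the simulator and `observed` for the yields returned in iteration order, both hypothetical names.

```python
from itertools import accumulate


def simple_regret(y_opt, observed):
    """Gap between the true optimum and the best yield found so far."""
    best_so_far = accumulate(observed, max)
    return [y_opt - best for best in best_so_far]


def cumulative_regret(y_opt, observed):
    """Running sum of the per-iteration gaps to the true optimum."""
    return list(accumulate(y_opt - y for y in observed))
```

Simple regret can only stay flat or decrease, so it shows how quickly a method finds a good condition; cumulative regret also penalizes every poor experiment along the way, which is why it separates exploratory from exploitative strategies.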

Protocol 2: Wet-Lab Validation on a Model Reaction

Objective: To validate the simulation findings with real experimental data.

Materials: Automated chemistry platform (e.g., Chemspeed), HPLC for analysis, reagents for a model reaction (e.g., Buchwald-Hartwig amination), solvents, catalysts, ligands.

Procedure:

- Reaction Selection: Choose a reaction sensitive to multiple continuous (time, temperature) and categorical (ligand) variables.

- Initial Design: Perform 8 initial experiments using a D-optimal design spanning the defined factor space.

- BO-Guided Optimization: Implement a human-in-the-loop BO workflow. After each batch of 4 experiments (selected by the acquisition function), analyze yields, update the GP model in BoTorch, and calculate the next batch of suggested conditions.

- Comparative Study: Run two parallel campaigns guided by EI and UCB (κ=1.5) acquisition functions. Limit each campaign to 40 total experiments.

- Endpoint Analysis: Compare the highest yield achieved, the rate of improvement, and the reproducibility of optimal conditions identified by each method.

Visualizing the Bayesian Optimization Workflow and Acquisition Functions

Diagram 1: Bayesian Optimization Loop for Reaction Screening

Diagram 2: How Acquisition Functions Use GP Predictions

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Resources for BO-Driven Reaction Optimization

| Item Name / Solution | Category | Function in BO Workflow |

|---|---|---|

| BoTorch (PyTorch-based) | Software Library | Provides state-of-the-art implementations of GP models, EI, PI, UCB, KG, and parallel BO for high-throughput experimentation. |

| GPyOpt | Software Library | User-friendly Python library for BO, ideal for prototyping and simpler problems. |

| Chemspeed ISYNTH | Automated Chemistry Platform | Enables automated, reproducible execution of the reaction conditions suggested by the BO algorithm. |

| High-Throughput HPLC/LCMS | Analytical Equipment | Rapid analysis of reaction outcomes (yield, purity) to provide the objective function value y for the GP model. |

| Custom Reaction Simulator | Computational Model | A surrogate model (e.g., neural network, mechanistic model) for initial in-silico benchmarking of acquisition functions. |

| D-Optimal Design Software (JMP, pyDOE2) | Experimental Design | Generates the initial set of experiments to build the first GP model prior to the BO loop. |

| Cloud Computing Credits (AWS, GCP) | Computational Resource | Provides the necessary compute power for expensive acquisition functions like KG or for large-scale parallel BO. |

Application Notes: Bayesian Optimization for Chemical Reaction Optimization

Within the thesis on Bayesian optimization (BO) for reaction conditions research, the Optimization Loop presents a systematic, closed-cycle framework for accelerating the discovery and optimization of chemical reactions, particularly in pharmaceutical development. This data-driven approach iteratively refines hypotheses, minimizing costly experimental runs.

Core Loop Components in Reaction Optimization

- Design: A probabilistic surrogate model (typically Gaussian Process) uses prior belief and acquired data to propose the most informative next experiment(s) by maximizing an acquisition function (e.g., Expected Improvement).

- Execute: The proposed reaction conditions (e.g., concentration, temperature, catalyst, solvent) are run experimentally, generating quantitative yield/purity/selectivity data.

- Update: The new data point is incorporated into the surrogate model, updating the posterior distribution and refining the model's understanding of the reaction landscape.

- Recommend: The updated model identifies the current optimal conditions and informs the next Design phase, continuing until convergence or resource depletion.

Data Presentation: Benchmark Performance

Table 1: Benchmarking of Bayesian Optimization vs. Traditional Methods for Reaction Yield Optimization

| Optimization Method | Average Experiments to Reach 90% Max Yield | Success Rate (%) | Key Advantage |

|---|---|---|---|

| Bayesian Optimization (GP-UCB) | 15 ± 3 | 95 | Efficient global exploration |

| One-Variable-at-a-Time (OVAT) | 45 ± 10 | 70 | Simple, intuitive |

| Full Factorial Design | 81 (exhaustive) | 100 | Comprehensiveness |

| Random Sampling | 35 ± 12 | 60 | No bias |

| BO w/ Chemical Descriptors | 12 ± 2 | 98 | Incorporates molecular features |

Table 2: Key Reaction Parameters and Typical Bayesian Optimization Search Space

| Parameter | Type | Typical Range/Categories | Importance Ranking |

|---|---|---|---|

| Temperature | Continuous | 25°C - 150°C | High |

| Reaction Time | Continuous | 1h - 48h | Medium |

| Catalyst Loading | Continuous | 0.1 - 10 mol% | High |

| Solvent | Categorical | DMF, THF, Toluene, MeCN, DMSO | High |

| Base Equivalents | Continuous | 1.0 - 3.0 eq | Medium |

| Concentration | Continuous | 0.1M - 0.5M | Low-Medium |

Experimental Protocols

Protocol 1: Setting Up a Bayesian Optimization Loop for a Novel Cross-Coupling Reaction

Objective: Maximize isolated yield of a Suzuki-Miyaura cross-coupling product within 20 automated experiments.

Materials: (See Scientist's Toolkit)

Software: Python with scikit-optimize, GPy, or BoTorch libraries; electronic lab notebook (ELN); automated reactor platform interface.

Procedure:

- Define Search Space: Codify parameters from Table 2 into a dictionary. Normalize continuous variables to [0, 1].

- Initialize with Space-Filling Design: Use a Latin Hypercube Design to select 5 initial diverse reaction conditions. Execute in parallel and record yields.

- Surrogate Model: Train a Gaussian Process (GP) regression model using a Matérn kernel on the initial data (parameters X, yield y).

- Acquisition Function: Calculate Expected Improvement (EI) across the search space using the GP posterior.

- Recommend & Execute: Select the condition maximizing EI. Submit this reaction to the automated platform.

- Update: Upon completion, add the new {X, y} pair to the dataset. Retrain the GP model.

- Loop: Repeat the Acquisition Function, Recommend & Execute, and Update steps (steps 4-6) for the remaining 14 experiments.

- Terminate & Validate: After 20 runs, recommend the best conditions. Perform three validation runs at the recommended conditions.
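The procedure above can be outlined as a short skeleton. The sketch uses only the standard library and makes several assumptions: the three continuous ranges are taken from Table 2, the surrogate and acquisition step is passed in as a hypothetical `propose_next` callable, and the experiment and proposer shown at the bottom are stand-ins that merely let the code run end to end.

```python
import random

# Continuous parameters and ranges taken from Table 2.
BOUNDS = {
    "temperature_c": (25.0, 150.0),
    "time_h": (1.0, 48.0),
    "catalyst_mol_pct": (0.1, 10.0),
}


def latin_hypercube(n_points, n_dims, rng):
    """One sample per stratum in each dimension, in [0, 1) coordinates."""
    columns = []
    for _ in range(n_dims):
        strata = [(i + rng.random()) / n_points for i in range(n_points)]
        rng.shuffle(strata)
        columns.append(strata)
    return [tuple(col[i] for col in columns) for i in range(n_points)]


def denormalize(u):
    """Map a point in the unit cube back to physical reaction conditions."""
    return {name: lo + ui * (hi - lo) for ui, (name, (lo, hi)) in zip(u, BOUNDS.items())}


def run_campaign(run_experiment, propose_next, n_init=5, budget=20, seed=0):
    rng = random.Random(seed)
    X = latin_hypercube(n_init, len(BOUNDS), rng)      # initial space-filling design
    y = [run_experiment(denormalize(u)) for u in X]
    while len(X) < budget:
        u_next = propose_next(X, y)                    # refit surrogate, maximize EI
        X.append(u_next)
        y.append(run_experiment(denormalize(u_next)))  # execute and update dataset
    best = max(range(len(y)), key=y.__getitem__)
    return denormalize(X[best]), y[best], len(X)


# Stand-ins for illustration only: replace with the real platform and surrogate.
def fake_experiment(cond):
    return 100.0 - abs(cond["temperature_c"] - 90.0)


_rng = random.Random(1)


def random_proposer(X, y):
    return tuple(_rng.random() for _ in BOUNDS)


best_cond, best_yield, n_runs = run_campaign(fake_experiment, random_proposer)
```

Keeping the proposer behind a plain function interface means the same loop can be driven by scikit-optimize, GPy, or BoTorch without changing the bookkeeping around the experimental budget.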

Protocol 2: High-Throughput Experimental Validation of BO Recommendations

Objective: Validate the top 3 parameter sets recommended by the BO loop in parallel.

Procedure:

- Plate Setup: In an inert-atmosphere glovebox, prepare 3 separate 8 mL reaction vials with magnetic stir bars.

- Dispensing: For each recommended condition, use a liquid handler to dispense specified volumes of solvent, stock solutions of aryl halide (0.1 mmol), boronic acid (0.12 mmol), base, and catalyst.

- Reaction Initiation: Place all vials on a parallel metal heating block pre-equilibrated to the target temperature (±1°C). Start stirring simultaneously.

- Quenching: At the specified time, automatically transfer an aliquot from each vial to a pre-prepared 96-well plate containing 0.1 mL of trifluoroacetic acid to quench the reaction.

- Analysis: Quantify yield via UPLC-UV using a calibrated standard curve. Report mean yield ± standard deviation for the three validation runs.

Visualizations

Title: The Bayesian Optimization Loop for Reaction Research

Title: Reaction Optimization Experimental-Cycle Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Bayesian-Optimized Reaction Screening

| Item | Function & Relevance to BO Loop |

|---|---|

| Automated Liquid Handler (e.g., Chemspeed, Hamilton) | Enables precise, reproducible dispensing in the Execute phase for high-throughput validation. |

| Parallel Reactor Station (e.g., Unchained Labs, Büchi) | Allows simultaneous Execution of multiple BO-proposed conditions under controlled parameters. |

| In-situ/Online Analytics (e.g., ReactIR, UPLC-MS) | Provides rapid quantitative data for immediate model Update, closing the loop faster. |

| Chemical Descriptor Software (e.g., RDKit, Dragon) | Generates molecular features (e.g., steric, electronic) as inputs for the model in the Design phase. |

| Bayesian Optimization Library (e.g., BoTorch, GPyOpt) | Core software for building the surrogate model and running the Design → Update → Recommend cycle. |

| Electronic Lab Notebook (ELN) with API | Centralizes data from Execute, making it machine-readable for automated model Update. |

| Stock Solutions of Reagents/Catalysts | Prepared in advance to enable rapid, error-minimized Execution of proposed conditions. |

Application Note 1: Bayesian Optimization of a Cross-Coupling Catalysis for a Key Pharmaceutical Intermediate

Context: A common bottleneck in API synthesis is the optimization of catalytic cross-coupling reactions, which often involves tuning multiple continuous variables (e.g., temperature, catalyst loading, equivalents of reagents). Bayesian optimization (BO) is ideal for navigating this complex, multi-dimensional space with minimal experiments.

Case Study: Optimization of a Buchwald-Hartwig amination for a mid-stage intermediate in the synthesis of a Bruton's tyrosine kinase (BTK) inhibitor.

Objective: Maximize yield of the amination product while minimizing palladium catalyst loading.

Defined Search Space:

- Catalyst: Pd(dppf)Cl₂

- Ligand: XPhos

- Base: Cs₂CO₃

- Variables for BO: Temperature (60-120°C), Catalyst Mol% (0.5-5.0%), Equivalents of Amine (1.0-2.5), Reaction Time (2-24 hours).

Protocol:

- Initial Design: Perform a space-filling design (e.g., Latin Hypercube) with 8 initial experiments across the defined variable space.

- Reaction Execution:

- Charge a microwave vial with the aryl halide (1.0 mmol, 1.0 equiv), amine, Cs₂CO₃ (2.0 equiv), Pd(dppf)Cl₂, and XPhos (1.2 equiv relative to Pd).

- Add anhydrous 1,4-dioxane (5 mL) under nitrogen atmosphere.

- Seal vial and heat in a programmable metal heating block to the target temperature for the specified time with stirring.

- Cool, dilute with ethyl acetate, filter through Celite, and concentrate.

- Analysis: Determine crude yield by quantitative HPLC using an external standard calibration curve.

- BO Loop: Input yield data into the BO algorithm (using a package like Ax or BoTorch). The algorithm suggests the next 4 most informative reaction conditions based on an acquisition function (Expected Improvement). Iterate for 5 cycles (8 initial + 5 × 4 = 28 experiments in total).

Results Summary:

| Optimization Metric | Initial Average (First 8 Runs) | BO-Optimized Result | % Improvement |

|---|---|---|---|

| Yield (%) | 52 ± 18 | 94 | 81 |

| Catalyst Loading (mol%) | 2.75 (avg) | 0.75 | 73% reduction |

| Total Experiments Run | 28 | 28 | N/A |

| Experiments to >90% Yield | Not achieved | Found at experiment #19 | N/A |

Diagram 1: Bayesian Optimization Workflow for Catalysis

The Scientist's Toolkit: Cross-Coupling Optimization Kit

| Item | Function |

|---|---|

| Pd(dppf)Cl₂·CH₂Cl₂ | Robust palladium precatalyst for C-N and C-C couplings. |

| XPhos | Bulky, electron-rich phosphine ligand that promotes reductive elimination. |

| Cs₂CO₃ | Strong, solubilizing base for heterogeneous reaction mixtures. |

| Anhydrous 1,4-Dioxane | High-temperature stable, aprotic solvent for cross-coupling. |

| Sealed Microwave Vials | For conducting reactions under inert atmosphere at elevated temperatures. |

| Quantitative HPLC System | Equipped with a PDA detector for accurate yield determination. |

Application Note 2: Flow Chemistry Synthesis of an Active Pharmaceutical Ingredient (API)

Context: Flow chemistry offers superior control over exothermic reactions and hazardous intermediates. BO accelerates the identification of optimal flow parameters (residence time, temperature, stoichiometry) for API synthesis.

Case Study: Continuous synthesis of Imatinib, a tyrosine kinase inhibitor, via a key endothermic cyclization.

Objective: Maximize throughput (space-time yield, STY) of the final API while maintaining purity >99.5% (HPLC).

Defined Search Space:

- Reaction: Cyclization of a precursor in acetic acid.

- Variables for BO: Reactor Temperature (T, 80-180°C), Residence Time (τ, 2-30 min), Stoichiometry of Acetic Anhydride (Eq, 1.0-5.0).

Protocol:

- System Setup: Assemble a flow system with two HPLC pumps (for precursor and Ac₂O/AcOH solutions), a T-mixer, a coiled tube reactor (PFA, 10 mL internal volume) in an oil bath, and a back-pressure regulator (BPR, 5 bar).

- Initialization: Prime pumps with respective solutions. Set oil bath to initial temperature.

- BO Execution: For each suggested condition set (T, τ, Eq):

- Calculate total flow rate (F) required for desired τ in the 10 mL reactor: F (mL/min) = 10 / τ.

- Set pump flow rates accordingly, maintaining the molar ratio defined by Eq.

- Allow system to stabilize for 3 residence times.

- Collect product output for 15 minutes. Analyze an aliquot by HPLC for conversion and purity. Isolate the remainder to determine isolated yield and calculate STY (g/L/hr).

- BO Loop: Use STY as the primary objective, with a penalty function for purity <99.5%. Run 6 initial experiments, then iterate in batches of 3 for 4 cycles (total ~18 experiments).
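The flow-rate and objective calculations in this protocol reduce to a few lines of arithmetic. The sketch below assumes two stock solutions of known molarity (`conc_precursor_m`, `conc_anhydride_m`) and uses a linear purity penalty with an arbitrary `weight`; the protocol does not specify the penalty form, so that part is purely illustrative.

```python
REACTOR_VOLUME_ML = 10.0  # coiled tube reactor volume from the system setup


def pump_flow_rates(residence_time_min, equivalents, conc_precursor_m, conc_anhydride_m):
    """Split the total flow F = V / tau so the molar ratio Ac2O:precursor equals Eq."""
    total = REACTOR_VOLUME_ML / residence_time_min  # mL/min
    f_precursor = total / (1.0 + equivalents * conc_precursor_m / conc_anhydride_m)
    return f_precursor, total - f_precursor


def space_time_yield(isolated_mass_g, collection_min):
    """STY in g/L/hr: product mass per reactor volume per collection time."""
    return isolated_mass_g / (REACTOR_VOLUME_ML / 1000.0) / (collection_min / 60.0)


def penalized_objective(sty, purity_pct, threshold=99.5, weight=50.0):
    """Subtract a penalty proportional to the purity shortfall (assumed form)."""
    shortfall = max(0.0, threshold - purity_pct)
    return sty - weight * shortfall
```

For example, a residence time of 10 min gives a total flow of 1.0 mL/min; with 2.0 equivalents and stock concentrations of 0.5 M precursor and 1.0 M anhydride, each pump runs at 0.5 mL/min. Handling purity as an explicit outcome constraint in the BO library is an alternative to folding it into the objective.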

Results Summary:

| Parameter | Initial Best | BO-Optimized | Improvement |

|---|---|---|---|

| Space-Time Yield (g/L/hr) | 42 | 118 | 181% |

| HPLC Purity (%) | 99.7 | 99.8 | Maintained |

| Optimal Residence Time (min) | 25 | 8.5 | 66% reduction |

| Optimal Temperature (°C) | 150 | 172 | Increased |

| Total Experiments | 18 | 18 | N/A |

Diagram 2: Flow Chemistry Platform for API Synthesis

The Scientist's Toolkit: Flow Chemistry API Synthesis Kit

| Item | Function |

|---|---|

| Syringe or HPLC Pumps | Provide precise, pulseless flow of reagents. |

| PFA or Stainless Steel Tubing | Chemically inert reactor coils. |

| Heated Oil Bath or Block | Provides precise, uniform temperature control for the reactor. |

| In-line Back-Pressure Regulator | Maintains liquid state of solvents above their boiling point. |

| In-line IR or UV Analyzer | For real-time monitoring of reaction progress (optional but beneficial for BO). |

| Automated Fraction Collector | For collecting product streams corresponding to different conditions. |

Application Note 3: Multi-Objective Optimization of an Asymmetric Catalytic Hydrogenation

Context: Early-stage route scouting for chiral APIs requires balancing multiple objectives: yield, enantiomeric excess (ee), and cost. BO with a multi-objective acquisition function can efficiently map this trade-off.

Case Study: Asymmetric hydrogenation of a prochiral enamide precursor to a glucagon-like peptide-1 (GLP-1) agonist.

Objective: Simultaneously maximize yield and enantiomeric excess (ee) using a commercially available chiral Rhodium catalyst.

Defined Search Space:

- Catalyst: Rh-(S)-Difluorphos

- Variables for BO: H₂ Pressure (P, 20-100 bar), Temperature (T, 20-60°C), Catalyst Loading (L, 0.1-1.0 mol%), Substrate Concentration (C, 1-10 wt% in MeOH).

Protocol:

- High-Throughput Experimentation Setup: Use a parallel pressure reactor system (e.g., 8-vessel array).

- Reaction Execution:

- Charge each vessel with the enamide substrate and a stock solution of the Rh-catalyst in degassed MeOH.

- Seal reactors, purge with N₂, then H₂ three times.

- Pressurize to target P with H₂, heat to target T with stirring (1000 rpm).

- React for 16 hours.

- Vent pressure, sample reaction mixture.

- Analysis:

- Determine conversion/yield by quantitative ¹H-NMR using an internal standard (1,3,5-trimethoxybenzene).

- Determine enantiomeric excess by chiral HPLC (Chiralpak AD-H column).

- BO Loop: Use a multi-objective BO algorithm (e.g., qNEHVI) to model the Pareto frontier between Yield and ee. Run 16 initial experiments, then iterate in batches of 8 for 3 cycles (total ~40 experiments).
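The Pareto frontier that a multi-objective algorithm tries to map is defined by a simple dominance check, sketched below for two objectives that are both maximized. This is not the qNEHVI acquisition function itself, only the non-dominated filter applied to measured (yield, ee) pairs; the function name is hypothetical.

```python
def pareto_front(points):
    """Return the non-dominated points when every objective is maximized."""

    def dominates(a, b):
        # a dominates b if it is at least as good everywhere and better somewhere.
        return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

    return [p for p in points if not any(dominates(q, p) for q in points)]
```

A result such as (88, 91) is dropped as soon as any other experiment matches or exceeds it in both yield and ee, while points that trade one objective for the other all remain on the front for the chemist to choose among.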

Results Summary:

| Condition Set | Yield (%) | ee (%) | H₂ Pressure (bar) | Catalyst Loading (mol%) | Notes |

|---|---|---|---|---|---|

| Max Yield Point | 98 | 96 | 85 | 0.8 | Highest productivity |

| Max ee Point | 92 | >99.5 | 50 | 0.5 | Highest selectivity |

| Balanced Point | 95 | 98 | 70 | 0.6 | Recommended for process |

| Pre-BO Baseline | 88 ± 10 | 91 ± 7 | 50 | 1.0 | Suboptimal |

Diagram 3: Multi-Objective BO for Asymmetric Synthesis

The Scientist's Toolkit: Asymmetric Hydrogenation Kit

| Item | Function |

|---|---|

| Parallel Pressure Reactor System | Enables simultaneous testing of multiple condition sets under H₂. |

| Rh-(S)-Difluorphos Complex | Pre-formed chiral catalyst for high enantioselectivity in enamide hydrogenation. |

| Degassed Anhydrous MeOH | Solvent to prevent catalyst deactivation and ensure reproducibility. |

| Internal Standard for qNMR | E.g., 1,3,5-Trimethoxybenzene, for rapid, accurate yield analysis. |

| Chiral HPLC Column (AD-H) | Industry standard for separating enantiomers of amine and amide compounds. |

| High-Speed Centrifuge | For catalyst removal prior to analysis if heterogeneous catalysts are used. |

Overcoming Challenges: Practical Tips for Robust BO Implementation

Handling Noisy and Expensive-to-Evaluate Reactions (High Variance)

Within the broader thesis on Bayesian optimization (BO) for reaction conditions research, this section addresses a central challenge: optimizing reactions where individual evaluations are costly (e.g., in materials, time, or reagents) and yield measurements are inherently noisy (high variance). This noise, stemming from stochastic reaction pathways, subtle environmental fluctuations, or analytical limitations, can severely mislead traditional optimization algorithms. BO, with its probabilistic surrogate models and acquisition functions that balance exploration and exploitation, is uniquely suited to this problem. This protocol details the application of BO to navigate such complex experimental landscapes efficiently.

Application Notes & Protocols

Protocol 1: Establishing a Robust Baseline & Noise Characterization

Objective: Quantify the intrinsic noise (variance) of the reaction system before optimization to inform the BO model.

Methodology:

- Replicate Center-Point Experiments: Select a representative set of reaction conditions (e.g., the center of your parameter space: temperature, catalyst loading, concentration). Perform a minimum of n=5 independent, randomized replicates at this condition.

- Full Analytical Replication: For each replicate, include the entire, separate workflow from reaction setup to analytical measurement (e.g., HPLC yield calculation).

- Statistical Analysis: Calculate the mean (ȳ) and standard deviation (σ) of the measured output (e.g., yield, conversion). The observed variance (σ²) is a composite of reaction noise and analytical noise.

- Noise Model Integration: This estimated σ² is provided as the `alpha` or `noise` parameter in Gaussian Process (GP) regression models, informing the model that observations are not exact but come from a noisy distribution.

Key Data Table: Baseline Noise Characterization

| Reaction Condition Setpoint (e.g., 80°C, 2 mol% Cat.) | Replicate Yield (%) | Mean Yield, ȳ (%) | Observed Std. Dev., σ (%) | Recommended GP alpha (σ²) |

|---|---|---|---|---|

| Center Point A | 45.2, 47.8, 44.1, 48.5, 46.0 | 46.3 | 1.82 | 3.31 |

| Center Point B | [User-Defined Values] | [Calculated] | [Calculated] | [Calculated] |
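The statistics in this protocol can be reproduced with the standard library. The sketch below uses the five Center Point A replicates and the sample (n − 1) estimators, which are the usual choice for a small number of replicates; the helper `alpha_for_standardized_targets` is a hypothetical name for the rescaling needed when yields are standardized before fitting.

```python
import statistics

replicates = [45.2, 47.8, 44.1, 48.5, 46.0]  # Center Point A yields (%)

mean_yield = statistics.fmean(replicates)
sample_std = statistics.stdev(replicates)     # divides by n - 1
noise_var = statistics.variance(replicates)   # sample variance, in (% yield)^2


def alpha_for_standardized_targets(noise_variance, target_std):
    """Rescale the noise variance if yields are divided by target_std before fitting."""
    return noise_variance / target_std**2
```

With only five replicates the population (divide by n) and sample (divide by n − 1) estimates differ noticeably, so it is worth stating which one was used. The noise variance must also be expressed in the same units as the targets the GP actually sees: in scikit-learn, for instance, `alpha` is added directly to the diagonal of the kernel matrix.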

Protocol 2: Iterative BO Loop for Noisy Reactions

Objective: Execute a closed-loop BO experiment to find optimal conditions despite high noise.

Methodology:

- Initial Design: Use a space-filling design (e.g., Sobol sequence) to generate 8-12 initial data points. Perform single replicates at each.

- Model Training: Fit a GP surrogate model using a Matérn kernel (e.g., Matérn 5/2) to the accumulated data. Explicitly set the noise level (`alpha`) based on Protocol 1.

- Next-Point Selection: Maximize an acquisition function robust to noise:

- Expected Improvement (EI) with Plug-in: Use the best GP-predicted mean among the conditions evaluated so far as the incumbent, rather than the best single noisy observation.

- Noise-Aware EI or Upper Confidence Bound (UCB): Functions that explicitly incorporate the noise model.

- Knowledge Gradient: Accounts for noise in future evaluations.

- Batch Selection for Replication: To mitigate noise, the acquisition function can be used to select not one, but a batch of points for parallel experimentation. A strategy is to select the top candidate, then use a penalization (e.g., via local penalization) to choose the next most promising but spatially distant point.

- Experimental Evaluation & Update: Conduct the recommended experiment(s), add the new data (including replicates if performed) to the dataset, and re-train the model. Iterate until the budget (e.g., 40-50 total experiments) is exhausted or convergence is achieved.
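The loop described in this protocol can be sketched in a few dozen lines of NumPy. This is a minimal illustration under several assumptions, not a production implementation: the kernel hyperparameters are fixed rather than fitted, `run_reaction` is a hypothetical simulated experiment on two inputs scaled to [0, 1], uniform random points stand in for a Sobol design, and the acquisition function is maximized over random candidates.

```python
import math
import numpy as np

NOISE_VAR = 3.3      # assumed observation-noise variance (%^2) from replicate runs
SIGNAL_VAR = 400.0   # assumed kernel amplitude; normally fitted, fixed here for brevity
LENGTH_SCALE = 0.3   # assumed length scale on inputs scaled to [0, 1]

def matern52(A, B):
    """Matern 5/2 kernel between row-wise point sets A (n, d) and B (m, d)."""
    dist = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    s = math.sqrt(5.0) * dist / LENGTH_SCALE
    return SIGNAL_VAR * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(X, y, Xq):
    """Posterior mean and std of the noise-free yield at query points Xq."""
    K = matern52(X, X) + NOISE_VAR * np.eye(len(X))  # noise enters on the diagonal
    L = np.linalg.cholesky(K)
    weights = np.linalg.solve(L.T, np.linalg.solve(L, y - y.mean()))
    Ks = matern52(X, Xq)
    mean = y.mean() + Ks.T @ weights
    v = np.linalg.solve(L, Ks)
    var = SIGNAL_VAR - (v**2).sum(axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, incumbent):
    """Analytic EI for maximization."""
    z = (mean - incumbent) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - incumbent) * cdf + std * pdf

def run_reaction(x, rng):
    """Hypothetical noisy experiment: a smooth yield peak plus Gaussian noise."""
    temp, cat = x
    clean = 80.0 * math.exp(-((temp - 0.7) ** 2 + (cat - 0.4) ** 2) / 0.1)
    return clean + rng.normal(0.0, math.sqrt(NOISE_VAR))

rng = np.random.default_rng(0)
X = rng.random((8, 2))                       # stand-in for a Sobol initial design
y = np.array([run_reaction(x, rng) for x in X])

for _ in range(10):
    candidates = rng.random((500, 2))
    # Plug-in incumbent: best posterior mean at evaluated points, not best raw y.
    incumbent = gp_posterior(X, y, X)[0].max()
    mean, std = gp_posterior(X, y, candidates)
    x_next = candidates[np.argmax(expected_improvement(mean, std, incumbent))]
    X = np.vstack([X, x_next])
    y = np.append(y, run_reaction(x_next, rng))

best = np.argmax(gp_posterior(X, y, X)[0])
print("best estimated conditions (scaled):", X[best])
```

The final recommendation is taken from the posterior mean rather than the highest single observation, so one lucky noisy measurement does not decide the outcome. In practice a library such as BoTorch or GPyTorch would handle hyperparameter fitting and acquisition optimization.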

Key Data Table: BO Iteration Log

| Iteration | Selected Conditions (Temp, Cat.) | Predicted Mean (GP) | Predicted Std. (GP) | Observed Yield (Single/Batch) | Updated Best Estimate |

|---|---|---|---|---|---|

| 0 (Init) | ... | ... | ... | ... | ... |

| 5 | 85°C, 1.8 mol% | 68.5% | ±4.2% | 65.3% | 65.3% |

| 6 | 88°C, 2.1 mol% | 70.1% | ±5.1% | 69.7% | 69.7% |

Protocol 3: Strategic Replication Protocol

Objective: Intelligently allocate experimental budget between exploring new conditions and replicating promising ones to reduce uncertainty.

Methodology:

- Replication Trigger: Define a rule for when to replicate. Example: Replicate any condition where the GP-predicted mean is within 2% of the current best estimate and its prediction uncertainty (GP standard deviation) is greater than the baseline noise (σ from Protocol 1).

- Replication Execution: Perform n=3 replicates at the triggered condition(s). Compute the new, more precise mean.

- Model Update with Replicates: Update the GP model with all replicate data points. This will significantly reduce the model's uncertainty in that region, guiding subsequent exploration more reliably.
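The example trigger rule can be written as a small predicate. The sketch below reads "within 2%" as two percentage points of yield, which is an assumption, and the function names and numbers are illustrative rather than measured data. It also shows why n=3 replicates help: the standard error of the averaged yield shrinks as σ/√n.

```python
import math
import statistics

def should_replicate(pred_mean, pred_std, best_estimate, baseline_sigma, margin=2.0):
    """Example trigger: near-best predicted mean AND GP std above baseline noise.

    `margin` reads "within 2%" as 2 percentage points of yield (an assumption).
    """
    near_best = abs(best_estimate - pred_mean) <= margin
    still_uncertain = pred_std > baseline_sigma
    return near_best and still_uncertain

def summarize_replicates(yields, baseline_sigma):
    """Mean of n replicates and its standard error, sigma / sqrt(n)."""
    n = len(yields)
    return statistics.mean(yields), baseline_sigma / math.sqrt(n)

# Illustrative numbers only (not measured data).
print(should_replicate(pred_mean=70.1, pred_std=5.1, best_estimate=69.7, baseline_sigma=1.8))
print(should_replicate(pred_mean=60.0, pred_std=5.1, best_estimate=69.7, baseline_sigma=1.8))
print(summarize_replicates([69.7, 67.9, 71.2], baseline_sigma=1.8))
```

The first call triggers replication (close to the best estimate and still uncertain), while the second does not because the predicted mean is far below the incumbent.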

Visualizations

Diagram: BO Workflow for Noisy, Expensive Reactions

Diagram: Strategic Replication Decision Logic

The Scientist's Toolkit: Research Reagent & Computational Solutions

| Item / Solution | Function & Rationale |

|---|---|

| Automated Liquid Handling System | Enables precise, reproducible dispensing of costly reagents and catalysts for replicate experiments, minimizing manual error and variation. |

| High-Throughput Reaction Blocks | Allows parallel execution of the batch of experiments suggested by the BO algorithm, drastically reducing total optimization time. |

| Inline/Online Analytics (e.g., ReactIR, HPLC) | Provides real-time or rapid feedback on reaction outcome, reducing delay in the BO loop. Essential for quantifying analytical noise. |

| GPyTorch or GPflow Library | Flexible Gaussian Process modeling frameworks that allow explicit specification of observation noise (likelihood or alpha) and custom kernel design. |

| BoTorch or Ax Framework | Provides state-of-the-art implementations of noise-aware acquisition functions (e.g., Noisy EI, Knowledge Gradient) and tools for batch optimization. |

| Laboratory Information Management System (LIMS) | Critical for systematically tracking all experimental parameters, outcomes, and metadata, ensuring data integrity for the BO model. |

| Stochastic Reaction Modeling Software | Can be used in silico to simulate the source of variance (e.g., via kinetic Monte Carlo) and inform which parameters most influence noise. |

This application note details practical protocols for the implementation of Bayesian Optimization (BO) in chemical reaction screening, explicitly designed to navigate the multi-faceted constraints of safety, cost, and material availability. Within the broader thesis on Bayesian optimization for reaction conditions research, this document demonstrates how a constraint-aware acquisition function transforms the optimization loop. By integrating penalty terms or operating within a predefined feasible region, the algorithm efficiently navigates the high-dimensional search space of reaction parameters (e.g., temperature, catalyst loading, solvent composition) while systematically avoiding regions that violate critical limitations. This approach moves beyond simple maximization of yield or selectivity to deliver practically viable, economically sound, and safe reaction conditions with minimal experimental iterations.

Core Data & Constraint Definitions

Table 1: Quantitative Constraints for a Model Suzuki-Miyaura Cross-Coupling Optimization

| Constraint Category | Specific Parameter | Limit | Rationale & Impact on BO |

|---|---|---|---|

| Safety | Reaction Temperature | ≤ 100 °C | Prevents solvent boiling (e.g., dioxane @ 101°C) and pressure buildup in sealed plates. BO search space upper bound set to 100 °C. |

| Cost | Palladium Catalyst Loading | ≤ 1.0 mol% | Catalyst cost dominates. BO search space upper bound set to 1.0 mol%. |

| Material Limitation | Boronic Acid Reagent | Stock ≤ 50 mg | Finite material for screening. BO acquisition weighted by material consumption per experiment. |

| Process | Reaction Time | 4 – 24 hours | Aligns with operational workflow. BO searches within this bounded continuous range. |

| Solvent Environmental | Green Solvent Score* | ≥ 6.0 | Penalizes undesirable solvents (e.g., DMF, NMP) based on a pre-defined metric (1-10 scale). |

*Green Solvent Score example: Water=10, EtOH=8, 2-MeTHF=7, Toluene=4, DMF=2.
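One way the limits in Table 1 could be encoded is sketched below: hard limits become a feasibility check, and the Green Solvent Score becomes a soft penalty subtracted from the acquisition value. The linear penalty form, the `weight` value, and all function names are assumptions made for illustration; the scores come from the footnote above.

```python
# Scores from the Green Solvent Score footnote (1-10 scale).
GREEN_SCORE = {"Water": 10, "EtOH": 8, "2-MeTHF": 7, "Toluene": 4, "DMF": 2}

def is_feasible(temp_c, cat_mol_pct, time_h):
    """Hard limits from Table 1; infeasible proposals are never run."""
    return temp_c <= 100.0 and cat_mol_pct <= 1.0 and 4.0 <= time_h <= 24.0

def solvent_penalty(solvent, threshold=6.0, weight=0.1):
    """Soft penalty growing linearly below the score threshold (assumed form)."""
    return weight * max(0.0, threshold - GREEN_SCORE[solvent])

def penalized_acquisition(acq_value, temp_c, cat_mol_pct, time_h, solvent):
    """Acquisition value after applying hard limits and the soft solvent penalty."""
    if not is_feasible(temp_c, cat_mol_pct, time_h):
        return float("-inf")
    return acq_value - solvent_penalty(solvent)

# Same raw acquisition value, different solvents and temperatures.
print(penalized_acquisition(1.0, 80.0, 0.5, 12.0, "EtOH"))     # no penalty
print(penalized_acquisition(1.0, 80.0, 0.5, 12.0, "Toluene"))  # reduced by the penalty
print(penalized_acquisition(1.0, 110.0, 0.5, 12.0, "EtOH"))    # excluded by the hard limit
```

The penalty weight sets the trade-off between yield and solvent preference, so it should be chosen on the same scale as the acquisition function in use.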

Detailed Experimental Protocol: Constraint-Aware Reaction Screening

Protocol Title: High-Throughput Screening of Cross-Coupling Reactions Using Bayesian Optimization with Embedded Constraints.

Objective: To maximize the yield of a Suzuki-Miyaura product while adhering to defined safety, cost, and material constraints.

Materials & Reagents: See The Scientist's Toolkit below.

Workflow:

Pre-Experimental Setup:

- Define the search space: Continuous variables (Temperature: 25-100°C; Time: 4-24h; Catalyst Loading: 0.1-1.0 mol%). Categorical variables (Solvent: [Toluene, 2-MeTHF, EtOH/H2O]; Base: [K₂CO₃, Cs₂CO₃]).

- Encode constraints in the BO software: Set hard bounds (Temp ≤100°C, Catalyst ≤1.0 mol%). Implement a soft penalty function for the Green Solvent Score.

- Prepare stock solutions of aryl halide, boronic acid, and base to ensure accurate dispensing at nanomole scale.

Initial Design (Iteration 0):

- Perform a space-filling design (e.g., 8 experiments) within the constrained search space to seed the BO model.

- Using an automated liquid handler, dispense reagents into a 96-well microreactor plate. Seal the plate.

- Perform reactions in a parallel thermoshaker with individual well temperature control.

- Quench reactions with a standardized acidic solution.

- Analyze yields via UPLC-MS using a calibrated internal standard.

Bayesian Optimization Loop (Iterations 1-N):

- Input yields and conditions from all prior experiments into the BO algorithm.

- The algorithm (using an acquisition function like Expected Improvement with Constraints, EI-C) proposes the next set of 4-8 reaction conditions predicted to maximize yield within the feasible region.

- Proposals that severely violate soft constraints (e.g., very low solvent score) are deprioritized.

- Execute, quench, and analyze the proposed experiments as in the Initial Design step (Iteration 0).

- Iterate until convergence (plateau in yield) or until the boronic acid stock is depleted (material constraint).
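The two stopping conditions in the last step, a yield plateau or depleted boronic acid stock, can be combined in a single check run after every batch. The sketch below is one possible formulation; `patience`, `tol`, and `mg_per_batch` are hypothetical tuning values that the protocol does not specify.

```python
def should_stop(best_yield_history, stock_mg, mg_per_batch, patience=3, tol=1.0):
    """Stop on a yield plateau or when the next batch cannot be dispensed.

    best_yield_history: best yield (%) known after each completed iteration.
    patience, tol, and mg_per_batch are hypothetical tuning values.
    """
    if stock_mg < mg_per_batch:
        return True, "boronic acid stock depleted"
    if len(best_yield_history) > patience:
        gain = best_yield_history[-1] - best_yield_history[-1 - patience]
        if gain < tol:
            return True, "yield plateau"
    return False, "continue"

# Illustrative histories only (not measured data).
print(should_stop([52.0, 61.0, 70.0], stock_mg=50.0, mg_per_batch=4.0))
print(should_stop([52.0, 61.0, 70.0, 74.0, 74.3, 74.5, 74.6], stock_mg=26.0, mg_per_batch=4.0))
print(should_stop([52.0], stock_mg=3.0, mg_per_batch=4.0))
```

Checking the stock before proposing a batch, rather than after, avoids generating conditions that cannot actually be dispensed.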

Validation:

- Scale up the top 3-5 identified conditions by 100-fold in a single reaction vessel to verify performance outside nanoscale screening.

Visualization of the Constraint-Aware BO Workflow

Diagram 1: Constrained Bayesian Optimization Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Constraint-Aware Reaction Screening

| Item / Reagent | Function & Rationale for Constrained Research |

|---|---|

| Automated Liquid Handler (e.g., Hamilton Star, Labcyte Echo) | Enables precise, nanoscale dispensing of precious reagents, directly addressing material limitation constraints by minimizing consumption per experiment. |

| Parallel Microreactor Plates (Sealed, glass-coated wells) | Allows high-throughput screening under varied conditions. Sealing is critical for safety when exploring volatile solvents or elevated temperatures. |

| UPLC-MS with Automated Injector | Provides rapid, quantitative yield analysis essential for the fast data turnover required by iterative BO loops. |

| Palladium Precatalysts (e.g., SPhos Pd G3) | Air-stable, active catalysts. Using a defined precatalyst allows accurate control of mol% loading, a key cost variable. |

| Green Solvent Kit (2-MeTHF, Cyrene, EtOH, water) | A pre-selected library of solvents with better safety and environmental profiles, simplifying the search space towards more sustainable options. |

| Bayesian Optimization Software (e.g., custom Python with BoTorch, or a commercial platform) | The core computational tool that integrates experimental data, the GP model, and constraint definitions to guide the search. |

| Inert Atmosphere Glovebox | For preparation of oxygen/moisture-sensitive catalyst and reagent stocks, ensuring reproducibility. |

Within the broader thesis on applying Bayesian optimization (BO) to reaction conditions research, this application note addresses the critical need for parallelized, multi-point acquisition strategies. High-throughput platforms in drug discovery, such as automated synthesizers and screening robots, generate vast datasets. Traditional sequential experimentation is a bottleneck. Parallel multi-point acquisition, guided by BO, allows for the simultaneous evaluation of multiple, strategically selected reaction conditions in each experimental batch. This dramatically accelerates the optimization of yield, selectivity, or other complex objectives, transforming the efficiency of research in medicinal and process chemistry.

Core Bayesian Optimization Framework for Parallel Acquisition

Bayesian optimization iteratively models an unknown objective function (e.g., reaction yield) using a probabilistic surrogate model (typically Gaussian Processes) and an acquisition function that balances exploration and exploitation. For parallel high-throughput platforms, the acquisition function must propose a batch of q points (where q > 1) for simultaneous evaluation in each cycle.

Key Parallel Acquisition Strategies:

| Acquisition Function | Mechanism | Advantages | Disadvantages |

|---|---|---|---|

| Constant Liar | Optimizes the acquisition function sequentially for each point in the batch, "lying" to the surrogate model that pending points have a fixed, assumed outcome. | Simple, computationally cheap. | Performance depends heavily on the chosen "lie" value. |

| Local Penalization | Proposes one point via standard acquisition, then penalizes the acquisition function in its neighborhood to encourage diversity in the batch. | Encourages spatial diversity, good for multimodal functions. | Can be sensitive to penalty parameter tuning. |

| Thompson Sampling | Draws q independent sample functions from the posterior of the surrogate model and takes the maximizer of each as one point of the batch. | Naturally stochastic, provides intrinsic diversity. | Can be less sample-efficient in very low-budget scenarios. |

| q-EI / q-UCB | Directly computes the expected improvement (EI) or upper confidence bound (UCB) jointly for a batch of q points. | Scores the batch as a whole, so redundancy between points is accounted for without heuristics. | Computationally intensive; generally estimated by Monte Carlo integration. |
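To make the Monte Carlo aspect of q-EI concrete, the sketch below estimates the batch expected improvement from a joint GP posterior over q candidate points. It assumes the posterior mean vector and covariance matrix are already available from a surrogate model; the function name, sample count, and example numbers are illustrative.

```python
import numpy as np

def qei_monte_carlo(mu, cov, best_f, n_samples=200_000, seed=0):
    """Monte Carlo estimate of q-EI: E[max(max_j f(x_j) - best_f, 0)].

    mu (q,) and cov (q, q) are the joint posterior mean and covariance
    of the objective at the q batch points.
    """
    rng = np.random.default_rng(seed)
    mu = np.asarray(mu, dtype=float)
    cov = np.asarray(cov, dtype=float)
    # Small jitter keeps the Cholesky factorization stable for near-singular cov.
    L = np.linalg.cholesky(cov + 1e-9 * np.eye(len(mu)))
    z = rng.standard_normal((n_samples, len(mu)))
    samples = mu + z @ L.T                      # joint draws of f at the batch
    improvement = np.clip(samples.max(axis=1) - best_f, 0.0, None)
    return improvement.mean()

# Two batches with identical marginals but different correlation.
mu = [0.0, 0.0]
diverse = qei_monte_carlo(mu, [[1.0, 0.0], [0.0, 1.0]], best_f=0.0)
redundant = qei_monte_carlo(mu, [[1.0, 0.99], [0.99, 1.0]], best_f=0.0)
print(f"q-EI, uncorrelated points:      {diverse:.3f}")
print(f"q-EI, highly correlated points: {redundant:.3f}")
```

The uncorrelated batch scores higher than the nearly duplicated one, which is how q-EI discourages redundant experiments without an explicit diversity heuristic. Libraries such as BoTorch provide optimized implementations that also handle gradient-based optimization of the batch.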

Table 1: Quantitative comparison of parallel batch size (q) impact on a simulated Suzuki coupling yield optimization (10 iterations total).

| Batch Size (q) | Total Experiments | Final Best Yield (%) | Time to Yield >85% (Iterations) | Computational Overhead per Iteration |

|---|---|---|---|---|

| 1 (Sequential) | 10 | 88.2 | 8 | Low |

| 4 | 40 | 92.5 | 3 | Medium |

| 8 | 80 | 91.8 | 2 | High |

| 16 | 160 | 93.1 | 1 | Very High |

Application Notes & Protocols

Protocol: Implementing q-EI for Parallel Reaction Screening

This protocol details the setup for a batch Bayesian optimization experiment to maximize the yield of a palladium-catalyzed amination reaction using a liquid handling robot.

I. Pre-Experiment Configuration

- Define Search Space: Create a table of parameters and bounds.

| Parameter | Lower Bound | Upper Bound | Type |
|---|---|---|---|
| Catalyst Loading (mol%) | 0.5 | 5.0 | Continuous |
| Equiv. of Base | 1.0 | 3.0 | Continuous |
| Temperature (°C) | 60 | 120 | Continuous |
| Solvent Mix (DMF:DMSO) | 0 (100% DMF) | 1 (100% DMSO) | Continuous |
| Reaction Time (hr) | 12 | 48 | Continuous |

- Initialize with Space-Filling Design: Use a Latin Hypercube Design to conduct an initial batch of 8 experiments covering the parameter space broadly. Analyze yields via UPLC to establish the initial dataset D.
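A Latin Hypercube Design for the five parameters above can be generated with plain NumPy, as in the sketch below. The dictionary keys and function names are illustrative; the bounds are those listed in the search-space definition. SciPy's `scipy.stats.qmc` module offers a ready-made sampler if it is available.

```python
import numpy as np

# Bounds from the search-space definition; key names are illustrative.
BOUNDS = {
    "catalyst_loading_mol_pct": (0.5, 5.0),
    "base_equiv": (1.0, 3.0),
    "temperature_c": (60.0, 120.0),
    "solvent_frac_dmso": (0.0, 1.0),
    "reaction_time_h": (12.0, 48.0),
}

def latin_hypercube_unit(n_points, n_dims, seed=0):
    """Latin hypercube sample on the unit cube [0, 1)^n_dims."""
    rng = np.random.default_rng(seed)
    unit = np.empty((n_points, n_dims))
    for j in range(n_dims):
        # One random point inside each of n_points equal-width strata,
        # then shuffle which experiment gets which stratum.
        strata = (np.arange(n_points) + rng.random(n_points)) / n_points
        unit[:, j] = rng.permutation(strata)
    return unit

def scale_to_bounds(unit, bounds):
    """Map unit-cube samples onto the physical parameter ranges."""
    lows = np.array([lo for lo, _ in bounds.values()])
    highs = np.array([hi for _, hi in bounds.values()])
    return lows + unit * (highs - lows)

unit = latin_hypercube_unit(8, len(BOUNDS))
design = scale_to_bounds(unit, BOUNDS)
for row in design:
    print(dict(zip(BOUNDS, np.round(row, 2))))
```

Each parameter range is split into 8 equal strata and every stratum is used exactly once, so the 8 initial experiments cover each dimension evenly even though only a tiny fraction of the full grid is sampled.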

II. BO Loop for Parallel Execution