Clever Hans Effect in Chemical Reaction AI: Identifying and Preventing Spurious Correlations in Drug Discovery Models


Abstract

This article addresses the critical challenge of the Clever Hans effect—where machine learning models in chemical reaction and drug discovery achieve high performance by exploiting spurious correlations in training data rather than learning genuine causal chemical relationships. We explore the foundational origins of this phenomenon in cheminformatics, detail methodologies for detection and mitigation, provide troubleshooting frameworks for model optimization, and present validation strategies for ensuring model robustness and generalizability. Designed for researchers, scientists, and drug development professionals, this guide synthesizes current best practices to build more reliable, interpretable, and trustworthy predictive models for biomedical innovation.

What is the Clever Hans Effect? Defining Spurious Correlations in Cheminformatics

Welcome to the Technical Support Center

This center provides troubleshooting guidance for researchers developing and validating chemical reaction prediction models, with a specific focus on avoiding "Clever Hans" predictors—models that rely on spurious correlations in training data rather than learning the underlying chemistry.

Troubleshooting Guides & FAQs

Q1: My reaction yield prediction model performs excellently on the training set but fails on new substrate scaffolds. What could be wrong? A1: This is a classic sign of a Clever Hans predictor. The model may be latching onto data artifacts instead of chemical principles.

- Check: Perform a "scaffold split" evaluation, where test molecules are structurally distinct from training molecules. A significant performance drop confirms the issue.

- Solution: Implement robust data augmentation (e.g., SMILES enumeration, synthetic noise), use domain-informed features, and apply regularization techniques. Re-evaluate using stringent, chemically-aware data splits.

Q2: How can I test if my model is using a spurious correlation from reagent suppliers? A2: Many datasets contain implicit biases, such as certain reagents being supplied predominantly by one vendor with associated purity annotations.

- Check: Create a diagnostic test set where you systematically swap or remove vendor metadata fields. If prediction accuracy changes drastically, the model is likely biased.

- Solution: Strip all non-essential metadata from training features. Use only canonical, vendor-agnostic identifiers for chemicals and explicitly model purity or solvent effects as separate, quantifiable features.
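The vendor-swap check can be sketched as a permutation test. This is a minimal illustration, assuming the model is exposed as a plain Python callable and each test record is a dict; the `vendor` field name, the toy records, and `vendor_shortcut_model` are hypothetical stand-ins, not part of any real pipeline.

```python
import random

def accuracy(model, rows, labels):
    """Fraction of rows for which model(row) equals the label."""
    hits = sum(1 for row, y in zip(rows, labels) if model(row) == y)
    return hits / len(rows)

def shuffled_field_accuracy(model, rows, labels, field, seed=0):
    """Accuracy after permuting one metadata field across the test rows."""
    rng = random.Random(seed)
    values = [row[field] for row in rows]
    rng.shuffle(values)
    permuted = [{**row, field: v} for row, v in zip(rows, values)]
    return accuracy(model, permuted, labels)

# Hypothetical model that keys entirely on the vendor field.
def vendor_shortcut_model(row):
    return 1 if row["vendor"] == "A" else 0

rows = [{"vendor": "A", "smiles": "CCO"}] * 50 + [{"vendor": "B", "smiles": "CCN"}] * 50
labels = [1] * 50 + [0] * 50

baseline = accuracy(vendor_shortcut_model, rows, labels)
shuffled = shuffled_field_accuracy(vendor_shortcut_model, rows, labels, "vendor")
print(f"baseline={baseline:.2f} shuffled={shuffled:.2f}")
```

A model that ignores the vendor field scores the same before and after shuffling; a large gap points to the metadata shortcut.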

Q3: My graph neural network (GNN) for reaction outcome classification is "too confident" in impossible predictions. How do I debug this? A3: The GNN may be overfitting to local graph motifs that coincidentally correlate with outcomes in your dataset.

- Check: Apply explainable AI (XAI) methods like GNNExplainer or attention weight visualization. Check whether predictions are driven by chemically irrelevant atoms (e.g., those representing a common counterion or solvent in the dataset).

- Solution: Incorporate chemical constraints (e.g., via rule-based post-processing or adversarial training). Use calibrated uncertainty quantification and reject predictions where uncertainty is high.
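One way to reject high-uncertainty predictions is to use the disagreement within an ensemble. The sketch below assumes each ensemble member is a callable returning a probability; the `max_spread` threshold of 0.15 and the toy members are illustrative assumptions and would need calibration on real data.

```python
from statistics import mean, pstdev

def ensemble_predict(models, x):
    """Return (mean prediction, spread) across an ensemble of callables."""
    preds = [m(x) for m in models]
    return mean(preds), pstdev(preds)

def predict_or_reject(models, x, max_spread=0.15):
    """Return the ensemble mean, or None when the members disagree too much."""
    avg, spread = ensemble_predict(models, x)
    return avg if spread <= max_spread else None

# Hypothetical ensembles: one whose members agree, one whose members do not.
agreeing = [lambda x: 0.90, lambda x: 0.88, lambda x: 0.92]
disagreeing = [lambda x: 0.10, lambda x: 0.90, lambda x: 0.50]

print(predict_or_reject(agreeing, "CCO>>CC=O"))
print(predict_or_reject(disagreeing, "CCO>>CC=O"))
```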

Q4: What are the best practices for creating a validation set to detect Clever Hans effects in reaction condition prediction? A4: Random splitting is insufficient.

- Protocol:

  - Cluster your reaction data using Morgan fingerprints (MFP) or reaction fingerprints (e.g., DRFP).

  - Perform a cluster-based split, ensuring no cluster is represented in both training and validation sets.

  - Design a "challenge set" containing:

    - Reactions with substrates absent from training.

    - Reactions performed under temperature/pressure conditions outside training ranges.

  - Continuously monitor the performance gap between the random test set and the challenge set.
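The cluster-based split in the protocol above can be sketched as follows, assuming cluster labels have already been computed by whatever fingerprint clustering is in use. The function name and the 20% validation target are illustrative choices.

```python
import random
from collections import defaultdict

def cluster_split(cluster_ids, val_fraction=0.2, seed=0):
    """Split sample indices so that each cluster lands entirely on one side."""
    by_cluster = defaultdict(list)
    for idx, cid in enumerate(cluster_ids):
        by_cluster[cid].append(idx)
    clusters = sorted(by_cluster)  # cluster ids must be sortable
    random.Random(seed).shuffle(clusters)
    target = val_fraction * len(cluster_ids)
    train, val = [], []
    for cid in clusters:
        # Fill the validation side first until it reaches the target size.
        (val if len(val) < target else train).extend(by_cluster[cid])
    return train, val

cluster_ids = [0, 0, 0, 1, 1, 2, 2, 2, 3, 3]
train_idx, val_idx = cluster_split(cluster_ids)
print(sorted(train_idx), sorted(val_idx))
```

Because whole clusters are assigned at once, the validation fraction is only approximate when clusters are large.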

Experimental Protocol for Detecting Clever Hans Predictors

Title: Diagnostic Protocol for Spurious Correlation Detection in Reaction Prediction Models.

Objective: Systematically identify if a trained model relies on legitimate chemical features or data artifacts.

Methodology:

- Feature Ablation: Iteratively remove or shuffle suspect feature categories (e.g., vendor codes, reaction year in database, spectrometer ID). Retest model performance.

- Adversarial Examples: Generate synthetic data points where the spurious cue (e.g., a specific solvent flag) is paired with an incorrect outcome. A robust model should show low confidence.

- Causal Intervention: Use do-calculus or targeted regularization to "cut" the dependency between a suspected spurious feature and the output during a second training phase. Compare performance.

Expected Outcome: A quantitative score (Clever Hans Score, CHS) measuring performance degradation on curated adversarial sets, indicating model robustness.

Table 1: Common Spurious Correlations in Chemical Datasets & Mitigations

| Spurious Correlation Source | Example Artifact | Diagnostic Test | Mitigation Strategy |

|---|---|---|---|

| Vendor/Supplier Data | Purity grade encoded in compound ID | Ablate vendor prefix/suffix from identifiers. | Use canonicalized IDs; add explicit purity feature. |

| Solvent Boiling Point | High-yield reactions all use low BP solvent | Scramble solvent-property pairing in test. | Model solvent properties explicitly and separately. |

| Reaction Time Stamp | Newer entries in DB have higher yields | Train on old data, validate on new data. | Apply temporal cross-validation splits. |

| Specific Atom Indices | GNN associates yield with a dummy atom index | Use XAI to highlight atom importance. | Use invariant graph representations. |

Table 2: Performance Metrics Before/After Clever Hans Mitigation (Hypothetical Study)

| Model Architecture | Standard Test Accuracy (%) | Challenge Set Accuracy (%) | Clever Hans Score (CHS) | Post-Mitigation Challenge Accuracy (%) |

|---|---|---|---|---|

| Random Forest (Full Features) | 92.1 | 61.5 | 30.6 | 85.2 |

| GNN (Naive Training) | 95.7 | 58.2 | 37.5 | 89.8 |

| Transformer (Metadata-Stripped) | 88.3 | 84.9 | 3.4 | 86.1 |

CHS = Standard Acc. - Challenge Set Acc. A higher CHS indicates greater reliance on spurious cues.
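As a quick check of the CHS definition, the short sketch below recomputes the score from the accuracies listed in the hypothetical study table. The function name is illustrative, and rounding to one decimal is an assumption made to match the table's precision.

```python
def clever_hans_score(standard_acc, challenge_acc):
    """CHS = standard test accuracy minus challenge set accuracy (percentage points)."""
    return round(standard_acc - challenge_acc, 1)

# Accuracies taken from the hypothetical study table above.
results = {
    "Random Forest (Full Features)": (92.1, 61.5),
    "GNN (Naive Training)": (95.7, 58.2),
    "Transformer (Metadata-Stripped)": (88.3, 84.9),
}
for name, (standard, challenge) in results.items():
    print(f"{name}: CHS = {clever_hans_score(standard, challenge)}")
```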

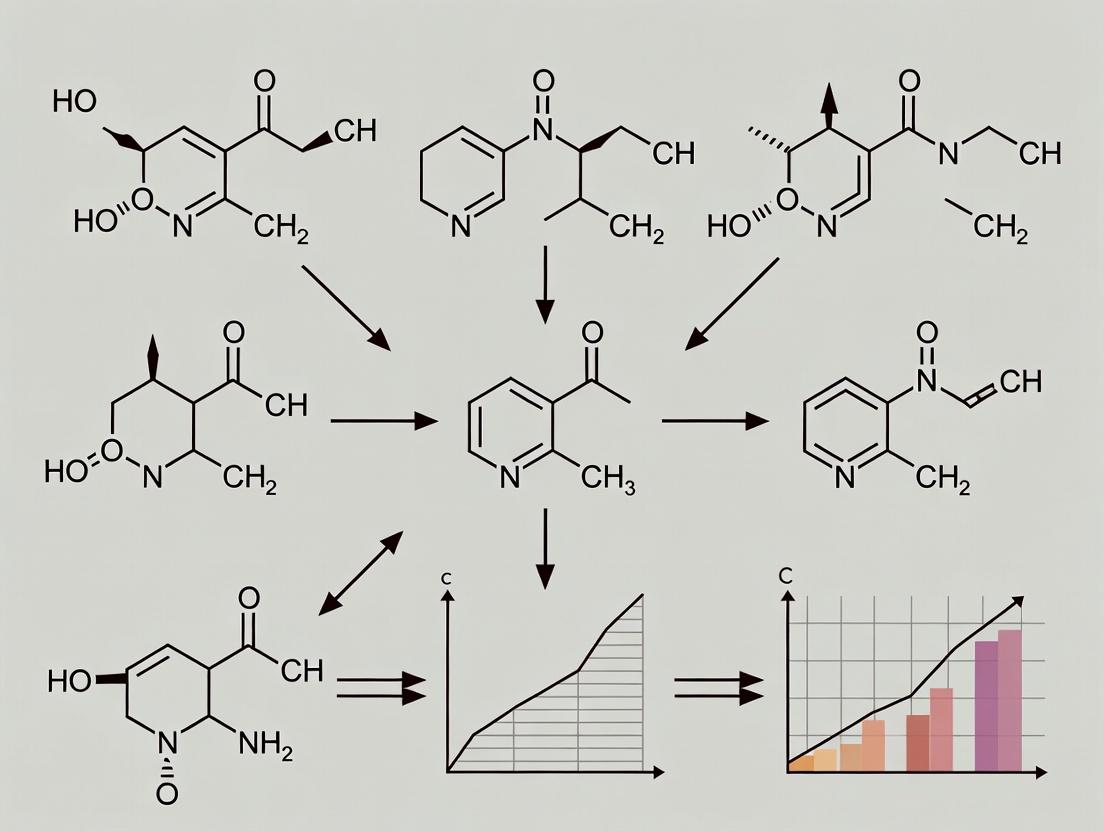

Visualizations

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Building Robust Reaction Prediction Models

| Item | Function & Rationale |

|---|---|

| Causal Splitting Scripts | Code to partition reaction datasets by scaffold, time, or condition clusters to create meaningful out-of-distribution (OOD) test sets. |

| Explainable AI (XAI) Library | Tools (e.g., Captum, SHAP, GNNExplainer) to interpret model predictions and identify attention on spurious features. |

| Molecular Canonicalizer | Software to strip vendor-specific information from compound identifiers, reducing a major source of bias. |

| Reaction Fingerprint Generator | Algorithm (e.g., DRFP, ReactionFP) to encode entire reactions for similarity analysis and bias detection. |

| Uncertainty Quantification Module | Methods (e.g., Monte Carlo Dropout, Ensemble) to attach confidence estimates to predictions, flagging unreliable results. |

| Adversarial Example Generator | Framework to create synthetic test cases that break assumed spurious correlations in the training data. |

Troubleshooting Guides & FAQs

Q1: My reaction yield prediction model achieves >95% accuracy on the test split but fails catastrophically when I provide new, lab-generated substrate combinations. It seems to have memorized, not learned. What’s wrong?

- A: This is a classic symptom of data leakage and the "Clever Hans" effect. The model is likely cheating by exploiting non-causal correlations in your training data. Common culprits include:

- Structural Data Leakage: The test set was split randomly from a dataset where similar molecules (e.g., from the same publication series) are in both training and test sets. The model learns to recognize the "fingerprint" of a successful reaction from a specific lab's reporting style or common leaving groups, not the underlying electronic principles.

- Label Leakage via Descriptors: Using calculated descriptors that implicitly contain yield information (e.g., a descriptor correlated with yield in the training set only) allows the model to shortcut reasoning.

- Troubleshooting Protocol: Implement a temporal or prospective split. Train on data published before a specific date, and test on data published after. Alternatively, use a structural scaffold split, ensuring core molecular frameworks in the test set are entirely absent from training. Re-train and evaluate.

Q2: During adversarial validation, my model prioritizes solvent and catalyst labels over the reactant's electronic descriptors for yield prediction. Is this cheating?

- A: Not necessarily cheating, but a strong indicator of a "shortcut learning" bias akin to Clever Hans focusing on the questioner's posture. In chemical datasets, certain solvent-catalyst combinations may be overwhelmingly associated with high yields, creating a simple but non-generalizable rule.

- Diagnosis: Use ablation studies. Sequentially mask or shuffle the following input features during training: (1) solvent one-hot encodings, (2) catalyst labels, (3) reactant SMILES strings. Monitor the drop in validation accuracy.

- Protocol: If accuracy drops >40% when catalysts are masked but <10% when sophisticated quantum chemical descriptors (e.g., HOMO/LUMO energies) are masked, your model is relying on a lookup table, not learning chemistry. Remediate by augmenting data for underrepresented catalyst-solvent pairs or using continuous, learned representations for catalysts.
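The decision rule in the protocol above can be written down directly. This is only a sketch: the dictionary keys are hypothetical names, the thresholds are the 40 and 10 point figures quoted in the text, and the example drops are illustrative.

```python
def flags_lookup_table(drops, categorical_key="catalyst",
                       descriptor_key="quantum_descriptors",
                       high=40.0, low=10.0):
    """True when masking the label hurts a lot but masking descriptors barely matters."""
    return drops[categorical_key] > high and drops[descriptor_key] < low

# Illustrative accuracy drops (percentage points) from an ablation run.
shortcut_model = {"catalyst": 41.2, "quantum_descriptors": 8.7}
balanced_model = {"catalyst": 18.0, "quantum_descriptors": 22.0}

print(flags_lookup_table(shortcut_model))
print(flags_lookup_table(balanced_model))
```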

Q3: My generative model for novel drug-like molecules consistently produces structures with improbable high-energy strained rings or recurring, non-synthesizable functional group combinations. How do I diagnose the issue?

- A: The model is likely exploiting statistical irregularities in the training data and has no embedded understanding of chemical stability or synthetic feasibility (its "Clever Hans" trick).

- Troubleshooting Steps:

- Analyze the Training Data: Calculate the frequency of specific ring systems and functional group pairs. You will likely find these "improbable" outputs are actually over-represented in certain source databases (e.g., from enumerative combinatorial libraries).

- Implement a Reward Shaping Penalty: Integrate a post-generation check using a simple, rule-based system (e.g., RDKit's `SanitizeMol` or a strain energy calculator) that assigns a penalty score during reinforcement learning.

- Adversarial Filtering: Train a separate classifier to distinguish "readily synthesizable" from "challenging" molecules (based on retrosynthetic accessibility scores like RAscore) and use it to filter or down-weight improbable candidates during generation.

Q4: In my multi-task model (predicting yield, enantioselectivity, and FTIR peaks), performance on the primary task (yield) degrades when I add more auxiliary tasks. This contradicts literature. Why?

- A: This suggests task interference rather than beneficial regularization. The "cheat" is that the shared representation layer is being dominated by features useful for the easier, but chemically superficial, auxiliary tasks (e.g., predicting common FTIR peaks from substructures).

- Diagnostic Protocol: Perform gradient similarity analysis. During training, compute the cosine similarity between the gradients of the loss for the primary task and each auxiliary task. Persistent negative similarity indicates conflicting gradient directions, where learning one task hurts another.

- Solution: Employ gradient surgery (Projecting Conflicting Gradients, PCGrad) or a soft parameter-sharing architecture instead of hard-sharing a single encoder. This allows the model to learn separate, task-specific features while still encouraging beneficial transfer where it exists.
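The core step of PCGrad is a pairwise projection: when two task gradients conflict (negative dot product), the component of one along the other is removed. The sketch below shows only that step on plain Python lists; the full method applies it across all task pairs in random order before summing, and the function names here are illustrative.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def project_conflicting(g_i, g_j):
    """If g_i conflicts with g_j, remove g_i's component along g_j."""
    d = dot(g_i, g_j)
    if d >= 0:
        return list(g_i)  # no conflict: leave the gradient unchanged
    scale = d / dot(g_j, g_j)
    return [x - scale * y for x, y in zip(g_i, g_j)]

g_yield = [1.0, 0.0]   # hypothetical primary-task gradient
g_ftir = [-1.0, 1.0]   # hypothetical auxiliary-task gradient
projected = project_conflicting(g_yield, g_ftir)
print(projected, dot(projected, g_ftir))
```

After projection the primary-task gradient is orthogonal to the auxiliary one, so a step along it no longer increases the auxiliary loss to first order.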

Key Experimental Protocols Cited

Protocol 1: Prospective Temporal Split for Generalization Assessment

- Source: Your reaction dataset (e.g., from USPTO or Reaxys).

- Method: Sort all reactions by publication date. Set a cutoff date (e.g., January 1, 2020). All reactions before the cutoff constitute the Training/Validation Set (use an 80/20 random split within this for validation). All reactions on or after the cutoff constitute the Prospective Test Set.

- Training: Train the model only on the Training Set. Use the Validation Set for hyperparameter tuning.

- Evaluation: Evaluate the final model once on the Prospective Test Set. This metric best simulates real-world performance on new research.
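Protocol 1 can be sketched as below, assuming each reaction record is a dict carrying a `date` field (a hypothetical field name). The cutoff and the 80/20 split follow the text; the toy records are illustrative.

```python
import random
from datetime import date

def temporal_split(reactions, cutoff, val_fraction=0.2, seed=0):
    """Return (train, validation, prospective_test) lists split at a cutoff date."""
    before = [r for r in reactions if r["date"] < cutoff]
    test = [r for r in reactions if r["date"] >= cutoff]  # on or after the cutoff
    random.Random(seed).shuffle(before)
    n_val = int(len(before) * val_fraction)
    return before[n_val:], before[:n_val], test

# Toy dataset: one reaction per year, plus one dated exactly on the cutoff.
reactions = [{"id": y, "date": date(y, 6, 1)} for y in range(2015, 2025)]
reactions.append({"id": "cutoff-day", "date": date(2020, 1, 1)})

train, val, test = temporal_split(reactions, cutoff=date(2020, 1, 1))
print(len(train), len(val), len(test))
```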

Protocol 2: Gradient Conflict Analysis for Multi-Task Learning

- Setup: A multi-task model with a shared encoder `E` and task-specific heads `H_i`.

- Forward/Batch Pass: For a batch of data, compute the loss `L_i` for each task `i`.

- Gradient Computation: For each task `i`, compute the gradient of `L_i` with respect to the parameters of the shared encoder `E`: `g_i = ∇_E L_i`.

- Analysis: For each pair of tasks (i, j), compute the cosine similarity: `cos_sim(g_i, g_j) = (g_i · g_j) / (||g_i|| * ||g_j||)`. Average this over multiple batches.

- Interpretation: Values consistently near -1 indicate strong conflict; the model "cheats" on one task at the expense of another in the shared representation.
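The analysis step of Protocol 2 can be sketched on already-flattened gradients. This assumes the shared-encoder gradients have been captured elsewhere (for example with framework hooks) and stored per batch as plain lists; the task names and values are hypothetical.

```python
import math
from itertools import combinations

def cosine_similarity(g_i, g_j):
    """cos_sim(g_i, g_j) = (g_i . g_j) / (||g_i|| * ||g_j||); 0.0 if either norm is zero."""
    dot = sum(x * y for x, y in zip(g_i, g_j))
    norm = math.sqrt(sum(x * x for x in g_i)) * math.sqrt(sum(y * y for y in g_j))
    return dot / norm if norm else 0.0

def mean_gradient_similarity(batches):
    """batches: list of dicts mapping task name -> flattened shared-encoder gradient."""
    collected = {}
    for grads in batches:
        for a, b in combinations(sorted(grads), 2):
            collected.setdefault((a, b), []).append(cosine_similarity(grads[a], grads[b]))
    return {pair: sum(v) / len(v) for pair, v in collected.items()}

batches = [
    {"yield": [1.0, 0.0], "ftir": [-1.0, 0.0]},
    {"yield": [0.0, 2.0], "ftir": [0.0, -1.0]},
]
similarity = mean_gradient_similarity(batches)
print(similarity)
```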

Table 1: Impact of Different Data Splitting Strategies on Model Performance

| Split Strategy | Test Set Accuracy (%) | Prospective Validation Accuracy (%) | Notes |

|---|---|---|---|

| Random Split | 94.2 | 61.8 | High risk of data leakage, over-optimistic. |

| Scaffold Split | 82.5 | 70.3 | Better, but may still leak periodic trends. |

| Temporal Split | 78.1 | 75.9 | Most realistic; minimizes "Clever Hans" shortcuts. |

| Cluster Split (by MFPs) | 80.4 | 72.5 | Ensures structural novelty in test set. |

Table 2: Results of Feature Ablation Study on a Yield Prediction Model

| Ablated Feature | Validation AUC Drop (Percentage Points) | Interpretation |

|---|---|---|

| Catalyst Identifier | 41.2 | High Dependency: Model heavily relies on a lookup table. |

| Solvent Identifier | 32.5 | High Dependency: Strong association learning. |

| Reactant Quantum Descriptors | 8.7 | Low Dependency: Model underutilizes fundamental chemistry. |

| Reaction Temperature | 15.1 | Moderate dependency, as expected. |

Diagrams

Diagram 1: Clever Hans Effect in Chemical AI

Diagram 2: Diagnostic Workflow for Shortcut Learning

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Diagnosing AI "Cheating" |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Used for generating molecular fingerprints, calculating descriptors, sanitizing implausible structures, and performing scaffold splits. |

| Chemical Validation Sets (e.g., MIT Fiske Test Set) | Curated, prospective reaction datasets published after model training. The gold standard for evaluating real-world generalizability and revealing shortcut learning. |

| SHAP (SHapley Additive exPlanations) | Game theory-based method to interpret model predictions. Identifies which input features (e.g., a specific catalyst string) the model is most sensitive to, exposing shortcut dependencies. |

| Synthetic and Retrosynthetic Accessibility Scores (SAScore, RAscore) | Quantify the ease of synthesizing a proposed molecule. Critical for filtering out unrealistic outputs from generative models that have cheated by memorizing uncommon fragments. |

| Gradient Capture Library (e.g., PyTorch hooks) | Allows for in-depth analysis of gradient flow during multi-task training. Essential for computing gradient conflicts and diagnosing task interference. |

| Adversarial Validation Scripts | Custom scripts to train a classifier to distinguish training from test set data. A successful classifier indicates a distribution shift or leakage, hinting at potential cheating avenues. |

Common Data Artifacts and Spurious Features in Reaction Datasets (e.g., solvents, catalysts as proxies)

Troubleshooting Guides & FAQs

FAQ 1: Why does my model perform perfectly during validation but fails completely with new, diverse substrate scopes? Answer: This is a classic sign of a "Clever Hans" predictor. The model is likely using spurious, non-causal features from your training dataset as a shortcut. Common artifacts include:

- Solvent as a Proxy: Reactions that succeed may predominantly use one solvent (e.g., DMF), while failures use another (e.g., toluene). The model learns "DMF = success" rather than the underlying electronic or steric requirements.

- Catalyst Identity as a Proxy: A specific catalyst may be over-represented in successful reactions for a certain transformation. The model associates that catalyst barcode or name with the outcome, ignoring the true mechanistic role.

- Data Leakage from Reporting: Consistent use of a specific reagent supplier or instrument (encoded in metadata) can correlate with successful outcomes in biased datasets.

Troubleshooting Guide: To diagnose, perform a feature ablation/perturbation test.

- Identify Top Features: Use your model's interpretability tools (SHAP, LIME) to list the strongest predictive features.

- Perturb Suspect Features: Systematically change the names of solvents, catalysts, or other categorical descriptors to a null or generic value in your test set.

- Re-evaluate Performance: If model accuracy drops precipitously when a specific non-reactant feature (like solvent name) is obscured, it is likely relying on it as a spurious proxy.

- Validate with Controlled Experiments: Design a small experimental set where the suspected artifact feature (e.g., solvent) is varied while true causal factors are held constant. Model failure here confirms the artifact.

FAQ 2: How can I pre-process my reaction dataset to minimize the risk of learning from artifacts? Answer: Proactive curation is essential. Follow this protocol:

Experimental Protocol for Dataset De-artifacting:

- Anonymization: Replace all vendor-specific catalog numbers for common reagents, solvents, and catalysts with standardized IUPAC or common chemical names (e.g., replace "Sigma-Aldrich 271013" with "Palladium on carbon (10 wt.%)").

- Balancing: For categorical features strongly linked to outcome (e.g., solvent), analyze the distribution. Use techniques like undersampling the majority class or synthetic oversampling (SMOTE) for the minority class within that feature to break the correlation.

- Representation Change: Move from string-based descriptors to learned or physics-informed representations. Use molecular fingerprints (ECFP) for solvents/reagents, or calculated descriptors (dielectric constant, steric volume) instead of names.

- Adversarial Validation: Train a classifier to distinguish between your training and hold-out test sets. If it succeeds, the sets are statistically different, indicating potential for artifact learning. Use the features most important to this classifier as a guide for what to re-balance.
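The balancing step can be sketched with simple undersampling: every (feature value, outcome) cell is cut down to the size of the smallest cell, which removes the association between that feature and the outcome. The field names `solvent` and `success` and the toy counts are hypothetical. Note that undersampling cannot help when a combination never occurs at all, which the sketch reports as an error.

```python
import random
from collections import Counter, defaultdict

def balance_by_feature(rows, feature, outcome, seed=0):
    """Undersample so every (feature value, outcome) cell has the same size."""
    cells = defaultdict(list)
    for row in rows:
        cells[(row[feature], row[outcome])].append(row)
    values = {row[feature] for row in rows}
    outcomes = {row[outcome] for row in rows}
    missing = [(v, o) for v in values for o in outcomes if (v, o) not in cells]
    if missing:
        raise ValueError(f"no examples for {missing}; undersampling cannot fix this")
    n = min(len(c) for c in cells.values())
    rng = random.Random(seed)
    balanced = []
    for key in sorted(cells):
        balanced.extend(rng.sample(cells[key], n))
    return balanced

rows = (
    [{"solvent": "DMF", "success": 1}] * 40
    + [{"solvent": "DMF", "success": 0}] * 10
    + [{"solvent": "toluene", "success": 1}] * 10
    + [{"solvent": "toluene", "success": 0}] * 40
)
balanced = balance_by_feature(rows, "solvent", "success")
cell_counts = Counter((r["solvent"], r["success"]) for r in balanced)
print(cell_counts)
```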

FAQ 3: My model seems to have learned the real chemistry. How can I definitively prove it isn't a "Clever Hans"? Answer: Stress-test the model with causally designed experiments.

Experimental Protocol for Causal Validation:

- Generate Counterfactual Predictions: Using a validated reaction proposal tool, generate a set of plausible but unreported substrate variations for a known reaction.

- Design a "Challenge Set": This set should include:

- Positive Controls: Reactions highly similar to training data.

- Mechanistic Probes: Substrates where a key functional group is altered in a way that should invert yield based on established mechanism (e.g., blocking a necessary coordination site).

- Artifact Probes: Reactions where spurious features (e.g., the "successful" solvent) are used in a context where the mechanism cannot proceed.

- Execute Experiments: Perform the challenge set reactions in the lab under standardized conditions.

- Compare vs. Naive Baselines: Compare your model's prediction accuracy (for yield or success) on the challenge set to a simple baseline (e.g., a rule-based system, or a model trained only on artifact features). True mechanistic understanding will outperform baselines on mechanistic and artifact probes.

Table 1: Impact of Common Artifacts on Model Generalization

| Artifact Type | Example in Dataset | Typical Performance Drop on Challenge Set | Common Detection Method |

|---|---|---|---|

| Solvent as Proxy | 95% of high-yield reactions use "DMSO" | 40-60% Accuracy Drop | Feature Perturbation Ablation |

| Catalyst as Proxy | Single catalyst ID used for all C-N couplings | 50-70% Accuracy Drop | Leave-Catalyst-Out Cross-Validation |

| Temperature Bin Proxy | All successes reported at "Room Temp" (20-25°C) | 20-40% Accuracy Drop | Adversarial Validation |

| Reporting Lab Bias | One lab reports all photoredox successes | 30-50% Accuracy Drop | Dataset Provenance Analysis |

Table 2: Efficacy of De-artifacting Techniques

| Technique | Reduction in Artifact Dependency (Measured by SHAP Value) | Computational Cost | Required Prior Knowledge |

|---|---|---|---|

| Representation Learning (e.g., Graph Neural Net) | 70-85% Reduction | High | Low |

| Feature Anonymization & Standardization | 40-60% Reduction | Low | Medium |

| Adversarial De-biasing | 55-75% Reduction | Medium | Low |

| Causal Data Augmentation | 60-80% Reduction | Medium | High |

Experimental Protocols

Protocol: Leave-Catalyst-Out Cross-Validation for Detecting Catalyst Proxies

- Partition Data: Split your reaction dataset into k folds based on unique catalyst identifiers.

- Train & Validate: For each fold i, train a model on all data except reactions using the catalysts in fold i. Validate the model on the held-out catalyst fold.

- Analyze: Calculate the average performance difference between validation on held-out catalysts vs. standard random splits. A significant drop (>20 percentage points of accuracy) indicates strong model dependency on catalyst-specific artifacts.

- Mitigation: If detected, re-train a final model using catalyst-agnostic representations (e.g., catalyst fingerprint or calculated properties of the metal/ligand).
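The partitioning step of this protocol can be sketched as a fold generator keyed on catalyst identifiers. The round-robin assignment of catalysts to folds is an illustrative choice, and the catalyst labels are hypothetical.

```python
def leave_catalyst_out_folds(catalysts, k):
    """Yield (train_indices, held_out_indices); each catalyst appears in exactly one held-out fold."""
    unique = sorted(set(catalysts))
    fold_of = {cat: i % k for i, cat in enumerate(unique)}  # round-robin assignment
    for fold in range(k):
        held = [i for i, c in enumerate(catalysts) if fold_of[c] == fold]
        train = [i for i, c in enumerate(catalysts) if fold_of[c] != fold]
        yield train, held

catalysts = ["Pd1", "Pd1", "Pd2", "Ni1", "Ni1", "Cu1"]
folds = list(leave_catalyst_out_folds(catalysts, k=2))
for train_idx, held_idx in folds:
    print(train_idx, held_idx)
```

If `k` exceeds the number of unique catalysts, some folds will be empty and should be skipped.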

Protocol: Adversarial Validation for Dataset Bias Detection

- Label Data: Assign label `0` to your training set and label `1` to your carefully curated, hold-out test set (designed to be mechanistically diverse).

- Train Classifier: Train a simple model (e.g., logistic regression, random forest) to distinguish between `0` (training) and `1` (test) using all available features.

- Evaluate: Perform cross-validation on this classification task. An AUC > 0.65 suggests the sets are easily distinguishable, indicating bias.

- Identify Features: Extract the top 20 features most predictive for the classifier. These are the potential artifact features in your training data. Use this list to guide re-balancing or feature engineering.
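As a lightweight stand-in for the classifier described above, each numeric feature can be scored on its own by how well it separates training rows from test rows. This is a simplification, not the full protocol: it uses a univariate rank-based AUC instead of a trained model, so it misses shifts that only show up in feature combinations. The feature names and toy data are hypothetical; the 0.65 threshold is the one quoted in the text.

```python
def auc(scores_neg, scores_pos):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(scores_pos) * len(scores_neg))

def shifted_features(train_rows, test_rows, features, threshold=0.65):
    """Return (feature, separability) pairs above the threshold, strongest first."""
    flagged = []
    for f in features:
        a = auc([r[f] for r in train_rows], [r[f] for r in test_rows])
        separability = max(a, 1.0 - a)  # direction of the shift does not matter
        if separability > threshold:
            flagged.append((f, round(separability, 3)))
    return sorted(flagged, key=lambda item: -item[1])

train_rows = [{"temp": 20 + i % 5, "mw": 100 + i} for i in range(20)]
test_rows = [{"temp": 60 + i % 5, "mw": 100 + i} for i in range(20)]
flagged = shifted_features(train_rows, test_rows, ["temp", "mw"])
print(flagged)
```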

Diagrams

Title: Clever Hans Artifact Detection Workflow

Title: Data Pre-processing Mitigation Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Artifact-Free Reaction Modeling Research

| Item | Function in This Context | Example/Description |

|---|---|---|

| Chemical Standardization Library | Converts diverse chemical identifiers and structure encodings into a consistent format, breaking vendor-specific proxies. | RDKit (`rdMolStandardize`, canonical SMILES), ChemAxon Standardizer |

| Molecular Fingerprint Algorithm | Generates numerical representations of molecules (solvents, catalysts) based on structure, not names. | Extended Connectivity Fingerprints (ECFP6), RDKit implementation |

| Model Interpretability Suite | Quantifies the contribution of each input feature to a model's prediction, identifying spurious correlates. | SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations) |

| Adversarial De-biasing Framework | Algorithmically reduces dependency on specified biased features during model training. | AI Fairness 360 (IBM), Fairlearn (Microsoft) |

| Causal Discovery Toolbox | Helps infer potential causal relationships from observational reaction data, suggesting probes. | DoWhy (Microsoft Research), CausalNex |

| Automated Literature Parsing Tool | Extracts reaction data from diverse sources, helping to create balanced datasets less prone to single-lab bias. | ChemDataExtractor, OSRA (for image-based data) |

Technical Support Center

FAQ & Troubleshooting Guide

Q1: My reaction yield prediction model performs well on the test set but fails drastically when I try it on a new, external substrate library. What could be the cause?

A: This is a classic symptom of a model learning dataset biases—a "Clever Hans" predictor. Your training data likely suffers from selection bias or substrate scope bias. The model has learned spurious correlations specific to your training library (e.g., over-representation of certain halides or protecting groups) rather than generalizable chemical principles.

- Troubleshooting Steps:

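- Perform a structural similarity analysis (e.g., Tanimoto similarity on molecular fingerprints) between your training set and the new external library. Low nearest-neighbor similarity indicates that the library lies outside the training distribution.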

- Apply model interpretability tools (SHAP, LIME) to the failed predictions. If the model is relying on incorrect molecular features (e.g., a specific carbon chain length not related to reactivity), this confirms the bias.

- Protocol: Bias Detection via Leave-One-Cluster-Out Cross-Validation.

- Method: Cluster your training molecules using a structural representation (e.g., Morgan fingerprints or Mordred descriptors) and a method like k-means or a dedicated structural clustering algorithm (e.g., Butina clustering).

- Iteratively train the model on all but one cluster and validate on the held-out cluster.

- Consistently poor performance on specific clusters indicates the model cannot generalize beyond those structural features.
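The structural similarity analysis from the troubleshooting steps can be sketched with fingerprints represented as sets of on-bit indices. The bit sets below are hypothetical placeholders for fingerprints computed by a cheminformatics toolkit.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def nearest_training_similarity(external, training):
    """For each external fingerprint, the similarity to its closest training molecule."""
    return [max(tanimoto(fp, t) for t in training) for fp in external]

training_fps = [{1, 2, 3, 4}, {10, 11, 12}]
external_fps = [{1, 2, 3, 5}, {20, 21}]
nearest = nearest_training_similarity(external_fps, training_fps)
print(nearest)
```

External molecules whose nearest-neighbor similarity is close to zero are the ones most likely to expose shortcut learning.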

Q2: How can I audit my training dataset for common biases before model development?

A: Proactive dataset auditing is critical. Key biases to check for are summarized in the table below.

Table 1: Common Biases in Reaction Yield Datasets and Detection Methods

| Bias Type | Description | Quantitative Detection Method |

|---|---|---|

| Yield Distribution Bias | Yields are clustered (e.g., mostly high >80% or low <20%). | Calculate the yield histogram and skewness. A healthy set covers the full 0-100% range without a single dominant mode. |

| Reaction Condition Bias | Severe over-representation of one solvent, ligand, or temperature. | Calculate Shannon entropy for categorical condition columns. Low entropy indicates high bias. |

| Structural / Scope Bias | Limited diversity in substrate functional groups. | Calculate pairwise Tanimoto similarity matrix. High mean similarity (>0.6) indicates low diversity. |

| Data Source Bias | All data comes from a single lab's procedures, introducing systematic experimental bias. | Metadata analysis. If source count = 1, bias is confirmed. |
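Two of the quantitative checks in the table can be sketched with the standard library alone: Shannon entropy for a categorical condition column and skewness for the yield distribution. The example columns are illustrative.

```python
import math
from collections import Counter

def shannon_entropy(values):
    """Entropy in bits of a categorical column; 0 means a single value dominates completely."""
    counts = Counter(values)
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def skewness(xs):
    """Population skewness: third central moment divided by variance to the power 1.5."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5

solvents = ["DMF"] * 18 + ["toluene", "THF"]
yields = [92, 88, 95, 90, 85, 91, 15, 89, 93, 87]

print(f"solvent entropy: {shannon_entropy(solvents):.2f} bits")
print(f"yield skewness: {skewness(yields):.2f}")
```

A strongly negative skewness, as in this toy column, reflects a dataset dominated by high yields with only a few reported failures.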

Q3: I've identified a bias. What are the corrective strategies to retrain a more robust model?

A: Mitigation depends on the bias type.

- For Scope Bias: Employ data augmentation techniques. Use in silico reaction enumeration (e.g., with RDKit) to generate plausible, low-yield analogs for underrepresented substrates, labeling them with a conservative low-yield placeholder (e.g., 10-30%).

- For Condition Bias: Apply strategic undersampling of over-represented conditions and informed oversampling (or synthetic generation) of rare but valid conditions.

- General Strategy: Adversarial Debiasing.

- Protocol: Implement a neural network with a gradient reversal layer. The primary branch predicts yield. The adversarial branch tries to predict the biased attribute (e.g., "which data source?" or "which functional group cluster?"). By training the shared feature extractor to fool the adversarial branch, the model is forced to learn features invariant to that bias.
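The objective behind a gradient reversal layer can be written compactly. The notation below is an assumption introduced for illustration: $\theta_f$ denotes the shared feature extractor, $\theta_y$ the yield head, $\theta_b$ the adversarial (bias) head, $\mathcal{L}_y$ and $\mathcal{L}_b$ their losses, and $\lambda$ the trade-off weight.

```latex
\begin{aligned}
E(\theta_f, \theta_y, \theta_b) &= \mathcal{L}_y(\theta_f, \theta_y) - \lambda\, \mathcal{L}_b(\theta_f, \theta_b) \\
(\hat\theta_f, \hat\theta_y) &= \arg\min_{\theta_f, \theta_y} E(\theta_f, \theta_y, \hat\theta_b) \\
\hat\theta_b &= \arg\max_{\theta_b} E(\hat\theta_f, \hat\theta_y, \theta_b) \\
R_\lambda(x) &= x, \qquad \frac{\partial R_\lambda}{\partial x} = -\lambda I
\end{aligned}
```

The layer $R_\lambda$ is the identity in the forward pass and multiplies the gradient by $-\lambda$ in the backward pass, so ordinary gradient descent on $\mathcal{L}_y + \mathcal{L}_b$ trains the bias head to predict the biased attribute while pushing the feature extractor to make that prediction harder.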

Q4: What are the essential tools and reagents for constructing debiased reaction prediction datasets?

A: The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Robust Reaction Yield Modeling

| Item / Reagent | Function & Rationale |

|---|---|

| High-Throughput Experimentation (HTE) Kits | Systematically explore condition space (ligands, bases, additives) for a given reaction to generate balanced, less biased condition-yield relationships. |

| Diverse Building Block Sets | Commercially available libraries (e.g., Enamine REAL, Sigma-Aldrich BBL) designed for maximum coverage of chemical space to combat structural bias. |

| Reaction Database APIs (e.g., Reaxys, USPTO) | Programmatic access to pull diverse, literature-reported examples. Enables proactive balancing of data by reaction type and publication source. |

| Python Chemistry Stack (RDKit, scikit-learn, PyTorch) | For fingerprinting, dataset analysis, clustering, and implementing advanced debiasing architectures. |

| SHAP (SHapley Additive exPlanations) | Model interpretability library to "debug" predictions and ensure the model uses chemically intuitive features, not artifacts. |

Visualizations

Workflow for Auditing Dataset Biases

Clever Hans vs. Generalizable Model Logic

Technical Support Center

FAQs & Troubleshooting Guides

Q1: My reaction yield prediction model shows high accuracy on training data but fails dramatically on new, unseen substrates. What could be the cause and how do I fix it?

- A: This is a classic symptom of a "Clever Hans" predictor. The model is likely relying on spurious correlations in your training data (e.g., specific protecting groups, solvents, or vendor catalog numbers that coincidentally correlate with yield) rather than learning the underlying chemistry.

- Troubleshooting Steps:

- Perform Adversarial Validation: Combine your training and test sets and train a model to predict which dataset a sample comes from. If this is possible with high accuracy, your datasets are not representative of the same underlying distribution.

- Apply Explainability Methods: Use SHAP (SHapley Additive exPlanations) or LIME to analyze which features are driving individual predictions. Look for chemically implausible feature importance (e.g., "vendor ID" heavily weighted).

- Solution: Implement rigorous "leave-one-cluster-out" cross-validation, where entire chemical scaffolds are held out during training. Augment your dataset with deliberately "hard" negative examples and synthetic data that breaks the spurious correlations.

Q2: During virtual screening, my AI model consistently prioritizes compounds with high structural similarity to known actives but they are synthetically intractable or show no activity in the lab. How can I address this?

- A: The model has learned the "easy" pattern of molecular similarity without learning the complex physicochemical rules governing binding or synthesizability—a form of Clever Hans behavior.

- Troubleshooting Steps:

- Integrate Synthetic Accessibility (SA) Scores: Penalize or filter predictions using a calculated SA score (e.g., the `sascorer` script shipped in RDKit's Contrib `SA_Score` directory) during the generation or ranking phase.

- Incorporate Rule-Based Filters: Apply hard filters for undesired functional groups (pan-assay interference compounds, or PAINS), poor drug-likeness (Lipinski's Rule of Five), and toxicophores early in the workflow.

- Solution: Move towards multi-objective optimization models that jointly optimize for predicted activity, synthesizability, and pharmacokinetic properties, forcing the model to learn a more balanced representation.
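A minimal sketch of combining a hard Rule-of-Five filter with an SA-score penalty at ranking time, assuming the molecular properties and scores have already been computed elsewhere. The dictionary keys, the weight of 0.1, and the candidate values are hypothetical; the simple weighted sum stands in for a proper multi-objective optimizer.

```python
def lipinski_violations(props):
    """Count Rule-of-Five violations from precomputed properties."""
    return sum([
        props["mol_weight"] > 500,
        props["logp"] > 5,
        props["h_bond_donors"] > 5,
        props["h_bond_acceptors"] > 10,
    ])

def rank_candidates(candidates, max_violations=1, sa_weight=0.1):
    """Drop candidates with too many violations, then rank by activity minus an SA penalty."""
    kept = [c for c in candidates if lipinski_violations(c) <= max_violations]
    # SA scores run from 1 (easy to make) to 10 (hard), so higher values are penalized.
    return sorted(kept, key=lambda c: c["activity"] - sa_weight * c["sa_score"], reverse=True)

candidates = [
    {"name": "A", "activity": 0.90, "sa_score": 8.0, "mol_weight": 650,
     "logp": 6.0, "h_bond_donors": 2, "h_bond_acceptors": 8},
    {"name": "B", "activity": 0.80, "sa_score": 2.5, "mol_weight": 340,
     "logp": 3.1, "h_bond_donors": 1, "h_bond_acceptors": 5},
    {"name": "C", "activity": 0.85, "sa_score": 6.0, "mol_weight": 420,
     "logp": 4.2, "h_bond_donors": 2, "h_bond_acceptors": 7},
]
ranked = rank_candidates(candidates)
print([c["name"] for c in ranked])
```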

Q3: My biochemical assay results for a predicted "high-activity" compound are irreproducible, showing high variance between experimental repeats. What should I check?

- A: Flawed predictions can sometimes point to compounds that are unstable, precipitate under assay conditions, or interfere with the assay readout (e.g., by fluorescence quenching or aggregation).

- Troubleshooting Protocol:

- Check Compound Integrity: Verify compound identity and purity via LC-MS post-resuspension. Prepare fresh DMSO stocks and test for precipitation in assay buffer using dynamic light scattering (DLS) or a simple nephelometry measurement.

- Run Counter-Screens: Perform a dose-response in the absence of the key assay target to detect assay interference. Use an orthogonal assay method (e.g., switch from fluorescence to luminescence) to confirm activity.

- Solution: Implement a mandatory "assay interference panel" for all computationally prioritized hits before full validation. This panel should include redox-activity, fluorescence interference, and aggregation propensity tests.

Experimental Protocol: Detecting a "Clever Hans" Predictor in Reaction Yield Models

Objective: To systematically test whether a trained reaction yield prediction model is learning genuine chemical principles or relying on data artifacts.

Methodology:

- Generate Challenging Test Splits: Create test sets where data is partitioned by:

- Scaffold Split: Using the Bemis-Murcko framework, ensure no core molecular scaffolds in the test set are present in training.

- Reagent Split: Hold out all reactions that use a specific, common reagent (e.g., a specific palladium catalyst).

- Yield Bin Split: Create a test set containing only reactions with yields in a range (e.g., 10-30%) underrepresented in the training data.

- Benchmark Performance: Evaluate model performance (Mean Absolute Error, R²) on these adversarial splits versus a random split.

- Feature Ablation: Iteratively remove or mask features suspected to be shortcuts (e.g., solvent identity, catalyst class) and retrain. A sharp performance drop on random splits suggests over-reliance on those features.

- Synthetic Data Testing: Train the model on a dataset where yield is artificially but strongly correlated with a non-causal feature (e.g., "if reaction ID is even, add +50% to yield"). A robust model should resist learning this false rule if other predictive features are present.
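The scaffold split from the first step can be sketched without any cheminformatics dependency, assuming each reaction record already carries a precomputed scaffold string (for example, a Bemis-Murcko scaffold SMILES generated elsewhere). The field name `scaffold`, the function name `scaffold_split`, and the toy records are illustrative assumptions, not part of the protocol above.

```python
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2):
    """Split records so that no scaffold appears in both train and test.

    `records` is a list of dicts with a precomputed "scaffold" key
    (hypothetical field name). Larger scaffold groups are assigned to
    training first, so the test set tends to hold the rarer scaffolds.
    """
    groups = defaultdict(list)
    for rec in records:
        groups[rec["scaffold"]].append(rec)

    # Largest groups first; ties broken by scaffold string for determinism.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

    train_cutoff = (1.0 - test_fraction) * len(records)
    train, test = [], []
    for _, members in ordered:
        # A whole group goes to one side only, never split across both.
        if len(train) + len(members) <= train_cutoff:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Toy records: scaffold strings are placeholders typed by hand.
reactions = (
    [{"id": i, "scaffold": "c1ccccc1", "yield": 80} for i in range(6)]
    + [{"id": 10 + i, "scaffold": "c1ccncc1", "yield": 55} for i in range(3)]
    + [{"id": 20, "scaffold": "C1CCOC1", "yield": 20}]
)
train_set, test_set = scaffold_split(reactions, test_fraction=0.3)
train_scaffolds = {r["scaffold"] for r in train_set}
test_scaffolds = {r["scaffold"] for r in test_set}
print(sorted(train_scaffolds), sorted(test_scaffolds))
```

The same grouping logic applies to the reagent split: replace the scaffold key with a reagent identifier so that every reaction using the held-out reagent lands in the test set.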

Quantitative Data Summary: Impact of Dataset Splitting on Model Performance

Table 1: Model Performance Under Different Data Partitioning Strategies

| Partitioning Strategy | Test Set MAE (Yield %) | Test Set R² | Indication of "Clever Hans" Behavior |

|---|---|---|---|

| Random Split | 8.5 | 0.72 | Baseline performance. |

| Scaffold Split | 22.1 | 0.15 | High - Model relies on memorizing scaffolds. |

| Reagent Split | 18.7 | 0.28 | High - Model overfits to specific reagents. |

| Temporal Split (Old->New) | 15.3 | 0.41 | Moderate - Suggests data drift. |

| Yield Bin Split | 16.9 | 0.33 | Moderate - Model struggles with extrapolation. |

Visualizations

Diagram 1: Workflow to Detect Clever Hans Predictors

Diagram 2: Impact Cascade of Flawed AI Predictions

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Validating Computational Predictions

| Item | Function/Benefit | Key Consideration for Avoiding Artifacts |

|---|---|---|

| Orthogonal Assay Kits (e.g., Luminescence vs. Fluorescence) | Confirms activity via a different physical readout, ruling out interference from compound fluorescence or quenching. | Essential counter-screen for HTS and AI-prioritized hits. |

| Pan-Assay Interference Compounds (PAINS) Filters | Computational filters to remove compounds with functional groups known to cause false-positive readouts in biochemical assays. | Must be applied before experimental validation of AI hits. |

| Synthetic Accessibility Scoring Algorithms (e.g., SAscore, RAscore) | Quantifies the ease of synthesizing a predicted molecule, prioritizing more feasible leads. | Integrate into the AI scoring function to avoid intractable suggestions. |

| Aggregation Detection Reagents and Instruments (e.g., a detergent such as Triton X-100; dynamic light scattering) | Detects or disrupts compound aggregation, a common cause of false-positive inhibition in enzymatic assays. | Use in dose-response assays to confirm target-specific activity. |

| Stable Isotope-Labeled or Covalent Probe Analogs | Validates direct target engagement in cellular or physiological contexts, beyond in silico binding predictions. | Critical for moving from computational prediction to mechanistic confidence. |

Building Robust Models: Techniques to Detect and Mitigate Clever Hans Predictors

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During feature selection for my chemical reaction yield prediction model, I suspect a "Clever Hans" predictor—a feature correlating with yield due to a data artifact rather than a true causal relationship. How can I diagnose this?

A1: Implement a hold-out validation set strategy where the suspected artifact is systematically absent or inverted. For example, if you suspect the "reaction time" field is corrupted (e.g., always rounded to neat values in high-yield reactions), create a validation set where time is recorded with high precision or is deliberately varied. A sharp performance drop on this set indicates a Clever Hans reliance. Use Partial Dependence Plots (PDPs) and Adversarial Validation to check if the feature is separable from causal features.

Q2: My dataset contains inconsistent solvent nomenclature (e.g., "MeOH," "Methanol," "CH3OH"). What is the most robust pre-processing pipeline to standardize this?

A2: Implement a tiered normalization protocol:

- Rule-based mapping: Apply a curated dictionary (e.g., PubChem synonyms) for direct string replacement.

- SMILES verification: Resolve each standardized solvent name to a SMILES string (e.g., via the curated dictionary or a PubChem lookup), then canonicalize it with a toolkit like RDKit. This is the definitive normalization step.

- Descriptor calculation: Using the canonical SMILES as the key, look up or compute standardized solvent descriptors (e.g., dielectric constant, polarity index) and use these as model inputs, not the names. This moves the model from memorizing labels to reasoning over physical properties.
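The first two tiers of this protocol can be sketched as a pair of lookups. The dictionaries below are small hand-written examples rather than a complete synonym list, and the names `SOLVENT_SYNONYMS`, `SOLVENT_SMILES`, and `normalize_solvent` are hypothetical. In a real pipeline the SMILES strings would still be passed through a cheminformatics toolkit for canonicalization.

```python
# Tier 1: curated synonym dictionary. Keys are lower-cased and stripped.
SOLVENT_SYNONYMS = {
    "meoh": "methanol", "methanol": "methanol", "ch3oh": "methanol",
    "etoh": "ethanol", "ethanol": "ethanol",
    "thf": "tetrahydrofuran", "tetrahydrofuran": "tetrahydrofuran",
    "dmso": "dimethyl sulfoxide", "dimethyl sulfoxide": "dimethyl sulfoxide",
    "h2o": "water", "water": "water",
}

# Tier 2: one structure per standardized name (hand-written SMILES).
SOLVENT_SMILES = {
    "methanol": "CO",
    "ethanol": "CCO",
    "tetrahydrofuran": "C1CCOC1",
    "dimethyl sulfoxide": "CS(C)=O",
    "water": "O",
}


def normalize_solvent(raw_name):
    """Map a free-text solvent label to a standardized name and SMILES.

    Unrecognized labels are flagged as "unknown" instead of being guessed,
    so they can be reviewed manually.
    """
    key = raw_name.strip().lower()
    name = SOLVENT_SYNONYMS.get(key)
    if name is None:
        return {"name": "unknown", "smiles": None, "raw": raw_name}
    return {"name": name, "smiles": SOLVENT_SMILES[name], "raw": raw_name}


raw_entries = ["MeOH", " Methanol", "CH3OH", "thf", "Solvent X"]
normalized = [normalize_solvent(s) for s in raw_entries]
distinct_names = sorted({n["name"] for n in normalized})
print(distinct_names)
```

Keeping the raw label alongside the normalized one makes it possible to audit the mapping later and to extend the dictionary when "unknown" entries accumulate.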

Q3: After cleaning my training set, model performance on internal validation drops significantly, but I am more confident in its causal validity. How do I justify this to my research team?

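A3: This pattern is expected when sanitization removes shortcuts the model previously exploited. The internal drop alone proves little, so pair it with external test results. Present your findings using the following comparative table: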

Table 1: Model Performance Before vs. After Dataset Sanitization

| Metric | Original Model (Biased Data) | Sanitized Model (Causal Focus) | Interpretation |

|---|---|---|---|

| Internal Validation Accuracy | 94% | 82% | Expected drop due to removal of spurious correlations. |

| External Test Set Accuracy | 65% | 81% | Key Result: Generalization improves dramatically. |

| Feature Importance (Shapley) | Dominated by 1-2 suspect features (e.g., "catalyst vendor"). | Distributed across plausible causal features (e.g., "activation energy", "steric parameter"). | Explanations align better with domain knowledge. |

| Adversarial Validation AUC | 0.89 | 0.51 | Confirmation that sanitized model no longer "detects" the training set source. |

Q4: What is a practical protocol to test for and remove "batch effect" confounders in high-throughput reaction screening data?

A4: Follow this experimental and computational protocol:

- Experimental Design: If possible, replicate a small subset of reactions across all laboratory batches and plates.

- Statistical Detection: Perform Principal Component Analysis (PCA) on the feature set. Color the PCA plot by `batch_id` or `plate_id`. Clear clustering by these metadata labels indicates a strong batch effect.

- Correction Method: Apply ComBat (empirical Bayes framework) or linear model correction (`limma` package in R) using the batch as a covariate. The replicated reactions across batches are crucial for assessing the correction's success without removing true biological signal.

- Validation: The PCA plot post-correction should show mixed clustering with respect to batch ID.

Q5: How can I ensure my pre-processing steps themselves do not introduce new biases or data leakage?

A5: Adhere to a strict "Pre-process on the Training Fold" workflow:

- Never apply imputation, scaling, or normalization to the entire dataset before splitting.

- Within each cross-validation fold:

- Calculate imputation values (e.g., mean) and scaling parameters (e.g., mean, standard deviation) only from the training fold.

- Apply these same parameters to transform the validation/test fold.

- For categorical encoding (e.g., One-Hot), ensure all categories in the validation fold are represented in the training fold; else, map to an "unknown" category.

- Use `scikit-learn` `Pipeline` or similar to automate this and prevent leakage.
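To make the fold-wise rule concrete, here is a dependency-free sketch of standardization inside cross-validation: the mean and standard deviation come from the training fold only and are then reused for the validation fold. The helper names (`fit_scaler`, `transform`, `k_fold_indices`) and the toy temperature column are assumptions for illustration. A scikit-learn `Pipeline` automates the same pattern.

```python
import statistics


def fit_scaler(train_values):
    """Estimate scaling parameters from the training fold only."""
    mean = statistics.fmean(train_values)
    std = statistics.pstdev(train_values)
    return mean, (std if std > 0 else 1.0)  # guard against zero variance


def transform(values, mean, std):
    """Apply previously fitted parameters; never re-fit on validation data."""
    return [(v - mean) / std for v in values]


def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs for contiguous, unshuffled folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train_idx, val_idx
        start += size


temperatures = [20.0, 25.0, 30.0, 60.0, 80.0, 100.0]  # toy feature column (deg C)

fold_stats = []
for train_idx, val_idx in k_fold_indices(len(temperatures), k=3):
    train_vals = [temperatures[i] for i in train_idx]
    val_vals = [temperatures[i] for i in val_idx]
    mean, std = fit_scaler(train_vals)            # training fold only
    train_scaled = transform(train_vals, mean, std)
    val_scaled = transform(val_vals, mean, std)   # same parameters reused
    fold_stats.append((mean, std, val_scaled))

global_mean = statistics.fmean(temperatures)  # what a leaky pipeline would use
print([round(m, 2) for m, _, _ in fold_stats], global_mean)
```

Each fold ends up with its own scaling parameters, none of which equals the whole-dataset mean. That difference is exactly the information a leaky pipeline would pass from validation data into training.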

Essential Research Reagent Solutions & Tools

Table 2: Key Reagents & Computational Tools for Causal Data Curation

| Item / Tool Name | Category | Primary Function in Causal Sanitization |

|---|---|---|

| RDKit | Software Library | Cheminformatics toolkit for canonicalizing SMILES, computing molecular descriptors, and ensuring chemical structure consistency. |

| PubChemPy/ChemSpider API | Database API | Programmatic access to authoritative chemical identifiers and properties for standardizing compound names and structures. |

| ComBat (scanpy/sva package) | Statistical Tool | Adjusts for batch effects in high-dimensional data using an empirical Bayes framework, preserving biological signal. |

| SHAP (SHapley Additive exPlanations) | Explainable AI Library | Quantifies the contribution of each feature to a prediction, helping identify non-causal "Clever Hans" predictors. |

| Adversarial Validation Classifier | Diagnostic Protocol | A trained model to distinguish training from validation data. Success indicates a fundamental distribution shift and potential data leakage. |

| Synthetic Minority Over-sampling (SMOTE) | Data Balancing | Generates synthetic samples for underrepresented reaction classes to prevent model bias towards prevalent outcomes. |

| Molecular Descriptor Sets (e.g., DRAGON, Mordred) | Feature Set | Provides comprehensive, standardized numerical representations of molecules beyond simple fingerprints, aiding causal learning. |

Experimental Protocol: Diagnosing a "Clever Hans" Feature in Reaction Yield Prediction

Objective: To determine if a model's high performance is falsely dependent on a non-causal data artifact (e.g., catalyst_batch_ID).

Materials:

- Original dataset of chemical reactions (features: reagents, conditions, catalysts, yields).

- A suspected "Clever Hans" feature (e.g., `catalyst_batch_ID`).

- Standard ML stack (e.g., Python, `scikit-learn`, `pandas`, `SHAP`).

Methodology:

- Train Baseline Model: Train a gradient boosting model (XGBoost) on the full dataset using all features, including the suspected artifact. Perform 5-fold cross-validation (CV). Record CV accuracy and hold-out test set accuracy.

- Create Perturbed Validation Set: Generate a new validation set where the suspected feature is ablated. For `catalyst_batch_ID`, reassign IDs randomly or set to a null value. Crucially, keep the target yields physically unchanged.

- Evaluate on Perturbed Set: Predict on the perturbed set using the model from Step 1. A significant accuracy drop (e.g., >15%) is a strong indicator of Clever Hans behavior.

- Causal Feature Retraining: Remove the suspected artifact. Retrain the model on a feature set restricted to causally plausible variables (e.g., electronegativity, temperature, solvent polarity).

- Comparison & Interpretation: Compare the generalizability of both models on a truly external test set from a different source. The causal model should demonstrate superior or comparable performance without relying on the artifact.
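The perturbation step of this protocol can be illustrated with two toy predictors standing in for trained models. Both functions (`clever_hans_model`, `causal_model`), the helper `ablation_check`, and the four-row dataset are invented for illustration. The sketch uses the "set to a null value" variant of the ablation and reports mean absolute error instead of accuracy because the toy target is a continuous yield.

```python
def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def ablation_check(predict, rows, targets, feature, null_value=None):
    """Return (baseline MAE, MAE after nulling `feature` in every row).

    Targets are left untouched; only the suspect input is ablated.
    """
    baseline = mean_absolute_error(targets, [predict(r) for r in rows])
    ablated_rows = [{**row, feature: null_value} for row in rows]
    ablated = mean_absolute_error(targets, [predict(r) for r in ablated_rows])
    return baseline, ablated


def clever_hans_model(row):
    # Toy stand-in for a model that keys on the batch label alone.
    return 90.0 if row["catalyst_batch_ID"] == "B7" else 40.0


def causal_model(row):
    # Toy stand-in for a model that uses a physically meaningful input.
    return 40.0 + 1.25 * (row["temperature_C"] - 40.0)


rows = [
    {"catalyst_batch_ID": "B7", "temperature_C": 80.0},
    {"catalyst_batch_ID": "B7", "temperature_C": 80.0},
    {"catalyst_batch_ID": "A1", "temperature_C": 40.0},
    {"catalyst_batch_ID": "A1", "temperature_C": 40.0},
]
yields = [90.0, 88.0, 40.0, 42.0]

hans_before, hans_after = ablation_check(
    clever_hans_model, rows, yields, "catalyst_batch_ID")
causal_before, causal_after = ablation_check(
    causal_model, rows, yields, "catalyst_batch_ID")
print(hans_before, hans_after, causal_before, causal_after)
```

Both toy models look equally good before ablation because batch and temperature are perfectly confounded in this dataset. Only the ablation separates them, which is the point of the perturbed validation set.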

Visualizations

Title: Data Sanitization Workflow for Causal Learning

Title: The Clever Hans Artifact in Model Generalization

Adversarial Validation and Holdout Set Strategies

Troubleshooting Guides & FAQs

Q1: What is adversarial validation, and why am I getting poor model performance on my chemical reaction holdout set? A1: Adversarial validation is a technique used to detect data leakage or significant distribution shifts between your training and holdout sets. Poor performance often indicates your holdout set is not representative of your training data, a classic "Clever Hans" scenario where the model learns spurious correlations in the training data that don't generalize. This is critical in reaction yield prediction where reagent batches or lab conditions can create hidden biases.

Protocol: Adversarial Validation Test

- Label Assignment: Combine your training and holdout datasets. Assign a label of `0` to all training set samples and `1` to all holdout set samples.

- Model Training: Train a binary classifier (e.g., a simple gradient boosting model) to distinguish between the two sets using all available features.

- Evaluation: Calculate the AUC-ROC of this classifier.

- Interpretation: An AUC ~0.5 suggests the sets are well-mixed and representative. An AUC >0.65 indicates a significant distribution shift, invalidating your holdout set for reliable performance estimation.

Table 1: Interpreting Adversarial Validation AUC Results

| AUC Range | Interpretation | Action Required |

|---|---|---|

| 0.50 - 0.55 | Sets are well-mixed. Holdout is valid. | Proceed with standard validation. |

| 0.55 - 0.65 | Moderate shift. Caution advised. | Investigate feature importance of the adversarial model for clues. |

| 0.65 - 0.75 | Significant distribution shift. | Holdout set is compromised. Need to create a new, representative holdout via stratification. |

| >0.75 | Severe leakage or shift. | Model evaluation is invalid. Must re-partition data from the raw source. |
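The evaluation and interpretation steps can be sketched as two small functions, assuming the adversarial classifier has already produced a score per sample. The pairwise AUC below is a plain restatement of the rank-based definition, and `interpret_adversarial_auc` encodes the thresholds from Table 1. Treating an AUC below 0.5 as equally separable (by mirroring it) is an added assumption, as are the function names and the toy scores.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (holdout, training) pairs ranked correctly.

    Ties count as half a win. Quadratic in sample count, which is fine
    for a sketch but slow for large datasets.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def interpret_adversarial_auc(auc):
    """Map an adversarial AUC onto the action bands of Table 1."""
    separability = max(auc, 1.0 - auc)  # assumption: mirror AUCs below 0.5
    if separability < 0.55:
        return "well-mixed"
    if separability < 0.65:
        return "moderate shift"
    if separability <= 0.75:
        return "significant shift"
    return "severe leakage or shift"


# 0 = training sample, 1 = holdout sample; scores are invented.
labels = [0, 0, 0, 0, 1, 1, 1, 1]
scores = [0.10, 0.40, 0.35, 0.80, 0.30, 0.70, 0.90, 0.60]
auc = roc_auc(labels, scores)
verdict = interpret_adversarial_auc(auc)
print(auc, verdict)
```

With these toy scores, 11 of the 16 holdout/training pairs are ordered correctly, giving an AUC of 0.6875, which falls in the "significant shift" band.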

Q2: How should I construct a robust holdout set for catalyst performance prediction to avoid "Clever Hans" predictors? A2: A robust holdout set must be temporally and chemically stratified to simulate real-world deployment where new, unseen catalysts are evaluated.

Protocol: Temporal-Chemical Holdout Construction

- Temporal Split: If your reaction data has timestamps (e.g., experiments conducted over time), set aside the most recent 10-20% of experiments as the final holdout. This simulates future performance.

- Chemical Scaffold Split: For the remaining data, use a cluster-based split (e.g., using RDKit to generate molecular fingerprints for catalysts/reagents and applying Butina clustering). Place entire clusters into either training or an internal validation set, ensuring structurally novel molecules are held out.

- Final Sets: Your training set is the pre-temporal-split data minus the scaffold-holdout clusters. Your internal validation set is used for hyperparameter tuning. Your final holdout set is the combined temporal holdout and the scaffold-holdout clusters—representing both future and structurally novel chemistry.

Q3: My adversarial validation shows a shift (AUC=0.70). How do I fix my dataset partitioning? A3: Use the adversarial model itself to guide repartitioning via stratified sampling.

Protocol: Stratified Repartitioning Using Adversarial Predictions

- Run the adversarial validation test to get the probability that each sample belongs to the holdout set (`p_holdout`).

- Bin Samples: Split your combined data into `k` bins (e.g., 5-10) based on these `p_holdout` scores.

- Stratified Sampling: Randomly sample the same proportion of data from each bin to create a new training and holdout set. This ensures the distribution of "hard-to-classify" samples is balanced.

- Re-test: Perform adversarial validation on the new split. Iterate until the AUC approaches 0.5.
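A minimal sketch of the binning and sampling steps follows, assuming `p_holdout` scores are already available from the adversarial classifier. Equal-count (quantile) bins are one reasonable reading of "split into k bins". The function name, the default parameters, and the toy scores are assumptions.

```python
import random


def stratified_repartition(sample_ids, p_holdout, k=5, holdout_fraction=0.2, seed=0):
    """Draw the same holdout proportion from each p_holdout bin.

    Samples are ranked by p_holdout and cut into k near-equal bins.
    Within each bin, a random `holdout_fraction` goes to the new holdout.
    """
    rng = random.Random(seed)
    order = sorted(range(len(sample_ids)), key=lambda i: p_holdout[i])
    n = len(order)
    bins = [order[j * n // k:(j + 1) * n // k] for j in range(k)]

    train, holdout = [], []
    for members in bins:
        members = members[:]          # copy before shuffling in place
        rng.shuffle(members)
        n_hold = round(holdout_fraction * len(members))
        holdout.extend(sample_ids[i] for i in members[:n_hold])
        train.extend(sample_ids[i] for i in members[n_hold:])
    return train, holdout


# Toy input: 50 samples whose scores rise with the sample index.
ids = list(range(50))
p_scores = [i / 49 for i in ids]
new_train, new_holdout = stratified_repartition(ids, p_scores, k=5, holdout_fraction=0.2)

# Count how many holdout samples came from each score bin (ids 0-9, 10-19, ...).
per_bin = [sum(1 for s in new_holdout if 10 * j <= s < 10 * (j + 1)) for j in range(5)]
print(per_bin)
```

After repartitioning, the adversarial classifier should be retrained on the new split, since the old `p_holdout` scores describe the old partition.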

Visualizations

Adversarial Validation Diagnostic Workflow

Robust Temporal-Scaffold Holdout Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Robust Reaction Model Validation

| Item | Function in Context |

|---|---|

| RDKit | Open-source cheminformatics toolkit used to generate molecular fingerprints (Morgan/ECFP), perform scaffold clustering, and detect chemical similarity for stratified dataset splits. |

| scikit-learn | Python library providing implementations for train/test splits (StratifiedShuffleSplit), adversarial model training (e.g., GradientBoostingClassifier), and AUC-ROC calculation. |

| Butina Clustering Algorithm | A fast, distance-based clustering method applied to molecular fingerprints to group reactions by catalyst or reagent similarity, enabling scaffold-based data splitting. |

| Adversarial Validation Model | A binary classifier (typically gradient boosting) trained to distinguish training from holdout data. Its feature importance output highlights variables causing data drift. |

| Temporal Metadata | Timestamps for all experimental records. Critical for performing temporal splits to prevent leakage from future experiments and simulate real-world model decay. |

| Chemical Descriptor Array | A standardized feature set for all reactions (e.g., yields, conditions, catalyst descriptors). Must be consistent and complete to enable meaningful adversarial validation. |

Technical Support Center: Troubleshooting & FAQs for XAI in Chemical Reaction Models

This support center addresses common issues encountered when applying XAI tools to debug "Clever Hans" predictors in chemical reaction and drug development models. Such predictors arise when models exploit non-causal, spurious correlations in reaction datasets (e.g., relying on solvent type because it happens to correlate with yield, instead of learning the true mechanistic pathway).

Frequently Asked Questions (FAQs)

Q1: My SHAP summary plot shows high importance for an irrelevant molecular descriptor (e.g., "number of carbon atoms") in my reaction yield predictor. Is this a "Clever Hans" artifact? A: Likely yes. This often indicates a dataset bias where simpler molecules (with fewer carbons) in your training set coincidentally had lower yields due to a different, unrecorded factor. SHAP is correctly reporting the model's dependency, but that dependency is non-causal.

- Troubleshooting Steps:

- Stratify Analysis: Re-run SHAP analysis on a subset of data where the suspected confounding variable (e.g., catalyst loading) is held constant.

- Check Correlation: Calculate the correlation between the suspect descriptor and the target yield in your training data. A high correlation with no plausible mechanistic explanation is consistent with dataset bias, though it does not prove it on its own.

- Counterfactual SHAP: Use SHAP's dependence plots to visualize the model's predicted yield vs. the suspect descriptor. A clear, smooth trend may indicate over-reliance.

Q2: LIME explanations for identical reaction predictions vary drastically with different random seeds. Are the explanations unreliable? A: Yes, high variance in LIME explanations indicates instability, a known limitation. In the context of chemical models, this makes it hard to trust which functional groups LIME highlights as important for a prediction.

- Troubleshooting Steps:

- Increase Sample Size: Increase the `num_samples` parameter (default 5000) significantly (e.g., to 10000) to improve the stability of the linear model fit.

- Kernel Width Adjustment: Tune the `kernel_width` parameter. A wider kernel considers more samples, increasing stability but reducing locality.

- Aggregate Explanations: Run LIME multiple times (e.g., 20) with different seeds and aggregate the top features. Use the most consistently appearing features for interpretation.
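The aggregation step can be sketched independently of LIME itself, assuming each run has been reduced to a list of `(feature, weight)` pairs, the shape returned by an explanation's `as_list()` method. The function name `stable_features`, the 80% frequency threshold, and the five toy runs are illustrative assumptions.

```python
from collections import Counter


def stable_features(explanations, top_k=2, min_frequency=0.8):
    """Return features that rank in the top_k (by |weight|) in at least
    `min_frequency` of the runs, sorted alphabetically."""
    counts = Counter()
    for explanation in explanations:
        ranked = sorted(explanation, key=lambda fw: abs(fw[1]), reverse=True)
        counts.update(name for name, _ in ranked[:top_k])
    n_runs = len(explanations)
    return sorted(name for name, c in counts.items() if c / n_runs >= min_frequency)


# Five toy runs for the same reaction with different random seeds.
runs = [
    [("nitro_group", 0.40), ("aryl_bromide", 0.30), ("solvent_DMF", 0.10), ("mol_weight", 0.05)],
    [("nitro_group", 0.38), ("aryl_bromide", -0.02), ("solvent_DMF", 0.12), ("mol_weight", 0.20)],
    [("nitro_group", 0.41), ("aryl_bromide", 0.29), ("solvent_DMF", 0.05), ("mol_weight", 0.01)],
    [("nitro_group", 0.35), ("aryl_bromide", 0.31), ("solvent_DMF", 0.02), ("mol_weight", 0.03)],
    [("nitro_group", 0.39), ("ring_count", 0.25), ("aryl_bromide", 0.10), ("mol_weight", 0.04)],
]
consistent = stable_features(runs, top_k=2, min_frequency=0.8)
print(consistent)
```

In this toy case only one feature survives the frequency filter. The others appear in the top two in some runs but not reliably, which is the kind of instability the question describes.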

Q3: The attention weights in my transformer-based reaction predictor are uniformly distributed across all atoms in the input SMILES. Does this mean the model isn't learning? A: Not necessarily. Uniform attention can be a symptom of a "Clever Hans" predictor that has found an easier, global shortcut. It may also indicate model or training issues.

- Troubleshooting Steps:

- Check Performance: Evaluate model performance on a carefully curated hold-out test set where the suspected shortcut is removed or inverted.

- Probe with Perturbation: Systematically remove or alter parts of the input SMILES (e.g., a specific functional group) and observe changes in both prediction and attention patterns. A true mechanistic model should show attention shift and prediction change.

- Regularization: Apply stronger regularization (e.g., higher dropout) during training to discourage the model from relying on weak, distributed signals.

Q4: When I compare SHAP and LIME results for the same reaction prediction, they highlight completely different reactant features. Which tool should I believe? A: This conflict is common. SHAP explains the model's output relative to a global background distribution, while LIME explains it with a local, perturbed model. The discrepancy often reveals a key insight.

- Troubleshooting Guide:

- Suspect Non-Linearity: If SHAP and LIME disagree, the model's decision boundary is likely highly non-linear locally. LIME's linear approximation may fail.

- Actionable Step: In drug development contexts, trust SHAP for global feature importance (which feature matters on average). Use LIME's variant explanations as a "sensitivity analysis" to see how the model behaves under small, synthetic perturbations—useful for assessing robustness.

The following table summarizes key characteristics and performance metrics of the primary XAI tools when applied to uncover "Clever Hans" predictors in chemical reaction datasets.

| Tool (Core Method) | Best For Identifying Clever Hans in... | Computational Cost | Explanation Scope | Fidelity to Model | Key Limitation in Chemistry Context |

|---|---|---|---|---|---|

| SHAP (Game Theory) | Global dataset biases (e.g., solvent, catalyst type bias). | High (exact computation), Medium (approximate) | Global & Local | High (exact) | KernelSHAP can be misled by correlated features common in molecular descriptors. |

| LIME (Local Surrogate) | Instability of predictions to meaningless reactant perturbations. | Low | Local (Single Prediction) | Medium (approx.) | High variance; may create chemically impossible "perturbed" samples. |

| Attention (Mechanism Weights) | Over-reliance on specific input tokens (e.g., atom symbols in SMILES/sequence). | Low (already computed) | Local (Token-level) | High (direct readout) | Weights indicate "where the model looks," not how information is used (can be misleading). |

Experimental Protocol: Detecting Clever Hans Predictors with SHAP

Objective: To validate whether a high-performing ML model for reaction yield prediction is relying on genuine mechanistic features or spurious statistical shortcuts.

Materials: Trained model (e.g., Random Forest, GNN), reaction dataset (SMILES strings, conditions, yields), SHAP library (Python).

Procedure:

- Prepare Background Data: Select a representative subset of your training data (100-500 reactions) as the background distribution for SHAP.

- Compute SHAP Values: Use `shap.TreeExplainer()` (for tree models) or `shap.KernelExplainer()` (for other models) on the model and background data. Calculate SHAP values for the entire validation set.

- Generate Summary Plot: Plot `shap.summary_plot(shap_values, validation_features)` to identify globally important features.

- Identify Suspect Features: Flag any feature in the top 5 that is not chemically intuitive for influencing yield (e.g., "vendor ID," "reaction vessel volume").

- Stratified Validation: Create a new test set where the suspect feature's correlation with yield is controlled or reversed. Evaluate model performance. A significant drop indicates a Clever Hans predictor.

- Dependence Analysis: Plot `shap.dependence_plot(suspect_feature_index, shap_values, validation_features)` to visualize the model's learned relationship.

Key Research Reagent Solutions for XAI Experiments

| Item/Reagent | Function in XAI Experimentation |

|---|---|

| Curated Benchmark Dataset (e.g., USPTO with curated yields) | Provides a ground-truth dataset with minimized spurious correlations to train and test models, serving as a negative control for Clever Hans effects. |

| SHAP (shap Python library) | The primary reagent for quantifying the marginal contribution of each input feature to a model's prediction, enabling global bias detection. |

| LIME (lime Python library) | A reagent for generating local, interpretable surrogate models to test prediction sensitivity to input perturbations. |

| Captum Library (for PyTorch) | A comprehensive suite of attribution reagents including integrated gradients, useful for interpreting neural network models on molecular structures. |

| RDKit | Used to generate and manipulate molecular features (descriptors, fingerprints) from SMILES, and to ensure chemically valid perturbations for LIME. |

| Synthetic Data Generator | Creates controlled datasets with known, inserted spurious correlations to actively test the robustness of XAI methods. |

XAI Workflow for Chemical Reaction Model Debugging

Counterfactual and Perturbation Testing in Reaction Space

Troubleshooting Guides & FAQs

Q1: After performing a counterfactual perturbation on my reaction network model, the output probabilities sum to >1. What is the likely cause and how do I fix it? A: This indicates a violation of probability conservation: somewhere the model or the perturbation code is not enforcing a physical constraint. A softmax layer produces outputs that sum to 1 by construction, so the issue usually lies in how the perturbation function interacts with the output layer. First, verify that your perturbation is applied before the final activation layer, not after; altering post-softmax probabilities directly breaks normalization. Second, if you train with a custom loss function, add numerical safeguards (e.g., gradient clipping) so that unstable updates on perturbed inputs do not corrupt the output layer. Re-train with this constraint.

Q2: My perturbation analysis shows negligible change in predicted reaction yield, but wet-lab experiments show a significant drop. Why the discrepancy? A: This is a hallmark of a model relying on a "Clever Hans" predictor—a confounding variable in your training data. The model may be ignoring the perturbed feature because it found a shortcut. You must perform feature ablation. Systematically remove individual input features (e.g., solvent dielectric, a specific descriptor) during in-silico perturbation and re-run the prediction. The table below summarizes diagnostic outcomes from a recent study:

| Perturbed Feature | Model Yield Change (%) | Experimental Yield Change (%) | Likely "Clever Hans" Confounder |

|---|---|---|---|

| Catalyst Spin State | +0.5 | -42.1 | Reaction Temperature |

| Solvent Polarity | -1.2 | -38.5 | Presence of Trace Water |

| Substrate Sterics | -0.3 | -65.0 | Catalyst Lot Number ID in Data |

| Additive Concentration | +0.1 | +15.7 | Stirring Rate (correlated in training set) |

Q3: How do I design a valid minimal intervention for a counterfactual test on a multi-step catalytic cycle? A: A valid intervention must target a specific node in the reaction network while holding all non-descendants constant. Follow this protocol:

- Map the Causal Graph: Represent each intermediate state and reaction condition as a node.

- Isolate the Target: Use do-calculus to set the value of your target variable (e.g., `do(Catalyst_Concentration=0)`).

- Apply Modularity: Keep all other parent nodes (e.g., temperature, initial substrate) at their baseline values.

- Propagate: Use your model to compute the outcomes (e.g., yields of all products) under this intervened graph, not the observational graph.

Diagram 1: Minimal Intervention on Catalyst Node

Q4: What are the best practices for generating a diverse and physically plausible perturbation set for reaction condition space? A: Avoid random sampling. Use a structured, knowledge-based approach:

- For Continuous Variables (e.g., temperature): Use a Sobol sequence within physically viable bounds (e.g., solvent boiling point).

- For Categorical Variables (e.g., solvent class): Use a graph-based sampling where solvents are nodes on a molecular similarity graph; sample from diverse clusters.

- For Constraints: Implement a "reaction viability" filter (e.g., no strong acids with base-labile protecting groups) before feeding the perturbed condition to the model. This prevents nonsense queries that can corrupt sensitivity analysis.
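The continuous-variable and constraint steps can be sketched together. To stay dependency-free, this example substitutes a Halton sequence for the Sobol sequence named above. Both are low-discrepancy sequences, and `scipy.stats.qmc.Sobol` is the usual choice when SciPy is available. The bounds, the variable names, and the viability rule are invented placeholders, not chemical recommendations.

```python
def radical_inverse(index, base):
    """Van der Corput radical inverse of `index` in the given base."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result


def halton_points(n_points, bounds):
    """Generate low-discrepancy points inside per-variable (low, high) bounds."""
    primes = [2, 3, 5, 7, 11, 13]
    if len(bounds) > len(primes):
        raise ValueError("add more prime bases for higher dimensions")
    points = []
    for i in range(1, n_points + 1):  # skip index 0, which maps to the lower corner
        point = {}
        for (name, (low, high)), base in zip(bounds.items(), primes):
            point[name] = low + (high - low) * radical_inverse(i, base)
        points.append(point)
    return points


def is_viable(condition):
    # Hypothetical rule: disallow hot reactions at very high catalyst loading.
    return not (condition["temperature_C"] > 60.0
                and condition["catalyst_mol_percent"] > 8.0)


# Hypothetical bounds; the upper temperature stands in for a solvent boiling point.
bounds = {"temperature_C": (20.0, 65.0), "catalyst_mol_percent": (0.5, 10.0)}
candidates = halton_points(16, bounds)
viable = [c for c in candidates if is_viable(c)]
print(len(candidates), len(viable), candidates[0])
```

Filtering after sampling keeps the sequence's even coverage of the allowed region, at the cost of discarding some points. If the viable region is small, generate more candidates than are ultimately needed.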

Q5: My model's perturbation response is stable during training but becomes highly erratic during validation. What debugging steps should I take? A: This suggests overfitting to the pattern of perturbations in your training set, not the underlying chemistry. Follow this diagnostic workflow:

Diagram 2: Debugging Erratic Perturbation Response

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Counterfactual/Perturbation Testing |

|---|---|

| Causal Discovery Software (e.g., DoWhy, CausalNex) | Libraries to structure reaction data as causal graphs and implement the do-operator for interventions. |

| Differentiable Simulator | A physics-based or ML simulator that allows gradient-based perturbations to flow through reaction steps, enabling efficient sensitivity maps. |

| Equivariant Neural Network Architectures | Models that respect rotational/translational symmetry of molecules, reducing spurious correlation learning ("Clever Hans") from 3D conformer data. |

| Sensitivity Analysis Library (e.g., SALib) | To systematically generate and analyze Morris or Sobol perturbation sequences across high-dimensional reaction condition space. |

| Bayesian Optimization Framework | To intelligently guide the selection of the most informative perturbation experiments for model validation or invalidation. |

| Reaction Viability Rule Set | A database of chemical rules (e.g., incompatible functional groups) to filter out nonsensical counterfactual conditions before model query. |

| Uncertainty Quantification Module | Provides prediction intervals (e.g., via Monte Carlo dropout) to distinguish meaningful perturbation responses from model noise. |

Implementing Causal Inference Frameworks in Reaction Prediction Pipelines

Technical Support Center

This support center provides troubleshooting guidance for researchers integrating causal inference into reaction prediction workflows, framed within a thesis investigating "Clever Hans" predictors—models that exploit spurious experimental correlations—in chemical reaction modeling.

Frequently Asked Questions (FAQs)

Q1: My causal model's Average Treatment Effect (ATE) estimates are unstable when applied to new catalyst screening data. What could be the cause? A: This often indicates unmeasured confounding or a violation of the positivity assumption. In reaction prediction, a common unmeasured confounder is trace solvent impurities from prior steps in automated platforms. If your training data lacks sufficient variation in a pretreatment variable (e.g., reaction temperature range), the model cannot reliably estimate its effect. Diagnose by checking the overlap in propensity score distributions between treatment groups (e.g., catalyst A vs. B) for your new data.

Q2: After applying a double machine learning (DML) model to de-bias a yield predictor, the model's performance (R²) on my hold-out test set dropped significantly. Does this mean the causal approach failed? A: Not necessarily. A drop in standard predictive performance can be expected and may signal success in removing non-causal, spurious correlations that the original "Clever Hans" model relied on (e.g., correlating yield solely with vendor-specific impurity fingerprints). Evaluate the interventional accuracy of the model: Can it correctly predict the outcome of a perturbation (e.g., changing ligand electronic property) based on the estimated causal effect, rather than just associative accuracy?

Q3: How do I select a valid instrumental variable (IV) for reaction condition optimization? A: A valid IV must satisfy three criteria: (1) Relevance: It strongly correlates with the suspected endogenous variable (e.g., actual reaction temperature). (2) Exclusion: It affects the outcome (e.g., yield) only through its effect on that variable. (3) Exchangeability: It is independent of unmeasured confounders. In automated reactors, a potential IV is the commanded temperature setting, which directly impacts actual temperature but is randomly assigned by the experimental design software, and is thus arguably independent of unmeasured vessel-specific confounders. Always perform a weak instrument test; a first-stage F-statistic above 10 is a common rule of thumb.
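As a rough sketch of the weak instrument test for a single instrument, the first-stage F-statistic can be computed from the squared correlation between instrument and endogenous variable, and the IV effect from the ratio of covariances (the Wald estimator). The simulated data below are entirely hypothetical: the setpoint values, the noise levels, and the hand-set true effect of 0.5 yield points per degree are assumptions for illustration only.

```python
import random


def mean(v):
    return sum(v) / len(v)


def cov(a, b):
    ma, mb = mean(a), mean(b)
    return sum((x - ma) * (y - mb) for x, y in zip(a, b)) / (len(a) - 1)


def first_stage_f(z, t):
    """F-statistic for regressing the endogenous variable t on one instrument z."""
    n = len(z)
    r2 = cov(z, t) ** 2 / (cov(z, z) * cov(t, t))
    return r2 * (n - 2) / (1 - r2)


def wald_iv_estimate(z, t, y):
    """Single-instrument IV estimate of the effect of t on y."""
    return cov(z, y) / cov(z, t)


# Simulated reactor data (all parameters are illustrative assumptions).
rng = random.Random(0)
n = 500
setpoint = [rng.choice([60.0, 70.0, 80.0, 90.0]) for _ in range(n)]  # instrument
vessel = [rng.gauss(0, 1) for _ in range(n)]  # unmeasured vessel-specific confounder
actual_temp = [s + 2.0 * u + rng.gauss(0, 1) for s, u in zip(setpoint, vessel)]
yield_pct = [0.5 * t - 3.0 * u + rng.gauss(0, 1) for t, u in zip(actual_temp, vessel)]

f_stat = first_stage_f(setpoint, actual_temp)
iv_effect = wald_iv_estimate(setpoint, actual_temp, yield_pct)
print(f"first-stage F = {f_stat:.0f}, IV effect = {iv_effect:.3f}")
```

With several instruments or additional covariates, a two-stage least squares routine from an established package would be the more appropriate tool.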

Q4: My propensity score matching for solvent selection creates very small matched datasets, reducing power. What are the alternatives? A: Propensity score matching requires strong overlap. Consider alternative methods:

- Inverse Probability of Treatment Weighting (IPTW): Uses all data but can be unstable with extreme weights. Always report weight distributions.

- Causal Forest: A non-parametric method that handles high-dimensional covariates better and can estimate heterogeneous treatment effects (HTEs) for different substrate classes.

- Augmented IPTW (AIPTW): Doubly robust estimation that combines outcome and propensity models, providing consistent estimates if either model is correct.
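A minimal IPTW sketch, assuming propensity scores are already available, shows why weight distributions should be reported alongside the estimate. The function name `iptw_ate` and the eight toy runs are hypothetical; the data are constructed so that the effect is +10 yield points within each solvent stratum. AIPTW and Causal Forests are not shown here.

```python
def iptw_ate(treat, y, ps):
    """Normalised (Hajek) IPTW estimate of the ATE with basic weight diagnostics."""
    # Treated runs get weight 1/ps, control runs get 1/(1 - ps).
    w = [1 / p if t == 1 else 1 / (1 - p) for t, p in zip(treat, ps)]
    w1 = sum(wi for wi, t in zip(w, treat) if t == 1)
    w0 = sum(wi for wi, t in zip(w, treat) if t == 0)
    mu1 = sum(wi * yi for wi, yi, t in zip(w, y, treat) if t == 1) / w1
    mu0 = sum(wi * yi for wi, yi, t in zip(w, y, treat) if t == 0) / w0
    # Kish effective sample size: drops sharply when a few weights dominate.
    ess = sum(w) ** 2 / sum(wi * wi for wi in w)
    return {"ate": mu1 - mu0, "max_weight": max(w), "ess": ess}


# Toy data: first four runs in a stratum where the treatment is common (ps = 0.75),
# last four in a stratum where it is rare (ps = 0.25).
treat = [1, 1, 1, 0, 1, 0, 0, 0]
ps = [0.75, 0.75, 0.75, 0.75, 0.25, 0.25, 0.25, 0.25]
y = [80, 80, 80, 70, 60, 50, 50, 50]

treated_y = [yi for yi, t in zip(y, treat) if t == 1]
control_y = [yi for yi, t in zip(y, treat) if t == 0]
naive = sum(treated_y) / len(treated_y) - sum(control_y) / len(control_y)
result = iptw_ate(treat, y, ps)
print(f"naive difference = {naive:.1f}, IPTW ATE = {result['ate']:.1f}, "
      f"max weight = {result['max_weight']:.1f}, ESS = {result['ess']:.1f}")
```

In this toy case the naive difference in means is 20 while the weighted estimate recovers 10; the effective sample size of 6 out of 8 runs indicates how much information the weighting costs.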

Troubleshooting Guides

Issue: Sensitivity Analysis Reveals High Unmeasured Confounding Risk Scenario: Your causal estimate for an additive's effect on enantiomeric excess (EE) changes substantially with a sensitivity analysis (e.g., using the E-value). Step-by-Step Guide:

- Quantify Robustness: Calculate the E-value. For a risk ratio RR > 1, the E-value is RR + sqrt(RR × (RR − 1)). For example, if the risk ratio for a successful high-EE outcome is 2.5, the E-value is about 4.4: an unmeasured confounder would need to be associated with both treatment (additive use) and a successful high-EE outcome by a risk ratio of at least 4.4 each, beyond the measured covariates, to fully explain away the effect.

- Hypothesize Confounders: List plausible unmeasured variables in your lab context (e.g., ambient humidity for air-sensitive reactions, subtle solid catalyst aging).

- Design a Confirmation Experiment: Proactively collect data on the top hypothesized confounder. For humidity, log ambient conditions for every experimental run.

- Re-Estimate: Include the new measurement as a covariate in your causal model. If the effect estimate stabilizes, you have mitigated the issue.
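The E-value in the first step follows the standard closed form for a point-estimate risk ratio, E = RR + sqrt(RR × (RR − 1)) for RR > 1; for RR = 2.5 this gives about 4.4. A small helper, with the function name `e_value` chosen here for illustration:

```python
import math


def e_value(rr):
    """E-value for a point-estimate risk ratio."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    if rr < 1:
        rr = 1 / rr  # for protective effects, work with the reciprocal
    return rr + math.sqrt(rr * (rr - 1))


print(round(e_value(2.5), 2))  # 4.44
```

An E-value close to 1 means that even a weak unmeasured confounder could account for the estimate, which is when the confirmation experiment in the later steps matters most.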

Issue: Discrepancy Between Causal Estimate and A/B Experimental Validation Scenario: The estimated Average Treatment Effect (ATE) of a new ligand is +8% yield, but a subsequent controlled A/B test shows only a +2% gain. Diagnostic Steps:

- Check Temporal Shifts: Compare the data-generating processes. Was the A/B test run months later with a different reagent batch? Construct a causal diagram (DAG) including time.

- Inspect Heterogeneous Treatment Effects (HTE): Use a Causal Forest model to check if the ATE masks variation. The +8% effect might be real only for a specific substrate class absent from your A/B validation.

- Re-examine Assumptions: Revisit the exchangeability assumption. Use the following table to compare datasets:

Table: Dataset Comparison for Discrepant Causal Estimates

| Feature | Original Observational Data | A/B Validation Data | Diagnostic Implication |
|---|---|---|---|
| Substrate Scope | Diverse, 50 substrates | Narrow, 5 substrates | Possible HTE; effect not generalizable. |
| Reagent Batch | Multiple vendors | Single, optimized batch | Unmeasured confounding from impurity profiles. |
| Assignment Mechanism | Non-random, chemist's choice | Randomized | Original data may have violated exchangeability. |
| Catalyst Aging | Not recorded | Fresh catalyst prepared | Aging is a likely unmeasured confounder. |

Experimental Protocols

Protocol 1: Randomized Catalyst Screening to Establish Ground Truth Causal Effects Purpose: Generate a gold-standard dataset to validate observational causal inference methods and detect "Clever Hans" predictors. Materials: See "Scientist's Toolkit" below. Procedure:

- Design: For a fixed reaction (e.g., Suzuki-Miyaura coupling), select 4 catalysts (Pd(PPh₃)₄, Pd(dppf)Cl₂, etc.) as "treatments."

- Randomization: Use a random number generator to assign each reaction vessel in an automated platform (e.g., Chemspeed) to one catalyst, blocking by the day of execution.

- Covariate Measurement: Record pretreatment covariates: substrate electronic parameters (Hammett σₚ), measured concentration, solvent water content (by Karl Fischer), and reactor module ID.

- Execution: Run all reactions under otherwise identical conditions (temperature, time, stoichiometry).

- Outcome Measurement: Quantify yield via UPLC with internal standard.

- Analysis: Calculate the ATE for each catalyst vs. a baseline using a simple difference-in-means. This dataset now serves as a benchmark to test if causal models fit on observational data can recover these true effects.
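The randomization and analysis steps above can be sketched as follows. This is a minimal illustration, not the platform's own scheduling logic: the catalyst labels `cat_A` to `cat_D`, the vessel layout, and the simulated yields (a hand-set catalyst effect plus a day offset) are all hypothetical placeholders.

```python
import random
from statistics import mean


def blocked_assignment(vessels_by_day, catalysts, seed=0):
    """Randomly assign catalysts to vessels within each day (block).

    Blocks are balanced when the block size is a multiple of len(catalysts).
    """
    rng = random.Random(seed)
    plan = {}
    for day, vessels in vessels_by_day.items():
        reps = -(-len(vessels) // len(catalysts))  # ceiling division
        pool = (catalysts * reps)[:len(vessels)]
        rng.shuffle(pool)  # randomise the order within the block only
        for vessel, cat in zip(vessels, pool):
            plan[(day, vessel)] = cat
    return plan


def ate_vs_baseline(yields, plan, baseline):
    """Difference in mean yield of each catalyst against the baseline catalyst."""
    by_cat = {}
    for run, cat in plan.items():
        by_cat.setdefault(cat, []).append(yields[run])
    base = mean(by_cat[baseline])
    return {cat: mean(v) - base for cat, v in by_cat.items() if cat != baseline}


catalysts = ["cat_A", "cat_B", "cat_C", "cat_D"]  # placeholder labels
vessels_by_day = {"day1": list(range(8)), "day2": list(range(8))}
plan = blocked_assignment(vessels_by_day, catalysts)

# Hypothetical outcomes: fixed catalyst effect plus a day-to-day offset.
true_effect = {"cat_A": 0.0, "cat_B": 5.0, "cat_C": -2.0, "cat_D": 8.0}
day_offset = {"day1": 0.0, "day2": 4.0}
yields = {run: 60.0 + true_effect[cat] + day_offset[run[0]] for run, cat in plan.items()}

ate = ate_vs_baseline(yields, plan, baseline="cat_A")
print(ate)
```

Because each catalyst appears equally often on each day, the day offset cancels in the difference-in-means and the hand-set effects are recovered exactly in this noise-free toy example.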

Protocol 2: Applying the Double ML Framework to De-bias a High-Throughput Experimentation Dataset Purpose: Remove confounding bias from a non-randomized dataset where reaction temperature was chosen based on substrate solubility. Methodology:

- Data Partition: Randomly split your observational data into two sample sets: S1 and S2.

- Stage 1 (on S1):

  - Train a machine learning model g(X) to predict the outcome Y (yield) using only covariates X (substrate features, solvent, etc.).

  - Train another ML model m(X) to predict the treatment T (temperature) from the same covariates X.

- Stage 2 (on S2):

  - Generate residuals: Y_resid = Y - g(X) and T_resid = T - m(X).

  - Perform a linear regression of Y_resid on T_resid. The coefficient on T_resid is the de-biased causal effect of temperature on yield.

- Cross-fitting: Repeat the process, swapping the roles of S1 and S2, and average the estimates to prevent overfitting.
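The two-stage procedure with cross-fitting can be sketched on simulated data. To keep the example dependency-free, a one-covariate least-squares line stands in for the machine learning models g(X) and m(X); that substitution, the function names, and the simulated relationship (true temperature effect set to 1.5, solubility as the only confounder) are assumptions for illustration. In practice a package such as EconML provides full implementations.

```python
import random


def fit_line(x, y):
    """Least-squares fit of y = a + b*x; returns (a, b)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum((xi - mx) ** 2 for xi in x)
    return my - b * mx, b


def dml_effect(x, t, y, seed=0):
    """Two-fold cross-fitted residual-on-residual estimate of the effect of t on y."""
    idx = list(range(len(x)))
    random.Random(seed).shuffle(idx)
    half = len(idx) // 2
    folds = [idx[:half], idx[half:]]
    estimates = []
    for fit_idx, est_idx in (folds, folds[::-1]):
        # Stage 1: fit nuisance models g (outcome) and m (treatment) on one half.
        ga, gb = fit_line([x[i] for i in fit_idx], [y[i] for i in fit_idx])
        ma, mb = fit_line([x[i] for i in fit_idx], [t[i] for i in fit_idx])
        # Stage 2: residualise on the other half, then regress residual on residual.
        y_res = [y[i] - (ga + gb * x[i]) for i in est_idx]
        t_res = [t[i] - (ma + mb * x[i]) for i in est_idx]
        estimates.append(sum(a * b for a, b in zip(t_res, y_res)) / sum(a * a for a in t_res))
    return sum(estimates) / len(estimates)  # average over the two fold assignments


# Simulated observational data: solubility drives both temperature choice and yield.
rng = random.Random(1)
n = 2000
solubility = [rng.gauss(0, 1) for _ in range(n)]
temperature = [2.0 * s + rng.gauss(0, 1) for s in solubility]
yield_pct = [1.5 * t + 3.0 * s + rng.gauss(0, 1) for t, s in zip(temperature, solubility)]

naive = fit_line(temperature, yield_pct)[1]  # confounded regression slope
theta = dml_effect(solubility, temperature, yield_pct)
print(f"naive slope = {naive:.2f}, DML estimate = {theta:.2f}")
```

The naive slope absorbs the solubility effect, while the residual-on-residual estimate should land close to the simulated value of 1.5. This only holds because the confounder is measured and included in X.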

Visualizations

[Figure: Causal Graph for Catalyst Screening with Confounding]

[Figure: Causal Inference Integration Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Causal Reaction Experiments

| Item / Reagent | Function in Causal Inference Context |
|---|---|
| Automated Parallel Reactor (e.g., Chemspeed, Unchained Labs) | Enforces consistent protocol execution and enables true randomization of treatment assignment, critical for ground-truth experiments. |
| Liquid Handler with Syringe Pumps | Precisely dispenses treatments (catalysts, additives) to eliminate volume-based confounding. |
| In-line Analytical UPLC/HPLC | Provides high-fidelity, consistent outcome measurement (yield, conversion) to minimize measurement error bias. |
| Karl Fischer Titrator | Quantifies a key potential confounder (solvent/atmospheric water) for moisture-sensitive reactions. |
| Deuterated Solvents with Certified Impurity Profiles | Standardizes solvent effects; impurity profiles become documented covariates, not unmeasured confounders. |
| Causal Inference Software (Python: EconML, DoWhy; R: causalweight) | Implements advanced algorithms (DML, Causal Forests, IV) to estimate effects from observational data. |
| Electronic Lab Notebook (ELN) with API Access | Ensures complete, structured covariate data capture to satisfy the "no unmeasured confounding" assumption as much as possible. |

Diagnosing and Fixing a 'Clever Hans' Model: A Step-by-Step Guide

Troubleshooting Guides & FAQs

Q1: Our model achieves >90% accuracy on training and validation sets but drops to ~65% on an external test set of novel reaction substrates. What is the most likely cause? A: This is a classic sign of dataset shift or "Clever Hans" predictors. The model likely learned spurious correlations specific to your training/validation data distribution (e.g., over-represented functional groups, consistent reporting bias in yields). It fails to generalize to the external set where these artifacts are absent. Perform error analysis: compare the distributions of key molecular descriptors (MW, logP, functional group counts) between your internal and external sets.

Q2: During hyperparameter tuning, validation loss closely tracks training loss, yet both are poor predictors of external test performance. How should we adjust our protocol? A: Your validation set is not sufficiently independent from the training data. This occurs commonly when random splitting inadvertently leaves structural or temporal redundancy. Implement a more rigorous splitting strategy:

- Temporal Split: If data was collected over time, validate/test on the most recent reactions.

- Cluster Split: Use molecular fingerprint clustering (e.g., Butina clustering) and assign entire clusters to train/val/test sets to ensure structural novelty in the validation set.

- Scaffold Split: Split by molecular scaffold to test generalization to new core structures.
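Cluster and scaffold splits share one mechanic: whole groups, never individual reactions, are assigned to a partition. A minimal sketch of that mechanic is below, assuming a group identifier per reaction has already been computed (for example a Murcko scaffold string or a Butina cluster index from RDKit). The function name `group_split`, the split fractions, and the placeholder scaffold labels are illustrative assumptions.

```python
import random
from collections import defaultdict


def group_split(group_ids, frac_train=0.8, frac_val=0.1, seed=0):
    """Assign whole groups (scaffolds or clusters) to train/val/test partitions."""
    groups = defaultdict(list)
    for i, gid in enumerate(group_ids):
        groups[gid].append(i)
    order = list(groups)
    random.Random(seed).shuffle(order)
    n = len(group_ids)
    split = {"train": [], "val": [], "test": []}
    for gid in order:
        members = groups[gid]
        # Fill train first, then val; anything that no longer fits goes to test.
        if len(split["train"]) + len(members) <= frac_train * n:
            split["train"].extend(members)
        elif len(split["val"]) + len(members) <= frac_val * n:
            split["val"].extend(members)
        else:
            split["test"].extend(members)
    return split


# Placeholder group labels: 100 reactions spread over 20 hypothetical scaffolds.
ids = [f"scaffold_{i % 20}" for i in range(100)]
split = group_split(ids)
groups_per_split = {name: {ids[i] for i in idx} for name, idx in split.items()}
print({name: len(idx) for name, idx in split.items()})
```

For a temporal split the same idea applies with sorting by date instead of shuffling, so that the most recent groups end up in validation and test.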

Q3: What specific analyses can reveal "Clever Hans" features in chemical reaction prediction models? A: Conduct feature attribution analysis (e.g., SHAP, LIME) on correct predictions on your internal set versus failures on the external set. Look for models that overly rely on:

- Solvent or catalyst features that are uniquely predictive in the training data due to bias.

- Simple counting features (e.g., number of atoms) correlated with yield in a non-causal way.

- Specific fingerprint bits that are prevalent in high-yield training reactions but not generalizable.

Q4: How do we formally assess if an external test set is "too easy" or "too hard"? A: Establish baseline performance metrics using simple, interpretable models (e.g., linear regression on a few key descriptors, nearest-neighbor). Compare the gap between your complex model and the baseline across datasets.

Table 1: Performance Discrepancy Analysis for a Hypothetical Reaction Yield Prediction Model

| Dataset | Size (Reactions) | Model (GNN) MAE | Baseline (Linear) MAE | Performance Gap (MAE Reduction) | Key Note |
|---|---|---|---|---|---|
| Training | 15,000 | 8.5% | 15.2% | 6.7% | Optimized during training |
| Validation (Random Split) | 3,000 | 9.1% | 15.5% | 6.4% | Used for early stopping |
| Validation (Scaffold Split) | 3,000 | 14.7% | 16.1% | 1.4% | Reveals overfitting to scaffolds |
| External Test (Novel Lab) | 2,500 | 17.3% | 16.8% | -0.5% | Model fails to beat baseline |
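The performance gap column is simply the baseline MAE minus the model MAE. A short sketch using the hypothetical figures from Table 1 makes the comparison explicit and flags datasets where the complex model adds nothing over the baseline; the function and variable names are illustrative.

```python
def performance_gap(model_mae, baseline_mae):
    """MAE reduction achieved by the complex model relative to the baseline."""
    return baseline_mae - model_mae


# (model MAE, baseline MAE) in yield percentage points, taken from Table 1.
results = {
    "Training": (8.5, 15.2),
    "Validation (Random Split)": (9.1, 15.5),
    "Validation (Scaffold Split)": (14.7, 16.1),
    "External Test (Novel Lab)": (17.3, 16.8),
}

gaps = {name: round(performance_gap(m, b), 1) for name, (m, b) in results.items()}
for name, gap in gaps.items():
    note = "no gain over baseline" if gap <= 0 else ""
    print(f"{name:30s} gap = {gap:+.1f} {note}")
```

A gap that shrinks from random split to scaffold split to external data, as in this table, is the pattern to look for: the complex model's advantage was tied to the training distribution.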

Experimental Protocol: Detecting Dataset Shift

- Descriptor Calculation: For each reaction in all sets (Train, Val, External), compute a set of relevant molecular descriptors (e.g., ECFP6 fingerprints, molecular weight, number of rotatable bonds) for each reactant and product.

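- Dimensionality Reduction: Use PCA or t-SNE to reduce the descriptors to two dimensions (the first two principal components for PCA, or the two embedding axes for t-SNE). Fit the projection on the pooled data so that all sets share the same axes.

- Distribution Comparison: Plot the density of points from each dataset in this 2D space. Visual overlap indicates similarity; separation indicates shift.

- Statistical Test: Perform a two-sample Kolmogorov-Smirnov test on the first principal component scores (from PCA) between the training and external sets. A p-value < 0.05 suggests a significant distribution shift. With thousands of reactions even small shifts reach significance, so report the KS statistic alongside the p-value.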
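The statistical test step can be illustrated with a dependency-free two-sample KS implementation. In practice `scipy.stats.ks_2samp` is the usual choice; the version below is a sketch that uses a common asymptotic approximation for the p-value, and the simulated first-component scores are hypothetical stand-ins for real PCA output.

```python
import math
import random


def ks_two_sample(a, b):
    """Two-sample KS statistic D and an asymptotic p-value approximation."""
    a, b = sorted(a), sorted(b)
    n, m = len(a), len(b)
    i = j = 0
    d = 0.0
    while i < n and j < m:
        x = min(a[i], b[j])
        # Advance both empirical CDFs past x, then compare them.
        while i < n and a[i] <= x:
            i += 1
        while j < m and b[j] <= x:
            j += 1
        d = max(d, abs(i / n - j / m))
    en = math.sqrt(n * m / (n + m))
    lam = (en + 0.12 + 0.11 / en) * d
    if lam < 0.2:  # the series below does not converge near zero; p is ~1 there
        return d, 1.0
    p = 2 * sum((-1) ** (k - 1) * math.exp(-2 * k * k * lam * lam) for k in range(1, 101))
    return d, min(max(p, 0.0), 1.0)


# Hypothetical PC1 scores: the external set is shifted relative to training.
rng = random.Random(0)
pc1_train = [rng.gauss(0.0, 1.0) for _ in range(500)]
pc1_external = [rng.gauss(0.8, 1.0) for _ in range(300)]
d_stat, p_value = ks_two_sample(pc1_train, pc1_external)
print(f"KS statistic = {d_stat:.3f}, p = {p_value:.2e}")
```

The KS statistic is the largest gap between the two empirical distribution functions, so it stays interpretable as an effect size even when the p-value is vanishingly small.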

Experimental Protocol: Adversarial Validation for Split Rigor

- Combine and Label: Combine your training and external test set data. Label all training set examples as 0 and external test set examples as 1.

- Train a Classifier: Train a simple classifier (e.g., logistic regression on molecular fingerprints) to distinguish between the two sets.

- Evaluate: If the classifier can accurately distinguish them (AUC > 0.7), the sets are intrinsically different, explaining the performance drop. Your splits should aim for an AUC ~0.5.
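The three steps above can be sketched without external libraries. This is a minimal illustration under stated assumptions: a small gradient-descent logistic regression stands in for a library classifier, two simulated numeric features stand in for molecular fingerprints, and the AUC is computed on a held-out half of the pooled data so that it is not inflated by overfitting. All function names are hypothetical.

```python
import math
import random


def auc(scores, labels):
    """ROC AUC via pairwise comparison; ties count as half a win."""
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def fit_logistic(X, y, lr=0.1, epochs=200):
    """Batch gradient-descent logistic regression; returns a scoring function."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            z = b + sum(wj * xj for wj, xj in zip(w, xi))
            err = 1 / (1 + math.exp(-z)) - yi
            gb += err
            for j, xj in enumerate(xi):
                gw[j] += err * xj
        w = [wj - lr * g / len(X) for wj, g in zip(w, gw)]
        b -= lr * gb / len(X)
    return lambda xi: b + sum(wj * xj for wj, xj in zip(w, xi))


def adversarial_auc(train_X, external_X, seed=0):
    """Label train as 0 and external as 1, fit on one half, score the other half."""
    X = list(train_X) + list(external_X)
    y = [0] * len(train_X) + [1] * len(external_X)
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    half = len(idx) // 2
    fit_idx, eval_idx = idx[:half], idx[half:]
    score = fit_logistic([X[i] for i in fit_idx], [y[i] for i in fit_idx])
    return auc([score(X[i]) for i in eval_idx], [y[i] for i in eval_idx])


rng = random.Random(0)


def sample(n, shift):
    # Hypothetical two-feature descriptor vectors; `shift` moves the first feature.
    return [[rng.gauss(shift, 1), rng.gauss(0, 1)] for _ in range(n)]


train = sample(200, 0.0)
auc_shifted = adversarial_auc(train, sample(200, 1.5))
auc_similar = adversarial_auc(train, sample(200, 0.0))
print(f"shifted external set: AUC = {auc_shifted:.2f}; similar set: AUC = {auc_similar:.2f}")
```

Inspecting which features the discriminating classifier relies on can also point to the source of the shift, such as a solvent or vendor that appears in only one of the sets.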

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| RDKit | Open-source cheminformatics toolkit for calculating molecular descriptors, fingerprints, and performing scaffold splits. |
| SHAP/LIME Libraries | Model-agnostic explanation tools to identify which input features (e.g., atom positions) a prediction is most sensitive to. |
| Chemical Diversity Analysis Software (e.g., ChEMBL Python Client) | To assess the structural coverage and bias of your reaction dataset against large public corpora. |
| Adversarial Validation Script | Custom Python script to train and evaluate the set-discrimination classifier as per the protocol above. |
| Graph Neural Network (GNN) Framework (e.g., DGL, PyTorch Geometric) | For building and training the primary reaction prediction models. |
| Standardized Reaction Representation (e.g., Reaction SMILES, RInChI) | Ensures consistent encoding of reaction data across different datasets, minimizing preprocessing artifacts. |

Technical Support Center: Troubleshooting Clever Hans Predictors in Chemical ML

Frequently Asked Questions (FAQs)