Mastering DeePEST-OS: A Comprehensive Guide to Input Preparation and Data Requirements for Drug Development


Abstract

This article provides a definitive guide for researchers, scientists, and drug development professionals on preparing inputs and meeting data requirements for DeePEST-OS (Deep Learning-based Platform for Evaluating and Simulating Therapeutics - Omics and Structural). We detail the foundational concepts, step-by-step methodologies, common troubleshooting solutions, and validation protocols essential for successful simulation of pharmacokinetic/pharmacodynamic (PK/PD) profiles and efficacy endpoints. The guide covers everything from raw data sourcing and pre-processing to optimizing parameters and benchmarking results against experimental data, ensuring robust and reliable predictions for accelerating therapeutic discovery.

What is DeePEST-OS? Foundational Inputs and Core Data Prerequisites

Within the broader thesis on DeePEST-OS (Deep Learning for Pharmacokinetic, Efficacy, Safety, and Toxicity - Outcome Simulation) input preparation and data requirements, this document defines the platform's purpose and scope. DeePEST-OS is a predictive artificial intelligence framework designed to integrate heterogeneous data streams to forecast compound behavior across the critical PK, efficacy, safety, and toxicity axes in preclinical and early clinical development.

Purpose and Strategic Scope

The primary purpose of DeePEST-OS is to de-risk drug candidates and optimize pipeline prioritization by generating multi-faceted outcome predictions from complex biological and chemical data. Its scope encompasses the transition from late discovery through Phase II clinical trials.

Table 1: DeePEST-OS Predictive Scope and Impact

| Module | Prediction Target | Typical Input Data | Development Phase Impact |

|---|---|---|---|

| PK/ADME | Clearance, Volume of Distribution, Bioavailability, Half-life | Chemical structure, in vitro microsome/hepatocyte data, physicochemical properties, transporter assays | Lead Optimization to Preclinical |

| Efficacy | Target engagement, biomarker modulation, primary endpoint probability | In vitro potency, omics signatures, in vivo efficacy model results, target pathway data | Preclinical to Phase II |

| Safety/Toxicity | Hepatotoxicity, cardiotoxicity (e.g., hERG), genotoxicity, organ-specific lesions | High-content imaging, transcriptomics (e.g., TempO-Seq), histopathology, clinical chemistry, safety pharmacology | Preclinical to Phase I |

Application Notes: Data Requirements and Integration

Successful DeePEST-OS implementation requires curated, high-quality data. The system employs a hybrid architecture, combining convolutional neural networks (CNNs) for structural data, recurrent neural networks (RNNs) for temporal data, and graph neural networks (GNNs) for pathway interactions.

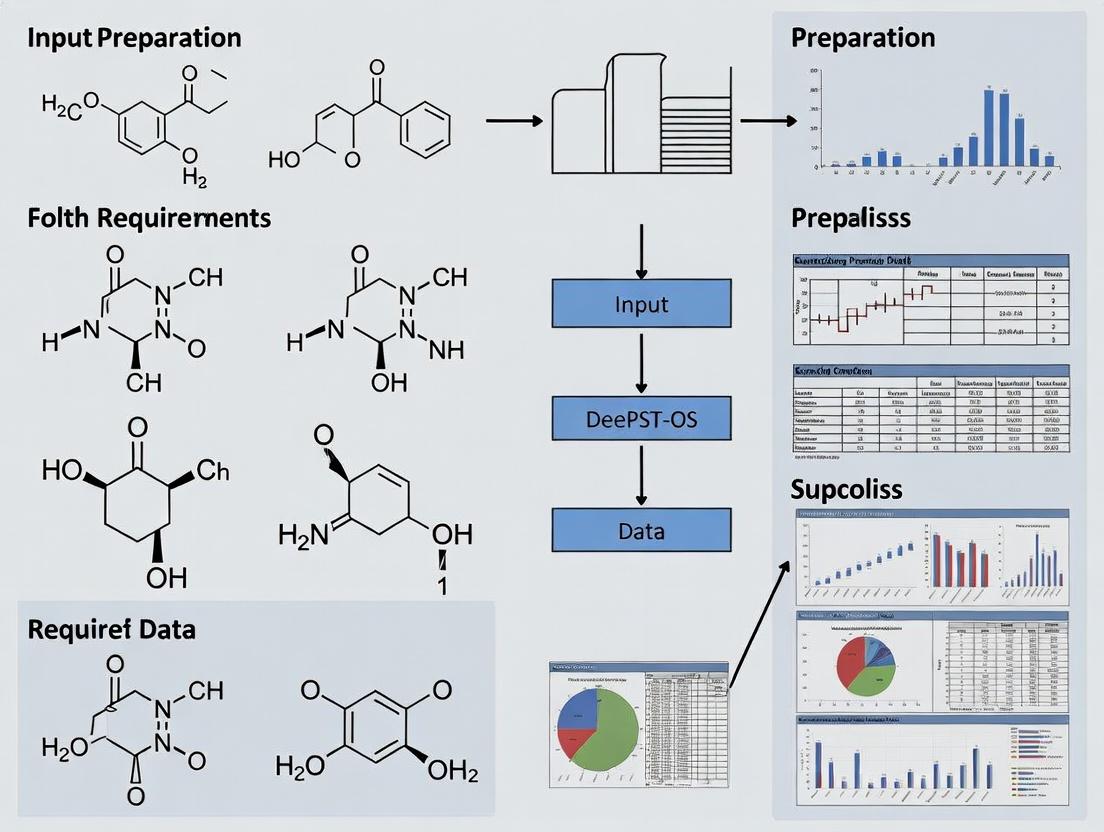

Diagram 1: DeePEST-OS Data Integration Workflow

Experimental Protocols for Input Data Generation

Protocol 4.1: High-Content Transcriptomics for Toxicity Signature

Purpose: Generate TempO-Seq data for hepatotoxicity prediction input.

- Cell Seeding: Plate HepaRG cells in 96-well plates at 50,000 cells/well in Williams' E medium. Differentiate for 14 days.

- Compound Treatment: Treat cells with test compound at 5 concentrations (0.1, 1, 10, 30, 100 µM) and DMSO control for 24h (n=4 wells/concentration).

- TempO-Seq Assay:

  - Aspirate medium, lyse cells with 50 µL TempO-Seq lysis buffer.

  - Add Detector Oligo cocktail (1:100 dilution) and incubate at 37°C for 1h.

  - Perform two-step PCR: (i) Target amplification (12 cycles), (ii) Indexing (18 cycles).

  - Pool libraries, clean up with SPRI beads, and quantify by qPCR.

- Sequencing & Analysis: Sequence on Illumina NextSeq 500 (75bp single-end). Align reads to TempO-Seq human probe set, generate counts matrix for ~3,000 toxicity-related genes.

- Data Formatting: Normalize counts to transcripts per million (TPM). Format as a structured CSV file with columns [Compound_ID, Concentration, Gene_ID, TPM_Value] for DeePEST-OS ingestion.

Protocol 4.2: Multi-species Microsomal Stability for PK Prediction

Purpose: Generate intrinsic clearance (CLint) data across species.

- Reaction Setup: Prepare 0.5 mg/mL liver microsomes (human, rat, dog) in 0.1M phosphate buffer (pH 7.4).

- Pre-incubation: Aliquot 95µL microsome mix per well (96-deep well plate). Pre-warm at 37°C for 5 min.

- Initiation: Add 5µL of test compound (final 1 µM) and 50µL of NADPH regenerating solution (final 1 mM NADP+, 3 mM Glucose-6-P, 0.4 U/mL G6PDH) to start reaction.

- Time Course Sampling: Remove 50µL aliquots at T = 0, 5, 10, 20, 30, 45 min. Quench immediately with 100µL ice-cold acetonitrile containing internal standard.

- Analysis: Centrifuge quenched samples. Analyze supernatant via LC-MS/MS. Plot ln(peak area ratio) vs. time.

- Calculation: Calculate in vitro CLint (µL/min/mg) = (0.693 / t1/2) * (Volume incubation / mg microsomal protein).

- Data Formatting: Report in a table for DeePEST-OS with columns [Compound_ID, Species, CLint, t1/2, %Remaining_at_45min].
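The half-life and CLint calculation in Protocol 4.2 can be sketched as follows. This is a minimal illustration, assuming first-order depletion and an ordinary least-squares fit of ln(peak area ratio) against time; the function names, the synthetic time course, and the 1000 µL / 0.5 mg scaling (equivalent to 0.5 mg/mL) are illustrative assumptions rather than DeePEST-OS code. It uses ln 2 where the protocol rounds to 0.693.

```python
import math

def fit_half_life(times_min, peak_area_ratios):
    """Fit ln(ratio) vs. time by least squares; return (k in 1/min, t1/2 in min)."""
    ys = [math.log(r) for r in peak_area_ratios]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    k = -sxy / sxx  # depletion rate constant is the negative slope
    return k, math.log(2) / k

def clint_ul_min_mg(t_half_min, incubation_volume_ul, protein_mg):
    """CLint = (ln 2 / t1/2) * (incubation volume / mg microsomal protein)."""
    return (math.log(2) / t_half_min) * (incubation_volume_ul / protein_mg)

def percent_remaining(k, time_min):
    """Percent of parent compound remaining under first-order decay."""
    return 100.0 * math.exp(-k * time_min)

# Synthetic time course: exact first-order decay with a 20 min half-life.
times = [0, 5, 10, 20, 30, 45]
ratios = [math.exp(-math.log(2) / 20 * t) for t in times]

k, t_half = fit_half_life(times, ratios)
clint = clint_ul_min_mg(t_half, incubation_volume_ul=1000.0, protein_mg=0.5)
remaining_45 = percent_remaining(k, 45)
print(f"t1/2 = {t_half:.1f} min, CLint = {clint:.1f} uL/min/mg, "
      f"remaining at 45 min = {remaining_45:.1f}%")
```

With real LC-MS/MS data the fit is usually restricted to the log-linear portion of the curve, which this sketch does not attempt.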

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for DeePEST-OS Input Studies

| Reagent/Kit | Vendor Example | Function in DeePEST-OS Context |

|---|---|---|

| HepaRG Differentiated Cells | Thermo Fisher Scientific | Provides metabolically competent human liver model for in vitro toxicity and metabolism studies. |

| Human/Rat/Dog Liver Microsomes | Corning Life Sciences | Enzyme source for measuring intrinsic clearance and metabolic stability across species. |

| TempO-Seq Targeted Transcriptomics Assay | BioSpyder Technologies | Enables high-content, amplification-based transcriptomics for toxicity pathway profiling without RNA isolation. |

| hERG Ion Channel Expressing Cell Line | Charles River Laboratories | Essential for in vitro cardiotoxicity risk assessment (potassium channel blockade). |

| Nucleofector Kit for Primary Cells | Lonza | Enables efficient transfection for mechanistic in vitro studies (e.g., CRISPR knockouts). |

| Phospho-Kinase Array Kit | R&D Systems | Multiplexed detection of phosphorylation changes in key signaling nodes for efficacy pathway analysis. |

| Panlabs PD/PK Online Services | Eurofins Discovery | Provides standardized in vivo pharmacokinetic data for model training and validation. |

| Matrigel Matrix | Corning Life Sciences | Used for 3D cell culture and xenograft studies to improve physiological relevance of efficacy data. |

Signaling Pathway Integration

DeePEST-OS maps compound effects onto canonical pathways to predict mechanism-based efficacy and toxicity.

Diagram 2: Key Hepatotoxicity Signaling Pathways Mapped

Within the DeePEST-OS (Deep Phenotypic Efficacy and Safety Target Operating System) research framework, precise input preparation is foundational. This document establishes a taxonomy for data inputs—Mandatory, Optional, and Conditional—to ensure robust, reproducible, and computationally efficient modeling for drug discovery. This classification directly supports the broader thesis on standardizing and optimizing data requirements for predictive toxicology and efficacy modeling.

Data Input Classification Protocol

Inputs are classified based on their necessity for core model function, their impact on predictive accuracy, and their dependency on specific experimental or clinical scenarios.

Table 1: Data Input Classification Criteria

| Classification | Definition | Impact on Model | Failure Consequence |

|---|---|---|---|

| Mandatory | Data absolutely required for model initialization and execution. Non-negotiable. | Model cannot run without it. | Complete failure or undefined output. |

| Conditional | Data required only when specific pre-defined conditions are met. | Enhances model specificity and accuracy for a defined scenario. | Loss of scenario-specific insight; potential for generic or less accurate output. |

| Optional | Data that provides supplementary or refining information. | May improve model confidence, granularity, or interpretability. | Model operates at baseline performance with core outputs. |

Application Notes for DeePEST-OS Modules

Target Identification Module

- Mandatory: Primary target gene symbol (HUGO nomenclature), canonical protein sequence.

- Conditional: Known somatic mutations (e.g., from COSMIC) for oncology targets; splice variant information.

- Optional: Tertiary protein structure (PDB ID), quantitative tissue expression profiles (GTEx).

Compound Profiling Module

- Mandatory: Canonical SMILES string, molecular weight, logP.

- Conditional: Metabolite SMILES (for prodrugs); known active/inactive stereoisomer data.

- Optional: NMR or mass spectrometry fragmentation patterns; clinical pharmacokinetic parameters (e.g., Cmax, t1/2).

Phenotypic Screening Module

- Mandatory: Dose-response matrix (concentration vs. % inhibition/viability), positive & negative control identifiers.

- Conditional: Time-course data for dynamic assays; cell line STR profiling data.

- Optional: High-content imaging raw data (e.g., Cell Painting); orthogonal assay readouts.
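The Mandatory/Conditional/Optional taxonomy lends itself to a simple pre-submission check. The sketch below encodes the Compound Profiling Module rules listed above; the field names (`canonical_smiles`, `is_prodrug`, and so on) and the `check_inputs` helper are hypothetical and do not reflect a documented DeePEST-OS schema.

```python
# Hypothetical specification for the Compound Profiling Module.
COMPOUND_PROFILING = {
    "mandatory": ("canonical_smiles", "molecular_weight", "logp"),
    # Conditional fields map to a predicate deciding whether they apply.
    "conditional": {
        "metabolite_smiles": lambda rec: bool(rec.get("is_prodrug")),
    },
    "optional": ("ms_fragmentation", "clinical_pk"),
}

def check_inputs(record, spec):
    """Report which mandatory and applicable conditional fields are missing."""
    missing_mandatory = [f for f in spec["mandatory"] if record.get(f) is None]
    missing_conditional = [
        field
        for field, applies in spec["conditional"].items()
        if applies(record) and record.get(field) is None
    ]
    return {
        "can_run": not missing_mandatory,  # only mandatory gaps block execution
        "missing_mandatory": missing_mandatory,
        "missing_conditional": missing_conditional,
    }

# Toy record: flagged as a prodrug but submitted without metabolite SMILES.
record = {
    "canonical_smiles": "CCO",
    "molecular_weight": 46.07,
    "logp": -0.31,
    "is_prodrug": True,
}
report = check_inputs(record, COMPOUND_PROFILING)
print(report)
```

A missing conditional field does not stop the run here; it is only reported, mirroring the "loss of scenario-specific insight" consequence in the classification table.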

Table 2: Quantitative Input Requirements for a Standard Dose-Response Analysis

| Input Parameter | Mandatory Threshold | Recommended Precision | Conditional Requirement |

|---|---|---|---|

| Compound Concentration | ≥ 10 data points | Log10 scale, minimum 3 replicates | Required for IC50/EC50 calculation |

| Negative Control (DMSO) | Yes | ≥ 12 replicates | Defines 100% baseline |

| Positive Control | Yes | ≥ 3 replicates | Defines 0% baseline (full effect) |

| Z'-Factor | > 0.5 | Calculated per plate | If < 0.5, data flagged for review |

| Signal-to-Noise Ratio | > 10 | Calculated from controls | Mandatory for high-content screens |
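For the plate-level quality gate, the Z'-factor can be computed directly from the control wells. The sketch below assumes the standard definition, Z' = 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|, and uses made-up control readings; the `flag_for_review` helper is an illustrative name, not a platform function.

```python
from statistics import mean, stdev

def z_prime(neg_controls, pos_controls):
    """Z'-factor from negative (e.g., DMSO) and positive control wells."""
    spread = 3 * (stdev(neg_controls) + stdev(pos_controls))
    window = abs(mean(neg_controls) - mean(pos_controls))
    return 1 - spread / window

def flag_for_review(z, threshold=0.5):
    """Plates at or below the threshold are flagged, per the table above."""
    return z <= threshold

# Made-up readings: 12 DMSO wells and 3 positive-control wells on one plate.
neg = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100]
pos = [5, 6, 4]

z = z_prime(neg, pos)
print(f"Z' = {z:.3f}, flagged = {flag_for_review(z)}")
```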

Experimental Protocol: Validating Conditional Input Requirements

Protocol Title: Establishing Conditionality for Mutation-Specific Drug Sensitivity Data.

Objective: To determine when patient-derived mutation data transitions from an Optional to a Conditional input for a kinase inhibitor efficacy model.

Materials: See "Scientist's Toolkit" below.

Method:

- Baseline Model Training: Train a base DeePEST-OS efficacy model using only Mandatory inputs (cell line identity, drug concentration, wild-type target sequence).

- Blinded Validation: Input a validation set of dose-response data without mutation status. Record predictive error (RMSE) for IC50.

- Conditional Enrichment: For the same validation set, input known driver mutations (e.g., EGFR L858R, BRAF V600E) as a supplemental data layer.

- Stratified Analysis: Segment the validation set into "Mutation-Present" and "Mutation-Absent" cohorts. Re-run predictions and calculate cohort-specific RMSE.

- Threshold Determination: Apply a predefined improvement threshold (e.g., ≥ 20% reduction in RMSE for the "Mutation-Present" cohort). If met, the mutation data is formally classified as Conditional for predicting sensitivity to compounds known to interact with that mutated target.
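The stratified analysis and threshold test can be expressed in a few lines. This sketch assumes each validation record carries an observed value plus predictions from the baseline and mutation-enriched runs; the record keys and example numbers are invented for illustration, and the 20% cut-off is the example threshold from the protocol.

```python
import math

def cohort_rmse(records, pred_key):
    """Root-mean-square error of one prediction column against observations."""
    return math.sqrt(
        sum((r[pred_key] - r["observed"]) ** 2 for r in records) / len(records)
    )

def rmse_reduction(records, mutation_present):
    """Fractional RMSE reduction from adding mutation data, for one cohort."""
    cohort = [r for r in records if r["mutation_present"] == mutation_present]
    base = cohort_rmse(cohort, "baseline_pred")
    enriched = cohort_rmse(cohort, "enriched_pred")
    return (base - enriched) / base

# Invented pIC50 values for a tiny validation set.
validation = [
    {"mutation_present": True, "observed": 7.0, "baseline_pred": 6.0, "enriched_pred": 6.8},
    {"mutation_present": True, "observed": 8.0, "baseline_pred": 7.0, "enriched_pred": 8.2},
    {"mutation_present": False, "observed": 5.0, "baseline_pred": 5.1, "enriched_pred": 5.1},
]

reduction_present = rmse_reduction(validation, mutation_present=True)
reduction_absent = rmse_reduction(validation, mutation_present=False)
is_conditional = reduction_present >= 0.20
print(f"Mutation-present reduction: {reduction_present:.0%}, conditional: {is_conditional}")
```

In practice the cohorts would be far larger, and a confidence interval on the reduction would be more informative than a single point estimate.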

Visualization of Input Decision Logic

Title: Input Classification Decision Tree

Title: Data Flow in DeePEST-OS Prediction Pipeline

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Input Validation

| Reagent / Material | Function in Protocol | Key Supplier Examples |

|---|---|---|

| Genomically Characterized Cell Lines (e.g., NCI-60, DepMap) | Provide biological context with known mutations, used to validate conditional input rules. | ATCC, Coriell Institute |

| Reference Compounds (e.g., kinase inhibitors with known mutation-specific efficacy) | Positive controls for establishing conditionality between genetic input and phenotypic output. | Selleck Chemicals, MedChemExpress |

| Cell Viability Assay Kits (e.g., CellTiter-Glo) | Generate mandatory quantitative dose-response data with high signal-to-noise. | Promega Corporation |

| STR Profiling Kits | Authenticate cell lines, a conditional input for all in vitro data submission. | Promega, ATCC |

| LC-MS/MS Systems | Generate optional but high-value pharmacokinetic/metabolite data for model refinement. | Waters, Sciex, Agilent |

The efficacy of the Data-enabled Pharmacological Efficacy and Safety Translator - Open Source (DeePEST-OS) platform is contingent upon the structured integration of multimodal foundational data. This article delineates the essential data types—Chemical, Biological, Omics, and Clinical—required as prerequisites for constructing predictive models of drug action and toxicity. Standardized input preparation across these domains is critical for generating reliable, reproducible outputs in computational drug development.

Chemical Data Prerequisites

Chemical data provides the structural and property-based foundation for understanding drug-target interactions and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles.

Core Chemical Data Types

- Small Molecule Structures: Canonical SMILES, InChI/InChIKey, 2D/3D molecular descriptors.

- Physicochemical Properties: LogP, pKa, molecular weight, polar surface area, rotatable bonds.

- ADMET Predictions: In silico predictions for permeability, cytochrome P450 inhibition, hERG liability.

- Interaction Data: Binding affinities (Ki, IC50, Kd), bioassay results from public repositories (ChEMBL, PubChem).

Application Note: Preparing Chemical Inputs for DeePEST-OS

Objective: To generate a standardized, curated chemical library file for DeePEST-OS ingestion.

Protocol:

- Data Sourcing: Download compound structures and associated bioactivity data from ChEMBL (version 33+).

- Standardization: Use RDKit (Python) to standardize SMILES (neutralize charges, remove salts, generate canonical tautomer).

- Descriptor Calculation: Compute a set of 200 molecular descriptors, structural fingerprints (e.g., Morgan/ECFP4), and key physicochemical properties.

- Curation & Filtering: Apply alert filters for reactive functional groups (e.g., pan-assay interference compounds, PAINS) and enforce property-based rules (e.g., molecular weight < 600 Da, LogP < 5).

- Formatting: Assemble data into the DeePEST-OS required CSV schema (`compound_id, standard_smiles, descriptor_1...N, pChEMBL_value`).

Table 1: Optimal Ranges for Drug-like Chemical Properties

| Property | Ideal Range for Oral Drugs | Common Threshold (Rule of 5) | Data Source Typical Variance |

|---|---|---|---|

| Molecular Weight (Da) | 200 - 500 | ≤ 500 | ± 2 Da (experimental) |

| Calculated LogP (cLogP) | 1 - 3 | ≤ 5 | ± 0.5 units (prediction) |

| Hydrogen Bond Donors | 0 - 2 | ≤ 5 | - |

| Hydrogen Bond Acceptors | 2 - 9 | ≤ 10 | - |

| Polar Surface Area (Ų) | 20 - 130 | - | ± 5 Ų |

| Rotatable Bonds | ≤ 7 | ≤ 10 (Veber rule; not part of the Rule of 5) | - |
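The four Lipinski thresholds in the table can be applied as a simple violation count. The sketch below assumes the property values have already been computed upstream (for example with RDKit); the function name and the two example compounds are hypothetical.

```python
def rule_of_five_violations(mw, clogp, hbd, hba):
    """Count how many of the four Rule-of-5 thresholds a compound exceeds."""
    checks = [
        mw <= 500,    # molecular weight (Da)
        clogp <= 5,   # calculated logP
        hbd <= 5,     # hydrogen bond donors
        hba <= 10,    # hydrogen bond acceptors
    ]
    return sum(not ok for ok in checks)

# Hypothetical property values for two compounds.
drug_like = rule_of_five_violations(mw=350.4, clogp=2.1, hbd=2, hba=5)
non_drug_like = rule_of_five_violations(mw=720.0, clogp=6.3, hbd=6, hba=12)
print(drug_like, non_drug_like)
```

A common convention is to tolerate at most one violation for oral candidates, but the cut-off is a project decision rather than something fixed by the table.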

Biological & Target Data Prerequisites

Biological data contextualizes chemical action within biological systems, focusing on target proteins, pathways, and cellular phenotypes.

Core Biological Data Types

- Target Information: Protein sequence (UniProt ID), family, 3D structure (PDB ID), biological function.

- Pathway Data: Involvement in KEGG, Reactome, or WikiPathways.

- Phenotypic Screening Data: High-content imaging results, cytotoxicity (IC50), functional assay outputs.

Protocol: Validating Target Engagement Data

Objective: To generate dose-response data for confirming compound-target interaction prior to Omics studies.

Method: In vitro Kinase Inhibition Assay (HTRF-based).

Reagents & Materials:

- Recombinant Kinase Protein: Purified human kinase domain.

- Substrate & ATP: Biotinylated peptide substrate and ATP.

- Detection Reagents: HTRF anti-phospho-antibody labeled with Europium cryptate, Streptavidin-XL665.

- Buffer System: Assay buffer with MgCl2, DTT, and detergent.

Procedure:

- In a low-volume 384-well plate, serially dilute the test compound in DMSO, then dilute in assay buffer.

- Add kinase, substrate, and ATP to initiate the reaction. Incubate for 60 minutes at 25°C.

- Stop the reaction by adding EDTA and detection reagents.

- Incubate for 1 hour and read fluorescence at 620 nm (Eu) and 665 nm (XL665) on a compatible plate reader.

- Calculate the ratio (665 nm/620 nm * 10,000) and fit the dose-response curve to determine IC50.
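The final calculation step can be sketched as below. The ratio formula is the one given in the procedure; the normalization to percent inhibition against uninhibited and fully inhibited control wells, and the log-linear interpolation used in place of a full four-parameter logistic fit, are simplifying assumptions for illustration. All readings are invented.

```python
import math

def htrf_ratio(f665, f620):
    """HTRF ratio: (665 nm / 620 nm) * 10,000."""
    return f665 / f620 * 10_000

def percent_inhibition(ratio, ratio_uninhibited, ratio_fully_inhibited):
    """Scale a well's ratio between the two control levels (0% to 100%)."""
    return 100.0 * (ratio_uninhibited - ratio) / (ratio_uninhibited - ratio_fully_inhibited)

def interpolate_ic50(concs, inhibitions):
    """Estimate IC50 by log-linear interpolation between bracketing points."""
    points = sorted(zip(concs, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(points, points[1:]):
        if i_lo <= 50.0 <= i_hi:
            if i_hi == i_lo:
                return c_lo
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # 50% inhibition not bracketed by the tested range

# Invented example: one well, then a four-point dose-response (µM, % inhibition).
well_ratio = htrf_ratio(f665=5000, f620=20000)
well_inhibition = percent_inhibition(well_ratio, ratio_uninhibited=4000, ratio_fully_inhibited=1000)
ic50 = interpolate_ic50([0.01, 0.1, 1, 10], [10, 30, 70, 95])
print(well_ratio, well_inhibition, ic50)
```

For reportable IC50 values a nonlinear curve fit (for instance with SciPy) is the usual choice; the interpolation here only gives a quick estimate.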

Diagram 1: HTRF Kinase Assay Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Biological Assays

| Reagent | Function & Application | Example Vendor/Product |

|---|---|---|

| Recombinant Proteins | Provide the purified target for biochemical interaction assays (e.g., kinases, GPCRs). | Sino Biological, Thermo Fisher |

| HTRF/Cisbio Assay Kits | Homogeneous, time-resolved FRET assays for quantifying kinase activity, protein-protein interactions. | Revvity, Cisbio |

| Cell Viability Probes | Measure cellular health and cytotoxicity (e.g., MTT, CellTiter-Glo). | Promega CellTiter-Glo |

| Fluorescent Dyes (Ca²⁺, ROS) | Indicator dyes for measuring intracellular signaling events and oxidative stress. | Thermo Fisher Fluo-4, Invitrogen |

| siRNA/shRNA Libraries | Enable targeted gene knockdown for functional validation of targets. | Horizon Discovery |

Omics Data Prerequisites

Omics data offers a systems-level view of drug response, capturing global molecular changes.

Core Omics Data Types

- Transcriptomics: Bulk or single-cell RNA-Seq data (raw FASTQ or processed count matrices).

- Proteomics: Mass spectrometry data (raw .RAW/.d files or identified protein/peptide tables).

- Metabolomics: LC-MS/NMR spectral data and identified metabolite abundance.

- Epigenomics: ChIP-Seq, ATAC-Seq data for chromatin state changes.

Protocol: Bulk RNA-Seq for Transcriptomic Profiling

Objective: To generate gene expression profiles of treated vs. untreated cell lines for DeePEST-OS pathway analysis.

Workflow:

- Cell Treatment & Lysis: Treat triplicate cultures with compound at IC10 & IC50 for 24h. Lyse cells in TRIzol.

- RNA Extraction: Isolate total RNA using chloroform phase separation and silica-membrane columns. Assess integrity (RIN > 8.5).

- Library Preparation: Use a stranded mRNA-seq kit (e.g., Illumina TruSeq) for poly-A selection, fragmentation, cDNA synthesis, and adapter ligation.

- Sequencing: Pool libraries and sequence on an Illumina NovaSeq platform (PE 150 bp) to a depth of 25-30 million reads per sample.

- Bioinformatic Processing: Align reads to the human reference genome (GRCh38) using STAR. Generate gene-level counts with featureCounts.

Diagram 2: Bulk RNA-Seq Analysis Pipeline

Signaling Pathway Visualization

Diagram 3: Drug to Omics Signaling Path

Clinical Data Prerequisites

Clinical data bridges preclinical findings to human outcomes, enabling safety and efficacy prediction.

Core Clinical Data Types

- Electronic Health Records (EHR): Demographics, diagnoses (ICD codes), medications, lab values.

- Clinical Trial Data: Patient outcomes, adverse events (CTCAE), pharmacokinetics, biomarkers from databases like ClinicalTrials.gov.

- Real-World Data (RWD): Longitudinal claims data, patient registries.

- Biomarker Data: Genomic (germline/somatic), imaging, and digital biomarker readouts.

Application Note: Curating Clinical Trial Data for Modeling

Objective: To extract and structure key efficacy and safety endpoints from public clinical trial results for DeePEST-OS training.

Protocol:

- Source Identification: Query ClinicalTrials.gov for Phase II/III trials of drugs within the therapeutic area of interest.

- Data Extraction: Use the ClinicalTrials.gov API to programmatically download structured results data (XML from the legacy API, JSON from the current version). Manually extract key tabular data from PDFs of published papers where necessary.

- Standardization: Map free-text adverse event terms to preferred terms in the Medical Dictionary for Regulatory Activities (MedDRA). Standardize lab value units.

- Structuring: Create two primary tables:

  - Patient Outcomes: `trial_id, patient_id, arm, primary_endpoint_result, response_status`.

  - Adverse Events: `trial_id, patient_id, meddra_pt, ctcae_grade, relatedness`.

- De-identification & Linking: Ensure all data is anonymized. Create a compound-trial linkage table via NCT numbers and drug names.

Table 3: Common Efficacy & Safety Endpoints in Oncology Trials

| Data Type | Endpoint | Typical Measurement | Data Format for DeePEST-OS |

|---|---|---|---|

| Efficacy | Overall Response Rate (ORR) | Proportion of patients with PR or CR | Float (0-1) |

| Efficacy | Progression-Free Survival (PFS) | Time from treatment to progression/death | Censored time-to-event |

| Efficacy | Biomarker Level (e.g., PSA) | Concentration in serum at baseline & follow-up | Continuous numeric (ng/mL) |

| Safety | Incidence of Grade ≥3 AE | Proportion of patients with severe event | Float (0-1) |

| Safety | Lab Abnormality (e.g., Neutropenia) | Lowest recorded absolute neutrophil count (ANC) | Continuous numeric (cells/µL) |

| PK/PD | Cmax, AUC | Peak and total drug exposure | Continuous numeric (Cmax: ng/mL; AUC: ng·h/mL) |

This document provides detailed application notes and protocols for sourcing raw data, a critical phase in preparing inputs for the DeePEST-OS (Deep Learning for Predictive Efficacy, Safety, and Toxicity - Open Science) framework. The broader thesis investigates optimal data requirements and preparation pipelines to train robust, generalizable models for drug development. Sourcing high-quality, standardized raw data from authoritative repositories is the foundational step.

The following repositories are core to sourcing chemical, biological, and omics data for DeePEST-OS model training.

Table 1: Core Data Repositories for DeePEST-OS Input Preparation

| Repository | Primary Data Domain | Key Data Types | Access Method | Data Standards Employed | Update Frequency |

|---|---|---|---|---|---|

| PubChem | Chemical Biology | Small molecules, bioactivities, pathways, genes | Web API, FTP | InChI, SMILES, SDF, CID | Daily |

| ChEMBL | Drug Discovery | Bioactive molecules, binding data, ADMET | Web API, Downloads | ChEMBL ID, Standardized InChI | Quarterly |

| UniProt | Protein Science | Protein sequences, functional annotation, variants | REST API, FTP | FASTA, UniProtKB ID, EC number | Weekly |

| GEO (NCBI) | Functional Genomics | Gene expression, epigenomics, SNP arrays | Web Interface, FTP | MIAME, MINSEQE, SOFT format | Continuous |

| PDB | Structural Biology | 3D macromolecular structures | REST API, FTP | PDBx/mmCIF, PDB ID | Weekly |

| DrugBank | Pharmaceuticals | Drug targets, interactions, pathways | Web API, Download | DrugBank ID, ATC codes | Bi-annual |

| CTD | Toxicology | Chemical-gene-disease interactions | Web API, Downloads | MeSH, CAS RN, Gene ID | Monthly |

| ArrayExpress | Functional Genomics | Transcriptomics, proteomics data | API, FTP | MIAME, MINSEQE, MAGE-TAB | Continuous |

Application Notes & Protocols

Protocol: Building a Curated Chemical-Bioactivity Dataset from PubChem and ChEMBL

Objective: Assemble a standardized dataset linking small molecules to quantitative bioactivity outcomes (e.g., IC50, Ki) for target protein prediction.

Materials & Reagents:

- Computational Environment: Python 3.9+ with `requests`, `pandas`, `rdkit` libraries.

- Data Sources: PubChem PUG REST API, ChEMBL SQLite/web client.

Procedure:

- Define Target List: From UniProt, obtain a list of target protein accession IDs (e.g., P00533 for EGFR).

- ChEMBL Data Extraction:

a. Query the ChEMBL API for all compounds with reported bioactivities (IC50, Ki) against the target list.

b. Filter for human targets and exact measurements (standard relation "=").

c. Extract compound SMILES, standard InChI Key, canonical ChEMBL ID, standard value (nM), and standard type.

- PubChem Data Augmentation:

a. Using the list of InChI Keys from ChEMBL, query PubChem's `identity` service to obtain PubChem CIDs.

b. For each CID, use the `property` endpoint to fetch molecular weight, logP, hydrogen bond donor/acceptor count.

c. Use the `classification` endpoint to gather pharmacological activity classifications.

- Data Integration & Standardization:

a. Merge ChEMBL and PubChem data on InChI Key.

b. Standardize SMILES strings using RDKit's `Chem.MolToSmiles(Chem.MolFromSmiles())` with canonicalization.

c. Convert all bioactivity values to -log10(molar concentration) to create a uniform pActivity value.

d. Flag and handle duplicates, keeping the highest confidence measurement.

- Output: A curated CSV file with columns: `ChEMBL_ID, PubChem_CID, Standard_SMILES, InChI_Key, Target_UniProt_ID, pActivity, Assay_Type, Molecular_Weight, LogP`.
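The unit conversion and duplicate handling in the integration step can be sketched as follows. Because the extracted standard values are in nM, pActivity reduces to 9 − log10(value in nM). The `confidence` field used to pick among duplicates is a hypothetical column (the protocol does not say how confidence is scored), and the InChI Keys shown are placeholders.

```python
import math

def p_activity(standard_value_nm):
    """-log10 of the molar concentration, for a value reported in nM."""
    return 9.0 - math.log10(standard_value_nm)

def deduplicate(records):
    """Keep one record per (compound, target): the highest-confidence one."""
    best = {}
    for rec in records:
        key = (rec["InChI_Key"], rec["Target_UniProt_ID"])
        if key not in best or rec["confidence"] > best[key]["confidence"]:
            best[key] = rec
    return list(best.values())

# Placeholder records: two measurements of one compound, one of another.
raw = [
    {"InChI_Key": "KEY-A", "Target_UniProt_ID": "P00533", "standard_value_nm": 10.0, "confidence": 8},
    {"InChI_Key": "KEY-A", "Target_UniProt_ID": "P00533", "standard_value_nm": 25.0, "confidence": 9},
    {"InChI_Key": "KEY-B", "Target_UniProt_ID": "P00533", "standard_value_nm": 1000.0, "confidence": 9},
]

curated = deduplicate(raw)
for rec in curated:
    rec["pActivity"] = p_activity(rec["standard_value_nm"])
print(curated)
```

An alternative to keeping a single measurement is to aggregate duplicates (for example, the median pActivity), which may be preferable when replicate assays are equally trusted.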

Protocol: Sourcing and Preprocessing Transcriptomic Data from GEO

Objective: Download and minimally process raw RNA-seq or microarray data from GEO for subsequent feature extraction in toxicity/safety modeling.

Materials & Reagents:

- Software: `GEOquery` R/Bioconductor package, `SRAtoolkit` (for SRA data), `FastQC`, `MultiQC`.

- Computational Resources: High-performance computing cluster for large-scale RNA-seq alignment.

Procedure:

- Study Identification: Use GEO's advanced search with MeSH terms (e.g., "drug-induced liver injury", "hepatotoxicity") and filter for "Series" with "Expression profiling by high throughput sequencing".

- Metadata Retrieval:

a. Using the GEO Series accession (GSEXXXXX), run `gse <- getGEO("GSEXXXXX", GSEMatrix = TRUE)` in R.

b. Extract phenotypic data (`pData(phenoData(gse[[1]]))`) including treatment, dose, timepoint, and responder status.

- Raw Data Acquisition:

a. For microarray data: Download the Series Matrix File via `getGEOfile()`.

b. For RNA-seq: Identify SRA run accessions (SRRXXXX) from the `supplementary_file` column.

c. Use `prefetch` and `fasterq-dump` from SRA Toolkit to download FASTQ files.

- Quality Control & Logging:

a. Run `FastQC` on all FASTQ files.

b. Aggregate reports using `MultiQC` to generate a summary of per-base sequence quality, adapter contamination, etc.

c. Document and note any batches or outliers.

- Output: A structured directory containing: (1) `metadata.csv` of samples, (2) raw FASTQ files or CEL files, (3) a `QC_report.html` from MultiQC. This serves as the input for the next DeePEST-OS pipeline stage (e.g., alignment/quantification).

Visualization of Data Sourcing Workflows

Diagram: DeePEST-OS Raw Data Sourcing Logic

Title: Data Sourcing Logic for Model Input Preparation

Diagram: ChEMBL & PubChem Data Integration Protocol

Title: Chemical Bioactivity Data Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Data Sourcing & Curation Experiments

| Item/Reagent | Function in Protocol | Example/Supplier | Notes for DeePEST-OS |

|---|---|---|---|

| RDKit | Chemical informatics toolkit for molecule standardization, descriptor calculation, and substructure search. | Open-source (rdkit.org) | Critical for ensuring consistent molecular representation from diverse sources. |

| Bioconductor (GEOquery) | R package for querying, downloading, and parsing GEO metadata and data into R data structures. | Open-source (bioconductor.org) | Primary tool for reproducible acquisition of transcriptomic metadata from GEO. |

| SRA Toolkit | Suite of tools for downloading, extracting, and converting sequencing data from SRA databases. | NCBI (github.com/ncbi/sra-tools) | Required for accessing the raw FASTQ files linked from GEO RNA-seq studies. |

| PubChem PUG REST API | Programmatic interface to search, retrieve, and integrate all PubChem data. | NIH PubChem | The most flexible and powerful method for batch retrieval of compound data. |

| ChEMBL web client/API | Interface for extracting curated bioactivity data using SQL-like queries or RESTful calls. | EMBL-EBI | Provides highly curated, target-annotated activity data. Prefer over less curated sources. |

| Custom Python Scripts | Automate multi-repository queries, data merging, and standardization pipelines. | In-house development | Essential for creating reproducible, version-controlled data preparation pipelines. |

| High-Performance Computing (HPC) Cluster | Processing large omics datasets (e.g., aligning RNA-seq reads). | Institutional resource | Necessary for scaling data preprocessing beyond pilot studies. |

This application note details the operational workflow of DeePEST-OS (Deep learning framework for Predicting Essential, Synthetic-lethal, and druggable Targets in Oncology using multi-omics data), a tool central to the broader thesis research on DeePEST-OS input preparation and data requirements. The system integrates multi-omics data to prioritize therapeutic targets in cancer.

The DeePEST-OS Core Workflow

The workflow is executed in four sequential phases.

Phase 1: Input Data Preparation and Requirements

Primary Data Requirements: DeePEST-OS requires multi-omics inputs from patient-matched tumor samples. The minimum data requirement is specified below.

Table 1: Minimum Input Data Requirements for DeePEST-OS

| Data Type | Format | Minimum Coverage/Depth | Purpose in Model |

|---|---|---|---|

| Whole Exome Sequencing (WES) | FASTQ + VCF | 100x mean coverage | Identifies somatic mutations, copy number variants (CNVs). |

| RNA Sequencing (RNA-seq) | FASTQ + Count Matrix | 30 million paired-end reads | Quantifies gene expression and fusion transcripts. |

| Methylation Array (e.g., 850K) | IDAT files or beta matrix | >90% of probes with detection p-value < 0.01 | Profiles promoter and enhancer methylation status. |

| Clinical Data | CSV/TSV | Staging, subtype, treatment history | Contextualizes predictions and stratifies outputs. |

Protocol 2.1.1: Pre-processing of Somatic Variants

- Alignment: Align WES reads to the GRCh38 reference genome using BWA-MEM (v0.7.17).

- Variant Calling: Call somatic SNVs and indels using Mutect2 (GATK v4.2) with a matched normal sample.

- Annotation: Annotate VCF files using ANNOVAR and dbNSFP to obtain functional predictions (e.g., SIFT, PolyPhen-2).

- Filtering: Retain variants with PASS filter, read depth ≥ 20, and variant allele frequency (VAF) ≥ 0.05.

- Formatting: Generate a binary (0/1) matrix of genes with at least one nonsynonymous mutation per sample.
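The filtering and formatting steps can be sketched on already-annotated variant records. The record keys and the set of consequence labels treated as nonsynonymous are illustrative assumptions; real annotation output should be mapped onto whatever labels the chosen annotator emits. The thresholds are those stated in the protocol.

```python
# Consequence labels counted as nonsynonymous (illustrative; adjust to the annotator).
NONSYNONYMOUS = {
    "nonsynonymous SNV", "stopgain", "stoploss",
    "frameshift insertion", "frameshift deletion",
}

def passes_filters(variant, min_depth=20, min_vaf=0.05):
    """PASS filter, read depth >= 20, and VAF >= 0.05."""
    return (
        variant["filter"] == "PASS"
        and variant["depth"] >= min_depth
        and variant["vaf"] >= min_vaf
    )

def mutation_matrix(variants):
    """Build a gene x sample matrix of 0/1 nonsynonymous mutation calls."""
    samples = sorted({v["sample"] for v in variants})
    genes = sorted({v["gene"] for v in variants})
    matrix = {g: {s: 0 for s in samples} for g in genes}
    for v in variants:
        if passes_filters(v) and v["consequence"] in NONSYNONYMOUS:
            matrix[v["gene"]][v["sample"]] = 1
    return matrix

# Invented variant calls for two samples.
variants = [
    {"sample": "S1", "gene": "TP53", "filter": "PASS", "depth": 45, "vaf": 0.31, "consequence": "nonsynonymous SNV"},
    {"sample": "S1", "gene": "EGFR", "filter": "PASS", "depth": 12, "vaf": 0.40, "consequence": "nonsynonymous SNV"},
    {"sample": "S2", "gene": "TP53", "filter": "PASS", "depth": 60, "vaf": 0.02, "consequence": "stopgain"},
    {"sample": "S2", "gene": "EGFR", "filter": "PASS", "depth": 80, "vaf": 0.25, "consequence": "synonymous SNV"},
    {"sample": "S2", "gene": "PTEN", "filter": "PASS", "depth": 33, "vaf": 0.12, "consequence": "frameshift deletion"},
]

matrix = mutation_matrix(variants)
print(matrix)
```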

Phase 2: Data Integration and Feature Engineering

The pre-processed data streams are integrated into a unified feature matrix.

Protocol 2.2.1: Creation of Unified Feature Matrix

- Expression: TPM-normalize RNA-seq counts. Apply log2(TPM+1) transformation.

- Methylation: Convert beta values to M-values for statistical stability. Perform probe-to-gene mapping (max beta value within ±1500bp of TSS).

- CNV: Convert segmented logR ratios from WES to gene-level categorical calls (-2=homozygous deletion, -1=heterozygous loss, 0=neutral, 1=gain, 2=amplification).

- Alignment: Align all matrices (mutation, expression, methylation, CNV) by Gene Symbol and Sample ID. Missing data are imputed using k-nearest neighbors (k=10).

- Output: A final matrix of dimensions [Nsamples x Mfeatures] is saved as an HDF5 file for model input.
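The per-modality transforms in this protocol are standard and can be sketched without external libraries. The TPM calculation assumes gene lengths in kilobases are available; the small `eps` clip in the M-value conversion is an added assumption to avoid infinite values at beta = 0 or 1; and the gene-level methylation summary takes the maximum beta among probes already selected within the TSS window. All numbers are invented.

```python
import math

def counts_to_tpm(counts, lengths_kb):
    """TPM: length-normalize counts, then scale so each sample sums to 1e6."""
    rates = {g: counts[g] / lengths_kb[g] for g in counts}
    total = sum(rates.values())
    return {g: rate / total * 1e6 for g, rate in rates.items()}

def log2_tpm(tpm):
    """log2(TPM + 1) transformation."""
    return math.log2(tpm + 1.0)

def beta_to_m(beta, eps=1e-6):
    """M-value = log2(beta / (1 - beta)), with beta clipped away from 0 and 1."""
    b = min(max(beta, eps), 1.0 - eps)
    return math.log2(b / (1.0 - b))

def gene_level_m(probe_betas):
    """Summarize a gene by the maximum beta among its TSS-proximal probes."""
    return beta_to_m(max(probe_betas))

# Invented two-gene sample.
tpm = counts_to_tpm({"G1": 100, "G2": 300}, {"G1": 1.0, "G2": 3.0})
expr = {g: log2_tpm(v) for g, v in tpm.items()}
m_value = gene_level_m([0.2, 0.8, 0.5])
print(tpm, expr, m_value)
```

The k-nearest-neighbor imputation and HDF5 export are omitted here because they depend on third-party libraries not named in the protocol.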

Diagram 1: DeePEST-OS Data Integration Pipeline

Phase 3: Model Architecture and Prediction

DeePEST-OS employs a hybrid deep neural network.

Table 2: DeePEST-OS Model Architecture Specifications

| Layer | Type | Nodes/Parameters | Activation | Dropout |

|---|---|---|---|---|

| Input | Dense | 2048 | ReLU | 0.3 |

| Hidden 1 | Dense | 1024 | ReLU | 0.3 |

| Hidden 2 | Dense | 512 | ReLU | 0.2 |

| Hidden 3 | Attention | 256 | Softmax | - |

| Output | Dense | 3 (Essential/Synthetic-Lethal/Druggable) | Sigmoid | - |

Training configuration: Adam optimizer (lr = 0.0001); binary cross-entropy loss; batch size 32; up to 100 epochs with early stopping.

Protocol 2.3.1: Model Training and Prediction

- Splitting: Split data 70/15/15 into training, validation, and hold-out test sets stratified by cancer type.

- Training: Train model on training set, monitoring loss on validation set.

- Early Stopping: Halt training if validation loss does not improve for 15 consecutive epochs.

- Prediction: Generate three probability scores (0-1) per gene per sample on the test set, representing likelihoods of being an essential, synthetic-lethal, or druggable target.
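The early-stopping rule above (halt after 15 consecutive epochs without validation improvement) can be expressed framework-independently. This is a sketch of the patience logic only; the function name is hypothetical and a real training loop would normally rely on the deep learning framework's own callback.

```python
def early_stopping_epoch(val_losses, patience=15):
    """Return the 1-based epoch at which training halts, or None if
    the patience limit is never reached."""
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            # New best validation loss: reset the patience counter.
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None


# Loss improves for three epochs, then plateaus above the best value.
history = [1.0, 0.9, 0.8] + [0.85] * 20
stop_at = early_stopping_epoch(history)
```

In practice the weights from the best epoch (epoch 3 in this toy history) would be restored before predicting on the hold-out set.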

Diagram 2: DeePEST-OS Hybrid Neural Network

Phase 4: Output Interpretation and Prioritization

Raw scores are post-processed for biological actionability.

Protocol 2.4.1: Target Prioritization

- Thresholding: Apply validated thresholds: Essential >0.85, Synthetic-Lethal >0.80, Druggable >0.75.

- Ranking: Calculate a composite priority score:

Priority = (0.4*P(Ess)) + (0.35*P(SL)) + (0.25*P(Drug)).

- Annotation: Annotate high-ranking genes with known drug information from DrugBank and clinical trial status from ClinicalTrials.gov.

- Output: Generate a master ranked table and per-sample reports.
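The thresholding and ranking steps reduce to a weighted sum and a sort. The sketch below uses the weights and thresholds stated in the protocol; the gene names and probability values are hypothetical placeholders, not model output.

```python
WEIGHTS = {"ess": 0.40, "sl": 0.35, "drug": 0.25}      # from the ranking formula
THRESHOLDS = {"ess": 0.85, "sl": 0.80, "drug": 0.75}   # from the thresholding step


def priority(scores):
    """Composite priority: weighted sum of the three probabilities."""
    return sum(WEIGHTS[k] * scores[k] for k in WEIGHTS)


def flags(scores):
    """Which of the three calls exceed their threshold."""
    return {k: scores[k] > THRESHOLDS[k] for k in THRESHOLDS}


# Hypothetical predictions for illustration only.
predictions = {
    "GENE_A": {"ess": 0.90, "sl": 0.60, "drug": 0.80},
    "GENE_B": {"ess": 0.50, "sl": 0.90, "drug": 0.40},
}

ranked = sorted(predictions, key=lambda g: priority(predictions[g]), reverse=True)
for gene in ranked:
    print(gene, round(priority(predictions[gene]), 3), flags(predictions[gene]))
```

Because the weights sum to 1, the priority stays on the same 0-1 scale as the individual probabilities.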

Table 3: Example Output for Top-Ranked Genes (Glioblastoma)

| Gene | P(Ess) | P(SL) | P(Drug) | Priority | Known Drugs | Clinical Trial Phase |

|---|---|---|---|---|---|---|

| TP53 | 0.99 | 0.92 | 0.45 | 0.83 | APR-246, COTI-2 | Phase I/II |

| EGFR | 0.95 | 0.71 | 0.89 | 0.85 | Gefitinib, Osimertinib | Phase III (Approved) |

| PTEN | 0.97 | 0.88 | 0.15 | 0.73 | None | - |

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for DeePEST-OS Validation

| Reagent / Material | Provider (Example) | Function in Validation |

|---|---|---|

| Cancer Cell Line Panel (e.g., 50 lines) | ATCC, DSMZ | Provides biologically relevant models for in vitro functional validation of predicted targets. |

| CRISPR-Cas9 Knockout Libraries (Whole Genome or Custom) | Synthego, Horizon Discovery | Enables genome-wide or targeted knockout screens to experimentally test gene essentiality predictions. |

| siRNA/shRNA Pools (Gene-Specific) | Dharmacon, Sigma-Aldrich | Used for transient or stable knockdown to confirm synthetic-lethal interactions predicted by the model. |

| Viability/Proliferation Assay Kits (CellTiter-Glo) | Promega | Quantifies cell growth and viability after genetic perturbation, providing the primary readout for validation experiments. |

| High-Throughput Sequencing Reagents (for NGS validation) | Illumina, Thermo Fisher | Confirms on-target genetic modifications and measures transcriptomic changes post-perturbation. |

| Compound Libraries (FDA-approved & clinical candidates) | Selleckchem, MedChemExpress | Used to test the druggability predictions by assessing response to pharmacological inhibition. |

Step-by-Step Guide: Preparing and Formatting Data for DeePEST-OS Simulations

Within the DeePEST-OS (Deep learning for Pesticide Efficacy, Safety, and Toxicology - Open Science) framework, the quality and consistency of chemical input data are foundational. This protocol details the critical preprocessing steps for chemical structures—standardization, descriptor calculation, and identifier generation—to ensure reproducibility and robustness in predictive modeling for agrochemical discovery.

Chemical Structure Standardization

Standardization ensures a consistent, canonical representation of a chemical structure, eliminating representation-based noise.

Protocol: Canonical Tautomer and Resonance Form Generation

Objective: Generate a consistent, low-energy tautomer and major resonance form for each input structure.

- Input: A molecular structure file (e.g., SDF, MOL) or identifier (SMILES).

- Tool: Use the RDKit cheminformatics library (`rdkit.Chem.MolStandardize` module).

- Procedure:

a. Sanitization: Run `Chem.SanitizeMol(mol)` to check valency and correct basic properties.

b. Neutralization: Apply the `Uncharger` tool to neutralize charged groups where possible (e.g., -COO⁻ to -COOH), giving a consistent neutral representation rather than a pH-specific protonation state, unless specifically modeling ionic forms.

c. Tautomer Canonicalization: Use the `TautomerCanonicalizer()` to generate a single canonical tautomeric form based on predefined rules. The result is a reproducible, rule-based choice rather than a computed lowest-energy form.

d. Cleanup: Remove solvents, salts, and metal ions using a predefined fragment list unless they are integral to the complex.

e. Stereochemistry: Perceive and assign stereochemistry from 3D coordinates if available (`Chem.AssignStereochemistryFrom3D(mol)`).

- Output: A standardized RDKit molecule object.

Quantitative Data: Impact of Standardization on Dataset Consistency

Table 1: Effect of Standardization on a Benchmark Agrochemical Dataset (n=10,234 compounds)

| Standardization Step | Compounds Modified | % of Total Dataset | Common Change Example |

|---|---|---|---|

| Neutralization (Uncharging) | 2,558 | 25.0% | Carboxylic acid (-COO⁻) → -COOH |

| Tautomer Canonicalization | 1,434 | 14.0% | Keto-enol shift (C=O-CH- ⇌ C-OH=C-) |

| Salt & Solvent Removal | 3,280 | 32.1% | Removal of HCl, Na⁺, H₂O, DMSO |

| Stereochemistry Assignment | 4,715 | 46.1% | Assignment of R/S or E/Z descriptors |

SMILES and InChI Generation & Best Practices

Canonical string identifiers enable unique indexing and database searching.

Protocol: Generating Canonical Identifiers

- Input: Standardized RDKit molecule object (from Section 2.1).

- Canonical SMILES:

a. Use `Chem.MolToSmiles(mol, isomericSmiles=True, canonical=True)`.

b. The `isomericSmiles=True` flag preserves stereochemical information.

- InChI and InChIKey:

a. Use the RDKit InChI interface: `Chem.inchi.MolToInchi(mol)` and `Chem.inchi.MolToInchiKey(mol)`.

b. InChI provides a layered, standardized representation. The InChIKey is a 27-character hashed version suitable for database indexing.

- Verification: Perform a round-trip test: convert the SMILES/InChI back to a molecule object and verify it matches the original standardized structure.
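Once InChIKeys have been generated, a lightweight format check and deduplication pass can catch malformed or repeated entries before they reach the database. The sketch below is an illustration in plain Python: it relies only on the standard InChIKey layout (14 letters, hyphen, 10 letters, hyphen, 1 letter), and the record structure and helper names are hypothetical. It does not verify chemistry; that is what the round-trip test is for.

```python
import re

# Standard InChIKey layout: 14 letters, hyphen, 10 letters, hyphen, 1 letter (27 characters).
INCHIKEY_PATTERN = re.compile(r"[A-Z]{14}-[A-Z]{10}-[A-Z]")


def is_valid_inchikey(key):
    """Check the key's shape only; this does not confirm the structure behind it."""
    return INCHIKEY_PATTERN.fullmatch(key) is not None


def deduplicate(records):
    """Keep the first record per well-formed InChIKey; collect malformed ones separately."""
    seen, unique, rejected = set(), [], []
    for rec in records:
        key = rec["inchikey"]
        if not is_valid_inchikey(key):
            rejected.append(rec)
        elif key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique, rejected


# Two SMILES spellings of aspirin share one InChIKey; the third key is truncated.
records = [
    {"smiles": "CC(=O)OC1=CC=CC=C1C(=O)O", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},
    {"smiles": "CC(=O)Oc1ccccc1C(=O)O", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},
    {"smiles": "CCO", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA"},
]
unique, rejected = deduplicate(records)
```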

Best Practices Table

Table 2: SMILES and InChI Usage Guidelines for DeePEST-OS

| Identifier | Primary Use Case | DeePEST-OS Recommendation | Caveat |

|---|---|---|---|

| Canonical SMILES | Day-to-day processing, featurization input, human-readable exchange. | Store as the primary internal identifier. Use for descriptor calculation. | Can be algorithm-dependent (RDKit vs. OpenEye). |

| InChI | Definitive, absolute structure representation for publication and data merging. | Archive and publish alongside SMILES. Use for cross-database validation. | Less human-readable. Longer string. |

| InChIKey | Database indexing, rapid duplicate detection, web searches. | Use as database key for deduplication and linking external resources. | Potential for collision (extremely rare). |

Molecular Descriptor Calculation

Descriptors translate chemical structure into quantitative features for machine learning models.

Protocol: Calculating a Comprehensive Descriptor Set

Objective: Generate a vector of numerical features representing physicochemical and topological properties.

- Input: Standardized molecule object (canonical SMILES preferred).

- Tool: RDKit descriptor calculators (`rdkit.Chem.Descriptors`, `rdkit.ML.Descriptors.MoleculeDescriptors`).

- Procedure:

a. Import: `from rdkit.Chem import Descriptors` and `from rdkit.ML.Descriptors import MoleculeDescriptors`

b. List Descriptors: `descriptor_names = [x[0] for x in Descriptors._descList]`

c. Calculator: `calculator = MoleculeDescriptors.MolecularDescriptorCalculator(descriptor_names)`

d. Calculation: `descriptor_vector = calculator.CalcDescriptors(mol)`

- Output: A list/array of numerical values (200+ descriptors). Critical: Handle `NaN` or infinity values resulting from calculation errors (e.g., logP for inorganic fragments).
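The protocol does not say how non-finite descriptor values should be handled, so the sketch below shows one common option as an assumption: replace each `NaN` or infinite entry with the median of the finite values in the same descriptor column. The function name and the fallback value of 0.0 for an all-invalid column are illustrative choices only.

```python
import math
from statistics import median


def clean_descriptor_matrix(rows):
    """Replace NaN/inf entries with the median of the finite values in the same column.

    rows is a list of equal-length descriptor vectors (one per molecule).
    Columns with no finite values are filled with 0.0, an arbitrary choice
    for this sketch; dropping such columns is an equally valid option.
    """
    n_cols = len(rows[0])
    fills = []
    for j in range(n_cols):
        finite = [r[j] for r in rows if math.isfinite(r[j])]
        fills.append(median(finite) if finite else 0.0)
    return [[v if math.isfinite(v) else fills[j] for j, v in enumerate(r)] for r in rows]


raw = [
    [1.0, float("nan")],
    [3.0, 2.0],
    [float("inf"), 4.0],
]
cleaned = clean_descriptor_matrix(raw)
```

Whatever strategy is chosen, computing the fill values on the training set only and reusing them for validation and test data avoids information leakage.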

Key Descriptor Categories for DeePEST-OS

Table 3: Essential Molecular Descriptor Categories for Agrochemical Modeling

| Category | Example Descriptors | Relevance to DeePEST-OS (Pesticide Properties) |

|---|---|---|

| Physicochemical | Molecular Weight, LogP (ALogP), TPSA, H-Bond Donor/Acceptor Count | Predicting absorption, membrane permeability, and environmental fate. |

| Topological | BalabanJ, BertzCT | Encoding molecular complexity and branching related to synthesis and degradation. |

| Constitutional | Heavy Atom Count, Ring Count, Fraction of SP³ Carbons | Basic size and flexibility correlates with target interaction and leaching potential. |

| Quantum-Chemical | (Requires external calc.) HOMO/LUMO energy, Dipole Moment | Modeling reactivity, photodegradation, and interaction with biological targets. |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software and Libraries for Chemical Data Preparation

| Tool / Resource | Function | Application in Protocol |

|---|---|---|

| RDKit (Open-Source) | Core cheminformatics toolkit. | Used for all steps: standardization, SMILES/InChI generation, descriptor calculation. |

| KNIME or Nextflow | Workflow management. | Orchestrating and reproducing the multi-step preprocessing pipeline. |

| PubChemPy/ChemSpider API | Web service clients. | Fetching initial structures and validating identifiers. |

| MongoDB/PostgreSQL | Database systems. | Storing standardized structures, descriptors, and metadata with InChIKey as primary key. |

| Jupyter Notebook | Interactive computing. | Prototyping and documenting standardization rules and descriptor analysis. |

| CDK (Chemistry Dev Kit) | Alternative Java library. | Cross-validating descriptor calculations and fingerprint generation. |

Experimental Workflow Visualization

Workflow for DeePEST-OS Chemical Data Preparation

Detailed Standardization & Featurization Steps

This document serves as a critical application note for the DeePEST-OS (Deep Learning Platform for Enhanced Structure-based Target Screening - Open Science) initiative. The broader thesis explores the optimization of input data preparation to enhance the accuracy and generalizability of machine learning models in structure-based drug discovery (SBDD). The quality, standardization, and biological relevance of the primary inputs—protein structures, sequences, and binding site definitions—directly dictate the predictive performance of DeePEST-OS pipelines. This protocol details the acquisition, validation, and preparation of these fundamental inputs.

Protein Data Bank (PDB) Files

The PDB archive is the primary repository for experimentally determined 3D structures of proteins, nucleic acids, and complexes.

Key Considerations for DeePEST-OS:

- Resolution: Prefer structures with resolution ≤ 2.5 Å for reliable atomic positioning.

- Completeness: Favor structures with minimal missing residues in the target region.

- Experimental Method: X-ray crystallography and Cryo-EM are preferred; NMR ensembles require specific handling.

- Ligand Presence: Structures co-crystallized with a native ligand or drug molecule are invaluable for binding site definition.

Table 1: Quantitative Metrics for PDB File Selection

| Metric | Optimal Range for DeePEST-OS | Acceptable Range | Source/Validation Tool |

|---|---|---|---|

| Resolution | ≤ 2.0 Å | ≤ 2.5 Å | PDB Header / pdb-tools |

| R-free Value | ≤ 0.25 | ≤ 0.30 | PDB Header / Validation Reports |

| Missing Residues (Binding Site) | 0 | ≤ 2 short loops | PDB Header / Visual Inspection |

| Ligand B-factors (Avg.) | ≤ 60 Ų | ≤ 80 Ų | Bio.PDB (Biopython) |

Protein Sequences

Canonical sequences from authoritative databases provide the evolutionary and functional context for the target.

Primary Sources:

- UniProtKB/Swiss-Prot: Manually annotated, high-quality sequences.

- NCBI RefSeq: Comprehensive, non-redundant reference sequences.

Table 2: Essential Sequence Metadata for Input Preparation

| Data Field | Purpose in DeePEST-OS | Source Database |

|---|---|---|

| Canonical Isoform ID | Defines the reference sequence | UniProtKB |

| Amino Acid Sequence | For alignment & homology checks | UniProtKB, RefSeq |

| Post-Translational Modifications | Context for structure anomalies | UniProtKB |

| Domain Annotations (e.g., PFAM) | Functional site correlation | UniProtKB, InterPro |

| Natural Variants | Assessing binding site conservation | UniProtKB, gnomAD |

Binding Site Definitions

Accurately defining the region of ligand interaction is paramount. Multiple complementary methods are employed.

Definition Methods:

- Ligand-Centric: Using coordinates from a co-crystallized ligand.

- Residue-Centric: Based on known functional residues from mutagenesis studies.

- Geometry-Centric: Using algorithms to detect surface pockets and cavities.

Table 3: Binding Site Definition Methods & Outputs

| Method | Tools / Databases | DeePEST-OS Input Format |

|---|---|---|

| From Co-crystal Ligand | PDB file, PyMOL, ChimeraX | List of residues within 5 Å of ligand |

| From Functional Annotation | Catalytic Site Atlas (CSA), UniProtKB | List of annotated residue IDs |

| Computational Prediction | fpocket, CASTp, SiteMap | Center (x,y,z) and radius, or residue list |

Detailed Experimental Protocols

Protocol 1: Curating a High-Quality PDB Structure Set for a Target Protein

Objective: To obtain and validate a non-redundant set of high-resolution structures for a given target protein, suitable for DeePEST-OS model training.

Materials: See "The Scientist's Toolkit" below.

Method:

- Target Identification: Query the PDB using the target's UniProt accession ID (e.g., P00742) via the RCSB PDB API (`https://search.rcsb.org`).

- Initial Filtering: Download the list of PDB IDs. Filter programmatically for:

  - Experimental Method: `"X-ray"` OR `"Electron Microscopy"`.

  - Resolution: ≤ 2.5 Å.

  - Polymer Entity Type: `"Protein"` (or complex).

- Manual Curation & Clustering:

  - Fetch PDB files using `wget` or `Bio.PDB`.

  - Align all structures to a reference (highest resolution) using `PyMOL`'s `align` command.

  - Cluster structures based on sequence identity (≥ 95%) and ligand presence to reduce redundancy. Use `CD-HIT` or `MMseqs2`.

- Structure Validation:

  - Run `MolProbity` or use RCSB validation reports for each retained structure.

  - Check clash scores, rotamer outliers, and Ramachandran outliers. Prioritize structures in the 90th+ percentile.

- Pre-processing for DeePEST-OS:

  - Remove all heteroatoms except relevant co-factors (e.g., HEME, ZN) and key ligands.

  - Standardize atom and residue names using `PDBFixer` or `ChimeraX`.

  - Add missing hydrogens at physiological pH (7.4) using `Reduce` or `Open Babel`.

- Output: A directory of cleaned, validated, and non-redundant `.pdb` files.
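The initial filtering step can be sketched as a pass over metadata records that have already been retrieved. The record layout and the four-character IDs below are hypothetical placeholders (not real PDB entries, and not the RCSB API's response format); only the method and resolution criteria come from the protocol.

```python
ALLOWED_METHODS = {"X-ray", "Electron Microscopy"}
MAX_RESOLUTION = 2.5  # Å, acceptable limit from the protocol

# Hypothetical metadata records; IDs and values are placeholders.
entries = [
    {"pdb_id": "AAAA", "method": "X-ray", "resolution": 1.8},
    {"pdb_id": "BBBB", "method": "X-ray", "resolution": 3.1},
    {"pdb_id": "CCCC", "method": "Electron Microscopy", "resolution": 2.4},
    {"pdb_id": "DDDD", "method": "Solution NMR", "resolution": None},
]


def select_structures(records):
    """Keep entries that meet the method and resolution criteria, best resolution first."""
    kept = [
        r for r in records
        if r["method"] in ALLOWED_METHODS
        and r["resolution"] is not None
        and r["resolution"] <= MAX_RESOLUTION
    ]
    return sorted(kept, key=lambda r: r["resolution"])


selected = select_structures(entries)
# The highest-resolution survivor serves as the alignment reference.
reference = selected[0]["pdb_id"] if selected else None
```

Sorting by resolution up front makes the later alignment step trivial, since the first surviving entry is the reference structure.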

Protocol 2: Defining a Consensus Binding Site

Objective: To generate a robust, functionally relevant binding site definition from multiple data sources.

Method:

- Ligand-Based Definition (Primary):

  - Load a co-crystal structure with a high-affinity ligand in `PyMOL`.

  - Execute command: `select site_residues, byres ligand around 5.0`

  - Save the list of residue identifiers (ChainID and ResSeq number).

- Literature-Based Annotation:

  - Extract functionally critical residues from the "Function" and "Catalytic activity" sections of the UniProt entry.

  - Cross-reference with the Catalytic Site Atlas (CSA).

  - Map these residue numbers to the reference PDB sequence using a sequence alignment tool (e.g., `Clustal Omega`).

- Computational Prediction (Validation):

  - Run `fpocket` on the apo structure: `fpocket -f input.pdb`.

  - Analyze the top-ranked pockets. Overlap with residues from Steps 1 & 2 confirms the active site.

- Generate Consensus Site:

  - Take the union of residues from Steps 1 and 2.

  - Calculate the geometric center (centroid) of the Cα atoms of these residues.

  - Define the site radius as the distance from the centroid to the farthest Cα atom + 5Å (to accommodate ligands).

- Output: A `.json` file containing: `{ "pdb_id": "1ABC", "chain": "A", "site_residues": [12, 45, 46...], "centroid": [x, y, z], "radius": 12.5 }`.
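The consensus-site geometry (union of residues, Cα centroid, farthest distance plus 5 Å) can be sketched with the standard library alone. The coordinates below are invented for illustration, the function name is hypothetical, and `1ABC` is the same placeholder ID used in the output example; in a real pipeline the Cα coordinates would be read from the cleaned PDB file.

```python
import json
import math


def consensus_site(ca_coords, padding=5.0):
    """ca_coords maps residue number -> (x, y, z) of its Cα atom (Å).

    Returns (centroid, radius), where radius is the distance from the
    centroid to the farthest Cα atom plus the padding.
    """
    n = len(ca_coords)
    centroid = [sum(c[i] for c in ca_coords.values()) / n for i in range(3)]
    farthest = max(math.dist(centroid, c) for c in ca_coords.values())
    return centroid, farthest + padding


# Hypothetical Cα coordinates for the ligand-based and annotation-based residue sets.
ligand_site = {12: (0.0, 0.0, 0.0), 45: (6.0, 0.0, 0.0)}
annotated_site = {45: (6.0, 0.0, 0.0), 46: (0.0, 6.0, 0.0)}
union = {**ligand_site, **annotated_site}  # union of residues from both sources

centroid, radius = consensus_site(union)
site = {
    "pdb_id": "1ABC",  # placeholder ID
    "chain": "A",
    "site_residues": sorted(union),
    "centroid": [round(v, 3) for v in centroid],
    "radius": round(radius, 3),
}
print(json.dumps(site))
```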

Visual Workflows

Title: DeePEST-OS Biological Target Input Preparation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Tools for Input Preparation

| Item / Tool Name | Function in Protocol | Source / Provider |

|---|---|---|

| RCSB PDB API | Programmatic search and metadata retrieval for PDB files. | RCSB Protein Data Bank |

| BioPython (Bio.PDB) | Python library for parsing, manipulating, and analyzing PDB files. | Open Source |

| PyMOL / UCSF ChimeraX | Interactive visualization, alignment, and selection of residues/atoms. | Schrödinger / RBVI |

| PDBFixer | Adds missing atoms/residues, standardizes files for molecular simulation. | OpenMM |

| MolProbity Server | Validates structural geometry (clashes, rotamers, Ramachandran plots). | Richardson Lab, Duke |

| fpocket | Open-source tool for detection of protein pockets and cavities. | Open Source |

| Clustal Omega | Performs multiple sequence alignment to map residues across sources. | EMBL-EBI |

| UniProtKB REST API | Fetches canonical sequence and functional annotation data. | UniProt Consortium |

| Jupyter Notebook | Environment for documenting and executing reproducible preparation scripts. | Open Source |

Application Notes and Protocols

Context: This protocol details the essential data preprocessing steps for RNA-Seq, proteomics, and metabolomics datasets to generate standardized, analysis-ready input files for the DeePEST-OS (Deep Phenotype Extraction and Systems Toxicology - Omics Suite) platform. A core pillar of the DeePEST-OS input preparation thesis is that rigorous, field-specific normalization and formatting are prerequisites for robust multi-omics integration and predictive modeling in drug development.

1. RNA-Seq Data Processing Protocol

Aim: To transform raw RNA-Seq read counts into normalized, gene-level expression values suitable for differential expression analysis and downstream integration.

Key Reagent Solutions:

- Alignment Reference (e.g., GRCh38.p14 genome, Gencode v45 transcriptome): Provides the genomic coordinate system for mapping sequencing reads.

- Alignment Software (e.g., STAR, HISAT2): Aligns short reads to the reference genome/transcriptome.

- Quantification Tool (e.g., featureCounts, HTSeq-count): Summarizes aligned reads per genomic feature (gene).

- R/Bioconductor Packages (e.g., DESeq2, edgeR): Provide statistical frameworks for count data normalization and analysis.

Detailed Protocol:

- Quality Control & Trimming: Assess raw FASTQ files using FastQC. Trim adapters and low-quality bases with Trimmomatic or Cutadapt.

- Alignment: Map cleaned reads to the reference genome using a splice-aware aligner (e.g., STAR with recommended 2-pass mode for novel splice junction discovery).

- Quantification: Generate a raw count matrix by assigning reads to genes using an annotation file (GTF/GFF). Discard ambiguous or multi-mapped reads.

- Normalization (DESeq2 Median-of-Ratios Method):

a. For each gene i, calculate the geometric mean of its counts across all samples.

b. For each sample j, compute the ratio of each gene's count to that gene's geometric mean.

c. The median of these ratios for sample j is its size factor (SF_j).

d. Obtain normalized counts for gene i in sample j as:

Count_ij_normalized = Count_ij / SF_j.

- Formatting for DeePEST-OS: Export the normalized count matrix (or variance-stabilized transformed data) as a tab-separated file with genes as rows (Official Gene Symbol), samples as columns, and a header row.
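The median-of-ratios steps can be written out directly to make the arithmetic concrete. The sketch below is a plain-Python illustration, not a replacement for DESeq2 itself: function names are hypothetical, the toy counts are invented, and genes with a zero count in any sample are skipped when computing size factors because their geometric mean is zero.

```python
import math
from statistics import median


def size_factors(counts):
    """counts[g][s] is the raw count of gene g in sample s.

    Returns one size factor per sample, computed in log space.
    """
    n_samples = len(counts[0])
    # Log geometric mean per gene; None marks genes with any zero count.
    log_geo_means = [
        sum(math.log(c) for c in row) / n_samples if all(c > 0 for c in row) else None
        for row in counts
    ]
    factors = []
    for s in range(n_samples):
        log_ratios = [
            math.log(row[s]) - lgm
            for row, lgm in zip(counts, log_geo_means)
            if lgm is not None
        ]
        factors.append(math.exp(median(log_ratios)))
    return factors


def normalize(counts):
    """Divide each count by its sample's size factor."""
    sf = size_factors(counts)
    return [[c / sf[s] for s, c in enumerate(row)] for row in counts]


# Toy matrix: sample 2 was sequenced twice as deeply as sample 1.
counts = [[10, 20], [100, 200], [5, 10], [0, 7]]
sf = size_factors(counts)
normalized = normalize(counts)
```

In this toy case the second size factor is exactly twice the first, so the normalized counts of the first three genes agree between the two samples.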

Table 1: Common RNA-Seq Normalization Methods Comparison

| Method | Principle | Handles Composition Bias? | Suitable for DE? | DeePEST-OS Recommendation |

|---|---|---|---|---|

| DESeq2 (Median-of-Ratios) | Median scaling by gene ratios | Yes | Excellent | Primary recommended method |

| edgeR (TMM) | Trimmed Mean of M-values scaling | Yes | Excellent | Acceptable alternative |

| Upper Quartile (UQ) | Scales by upper quartile of counts | Partial | Good | Use if TMM/DESeq2 fails |

| Transcripts Per Million (TPM) | Normalizes for gene length & sequencing depth | Yes (within-sample) | No (between-sample) | Not for direct DE input |

| Reads Per Kilobase Million (RPKM/FPKM) | Within-sample length & depth normalization | No | No | Not recommended for DE |

Diagram Title: RNA-Seq Data Processing and Normalization Workflow

2. Proteomics (LC-MS/MS) Data Processing Protocol

Aim: To process raw mass spectrometry output into normalized, protein-level abundance values, accounting for technical variation.

Key Reagent Solutions:

- Search Database (e.g., UniProtKB Swiss-Prot): Reference protein sequence database for peptide identification.

- Search Engine (e.g., MaxQuant, DIA-NN, Spectronaut): Identifies and quantifies peptides from MS/MS spectra.

- Normalization Standards (e.g., Spike-in Proteins, Total Peptide Amount): Used for global intensity scaling.

- Imputation Algorithm (e.g., MinProb, KNN): Handles missing values, including values that are Missing Not At Random (MNAR).

Detailed Protocol (Label-Free Quantification - LFQ):

- Peptide Identification & Quantification: Process *.raw files through a search engine (e.g., MaxQuant). Use default LFQ settings, match-between-runs, and specify a false discovery rate (FDR) < 0.01 at peptide and protein levels.

- Data Filtering: Remove proteins only identified by site, reverse database hits, and common contaminants. Retain proteins with valid values in ≥70% of samples per group.

- Normalization (Median Centering):

a. Calculate the median protein intensity for each sample.

b. Compute the global median of all sample medians.

c. For each sample, derive a scaling factor:

SF = Global Median / Sample Median.

d. Multiply all protein intensities in that sample by its SF.

- Missing Value Imputation (for MNAR data): Apply a left-censored imputation method (e.g., draw values from a normal distribution shifted down by 1.8 standard deviations, with its width scaled to 0.3 standard deviations) to simulate signals below the detection limit.

- Formatting for DeePEST-OS: Export the normalized, imputed protein intensity matrix as a tab-separated file with UniProt Protein IDs as rows, samples as columns, and a header row.
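The median-centering steps above translate into a few lines of Python. This is a sketch under simple assumptions: intensities are held in a dictionary keyed by sample name, missing values are represented as `None` and ignored when computing medians, and the function name and toy values are hypothetical.

```python
from statistics import median


def median_center(intensities):
    """intensities[sample] is a list of protein intensities (None marks a missing value).

    Scales every sample so that its median equals the global median of sample medians.
    """
    sample_medians = {
        s: median(v for v in vals if v is not None)
        for s, vals in intensities.items()
    }
    global_median = median(sample_medians.values())
    scaled = {}
    for s, vals in intensities.items():
        sf = global_median / sample_medians[s]  # SF = Global Median / Sample Median
        scaled[s] = [v * sf if v is not None else None for v in vals]
    return scaled


# Toy data: three samples measured at different overall intensity levels.
raw = {
    "A": [10.0, 20.0, 30.0],
    "B": [20.0, 40.0, None],
    "C": [40.0, 80.0, 120.0],
}
scaled = median_center(raw)
```

After scaling, every sample has the same median, so remaining differences between samples reflect relative protein abundance rather than loading or instrument response.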

Table 2: Proteomics Data Processing Steps and Tools

| Step | Typical Method/Tool | Key Parameter | Purpose |

|---|---|---|---|

| Identification | MaxQuant, DIA-NN | FDR < 0.01 | Map spectra to peptides/proteins |

| Quantification | MaxQuant LFQ, Spectronaut | Match-between-runs ON | Boost quantification coverage |

| Filtering | Manual/Custom Script | Valid vals ≥70% | Remove low-confidence data |

| Normalization | Median Centering, Loess | Sample median scaling | Remove technical bias |

| Imputation | MinProb, KNN | Down-shift 1.8σ | Handle MNAR missing values |

Diagram Title: Proteomics Data Processing and Normalization Workflow

3. Metabolomics (LC-MS) Data Processing Protocol

Aim: To extract, align, and normalize metabolite feature intensities from raw chromatographic data, correcting for batch effects and drift.

Key Reagent Solutions:

- Internal Standards Mix (e.g., IS-MIX Sulfatrack): A set of deuterated or 13C-labeled compounds added to all samples for quality control and signal correction.

- Solvent Blanks & Pooled QC Samples: Essential for background subtraction and for monitoring and correcting instrumental drift.

- Feature Detection Software (e.g., XCMS, MS-DIAL): Detects and aligns metabolite peaks across samples.

- Spectral Library (e.g., NIST20, HMDB): For putative annotation of metabolites.

Detailed Protocol (Untargeted Metabolomics):

- Feature Extraction & Alignment: Use a computational tool (e.g., XCMS in R) with parameters optimized for your LC-MS system. Perform peak picking, retention time alignment, and correspondence across samples.

- Annotation: Match MS/MS spectra and retention time/index to standards or spectral libraries for putative annotation (Level 2 or 3).

- Quality Control-Based Normalization (PQN with QC):

a. For each feature, calculate the median intensity across all pooled Quality Control (QC) samples; these medians form the reference profile.

b. For each sample (including QCs), calculate the median of all feature intensities.

c. For each sample, create a vector of ratios: each feature's intensity / the corresponding QC median intensity.

d. The median of this ratio vector is the sample's dilution factor.

e. Divide all feature intensities in the sample by its dilution factor.

- Batch & Drift Correction: Use QC samples in a statistical model (e.g., Robust LOESS, ComBat) to adjust for intensity drift over the acquisition sequence and batch effects.

- Formatting for DeePEST-OS: Export the normalized feature table as a tab-separated file. Rows are metabolite features (with putative annotation as a column), columns are samples, and values are normalized intensities.
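The quotient calculation at the heart of PQN can be sketched as follows. The code covers the reference profile, the per-feature ratios, the median dilution factor, and the final division; the data layout, function name, and toy intensities are assumptions for illustration, and features whose QC reference is zero are simply left out of the ratio vector.

```python
from statistics import median


def pqn_normalize(samples, qc_names):
    """samples[name] is a list of feature intensities, in the same feature order for every sample.

    qc_names lists the pooled QC injections used to build the reference profile.
    """
    n_features = len(next(iter(samples.values())))
    # Reference profile: per-feature median across the pooled QC injections.
    reference = [median(samples[q][f] for q in qc_names) for f in range(n_features)]
    normalized = {}
    for name, values in samples.items():
        quotients = [v / r for v, r in zip(values, reference) if r > 0]
        dilution = median(quotients)  # the sample's dilution factor
        normalized[name] = [v / dilution for v in values]
    return normalized


# Toy data: S1 is uniformly twice as concentrated; S2 is half as concentrated
# but has one genuinely elevated feature.
samples = {
    "QC1": [100.0, 200.0, 300.0],
    "QC2": [100.0, 200.0, 300.0],
    "S1": [200.0, 400.0, 600.0],
    "S2": [50.0, 100.0, 900.0],
}
normalized = pqn_normalize(samples, qc_names=["QC1", "QC2"])
```

Using the median quotient rather than the total intensity means the single elevated feature in S2 does not distort its dilution factor, which is the main reason PQN is preferred over total-sum scaling.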

Table 3: Metabolomics Normalization & Correction Strategies

| Strategy | Description | Corrects For | Use Case |

|---|---|---|---|

| Probabilistic Quotient Normalization (PQN) | Median quotient of sample vs. reference spectrum | Global urine dilution/concentration differences | Primary normalization for biofluids |

| Internal Standard (IS) Normalization | Scaling to spiked IS signal | Injection volume variation | Targeted assays; support for untargeted |

| QC-Based LOESS Correction | Local regression on QC intensity trends | Within-batch instrumental drift | Mandatory for long LC-MS sequences |

| Batch Correction (ComBat) | Empirical Bayes framework | Systematic inter-batch variation | Multi-batch studies |

Diagram Title: Metabolomics Data Processing and Normalization Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

Table 4: Key Reagents and Tools for Omics Data Preparation

| Item | Function | Example Product/Software |

|---|---|---|

| Sequencing Platform | Generates raw RNA-Seq reads. | Illumina NovaSeq, NextSeq |

| Mass Spectrometer | Generates raw proteomics/metabolomics spectra. | Thermo Q-Exactive, Sciex TripleTOF |

| Curated Reference Database | Provides ground truth for sequence mapping. | Gencode (RNA), UniProt (Prot), HMDB (Metab) |

| Isotope-Labeled Internal Standards | Controls for technical variance in MS sample prep. | IS-MIX Sulfatrack, Biocrates META-KIT |

| Pooled Quality Control (QC) Sample | Monitors instrument stability for correction. | Pool of equal aliquots from all study samples |

| Bioinformatics Pipeline Software | Executes alignment, quantification, normalization. | nf-core/rnaseq, MaxQuant, XCMS, DIA-NN |

| Statistical Programming Environment | Flexible platform for normalization and analysis. | R/Bioconductor, Python (SciPy/Pandas) |

This document serves as an application note within the broader DeePEST-OS (Deep Pharmacometric and Endpoint Simulation and Trial Optimization Suite) thesis research. Effective input preparation for this platform mandates a rigorous, standardized approach to integrating multidimensional clinical data. This note details the protocols for curating and structuring core input variables: dosing regimens, baseline demographics, and physiological covariates, which are critical for generating accurate PK/PD and clinical outcome simulations.

Data Requirements and Standardization Protocols

For population modeling in DeePEST-OS, input data must be formatted according to the following standard table structures. All time variables should be normalized to a common zero (e.g., first dose administration).

Table 1: Dosing Regimen Input Schema

| SUBJECT_ID | EVENT_TYPE | TIME (h) | AMT (mg) | DUR (h) | ROUTE | CYCLE |

|---|---|---|---|---|---|---|

| 101 | DOSE | 0 | 500 | 1 | IV | 1 |

| 101 | DOSE | 168 | 750 | 0 | PO | 2 |

| 101 | OBS | 2 | . | . | . | 1 |

| 102 | DOSE | 0 | 500 | 1 | IV | 1 |

EVENT_TYPE: DOSE, OBS (observation); AMT: Dose amount; DUR: Infusion duration (0 for bolus); ROUTE: IV, PO, SC; CYCLE: Cycle number for oncology trials.

Table 2: Baseline Demographics & Physiology Schema

| SUBJECT_ID | AGE (yr) | SEX (M/F) | WEIGHT (kg) | BSA (m²) | eGFR (mL/min) | ALB (g/dL) | CYP2D6_STATUS | DISEASE_STAGE |

|---|---|---|---|---|---|---|---|---|

| 101 | 67 | M | 82 | 1.95 | 78 | 4.2 | IM | IIIB |

| 102 | 54 | F | 61 | 1.68 | 92 | 3.8 | NM | IIIC |

BSA: Body Surface Area (Calc. via Mosteller formula); eGFR: estimated Glomerular Filtration Rate (CKD-EPI); CYP2D6_STATUS: Phenotype (e.g., NM=Normal Metabolizer, IM=Intermediate); DISEASE_STAGE: Disease-specific classification.

Experimental Protocol: Covariate-PK/PD Relationship Analysis

This protocol outlines the steps to quantify the impact of integrated covariates on PK/PD parameters.

Title: Longitudinal Population PK/PD Analysis with Covariate Screening.

Objective: To identify and quantify significant relationships between baseline demographics/physiological variables and key PK/PD parameters (e.g., Clearance (CL), Volume of Distribution (Vd), EC₅₀).

Materials & Reagents:

- Software: Nonlinear Mixed-Effects Modeling software (e.g., NONMEM, Monolix, R with nlmixr).

- Hardware: High-performance computing cluster for large-scale simulation.

- Data: Curated tables per Section 2, including rich covariate data and sparse PK/PD samples.

Procedure:

- Base Model Development: Develop a structural PK and/or PD model without covariates. Estimate inter-individual variability (IIV) on key parameters.

- Covariate Model Building: Using the finalized base model, test plausible covariate-parameter relationships using a stepwise forward inclusion (p<0.05) and backward elimination (p<0.01) procedure.

  - Continuous Covariates (e.g., Weight, Age): Model using a power function: `P = θₚ * (COV/Median_COV)^θᵣ`.

  - Categorical Covariates (e.g., Sex, Genotype): Model using a proportional shift: `P = θₚ * (1 + θᵣ*INDICATOR)`.

- Model Evaluation: Assess significance via objective function value (OFV) change. Validate using visual predictive checks (VPC) and bootstrap diagnostics.

- Simulation Ready Output: Finalize the model and extract the mathematical structure for implementation in DeePEST-OS. This defines the core input-output relationships for simulation.
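The two covariate relationships in the procedure are easy to evaluate numerically once estimates are available. The sketch below implements both functional forms; the parameter values (typical clearance of 10 L/h, exponent 0.75, median weight 70 kg, a 20% lower value for the indicator group) are hypothetical and chosen only to show the arithmetic.

```python
def power_covariate(theta_p, cov, median_cov, theta_r):
    """Continuous covariate: P = theta_p * (COV / Median_COV) ** theta_r."""
    return theta_p * (cov / median_cov) ** theta_r


def proportional_covariate(theta_p, theta_r, indicator):
    """Categorical covariate: P = theta_p * (1 + theta_r * INDICATOR), INDICATOR in {0, 1}."""
    return theta_p * (1.0 + theta_r * indicator)


# Hypothetical estimates for illustration only.
CL_TYPICAL = 10.0      # L/h, typical clearance at the median weight
MEDIAN_WEIGHT = 70.0   # kg

# Clearance for an 82 kg subject (the weight of subject 101 in the demographics schema).
cl_weight = power_covariate(CL_TYPICAL, cov=82.0, median_cov=MEDIAN_WEIGHT, theta_r=0.75)

# Apply an assumed 20% reduction for subjects with the indicator set to 1.
cl_final = proportional_covariate(cl_weight, theta_r=-0.2, indicator=1)
```

For a single added parameter, the p<0.05 and p<0.01 criteria correspond to OFV decreases of about 3.84 and 6.63 respectively (chi-square distribution, 1 degree of freedom), which is how the stepwise procedure is usually applied in practice.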

Diagram Title: Covariate Model Development Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Integrated PK/PD Studies

| Item/Category | Example/Supplier | Primary Function in Context |

|---|---|---|

| Stable Isotope Labeled Drug | Cambridge Isotopes; Alsachim | Serve as internal standards for LC-MS/MS quantification, enabling precise, multiplexed PK assay development. |

| Recombinant Metabolic Enzymes | Corning Gentest; BioIVT | For in vitro reaction phenotyping to identify enzymes (CYP, UGT) involved in drug metabolism, informing covariate selection (e.g., pharmacogenomics). |

| Human Liver Microsomes/Cytosol | BioIVT; XenoTech | Pooled or single-donor systems for in vitro intrinsic clearance and metabolite profiling studies, scaling to in vivo CL. |

| Plasma Protein Fraction | Human Serum Albumin, α-1-Acid Glycoprotein (Sigma-Aldrich) | Used in equilibrium dialysis experiments to measure drug protein binding, a key factor influencing free (active) drug concentration and Vd. |

| Validated Biomarker Assay Kits | Meso Scale Discovery; R&D Systems DuoSet | Quantify soluble PD biomarkers (e.g., cytokines, target engagement markers) for linking PK to pharmacological effect. |

| Population Database Software | WHO Anthro Survey Analyzer; CDC BSA Calculator | Standardize and calculate derived physiological covariates (BMI, BSA, eGFR) from raw demographic data for model input. |

Visualization of Integrated Parameter Relationships

The final model defines how covariates modulate the system. This relationship is central to generating individualized simulations in DeePEST-OS.

Diagram Title: Integrated Covariate-PK/PD Simulation Schema

Within the DeePEST-OS (Deep Learning Platform for Emerging Sensor Technologies in Drug Discovery and Development Operating System) research ecosystem, standardized data input is paramount for model integrity and reproducibility. This document details the application notes and protocols for four critical file formats required for data ingestion, configuration, and biological sequence representation. The selection and proper implementation of these formats constitute a foundational pillar of the broader DeePEST-OS input preparation thesis, ensuring seamless data flow from experimental and computational sources to analytical and predictive modules.

File Format Specifications & Comparative Analysis

Comma-Separated Values (CSV)

Purpose in DeePEST-OS: Primary format for tabular experimental data (e.g., high-throughput screening results, dose-response curves, pharmacokinetic parameters).

Specification: A plain-text format where each line represents a data record, with values separated by a delimiter (comma by default). The first line may contain header names.

Key Requirements:

- UTF-8 encoding is mandatory.

- The delimiter must be declared in the accompanying configuration file.

- Text fields containing the delimiter or newlines must be enclosed in double quotes.

- Missing values should be represented by an empty field or a standardized null token (e.g., `NA`).

Table 1: Quantitative Specifications for CSV Files in DeePEST-OS

| Feature | Specification | Example |
|---|---|---|
| Encoding | UTF-8 | - |
| Standard Delimiter | Comma (,) | `value1,value2,value3` |
| Alternative Delimiters | Tab, Semicolon | Must be declared in config |
| Text Qualifier | Double Quote (") | `"Value, with comma"` |
| Line Termination | LF or CRLF | System-agnostic parsing |
| Header | Strongly recommended | `Compound_ID,EC50,LogP` |
| Missing Data | Empty field or `NA` | `CMPD-001,2.5,NA` |
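
The CSV rules in Table 1 can be exercised with Python's standard `csv` module. The sketch below is illustrative only and is not part of the DeePEST-OS suite: the function name `read_tabular` and the choice to map null tokens to `None` are assumptions made for this example.

```python
import csv
import io

# Assumed null tokens, following the "Missing Data" row of Table 1.
NULL_TOKENS = {"", "NA"}


def read_tabular(text, delimiter=","):
    """Parse delimited text into a list of dicts, mapping null tokens to None."""
    # newline="" leaves LF/CRLF handling to the csv module.
    reader = csv.reader(io.StringIO(text, newline=""),
                        delimiter=delimiter, quotechar='"')
    header = next(reader, None)
    if header is None:
        return []
    rows = []
    for record_no, fields in enumerate(reader, start=2):
        if not fields:  # skip blank lines
            continue
        if len(fields) != len(header):
            raise ValueError(
                f"record {record_no}: expected {len(header)} fields, got {len(fields)}"
            )
        rows.append({name: (None if value in NULL_TOKENS else value)
                     for name, value in zip(header, fields)})
    return rows


# Mixed CRLF/LF endings, a quoted field containing the delimiter, and two
# styles of missing value (NA and an empty field).
sample = 'Compound_ID,EC50,LogP\r\nCMPD-001,2.5,NA\nCMPD-002,"1,200",\n'
rows = read_tabular(sample)
```

When reading from disk, the equivalent call would open the file with `open(path, encoding="utf-8", newline="")` so that the UTF-8 requirement is enforced at read time.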

JavaScript Object Notation (JSON)

Purpose in DeePEST-OS: Hierarchical configuration files for experiment parameters, model architectures, and nested metadata.

Specification: A lightweight, human-readable data-interchange format based on key-value pairs and ordered lists.

Key Requirements:

- Must conform to RFC 8259.

- Serves as the backbone for DeePEST-OS module configuration.

- Supports complex nested structures unsuitable for flat CSV files.

Table 2: JSON Structure for a DeePEST-OS Model Configuration

| JSON Key | Data Type | Description | Example Value |
|---|---|---|---|
| `experiment_id` | String | Unique experiment identifier | `"deeppest_exp_2023_001"` |
| `model_parameters` | Object | Nested model settings | `{"layers": 5, "activation": "relu"}` |
| `input_data_path` | String | Path to CSV/FASTA files | `"/data/screen_results.csv"` |
| `hyperparameters` | Object | Training parameters | `{"learning_rate": 0.001, "epochs": 100}` |
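
As a rough illustration of Table 2, the following sketch builds the example configuration and checks it for the four keys and their data types. The helper `check_config` is hypothetical and is not the DeePEST-OS `json_config_builder.py`. Treating all four keys as required is also an assumption made for the example. Python's `json` module accepts `NaN` and `Infinity` by default, which RFC 8259 does not allow, so the sketch rejects them explicitly.

```python
import json

# Keys and types taken from Table 2; treating them all as required is an assumption.
EXPECTED_KEYS = {
    "experiment_id": str,
    "model_parameters": dict,
    "input_data_path": str,
    "hyperparameters": dict,
}


def _reject_constant(name):
    # NaN, Infinity and -Infinity are not valid JSON under RFC 8259.
    raise ValueError(f"non-standard JSON constant: {name}")


def check_config(text):
    """Return a list of problems found in a JSON config string (empty if none)."""
    try:
        config = json.loads(text, parse_constant=_reject_constant)
    except ValueError as exc:  # json.JSONDecodeError is a subclass of ValueError
        return [f"invalid JSON: {exc}"]
    if not isinstance(config, dict):
        return ["top-level value must be a JSON object"]
    problems = []
    for key, expected in EXPECTED_KEYS.items():
        if key not in config:
            problems.append(f"missing key: {key}")
        elif not isinstance(config[key], expected):
            problems.append(f"{key} should be of type {expected.__name__}")
    return problems


example = {
    "experiment_id": "deeppest_exp_2023_001",
    "model_parameters": {"layers": 5, "activation": "relu"},
    "input_data_path": "/data/screen_results.csv",
    "hyperparameters": {"learning_rate": 0.001, "epochs": 100},
}
config_text = json.dumps(example, indent=2)
problems = check_config(config_text)
```

An empty `problems` list means the text parsed as strict JSON and carried every expected key with the expected type.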

FASTA

Purpose in DeePEST-OS: Representation of biological sequences (protein, DNA, RNA) for target identification and cheminformatics pipelines.

Specification: A text-based format where a single-line description (starting with >) is followed by lines of sequence data.

Key Requirements:

- Description line must contain a unique identifier.

- Sequence characters must be standard IUPAC codes.

- Sequence data can be wrapped (multiple lines) or unwrapped (single line).

Table 3: FASTA Format Specifications for DeePEST-OS

| Component | Format Rule | Example |
|---|---|---|
| Description Line | Begins with > | `>sp\|P01308\|INS_HUMAN Insulin OS=Homo sapiens OX=9606` |
| Sequence Line(s) | Subsequent lines contain sequence | MALWMRLLPL... |
| Allowed Characters | Protein: A-Z, *, -; DNA: A, T, G, C, N, - | Standard IUPAC |
| Line Length | Recommended max 80 characters for readability | - |
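
The FASTA rules above (unique identifier, allowed characters, wrapped or unwrapped sequence) can be checked with a few lines of Python. This is a minimal sketch and not the DeePEST-OS `fasta_validator.py`: the function name `parse_fasta` is hypothetical, and taking the first whitespace-delimited token of the description line as the identifier is an assumption. The sample reuses only the truncated sequence fragment shown in Table 3.

```python
# Character sets as listed in Table 3.
PROTEIN_CHARS = set("ABCDEFGHIJKLMNOPQRSTUVWXYZ*-")
DNA_CHARS = set("ATGCN-")


def parse_fasta(text, allowed=PROTEIN_CHARS):
    """Return {identifier: sequence}; raise ValueError on malformed input."""
    records = {}
    current_id = None
    for line_no, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line:
            continue
        if line.startswith(">"):
            fields = line[1:].split()
            if not fields:
                raise ValueError(f"line {line_no}: description line has no identifier")
            current_id = fields[0]
            if current_id in records:
                raise ValueError(f"line {line_no}: duplicate identifier {current_id}")
            records[current_id] = ""
        else:
            if current_id is None:
                raise ValueError(f"line {line_no}: sequence data before any description line")
            residues = line.upper()
            bad = set(residues) - allowed
            if bad:
                raise ValueError(f"line {line_no}: invalid characters {sorted(bad)}")
            # Wrapped sequences are joined into a single string.
            records[current_id] += residues
    return records


# Wrapped across two lines to show that wrapping does not change the result.
sample = ">sp|P01308|INS_HUMAN Insulin OS=Homo sapiens OX=9606\nMALWM\nRLLPL\n"
records = parse_fasta(sample)
```

Passing `allowed=DNA_CHARS` applies the nucleotide alphabet instead of the protein one.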

Custom Configuration File Template (DeePEST-CFG)

Purpose in DeePEST-OS: A hybrid template for defining complex, multi-part experiments, linking CSV data, JSON parameters, and FASTA sequences.

Specification: A YAML-like structure that provides a clear, hierarchical overview of an entire DeePEST-OS run.

Key Requirements:

- Uses `---` to separate document sections.

- Each section defines a different aspect of the experiment.

- References external data files (CSV, JSON, FASTA) or contains inline JSON.

Protocol 1: Creating a DeePEST-CFG File

- Initiate File: Open a new text file with a `.deepcfg` extension.

- Define Metadata Section: Start with `--- METADATA`. Include `experiment_name`, `principal_investigator`, and `date`.

- Define Inputs Section: Add `--- INPUTS`. List `data_file` (path to CSV), `sequence_file` (path to FASTA), and any `auxiliary_data`.

- Define Parameters Section: Add `--- PARAMETERS`. Embed a JSON object or reference an external `.json` config file using `$ref:`.

- Define Outputs Section: Add `--- OUTPUTS`. Specify `directory` and `formats` (e.g., `[".json", ".h5"]`).

- Validation: Use the DeePEST-OS `cfg_validator.py` tool to check syntax and file path integrity before execution.
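
To make the section layout of Protocol 1 concrete, the sketch below renders a four-section file and splits it back into sections. It is illustrative only. The protocol does not spell out how entries inside a section are written, so the `key: value` line syntax used here is an assumption. The helper names, the experiment name, investigator, date, and output directory are all placeholders. The real syntax check remains the job of `cfg_validator.py`.

```python
# Section names and their order follow Protocol 1.
SECTION_ORDER = ("METADATA", "INPUTS", "PARAMETERS", "OUTPUTS")


def render_deepcfg(sections):
    """Render a dict of {section: {key: value}} as text (assumed key: value lines)."""
    lines = []
    for name in SECTION_ORDER:
        lines.append(f"--- {name}")
        for key, value in sections[name].items():
            lines.append(f"{key}: {value}")
    return "\n".join(lines) + "\n"


def split_sections(text):
    """Split rendered text back into {section: {key: value}}."""
    sections = {}
    current = None
    for line in text.splitlines():
        if line.startswith("--- "):
            current = line[4:].strip()
            sections[current] = {}
        elif line.strip() and current is not None:
            # Split on the first colon only, so values may contain colons.
            key, _, value = line.partition(":")
            sections[current][key.strip()] = value.strip()
    return sections


example = {
    "METADATA": {
        "experiment_name": "egfr_affinity",      # placeholder
        "principal_investigator": "J. Doe",      # placeholder
        "date": "2024-01-01",                    # placeholder
    },
    "INPUTS": {
        "data_file": "affinity_screen_YYYYMMDD.csv",
        "sequence_file": "target_EGFR.fasta",
    },
    "PARAMETERS": {"$ref": "affinity_model_config.json"},
    "OUTPUTS": {"directory": "results/", "formats": '[".json", ".h5"]'},
}
cfg_text = render_deepcfg(example)
parsed = split_sections(cfg_text)
```

Round-tripping the text through `split_sections` is a quick way to confirm that every expected section header is present before handing the file to the validator.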

Experimental Protocol for Integrated Data Preparation

Protocol 2: End-to-End Input Preparation for a Target Affinity Prediction Experiment

This protocol integrates all four file formats to prepare a DeePEST-OS run predicting small-molecule binding affinity to a protein target.

I. Materials & Reagent Solutions (The Scientist's Toolkit)

- High-Throughput Screening Data Export: Raw dose-response data in plate reader proprietary format (e.g., `.xlsx`).

- Sequence Database (e.g., UniProt): Source for obtaining the canonical FASTA sequence of the target protein.

- DeePEST-OS Software Suite: Includes `csv_formatter.py`, `json_config_builder.py`, and `cfg_validator.py`.

- Text Editor or IDE: For editing and viewing JSON, YAML, and configuration files (e.g., VS Code, Sublime Text).

- Command Line Terminal: For executing validation and preparation scripts.

II. Procedure

A. Data Curation & CSV Generation

- Export raw inhibition/response data from the screening instrument.

- Using statistical software (e.g., R, Python/pandas), calculate the desired activity metric (e.g., pIC50, % inhibition at 10 µM).

- Create a structured table with required columns: `Compound_ID`, `SMILES` (canonical), `Activity_Metric`, `Assay_Type`.

- Apply the DeePEST-OS CSV specifications from Table 1. Save as `affinity_screen_YYYYMMDD.csv`.
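
Step A can be sketched with the standard `csv` module. The compound identifiers, SMILES strings, and activity values below are placeholders rather than case-study data. The helper name `write_activity_csv` and the two-decimal formatting are assumptions made for the example.

```python
import csv
import io

# Required columns from step A.
COLUMNS = ["Compound_ID", "SMILES", "Activity_Metric", "Assay_Type"]


def write_activity_csv(records, handle):
    """Write records with the required columns, using NA for missing activity."""
    writer = csv.DictWriter(handle, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for record in records:
        row = dict(record)
        value = row["Activity_Metric"]
        # Missing measurements become the standardized null token from Table 1.
        row["Activity_Metric"] = "NA" if value is None else f"{value:.2f}"
        writer.writerow(row)


# Placeholder records; the comma in Assay_Type forces double-quote qualification.
records = [
    {"Compound_ID": "CMPD-001", "SMILES": "CCO",
     "Activity_Metric": 6.3, "Assay_Type": "Biochemical, TR-FRET"},
    {"Compound_ID": "CMPD-002", "SMILES": "c1ccccc1",
     "Activity_Metric": None, "Assay_Type": "Biochemical, TR-FRET"},
]
buffer = io.StringIO()
write_activity_csv(records, buffer)
csv_text = buffer.getvalue()
```

To write to disk instead of an in-memory buffer, open the target with `open(path, "w", encoding="utf-8", newline="")` and pass the handle to the same function.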

B. Target Definition via FASTA

- Navigate to the UniProt database (www.uniprot.org).

- Search for the target protein (e.g., "Human EGFR").

- Download the canonical sequence in FASTA format.

- Validate the sequence using `fasta_validator.py` to ensure it contains only valid IUPAC amino acid codes. Save as `target_EGFR.fasta`.

C. Model Configuration in JSON

- Use the template from Table 2 as a starting point.

- Modify the `model_parameters` to specify a graph neural network or transformer architecture suitable for structure-activity relationship modeling.

- Set `input_data_path` to the location of the CSV from step A.

- Define `hyperparameters` for optimization. Save as `affinity_model_config.json`.

D. Unified Experiment Definition with DeePEST-CFG

- Follow Protocol 1 to create a new `.deepcfg` file.

- In the `INPUTS` section, point `data_file` to `affinity_screen_YYYYMMDD.csv` and `sequence_file` to `target_EGFR.fasta`.

- In the `PARAMETERS` section, use `$ref: affinity_model_config.json`.

- Run the validation script: `cfg_validator.py --config experiment.deepcfg`.

III. Expected Results & Quality Control

- A validated configuration file that passes all path and syntax checks.

- A standardized, machine-readable dataset ready for ingestion by DeePEST-OS training pipelines.

- Log files from the validation script confirming the integrity of all linked external files.

Visual Workflows

Diagram Title: DeePEST-OS Input File Integration Workflow

Diagram Title: Input File Preparation and Validation Protocol

This document serves as a detailed application note within the broader research thesis on DeePEST-OS input preparation and data requirements. DeePEST-OS (Deep Learning for Pharmacokinetic, Efficacy, Safety, and Toxicity - Omics Systems) is a predictive modeling platform for drug development. The accuracy and completeness of its input datasets are paramount for generating reliable predictions of compound behavior. This case study provides a practical walkthrough for constructing a comprehensive, multi-modal input dataset suitable for training and validating DeePEST-OS models.

Case Study: Building a Dataset for a Kinase Inhibitor Program

This protocol details the assembly of a dataset for a hypothetical pan-kinase inhibitor development program targeting oncology indications. The dataset integrates chemical, in vitro, in vivo, and clinical data.

All quantitative data extracted from literature and public repositories for the case study are summarized below.

Table 1: Chemical and In Vitro ADMET Properties for Candidate Compounds

| Compound ID | Molecular Weight (Da) | LogP | Solubility (µM) | CYP3A4 Inhibition (IC50, µM) | hERG Inhibition (IC50, µM) | Kinase Target A (pIC50) | Kinase Target B (pIC50) |

|---|---|---|---|---|---|---|---|

| CPI-001 | 412.5 | 3.2 | 15.2 | >50 | 12.5 | 8.1 | 6.9 |

| CPI-002 | 398.4 | 2.8 | 45.6 | 25.4 | >50 | 7.8 | 7.5 |

| CPI-003 | 435.6 | 4.1 | 5.8 | 5.2 | 8.7 | 9.2 | 5.1 |

| CPI-004 | 387.3 | 2.5 | 120.3 | >50 | >50 | 6.5 | 8.4 |

Table 2: In Vivo Pharmacokinetic Parameters (Rat, IV & PO)

| Compound ID | CL (mL/min/kg) | Vdss (L/kg) | t1/2 (h) | F (%) | Cmax (ng/mL) | AUC0-∞ (h*ng/mL) |

|---|---|---|---|---|---|---|

| CPI-001 | 25.6 | 2.8 | 1.9 | 45 | 520 | 2850 |

| CPI-002 | 18.2 | 1.5 | 1.4 | 78 | 1250 | 5120 |

| CPI-003 | 32.4 | 5.1 | 2.9 | 22 | 210 | 980 |

| CPI-004 | 15.7 | 1.2 | 1.3 | 85 | 1480 | 6050 |

Table 3: Clinical Efficacy and Safety Endpoints (Phase Ib)

| Endpoint | Dose Level 1 (50 mg) | Dose Level 2 (100 mg) | Dose Level 3 (200 mg) | Placebo |

|---|---|---|---|---|

| Objective Response Rate (ORR, %) | 10 | 25 | 35 | 2 |

| Progression-Free Survival (PFS, months) | 3.2 | 5.6 | 8.1 | 2.9 |

| Incidence of Grade ≥3 Hypertension (%) | 5 | 15 | 30 | 3 |

| Incidence of Elevated ALT (>3x ULN, %) | 8 | 12 | 20 | 5 |

Experimental Protocols for Data Generation

Protocol: High-Throughput Kinase Profiling Assay

Purpose: To determine the inhibitory potency (pIC50) of compounds against a panel of recombinant human kinases.

Materials: See "The Scientist's Toolkit" (Section 5).

Method:

- Prepare a 10 mM stock solution of each test compound in DMSO. Serial dilute in DMSO to create a 10-point, 1:3 dilution series.

- In a 384-well assay plate, transfer 50 nL of each dilution (in triplicate) using an acoustic dispenser. Include DMSO-only control wells (0% inhibition) and control inhibitor wells (100% inhibition).

- Prepare kinase reaction mix containing recombinant kinase, ATP (at Km concentration), and fluorescently-labeled peptide substrate in assay buffer (50 mM HEPES pH 7.5, 10 mM MgCl2, 1 mM DTT, 0.01% Brij-35).

- Dispense 5 µL of kinase reaction mix into each well to initiate the reaction. Final DMSO concentration must be ≤1%.

- Incubate plate at 25°C for 60 minutes.

- Stop the reaction by adding 5 µL of development solution containing EDTA and a detection reagent (e.g., anti-phospho antibody coupled to Eu3+-chelate for TR-FRET).

- Incubate for 60 minutes at 25°C.

- Read plate on a compatible plate reader (e.g., TR-FRET or Mobility Shift).

- Data Analysis: Calculate percent inhibition relative to controls. Fit dose-response curves using a four-parameter logistic (4PL) model to determine IC50. Convert to pIC50 (-log10(IC50), with IC50 expressed in mol/L).
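
The arithmetic behind the data analysis step can be sketched in plain Python. The function names are hypothetical, "1:3" is assumed to mean a 3-fold dilution per step, and the 4PL parameterization shown (inhibition rising from `bottom` to `top`) is one common form rather than a form prescribed by the protocol. Curve fitting itself is left out. In practice it would be handed to a nonlinear least-squares routine.

```python
import math


def final_concentrations(stock_molar=10e-3, points=10, fold=3.0,
                         transfer_l=50e-9, reaction_l=5e-6):
    """Final assay concentrations (mol/L) for each point of the dilution series."""
    # 50 nL of compound in DMSO is diluted into 5 uL of kinase reaction mix.
    dilution = transfer_l / (transfer_l + reaction_l)
    return [stock_molar / fold ** i * dilution for i in range(points)]


def percent_inhibition(signal, mean_0pct, mean_100pct):
    """Scale a raw signal between the DMSO (0%) and control inhibitor (100%) means."""
    return 100.0 * (mean_0pct - signal) / (mean_0pct - mean_100pct)


def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic: predicted % inhibition at a given concentration."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)


def pic50(ic50_molar):
    """Convert an IC50 in mol/L to pIC50."""
    return -math.log10(ic50_molar)


series = final_concentrations()
# Fraction of DMSO in the well after compound transfer, as a percentage.
dmso_percent = 100.0 * 50e-9 / (50e-9 + 5e-6)
midpoint = four_pl(conc=1e-8, bottom=0.0, top=100.0, ic50=1e-8, hill=1.0)
```

With these volumes the top concentration of the series is close to 100 µM and the DMSO fraction stays just under the 1% limit stated in the method. By construction, the 4PL curve returns the midpoint between `bottom` and `top` when the concentration equals the IC50.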

Protocol: In Vivo Rat Pharmacokinetic Study

Purpose: To determine fundamental PK parameters (CL, Vdss, t1/2, F%) following intravenous (IV) and oral (PO) administration.

Method:

- Animal Preparation: House male Sprague-Dawley rats (n=3 per route per compound) with cannulas implanted in the jugular vein. Fast overnight prior to dosing with free access to water.

- Dose Formulation: Prepare IV solution in sterile saline (<5% DMSO final). Prepare PO suspension in 0.5% methylcellulose.

- Dosing and Sampling: Administer IV bolus at 1 mg/kg via tail vein. Administer PO gavage at 5 mg/kg. Collect blood samples (~100 µL) via jugular cannula at pre-dose, 0.083, 0.25, 0.5, 1, 2, 4, 6, 8, and 24 hours post-dose.

- Bioanalysis: Centrifuge blood to obtain plasma. Precipitate proteins with acetonitrile containing internal standard. Analyze supernatant using a validated LC-MS/MS method.