Strategies to Reduce False Positive Rates in Biased High-Throughput Screening (HTS) Data

Abstract

High-Throughput Screening (HTS) is a cornerstone of modern drug discovery, enabling the rapid evaluation of thousands of compounds. However, its efficiency is critically undermined by high false positive rates, often exacerbated by systematic biases inherent in experimental data, leading to wasted resources and project delays. This article provides a comprehensive guide for researchers and drug development professionals on identifying, correcting, and preventing bias in HTS data. It covers foundational concepts of HTS bias and its costly impact, explores proven statistical and computational correction methodologies, offers troubleshooting strategies for common experimental artifacts, and details rigorous validation frameworks for method comparison and performance assessment. By implementing these strategies, scientists can significantly improve the signal-to-noise ratio in screening campaigns, leading to more reliable hit identification and a more efficient drug discovery pipeline.

Understanding the Cost and Origins of False Positives in HTS

The Growing HTS Market and Its Dependence on Data Quality

High-Throughput Screening (HTS) is a cornerstone of modern drug discovery, enabling the rapid testing of thousands to millions of chemical or biological compounds. The global HTS market is projected to grow significantly, driven by technological advancements and rising R&D investments. However, the value of any HTS campaign is critically dependent on the quality of the data generated. High false positive and false negative rates directly impact research costs and timelines, making data quality assurance a primary concern for researchers.

Technical Support Center: Troubleshooting HTS Data Quality

FAQs & Troubleshooting Guides

Q1: We are experiencing high false positive rates in our cell-based viability assay. What could be the cause? A: High false positives in viability assays are often due to compound interference. Common culprits include:

- Fluorescence Interference: Test compounds may auto-fluoresce or quench the assay signal.

- Compound Precipitation: Insoluble compounds can scatter light or non-specifically interact with cells.

- Cytotoxicity: Actual cytotoxicity from the compound, unrelated to the primary target.

Troubleshooting Steps:

- Implement Counterscreens: Use a different detection technology (e.g., switch from fluorescence to luminescence) for confirmation.

- Check Solubility: Visually inspect wells for precipitate using a microscope. Use DMSO controls and ensure final DMSO concentration is ≤1%.

- Dose-Response Analysis: False positives often lack a clear, reproducible dose-response curve. Run a 10-point titration.

Q2: Our assay shows excessive well-to-well variation (low Z' factor). How can we improve consistency? A: A low Z' factor (<0.5) indicates a narrow assay signal window, high variability, or both. Troubleshooting Steps:

- Cell Health & Passage Number: Ensure cells are healthy, consistently passaged, and used within an appropriate range.

- Reagent Temperature: Equilibrate all assay reagents (especially cells) to 37°C before dispensing to minimize "edge effects."

- Liquid Handling: Calibrate pipettors and dispensers. Check for clogged tips or inconsistent dispensing.

- Incubation Conditions: Use a humidified, CO₂-controlled incubator. Seal plates to prevent evaporation during incubation.
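
For reference, the Z' factor discussed above is conventionally computed from the positive- and negative-control wells of a plate as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The snippet below is a minimal sketch of that calculation; the control readings are made-up illustrative values and `z_prime` is a hypothetical helper name, not part of any specific HTS package.

```python
from statistics import mean, stdev


def z_prime(pos_controls, neg_controls):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(pos_controls) - mean(neg_controls))
    if window == 0:
        raise ValueError("controls have identical means; Z' is undefined")
    return 1.0 - 3.0 * (stdev(pos_controls) + stdev(neg_controls)) / window


# Hypothetical control readings from a single plate (arbitrary units).
high = [980, 1010, 995, 1005, 990, 1020]
low = [110, 95, 105, 100, 90, 100]

zp = z_prime(high, low)
status = "acceptable" if zp >= 0.5 else "investigate variability"
print(f"Z' = {zp:.2f} ({status})")
```

Tracking this value per plate, rather than once per run, makes it easier to see which of the troubleshooting steps above actually improved consistency.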

Q3: How can we identify and mitigate the impact of "frequent hitters" (pan-assay interference compounds, PAINS) early? A: PAINS exhibit activity across multiple unrelated assays through non-specific mechanisms. Mitigation Protocol:

- In Silico Filtering: Use cheminformatics tools to flag compounds with known PAINS substructures prior to screening.

- Assay Design: Use orthogonal assays (e.g., biochemical + cell-based) for hit confirmation.

- Promiscuity Assay: Include a counter-screen designed to detect common interference mechanisms (e.g., redox activity, aggregation).

Key HTS Market Data & Impact of Data Quality

Table 1: Global HTS Market Projection & Cost of Poor Data

| Metric | Value (2023) | Projected Value (2030) | Annual Growth Rate (CAGR) | Notes |

|---|---|---|---|---|

| Market Size | USD 25.1 Billion | USD 41.8 Billion | ~7.5% | Driven by drug discovery and academic research. |

| Average Cost per HTS Campaign | USD 50,000 - 500,000 | - | - | Varies by scale and technology. |

| *Estimated Cost of False Positive | 30-50% of follow-up resources | - | - | Wasted on invalid leads in triage & validation. |

| Target False Positive Rate | <10% (Hit Validation Stage) | Industry Goal: <5% | - | Critical for efficient pipeline progression. |

*False positives consume significant resources in secondary assay validation, chemistry, and early ADMET studies.

Experimental Protocol: Orthogonal Hit Confirmation to Reduce False Positives

Objective: To validate primary HTS hits using an orthogonal detection method, eliminating technology-specific artifacts.

Materials (The Scientist's Toolkit):

Table 2: Research Reagent Solutions for Orthogonal Confirmation

| Item | Function | Example (Vendor Specific Info Varies) |

|---|---|---|

| Primary Hit Library | Compounds identified from initial HTS. | In-house or purchased compound plates. |

| Orthogonal Assay Kit | Detects same target/biochemistry via different signal mechanism. | Switch from FP to TR-FRET or Luminescence. |

| Positive Control Inhibitor/Agonist | Validates assay performance in confirmation run. | Known potent compound for the target. |

| Low-Binding Microplates | Minimizes compound adsorption. | Polypropylene or coated plates. |

| Liquid Handler | Ensures precise compound transfer and dilution. | Automated pipetting station. |

Methodology:

- Hit Pick: Transfer primary hits (e.g., top 0.5% of library) to a new source plate.

- Dose-Response Preparation: Using a liquid handler, prepare a 10-point, 1:3 serial dilution of each hit in DMSO. Then, dilute into assay buffer.

- Orthogonal Assay Setup: In a low-binding plate, combine target, substrate, and diluted compounds. Use the same biology but a different detection method (e.g., if primary was absorbance, use luminescence).

- Run & Analyze: Execute assay according to kit protocol. Fit dose-response curves. A true hit will show a congruent potency (IC50/EC50) and curve shape between the primary and orthogonal assays.

- Triaging: Compounds that are inactive or show grossly discrepant potency in the orthogonal assay are likely false positives and can be deprioritized.
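
As a small worked example of the dose-response preparation step, the sketch below lists the concentrations in a 10-point, 1:3 serial dilution. The 30 µM top concentration is an assumption chosen for illustration; the protocol does not specify one.

```python
def serial_dilution(top_conc_um, dilution_factor=3.0, n_points=10):
    """Return concentrations (µM) for an n-point serial dilution."""
    return [top_conc_um / dilution_factor ** i for i in range(n_points)]


# Assumed top concentration of 30 µM (illustrative only).
series = serial_dilution(top_conc_um=30.0)
for point, conc in enumerate(series, start=1):
    print(f"point {point:2d}: {conc:10.4f} µM")

# Fold range covered between the highest and lowest concentration.
fold_range = series[0] / series[-1]
print(f"fold range: {fold_range:.0f}x")
```

A 1:3 series over 10 points spans 3^9 (about 4.3 log units), which is usually wide enough to capture both plateaus of a sigmoidal curve.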

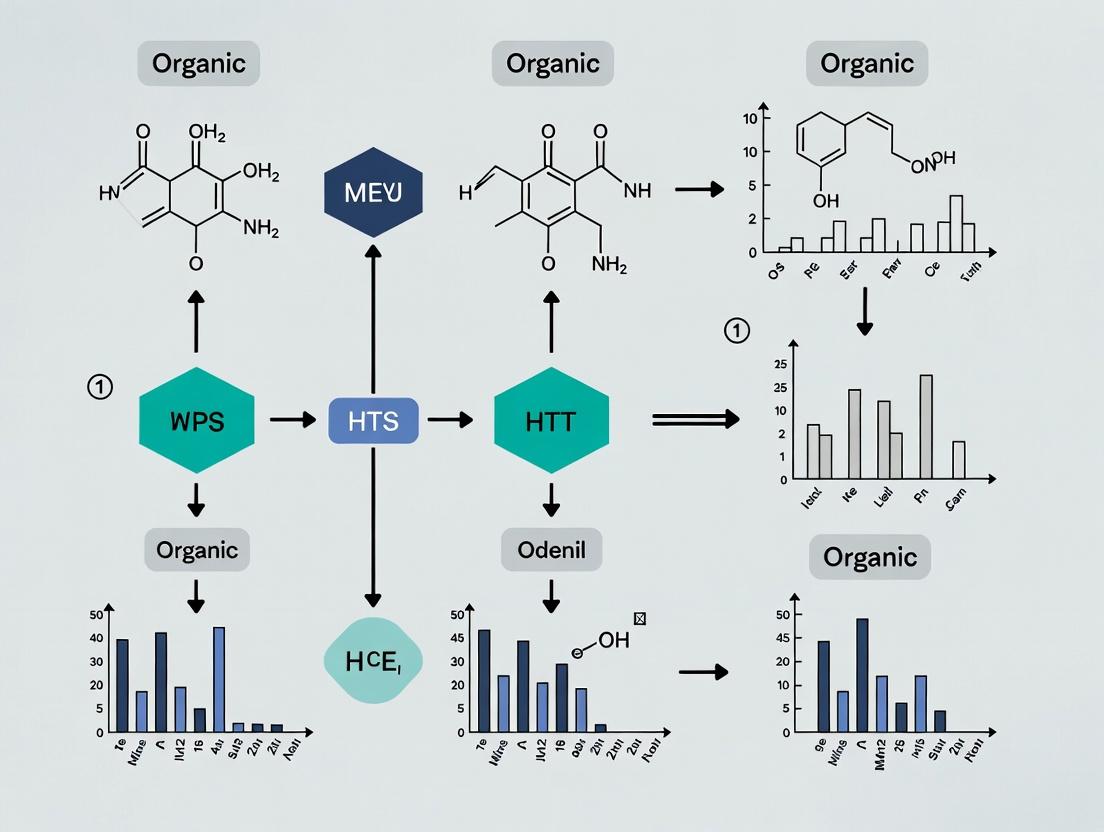

Visualization: HTS Hit Triage Workflow for Quality Control

Visualization: Common HTS Assay Interference Pathways

Technical Support Center

This support center provides troubleshooting guidance for common issues encountered in high-throughput screening (HTS) experiments, specifically framed within the thesis of reducing false positive rates in biased HTS data.

Troubleshooting Guides & FAQs

Q1: Our primary HTS hit compounds show strong activity in the assay but fail in all subsequent orthogonal validation assays. Are these false positives? What are the likely causes?

A: Yes, these are likely false positives. Common causes and solutions include:

- Assay Interference: The compound may interfere with the assay's detection method (e.g., fluorescence quenching, absorbance, luminescence).

- Troubleshooting Step: Re-test hits in a counter-screen or a different assay technology (e.g., switch from fluorescence intensity to time-resolved fluorescence or AlphaScreen).

- Compound Aggregation: Molecules form colloidal aggregates that non-specifically inhibit enzymes or sequester proteins.

- Troubleshooting Step: Add non-ionic detergents (e.g., 0.01% Triton X-100) to the assay buffer. Check for detergent-sensitive inhibition.

- Chemical Reactivity/Promiscuity: The compound is a pan-assay interference compound (PAINS) that reacts nonspecifically.

- Troubleshooting Step: Filter hits against PAINS substructure libraries. Perform covalent binding or redox-activity assays.

Q2: We suspect our screening data has a high false negative rate, missing potentially valuable compounds. What experimental factors could lead to this?

A: False negatives occur when active compounds are incorrectly labeled as inactive. Key factors:

- Suboptimal Assay Conditions: The chosen buffer, pH, or ionic strength may not reflect physiological conditions, masking true binding/activity.

- Troubleshooting Step: Re-optimize assay buffer components (e.g., ATP concentration for kinase assays, cation concentrations) to be more physiologically relevant.

- Inadequate Library Diversity or Concentration: The compound library may lack chemical diversity, or the screening concentration may be too low to detect weak binders.

- Troubleshooting Step: Use a tiered screening approach with an initial higher concentration (e.g., 10 µM) to capture weaker actives for further characterization.

- Data Analysis Thresholds: The activity threshold (e.g., >50% inhibition) or Z'-factor cutoff may be set too stringently.

- Troubleshooting Step: Re-analyze raw data using more sensitive statistical methods (e.g., strictly standardized mean difference - SSMD) or lower thresholds to re-examine borderline compounds.
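
The strictly standardized mean difference (SSMD) mentioned above is, for two independent groups, the difference in means divided by the square root of the summed variances. The following is a minimal sketch under that definition; the well readings are invented for illustration and `ssmd` is a hypothetical helper name.

```python
from math import sqrt
from statistics import mean, variance


def ssmd(sample, reference):
    """SSMD for independent groups: (mean_s - mean_r) / sqrt(var_s + var_r)."""
    return (mean(sample) - mean(reference)) / sqrt(variance(sample) + variance(reference))


# Hypothetical signal (% of control) for replicate compound wells vs. DMSO wells.
compound_wells = [40, 45, 50, 42, 48]
dmso_wells = [100, 98, 103, 101, 99]

effect = ssmd(compound_wells, dmso_wells)
print(f"SSMD = {effect:.2f}")
```

Because SSMD scales the effect by its variability, a borderline compound with a modest but very reproducible effect can rank above one that crosses a fixed percent-inhibition cutoff only in a single noisy replicate.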

Q3: How can we differentiate between a true, mechanistically interesting hit and a false positive during secondary validation?

A: Implement a multiparameter orthogonal validation cascade. A single assay is insufficient.

- Dose-Response Confirmation: Confirm activity in the primary assay with a full concentration-response curve (CRC). A true hit should show a sigmoidal CRC.

- Biophysical Validation: Use surface plasmon resonance (SPR) or thermal shift assay (TSA) to confirm direct, stoichiometric binding.

- Functional Orthogonal Assay: Test in a cell-based assay that measures downstream functional activity, not just the primary target interaction.

- Selectivity Screening: Test against related targets (e.g., kinase family members) to assess specificity early.

Q4: What are the quantitative impacts of false positives/negatives on the drug discovery pipeline?

A: The impact is substantial and costly, as summarized below.

Table 1: Impact of False Results on Drug Discovery Pipeline

| Metric | Impact of High False Positives | Impact of High False Negatives |

|---|---|---|

| Cost | Wastes resources on invalid leads. Cost per valid lead can exceed $500k. | Missed opportunities. Potential loss of a blockbuster drug (>$1B revenue). |

| Timeline | Adds 6-12 months of wasted effort in hit-to-lead optimization. | Indefinite delay; the opportunity may be lost permanently. |

| Pipeline Efficiency | Clogs the pipeline with unproductive compounds, reducing throughput. | Leads to a depleted pipeline, requiring new screening campaigns. |

| Attrition Rate | Directly contributes to late-stage (Phase II/III) attrition due to lack of efficacy or toxicity. | Contributes to early-stage (pre-clinical) stagnation. |

Experimental Protocols

Protocol 1: Detecting Compound Aggregation (A Common False Positive Source) Objective: To determine if HTS hit inhibition is caused by non-specific compound aggregation. Materials: Compound hits, assay buffer, non-ionic detergent (Triton X-100), DMSO, positive control inhibitor. Method:

- Prepare two sets of assay reaction mixtures for each hit compound at its IC₅₀ concentration.

- To the test set, add Triton X-100 to a final concentration of 0.01%.

- The control set contains no detergent.

- Run the primary HTS assay under identical conditions for both sets.

- Analysis: A significant reduction (>50%) in inhibitory activity in the detergent-containing test set indicates the activity was likely due to compound aggregation.
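
The analysis step can be expressed as a short calculation. This is a sketch only: the compound names and inhibition values are hypothetical, and the 50% cutoff is the one stated in the protocol.

```python
def detergent_sensitivity(inhib_without, inhib_with, threshold=0.5):
    """Return (fractional loss of inhibition, likely-aggregator flag).

    inhib_without / inhib_with are percent inhibition measured without and
    with 0.01% Triton X-100, respectively.
    """
    if inhib_without <= 0:
        raise ValueError("compound shows no inhibition without detergent")
    loss = (inhib_without - inhib_with) / inhib_without
    return loss, loss > threshold


# Hypothetical hits tested at their IC50 concentration.
hits = {"cpd_A": (52.0, 48.0), "cpd_B": (55.0, 12.0)}
results = {name: detergent_sensitivity(*values) for name, values in hits.items()}

for name, (loss, flagged) in results.items():
    label = "likely aggregator" if flagged else "detergent-insensitive"
    print(f"{name}: {loss:.0%} loss of inhibition -> {label}")
```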

Protocol 2: Orthogonal Validation Using a Thermal Shift Assay (TSA) Objective: Confirm direct target engagement of a primary HTS hit. Materials: Purified target protein, hit compounds, SYPRO Orange dye, real-time PCR instrument, buffer. Method:

- Prepare a 96-well PCR plate. In each well, mix purified protein (1-5 µM) with compound (10-20 µM) or DMSO control in assay buffer.

- Add SYPRO Orange dye to a final concentration per manufacturer's instructions.

- Perform a temperature ramp (e.g., 25°C to 95°C at 1°C/min) in the real-time PCR instrument while monitoring fluorescence.

- Analysis: Calculate the melting temperature (Tₘ) for each sample. A positive shift in Tₘ (ΔTₘ > 1-2°C) for the compound sample compared to DMSO indicates ligand-induced thermal stabilization and direct binding.
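
The protocol does not say how Tₘ is extracted from the melt curve. One common approach, assumed here, is to take the temperature at which the fluorescence rises most steeply (the maximum of dF/dT). The sketch below uses synthetic sigmoidal curves rather than real instrument data, and real traces would normally need smoothing first.

```python
from math import exp


def melting_temperature(temps, fluorescence):
    """Estimate Tm as the midpoint of the interval with the steepest rise."""
    best_slope, tm = float("-inf"), None
    for i in range(len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if slope > best_slope:
            best_slope, tm = slope, (temps[i] + temps[i + 1]) / 2
    return tm


def synthetic_melt(temps, tm, width=1.5):
    """Idealised two-state unfolding curve (illustrative, not measured data)."""
    return [1.0 / (1.0 + exp((tm - t) / width)) for t in temps]


temps = list(range(25, 96))  # 25 °C to 95 °C in 1 °C steps
tm_dmso = melting_temperature(temps, synthetic_melt(temps, tm=50.5))
tm_cpd = melting_temperature(temps, synthetic_melt(temps, tm=53.5))

delta_tm = tm_cpd - tm_dmso
print(f"Tm(DMSO) = {tm_dmso:.1f} °C, Tm(compound) = {tm_cpd:.1f} °C, dTm = {delta_tm:.1f} °C")
```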

Visualizations

Diagram 1: HTS Hit Triage & Validation Workflow

Diagram 2: Sources of Bias Leading to False Results in HTS

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Mitigating False Positives/Negatives

| Reagent / Material | Function in Troubleshooting | Typical Use Case |

|---|---|---|

| Non-ionic Detergent (Triton X-100, CHAPS) | Disrupts compound aggregates, identifying aggregation-based false positives. | Added to assay buffer at 0.01% during hit confirmation. |

| Reducing Agents (DTT, TCEP) | Mitigates false positives from redox-active compounds (PAINS). | Included in assay buffer to stabilize protein thiols. |

| Orthogonal Assay Kits (SPR, TSA, AlphaScreen) | Provides a biophysical or alternative readout to confirm target engagement. | Secondary validation after primary HTS. |

| PAINS Substructure Filter Libraries | Computational filters to flag compounds with known promiscuous motifs. | Applied to virtual libraries pre-screening and to primary hit lists. |

| Broad-Selectivity Panels (e.g., Kinase Panels) | Assess compound selectivity early, filtering out promiscuous binders. | Profiling confirmed hits against 50-100 related targets. |

| High-Quality Positive/Negative Control Compounds | Ensures assay robustness (Z'-factor) and identifies plate-based systematic errors. | Included on every screening plate. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ Category 1: Plate-Based Artifacts & Edge Effects

Q1: Our HTS run shows a clear "edge effect," with significantly altered activity in the outer wells of all plates. What are the likely causes and solutions?

- A: This is a classic environmental bias caused by uneven evaporation or temperature gradients in plate incubators or readers.

- Troubleshooting Protocol:

- Confirm: Plot Z'-factor or raw signal per well position (see Table 1).

- Mitigation Steps:

- Use automated plate hotel incubators with uniform thermal control.

- Employ plate seals designed for long-term incubation (e.g., breathable seals for cell-based assays).

- Implement "plate randomization" in the workflow, where test compounds are not assigned to fixed positions.

- Utilize "control spacing," placing control wells (high, low, neutral) scattered across the plate, not just at edges.

Q2: We observe column-wise or row-wise streaks in our luminescence readout. What should we check?

- A: This often indicates liquid handler or dispenser malfunction.

- Troubleshooting Protocol:

- Diagnose: Perform a "dye test" using a fluorescent solution to visualize dispensing uniformity across the plate.

- Action:

- Calibrate the liquid handler's tips for volume accuracy and precision.

- Check for partial tip clogging. Implement a rigorous tip cleaning or replacement schedule.

- Verify that the dispenser is correctly aligned over the plate.

FAQ Category 2: Signal Variability & Background Noise

Q3: Our fluorescence-based assay shows high background and variable signal-to-noise (S/N) between runs. How can we stabilize it?

- A: This can stem from reagent instability, plate autofluorescence, or reader calibration drift.

- Troubleshooting Protocol:

- Characterize: Perform a longitudinal control plate assay over several days to track S/N drift.

- Solutions:

- Aliquot and store light-sensitive reagents (e.g., fluorescent probes) at consistent, recommended temperatures.

- Use low-autofluorescence, black-walled plates for fluorescence assays.

- Establish a weekly reader maintenance routine: calibrate PMT gains, clean optics, and run a uniform plate to check lamp health.

Q4: Cell viability data from the same cell line shows unexplained cyclical variation day-to-day.

- A: This is likely due to environmental factors in cell culture affecting assay readiness.

- Troubleshooting Protocol:

- Document: Log passage number, confluency at harvest, and technician for each experiment.

- Standardize:

- Enforce strict cell culture protocols (exact passage range, media batch, incubation time).

- Allow cells to recover for a standardized period (e.g., 24 hours) after plating before compound addition.

- Use a multiplexed assay (e.g., viability + a constitutively expressed reporter) to normalize for cell number.

FAQ Category 3: Data Artifacts & Normalization

Q5: After normalization, we still see a batch effect between plates run on different days. How should we correct for this?

- A: Inter-plate batch effects require robust normalization that uses stable control references.

- Experimental Protocol for Normalization:

- On every plate, include a full column/row of neutral controls (e.g., DMSO-only) and, if possible, a stable weak positive control.

- Use "B-score" normalization instead of a plain Z-score. The B-score applies a two-way median polish to remove row and column effects within a plate, then scales the residuals by the plate's median absolute deviation (MAD) so that plates become comparable.

- The formula:

$$B_{ij} = \frac{\text{Raw}_{ij} - M - R_i - C_j}{\text{MAD}}$$

where $M$ is the plate median, $R_i$ and $C_j$ are the row and column effects, and MAD is the median absolute deviation of the residuals.

- Apply this correction plate-by-plate before aggregating the dataset.

Table 1: Common HTS Artifacts and Their Quantitative Impact

| Artifact Type | Typical Signal Deviation | Affected Wells | Primary Cause | Diagnostic Metric |

|---|---|---|---|---|

| Edge Effect | +/- 25-40% from plate median | Outer 36 wells (96-well plate) | Evaporation/Temp Gradient | Z'-factor drop >0.2 in edge vs. center |

| Liquid Handler Error | +/- 15-30% CV within column | Entire column/row | Clogged tip, misalignment | Dispensing CV >10% in dye test |

| Reader Lamp Decay | Signal decrease of 5-15% per 100 hrs | All wells uniformly | Aging Xenon flash lamp | Decrease in reference well intensity over time |

| Cell Passage Effect | Viability shift of 10-25% | All wells with cells | High passage number (>p25) | Significant (p<0.01) drift in low-control signal |

Table 2: Efficacy of Normalization Methods on False Positive Rate (FPR)

| Normalization Method | Reduction in FPR* | Pros | Cons |

|---|---|---|---|

| Raw Data (Unnormalized) | Baseline (0% reduction) | None | Highly susceptible to all biases |

| Mean/Median Per Plate | 30-50% | Simple, removes global plate shift | Does not correct within-plate patterns |

| Z-Score Per Plate | 50-70% | Standardizes scale across plates | Assumes normal distribution, sensitive to outliers |

| B-Score Normalization | 70-85% | Removes row/column effects, robust to outliers | More complex calculation |

| Control-Based (e.g., Neutral Control) | 60-75% | Biologically relevant, direct scaling | Depends on control stability and placement |

*FPR reduction estimated in benchmark studies using known true negatives in publicly available datasets (e.g., PubChem).

Experimental Protocols

Protocol 1: Diagnostic Dye Test for Liquid Handler Performance Objective: To visualize and quantify dispensing uniformity. Materials: See "Scientist's Toolkit" below. Steps:

- Prepare a 10 µM solution of fluorescein in assay buffer.

- Program the liquid handler to dispense the target volume (e.g., 50 nL) into a clear-bottom, black-walled microplate filled with 50 µL of PBS per well.

- After dispensing, incubate the plate for 5 minutes protected from light.

- Read fluorescence on a plate reader (ex/em ~485/535 nm).

- Analysis: Calculate the Coefficient of Variation (CV) of the signal for all wells. A CV >10% indicates poor uniformity and requires instrument servicing.
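
The analysis step can be sketched as follows. The plate readings are invented to mimic one partially clogged tip, and reporting a per-column CV alongside the whole-plate CV is an addition for illustration (it helps localise the faulty channel); the 10% limit is the one given in the protocol.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical fluorescein readings (4 rows x 6 columns); column 3 under-dispenses.
plate = [
    [1000, 1010, 990, 1005, 995, 1000],
    [1005, 995, 1000, 610, 1010, 990],
    [990, 1000, 1010, 1000, 1005, 995],
    [1000, 1005, 995, 590, 990, 1010],
]

overall_cv = percent_cv([v for row in plate for v in row])
column_cv = [percent_cv([row[j] for row in plate]) for j in range(len(plate[0]))]
flagged = [j for j, cv in enumerate(column_cv) if cv > 10.0]

print(f"whole-plate CV: {overall_cv:.1f}%")
print(f"columns exceeding 10% CV: {flagged}")
```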

Protocol 2: B-Score Normalization for Plate Data Objective: To remove systematic row and column biases from plate data. Input: A matrix of raw values from a single microplate. Steps:

- Calculate the plate median (M).

- Subtract M from every value in the plate.

- Apply a two-way median polish: iteratively subtract the median of each row and then the median of each column from the values until the changes are negligible. This yields row effects ($R_i$) and column effects ($C_j$).

- Calculate residuals: $\varepsilon_{ij} = \text{Raw}_{ij} - M - R_i - C_j$.

- Calculate the Median Absolute Deviation (MAD) of all residuals.

- Compute B-score for each well: $B_{ij} = \varepsilon_{ij} / \text{MAD}$.

- Repeat for all plates in the screen.
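
The steps above can be sketched in plain Python as shown below. This is a minimal illustration, not a reference implementation: the function names, the iteration limit, and the simulated plate (noise, a row gradient, elevated outer columns, and one spiked well) are all assumptions made for the example.

```python
import random
from statistics import median


def median_polish_residuals(plate, max_iter=10, tol=1e-6):
    """Two-way median polish; returns residuals after removing plate, row and column effects."""
    m = median(v for row in plate for v in row)
    resid = [[v - m for v in row] for row in plate]  # subtract plate median M
    n_cols = len(resid[0])
    for _ in range(max_iter):  # alternate row and column sweeps
        total_shift = 0.0
        for row in resid:
            r_med = median(row)
            total_shift += abs(r_med)
            for j in range(n_cols):
                row[j] -= r_med
        for j in range(n_cols):
            c_med = median(row[j] for row in resid)
            total_shift += abs(c_med)
            for row in resid:
                row[j] -= c_med
        if total_shift < tol:  # changes are negligible
            break
    return resid


def b_score(plate):
    """B-score per well: median-polish residual divided by the MAD of all residuals."""
    resid = median_polish_residuals(plate)
    flat = [v for row in resid for v in row]
    center = median(flat)
    mad = median(abs(v - center) for v in flat)
    if mad == 0:
        raise ValueError("MAD is zero; plate has too little variation to scale")
    return [[v / mad for v in row] for row in resid]


# Simulated 8 x 12 plate (illustrative only).
rng = random.Random(42)
plate = [[100 + 3 * i + (15 if j in (0, 11) else 0) + rng.gauss(0, 1) for j in range(12)]
         for i in range(8)]
plate[4][6] += 50  # spiked "hit"

scores = b_score(plate)
print(f"B-score of spiked well: {scores[4][6]:.1f}")
```

In this simulation the row gradient and the elevated outer columns are absorbed into the row and column effects, so the spiked well stands out while edge wells do not.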

Visualizations

Title: HTS Bias Troubleshooting and Mitigation Workflow

Title: Common Plate Layout Artifacts: Edge and Column Effects

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Bias Mitigation | Example/Note |

|---|---|---|

| Low-Autofluorescence Microplates | Minimizes background noise in fluorescence assays, improving S/N ratio. | Black-walled, clear-bottom plates (e.g., Corning 3915). |

| Breathable/Non-Breathable Plate Seals | Controls evaporation rate to prevent edge effects; selection depends on assay. | Breathable for long-term cell culture; foil for storage. |

| Fluorescent Tracer Dye (Fluorescein) | Used in diagnostic tests to visualize liquid handler dispensing uniformity. | Prepare in assay buffer at ~10 µM. |

| Validated Control Compounds | Provides stable high, low, and neutral signals for run-to-run normalization. | Use well-characterized agonists/antagonists for the target. |

| Multiplex Assay Kits | Allows simultaneous measurement of target signal and a normalization readout (e.g., cell count). | Reduces variability from cell plating density. |

| Plate Reader Calibration Kit | Ensures consistent instrument performance over time (intensity, wavelength). | Contains fluorescence/luminescence standards. |

| Liquid Handler Performance Kits | Validates volume accuracy and precision of dispensers. | Often uses gravimetric or dye-based methods. |

Technical Support Center: Troubleshooting Biased HTS Data

FAQs & Troubleshooting Guides

Q1: Our HTS assay shows excellent Z'-factor (>0.7), but subsequent confirmation assays fail. What are the primary sources of this false positive bias? A: A high Z'-factor indicates robust assay signal dynamics but does not guard against systematic bias. Common sources include:

- Compound Interference: Fluorescence, quenching, or chemical reactivity with assay components.

- Non-specific Binding: Promiscuous inhibitors (e.g., aggregators, pan-assay interference compounds - PAINS).

- Sample Positional Effects: Edge effects in microplates due to evaporation or temperature gradients.

- Reagent Dispensing Bias: Inconsistent liquid handling for specific rows/columns.

- Data Normalization Artifacts: Using inappropriate controls that introduce spatial bias.

Experimental Protocol: Orthogonal Confirmation Assay

- Purpose: To eliminate false positives from primary HTS artifacts.

- Method:

- Re-test hits from primary screen in a dose-response format (e.g., 10-point, 1:3 serial dilution).

- Use a different detection technology (e.g., switch from fluorescence intensity to time-resolved fluorescence or AlphaScreen).

- Include counter-assays to detect interference (e.g., fluorescent compound control assay, detergent addition to disrupt aggregates).

- Implement dual-dispensing of test compounds to control for pipetting error.

- Acceptance Criteria: A true hit should show a dose-response curve with a similar potency rank order (within one log unit) in the orthogonal assay.
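
The acceptance criterion can be checked with a one-line calculation on the log scale. The sketch below treats "within one log unit" as an absolute log10 shift of at most 1 between the two IC50 values; the helper names and example potencies are hypothetical.

```python
from math import log10


def potency_shift_log(ic50_primary, ic50_orthogonal):
    """Signed potency shift in log10 units (positive = weaker in orthogonal assay)."""
    return log10(ic50_orthogonal / ic50_primary)


def is_congruent(ic50_primary, ic50_orthogonal, max_log_shift=1.0):
    """True when the two potencies agree within max_log_shift log units."""
    return abs(potency_shift_log(ic50_primary, ic50_orthogonal)) <= max_log_shift


# Hypothetical IC50 values in µM: (primary assay, orthogonal assay).
candidates = {"cpd_A": (0.5, 2.0), "cpd_B": (0.5, 30.0)}
verdicts = {name: is_congruent(*pair) for name, pair in candidates.items()}

for name, (primary, orthogonal) in candidates.items():
    shift = potency_shift_log(primary, orthogonal)
    print(f"{name}: shift = {shift:+.2f} log units, congruent = {verdicts[name]}")
```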

Q2: How can we identify and correct for spatial (positional) bias in our HTS run data? A: Spatial bias manifests as non-random patterns of activity across plate maps.

Experimental Protocol: Spatial Bias Detection & Correction

- Data Visualization: Generate heatmaps of raw assay signals and normalized activity (e.g., % inhibition) per plate.

- Statistical Correction:

- Apply plate-wise normalization using robust controls (e.g., median polish, B-score normalization).

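- Formula for B-score:

$$B_{ij} = \frac{\text{Raw}_{ij} - M - R_i - C_j}{\text{MAD}}$$

where $M$ is the plate median, $R_i$ and $C_j$ are the row and column effects estimated by median polish, and MAD is the median absolute deviation of the residuals. Some implementations additionally multiply the MAD by 1.4826 so that it estimates the standard deviation under normality.

- Utilize well-level correction algorithms that model row and column effects.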

- Validation: After correction, the spatial heatmap should appear random. The distribution of control well signals (e.g., neutral controls) should be centered and tight.
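
Alongside heatmaps, a simple numeric check can make positional bias explicit. The sketch below compares the median of edge wells with the median of interior wells; the function name and the simulated plate (edge wells reading 30% high) are assumptions for illustration.

```python
from statistics import median


def edge_vs_interior(plate):
    """Return (edge median, interior median, number of edge wells)."""
    n_rows, n_cols = len(plate), len(plate[0])
    edge, interior = [], []
    for i, row in enumerate(plate):
        for j, value in enumerate(row):
            on_edge = i in (0, n_rows - 1) or j in (0, n_cols - 1)
            (edge if on_edge else interior).append(value)
    return median(edge), median(interior), len(edge)


# Simulated 96-well plate: interior wells read 100, edge wells read 130.
plate = [[130.0 if i in (0, 7) or j in (0, 11) else 100.0 for j in range(12)]
         for i in range(8)]

edge_med, interior_med, n_edge = edge_vs_interior(plate)
relative_shift = (edge_med - interior_med) / interior_med
print(f"{n_edge} edge wells deviate by {relative_shift:+.0%} from the interior median")
```

Running the same check before and after correction gives a quick number to accompany the visual heatmap comparison.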

Q4: What are the best practices for designing an HTS campaign to minimize bias from the outset? A: Proactive design is the most cost-effective mitigation strategy.

Experimental Protocol: Bias-Aware HTS Screen Design

- Plate Layout:

- Distribute positive and negative controls in a balanced, interspersed pattern (e.g., checkerboard) rather than confining them to edge rows/columns.

- Include internal control compounds with known weak/medium/strong activity on every plate.

- Randomize compound placement across the screening library.

- Reagent Validation: Pre-test critical reagents (e.g., enzymes, cells) for batch-to-batch variability using a standardized control plate.

- Process Controls: Include "mock" plates with buffer-only dispenses to measure background drift over the entire screen duration.
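
As one possible way to implement the randomized placement described under Plate Layout, the sketch below shuffles well positions with a fixed seed and assigns controls and compounds to them. It scatters controls purely at random, which is simpler than a deliberately balanced design; the plate size, control counts, and compound IDs are assumptions.

```python
import random


def randomized_layout(compound_ids, n_rows=16, n_cols=24, n_pos=16, n_neg=16, seed=0):
    """Map (row, col) -> label, with controls and compounds in shuffled positions."""
    wells = [(r, c) for r in range(n_rows) for c in range(n_cols)]
    if len(compound_ids) + n_pos + n_neg > len(wells):
        raise ValueError("too many samples for this plate format")
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    rng.shuffle(wells)
    labels = ["POS"] * n_pos + ["NEG"] * n_neg + list(compound_ids)
    return dict(zip(wells, labels))  # any unused wells are simply left out


# Hypothetical compound IDs filling the remainder of a 384-well plate.
compounds = [f"CPD{i:04d}" for i in range(352)]
layout = randomized_layout(compounds)

n_pos_placed = sum(1 for label in layout.values() if label == "POS")
print(f"{len(layout)} wells assigned, {n_pos_placed} positive controls")
```

Recording the seed with the plate map keeps the layout auditable, since the same seed reproduces the same assignment.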

Data Presentation: The Impact of Bias Correction

Table 1: Comparative Analysis of HTS Hit Validation Before and After Bias Correction

| Metric | Uncorrected Data | After B-Score Correction | After Orthogonal Assay |

|---|---|---|---|

| Primary Hit Rate | 3.5% | 1.8% | 0.4% |

| Confirmed Hit Rate | 22% | 65% | 92% |

| Average Potency Shift (IC50) | +1.8 log units | +0.6 log units | +0.2 log units |

| Estimated Cost per Validated Lead | $285,000 | $125,000 | $87,000 |

Table 2: Common Sources of HTS Bias and Their Mitigation

| Bias Source | Typical Effect | Mitigation Strategy | Key Reagent/Tool |

|---|---|---|---|

| Compound Fluorescence | False inhibition/activation | Switch to non-optical readout (SPR, MS) | Fluorescent Tracer Control |

| Compound Aggregation | Non-specific inhibition | Add detergent (e.g., 0.01% Triton X-100) | Detergent Control Plate |

| Edge Evaporation | Increased activity on plate edges | Use sealed plates or environmental controls | Plate Seals, Humidity Chambers |

| Cell Viability Edge Effect | Reduced signal in outer wells | Pre-incubate plates in incubator before use | Assay-Ready Compound Plates |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Bias Mitigation in HTS

| Item | Function | Example/Description |

|---|---|---|

| Non-ionic Detergent (Triton X-100, CHAPS) | Disrupts compound aggregates; identifies aggregation-based false positives. | Used at low concentration (0.01-0.1%) in confirmatory assays. |

| Reducing Agent (DTT, TCEP) | Identifies compounds acting via reactive mechanisms (redox cyclers). | Distinguishes specific inhibitors from promiscuous thiol-reactive compounds. |

| Fluorescent Control Compound | Calibrates for inner-filter effect or fluorescence interference. | A known non-inhibitory fluorescent compound at assay wavelength. |

| Pan-Assay Interference (PAINS) Filters | Computational filters to flag problematic chemotypes. | Used in silico prior to compound selection or hit analysis. |

| Assay-Ready Microplates | Pre-dispensed, sealed compound plates to minimize edge effects. | Compounds in DMSO are pre-dispensed and sealed under inert gas. |

| Label-Free Detection Reagents | Enables orthogonal, non-optical confirmation (e.g., SPR chips, MS labels). | Surface plasmon resonance (SPR) sensor chips, Cellular Dielectric Spectroscopy (CDS) plates. |

Experimental Workflow Visualizations

Title: HTS Hit Validation Workflow with Bias Correction

Title: Bias Sources, Consequences, and Mitigation Relationship

Core Statistical and Computational Methods for Bias Correction

Troubleshooting Guides & FAQs

Q1: After applying Z-score normalization, my control plate data shows an unexpected bimodal distribution. What could cause this and how can I correct it?

A: A bimodal distribution in control plates often indicates a systematic technical artifact, such as a pipetting error on one side of the plate or a temperature gradient in the incubator. This violates the single-distribution assumption of Z-score normalization. To correct:

- Perform per-plate median polish to remove row/column effects before global normalization.

- Apply spatial normalization using the B-score method (see protocol below).

- Visually inspect per-plate heatmaps of raw and corrected data to confirm artifact removal.

Q2: My negative control wells in a viability assay show consistently lower signals over multiple plates, increasing false positives. How do I address this drift?

A: Inter-plate signal drift is common. Do not pool controls from all plates. Instead:

- Use plate-based controls for normalization within each plate.

- Apply robust regression techniques like LOESS to model and correct the temporal drift across plates.

- Implement batch-effect correction methods like ComBat or limma's removeBatchEffect when integrating data from multiple experimental runs.

Q3: When using B-score correction, some high-potency hits disappear. Am I over-correcting my data?

A: Possibly. The B-score assumes artifacts are additive, so it can over-correct when errors are multiplicative (common in cell growth assays) or when true hits cluster in a single row or column. In those cases a hybrid approach is needed.

- Log-transform your raw data before applying B-score to convert multiplicative noise to additive.

- Alternatively, use MAD (Median Absolute Deviation) scaling after spatial correction, which is more robust to outliers than standard deviation.

- Validate by comparing hit lists from multiple correction methods (see Comparative Table 1).

Q4: I have missing values due to a clogged dispenser. Can I still normalize the data, and which method is most robust?

A: Avoid mean/median-based methods if >5% of wells are missing. Use:

- Random Forest Imputation: Effective for HTS data, as it uses row/column correlations to predict missing values.

- K-nearest neighbors (KNN) imputation on a per-plate basis.

- After imputation, apply MAD normalization, which is less sensitive to residual imputation errors than Z-score.
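
The impute-then-normalize sequence can be illustrated with a deliberately simple stand-in. Instead of random forest or feature-based KNN imputation, the sketch below fills each missing well with the median of its available adjacent wells, then applies per-plate MAD normalization. The function names and the tiny 3 x 3 "plate" are hypothetical.

```python
from statistics import median


def impute_from_neighbours(plate):
    """Fill None wells with the median of their non-missing adjacent wells."""
    n_rows, n_cols = len(plate), len(plate[0])
    filled = [row[:] for row in plate]
    for i in range(n_rows):
        for j in range(n_cols):
            if plate[i][j] is None:
                neighbours = [plate[a][b]
                              for a in range(max(0, i - 1), min(n_rows, i + 2))
                              for b in range(max(0, j - 1), min(n_cols, j + 2))
                              if plate[a][b] is not None]
                if not neighbours:
                    raise ValueError(f"well ({i}, {j}) has no measured neighbours")
                filled[i][j] = median(neighbours)
    return filled


def mad_normalize(plate):
    """Robust z-score per well: (value - plate median) / plate MAD."""
    flat = [v for row in plate for v in row]
    center = median(flat)
    mad = median(abs(v - center) for v in flat)
    if mad == 0:
        raise ValueError("MAD is zero; cannot scale")
    return [[(v - center) / mad for v in row] for row in plate]


# Toy plate with one missing well (None marks the failed dispense).
raw = [[10, 12, 11], [13, None, 9], [10, 11, 12]]
filled = impute_from_neighbours(raw)
z = mad_normalize(filled)
print(f"imputed value: {filled[1][1]}, robust z of well (1, 0): {z[1][0]:.1f}")
```

Imputed wells should be flagged in downstream analysis so that they are never reported as hits in their own right.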

Key Experimental Protocols

Protocol 1: B-Score Normalization for Spatial Artifact Correction

This method corrects for systematic row and column biases within a single assay plate.

- Input: Raw intensity data from a single 384-well plate.

- Row/Column Median Correction:

a. Calculate the plate median ($M$).

b. For each row $i$, calculate the row median ($R_i$). Compute the row effect as $RE_i = R_i - M$.

c. For each column $j$, calculate the column median ($C_j$). Compute the column effect as $CE_j = C_j - M$.

d. Subtract the row and column effects from each well value:

$$\text{Intermediate}_{ij} = \text{Raw}_{ij} - RE_i - CE_j + M$$

- Robust Scaling:

a. Calculate the plate median absolute deviation (MAD) from the Intermediate values.

b. Calculate the robust Z-score (B-score) for each well:

$$B_{ij} = \frac{\text{Intermediate}_{ij} - \operatorname{median}(\text{Intermediate})}{\operatorname{MAD}(\text{Intermediate})}$$

- Output: B-score normalized plate, resistant to edge effects and spatial drift.
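
A direct transcription of this protocol into Python might look like the sketch below. It follows the single-pass row/column median correction exactly as written (including the constant + M term, which cancels in the final centring step); the function name and the simulated 384-well plate with a column drift and one spiked well are assumptions for illustration.

```python
import random
from statistics import median


def single_pass_b_score(plate):
    """Row/column median correction followed by robust (median/MAD) scaling."""
    n_cols = len(plate[0])
    m = median(v for row in plate for v in row)                       # step a: plate median
    row_eff = [median(row) - m for row in plate]                      # step b: RE_i
    col_eff = [median(row[j] for row in plate) - m for j in range(n_cols)]  # step c: CE_j
    # Step d: remove effects; the "+ m" offset cancels when centring below.
    inter = [[v - row_eff[i] - col_eff[j] + m for j, v in enumerate(row)]
             for i, row in enumerate(plate)]
    flat = [v for row in inter for v in row]
    center = median(flat)
    mad = median(abs(v - center) for v in flat)
    if mad == 0:
        raise ValueError("MAD is zero; cannot scale")
    return [[(v - center) / mad for v in row] for row in inter]


# Simulated 16 x 24 plate: noise, a left-to-right column drift, and one spiked well.
rng = random.Random(7)
plate = [[200 + 0.5 * j + rng.gauss(0, 1) for j in range(24)] for i in range(16)]
plate[7][10] += 40

scores = single_pass_b_score(plate)
print(f"score of spiked well: {scores[7][10]:.1f}")
```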

Protocol 2: Loess Normalization for Inter-Plate Drift Correction

This protocol corrects for signal drift across multiple plates run over time.

- Input: A stack of plates, each already processed with intra-plate normalization (e.g., B-score).

- Control Well Selection: Identify a set of common negative control wells present on every plate.

- Model Fitting:

a. For each plate position (well), fit a LOESS (Locally Estimated Scatterplot Smoothing) model with plate sequence number as the predictor and control well signal as the response.

b. Set the span parameter to model the long-term drift (typically 0.5-0.75).

- Apply Correction:

a. Use the fitted LOESS model to predict the expected drift for each plate.

b. Subtract the predicted drift value from all wells on the corresponding plate.

- Output: A drift-corrected plate stack where control well signals are stable across the entire run.
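The drift correction can be sketched with a small hand-written LOESS (local linear fits with tricube weights, no robustness iterations). This is a simplified illustration under stated assumptions: it fits one curve to the per-plate median of the negative control wells rather than one model per well position, and the helper `loess_1d`, the control layout, and the synthetic drift are all hypothetical.

```python
import numpy as np

def loess_1d(x, y, span=0.6):
    """Minimal LOESS: local linear regression with tricube weights."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    k = min(n, max(3, int(np.ceil(span * n))))   # points per local window
    design = np.column_stack([np.ones(n), x])
    fitted = np.empty(n)
    for i in range(n):
        dist = np.abs(x - x[i])
        h = np.sort(dist)[k - 1]                 # distance to the k-th nearest point
        w = np.clip(1.0 - (dist / h) ** 3, 0.0, None) ** 3
        sw = np.sqrt(w)
        # Weighted least squares for the local line y = a + b * x
        coef, *_ = np.linalg.lstsq(design * sw[:, None], y * sw, rcond=None)
        fitted[i] = coef[0] + coef[1] * x[i]
    return fitted

# Synthetic run: 40 B-scored plates with a slow upward drift.
rng = np.random.default_rng(1)
n_plates = 40
plate_order = np.arange(n_plates)
true_drift = 0.05 * plate_order
plates = rng.normal(0.0, 0.1, size=(n_plates, 16, 24)) + true_drift[:, None, None]

# Assumed layout: negative controls in the first and last columns.
control_mask = np.zeros((16, 24), dtype=bool)
control_mask[:, [0, 23]] = True

control_medians = np.median(plates[:, control_mask], axis=1)
predicted_drift = loess_1d(plate_order, control_medians, span=0.6)
corrected = plates - predicted_drift[:, None, None]
corrected_control_medians = np.median(corrected[:, control_mask], axis=1)
```

In practice a library smoother (for example the LOWESS routine in statsmodels) would usually replace `loess_1d`; the hand-written version is used here only to keep the sketch dependency-free.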

Summarized Quantitative Data

Table 1: Comparison of Normalization Methods on Simulated HTS Data

| Method | Avg. False Positive Rate (%) | Avg. False Negative Rate (%) | Runtime per 384-plate (sec) | Robustness to 10% Missing Data |

|---|---|---|---|---|

| Z-score (Global) | 8.7 | 4.2 | 0.5 | Low |

| Z-score (Per-Plate) | 5.1 | 5.5 | 2.1 | Medium |

| MAD (Per-Plate) | 4.9 | 4.8 | 2.3 | High |

| B-score | 3.8 | 7.1 | 4.7 | Low |

| Loess + MAD | 3.2 | 4.5 | 12.8 | Medium |

Table 2: Impact of Normalization on Hit Identification in a Kinase Inhibitor Library (100,000 cpds)

| Processing Step | Primary Hits (p<0.001) | Confirmed Hits (Dose-Response) | Hit Rate (%) |

|---|---|---|---|

| Raw Data | 1250 | 125 | 0.125 |

| After Per-Plate MAD | 612 | 98 | 0.098 |

| After Loess Drift Correction | 588 | 112 | 0.112 |

| After Batch Effect Removal | 575 | 110 | 0.110 |

Diagrams

HTS Data Normalization and Correction Workflow

Decision Tree for Choosing a Normalization Method

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in HTS Normalization/QC |

|---|---|

| Control Compound Plates | Contain known active/inactive compounds for per-plate assay performance validation and Z'/SSMD calculation. |

| Cell Viability Dye (e.g., Resazurin) | Used in viability assays to generate the primary signal; consistent staining is critical for low drift. |

| LC/MS-Grade DMSO | Vehicle for compound libraries; high-purity DMSO prevents evaporation effects and crystal formation. |

| Assay-Ready Plate Maps | Pre-defined plate layouts with randomized control positions to mitigate spatial bias from the start. |

| Robust Statistics Software (R/Bioconductor) | Provides packages (cellHTS2, vsn, limma) for implementing MAD, LOESS, and batch correction. |

| High-Precision Liquid Handler | Ensures consistent compound and reagent transfer, reducing well-to-well volumetric noise. |

| Environmental Plate Reader Monitor | Logs O2, CO2, and temperature for each plate read, allowing covariance analysis with signal drift. |

Troubleshooting Guide & FAQs

Q1: What are the most common sources of spatial bias in HTS plate readers that lead to false positives? A: Spatial bias often manifests as edge effects (evaporation), row/column effects (pipettor calibration), or quadrant-specific anomalies (incubator temperature gradients). Prior knowledge from instrument calibration runs or control plate maps is essential for identifying these patterns. Common sources include:

- Edge Effects: Outer wells experience faster evaporation, altering compound concentration and assay conditions.

- Thermal Gradients: Non-uniform incubator or reader temperature.

- Liquid Handler Artifacts: Systematic pipetting errors in specific columns or rows.

- Reader Optics: Inconsistent light path or detector sensitivity across the plate.

Q2: How do I create a reliable bias location map for my specific HTS assay? A: Perform a dedicated "bias characterization experiment" using a homogeneous control (e.g., buffer-only or DMSO control with a universal fluorescent dye like fluorescein). Run multiple plates under standard assay conditions without test compounds. The aggregated signal from these plates reveals the instrument- and protocol-specific spatial noise pattern.

Experimental Protocol: Bias Characterization

- Plate Preparation: Seed 10-20 replicate assay plates with cells or buffer identical to your HTS protocol.

- Control Addition: Add a homogeneous control solution (e.g., 0.5% DMSO with 1 µM fluorescein) to all wells using a well-calibrated bulk dispenser.

- Incubation & Reading: Subject plates to the exact incubation times, temperatures, and reading sequences used in your primary screen.

- Data Aggregation: Calculate the mean signal for each well position (e.g., A01, P24) across all replicate plates.

- Normalization & Mapping: Normalize the plate-wise data using the median of the entire plate. The resulting per-well Z-scores or percent modulation constitute your bias location map. Wells with consistently high or low signals indicate systematic bias locations.

Q3: Once I have a bias map, how is the error-specific correction applied to my primary screen data? A: Apply a per-well additive or multiplicative correction factor derived from the bias map. The correction is applied before hit selection.

Experimental Protocol: Error-Specific Correction

- Calculate Correction Factor (CF): For each well position (i,j) on the plate:

CF(i,j) = Global_Plate_Median / Bias_Map_Mean(i,j)

where Bias_Map_Mean(i,j) is the average signal for that well position from the characterization experiment.

- Apply Correction to Primary Screen Raw Data:

- For multiplicative correction:

Corrected_Signal(i,j) = Raw_Signal(i,j) * CF(i,j)

- Alternatively, an additive correction using well-specific Z-score subtraction can be used.

- Proceed with Standard Analysis: Use the corrected signal values for subsequent normalization (e.g., percent of control) and hit identification.
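The multiplicative correction can be sketched as below. This is an illustration only: it assumes that Global_Plate_Median means the median of the bias map itself, and the function names and the synthetic 20% edge elevation are hypothetical.

```python
import numpy as np

def build_bias_map(characterization_plates):
    """Mean signal per well position across replicate control-only plates."""
    return np.mean(np.asarray(characterization_plates, dtype=float), axis=0)

def correction_factors(bias_map):
    """CF(i,j) = Global_Plate_Median / Bias_Map_Mean(i,j)."""
    # Assumption: the global plate median is taken over the bias map.
    return np.median(bias_map) / bias_map

def apply_multiplicative_correction(raw_plate, cf):
    """Corrected_Signal(i,j) = Raw_Signal(i,j) * CF(i,j)."""
    return np.asarray(raw_plate, dtype=float) * cf

# Synthetic bias: outer ring of wells reads 20% high.
pattern = np.ones((16, 24))
pattern[[0, -1], :] = 1.2
pattern[:, [0, -1]] = 1.2

rng = np.random.default_rng(4)
char_plates = 1000.0 * pattern * (1.0 + rng.normal(0.0, 0.01, size=(10, 16, 24)))
bias_map = build_bias_map(char_plates)
cf = correction_factors(bias_map)

raw_screen_plate = 500.0 * pattern          # a screen plate carrying the same bias
corrected_plate = apply_multiplicative_correction(raw_screen_plate, cf)

cv_before = raw_screen_plate.std() / raw_screen_plate.mean()
cv_after = corrected_plate.std() / corrected_plate.mean()
```

The coefficient of variation across the plate is a simple before/after check: it should drop sharply when the screen plate shares the bias pattern captured in the map.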

Q4: What quantitative improvement in false positive rate (FPR) can I expect from this method? A: The improvement is highly dependent on the initial bias severity. The following table summarizes results from cited studies:

Table 1: Impact of Error-Specific Correction on HTS Data Quality

| Study Context | Initial Assay Z' | FPR Before Correction | FPR After Correction | Key Bias Addressed |

|---|---|---|---|---|

| Cell Viability HTS (Edge Effect) | 0.45 | 2.1% | 0.8% | Evaporation in outer wells |

| Enzyme Inhibition (Row Effect) | 0.60 | 1.5% | 0.5% | Pipettor tip wear in row 8 |

| GPCR Agonist Screening (Quadrant) | 0.52 | 3.2% | 1.3% | Incubator warm spot |

Q5: What are the limitations of this correction method? A: This method assumes that the spatial bias is consistent and reproducible across experimental runs. It may not correct for:

- Non-linear or interactive biases (e.g., bias that changes with signal intensity).

- Random errors or transient instrument failures.

- Bias induced by test compounds themselves (e.g., compounds that alter evaporation).

In addition, the method requires extra experimental effort to create the bias map and assumes spare screening capacity.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Bias Characterization & Correction

| Item | Function in Method 1 |

|---|---|

| Homogeneous Fluorescent Dye (e.g., Fluorescein) | Provides a stable, uniform signal across the plate to map instrument- and process-derived noise without biological variability. |

| Dimethyl Sulfoxide (DMSO), High-Quality | Standard compound solvent; used in control wells to mimic primary screen conditions and account for solvent effects on bias. |

| Assay-Ready Control Plates | Pre-dispensed plates with controls only, enabling high-throughput generation of bias map replicates. |

| Bulk Reagent Dispenser (Non-contact) | Critical for uniform addition of control solution to avoid introducing liquid handler artifacts during bias mapping. |

| Plate Sealers, Optically Clear | Used to control evaporation during incubation, helping to characterize and isolate thermal vs. evaporation effects. |

Visualizations

Diagram 1: Error-Specific Correction Workflow

Diagram 2: Common Spatial Bias Patterns in HTS

Troubleshooting Guides & FAQs

Q1: After applying B-Score normalization, my plate controls show reduced variance, but my sample well signals now appear overly compressed with low dynamic range. What is the cause and solution?

A: This is often caused by over-fitting during the two-way median polish procedure, especially when the row/column effects are strong and non-linear. The algorithm may remove genuine biological signal.

- Troubleshooting Steps:

- Diagnose: Plot the raw plate heatmap, the estimated row/column effect heatmaps, and the residual (normalized) heatmap side-by-side.

- Verify Control Placement: Ensure negative/positive controls are evenly distributed across the plate to provide accurate local trend estimation.

- Adjust Algorithm Parameters: If using a custom script, reduce the number of polishing iterations or implement a robust smoother (e.g., loess) for initial row/column trend estimation instead of strict median subtraction.

- Alternative Method: Consider using a more flexible method like LOESS (or LOWESS) normalization within plates, which can model non-linear spatial trends without over-correcting.

Q2: How do I handle edge effects where outer rows and columns consistently show aberrant values even after B-Score application?

A: Edge effects are common due to evaporation or temperature gradients. B-Score is designed to mitigate this, but strong effects may persist.

- Troubleshooting Steps:

- Pre-process with Z'-Prime: Calculate the plate-wise Z'-prime using edge well controls vs. interior controls. A low Z' (<0.4) indicates a severe physical artifact that normalization alone cannot fix.

- Exclude Wells: As a last resort, pre-define and exclude the outermost ring of wells from final analysis. Document this exclusion criterion and apply it uniformly across all experiments.

- Plate Layout Strategy: For future screens, place non-critical samples or replicate controls on the plate edges, reserving the interior for primary test compounds.

Q3: My HTS run includes multiple plate batches processed on different days. B-Score normalizes within plates, but significant inter-plate batch bias remains. How should I proceed?

A: B-Score is a within-plate normalization. You must apply a subsequent between-plate normalization step.

- Troubleshooting Steps:

- Use Control-Based Scaling: After within-plate B-Score normalization, scale all plates based on the median (or mean) of the plate control wells (e.g., neutral controls).

- Standardize Using Robust Statistics: For each plate, calculate the Median Absolute Deviation (MAD) of the normalized control wells. Scale each plate's entire data set so that the median of its controls aligns with the global median and the MAD matches the global MAD across all plates.

- Protocol: Perform B-Score normalization per plate, then apply the following per-plate correction:

Final_Scaled_Value = ( (B-Score_Normalized_Value - Plate_Control_Median) / Plate_Control_MAD ) * Global_MAD + Global_Median
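A minimal sketch of this per-plate rescaling is shown below. The source does not define how the global median and global MAD are computed; here they are assumed to be the medians of the per-plate control medians and MADs. The function names, control layout, and synthetic batch offsets are hypothetical.

```python
import numpy as np

def mad(values):
    """Unscaled median absolute deviation."""
    values = np.asarray(values, dtype=float)
    return np.median(np.abs(values - np.median(values)))

def scale_plates_to_global(plates, control_mask):
    """Align each plate's control median and MAD with run-wide values."""
    plates = np.asarray(plates, dtype=float)
    controls = plates[:, control_mask]                  # (n_plates, n_controls)
    plate_medians = np.median(controls, axis=1)
    plate_mads = np.array([mad(c) for c in controls])
    global_median = np.median(plate_medians)            # assumption, see lead-in
    global_mad = np.median(plate_mads)                  # assumption, see lead-in
    z = (plates - plate_medians[:, None, None]) / plate_mads[:, None, None]
    return z * global_mad + global_median

# Four B-scored plates with different batch offsets and spreads.
rng = np.random.default_rng(2)
offsets = np.array([0.0, 0.5, -0.3, 1.0])
scales = np.array([1.0, 1.5, 0.8, 2.0])
b_scored = rng.normal(size=(4, 16, 24)) * scales[:, None, None] + offsets[:, None, None]

control_mask = np.zeros((16, 24), dtype=bool)
control_mask[:, 0] = True                               # assumed control column

scaled = scale_plates_to_global(b_scored, control_mask)
```

After scaling, every plate's control wells share the same median and MAD, so a single hit threshold can be applied across batches.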

Table 1: Performance Comparison of Plate Normalization Methods in Reducing False Positive Rates (FPR)

| Normalization Method | Avg. Plate Z'-Prime (Post-Norm) | False Positive Rate (Simulated Null Data) | False Negative Rate (Simulated Weak Hit Data) | Robustness to Strong Edge Effects |

|---|---|---|---|---|

| Raw (Unnormalized) | 0.15 ± 0.12 | 18.5% | 5.2% | Very Low |

| Mean-Centering (Per Plate) | 0.45 ± 0.10 | 8.2% | 7.1% | Low |

| Z-Score (Per Plate) | 0.50 ± 0.08 | 7.5% | 6.8% | Medium |

| B-Score (Two-Way Median Polish) | 0.62 ± 0.06 | 4.8% | 6.5% | High |

| Spatial LOESS (Non-Linear) | 0.58 ± 0.07 | 5.1% | 6.0% | High |

Experimental Protocol: Implementing B-Score Normalization for an HTS Assay

Objective: To remove systematic row and column biases from a single 384-well microtiter plate assay readout, thereby improving data quality and reducing false positive calls.

Materials: See "Research Reagent Solutions" table below.

Procedure:

- Data Acquisition: Measure assay signal (e.g., fluorescence or luminescence) for all wells. Annotate wells as "Sample," "Positive Control," or "Negative Control."

- Initial Visualization: Generate a heatmap of the raw plate data to visually inspect for spatial trends.

- Two-Way Median Polish Calculation:

a. Row Adjustment: For each row i, calculate the median of all sample wells in that row. Subtract this row median from every well in row i.

b. Column Adjustment: For each column j, calculate the median of all sample wells in that column. Subtract this column median from every well in column j.

c. Iterate: Repeat steps (a) and (b) alternately until the medians of all rows and columns approach zero (changes fall below a pre-set tolerance, e.g., 0.001%).

- Calculate Residuals: The resulting values are the residuals, representing the data with row and column effects removed.

- Scale Residuals (B-Score): Scale the residuals to a robust Z-score. For each well's residual e_ij, compute:

B_Score_ij = e_ij / MAD(residuals of all plate sample wells)

- Hit Identification: Apply a threshold (e.g., |B-Score| > 3) to identify potential hits from the normalized data.
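The iterative procedure above can be sketched in NumPy as follows. This is an illustration under simplifying assumptions: every well is treated as a sample well, the stopping rule uses an absolute tolerance rather than a percentage, and the function names and synthetic plate are hypothetical.

```python
import numpy as np

def median_polish_residuals(plate, max_iter=10, tol=1e-6):
    """Alternately remove row and column medians until adjustments are negligible."""
    resid = np.asarray(plate, dtype=float).copy()
    for _ in range(max_iter):
        row_med = np.median(resid, axis=1, keepdims=True)
        resid -= row_med
        col_med = np.median(resid, axis=0, keepdims=True)
        resid -= col_med
        if max(np.abs(row_med).max(), np.abs(col_med).max()) < tol:
            break
    return resid

def b_score(plate, **kwargs):
    """Residuals from median polish divided by their (unscaled) MAD."""
    resid = median_polish_residuals(plate, **kwargs)
    mad = np.median(np.abs(resid - np.median(resid)))
    return resid / mad

# Synthetic plate with additive row/column bias and one planted active well.
rng = np.random.default_rng(5)
plate = (100.0
         + np.linspace(-4.0, 4.0, 16)[:, None]
         + np.linspace(-2.0, 2.0, 24)[None, :]
         + rng.normal(0.0, 1.0, size=(16, 24)))
plate[5, 7] += 20.0

scores = b_score(plate)
hit_mask = np.abs(scores) > 3          # threshold from the protocol
```

Because the MAD here is unscaled, a cutoff of 3 corresponds to roughly two standard deviations for Gaussian residuals; implementations that multiply the MAD by 1.4826 make the cutoff comparable to a conventional Z-score of 3.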

Visualizations

B-Score Normalization & Hit Calling Workflow

Role of B-Score in the HTS Data Processing Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Plate-Based Assays & Normalization

| Item | Function in Context |

|---|---|

| 384-Well Microtiter Plates (Assay-Optimized) | Standardized platform for HTS; surface treatment (e.g., poly-D-lysine for cell-based assays) is critical for minimizing well-to-well variation. |

| Liquid Handling Robotics | Ensures precise and consistent reagent dispensing across all wells, a prerequisite for any spatial normalization to be valid. |

| Validated Positive/Negative Control Compounds | Essential for calculating post-normalization assay quality metrics (Z'-prime) and for inter-plate batch correction. |

| Cell Line with Stable Reporter (e.g., Luciferase) | Provides a consistent biological signal source. Clonal selection and regular quality control are needed to minimize biological noise. |

| Multi-Mode Plate Reader | Must have validated calibration and uniform light source/detector to prevent instrument-induced spatial bias. |

| Statistical Software (R/Python with robust & cellHTS2 packages) | Implementation of two-way median polish, MAD calculation, and batch effect removal algorithms. |

Technical Support Center

Troubleshooting Guides

Issue 1: High Intra-Plate Z'-Factor Variability After Correction

- Problem: The Z'-factor, a measure of assay quality, varies significantly across the plate after applying background correction.

- Likely Cause: Non-uniform edge effects or evaporation gradients that your chosen correction method (e.g., local fit) did not adequately model.

- Solution: Implement a two-step spatial correction.

- Protocol: First, apply a median polish or B-score smoothing to the entire plate to remove row/column trends. Then, use a negative control-based per-well correction on the smoothed data.

- Validation: Compare the Z'-factor distribution across quadrants of the plate before and after correction. Target: Standard Deviation of quadrant Z' < 0.1.

Issue 2: Excessive Hit Loss After False Discovery Rate (FDR) Adjustment

- Problem: Applying Benjamini-Hochberg (BH) or similar FDR control eliminates most primary hits.

- Likely Cause: Extremely high variance in the negative control population or a weak effect size for true positives.

- Solution: Employ variance-stabilizing transformation prior to hit identification.

- Protocol: Use an Anscombe transform for Poisson-like readouts (e.g., certain cell counts) or an Arcsinh transform for fluorescence intensity data. Recalculate the robust Z-score or strictly standardized mean difference (SSMD) on the transformed data before FDR application.

- Validation: Spike-recovery experiments should show >80% recovery of known weak active compounds after the new pipeline.
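The two transforms named in the protocol can be sketched as below. The Anscombe transform is 2·sqrt(x + 3/8); the `cofactor` argument of the arcsinh transform is an assumed tuning parameter that the source does not specify. The simulated Poisson counts are illustrative only.

```python
import numpy as np

def anscombe(counts):
    """Anscombe transform for Poisson-like counts: 2 * sqrt(x + 3/8)."""
    return 2.0 * np.sqrt(np.asarray(counts, dtype=float) + 3.0 / 8.0)

def arcsinh_transform(intensity, cofactor=1.0):
    """Arcsinh transform; cofactor is a hypothetical scale parameter."""
    return np.arcsinh(np.asarray(intensity, dtype=float) / cofactor)

# Poisson counts at two very different signal levels.
rng = np.random.default_rng(3)
low = rng.poisson(10, size=20000)
high = rng.poisson(100, size=20000)

raw_variances = (low.var(), high.var())                 # variance grows with the mean
stabilized_variances = (anscombe(low).var(), anscombe(high).var())  # both near 1
```

With the variance made roughly independent of signal level, a robust Z-score or SSMD computed on the transformed values weights low- and high-signal wells more evenly before FDR adjustment.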

Issue 3: Batch Correction Introduces Artifacts in Time-Series Data

- Problem: When correcting for batch effects across multiple screening days, the kinetic profiles of hits become distorted.

- Likely Cause: Aggressive batch mean-centering removes genuine biological signal that correlates with run day.

- Solution: Use ComBat harmonization (an empirical Bayes method) with reference samples.

- Protocol: Include a standardized set of control compounds (high, low, neutral) on every plate. Use the sva R package's ComBat_seq function, specifying these reference controls to preserve their biological variance while adjusting other samples.

- Validation: The correlation coefficient of control compound kinetics between batches should be >0.9 post-correction.

Frequently Asked Questions (FAQs)

Q1: When should I use plate-based normalization (e.g., Z-score) versus well-based correction (e.g., SSMD)? A1: The choice depends on your control design. See the decision table below.

| Normalization Method | Required Controls | Best For | Key Metric |

|---|---|---|---|

| Robust Z-Score | None, or a global median | Single-readout, uniform response assays where most compounds are inactive. | Z' > 0.5 |

| SSMD (β-Score) | Paired positive & negative controls on every plate. | Assays with strong positional effects or drift; requires precise control estimates. | \|SSMD\| > 3 for strong hits |

| Normalized Percent Inhibition (NPI) | Paired controls on every assay run. | Enzymatic or cellular inhibition assays with stable control values. | CV of controls < 15% |
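Two of the metrics in the table can be sketched with the standard library. The SSMD form used here assumes independent groups, and the NPI sign convention (0% at the negative control mean, 100% at the positive control mean) is an assumption, since conventions differ between tools. The control values are made up for illustration.

```python
import statistics

def ssmd(positive, negative):
    """SSMD = (mean_pos - mean_neg) / sqrt(var_pos + var_neg)."""
    diff = statistics.mean(positive) - statistics.mean(negative)
    pooled = statistics.variance(positive) + statistics.variance(negative)
    return diff / pooled ** 0.5

def normalized_percent_inhibition(value, neg_mean, pos_mean):
    """NPI = 100 * (neg_mean - value) / (neg_mean - pos_mean)."""
    return 100.0 * (neg_mean - value) / (neg_mean - pos_mean)

# Illustrative control readouts for an inhibition assay (signal falls with inhibition).
negative_controls = [100.0, 102.0, 98.0, 101.0, 99.0]
positive_controls = [10.0, 12.0, 8.0, 11.0, 9.0]

control_ssmd = ssmd(positive_controls, negative_controls)
npi_mid = normalized_percent_inhibition(
    55.0,
    statistics.mean(negative_controls),
    statistics.mean(positive_controls),
)
```

Here `control_ssmd` is strongly negative because the positive control lowers the signal; the decision rule in the table applies to its absolute value.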

Q2: What is the minimum replicate number for reliable FDR control in confirmatory screening? A2: Our analysis of variance shows diminishing returns beyond n=3 for most assays. The table below summarizes the false negative rate (FNR) at a 5% FDR threshold.

| Replicates (n) | Estimated FNR (Weak Effect) | Estimated FNR (Strong Effect) | Recommended Use |

|---|---|---|---|

| n=1 | Not applicable (FDR control unreliable) | Not applicable | Primary screen only |

| n=2 | ~35% | <10% | Limited compound supply |

| n=3 | ~15% | <5% | Standard confirmatory screen |

| n=4 | ~10% | <2% | Critical path, costly assays |

Q3: Which open-source pipeline is most effective for integrating multiple correction steps? A3: For non-programmers, KNIME with HTS nodes offers a robust GUI. For code-based solutions, an R/Python pipeline is superior. A recommended workflow protocol is:

- Raw Data Ingestion: Use readr (R) or pandas (Python).

- Spatial & Background Correction: Apply locfit (R) or LOESS (Python statsmodels) smoothing.

- Variance Stabilization: Use DESeq2's varianceStabilizingTransformation (R) for counts, or sklearn.preprocessing.QuantileTransformer (Python).

- Hit Identification & FDR: Calculate SSMD, then use p.adjust(method="BH") (R) or statsmodels.stats.multitest.fdrcorrection (Python).
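To make the final FDR step concrete, the Benjamini-Hochberg adjustment can be written in a few lines of plain Python. This sketch is for illustration and is not a replacement for the library functions named above; the function name and example p-values are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

example_p = [0.01, 0.04, 0.03, 0.005]
example_adjusted = benjamini_hochberg(example_p)
hits_at_5_percent = [p <= 0.05 for p in example_adjusted]
```

Each adjusted value is the smallest FDR level at which that compound would still be called a hit, so thresholding the adjusted values at 0.05 or 0.10 gives the hit list directly.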

Experimental Protocol: Integrated Correction & Validation

Title: Protocol for Validating a Correction Pipeline in an siRNA HTS for Kinase Targets.

Objective: To reduce false positives from off-target effects using integrated correction and confirmatory deconvolution.

Materials & Reagents: See "The Scientist's Toolkit" below.

Method:

- Primary Screen: Reverse-transfect siRNA library (3 siRNAs/gene) in 384-well format. Include non-targeting control (NTC) and essential gene positive controls (e.g., PLK1) in 32 wells per plate.

- Correction Pipeline:

- Step A (Spatial): Apply median polish correction using plate median.

- Step B (Normalization): Calculate percent viability using plate-based NTC (100%) and essential gene (0%) controls.

- Step C (Hit Call): For each gene, compute the robust Z-score from its 3 siRNA viability values. Apply BH-FDR at 10%.

- Confirmatory Stage: Retest all gene hits from Step C with two additional siRNA designs (novel sequences) and include a rescue condition with cDNA overexpression.

- Validation Metric: A true positive is defined as a gene where ≥2/3 original siRNAs and ≥1/2 novel siRNAs reproduce the phenotype, and the cDNA rescue reverts it. Calculate the validated hit rate (VHR).

Results Summary Table:

| Correction Step Applied | Primary Hits (FDR<10%) | Confirmed Hits After Deconvolution | Validated Hit Rate (VHR) | False Positive Rate (1-VHR) |

|---|---|---|---|---|

| Raw Data Only | 412 | 95 | 23.1% | 76.9% |

| Spatial + Normalization | 288 | 121 | 42.0% | 58.0% |

| Full Pipeline (Spatial+Norm+FDR) | 185 | 112 | 60.5% | 39.5% |
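The validation rule and the VHR column can be reproduced with a short sketch. The function names are hypothetical; the counts are taken from the results table above.

```python
def is_true_positive(original_sirnas, novel_sirnas, rescue_reverts):
    """Apply the rule: >=2/3 original and >=1/2 novel siRNAs reproduce, and rescue reverts."""
    return (sum(original_sirnas) >= 2
            and sum(novel_sirnas) >= 1
            and bool(rescue_reverts))

def validated_hit_rate(confirmed_hits, primary_hits):
    """VHR as a percentage of primary hits."""
    return 100.0 * confirmed_hits / primary_hits

# (primary hits, confirmed hits) per pipeline stage, from the results table.
pipeline_counts = {
    "Raw Data Only": (412, 95),
    "Spatial + Normalization": (288, 121),
    "Full Pipeline (Spatial+Norm+FDR)": (185, 112),
}
vhr = {
    name: round(validated_hit_rate(confirmed, primary), 1)
    for name, (primary, confirmed) in pipeline_counts.items()
}
false_positive_rate = {name: round(100.0 - rate, 1) for name, rate in vhr.items()}
```

Note that the full pipeline confirms slightly fewer hits in absolute terms than the intermediate stage (112 vs. 121) while cutting primary hits from 288 to 185, which is where the VHR gain comes from.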


The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Correction/Validation | Example Product/Catalog |

|---|---|---|

| Non-Targeting Control (NTC) siRNA Pool | Serves as the baseline (100% viability/activity) for normalization across plates. | Dharmacon D-001810-10 |

| Essential Gene Positive Control siRNA | Induces strong phenotype (e.g., cell death); defines the 0% viability floor for plate normalization. | PLK1 siRNA (e.g., Cell Signaling #6232) |

| Fluorescent Cell Viability Dye | Provides a homogeneous, stable readout for spatial correction assessment. | Resazurin (Alamar Blue) or CellTiter-Glo |

| Control Compound Plate | For batch correction; a pre-dispensed plate of known actives/inactives run with each batch. | Custom library from Enamine or Selleckchem |

| cDNA Overexpression Constructs | Critical for rescue experiments to validate target specificity and reduce false positives. | ORF clones (e.g., Horizon MHS-6273) |

| Normalization Control Microspheres | For flow cytometry-based HTS; calibrates signal across days. | Spherotech 8-peak beads (ACFP-70-5) |

Troubleshooting Common Artifacts and Optimizing Assay Design

Identifying Edge, Row, and Column Effects in Microplates

Troubleshooting Guide & FAQs

Q1: What are edge, row, and column effects, and why are they a problem in High-Throughput Screening (HTS)? A: Edge, row, and column effects are systematic positional biases in microplate data that are not related to the biological or chemical variable being tested. The edge effect refers to abnormal well behavior on the perimeter of a plate (e.g., A1-H1, A12-H12, A1-A12, H1-H12), often due to increased evaporation or temperature gradients. Row and column effects are systematic trends across entire rows (e.g., row A) or columns (e.g., column 1). These artifacts introduce false signals, increase data variability, and significantly raise false positive rates in HTS, leading to wasted resources on invalid leads.

Q2: My negative controls on the plate edges show abnormally high signals. What is the likely cause and solution? A:

- Likely Cause: This is a classic edge effect, primarily caused by evaporation. Outer wells lose more volume, concentrating assay components and leading to elevated signals. It can also be due to uneven temperature in incubators or plate readers.

- Solutions:

- Use a Plate Seal: Apply a low-evaporation, optically clear seal during incubation steps.

- Humidify: Maintain high humidity in incubators.

- Incubation Time: Minimize out-of-incubator time.

- Experimental Design: Include buffer-only controls in edge wells to quantify the effect for potential correction.

Q3: I see a consistent signal gradient from left to right (across columns) in my data. How can I diagnose the source? A: A column-wise trend often points to instrumentation or liquid handling artifacts.

- Diagnosis Steps:

- Check Liquid Handler: Ensure pipetting heads are calibrated, and tips are dispensing consistently across all channels. A clogged or misaligned channel can cause an entire column trend.

- Reader Optics: If using a plate reader with a vertical column of detectors, a calibration issue can cause this. Run a uniform dye (e.g., fluorescein) plate to check for optical anomalies.

- Reagent Settling: If a reagent settles quickly, a left-to-right dispensing pattern can create concentration gradients. Mix reagents thoroughly before dispensing.

Q4: How can I proactively design my plate layout to identify and correct for these effects? A: Implement randomized or counter-balanced plate layouts with robust control placement.

- Key Protocol: Distribute positive controls (PC), negative controls (NC), and test compounds randomly across the plate using a laboratory information management system (LIMS) or script. Alternatively, use a balanced block design where controls are spaced evenly in a checkerboard or fixed pattern. Crucially, leave the outermost well ring as buffer-only wells to explicitly measure the edge effect.
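A randomized layout with a buffer-only outer ring can be generated with a short script. This is a minimal sketch, assuming a 384-well plate and 16 wells per control type; the labels, counts, and function name are illustrative choices rather than a prescribed design.

```python
import random

def randomized_layout(n_rows=16, n_cols=24, n_pos=16, n_neg=16, seed=0):
    """Return a grid of labels: BUFFER on the outer ring, PC/NC/CPD shuffled inside."""
    rng = random.Random(seed)                       # fixed seed for a reproducible map
    layout = [["BUFFER"] * n_cols for _ in range(n_rows)]
    interior = [(r, c)
                for r in range(1, n_rows - 1)
                for c in range(1, n_cols - 1)]
    rng.shuffle(interior)
    labels = ["PC"] * n_pos + ["NC"] * n_neg
    labels += ["CPD"] * (len(interior) - len(labels))
    for (r, c), label in zip(interior, labels):
        layout[r][c] = label
    return layout

layout = randomized_layout()
counts = {}
for row in layout:
    for label in row:
        counts[label] = counts.get(label, 0) + 1
```

Recording the seed alongside the plate barcode lets the same map be regenerated later during analysis.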

Q5: What are the standard data normalization methods to correct for positional bias after an experiment? A: Post-hoc normalization can mitigate effects. Common methods are summarized below.

| Method | Best For Correcting | Procedure | Limitation |

|---|---|---|---|

| Mean/Median Center per Plate | Overall plate-wise drift. | Subtract plate mean/median from all wells. | Does not address spatial patterns. |

| Row/Column Median Normalization | Strong row or column effects. | Normalize each well by the median of its row and/or column. | Requires sufficient non-hit wells in each row/column. |

| Spatial Smoothing (e.g., LOESS) | Complex, localized spatial trends. | Fit a 2D surface to control or all well data and subtract the trend. | Computationally intensive; can over-correct. |

| Z'-Factor per Plate | Assessing overall assay quality. | Z' = 1 - 3 × (StdDevPC + StdDevNC) / abs(MeanPC - MeanNC). A Z' > 0.5 indicates a robust assay. | A quality metric, not a correction. |
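For reference, the Z'-factor can be computed from control wells using its standard definition, Z' = 1 - 3(SD_PC + SD_NC) / |Mean_PC - Mean_NC|. The sketch below uses the standard library; the control values are made up for illustration.

```python
import statistics

def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = statistics.stdev(positive) + statistics.stdev(negative)
    window = abs(statistics.mean(positive) - statistics.mean(negative))
    return 1.0 - 3.0 * spread / window

positive_controls = [10.0, 12.0, 8.0, 11.0, 9.0]
negative_controls = [100.0, 102.0, 98.0, 101.0, 99.0]
plate_z_prime = z_prime(positive_controls, negative_controls)
assay_is_robust = plate_z_prime > 0.5
```

Running the same function on edge-well controls and interior controls separately is one way to see whether positional effects are degrading assay quality.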

Detailed Experimental Protocol: Quantifying Edge Effects

Objective: To empirically measure evaporation-induced edge effects in a 384-well microplate.

Materials: See "Scientist's Toolkit" below.

Workflow:

- Prepare at least 20 mL of a stable, concentration-sensitive fluorescent dye solution (e.g., 1 µM fluorescein) in assay buffer; filling 384 wells at 50 µL each requires 19.2 mL plus dead volume.

- Using a calibrated multichannel pipette, dispense 50 µL of the dye solution into every well of a 384-well plate.

- Immediately read the fluorescence (Time 0) with a plate reader (ex/em ~485/535 nm).

- Do not seal the plate. Place it in the assay incubator (e.g., 37°C) for the typical incubation period (e.g., 18 hours).

- After incubation, read the fluorescence again (Time 18) under identical settings.

- Data Analysis: Calculate the Signal Increase (%) for each well: ((T18 - T0)/T0)*100. Plot the values in a plate heatmap. Outer wells will typically show a 15-40% higher signal increase due to evaporation.
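The data analysis step can be sketched in NumPy as follows. The readings are synthetic (a uniform Time 0 plate, with outer wells rising more than interior wells at Time 18) and the function names are hypothetical.

```python
import numpy as np

def signal_increase_percent(t0, t18):
    """Per-well signal increase: ((T18 - T0) / T0) * 100."""
    t0 = np.asarray(t0, dtype=float)
    t18 = np.asarray(t18, dtype=float)
    return (t18 - t0) / t0 * 100.0

def edge_mask(n_rows, n_cols):
    """Boolean mask that is True for the outermost ring of wells."""
    mask = np.zeros((n_rows, n_cols), dtype=bool)
    mask[[0, -1], :] = True
    mask[:, [0, -1]] = True
    return mask

# Synthetic readings for a 384-well plate.
mask = edge_mask(16, 24)
t0 = np.full((16, 24), 1000.0)
t18 = np.where(mask, 1300.0, 1050.0)

increase = signal_increase_percent(t0, t18)
edge_excess = increase[mask].mean() - increase[~mask].mean()
```

`edge_excess` summarizes the edge effect as a single number (percentage points of extra signal increase in the outer ring), which is convenient for tracking across incubators or seal types.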

Diagram: Edge Effect Assay Workflow

The Scientist's Toolkit: Key Reagent Solutions

| Item | Function & Importance |

|---|---|

| Low-Evaporation Plate Seals | Critical for reducing edge effects during long incubations. Must be compatible with assay readout (optical density, fluorescence). |

| Assay-Ready DMSO | High-quality, anhydrous DMSO for compound storage. Batch variability can cause column/row effects. |

| Stable Fluorescent Dye (e.g., Fluorescein) | For plate reader calibration and evaporation tests. Provides a quantifiable signal for volume/concentration changes. |

| Precision Multichannel Pipettes & Tips | Ensure consistent liquid handling across all wells. Calibration is essential to prevent row/column artifacts. |

| Buffer-Only Controls (e.g., PBS) | Placed in perimeter wells to explicitly map and correct for edge effects without interference from biological components. |

Diagram: Impact of Positional Bias on HTS

In high-throughput screening (HTS) for drug discovery, sophisticated algorithms and automated analysis are indispensable. However, over-reliance on computational outputs can propagate bias and inflate false positive rates. This technical support center emphasizes that rigorous, human-led critical thinking during data review is the ultimate safeguard. The following guides and FAQs are framed within the thesis that expert scrutiny of experimental context, assay artifacts, and biological plausibility is non-negotiable for validating HTS hits and reducing false discoveries.

Troubleshooting Guides & FAQs

FAQ 1: Our HTS primary screen identified a strong hit, but it failed in confirmation assays. What are the most common causes?

- Answer: This classic false positive scenario often stems from:

- Assay Interference: The compound may be fluorescent, quench fluorescence, or absorb light at the assay's detection wavelength.

- Promiscuous/Aggregating Compounds: The hit may form colloidal aggregates that non-specifically inhibit many targets.

- Sample Handling Error: Edge effects, evaporation, or pipetting errors in specific plate wells can create artifactual signals.

- Target/Reagent Instability: Degradation of the enzyme or protein target over the screen's duration can lead to inconsistent results.

FAQ 2: How can we systematically identify and filter out compound-mediated assay interference early?

- Answer: Implement these counter-screen protocols before investing in costly secondary assays:

FAQ 3: Our cell-based HTS shows high Z'-factors, but hit lists are inconsistent between replicates. What should we investigate?

- Answer: Excellent statistical assay quality (Z') does not guarantee biological relevance. Focus on:

- Cell State Variability: Passage number, confluency, or differentiation state can dramatically affect responses.

- Compound Stability: Hits may be unstable in cell culture medium or be rapidly metabolized.

- Off-Target Effects: The phenotype may be driven by toxicity or modulation of unrelated pathways.

Table 1: Common HTS Artifacts and Their Impact on False Positive Rates

| Artifact Type | Typical False Positive Rate Contribution | Key Diagnostic Method | Success Rate of Mitigation |

|---|---|---|---|

| Compound Fluorescence/Quenching | 5-15% | Orthogonal Assay (e.g., MS-based) | >90% |

| Promiscuous Aggregation | 10-20% | DLS + Detergent Challenge | ~85% |

| Cytotoxicity (in cell-based assays) | 10-30% | Multiplexed Viability Staining | >95% |

| Plate Location/Edge Effects | 5-10% | Plate Map Pattern Analysis | 100% (via re-design) |

Table 2: Efficacy of Expert Data Review Triage

| Review Action | % of False Positives Caught | Average Time Investment (Per 1000 Hits) |

|---|---|---|

| Structure-Based Filtering (PAINS, REOS) | 40-50% | 1 hour |

| Plate Pattern & QC Flag Review | 20-30% | 2 hours |

| Cross-Referencing with Internal Selectivity Data | 30-40% | 3 hours |

| Combined Expert Triage | ~85% | 6-8 hours |

Visualizations

Title: Expert Triage Process for HTS Hit Validation

Title: Biochemical Assay Interference Pathways

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in HTS Triage & Validation |

|---|---|

| Triton X-100 | A non-ionic detergent used at low concentration (0.01%) to disrupt compound aggregates, helping identify promiscuous inhibitors. |

| Known Aggregator Control (e.g., Tetracycline) | A control compound that reliably forms aggregates under screening conditions, used to validate aggregation detection assays. |

| DMSO-matched Controls | Vehicle controls with identical DMSO concentration as compound wells, critical for identifying solvent-based artifacts. |

| Orthogonal Assay Kits (e.g., Colorimetric/MS-based) | A kit using a detection principle different from the primary screen to rule out readout-specific interference. |

| Multiplexed Viability Dyes (e.g., PI, Hoechst) | Cell-permeable and impermeable dyes used together in counter-screens to differentiate specific activity from general cytotoxicity. |

| PAINS/REOS Filtering Software | Computational tools to flag compounds with substructures known to cause assay interference (Pan-Assay Interference Compounds/ Rapid Elimination of Swill). |

| Dynamic Light Scattering (DLS) Instrument | Used to measure the size distribution of particles in solution, directly identifying compound aggregation. |

Optimizing Hit Thresholds and Compound Concentration for Low False Rates

Technical Support Center

FAQ & Troubleshooting Guide

Q1: Our HTS campaign generated an unusually high hit rate. How do we determine if this is due to an inappropriate primary hit threshold? A: A high hit rate often indicates a threshold set too low. First, recalculate your Z'-factor for the assay plate. A Z' < 0.5 suggests high assay variability, making any threshold unreliable. Next, plot the distribution of all compound responses. For a robust assay, negative controls should form a tight Gaussian distribution. Set your initial hit threshold at the mean of the negative control + 3 times its standard deviation (or median ± 3 median absolute deviations for non-normal data). Re-evaluate the hit rate against this statistical threshold.
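Both threshold rules can be sketched with the standard library. The control and compound values are invented for illustration, and the sketch assumes hits are responses above the threshold (for example, percent inhibition); it uses the unscaled MAD, as described above.

```python
import statistics

def sd_threshold(neg_controls, k=3.0):
    """Mean of negative controls plus k standard deviations."""
    return statistics.mean(neg_controls) + k * statistics.stdev(neg_controls)

def mad_threshold(neg_controls, k=3.0):
    """Median of negative controls plus k median absolute deviations."""
    med = statistics.median(neg_controls)
    mad = statistics.median([abs(v - med) for v in neg_controls])
    return med + k * mad

negative_controls = [0.0, 2.0, -2.0, 1.0, -1.0]         # e.g., % inhibition
compound_responses = [0.5, 3.5, 12.0, -1.0, 4.9, 25.0]

cutoff = mad_threshold(negative_controls)
hits = [r for r in compound_responses if r > cutoff]
hit_rate_percent = 100.0 * len(hits) / len(compound_responses)
```

Recomputing the hit rate under both rules shows how sensitive the hit list is to the choice of threshold; a large gap between the two suggests non-normal or outlier-contaminated controls.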

Q2: What is the optimal single concentration for primary screening to minimize false positives while capturing true actives? A: Common practice is to use a concentration that maximizes the probability of identifying compounds with moderate potency. For most biochemical and cellular target-based assays, a concentration of 10 µM is standard. However, for phenotypic screens with higher complexity, a lower concentration (e.g., 1-5 µM) may be preferable to reduce toxicity-driven false positives. Always validate this choice with a pilot screen of known actives and inactives.

Q3: How should we handle compounds that show activity only at the highest concentration tested? A: Compounds active only at the highest concentration (e.g., 20-30 µM) carry a high risk of being false positives or promiscuous inhibitors. Implement strict triage criteria:

- Exclude by Lipinski's Rule of 5: Filter out compounds with poor drug-like properties.

- Assay Artifact Check: Test in a counter-assay (e.g., fluorescent interference, aggregation assay using detergent like 0.01% Triton X-100).

- Cytotoxicity Check: For cellular assays, correlate activity with cell viability in a parallel assay.

- Dose-Response Confirmation: Only progress compounds that show a clean, saturable dose-response curve in a fresh sample.
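As an illustration of the first triage criterion, the sketch below counts Rule of 5 violations from precomputed descriptors (molecular weight > 500, logP > 5, more than 5 hydrogen-bond donors, more than 10 hydrogen-bond acceptors). The function names are hypothetical, the descriptor values are assumed to come from a cheminformatics tool of your choice, and allowing at most one violation is a commonly used convention rather than something specified in this guide.

```python
def rule_of_five_violations(mw, logp, hbd, hba):
    """Count Lipinski Rule of 5 violations from precomputed descriptors."""
    checks = [
        mw > 500,   # molecular weight in Da
        logp > 5,   # calculated octanol-water partition coefficient
        hbd > 5,    # hydrogen-bond donors
        hba > 10,   # hydrogen-bond acceptors
    ]
    return sum(checks)


def passes_rule_of_five(mw, logp, hbd, hba, max_violations=1):
    """Assumed convention: tolerate at most `max_violations` violations."""
    return rule_of_five_violations(mw, logp, hbd, hba) <= max_violations


# Illustrative (made-up) descriptor values for two compounds.
print(passes_rule_of_five(mw=350.4, logp=2.1, hbd=2, hba=5))
print(passes_rule_of_five(mw=650.8, logp=6.2, hbd=2, hba=5))
```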

Q4: What experimental protocol can we use post-HTS to triage false positives from aggregators? A: The following detergent-based counter-assay can be used.

Protocol: Detergent-Based Aggregation Counter-Assay

Objective: To identify compounds that inhibit the target via non-specific aggregation.

Reagents: Assay buffer, target enzyme/substrate, suspected hit compound, detergent (e.g., Triton X-100 or CHAPS).

Procedure:

- Prepare the hit compound in assay buffer at the screening concentration (e.g., 10 µM).

- Prepare an identical sample that also contains detergent at a final concentration of 0.01% (v/v) (value given for Triton X-100).

- Run the primary assay protocol in parallel for both conditions (with/without detergent).

- Interpretation: A significant reduction (>50%) in inhibition in the presence of detergent strongly suggests the compound acts via aggregation. This compound should be deprioritized.
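The interpretation rule can be expressed as a small helper. This is a sketch under one assumption: the ">50% reduction" is read as a relative drop in percent inhibition between the two conditions. The function name and the example values are illustrative only.

```python
def is_detergent_sensitive(inhibition_without, inhibition_with, min_relative_drop=0.5):
    """Flag a likely aggregator.

    Returns True when percent inhibition falls by more than
    `min_relative_drop` (as a fraction of the detergent-free value)
    once detergent is added.
    """
    if inhibition_without <= 0:
        # Nothing to lose: the compound was not inhibiting without detergent.
        return False
    relative_drop = (inhibition_without - inhibition_with) / inhibition_without
    return relative_drop > min_relative_drop


# Illustrative (made-up) percent-inhibition values.
print(is_detergent_sensitive(80.0, 20.0))  # 75% relative drop
print(is_detergent_sensitive(80.0, 70.0))  # 12.5% relative drop
```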

Q5: Can you provide a standard workflow for hit confirmation and validation? A: Yes. A rigorous multi-stage process is essential.

Title: Hit Confirmation and Validation Workflow.

Q6: How do signaling pathway complexities affect false positive rates in cell-based assays? A: Complex pathways with multiple feedback loops or cross-talk can produce activation/inhibition signals unrelated to the target. For example, a compound affecting cellular metabolism may indirectly modulate a downstream reporter. To mitigate this:

- Use orthogonal assays that measure activity through a different pathway node or technology.

- Employ pathway-specific negative controls (e.g., siRNA knockdown of your target).

- Utilize isogenic cell lines that differ only by the presence of the target.

Title: Pathway Cross-Talk Leading to False Positives.

Data Summary Tables

Table 1: Impact of Hit Threshold on False Discovery Rate (FDR) in a Model HTS

| Hit Threshold (σ from Negative Control Mean) | Hit Rate (%) | Estimated FDR (%) | Notes |

|---|---|---|---|

| 2σ | 15.2 | 45.8 | Unacceptably high FDR. |

| 3σ | 5.1 | 12.5 | Common initial threshold. |

| 4σ | 1.8 | 3.2 | Recommended for stringent confirmation. |

| 5σ | 0.5 | <1 | Risk of losing true, weak actives. |

Table 2: Recommended Compound Concentrations by Assay Type

| Assay Type | Recommended Primary Screening Concentration | Key Rationale for Reducing False Rates |

|---|---|---|

| Biochemical (Enzyme) | 10 µM | Balances potency detection with compound solubility. |

| Cell-Based Target (Reporter) | 5 - 10 µM | Accounts for cell permeability. Lower concentration reduces cytotoxicity artifacts. |

| Phenotypic (Complex Readout) | 1 - 5 µM | Minimizes off-target effects and generic toxicity. |

| Fragment-Based Screening | 0.5 - 2 mM | Very high concentration to detect weak binders; uses biophysical methods to reduce false hits. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in Reducing False Positives |

|---|---|

| Triton X-100 (0.01% v/v) | Non-ionic detergent used in counter-assays to disrupt compound aggregates, identifying promiscuous inhibitors. |

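| Hill Slope Filter (approx. 0.5 - 2.0) | A QC parameter in dose-response fitting. A slope near 1 is expected for simple 1:1 binding; slopes well outside this range, especially very steep ones, can indicate non-specific mechanisms such as aggregation. |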

| DMSO Vehicle Controls | High-quality, plate-matched DMSO controls are critical for defining baseline activity and variability. |

| Cytotoxicity Assay Kit | (e.g., ATP-based viability). Run in parallel to distinguish target-specific activity from general cell death. |

| qPCR or siRNA for Target Knockdown | Orthogonal validation tool to confirm that phenotypic effects are on-target. |

| AlphaScreen/AlphaLISA Beads | Homogeneous assay formats with time-resolved detection to minimize fluorescent compound interference. |

Leveraging Pilot Screens and Replicates to Predict and Minimize Error

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our High-Throughput Screening (HTS) pilot screen showed high variability between plate replicates. How can we determine if this is systematic error or random noise?

A: High inter-plate variability in pilots often indicates systematic error. Follow this diagnostic protocol:

- Calculate Z'-factor per plate: A Z' < 0.5 suggests assay quality issues.

- Generate a plate map of raw values: Visual inspection can reveal edge effects, drifts, or dispensing errors.

- Perform a replicate correlation analysis: Calculate the Pearson correlation (r) between replicate plates. An r < 0.7 typically indicates problematic systematic bias that must be corrected before full-scale screening.

Protocol: Replicate Correlation Analysis

- For each sample/well common to two pilot replicate plates, plot the measurement from Plate A (x-axis) against Plate B (y-axis).

- Calculate the Pearson correlation coefficient (r).

- A low r warrants investigation into plate handling, incubation timing, or reagent stability.
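A minimal sketch of the replicate correlation check, written without external libraries. The function names are hypothetical and the 0.7 cutoff is the one quoted in the answer above; the inputs are assumed to be two equal-length lists of measurements for the same wells on Plate A and Plate B.

```python
import math


def pearson_r(plate_a, plate_b):
    """Pearson correlation between matched wells of two replicate plates."""
    n = len(plate_a)
    if n != len(plate_b) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 wells")
    mean_a = sum(plate_a) / n
    mean_b = sum(plate_b) / n
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(plate_a, plate_b))
    var_a = sum((a - mean_a) ** 2 for a in plate_a)
    var_b = sum((b - mean_b) ** 2 for b in plate_b)
    if var_a == 0 or var_b == 0:
        raise ValueError("correlation is undefined for a constant plate")
    return cov / math.sqrt(var_a * var_b)


def replicates_agree(plate_a, plate_b, cutoff=0.7):
    """True when the replicate correlation meets the cutoff."""
    return pearson_r(plate_a, plate_b) >= cutoff


# Illustrative (made-up) values for five matched wells.
a = [10.2, 55.1, 12.0, 80.3, 9.8]
b = [11.0, 52.7, 13.5, 78.9, 10.4]
print(round(pearson_r(a, b), 3), replicates_agree(a, b))
```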

Q2: How many pilot replicates are sufficient to reliably predict false positive rates for our primary screen?

A: Statistical power analysis recommends a minimum of 3-5 pilot replicates. Use the data from these replicates to model error and calculate robust thresholds.

Protocol: Determining Hit Thresholds from Pilot Replicates

- Run the assay on a library of known negatives/inactive compounds (e.g., 100+ compounds) across N=3-5 replicate plates.

- For each compound, calculate the mean (µ) and standard deviation (σ) of its activity across replicates.

- Define the hit threshold as µ (of negative controls) + k*σ (pooled), where 'k' is typically 3. Pooling the variance across replicates makes the threshold less sensitive to single-plate outliers than a threshold derived from one plate.
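The threshold calculation above might look like the following. This sketch assumes that "pooled σ" means the within-compound standard deviation pooled across compounds (weighted by degrees of freedom) and that µ is the grand mean of all negative measurements; both choices, and the function names, are assumptions made for illustration.

```python
import math
import statistics


def pooled_sd(replicate_groups):
    """Pooled within-group SD: sqrt(sum((n_i - 1) * var_i) / sum(n_i - 1))."""
    numerator = sum((len(g) - 1) * statistics.variance(g) for g in replicate_groups)
    dof = sum(len(g) - 1 for g in replicate_groups)
    return math.sqrt(numerator / dof)


def pilot_hit_threshold(replicate_groups, k=3.0):
    """Grand mean of the negatives plus k pooled standard deviations."""
    all_values = [x for g in replicate_groups for x in g]
    return statistics.mean(all_values) + k * pooled_sd(replicate_groups)


# Each inner list holds one inactive compound measured on N=3 replicate plates
# (made-up percent-activity values).
negatives = [
    [1.0, 2.0, 3.0],
    [4.0, 5.0, 6.0],
    [-1.0, 0.0, 1.0],
]
print(round(pilot_hit_threshold(negatives), 2))
```

Note that a pooled within-compound SD captures replicate-to-replicate noise only. If the inactive compounds differ systematically from one another, the SD of the per-compound means may be the more appropriate spread to use.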

Q3: We observe a strong spatial (edge vs. center) bias in our pilot data. What normalization methods are recommended before proceeding to the full screen?

A: Spatial bias is common. Apply intra-plate normalization using controls dispersed across the plate.

Protocol: Spatial Bias Correction Using B-Spline or LOESS Normalization

- Plate Design: Include neutral controls (e.g., DMSO) in a grid pattern (e.g., every 8th well).

- Model the Bias: Using pilot plate control data, fit a 2-dimensional B-spline or LOESS model to estimate the spatial trend surface.

- Correct Samples: Subtract the estimated spatial trend from the raw value of each sample well, then add the global plate median back.

- Validate: Post-correction, the controls should show no spatial pattern.
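The sketch below illustrates the subtract-trend, add-back-median logic of this protocol. To stay dependency-free it swaps in a much simpler smoother than the B-spline or LOESS fit named above: a Gaussian-weighted local average of the control wells (effectively a degree-0 local regression). The function names, the dictionary-based plate representation, and the bandwidth value are all assumptions.

```python
import math
import statistics


def estimate_trend(controls, row, col, bandwidth=4.0):
    """Gaussian-weighted local mean of control wells around (row, col).

    `controls` maps (row, col) -> raw control value.
    """
    weight_sum = 0.0
    value_sum = 0.0
    for (r, c), value in controls.items():
        dist_sq = (r - row) ** 2 + (c - col) ** 2
        weight = math.exp(-dist_sq / (2.0 * bandwidth ** 2))
        weight_sum += weight
        value_sum += weight * value
    return value_sum / weight_sum


def correct_plate(samples, controls, bandwidth=4.0):
    """Subtract the local trend from each sample well, then add the plate median back.

    `samples` maps (row, col) -> raw sample value.
    """
    plate_median = statistics.median(samples.values())
    return {
        (r, c): raw - estimate_trend(controls, r, c, bandwidth) + plate_median
        for (r, c), raw in samples.items()
    }


# Made-up example: controls reveal a left-to-right gradient.
controls = {(0, 0): 0.0, (0, 10): 100.0}
samples = {(0, 1): 5.0, (0, 5): 50.0, (0, 9): 95.0}
print(correct_plate(samples, controls, bandwidth=2.0))
```

For production use, a proper 2D LOESS or spline fit from a statistics package would replace `estimate_trend`; the correction step in `correct_plate` stays the same.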

Q4: How can we use pilot screen data to optimize the number of replicates in the confirmatory screen to minimize false positives?

A: Pilot data provides the variance estimates needed for formal sample size calculation.

Protocol: Sample Size Calculation for Confirmatory Screens

- From pilot replicates, calculate the pooled standard deviation (σ) of the negative control population or inactive compounds.

- Define the minimum effect size (δ) you wish to detect (e.g., a 40% inhibition).

- Use the normal-approximation formula for a two-sample comparison: n = 2 * [(Z(α/2) + Z(β)) * σ / δ]^2

- Set α (Type I error, false positive rate) to 0.05, two-sided (Z(α/2)≈1.96).

- Set β (Type II error, false negative rate) to 0.1 for 90% power (Z(β)≈1.28).

- This 'n' gives the required replicates per compound in the confirmatory stage.
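The calculation can be checked with a short script. This is a sketch assuming a two-sided α (which is what Z≈1.96 at α = 0.05 corresponds to) and rounding n up to the next whole replicate; the function name and example numbers are illustrative.

```python
import math
from statistics import NormalDist


def replicates_needed(sigma, delta, alpha=0.05, power=0.90):
    """n = 2 * ((z_alpha + z_beta) * sigma / delta)^2, rounded up.

    sigma: pooled SD from the pilot; delta: minimum effect size to detect.
    Both must be in the same units (e.g., percent inhibition).
    """
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)  # ~1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)               # ~1.28 for 90% power
    n = 2.0 * ((z_alpha + z_beta) * sigma / delta) ** 2
    return math.ceil(n)


# Example: pooled SD of 15% inhibition, minimum effect of 40% inhibition.
print(replicates_needed(sigma=15.0, delta=40.0))
```

With these example inputs the formula gives n ≈ 2.96, so three replicates per compound would be needed. A noisier assay (larger σ) or a smaller target effect raises n quadratically.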

Table 1: Impact of Pilot Replicates on Error Prediction Accuracy

| Number of Pilot Replicates (N) | Correlation (r) between Predicted and Actual Full-Screen False Positive Rate | Recommended Use Case |

|---|---|---|

| 2 | 0.65 ± 0.12 | Preliminary feasibility only |

| 3 | 0.82 ± 0.08 | Standard small molecule HTS |

| 5 | 0.94 ± 0.05 | CRISPR or RNAi screens where off-target effects are a major concern |

| 8+ | >0.98 | Ultra-high-stakes therapeutic validation (e.g., patient-derived cells) |

Table 2: Comparison of Normalization Methods for Biased HTS Data

| Method | Pros | Cons | Best for Reducing False Positives Caused by: |

|---|---|---|---|

| Median Polish | Simple, robust to outliers. | Can miss complex spatial patterns. | Row/Column linear trends. |

| B-Spline | Models complex, non-linear spatial bias effectively. | Can overfit with sparse controls. Requires specialized software. | Gradient, edge, and center effects. |

| LOESS (2D) | Flexible, data-driven local regression. | Computationally intensive for very high-density plates. | Irregular spatial artifacts. |

| Control-based (Z-score) | Easy to interpret, uses biological controls. | Inefficient if controls are sparse. Assumes uniform error. | Whole-plate shifts in baseline activity. |
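To make the first row of Table 2 concrete, here is a minimal sketch of median polish on a plate stored as a list of rows. It alternately subtracts row and column medians and returns the residuals, which are the values used for hit calling once row and column trends are removed. The function name and fixed iteration count are assumptions; real implementations usually stop on convergence.

```python
import statistics


def median_polish_residuals(plate, n_iter=10):
    """Return the plate with row and column medians iteratively removed."""
    resid = [list(row) for row in plate]  # work on a copy
    n_cols = len(resid[0])
    for _ in range(n_iter):
        # Sweep out row medians.
        for row in resid:
            row_median = statistics.median(row)
            for j in range(n_cols):
                row[j] -= row_median
        # Sweep out column medians.
        for j in range(n_cols):
            col_median = statistics.median([row[j] for row in resid])
            for row in resid:
                row[j] -= col_median
    return resid


# Made-up 3x3 plate: additive row/column trend plus one strong well at (1, 1).
plate = [
    [1.0, 2.0, 3.0],
    [2.0, 13.0, 4.0],
    [3.0, 4.0, 5.0],
]
for row in median_polish_residuals(plate):
    print(row)
```

In this example the additive row and column trend is absorbed by the medians, so only the well at row 1, column 1 keeps a large residual.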

Experimental Protocols

Protocol 1: Design and Execution of a Diagnostic Pilot Screen

Objective: To characterize sources of variability and predict full-screen performance.

- Plate Design: Use a representative subset (384-1000 compounds) of the full library. Include known actives, inactives, and controls. Dispense controls in a spatially distributed pattern.

- Replication: Perform a minimum of 3 independent assay runs (biological replicates) on different days. Within each run, include 2 technical replicate plates.

- Data Collection: Acquire raw signal data. Record all metadata (timestamps, operator, reagent lot numbers).

- Analysis: Perform initial QC (Z'-factor per plate), then analyze for inter-plate correlation, spatial bias, and hit rate consistency.

Protocol 2: Robust Z-Score Hit Identification Method

Objective: To define hits in a way that is resistant to per-plate outliers.

- For each compound 'i' in plate 'p', calculate:

- Robust Z-Score (Z_i) = (X_i - Median_p) / (1.4826 * MAD_p)

- Where X_i is the raw measurement, Median_p is the plate median, and MAD_p is the plate Median Absolute Deviation (the factor 1.4826 scales the MAD so that it approximates the SD for normally distributed data).

- For compounds tested across multiple plates, calculate the median robust Z-score across all plates.

- Hit Threshold: Define a hit as any compound with |median robust Z-score| > 3. If the data were normally distributed, about 99.7% of inactive compounds would fall within ±3, so roughly 0.3% would exceed this cutoff by chance.
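A minimal sketch of Protocol 2, assuming each plate is a list of raw values and that per-compound scores have already been collected across plates. The function names and data layout are hypothetical; the 1.4826 scaling and the cutoff of 3 come from the protocol text.

```python
import statistics


def robust_z_scores(plate_values):
    """Robust Z per well: (x - plate median) / (1.4826 * plate MAD)."""
    med = statistics.median(plate_values)
    mad = statistics.median([abs(x - med) for x in plate_values])
    scale = 1.4826 * mad
    if scale == 0:
        raise ValueError("MAD is zero; robust Z-score is undefined for this plate")
    return [(x - med) / scale for x in plate_values]


def call_hits(z_by_compound, cutoff=3.0):
    """Return compounds whose median robust Z across plates exceeds the cutoff.

    `z_by_compound` maps a compound ID to its robust Z-scores on each plate.
    """
    return {
        compound
        for compound, scores in z_by_compound.items()
        if abs(statistics.median(scores)) > cutoff
    }


# Made-up example: compound "B" is an outlier on a single plate only.
scores = {
    "A": [4.0, 5.0, 0.1],
    "B": [0.2, -0.3, 6.0],
}
print(call_hits(scores))
```

Taking the median across plates means a compound that spikes on one plate only is not called a hit, which is the outlier resistance the protocol aims for.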

Visualizations

Workflow for Leveraging Pilot Screens

Error Sources and Mitigation Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Robust Pilot Screens

| Item | Function | Recommendation for Error Minimization |

|---|---|---|

| Cell Line with Constitutive Reporter | Provides stable, consistent signal for assay development and piloting. | Use a clonally selected, low-passage master cell bank to minimize biological variability. |

| Validated Positive/Negative Control Compounds | Benchmarks for plate-wise Z' factor calculation and normalization. | Source from reputable vendors (e.g., Tocris, Selleckchem) with documented purity. Prepare single-use aliquots to avoid freeze-thaw cycles. |