Targeted Filter Tuning: A Kernel-Size Strategy for Mitigating Specific Bias Patterns in Biomedical AI

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on strategically tuning convolutional filter kernel sizes to detect, isolate, and correct specific bias patterns in biomedical AI models, such as those used in drug discovery and medical imaging. We bridge the gap between theoretical bias frameworks and practical implementation by first detailing how bias manifests in biomedical data and connects to kernel operations. We then establish a methodological framework for selecting and applying kernel sizes to target spatial, sequential, or spectral bias. The guide addresses common optimization pitfalls and validation strategies, culminating in a synthesis of how this targeted approach can enhance model fairness, interpretability, and generalizability, ultimately leading to more reliable and equitable AI tools for clinical and research applications.

Understanding the Landscape: How Bias Manifests and Why Kernel Size Matters

Troubleshooting Guide & FAQs

Q1: My filter kernel is over-smoothing minority-class features in histopathological image datasets. How can I adjust kernel parameters to detect subtle morphological bias patterns? A: This most likely indicates that the kernel size is too large for the feature granularity of the minority class. Follow this protocol:

- Quantify Feature Scale: Use a Laplacian of Gaussian (LoG) blob detector on your training set. Calculate the average radius (in pixels) of key morphological structures separately for each class/subgroup.

- Calibrate Kernel Size: The initial kernel width (W) should be less than twice the average minority-class feature radius (Rmin). Set W to the largest odd integer that does not exceed (2 * Rmin) - 1. A kernel larger than this tends to blur distinct features.

- Validate: Apply the calibrated kernel and compare per-class Feature Preservation Ratios (FPR). See Table 1.
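The first two steps can be sketched with scikit-image's blob_log. This is a minimal sketch. It assumes grayscale float images and uses the common radius ≈ sqrt(2)·sigma convention for 2D LoG blobs. The helper names and the blob_log parameter values are illustrative, not tuned.

```python
import numpy as np
from skimage.feature import blob_log


def mean_blob_radius(images, min_sigma=1, max_sigma=15, threshold=0.1):
    """Average LoG blob radius (pixels) across a list of 2D grayscale images."""
    radii = []
    for img in images:
        # Each detected blob is a row (row, col, sigma).
        blobs = blob_log(img, min_sigma=min_sigma, max_sigma=max_sigma, threshold=threshold)
        # For 2D LoG blobs the radius is approximately sqrt(2) * sigma.
        radii.extend(np.sqrt(2) * blobs[:, 2])
    return float(np.mean(radii)) if radii else float("nan")


def initial_kernel_width(r_min):
    """Largest odd integer W with W <= 2 * r_min - 1 (never below 1)."""
    w = int(np.floor(2 * r_min - 1))
    if w % 2 == 0:
        w -= 1
    return max(w, 1)


# Usage (hypothetical list of minority-class images):
# r_min = mean_blob_radius(minority_images)
# W = initial_kernel_width(r_min)
```

Run blob detection separately for each class or subgroup. Then size the kernel from the subgroup with the smallest mean radius.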

Q2: During latent space analysis for bias, my dimensionality reduction (e.g., UMAP) conflates demographic subgroups. Is this a data or algorithm issue? A: This is often algorithmic amplification of an underlying data skew. Troubleshoot using this workflow:

- Pre-filter with a Small Kernel: Apply a small, edge-detecting kernel (e.g., 3x3 Sobel) to input images before feature extraction. This emphasizes high-frequency features that may be pertinent to underrepresented groups.

- Isolate Signal: Compare the eigenvector contributions from the principal component analysis (PCA) step that precedes UMAP. Check whether components correlated with sensitive attributes have been disproportionately suppressed.

- Protocol: Run parallel pipelines with and without the pre-filtering kernel, and measure the Latent Space Separation Index (LSSI) for demographic subgroups in each. An increase in LSSI with pre-filtering suggests that the small kernel recovers features that were previously suppressed.
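A minimal sketch of the parallel-pipeline comparison follows. It assumes flattened pixel intensities as features and numeric subgroup codes (e.g., 0/1). LSSI is not defined here, so the sketch substitutes the silhouette score of the subgroup labels in PCA space as a stand-in separation index. It also reports how strongly each principal component correlates with the subgroup label. Both substitutions are assumptions, and the function names are hypothetical.

```python
import numpy as np
from scipy import ndimage
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score


def sobel_magnitude(img):
    """3x3 Sobel gradient magnitude (small edge-detecting pre-filter)."""
    img = np.asarray(img, dtype=float)  # avoid integer truncation in ndimage.sobel
    gx = ndimage.sobel(img, axis=1)
    gy = ndimage.sobel(img, axis=0)
    return np.hypot(gx, gy)


def latent_separation(images, groups, prefilter=False, n_components=10):
    """Return (separation score, |corr| of each principal component with the subgroup code)."""
    groups = np.asarray(groups)
    feats = np.stack([(sobel_magnitude(im) if prefilter else np.asarray(im, dtype=float)).ravel()
                      for im in images])
    z = PCA(n_components=n_components).fit_transform(feats)
    separation = float(silhouette_score(z, groups))  # stand-in for LSSI
    pc_corr = np.array([abs(np.corrcoef(z[:, i], groups)[0, 1]) for i in range(z.shape[1])])
    return separation, pc_corr


# Run both arms on the same images and labels:
# sep_raw, corr_raw = latent_separation(images, groups, prefilter=False)
# sep_edge, corr_edge = latent_separation(images, groups, prefilter=True)
```

Compare the two separation scores. Also check whether components that correlate with the subgroup code gain weight once the Sobel pre-filter is applied.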

Q3: My model's performance disparity (e.g., the gap in AUC between populations) worsens after applying a standard noise-reduction filter. What's wrong? A: The filter is likely removing critical, subgroup-specific noise-like patterns as "artifacts." Standard denoising assumes that noise is uniformly distributed across the dataset, an assumption that often does not hold across subgroups.

- Diagnostic Test: Calculate Noise Spectrum Profiles (NSP) by class using a Fourier transform on image patches.

- Solution: Implement an adaptive kernel size selection based on the local noise amplitude specific to a subgroup's characteristic profile. Do not use a global kernel. See the "Subgroup-Adaptive Filtering Workflow" diagram.
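One way to compute per-subgroup Noise Spectrum Profiles is to take a radially averaged power spectrum of each patch and average it within each subgroup. This is a sketch under stated assumptions: 2D patches, mean removal before the FFT, and an arbitrary number of radial frequency bins. The function names are hypothetical.

```python
import numpy as np


def radial_power_spectrum(patch, n_bins=32):
    """Radially averaged power spectrum of a 2D patch, ordered from low to high frequency."""
    patch = np.asarray(patch, dtype=float)
    patch = patch - patch.mean()  # drop the DC term so it does not dominate the first bin
    power = np.abs(np.fft.fftshift(np.fft.fft2(patch))) ** 2
    h, w = patch.shape
    yy, xx = np.indices((h, w))
    r = np.hypot(yy - h // 2, xx - w // 2)  # distance from the zero-frequency bin
    bins = np.minimum((r / r.max() * n_bins).astype(int), n_bins - 1)
    sums = np.bincount(bins.ravel(), weights=power.ravel(), minlength=n_bins)
    counts = np.bincount(bins.ravel(), minlength=n_bins)
    return sums / np.maximum(counts, 1)


def noise_spectrum_profiles(patches, groups, n_bins=32):
    """Mean radial power spectrum for each subgroup label."""
    groups = np.asarray(groups)
    return {
        g: np.mean([radial_power_spectrum(p, n_bins) for p, pg in zip(patches, groups) if pg == g], axis=0)
        for g in np.unique(groups)
    }
```

Subgroups whose profiles differ mainly in the high-frequency bins are the ones most at risk from a single global smoothing kernel.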

Q4: How can I proactively choose a filter kernel strategy during dataset curation to mitigate representation bias? A: Employ a bias-aware kernel selection protocol during the pre-processing stage.

- Profile Dataset Skews: Before any model training, generate a Dataset Bias Profile Table (see Table 2).

- Match Kernel to Skew Pattern: Use the table to select an initial filtering strategy aimed at counteracting the identified primary skew.

Key Experimental Protocols

Protocol 1: Measuring Kernel-Induced Feature Suppression

Objective: Quantify how different convolutional kernel sizes disproportionately suppress predictive features across population subgroups.

Methodology:

- Input: Curated image dataset with demographic metadata (D). Apply standardized normalization.

- Processing: For each pre-defined kernel size K (e.g., 3x3, 5x5, 7x7, 9x9), generate a filtered dataset D_K.

- Feature Extraction: Use a fixed, pre-trained feature extractor (e.g., the penultimate layer of a ResNet-50) on the raw and filtered datasets to obtain feature sets R (raw) and F_K (filtered with kernel K).

- Analysis: For each subgroup s in D, compute the Feature Preservation Ratio $\mathrm{FPR}_s = \lVert F_K \cap R \rVert / \lVert R \rVert$, restricted to the images of subgroup s, where $\cap$ denotes the similarity (overlap) of the two feature sets in activation space.

- Output: A table of FPR values per subgroup per kernel size. A >10% relative drop in FPR for any subgroup indicates harmful suppression.
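The protocol leaves the similarity operator ∩ abstract, so the sketch below makes one concrete choice: the mean cosine similarity between each image's raw feature vector and its filtered counterpart, computed per subgroup. It also assumes that the ">10% relative drop" is measured against the smallest kernel in the sweep. Both choices, and all function names, are illustrative assumptions.

```python
import numpy as np


def feature_preservation_ratio(raw_feats, filt_feats):
    """Mean cosine similarity between paired raw and filtered feature vectors (one row per image)."""
    num = np.sum(raw_feats * filt_feats, axis=1)
    den = np.linalg.norm(raw_feats, axis=1) * np.linalg.norm(filt_feats, axis=1)
    return float(np.mean(num / np.maximum(den, 1e-12)))


def fpr_table(raw_feats, filtered_by_kernel, groups):
    """{kernel_size: {subgroup: FPR}} for feature arrays of shape (n_images, n_features)."""
    groups = np.asarray(groups)
    return {
        k: {g: feature_preservation_ratio(raw_feats[groups == g], filt[groups == g])
            for g in np.unique(groups)}
        for k, filt in filtered_by_kernel.items()
    }


def flag_suppression(table, baseline_kernel, threshold=0.10):
    """(kernel, subgroup) pairs whose FPR fell by more than `threshold` relative to the baseline kernel."""
    flags = []
    for k, per_group in table.items():
        for g, fpr in per_group.items():
            base = table[baseline_kernel][g]
            if base > 0 and (base - fpr) / base > threshold:
                flags.append((k, g))
    return flags
```

The nested dictionary maps directly onto the per-subgroup rows of a table like Table 1.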

Protocol 2: Optimizing Kernel Size for Specific Morphological Bias

Objective: Identify the optimal filter kernel size that maximizes signal for a morphologically distinct, underrepresented class.

Methodology:

- Identify Target & Reference: Define underrepresented class T (e.g., a rare cellular phenotype) and prevalent class R.

- Multi-Kernel Feature Enhancement: Process all T and R images with a range of kernel sizes. For each kernel, compute a class-separability score (e.g., Fisher's Linear Discriminant score) based on a simple, interpretable feature like texture (Haralick contrast).

- Identify Peak Separability: The kernel size that yields the highest separability score is the "optimal" size for enhancing the distinctive features of class T against the background of class R.

- Validation: Train a minimal logistic regression classifier on the texture features derived from the optimally filtered images. Compare AUC for class T to a baseline model trained on unfiltered features.

Data Tables

Table 1: Feature Preservation Ratio (FPR) by Kernel Size and Subgroup

| Subgroup | Kernel 3x3 | Kernel 5x5 | Kernel 7x7 | Kernel 9x9 |
|---|---|---|---|---|
| Cohort A (Majority) | 0.98 | 0.95 | 0.87 | 0.72 |
| Cohort B (Minority) | 0.97 | 0.89 | 0.68 | 0.51 |
| Disparity (Δ) | 0.01 | 0.06 | 0.19 | 0.21 |

Table 2: Dataset Bias Profile & Recommended Initial Kernel Strategy

| Primary Skew Type | Key Metric | Recommended Kernel Strategy |
|---|---|---|
| Label Noise Bias | Annotator Disagreement Rate > 25% | Small (3x3) Edge-Enhancing. Preserves ambiguous details. |
| Demographic Bias | Prevalence Ratio < 0.2 | Adaptive Sizing. Profile minority features first. |
| Confounder Bias | Correlation with Spurious Feature > 0.7 | Targeted Band-Pass. Isolate frequency of true signal. |
| Acquisition Bias | Scanner/Site AUC Delta > 0.1 | Uniform Denoising (5x5 Gaussian). Standardize input. |
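Encoding the thresholds from Table 2 as a small rule table makes the profiling step reproducible. In this sketch the argument names are hypothetical and rates are given as fractions (25% = 0.25). When several rows fire, choosing the primary skew is left to the analyst.

```python
def initial_kernel_strategy(disagreement_rate=None, prevalence_ratio=None,
                            spurious_corr=None, site_auc_delta=None):
    """Return every (skew type, strategy) row of Table 2 whose threshold is met."""
    rules = [
        ("Label Noise Bias", disagreement_rate, lambda v: v > 0.25,
         "Small (3x3) edge-enhancing kernel"),
        ("Demographic Bias", prevalence_ratio, lambda v: v < 0.2,
         "Adaptive sizing; profile minority features first"),
        ("Confounder Bias", spurious_corr, lambda v: v > 0.7,
         "Targeted band-pass at the frequency of the true signal"),
        ("Acquisition Bias", site_auc_delta, lambda v: v > 0.1,
         "Uniform denoising (5x5 Gaussian)"),
    ]
    # Metrics that were not measured (None) are skipped.
    return [(name, strategy) for name, value, hit, strategy in rules
            if value is not None and hit(value)]
```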


The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bias-Optimization Research |
|---|---|
| Synthetic Minority Oversampling (SMOTE) | Generates synthetic training samples for underrepresented classes in feature space to balance kernel response calibration. |
| Explainable AI (XAI) Tools (e.g., SHAP, LIME) | Identifies which image features (and by extension, which spatial scales) most influence predictions for different subgroups. |
| Fourier Transform Library (e.g., NumPy's numpy.fft module, FFTW) | Analyzes frequency components of image data to identify and characterize acquisition bias (scanner-specific noise patterns). |
| Kernel Heatmap Visualizer | Creates visual maps of filter activation across images, allowing direct inspection of differential response by subgroup. |
| Fairness Metric Suites (e.g., Fairlearn) | Provides standardized metrics (Disparate Impact, Equalized Odds difference) to quantify bias before and after kernel optimization. |

Troubleshooting Guides & FAQs

Q1: During my CNN training for cell image classification, my model fails to detect larger morphological features. Small kernel stacks (3x3) perform well on sub-cellular structures but miss whole-cell deformation. What is the likely issue and how can I correct it?

A: The issue is an insufficient final receptive field. A stack of small kernels increases the receptive field linearly, which may be inadequate for global context. For tasks requiring both fine detail and global pattern recognition (e.g., detecting bias patterns from drug-induced cytoskeletal changes), a hybrid approach is recommended.

- Solution: Implement a parallel multi-branch architecture. Supplement your 3x3 convolutional branches with a branch using larger kernels (e.g., 5x5, 7x7) or a dilated convolution layer. This captures multi-scale features without excessive depth. Ensure you use appropriate padding to maintain spatial dimensions for feature map fusion.

Q2: When I increase kernel size from 3x3 to 7x7 for my first convolutional layer on microscopy images, training becomes unstable (vanishing/exploding gradients) and computationally heavy. How can I mitigate this?

A: Large kernels in early layers increase parameters quadratically and can disrupt gradient flow.

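- Solution 1: Factorized Convolutions. Replace a 7x7 convolution with a 7x1 convolution followed by a 1x7 convolution. With C input (and intermediate) channels, this reduces parameters from 49C to 14C per filter while keeping the same 7x7 receptive field. The factorized pair has fewer degrees of freedom, so it approximates, rather than exactly reproduces, an arbitrary 7x7 convolution.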

- Solution 2: Strided Convolution. If high spatial resolution in early layers is not critical, use a strided (e.g., stride=2) 5x5 kernel instead of a stride=1 7x7 kernel. This down-samples early, reduces computation, and can improve stability.

- Solution 3: Enhanced Initialization. Use variance-scaling initialization (e.g., He initialization) calibrated for the larger kernel size to preserve activation variance across layers.
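The parameter arithmetic behind Solutions 1 and 3 can be checked directly. This sketch ignores bias terms and assumes the factorized pair's intermediate channel count equals the input channel count. It uses the He (Kaiming) normal standard deviation sqrt(2 / fan_in), with fan_in = k·k·C_in. The function names are illustrative.

```python
import math


def conv_params(k_h, k_w, c_in, c_out, bias=False):
    """Weight count of a k_h x k_w convolution layer."""
    return k_h * k_w * c_in * c_out + (c_out if bias else 0)


def factorized_params(k, c_in, c_out, c_mid=None):
    """Weights of a k x 1 convolution followed by a 1 x k convolution."""
    c_mid = c_in if c_mid is None else c_mid
    return conv_params(k, 1, c_in, c_mid) + conv_params(1, k, c_mid, c_out)


def he_std(k, c_in):
    """He-normal standard deviation for a k x k kernel over c_in input channels."""
    fan_in = k * k * c_in
    return math.sqrt(2.0 / fan_in)
```

For C = 64, the 7x1 + 1x7 pair uses 14/49 (about 29%) of the weights of a full 7x7 layer. The He standard deviation shrinks as the kernel grows, because fan_in grows with k². In PyTorch, torch.nn.init.kaiming_normal_ derives fan_in from the weight tensor's shape, so the kernel size is accounted for automatically.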

Q3: My feature maps from deeper network layers appear overly smooth and lose all high-frequency information critical for my bias pattern detection. Could kernel size selection be a factor?

A: Yes. Repeated convolution with strides and pooling inherently loses high-frequency detail. While small kernels preserve locality, deep stacks compound smoothing.

- Solution: Incorporate skip connections (as in ResNet) to propagate earlier, detail-rich feature maps forward. Alternatively, design a progressive kernel size schedule: start with a medium kernel (5x5) in layer 1 to capture moderate detail while averaging out some pixel-level noise, use smaller kernels (3x3) in mid-layers for combinatorial feature building, and consider a dilated small kernel in the final layers to increase the receptive field without further smoothing.

Q4: For a fixed compute budget, should I prioritize increasing network depth (more layers) or kernel size (fewer, larger layers) to optimize for specific, known bias patterns?

A: The choice depends on the spatial scale of the target bias pattern.

- For localized, repetitive patterns (e.g., specific texture artifacts), depth with small kernels is superior, enabling complex hierarchical non-linear features.

- For globally distributed, low-frequency patterns (e.g., gradual stain intensity gradients across a slide), incorporating at least one layer with a strategically placed larger kernel is more parameter-efficient. See the quantitative comparison below.

Table 1: Receptive Field Growth & Parameter Cost for a Single Layer

| Kernel Size | Receptive Field (Single Layer) | Parameters per Filter (vs. 3x3) | Relative FLOPs (vs. 3x3) |
|---|---|---|---|
| 1x1 | 1x1 | 11% | 11% |
| 3x3 | 3x3 | 100% (baseline) | 100% |
| 5x5 | 5x5 | 278% | 278% |
| 7x7 | 7x7 | 544% | 544% |

Table 2: Receptive Field for Different Architectural Strategies

| Architecture Strategy | Example Sequence | Theoretical Receptive Field (stride 1) | Key Advantage | Best For Pattern Type |
|---|---|---|---|---|
| Deep Small Kernel Stack | [3x3] x 12 layers | 25x25 | High non-linearity, parameter efficient | Hierarchical, complex local features |
| Early Large Kernel | 7x7, [3x3] x 10 | 27x27 | Immediate broad context capture | Global bias fields, large-scale gradients |
| Spatial Pyramid | Parallel 3x3, 5x5, 7x7 branches | Varies by branch | Multi-scale feature extraction | Patterns with unknown or variable scale |
| Dilated Convolution | 3x3 (dilation=2), 3x3 (dilation=4) | 13x13 for these two layers; grows rapidly as dilated layers are added | Large receptive field with few parameters, preserves resolution | Sparse, widely spaced fiducial markers |
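The theoretical receptive fields above follow from the standard recurrence RF ← RF + (k - 1)·d·J, where J is the product of the strides of all preceding layers. The sketch below implements that recurrence and adds the output-size check used to keep spatial dimensions aligned when fusing branches. It assumes no pooling layers; a pooling layer can be added as its own (kernel, stride, 1) entry. The function names are illustrative. The empirical ERF measured in Protocol 2 below is typically smaller than this theoretical bound.

```python
def receptive_field(layers):
    """Theoretical receptive field (pixels) of a stack of (kernel, stride, dilation) layers."""
    rf, jump = 1, 1
    for k, stride, dilation in layers:
        rf += (k - 1) * dilation * jump  # growth is scaled by the cumulative stride so far
        jump *= stride
    return rf


def conv_output_size(n, k, stride=1, padding=0, dilation=1):
    """Output length along one axis (the formula given in the PyTorch Conv2d documentation)."""
    return (n + 2 * padding - dilation * (k - 1) - 1) // stride + 1


def same_padding(k, dilation=1):
    """Padding that preserves spatial size for stride 1 and odd k."""
    return dilation * (k - 1) // 2


print(receptive_field([(3, 1, 1)] * 12))                # -> 25
print(receptive_field([(7, 1, 1)] + [(3, 1, 1)] * 10))  # -> 27
print(receptive_field([(3, 1, 2), (3, 1, 4)]))          # -> 13
```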

Experimental Protocols

Protocol 1: Systematic Kernel Size Ablation for Bias Pattern Detection

Objective: To empirically determine the optimal convolutional kernel sizes for maximizing detection accuracy of a known, spatially extended staining bias artifact in high-throughput screening (HTS) images.

Materials: See "Research Reagent Solutions" below.

Methodology:

- Dataset Curation: From your HTS campaign, curate a balanced dataset of 10,000 image tiles (512x512 px). 50% contain the target bias pattern (confirmed by expert pathologist), 50% are normal/control.

- Baseline Model: Implement a standard VGG-style backbone (8 convolutional layers, 2 fully connected).

- Experimental Variable: Create four model variants where the first convolutional layer kernel size is systematically altered to 3, 5, 7, and 9. Adjust padding to maintain spatial dimensions. All other hyperparameters (depth, channels, optimizer, LR) remain constant.

- Training: Train each model for 100 epochs using cross-entropy loss, Adam optimizer (lr=1e-4), with an 80/10/10 train/validation/test split.

- Evaluation: Record final test accuracy, F1-score for the bias pattern class, and computational cost (FLOPs). Generate and compare Gradient-weighted Class Activation Mapping (Grad-CAM) outputs to visualize which spatial regions most influenced the classification decision for each kernel size.

Protocol 2: Receptive Field Profiling via Perturbation Sensitivity Analysis

Objective: To measure the effective receptive field (ERF) of networks trained with different kernel size schedules.

Methodology:

- Model Training: Train two models from Protocol 1 to convergence: the "3x3 first" model and the "7x7 first" model.

- Probe Dataset: Create a set of 1000 synthetic test images. Each image is uniform gray (value=0.5) with a single small white square (5x5 px) placed at a random coordinate.

- Perturbation & Measurement: For each test image, record the activation of a chosen feature-map channel in the final convolutional layer (e.g., at its central spatial position). This gives a baseline activation $A_{\text{baseline}}$.

- Sensitivity Map: For each pixel $(i, j)$ in the input image, apply a negative perturbation $\delta$ (e.g., set the pixel to 0). Re-run the image through the network and record the new activation $A_{\text{perturbed}}(i, j)$.

- ERF Calculation: Compute the sensitivity $S(i, j) = \lvert A_{\text{baseline}} - A_{\text{perturbed}}(i, j) \rvert$. Aggregate $S$ across all 1000 probe images to generate a 2D sensitivity map. The region where $S$ is significantly greater than zero defines the empirical ERF. Compare the shape and extent of the ERF between the two model variants.
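The perturbation loop can be sketched with any function that maps an image to a scalar activation. Here toy_center_activation is a hypothetical stand-in for a trained CNN: two stacked 3x3 mean filters read out at the center. Its true receptive field is 5x5, so the measured support can be checked. In practice, replace model_fn with a call into your trained network. The probe size is reduced to 32 px here, because a 512x512 probe needs 262,144 forward passes per image, and batching the perturbed images is advisable at that scale.

```python
import numpy as np
from scipy import ndimage


def toy_center_activation(img):
    """Hypothetical stand-in for a trained CNN: two stacked 3x3 mean filters,
    read out at the central spatial position of the final feature map."""
    w = np.ones((3, 3)) / 9.0
    out = ndimage.correlate(ndimage.correlate(img, w, mode="constant"), w, mode="constant")
    return out[out.shape[0] // 2, out.shape[1] // 2]


def make_probe(size, rng, square=5):
    """Uniform gray (0.5) image with one white square at a random position."""
    img = np.full((size, size), 0.5)
    r, c = rng.integers(0, size - square + 1, size=2)
    img[r:r + square, c:c + square] = 1.0
    return img


def sensitivity_map(model_fn, img, perturbed_value=0.0):
    """S(i, j) = |A_baseline - A_perturbed(i, j)| for one probe image."""
    base = model_fn(img)
    s = np.zeros_like(img)
    for i in range(img.shape[0]):
        for j in range(img.shape[1]):
            pert = img.copy()
            pert[i, j] = perturbed_value  # negative perturbation: set the pixel to 0
            s[i, j] = abs(base - model_fn(pert))
    return s


def empirical_erf(model_fn, size=32, n_probes=10, seed=0):
    """Mean sensitivity map over n_probes random probe images."""
    rng = np.random.default_rng(seed)
    return np.mean([sensitivity_map(model_fn, make_probe(size, rng)) for _ in range(n_probes)], axis=0)
```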


The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment | Example/Specification |
|---|---|---|
| High-Content Screening (HCS) Image Dataset | The fundamental input data. Must contain examples of the target bias pattern with expert annotation. | 40x magnification, 3-channel fluorescence (DAPI, Actin, Tubulin), ≥ 10^4 image tiles. |
| Deep Learning Framework | Provides the computational environment for building, training, and evaluating CNN architectures. | PyTorch 2.0+ or TensorFlow 2.12+ with CUDA support for GPU acceleration. |
| Gradient Visualization Library | Generates saliency maps to interpret which image regions influenced model predictions. | TorchCAM (for PyTorch) or tf-keras-vis (for TensorFlow) for Grad-CAM production. |
| Synthetic Image Generator | Creates controlled probe images (e.g., uniform field with localized perturbation) for ERF analysis. | Custom script using NumPy/PIL or scikit-image. |
| Computational Metrics Logger | Tracks and compares key performance indicators (KPIs) across model variants. | Weights & Biases (W&B) or MLflow for experiment tracking, FLOPs calculation via fvcore or torchinfo. |
| High-Performance Computing (HPC) Cluster | Provides the necessary computational power for parallel training of multiple model variants. | Nodes with multiple NVIDIA A100 or H100 GPUs, sufficient VRAM (>40GB) for large kernel experiments. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During spatial bias correction, my kernel convolution creates edge artifacts ("halos") around high-intensity regions. What is the cause and correction?

A: This is typically caused by a kernel with an incorrect spatial extent (size) relative to the bias gradient. A kernel that is too small cannot model the broad spatial trend, while one that is too large over-smooths and creates halos. The property to correct is the kernel support (size).

- Diagnostic: Plot the residual image (original - corrected). A structured, low-frequency pattern in the residual indicates an undersized kernel. Concentric "rings" or halos at edges indicate an oversized kernel.

- Protocol:

  - Extract a 1D profile line across a known, gradual bias gradient.

  - Apply correction kernels of increasing size (e.g., 33 px, 65 px, 129 px, 257 px).

  - Calculate the normalized residual sum-of-squares (NRSS) for each profile: $\mathrm{NRSS} = \sum (I_{\text{original}} - I_{\text{corrected}})^2 \,/\, \sum I_{\text{original}}^2$.

  - Select the kernel size at the first local minimum of the NRSS-versus-size plot.
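The sweep above can be sketched for a 1D profile. The text does not fix the correction operator, so this sketch assumes one: subtract a k-pixel moving-average background estimate and add back its mean, so the corrected profile keeps the original intensity scale. The function names are illustrative.

```python
import numpy as np
from scipy.ndimage import uniform_filter1d


def correct_profile(profile, k):
    """Assumed correction: remove a k-pixel moving-average background, keep the overall level."""
    background = uniform_filter1d(profile, size=k, mode="reflect")
    return profile - background + background.mean()


def nrss(original, corrected):
    """Normalized residual sum-of-squares between the original and corrected profiles."""
    return float(np.sum((original - corrected) ** 2) / np.sum(original ** 2))


def first_local_minimum(values):
    """Index of the first interior local minimum; falls back to the global minimum."""
    for i in range(1, len(values) - 1):
        if values[i - 1] > values[i] < values[i + 1]:
            return i
    return int(np.argmin(values))


def select_kernel_size(profile, sizes=(33, 65, 129, 257)):
    """Kernel size at the first local minimum of NRSS versus size, plus all NRSS values."""
    profile = np.asarray(profile, dtype=float)
    scores = [nrss(profile, correct_profile(profile, k)) for k in sizes]
    return sizes[first_local_minimum(scores)], scores
```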

Q2: My time-series data shows a low-frequency drift after applying a temporal high-pass filter kernel. Is this removed signal or residual bias?

A: This is often a confusion between true signal decay and temporal bias. The key correctable kernel property is the temporal cutoff frequency.

- Diagnostic: Perform a spectral analysis (FFT) on control wells or inactive regions. Persistent low-frequency power after correction indicates an improperly set high-pass filter cutoff.

- Protocol:

  - Acquire data from a negative control (e.g., vehicle-only) over the full experimental timeline.

  - Compute the average power spectral density (PSD) across all control replicates.

  - Apply candidate high-pass filter kernels with varying cutoff periods (e.g., 4 h, 8 h, or 12 h in a 48 h experiment).

  - The optimal cutoff period is the longest period that reduces the PSD in the control data to the level of the system's known noise floor (see instrument specs).
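A sketch of the cutoff search on control traces follows. The document does not name a filter family, so the sketch assumes a zero-phase 2nd-order Butterworth high-pass (scipy.signal.butter with sosfiltfilt). It estimates the PSD with Welch's method and treats "reduced to the noise floor" as the mean PSD (excluding DC) falling within 10% of a supplied one-sided noise-floor level. Time is in hours, so fs is in samples per hour. The names and the tolerance are illustrative.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, welch


def highpass(traces, cutoff_period_h, fs_per_h, order=2):
    """Zero-phase Butterworth high-pass with cutoff frequency 1 / cutoff_period_h (cycles per hour)."""
    sos = butter(order, 1.0 / cutoff_period_h, btype="highpass", fs=fs_per_h, output="sos")
    return sosfiltfilt(sos, traces, axis=-1)


def mean_control_psd(traces, fs_per_h):
    """Welch PSD of each control trace (rows), averaged across replicates."""
    freqs, psd = welch(traces, fs=fs_per_h, nperseg=min(traces.shape[-1], 128), axis=-1)
    return freqs, psd.mean(axis=0)


def select_cutoff(control_traces, fs_per_h, noise_floor, periods_h=(12, 8, 4), tol=1.1):
    """Longest cutoff period whose filtered control PSD reaches the noise floor (None if none does)."""
    for period in sorted(periods_h, reverse=True):
        filtered = highpass(control_traces, period, fs_per_h)
        _, psd = mean_control_psd(filtered, fs_per_h)
        if np.mean(psd[1:]) <= tol * noise_floor:  # skip the DC bin
            return period
    return None
```

For white noise with standard deviation σ, the one-sided noise-floor level is 2σ²/fs.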

Q3: After spectral unmixing for multiplexed assays, I observe crosstalk (bleed-through) residuals. Which kernel property failed?

A: This indicates an error in the spectral mixing matrix, which acts as a linear transformation kernel. The error is in the matrix coefficients (kernel weights).

- Diagnostic: Image single-label controls separately. After unmixing, each single-label control should produce signal only in its own unmixed channel; residual signal in any other channel reveals crosstalk.

- Protocol:

  - For each fluorophore i, prepare a single-stained sample.

  - Acquire images across all detection channels j.

  - Measure the mean intensity $I_{ij}$ of each fluorophore i in each detection channel j.

  - Construct the mixing matrix M, in which each column is the unit-normalized intensity vector of one fluorophore: $M_{ji} = I_{ij} / \sqrt{\sum_k I_{ik}^2}$, where k runs over the detection channels.

  - The correction kernel is the inverse of this matrix ($M^{-1}$), applied as a pixel-wise linear transformation to the multichannel image stack.
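The matrix construction and pixel-wise unmixing above can be sketched in NumPy. The sketch assumes background-subtracted single-stain means in an array I with I[i, j] = fluorophore i in channel j, the same number of fluorophores as channels, and a (channels, H, W) image stack. The function names are illustrative.

```python
import numpy as np


def mixing_matrix(single_stain_means):
    """M[j, i] = I[i, j] / ||I[i, :]||: one unit-length column per fluorophore."""
    I = np.asarray(single_stain_means, dtype=float)
    M = I.T.copy()
    M /= np.linalg.norm(M, axis=0, keepdims=True)
    return M


def unmix(stack, M):
    """Apply K = M^-1 to every pixel's channel vector; stack has shape (channels, H, W)."""
    K = np.linalg.inv(M)
    c, h, w = stack.shape
    return (K @ stack.reshape(c, -1)).reshape(K.shape[0], h, w)


# Hypothetical single-stain means (rows: fluorophores A, B, C; columns: Ch1-Ch3).
I = np.array([[0.95, 0.02, 0.00],
              [0.04, 0.93, 0.01],
              [0.01, 0.05, 0.98]])
M = mixing_matrix(I)
```

With more detection channels than fluorophores, M is not square. In that case a least-squares solve (e.g., np.linalg.pinv) would replace the inverse.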

Table 1: Optimal Kernel Size vs. Observed Spatial Bias Scale

| Bias Pattern Description | Typical Scale (Image Width %) | Recommended Initial Kernel Size (pixels) | Correctable Property |
|---|---|---|---|
| Vignetting (center-to-corner) | 80-100% | 1.5 x Image Width | Spatial Support |
| Vertical/Horizontal Gradient | 50-100% | 1.0 x Image Dimension | Spatial Support |
| Localized Fluidic Artifact | 10-25% | 0.3 x Image Width | Spatial Support & Weight Shape |

Table 2: Temporal Filter Kernel Parameters for Common Drifts

| Drift Source | Characteristic Period | Recommended Kernel Type | Key Parameter (to optimize) |
|---|---|---|---|
| Equipment Warm-up | 30 min - 2 hr | Gaussian High-Pass | Cutoff: 1.5 x Period |
| Evaporation / Osmolality Shift | 6 - 24 hr | Polynomial Detrending | Degree: 1 (Linear) or 2 (Quadratic) |
| Photobleaching (Exponential) | Variable | Morphological Top-Hat | Structuring Element Duration |
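The polynomial detrending row can be sketched with NumPy's polynomial module. This sketch assumes a single trace sampled at times t (e.g., hours). The degree is the parameter to optimize, per the table. The function name is illustrative.

```python
import numpy as np
from numpy.polynomial import polynomial as P


def polynomial_detrend(t, y, degree=1):
    """Least-squares polynomial fit of the given degree; returns (detrended trace, fitted trend)."""
    coefs = P.polyfit(t, y, degree)  # coefficients ordered from lowest to highest power
    trend = P.polyval(t, coefs)
    return y - trend, trend
```

In practice, fit the trend on negative-control traces first. That makes it easier to judge whether degree 1 or 2 captures the evaporation drift without absorbing the biological response.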

Table 3: Spectral Calibration Matrix Example (Hypothetical 3-Channel Dye Set)

| Detection Channel | Fluorophore A (488 nm) Signal | Fluorophore B (555 nm) Signal | Fluorophore C (640 nm) Signal |
|---|---|---|---|
| Ch1 (500-550 nm) | 0.95 | 0.04 | 0.01 |
| Ch2 (570-620 nm) | 0.02 | 0.93 | 0.05 |
| Ch3 (660-720 nm) | 0.00 | 0.01 | 0.98 |

Note: The unmixing kernel is the inverse of this matrix. Diagonal entries above 0.9 (strong diagonal dominance) are ideal.

Experimental Protocols

Protocol 1: Empirically Determining Spatial Kernel Size

Objective: To find the 2D Gaussian kernel size (σ in pixels) that optimally removes low-frequency spatial bias without attenuating biological signal.

Materials: High-content imager, 96-well plate, uniform fluorescent dye (e.g., 1 µM fluorescein in PBS), image analysis software (e.g., Python with scikit-image, or MATLAB).

Steps:

- Plate Preparation: Fill all wells of a 96-well plate with 100 µL of the uniform fluorescent dye solution.

- Image Acquisition: Acquire a single image per well using the target channel (e.g., FITC), ensuring no saturation.

- Reference Image: Create a reference image $I_{\text{ref}}$ by performing a per-pixel median projection across all wells. This models the bias field.

- Kernel Sweep: For a range of σ values (e.g., from 10 to 300 pixels), create a normalized Gaussian kernel for each σ.

- Convolution & Evaluation: Convolve $I_{\text{ref}}$ with each kernel to generate a corrected image $I_{\text{corr}}$. For each result, calculate the coefficient of variation (CV) across all pixels of $I_{\text{corr}}$. Plot CV vs. σ.

- Optimization: The optimal σ is at the point where the CV curve plateaus; further increasing σ does not reduce variability (indicating only noise remains).
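The reference-image, sweep, and plateau steps can be sketched with SciPy. scipy.ndimage.gaussian_filter applies a normalized Gaussian kernel, as the Kernel Sweep step requires. The plateau rule is an assumption: the first σ at which the next step lowers the CV by less than 2% relative. The function names are illustrative.

```python
import numpy as np
from scipy.ndimage import gaussian_filter


def reference_image(well_images):
    """Per-pixel median projection across wells (the bias-field model I_ref)."""
    return np.median(np.stack(well_images), axis=0)


def cv(img):
    """Coefficient of variation across all pixels."""
    m = img.mean()
    return float(img.std() / m) if m != 0 else float("nan")


def sigma_sweep(i_ref, sigmas):
    """CV of I_ref convolved with a normalized Gaussian for each sigma."""
    return [cv(gaussian_filter(i_ref, sigma=s, mode="reflect")) for s in sigmas]


def plateau_sigma(sigmas, cvs, rel_tol=0.02):
    """First sigma after which increasing sigma reduces CV by less than rel_tol (relative)."""
    for i in range(len(cvs) - 1):
        if cvs[i] > 0 and (cvs[i] - cvs[i + 1]) / cvs[i] < rel_tol:
            return sigmas[i]
    return sigmas[-1]
```

Large σ values (hundreds of pixels) make gaussian_filter slow on full-resolution images. Sweeping on a downsampled I_ref, with σ scaled to match, is one way to keep the sweep tractable.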

Protocol 2: Calibrating the Spectral Unmixing Kernel

Objective: To derive an accurate spectral mixing matrix from single-stain controls.

Materials: Multichannel fluorescence microscope, cells or beads, individual fluorophore-conjugated antibodies/ligands.

Steps:

- Sample Preparation: Prepare $N+1$ samples: one for each of the $N$ fluorophores used, and one unstained control.

- Single-Stain Acquisition: For each fluorophore sample $i$, acquire an image stack across all $N$ detector channels $j$. Use identical exposure times for all channels.

- Background Subtraction: Using the unstained control, calculate the mean background intensity $BG_j$ for each channel $j$. Subtract $BG_j$ from all images in channel $j$.

- Intensity Measurement: For each single-stain image stack $i$, define a Region of Interest (ROI) where the signal is present. Measure the mean pixel intensity $I_{ij}$ within the ROI for each channel $j$.

- Matrix Construction: Populate an $N \times N$ matrix $M$, where $M_{ji} = I_{ij}$. Normalize each column (fluorophore) to unit length.

- Kernel Generation: The unmixing (correction) kernel is the inverse matrix $K = M^{-1}$. Apply $K$ as a linear transformation to each pixel's multichannel intensity vector in experimental data.

Visualizations

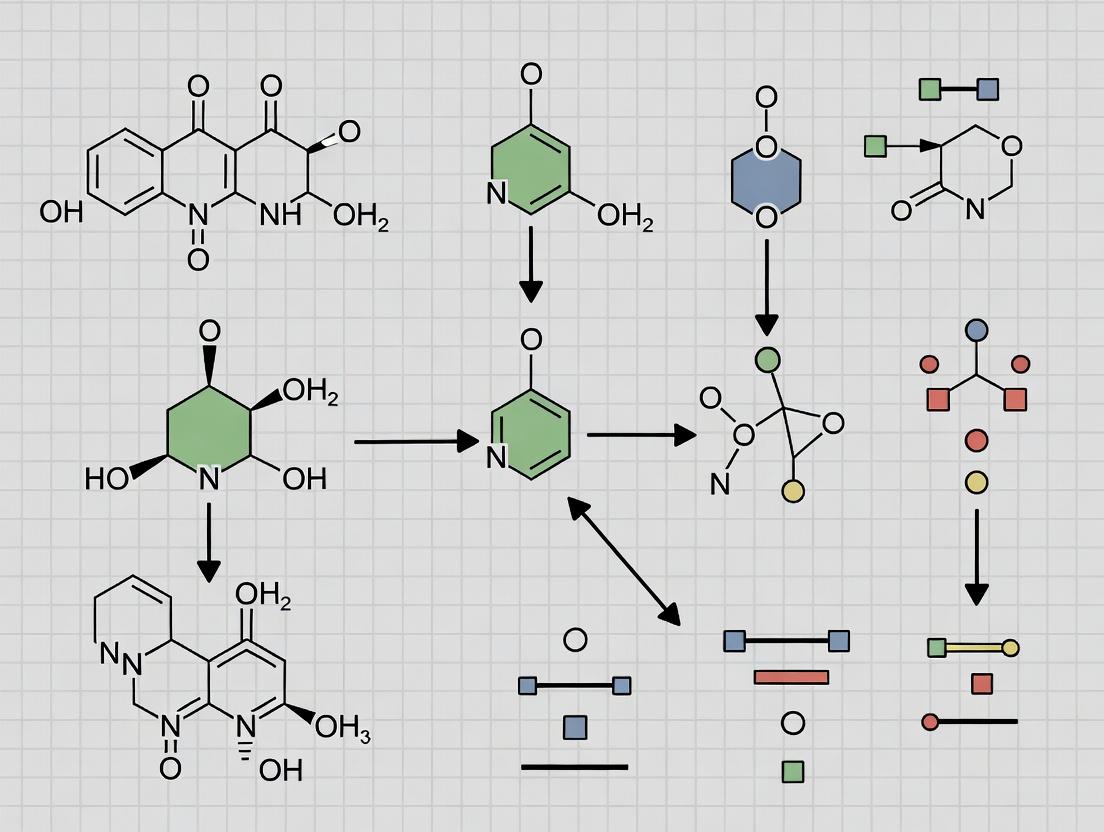

Title: Optimization Workflow for Bias Correction Kernels

Title: Mapping Bias Patterns to Kernel Properties

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bias Characterization/Kernel Optimization |
|---|---|
| Uniform Fluorophore Plates (e.g., Fluorescein, Rhodamine B in PBS) | Creates a spatially homogeneous signal to isolate and quantify instrument-derived spatial bias (flat-field correction). |
| Single-Stain Controls (Cells/Beads with one label) | Essential for empirical measurement of the spectral mixing matrix to correct crosstalk (bleed-through). |
| Time-Lapse Viability Dye (e.g., PI, SYTOX Green) in Untreated Cells | Provides a stable, decaying signal model to distinguish photobleaching/drift (bias) from biological response. |
| Multi-Fluorescence Calibration Slide | A physical standard with known, co-localized emission peaks to validate spectral unmixing kernel accuracy post-optimization. |
| Open-Source Analysis Libraries (scikit-image, ImageJ/Fiji, MATLAB Image Processing Toolbox) | Provide tested implementations of convolution, FFT, and linear algebra operations for prototyping correction kernels. |

Troubleshooting Guide & FAQs

Q1: My model shows high predictive accuracy for kinase targets but poor performance for GPCRs. What could be the root cause? A: This is a classic dataset bias. Public DTI databases like ChEMBL and BindingDB are historically richer in kinase inhibitor data. This creates a structural bias where models learn features specific to ATP-binding pockets, not generalizable protein-ligand interaction principles.

- Troubleshooting Step: Run a bias audit. Calculate the distribution of protein families in your training set versus a balanced reference proteome (e.g., from UniProt). See Table 1.

Q2: During transfer learning, performance plummets when applying a pre-trained model to a new target class. How can I diagnose this? A: The bias likely lies in the feature representation. The pre-trained model's convolutional or attentional filters may have kernel sizes optimized for specific, over-represented binding site geometries (e.g., deep hydrophobic pockets common in kinases).

- Troubleshooting Step: Visualize the filter activations of your first convolutional layer. Filters activated only by kinase-like sequences indicate a lack of generalizability. Implement the filter kernel size optimization protocol detailed in the Experimental Protocols section.

Q3: My model consistently predicts "inactive" for novel scaffold compounds, despite experimental hints of activity. What's wrong? A: This is compound structural bias. Models trained predominantly on "drug-like" (Lipinski-compliant) molecules with common scaffolds fail to extrapolate to under-represented chemical spaces, such as macrocycles or covalent binders.

- Troubleshooting Step: Perform a chemical space analysis. Apply t-SNE or PCA to the Morgan fingerprints of your training set and highlight the novel scaffolds. If they fall outside the densely populated regions of the training set, their position is consistent with this structural bias. See Table 2 for common bias patterns.
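A projection plot can be complemented by a numerical check: the maximum Tanimoto similarity of each novel compound to the training set. This is a swapped-in technique, not the t-SNE/PCA step itself. The sketch assumes fingerprints are already available as 0/1 NumPy arrays (for example, Morgan bits exported from RDKit). Two all-zero fingerprints are treated as identical, which is an arbitrary convention.

```python
import numpy as np


def tanimoto_matrix(query_fps, train_fps):
    """Pairwise Tanimoto similarity between binary fingerprint rows."""
    q = np.asarray(query_fps, dtype=bool).astype(np.int32)
    t = np.asarray(train_fps, dtype=bool).astype(np.int32)
    inter = q @ t.T  # bits set in both fingerprints
    union = q.sum(1)[:, None] + t.sum(1)[None, :] - inter
    return np.where(union > 0, inter / np.maximum(union, 1), 1.0)


def max_similarity_to_training(query_fps, train_fps):
    """Nearest-neighbour Tanimoto similarity of each query compound to the training set."""
    return tanimoto_matrix(query_fps, train_fps).max(axis=1)
```

Novel scaffolds whose nearest-neighbour similarity is low compared with typical within-training-set values are candidates for the under-represented chemical space described above.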

Q4: How can I technically check if my graph neural network (GNN) for DTI is biased by protein size? A: Protein size bias occurs when the GNN's message-passing steps or pooling layers are unduly influenced by the number of nodes (amino acids). Correlate your model's prediction error or confidence score with protein sequence length for your test set.

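- Troubleshooting Step: Implement a control experiment. Train a simple baseline model that uses only protein length as a feature. If it performs better than chance, the dataset contains a length shortcut, and your main model may be exploiting this trivial correlation; confirm by checking the correlation between its errors and protein length.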
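Both checks can be sketched with scikit-learn and SciPy. The sketch assumes binary interaction labels and uses a log-length feature with a cross-validated ROC AUC for the baseline. It uses the Spearman correlation between the main model's absolute errors and sequence length for the error check. The function names are illustrative.

```python
import numpy as np
from scipy.stats import spearmanr
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score


def length_only_auc(lengths, labels, cv=5):
    """Cross-validated ROC AUC of a logistic regression that sees only log(sequence length)."""
    X = np.log(np.asarray(lengths, dtype=float)).reshape(-1, 1)
    return float(cross_val_score(LogisticRegression(), X, labels, cv=cv, scoring="roc_auc").mean())


def error_length_correlation(lengths, abs_errors):
    """Spearman rank correlation (rho, p-value) between protein length and model error."""
    rho, p = spearmanr(lengths, abs_errors)
    return float(rho), float(p)
```

An AUC near 0.5 suggests length carries little label information. A clearly higher value means the length shortcut is available to any model trained on this data.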

Experimental Protocols

Protocol 1: Auditing Dataset Bias in DTI Models

- Data Source: Compile your training dataset from chosen sources (e.g., ChEMBL, DrugBank).

- Annotation: Map all protein targets to their primary Gene Ontology (GO) molecular function terms or Pfam family identifiers using the UniProt API.

- Quantification: Calculate the percentage distribution of the top 10 protein families. Compare this distribution to the baseline frequency of these families in the human proteome.

- Visualization: Generate a paired bar chart (Training Set vs. Human Proteome) for clear comparison.
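The quantification step reduces to counting family labels and dividing by reference frequencies. This sketch assumes you already have one family label per training target and a dictionary of proteome fractions (e.g., derived from UniProt/Pfam). Families missing from the reference get NaN. The function name is illustrative.

```python
from collections import Counter


def family_bias_factors(train_families, proteome_fraction, top_n=10):
    """Rows of (family, training fraction, proteome fraction, bias factor) for the top_n families."""
    counts = Counter(train_families)
    total = sum(counts.values())
    rows = []
    for fam, n in counts.most_common(top_n):
        train_frac = n / total
        ref = proteome_fraction.get(fam)
        factor = train_frac / ref if ref else float("nan")  # Train / Proteome
        rows.append((fam, train_frac, ref, factor))
    return rows
```

The returned rows have the same layout as Table 1 below and can be passed straight to a plotting library for the paired bar chart.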

Protocol 2: Optimizing Filter Kernel Size for Specific Bias Patterns

- Hypothesis: Bias from localized binding motifs (e.g., DFG motif in kinases) requires small kernel filters (3-5 amino acids), while bias from overall protein fold preference requires larger kernels (>15 amino acids).

- Procedure:

  - Prepare a balanced dataset containing two distinct target classes (e.g., kinases vs. proteases).

  - Train separate 1D convolutional neural network (CNN) models for protein sequence encoding, systematically varying the kernel size (e.g., 3, 7, 15, 31) in the first layer.

  - Evaluate each model on a held-out test set containing both classes and on an external validation set rich in the under-represented class.

  - Measure the performance gap (ΔAUPRC) between the over- and under-represented classes for each kernel size.

- Analysis: The kernel size that minimizes this performance gap is considered better "de-biased" for that specific structural bias pattern. Results should be tabulated.
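The gap measurement and selection can be sketched once each kernel-size variant has produced scores on the same test set. The sketch assumes that "AUPRC for a class" means the average precision computed over the test pairs whose target belongs to that class. The class names and function names are illustrative.

```python
import numpy as np
from sklearn.metrics import average_precision_score


def auprc_gap(y_true, y_score, target_class, over="kinase", under="protease"):
    """AUPRC on over-represented-class targets minus AUPRC on under-represented-class targets."""
    y_true, y_score, target_class = (np.asarray(a) for a in (y_true, y_score, target_class))
    ap = {}
    for name in (over, under):
        mask = target_class == name
        ap[name] = average_precision_score(y_true[mask], y_score[mask])
    return float(ap[over] - ap[under])


def least_biased_kernel(results, over="kinase", under="protease"):
    """results: {kernel_size: (y_true, y_score, target_class)}; returns (best size, |gap| per size)."""
    gaps = {k: abs(auprc_gap(*v, over=over, under=under)) for k, v in results.items()}
    return min(gaps, key=gaps.get), gaps
```

The gaps dictionary is the table the Analysis step asks for. Report it alongside the absolute AUPRCs, since a small gap with low performance on both classes is not a useful result.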

Data Tables

Table 1: Example Protein Family Distribution Audit

| Protein Family (Pfam) | % in Training Data (ChEMBL Subset) | % in Human Proteome (UniProt) | Bias Factor (Train/Proteome) |
|---|---|---|---|
| Protein kinase | 42.7% | 2.1% | 20.3 |
| GPCR, rhodopsin-like | 12.1% | 1.3% | 9.3 |
| Ion channel | 5.3% | 1.5% | 3.5 |
| Nuclear receptor | 4.8% | 0.4% | 12.0 |
| Under-represented Example | | | |
| E3 ubiquitin ligase | 0.9% | 3.8% | 0.24 |

Table 2: Common Structural Bias Patterns in DTI Prediction

| Bias Pattern | Typical Cause (Data Imbalance) | Model Artifact Symptom | Mitigation Strategy |
|---|---|---|---|
| Protein Family Bias | Over-representation of kinases, enzymes | Large performance drops on GPCRs, ion channels | Strategic oversampling, family-aware splits |
| Binding Site Size Bias | Predominantly small, deep pockets (e.g., kinases) | Failure on flat, large binding sites (e.g., protein-protein interaction targets) | Data augmentation with binding site surface area normalization |
| Ligand Scaffold Bias | Overabundance of certain chemotypes (e.g., hinge binders) | Inability to predict activity for macrocycles, peptides | Generative scaffold hopping, use of matched molecular pairs |
| Affinity Range Bias | Mostly high-affinity (<100 nM) binders | Poor accuracy in mid-to-low micromolar range | Explicit modeling of continuous affinity values, not binary labels |

Visualizations

Title: DTI Model De-biasing Workflow

Title: Filter Kernel Size Links to Bias Type

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bias Research | Example/Supplier |
|---|---|---|
| Curated Balanced Benchmark Sets | Provides a ground truth for evaluating bias, containing balanced target families and chemotypes. | LEADS (Linear Ensemble of Antagonists and Diverse Scaffolds), BindingDB curated non-redundant splits. |
| Chemical Diversity Analysis Software | Quantifies scaffold and functional group representation in compound libraries to identify chemical bias. | RDKit (Python), ChemAxon Calculator Plugins. |
| Protein Sequence & Structure Featurizer | Generates consistent, comparable input features (e.g., ESM-2 embeddings, PSSM) from protein data to avoid feature-introduced bias. | ESM (Meta AI) for embeddings, Biopython for PSSM generation. |
| Model Interpretability Library | Visualizes what features (atoms, residues) a model uses for prediction, revealing over-reliance on biased patterns. | Captum (for PyTorch), SHAP. |
| Stratified Sampling Scripts | Ensures protein families and ligand scaffolds are proportionally represented in train/validation/test splits. | Custom Python scripts using scikit-learn StratifiedKFold. |
| Bias Audit Dashboard Template | A template (e.g., Jupyter Notebook) to automatically generate bias reports from input datasets. | Community templates on GitHub (e.g., DTI-Bias-Audit). |


Troubleshooting Guide & FAQs

Q1: My designed filter kernel shows high validation error despite low training error. Is this a sign of overfitting, and how can I adjust my kernel size to address this? A: This pattern is characteristic of high-variance overfitting. The filter is too complex (kernel too large) for the data, capturing noise. To resolve:

- Systematically reduce the kernel size (e.g., from 9x9 to 5x5, then 3x3).

- Implement k-fold cross-validation for each size.

- As you shrink the kernel, validation error should fall at first. Select the size at which validation error is lowest; below that size, training and validation error both rise because the kernel is too small to capture the signal (underfitting). Increasing your training dataset size can also allow for the use of a slightly larger, more expressive kernel without overfitting.

Q2: After applying a smoothing filter, my output signal appears overly blurred and key spatial features are lost. What is the likely cause and correction? A: This indicates high bias due to excessive smoothing, often from a kernel that is too large or has an inappropriate shape/weight distribution. This oversmoothing increases bias by making overly simplistic assumptions about the data.

- Primary Action: Reduce the kernel dimensions. Switch from a large Gaussian kernel to a smaller one.

- Protocol Adjustment: Conduct a bias-variance decomposition experiment. Calculate the mean squared error (MSE) across multiple dataset resamples for a range of kernel sizes. The optimal size minimizes the sum of squared bias and variance.

- Alternative: Consider a different filter class (e.g., a bilateral filter) that preserves edges while smoothing.

Q3: How do I quantitatively determine the optimal kernel size for my specific image dataset to balance bias and variance? A: Follow this experimental protocol for kernel size optimization:

- Define Metric: Select a primary evaluation metric (e.g., Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM)).

- Create Kernel Set: Generate a set of square kernels (e.g., 3x3, 5x5, 7x7, 9x9, 11x11) for your chosen filter type (e.g., Gaussian, Median).

- Cross-Validation: Split data into training, validation, and test sets. Use k-fold cross-validation on the training set.

- Measure & Plot: For each kernel size, calculate the average training and validation metric scores across folds.

- Identify Optimum: Plot kernel size vs. metric score. The optimal size is typically where the validation score peaks before decreasing, or where the gap between training and validation scores is acceptably small.

Q4: In convolutional neural networks (CNNs) for feature extraction, how does the depth of the network relate to the bias-variance tradeoff compared to the kernel size in a single layer? A: Both depth and kernel size control model complexity but at different scales. A single large kernel increases the receptive field dramatically in one layer, potentially leading to high variance if data is limited. Increasing network depth with small kernels (e.g., 3x3) builds a receptive field gradually, often leading to better generalization (lower variance) and more hierarchical feature learning. However, excessive depth can also lead to overfitting (high variance). The tradeoff must be managed jointly: for small datasets, prefer shallower networks with moderately sized kernels; for large datasets, deeper networks with small kernels are often optimal.
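The depth-versus-kernel trade-off can be made concrete with a little arithmetic. The sketch below assumes stride-1, undilated convolutions with equal input and output channel counts (64 here, an arbitrary choice) and ignores biases. Under those assumptions, three stacked 3x3 layers reach the same 7x7 receptive field as a single 7x7 layer with about 55% of the weights, and they add two extra nonlinearities along the way.

```python
def stacked_receptive_field(kernel_size: int, n_layers: int) -> int:
    """Theoretical receptive field (pixels per side) of n stride-1 convolutions."""
    return 1 + n_layers * (kernel_size - 1)


def conv_weights(kernel_size: int, channels: int, n_layers: int = 1) -> int:
    """Weight count for n conv layers with channels_in == channels_out (biases ignored)."""
    return n_layers * kernel_size * kernel_size * channels * channels


C = 64  # assumed channel width
for k, n in [(7, 1), (3, 3)]:
    print(f"{n} x ({k}x{k}) conv: RF = {stacked_receptive_field(k, n)} px, "
          f"weights = {conv_weights(k, C, n):,}")
```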

Table 1: Performance Metrics of Gaussian Filter Kernels on a Standard Microscopy Image Dataset (Cell Nuclei Detection)

| Kernel Size (px) | Mean Training PSNR (dB) | Mean Validation PSNR (dB) | Estimated Bias² (Relative) | Estimated Variance (Relative) | Recommended Use Case |
|---|---|---|---|---|---|
| 3x3 | 28.5 | 28.1 | High | Low | Preserving fine details, edge-sensitive tasks. |
| 5x5 | 30.2 | 29.9 | Medium | Medium | General-purpose denoising for mid-resolution features. |
| 7x7 | 31.0 | 30.1 | Low | Medium | Strong smoothing for high-noise environments, may blur edges. |
| 9x9 | 31.5 | 29.8 | Very Low | High | Likely overfitting; only for very low-frequency pattern extraction. |

Table 2: Cross-Validation Results for Median Filter Kernel Optimization

| Experiment ID | Kernel Size | Mean SSIM (Folds 1-4) | Std. Dev. SSIM | Final Test Set SSIM |
|---|---|---|---|---|
| M-EXP-01 | 3x3 | 0.912 | 0.015 | 0.908 |
| M-EXP-02 | 5x5 | 0.934 | 0.011 | 0.931 |
| M-EXP-03 | 7x7 | 0.928 | 0.019 | 0.920 |
| M-EXP-04 | 9x9 | 0.915 | 0.025 | 0.901 |

Detailed Experimental Protocols

Protocol A: Bias-Variance Decomposition for Filter Kernel Analysis

- Data Preparation: Obtain a master dataset D of N registered images. Create k bootstrap samples D_i from D, each containing N images sampled with replacement.

- Filter Training: For each candidate kernel size S, fit the filter on each bootstrap sample D_i while keeping other parameters fixed (e.g., the Gaussian sigma). This yields k filter models per size. The variance term is only informative when something is learned from D_i: a fixed, hand-set kernel applied to the same test images gives identical outputs for every i, and therefore zero variance.

- Error Calculation: For a hold-out test set T:
  - Prediction: For each bootstrap model i, apply the size-S filter to T, giving the output F_i(S).
  - Bias²: Compute the squared difference between the average prediction across all k models and the ideal target (e.g., clean ground truth), averaged over pixels: Bias²(S) = (mean_i F_i(S) - Target)².
  - Variance: Compute the spread of the k predictions around their mean, averaged over pixels: Variance(S) = mean_i (F_i(S) - mean_i F_i(S))².

- Total Error: Calculate MSE(S) = Bias²(S) + Variance(S) (plus an irreducible noise term if the target itself is noisy). Plot these components against S.
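Protocol A can be sketched end to end with a filter whose weights are actually learned, so that the variance term is non-zero. This is a minimal sketch under stated assumptions: 1D synthetic profiles stand in for registered images, and the "filter" is a length-S kernel fitted by least squares to map noisy windows onto the clean signal (a simple learned denoiser, not necessarily the filter used in the original study). The helper names are hypothetical.

```python
import numpy as np


def windows(x, S):
    """Rows are length-S neighbourhoods centred on each sample (reflect-padded, odd S)."""
    r = S // 2
    return np.lib.stride_tricks.sliding_window_view(np.pad(x, r, mode="reflect"), S)


def make_signals(n, length=256, noise=0.5, seed=0):
    """Hypothetical clean/noisy 1D profiles standing in for registered images."""
    rng = np.random.default_rng(seed)
    t = np.linspace(0.0, 1.0, length)
    clean = [np.sin(2 * np.pi * rng.uniform(1, 4) * t + rng.uniform(0, 2 * np.pi))
             for _ in range(n)]
    noisy = [c + rng.normal(0.0, noise, length) for c in clean]
    return clean, noisy


def bias_variance(S, train, test, k=20, seed=0):
    """Bootstrap bias^2 and variance of a least-squares-fitted size-S filter."""
    train_clean, train_noisy = train
    test_clean, test_noisy = test
    rng = np.random.default_rng(seed)
    N = len(train_clean)
    X_test = np.vstack([windows(x, S) for x in test_noisy])
    target = np.concatenate(test_clean)
    preds = []
    for _ in range(k):
        idx = rng.integers(0, N, size=N)             # D_i: N draws with replacement
        X = np.vstack([windows(train_noisy[j], S) for j in idx])
        y = np.concatenate([train_clean[j] for j in idx])
        w, *_ = np.linalg.lstsq(X, y, rcond=None)    # filter weights fitted to D_i
        preds.append(X_test @ w)                     # F_i(S) on the test set T
    preds = np.asarray(preds)                        # shape (k, n_test_samples)
    mean_pred = preds.mean(axis=0)
    bias2 = np.mean((mean_pred - target) ** 2)
    variance = np.mean((preds - mean_pred) ** 2)
    mse = np.mean((preds - target) ** 2)             # equals bias2 + variance
    return bias2, variance, mse


train = make_signals(10, seed=1)
test = make_signals(3, seed=2)
for S in (3, 7, 15, 31):
    b, v, m = bias_variance(S, train, test)
    print(f"S={S:2d}  bias^2={b:.4f}  variance={v:.4f}  MSE={m:.4f}")
```

Because the target here is the clean signal, the MSE splits exactly into the two terms; against noisy targets an additional noise floor would appear.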

Protocol B: K-Fold Cross-Validation for Optimal Kernel Size Selection

- Partition: Randomly shuffle your dataset and split it into k (e.g., 5) equal-sized folds.

- Iteration: For each kernel size S, for i = 1 to k:
  - Hold out fold i as the validation set.
  - Use the remaining k-1 folds as the training set.
  - If the filter has learnable weights, fit them on the training set; a fixed kernel (e.g., Gaussian or median) needs no fitting and is applied directly.
  - Apply the filter to the validation fold and compute the performance metric (e.g., SSIM).

- Aggregation: For size S, compute the average validation metric across all k folds. Select the kernel size with the highest average validation score.

- Final Test: Train a final filter with the selected size S_opt on the entire development dataset (all k folds) and evaluate it on a completely separate, held-out test set.
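A compact version of Protocol B for a fixed (non-learned) kernel is sketched below. Assumptions: synthetic piecewise-constant images with salt-and-pepper noise stand in for real data, `scipy.ndimage.median_filter` is the candidate filter, and PSNR replaces SSIM so the sketch needs only NumPy and SciPy. Since a median filter has no weights, the training folds are not used for fitting; with a learnable filter they would be. All helper names are hypothetical.

```python
import numpy as np
from scipy import ndimage


def psnr(pred, target, data_range=1.0):
    mse = np.mean((pred - target) ** 2)
    return 10.0 * np.log10(data_range ** 2 / mse)


def make_images(n, size=64, p_noise=0.1, seed=0):
    """Hypothetical piecewise-constant images corrupted by salt-and-pepper noise."""
    rng = np.random.default_rng(seed)
    clean, noisy = [], []
    for _ in range(n):
        img = np.zeros((size, size))
        for _ in range(4):                                  # a few bright rectangles
            y, x = rng.integers(0, size - 16, size=2)
            img[y:y + 16, x:x + 16] = rng.uniform(0.5, 1.0)
        corrupted = img.copy()
        mask = rng.random(img.shape) < p_noise
        corrupted[mask] = rng.integers(0, 2, size=mask.sum())  # pepper (0) or salt (1)
        clean.append(img)
        noisy.append(corrupted)
    return clean, noisy


def kfold_select(sizes, clean, noisy, k=5, seed=0):
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(clean)), k)
    scores = {}
    for S in sizes:
        fold_means = []
        for i in range(k):
            # Training folds (all folds except i) would fit learnable weights here.
            fold_means.append(np.mean([
                psnr(ndimage.median_filter(noisy[j], size=S), clean[j]) for j in folds[i]
            ]))
        scores[S] = float(np.mean(fold_means))
    best = max(scores, key=scores.get)                       # highest mean validation PSNR
    return best, scores


dev_clean, dev_noisy = make_images(20, seed=0)
best, scores = kfold_select([3, 5, 7, 9], dev_clean, dev_noisy)

# Final test on a separate, held-out set.
test_clean, test_noisy = make_images(10, seed=99)
test_psnr = np.mean([psnr(ndimage.median_filter(n, size=best), c)
                     for c, n in zip(test_clean, test_noisy)])
print(best, scores, round(float(test_psnr), 2))
```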

Visualizations

Kernel Size Optimization Workflow

Bias-Variance Tradeoff Curve

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Filter Design & Evaluation Experiments

| Item / Reagent | Function in Experiment | Key Consideration |
|---|---|---|
| Standardized Benchmark Dataset (e.g., BSD500, ImageNet Subset) | Provides a common ground for quantitative comparison of filter performance across different kernel sizes and types. | Ensure dataset relevance to your domain (e.g., fluorescence microscopy, histological slides). |
| Ground Truth Annotations | Serves as the target output for bias calculation and error measurement. Essential for supervised learning of filter parameters. | Quality and accuracy of annotations are critical; manual verification is recommended. |
| Computational Framework (e.g., Python with SciPy/OpenCV, MATLAB Image Processing Toolbox) | Provides implemented, optimized functions for applying convolutions with various kernel sizes and shapes. | Choose a framework with GPU acceleration for large-scale experiments with many images/kernel sizes. |
| Cross-Validation Pipeline Script | Automates the process of data splitting, training, validation, and metric aggregation for robust kernel size selection. | Should output clear logs and plots of training/validation curves for each kernel size. |
| High-Performance Computing (HPC) Cluster or GPU Access | Enables the computationally intensive process of testing many kernel sizes across large datasets and multiple cross-validation folds. | Cloud-based solutions (AWS, GCP) offer scalable alternatives to physical clusters. |
| Metric Calculation Library (e.g., for PSNR, SSIM, MSE) | Provides standardized, error-free implementations of performance metrics for consistent evaluation. | Verify that the library's implementation matches the mathematical definition used in your thesis. |

A Practical Framework for Kernel Size Selection to Target Specific Biases

Troubleshooting Guide & FAQs

Q1: During diagnostic profiling, our assay shows high background noise, obscuring the spatial signal pattern. What are the primary causes and solutions?

A: High background noise is often due to non-specific binding or suboptimal filter kernel pre-processing.

- Solution A: Increase the stringency of your wash buffers. For immunohistochemistry or fluorescence-based spatial assays, add 0.1% Tween-20 and increase the salt concentration (e.g., to 500 mM NaCl) in your wash buffer.

- Solution B: Re-evaluate your filter kernel size. A smoothing kernel that is too small (e.g., 3x3) averages over too few pixels to suppress pixel-level noise, and small derivative kernels (e.g., Sobel) amplify it. Begin with a 7x7 median filter to smooth micro-noise before applying your primary feature-detection kernel.

- Protocol (Noise Reduction Pre-Processing): 1) Apply a Gaussian blur (σ=2) to the raw image. 2) Apply a 7x7 median filter. 3) Proceed with your primary analysis kernel (e.g., Sobel, Gabor).
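The three pre-processing steps map directly onto `scipy.ndimage` calls. This is a minimal sketch that assumes a single-channel 2D image and uses Sobel gradient magnitude as the "primary analysis kernel"; a Gabor or LoG kernel could be substituted in step 3. The function name and the synthetic step-edge test image are hypothetical.

```python
import numpy as np
from scipy import ndimage


def preprocess_then_sobel(raw, sigma=2.0, median_size=7):
    """Gaussian blur -> 7x7 median filter -> Sobel gradient magnitude."""
    img = np.asarray(raw, dtype=float)
    smoothed = ndimage.gaussian_filter(img, sigma=sigma)           # step 1
    denoised = ndimage.median_filter(smoothed, size=median_size)   # step 2
    gx = ndimage.sobel(denoised, axis=1)                           # step 3: primary kernel
    gy = ndimage.sobel(denoised, axis=0)
    return np.hypot(gx, gy)


# Synthetic vertical step edge at column 32 with additive Gaussian noise.
rng = np.random.default_rng(0)
raw = np.zeros((64, 64))
raw[:, 32:] = 1.0
raw += rng.normal(0.0, 0.2, raw.shape)
edges = preprocess_then_sobel(raw)
print(edges[:, 30:34].mean(), edges[:, 5:15].mean())  # edge vs. flat region
```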

Q2: When analyzing sequential data (e.g., time-series dose-response), our diagnostic algorithm fails to distinguish between a true sustained response and a sequential sampling bias. How can we validate the pattern?

A: This indicates potential confusion between temporal bias and true pharmacology. Implement a shuffling control.

- Solution: Generate 1000 temporally shuffled versions of your dataset. Re-run your diagnostic profile. If the "signature" appears in >5% of shuffled datasets, it is likely an artifact of sequence, not biology.

- Protocol (Shuffling Control): 1) Use a script (Python/R) to randomize the time order of your data points while preserving their values. 2) Recalculate your moving average or sequential kernel on each shuffled series. 3) Compare your experimental result with the distribution of shuffled outcomes, e.g., with a Z-test or an empirical p-value (the fraction of shuffles at least as extreme as the observed result).
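A minimal sketch of the shuffling control follows. The "signature" statistic is an assumption chosen for illustration: the highest moving-average level, which is large for a sustained response and is destroyed by shuffling, while shuffling preserves the set of values. The function reports the fraction of shuffles at least as extreme (for the 5% rule above), an empirical p-value, and a Z-score against the shuffled distribution. Names and the synthetic trace are hypothetical.

```python
import numpy as np


def max_moving_average(x, window):
    """Signature statistic (assumed): highest moving-average level."""
    return np.convolve(x, np.ones(window) / window, mode="valid").max()


def shuffling_control(x, window=10, n_shuffles=1000, seed=0):
    rng = np.random.default_rng(seed)
    observed = max_moving_average(x, window)
    null = np.array([max_moving_average(rng.permutation(x), window)   # values kept, order lost
                     for _ in range(n_shuffles)])
    frac_as_extreme = np.mean(null >= observed)                       # compare with 5% rule
    p_empirical = (np.sum(null >= observed) + 1) / (n_shuffles + 1)
    z = (observed - null.mean()) / null.std(ddof=1)                   # Z-score vs. shuffles
    return observed, frac_as_extreme, p_empirical, z


# Synthetic sustained response: baseline, 40-sample plateau, baseline.
rng = np.random.default_rng(1)
trace = np.concatenate([np.zeros(50), np.ones(40), np.zeros(30)])
trace += rng.normal(0.0, 0.2, trace.size)
obs, frac, p, z = shuffling_control(trace)
print(f"observed={obs:.2f} frac={frac:.3f} p={p:.4f} z={z:.1f}")
```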

Q3: The chosen filter kernel size seems to either miss focal signal clusters (if too large) or over-fragment them (if too small). Is there a systematic method to determine the optimal size?

A: Yes. This is the core optimization problem. Perform a kernel size sweep and calculate the Signal-to-Noise Ratio (SNR) and Cluster Integrity Index (CII) for each output.

- Protocol (Kernel Size Optimization):

- Input: Raw 2D spatial data (e.g., confocal image, protein array).

- Sweep: Apply the same kernel type (e.g., Laplacian of Gaussian) with sizes from 3x3 to 15x15 in steps of 2.

- Metric Calculation:

- SNR: (Mean Signal Intensity in ROIs) / (Std. Dev. of Background)

- CII: (Number of Valid Clusters Identified) / (Total Clusters Identified + Fragmented Artefacts)

- Output: The kernel size yielding the highest (SNR * CII) product is optimal for that specific bias signature.
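The sweep can be sketched with `scipy.ndimage.gaussian_laplace`. Several choices here are assumptions, not part of the protocol: the mapping from nominal size k to LoG sigma (sigma = (k-1)/6 with `truncate=3`, which makes SciPy's kernel radius exactly (k-1)/2), the detection threshold (mean + 3 SD of the response), and the operational CII (valid = detected components overlapping exactly one true cluster; fragments = extra components per true cluster). A synthetic image with known clusters stands in for real data.

```python
import numpy as np
from scipy import ndimage


def log_response(img, k):
    """Negated LoG so bright clusters give positive peaks (hypothetical k -> sigma map)."""
    return -ndimage.gaussian_laplace(img, sigma=(k - 1) / 6.0, truncate=3.0)


def cluster_integrity(detected, truth_labels):
    """Hypothetical CII = valid / (identified + fragmented artefacts)."""
    det_labels, n_det = ndimage.label(detected)
    if n_det == 0:
        return 0.0
    valid = 0
    for d in range(1, n_det + 1):
        touched = np.unique(truth_labels[(det_labels == d) & (truth_labels > 0)])
        valid += int(touched.size == 1)            # overlaps exactly one true cluster
    fragments = 0
    for t in range(1, truth_labels.max() + 1):
        pieces = np.unique(det_labels[(truth_labels == t) & (det_labels > 0)])
        fragments += max(0, pieces.size - 1)       # extra pieces of one cluster
    return valid / (n_det + fragments)


def kernel_sweep(img, truth_labels, sizes=range(3, 17, 2)):
    roi = truth_labels > 0
    results = {}
    for k in sizes:                                # 3x3 ... 15x15 in steps of 2
        resp = log_response(img, k)
        snr = resp[roi].mean() / resp[~roi].std()
        detected = resp > resp.mean() + 3.0 * resp.std()   # assumed threshold
        cii = cluster_integrity(detected, truth_labels)
        results[k] = (snr, cii, snr * cii)
    best = max(results, key=lambda k: results[k][2])      # highest SNR * CII
    return best, results


# Synthetic image: eight Gaussian clusters (sigma = 3 px) plus noise.
rng = np.random.default_rng(0)
yy, xx = np.mgrid[:128, :128]
img = np.zeros((128, 128))
truth = np.zeros((128, 128), dtype=bool)
for cy, cx in [(20, 20), (20, 64), (20, 108), (64, 20),
               (64, 108), (108, 20), (108, 64), (108, 108)]:
    r2 = (yy - cy) ** 2 + (xx - cx) ** 2
    img += np.exp(-r2 / (2 * 3.0 ** 2))
    truth |= r2 <= 16
img += rng.normal(0.0, 0.1, img.shape)
truth_labels, _ = ndimage.label(truth)

best, results = kernel_sweep(img, truth_labels)
for k, (snr, cii, score) in results.items():
    print(f"{k:2d}x{k:<2d} SNR={snr:6.2f} CII={cii:.2f} SNR*CII={score:6.2f}")
print("best:", best)
```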

Q4: In live-cell imaging for sequential bias, photobleaching introduces a confounding temporal decay signature. How is this corrected during diagnostic profiling?

A: Photobleaching must be corrected prior to bias signature analysis to avoid misidentification.

- Solution: Apply a background fluorescence decay model. A double-exponential fit to control region fluorescence over time is standard.

- Protocol (Photobleaching Correction): 1) Define a cell-free background region in each frame. 2) Fit the background intensity over time to the function I(t) = A·exp(-t/τ1) + B·exp(-t/τ2) + C. 3) Normalize all signal intensities in the experimental ROI by this fitted decay function.
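The double-exponential correction can be sketched with `scipy.optimize.curve_fit`. Assumptions: the background trace and ROI trace share the same time base, the fitted decay is normalized to 1 at the first frame before dividing (one reasonable convention; the protocol does not specify it), and the initial guesses and positivity bounds are heuristics. The synthetic parameters are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit


def double_exp(t, A, tau1, B, tau2, C):
    return A * np.exp(-t / tau1) + B * np.exp(-t / tau2) + C


def bleach_correct(t, background, signal):
    """Fit the background decay, then divide the ROI signal by it (scaled to 1 at t[0])."""
    span = background.max() - background.min()
    duration = t[-1] - t[0]
    p0 = (span / 2, duration / 10, span / 2, duration / 2, background.min())  # heuristic guesses
    lower = (0.0, 1e-3, 0.0, 1e-3, 0.0)            # keep amplitudes and time constants positive
    params, _ = curve_fit(double_exp, t, background, p0=p0, bounds=(lower, np.inf))
    decay = double_exp(t, *params)
    return signal / (decay / decay[0]), params


# Synthetic data: a constant true ROI signal of 500 bleaching with the background.
rng = np.random.default_rng(0)
t = np.arange(0.0, 600.0, 2.0)
true_decay = double_exp(t, 50, 20, 30, 200, 100)
background = true_decay + rng.normal(0.0, 0.2, t.size)
roi = 500 * true_decay / true_decay[0] + rng.normal(0.0, 1.0, t.size)
corrected, params = bleach_correct(t, background, roi)
print(round(float(corrected.mean()), 1), round(float(corrected.std()), 2))
```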

Table 1: Performance of Filter Kernels on Common Bias Signatures

| Bias Signature Type | Recommended Kernel Type | Optimal Starting Kernel Size (px) | Typical SNR Improvement* | Cluster Integrity Index Range* |
|---|---|---|---|---|
| Focal Clustered (Spatial) | Laplacian of Gaussian (LoG) | 9x9 | 2.5 - 3.2 | 0.85 - 0.92 |
| Diffuse Gradient (Spatial) | Sobel (Directional) | 5x5 | 1.8 - 2.1 | 0.70 - 0.80 |
| Oscillatory (Sequential) | Hanning Window (1D) | 7-point | 3.0 - 4.0 | N/A |
| Sustained Shift (Sequential) | Moving Average | 11-point | 2.2 - 2.8 | N/A |
| Random Spatial Noise | Median Filter | 7x7 | 1.5 - 1.8 | 0.60 - 0.75 |

*SNR and CII values are relative to unfiltered data. Actual results depend on image quality and signal strength.

Table 2: Diagnostic Profiling Workflow Output Metrics

| Profiling Stage | Key Metric | Target Value | Interpretation |
|---|---|---|---|
| Pre-Processing | Background Uniformity (Std. Dev.) | < 10% of Max Signal | Acceptable for profiling. |
| Kernel Application | Edge Sharpness (Sobel Gradient) | > 50 units (8-bit scale) | Sufficient feature definition. |
| Signature ID | Cross-Correlation with Reference | Coefficient > 0.7 | Strong signature match. |
| Validation | Shuffling Test P-Value | < 0.05 | Signature is non-random. |

Experimental Protocols

Protocol 1: Spatial Signature Profiling for Membrane Receptor Clustering Objective: To identify if a drug treatment induces a focal clustered spatial bias in receptor labeling. Methodology:

- Cell Preparation: Plate cells on glass-bottom dishes. Treat with compound or vehicle control for specified time.

- Staining: Fix, permeabilize, and stain target receptor with primary antibody and Alexa Fluor 555-conjugated secondary.

- Imaging: Acquire 10-20 high-resolution (63x oil) confocal Z-stacks per condition, ensuring non-saturating pixels.

- Pre-Processing: Maximum intensity projection. Apply background subtraction (rolling ball radius=50px) and 7x7 median filter.

- Kernel Application: Convolve each image with a range of LoG kernels (sizes 5x5 to 13x13). Threshold output to identify candidate clusters.

- Analysis: For each kernel size, calculate the average cluster size, density, and SNR. Plot metrics vs. kernel size to find the inflection point of optimal clarity.

Protocol 2: Sequential Bias Profiling in Calcium Flux Time-Series Objective: To diagnose if an observed oscillatory response is a true signaling pattern or an artifact of sequential sampling. Methodology:

- Data Acquisition: Load cells with a fluorescent calcium indicator (e.g., Fluo-4). Record fluorescence (excitation 488 nm) at 2-second intervals for 10 minutes after agonist addition (300 frames).

- Raw Trace Extraction: Define ROIs for individual cells. Extract F(t) for each cell.

- Detrending: Fit a linear or double-exponential model to F(t) to remove baseline drift and photobleaching. Work with ΔF/F0.

- Windowed Analysis: Estimate the power spectral density of each trace using a Hann (Hanning) window, sweeping window lengths from 5 to 15 points.

- Signature Detection: Identify peaks in the 0.01-0.1 Hz frequency band. A true oscillatory bias signature will show a sharp peak that is consistent across >60% of cells in a treatment group.

- Validation: Perform the shuffling control (see FAQ Q2) on the time-index of the traces to confirm the oscillation is not random.
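The detrending and windowed spectral steps can be sketched with `scipy.signal.welch`. Assumptions: F0 is taken as the median of the trace (the protocol does not define the baseline), drift is removed with a linear detrend, and the summary returned per trace is the peak frequency inside the band plus the fraction of power in that band. Note the arithmetic at 2-s sampling (0.5 Hz): an n-point window has frequency bins spaced 0.5/n Hz, so a 15-point window gives about 0.033 Hz spacing and only a few bins fall inside 0.01-0.1 Hz; the usage below therefore also tries a 60-point window.

```python
import numpy as np
from scipy import signal

FS = 0.5  # Hz: one frame every 2 s


def oscillation_peak(trace, nperseg, band=(0.01, 0.1), fs=FS):
    """Return (peak frequency in band, fraction of PSD power in band) for one trace."""
    f0 = np.median(trace)                                      # assumed baseline F0
    dff = signal.detrend((trace - f0) / f0, type="linear")     # dF/F0 with drift removed
    freqs, psd = signal.welch(dff, fs=fs, window="hann", nperseg=nperseg)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    if not in_band.any():
        raise ValueError(f"no frequency bins inside {band} Hz for nperseg={nperseg}")
    peak = freqs[in_band][np.argmax(psd[in_band])]
    return peak, psd[in_band].sum() / psd.sum()


# Synthetic trace: 0.05 Hz oscillation on a drifting baseline, 10 min at 2-s intervals.
t = np.arange(300) / FS
rng = np.random.default_rng(0)
trace = 100 + 0.02 * t + 10 * np.sin(2 * np.pi * 0.05 * t) + rng.normal(0, 1, t.size)
for n in (15, 60):
    print(n, oscillation_peak(trace, nperseg=n))
```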

Diagrams

Diagram 1: Spatial Bias Diagnostic Workflow

Diagram 2: Sequential Profiling Validation Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Diagnostic Profiling Experiments

| Item | Function in Profiling | Example Product/Catalog # |
|---|---|---|
| High-Affinity, Validated Primary Antibodies | Minimizes non-specific background for clear spatial signal detection. | Cell Signaling Technology, Monoclonal, Phospho-Specific. |
| Low-Autofluorescence Mounting Medium | Preserves signal and reduces background noise in fixed spatial assays. | ProLong Diamond Antifade Mountant (P36965). |
| Genetically-Encoded Calcium Indicator (GECI) | Enables long-duration, sequential live-cell imaging with minimal dye leakage. | GCaMP6f (AAV expression). |
| Cell Culture Plates with Glass Bottoms | Provides optimal optical clarity for high-resolution spatial imaging. | MatTek P35G-1.5-14-C. |
| Automated Liquid Handler with Time-Stamp | Ensures precise sequential addition of agonists for temporal bias studies. | Integra Viaflo 96. |
| Software Library for Image Convolution | Allows flexible application and sweeping of custom filter kernels. | Python: SciPy NDImage; MATLAB: Image Processing Toolbox. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My convolutional neural network (CNN) for microscopy image analysis is failing to distinguish subtle, localized drug-induced cellular stress granules from background noise. I am using a kernel size of 7x7. What is the likely issue and how can I troubleshoot it? A1: The 7x7 kernel is likely too large for this localized pattern detection. A large kernel integrates information over a broad area, diluting small, high-frequency features (like stress granules) with surrounding context and noise.

- Troubleshooting Steps:

- Reduce Kernel Size: Switch to a smaller kernel (e.g., 3x3). This increases focus on local pixel neighborhoods.

- Stack Layers: Compensate for the reduced receptive field by adding more convolutional layers. This maintains the network's ability to learn hierarchical features while preserving local detail.

- Validate: Compare the signal-to-noise ratio (SNR) within annotated granule regions against the background before and after the change. A successful fix should also improve overlap with the annotations (e.g., IoU) on your validation set.

Q2: When analyzing whole-slide histopathology images for global tissue architecture patterns (e.g., tumor stroma interaction), my model with 3x3 kernels performs poorly. It seems fragmented and lacks spatial coherence. What should I do? A2: The 3x3 kernels are providing an overly localized view, failing to capture the long-range spatial dependencies needed for global context.

- Troubleshooting Steps:

- Increase Kernel Size: Implement a larger kernel (e.g., 9x9, 11x11) in early or middle network layers to immediately capture a wider field of view.

- Alternative Architectures: Incorporate dilated/atrous convolutions to increase receptive field without increasing parameters, or add a self-attention mechanism to model direct long-range interactions.

- Validate: Monitor global metrics like whole-slide classification accuracy or region-level Dice score. The output segmentation map should show more structurally coherent regions.
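Why dilation widens the field of view without adding parameters can be shown by building the equivalent dense kernel. This sketch assumes a single 2D kernel and inserts (dilation - 1) zeros between taps, which gives an effective size of k + (k - 1)(d - 1); a 3x3 kernel with dilation 2 covers a 5x5 footprint with the same 9 weights. Deep-learning frameworks implement dilation directly (e.g., the `dilation` argument of PyTorch's `torch.nn.Conv2d`) rather than materializing the zeros.

```python
import numpy as np
from scipy import ndimage


def dilate_kernel(kernel, dilation):
    """Insert (dilation - 1) zeros between taps: same weights, wider footprint."""
    k = kernel.shape[0]
    size = k + (k - 1) * (dilation - 1)
    out = np.zeros((size, size), dtype=kernel.dtype)
    out[::dilation, ::dilation] = kernel
    return out


w = np.arange(1.0, 10.0).reshape(3, 3)   # 9 weights
w_d2 = dilate_kernel(w, 2)              # 5x5 footprint, still 9 non-zero weights
print(w_d2.shape, np.count_nonzero(w_d2))

img = np.random.default_rng(0).random((32, 32))
response = ndimage.convolve(img, w_d2, mode="reflect")
```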

Q3: How do I quantitatively decide between a small or large kernel size for a new dataset in my bias pattern research? A3: Perform a kernel size ablation study, measuring task-specific performance against the effective receptive field (ERF).

- Experimental Protocol:

- Baseline Model: Design a simple CNN backbone (e.g., 5 convolutional blocks).

- Variable: Create model variants where the kernel size in all convolutional layers is systematically changed (e.g., 3, 5, 7, 9, 11).

- Metrics: Train each variant on your dataset and record: (a) Primary Task Metric (e.g., Accuracy, F1-Score), (b) Computational Cost (FLOPs), (c) Parameter Count.

- ERF Analysis: Use an ERF visualization technique (e.g., the gradient of a central output unit with respect to the input image, or guided backpropagation) on a sample image to see which image area each kernel size actually influences.
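The gap between theoretical and effective receptive field can be illustrated without a trained network. This sketch rests on a simplifying assumption: a purely linear stack of stride-1 uniform k x k kernels, for which the impulse response equals (up to a flip, irrelevant for symmetric kernels) the gradient of the centre output with respect to the input. Real networks with nonlinearities and learned weights should be measured directly, as in the ERF Analysis step. Ten 3x3 layers have a theoretical 21x21 receptive field, yet 95% of the influence falls within a much smaller central square.

```python
import numpy as np
from scipy import signal


def linear_erf(kernel_size, n_layers):
    """Impulse response of n stacked, stride-1 uniform k x k convolutions."""
    k = np.ones((kernel_size, kernel_size)) / kernel_size ** 2
    erf = np.ones((1, 1))
    for _ in range(n_layers):
        erf = signal.convolve2d(erf, k)   # 'full' mode grows the support by k - 1
    return erf


def radius_holding(erf, frac=0.95):
    """Smallest square half-width around the centre that holds `frac` of the mass."""
    c = erf.shape[0] // 2
    yy, xx = np.indices(erf.shape)
    cheb = np.maximum(np.abs(yy - c), np.abs(xx - c))
    total = erf.sum()
    for r in range(c + 1):
        if erf[cheb <= r].sum() >= frac * total:
            return r
    return c


erf = linear_erf(3, 10)
print("theoretical RF:", erf.shape[0], "px; 95% ERF half-width:", radius_holding(erf))
```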

Q4: I'm concerned about overfitting and computational cost when using large kernels. Are there best practices? A4: Yes. Large kernels increase parameters and risk of overfitting to training set specifics.

- Mitigation Protocol:

- Regularization: Apply stronger L2 weight decay and Dropout layers immediately after large-kernel convolutions.

- Depthwise Separation: For very large kernels, consider using depthwise separable convolutions. This factorizes the operation, drastically reducing parameters and computation.

- Staged Training: First, pretrain your model on a larger, related dataset. Then, fine-tune using your specific experimental data with the large-kernel architecture.
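The saving from depthwise separation is easy to quantify. This sketch counts weights only (biases ignored) for a 2D layer with 64 input and 64 output channels, an arbitrary example width; multiply-accumulate operations per output pixel scale by the same factors. The gain grows with kernel size because the depthwise part scales with k² · C_in while the pointwise part does not depend on k.

```python
def conv2d_weights(k, c_in, c_out):
    """Standard k x k convolution (biases ignored)."""
    return k * k * c_in * c_out


def separable_weights(k, c_in, c_out):
    """Depthwise k x k (one filter per input channel) plus pointwise 1 x 1."""
    return k * k * c_in + c_in * c_out


for k in (3, 7, 11):
    std, sep = conv2d_weights(k, 64, 64), separable_weights(k, 64, 64)
    print(f"{k}x{k}: standard={std:,}  separable={sep:,}  ratio={std / sep:.1f}x")
```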

Table 1: Performance Comparison of Kernel Sizes on Benchmark Tasks

| Kernel Size | Pattern Type (Bias) | Dataset (Example) | Top-1 Accuracy (%) | Parameter Count (M) | GFLOPs | Best For |
|---|---|---|---|---|---|---|
| 3x3 | Local Noise (Punctate) | Protein Granule Microscopy | 94.2 | 1.2 | 0.8 | High-frequency, localized features |
| 7x7 | Mixed Context | Cellular Organelle Segmentation | 91.5 | 3.7 | 2.5 | Mid-range structures |
| 11x11 | Global Context (Tissue) | Histopathology (Camelyon16) | 88.7 | 12.4 | 8.9 | Long-range spatial dependencies |
| 5x5 + Dilation 2 | Global Context | Histopathology (Camelyon16) | 88.1 | 4.1 | 3.2 | Global context with parameter efficiency |

Table 2: Kernel Size Ablation Study Protocol Summary

| Step | Action | Measurement | Decision Point |
|---|---|---|---|
| 1 | Profile Feature Scale | Calculate auto-covariance or use Fourier analysis on training patches. | If >70% signal power is in high-freq bands, start with small kernels (<5x5). |
| 2 | Baseline Training | Train models with kernel sizes {3,5,7,9,11} for fixed epochs. | Plot validation accuracy vs. kernel size. Identify performance plateau/knee. |
| 3 | ERF Visualization | Generate heatmaps showing which input pixels affect a key output pixel. | Select the smallest kernel size whose ERF adequately covers your target pattern. |
| 4 | Efficiency Check | Compare GFLOPs and params of top 3 performers. | Choose model with best accuracy-efficiency trade-off for your hardware. |
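Step 1's Fourier check can be sketched in a few lines of NumPy. The table does not define "high-frequency bands", so the cutoff of 0.25 cycles/pixel (half the Nyquist frequency) used here is an assumption, as is removing the patch mean so the DC term does not dominate. White noise, a stand-in for punctate texture, puts roughly 80% of its power above that cutoff, whereas a heavily smoothed patch puts almost none there.

```python
import numpy as np
from scipy import ndimage


def high_freq_power_fraction(patch, cutoff=0.25):
    """Fraction of mean-removed spectral power above `cutoff` cycles/pixel (assumed)."""
    p = np.asarray(patch, dtype=float)
    p = p - p.mean()
    power = np.abs(np.fft.fft2(p)) ** 2
    fy = np.fft.fftfreq(p.shape[0])[:, None]
    fx = np.fft.fftfreq(p.shape[1])[None, :]
    radial = np.hypot(fx, fy)                     # radial frequency of each FFT bin
    return power[radial > cutoff].sum() / power.sum()


rng = np.random.default_rng(0)
noise = rng.normal(size=(128, 128))               # punctate / high-frequency stand-in
smooth = ndimage.gaussian_filter(noise, sigma=5)  # low-frequency stand-in
for name, patch in [("punctate", noise), ("smooth", smooth)]:
    frac = high_freq_power_fraction(patch)
    hint = "start with small kernels" if frac > 0.7 else "consider larger kernels"
    print(f"{name}: {frac:.2f} -> {hint}")
```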

Experimental Protocol: Kernel Size Efficacy in Detecting Drug-induced Cytoskeletal Bias

Objective: To determine the optimal convolutional kernel size for quantifying drug-induced alterations in the global alignment of cytoskeletal fibers (a global context bias) versus local disruption of fiber integrity (a local noise bias).

Materials: See "The Scientist's Toolkit" below. Methodology:

- Image Acquisition & Preprocessing: Acquire confocal microscopy images (n=100 fields) of F-actin (phalloidin stain) from treated and control cell populations. Apply flat-field correction and normalize intensity.

- Ground Truth Annotation: Manually annotate two distinct labels: (i) Global Fiber Orientation (per-cell vector), (ii) Local Discontinuity Points (pixel-level masks).

- Model Architecture Variants: Construct a U-Net style model with an encoder-decoder structure. The experimental variable is the kernel size in the first two encoder blocks only (K=3, K=7, K=11). All other layers use 3x3 kernels.

- Training: Train three model variants (one per kernel size) using:

  - Loss Function: A composite loss, L = α * L_orientation(MSE) + β * L_discontinuity(Dice).
  - Optimizer: AdamW (lr=1e-4, weight_decay=1e-5).
  - Batch Size: 16. Train for 200 epochs.

- Evaluation:

- Global Task: Measure the correlation (R²) between predicted per-cell fiber orientation vector and ground truth.

- Local Task: Calculate the Intersection-over-Union (IoU) for discontinuity point segmentation.

- Compute: Record inference time (ms/image) and GPU memory footprint.
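The composite loss used in training can be written out explicitly. This NumPy sketch is framework-agnostic (the same expressions translate line by line to PyTorch tensors) and assumes a soft Dice loss on predicted probabilities with a small smoothing constant `eps`. The protocol does not give values for α and β, so the defaults of 1.0 are placeholders.

```python
import numpy as np


def soft_dice_loss(pred, target, eps=1e-6):
    """1 - soft Dice between predicted probabilities and a binary mask."""
    inter = np.sum(pred * target)
    return 1.0 - (2.0 * inter + eps) / (np.sum(pred) + np.sum(target) + eps)


def composite_loss(orient_pred, orient_true, disc_prob, disc_mask, alpha=1.0, beta=1.0):
    l_orientation = np.mean((orient_pred - orient_true) ** 2)   # MSE on per-cell vectors
    l_discontinuity = soft_dice_loss(disc_prob, disc_mask)       # Dice on pixel masks
    return alpha * l_orientation + beta * l_discontinuity


rng = np.random.default_rng(0)
vec_true = rng.normal(size=(20, 2))                   # per-cell orientation vectors
mask = (rng.random((64, 64)) > 0.95).astype(float)   # sparse discontinuity points
print(composite_loss(vec_true, vec_true, mask, mask))             # perfect prediction
print(composite_loss(vec_true + 0.1, vec_true, 0.5 * mask, mask))  # imperfect prediction
```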

Visualizations

Title: Kernel Size Selection Logic Flow

Title: Kernel Size Optimization Experimental Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Kernel Size Research | Example/Specification |
|---|---|---|
| High-Resolution Imaging Dataset | Provides the raw signal on which kernel operations are performed. Essential for benchmarking. | e.g., ImageDataHub: IDR-0087 (Punctate protein granules) or Camelyon17 (Whole-slide histopathology). |
| Deep Learning Framework | Enables the flexible definition and training of models with customizable kernel sizes. | PyTorch (torch.nn.Conv2d) or TensorFlow (tf.keras.layers.Conv2D). |
| Effective Receptive Field (ERF) Visualization Tool | Diagnoses the actual spatial influence of a network kernel, which is often smaller than the theoretical RF. | Custom script using guided backpropagation or the tf-explain library. |
| GPU Computing Resource | Necessary for training models with large kernels and batch sizes within a reasonable time. | NVIDIA GPU with >=11GB VRAM (e.g., RTX 3080 Ti, RTX 4080, or V100). |
| Performance Metrics Suite | Quantifies the impact of kernel size choice on the specific research task. | Includes: IoU (segmentation), Accuracy (classification), R² (regression), and timing/profiling tools. |
| Regularization Modules | Mitigates overfitting induced by the high parameter count of large kernels. | Weight Decay (L2), Dropout, SpatialDropout, and Stochastic Depth layers. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: My 1D kernel applied to molecular SMILES strings is failing to capture long-range dependencies in the sequence. What adjustments can I make? A: This is a common issue with positional encoding limitations. First, verify your model's maximum context length. For sequences exceeding this length, consider implementing a sliding window approach with overlap. Alternatively, integrate a learnable relative positional encoding (e.g., Rotary Position Embedding) to better handle variable-length molecular sequences. Ensure your tokenization aligns with functional groups, not just single characters.
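A first step toward chemically meaningful tokens is to stop splitting multi-character atoms. The sketch below uses a widely used atom-level SMILES regular expression (bracket atoms, Br/Cl, aromatic atoms, bonds, branches, ring closures including %NN) and rejects strings it cannot fully cover. It is swapped in for illustration; it is not a functional-group tokenizer, which would build on top of these atom tokens.

```python
import re

# Atom-level SMILES pattern: bracket atoms, two-letter halogens, organic-subset
# and aromatic atoms, bonds, branches, and ring-closure digits (incl. %NN).
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles: str) -> list[str]:
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:   # every character must belong to some token
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


print(tokenize("CC(=O)Cl"))
print(tokenize("c1ccccc1[N+](=O)[O-]"))
```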

Q2: When integrating pre-trained 2D image kernels (e.g., from ResNet) into a model for microscopy images of cell tissue, the features seem overly generic. How can I specialize them? A: This indicates a domain shift problem. Do not use the pre-trained backbone as a fixed feature extractor. Implement a two-phase training protocol:

- Warm-up: Allow only the final classification/regression layers to learn for 3-5 epochs.

- Fine-tuning: Unfreeze the last two convolutional blocks of the pre-trained backbone and train with a very low learning rate (1e-5 to 1e-4), using your target dataset. This adapts high-level features while preserving general edge/texture knowledge.

Q3: My 3D volumetric kernel model for molecular docking scores consumes excessive GPU memory and crashes. What are my options for optimization? A: You have several actionable steps:

- Kernel Size Reduction: Start with a smaller 3x3x3 kernel and increase network depth instead of width.

- Subvolume Sampling: Instead of processing the entire 3D grid, implement a targeted sampling strategy around the putative binding pocket.

- Mixed-Precision Training: Use AMP (Automatic Mixed Precision) to reduce memory footprint by ~50%.

- Gradient Accumulation: If batch size must be 1, accumulate gradients over 4-8 steps before updating weights to simulate a larger batch.
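Gradient accumulation works because, for a mean-reduced loss, averaging the gradients of equal-sized micro-batches reproduces the full-batch gradient. The NumPy sketch below checks this on a linear model with a squared-error loss (an assumption chosen for transparency): four micro-batches of 8, each scaled by 1/4, give the same gradient as one batch of 32. In PyTorch the same idea is usually expressed as dividing each micro-batch loss by the number of accumulation steps before `backward()` and calling the optimizer step once per group.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(32, 5))
y = rng.normal(size=32)
w = np.zeros(5)


def mse_grad(w, Xb, yb):
    """Gradient of 0.5 * mean((Xb @ w - yb)**2) with respect to w."""
    return Xb.T @ (Xb @ w - yb) / len(yb)


full_grad = mse_grad(w, X, y)                     # one batch of 32

accum_steps = 4
accum = np.zeros_like(w)
for Xb, yb in zip(np.array_split(X, accum_steps), np.array_split(y, accum_steps)):
    accum += mse_grad(w, Xb, yb) / accum_steps    # scale each micro-batch contribution
print(np.allclose(full_grad, accum))              # True
```

The equivalence assumes equal-sized micro-batches and no batch-dependent layers; BatchNorm statistics, for example, are still computed per micro-batch.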

Q4: For time-series sensor data from high-throughput screening, my 1D CNN is overfitting despite using dropout. What else can I try? A: Overfitting in 1D temporal data often stems from redundant, high-frequency noise. Implement these steps:

- Preprocessing: Apply a Savitzky-Golay filter to smooth the signal while preserving peak shapes.

- Architectural Change: Replace standard convolutional blocks with dilated causal convolutions. When the dilation doubles at each layer (1, 2, 4, ...), the receptive field grows exponentially with depth without any pooling, helping the model capture long-term trends without overfitting to local noise.

- Regularization: Add Gaussian Noise Layers (stddev=0.01) before the first convolutional layer and use L2 weight regularization (lambda=1e-4) in addition to dropout.
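A dilated causal convolution can be written directly in NumPy to make both properties visible: each output depends only on current and past samples, and stacking layers with doubling dilation widens the receptive field exponentially. This is a minimal single-channel sketch with left zero-padding; framework layers add channels, biases, and learned weights. With kernel size 2 and dilations 1, 2, 4, 8, the receptive field is 1 + (1 + 2 + 4 + 8) = 16 samples.

```python
import numpy as np


def causal_dilated_conv1d(x, w, dilation):
    """y[t] = sum_j w[j] * x[t - j*dilation]; left zero-padding keeps it causal."""
    k = len(w)
    pad = (k - 1) * dilation
    xp = np.concatenate([np.zeros(pad), np.asarray(x, dtype=float)])
    y = np.zeros(len(x))
    for j in range(k):
        start = pad - j * dilation
        y += w[j] * xp[start:start + len(x)]
    return y


def receptive_field(kernel_size, dilations):
    return 1 + sum((kernel_size - 1) * d for d in dilations)


dilations = [1, 2, 4, 8]
x = np.zeros(64)
x[0] = 1.0                                   # impulse at t = 0
y = x
for d in dilations:
    y = causal_dilated_conv1d(y, np.ones(2), d)
print(receptive_field(2, dilations), np.count_nonzero(y))   # 16 16
```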

Q5: How do I choose the optimal initial kernel size (1D, 2D, 3D) for a novel, heterogeneous dataset (e.g., spectral, image, and tabular data combined)? A: Follow this empirical protocol:

- Perform a single-data ablation study. Train separate model branches on each data type, sweeping kernel sizes (e.g., 3, 5, 7, 9).

- Measure the feature map entropy for each configuration. The kernel size that yields the highest entropy in the first convolutional layer often best preserves initial information.

- Use the optimal sizes from step 2 as initializers for your integrated model.

- Apply Neural Architecture Search (NAS) with a Bayesian optimizer, using the initial sizes as a prior, to find the final hybrid architecture.
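"Feature map entropy" is not defined above; one common operationalization is the Shannon entropy of the histogram of activation values. The sketch below assumes that definition with 64 histogram bins (a hypothetical choice); it returns 0 bits for a constant map and approaches log2(64) = 6 bits for uniformly spread activations. Apply it to each configuration's first-layer output to compare kernel sizes.

```python
import numpy as np


def feature_map_entropy(fmap, bins=64):
    """Shannon entropy (bits) of the histogram of activation values (assumed definition)."""
    counts, _ = np.histogram(np.asarray(fmap).ravel(), bins=bins)
    p = counts[counts > 0] / counts.sum()
    return float(np.sum(p * np.log2(1.0 / p)))


rng = np.random.default_rng(0)
print(feature_map_entropy(np.ones((32, 32))))        # constant map: 0 bits
print(feature_map_entropy(rng.random((256, 256))))   # near log2(64) = 6 bits
```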

Experimental Protocols

Protocol 1: Evaluating 1D Kernel Efficacy for Molecular Sequence Data Objective: Determine the optimal 1D convolutional kernel size for extracting features from encoded molecular SMILES/InChI strings. Method:

- Data Preparation: Encode SMILES strings using a learned subword tokenizer (e.g., Byte Pair Encoding). Pad sequences to a uniform length (L).

- Model Architecture: Construct a network with one convolutional layer (variable kernel size k, channels=256, stride=1) followed by global max pooling and a linear classifier.

- Experimental Sweep: Train identical models varying only k ∈ {3, 5, 7, 11, 15}. Use a fixed random seed.

- Metrics: Record validation accuracy, training time per epoch, and compute Receptive Field Coverage (RFC) as (k / L) * 100%.

- Analysis: Plot kernel size vs. validation accuracy. The point before the plateau of diminishing returns is often optimal.

Protocol 2: Adapting 2D Image Kernels for Microscopy Images Objective: Fine-tune a pre-trained 2D CNN for biological image segmentation. Method:

- Backbone Modification: Load a pre-trained model (e.g., U-Net with VGG-19 encoder). Replace the final classifier with a segmentation head (1x1 conv → upsampling path).

- Training Schedule:

- Phase 1 (Feature Extraction): Freeze encoder weights. Train only the decoder and segmentation head for 10 epochs (LR=1e-3).

- Phase 2 (Fine-tuning): Unfreeze the last three encoder blocks. Train the entire model for 50 epochs (LR=1e-5) with early stopping.

- Data Augmentation: Apply microscopy-specific augmentations: elastic deformations, simulated fluorescence bleaching, and multi-channel noise injection.

- Validation: Use the Intersection-over-Union (IoU) metric on a held-out test set.
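The three augmentations can be sketched with SciPy. Assumptions: elastic deformation uses a Gaussian-smoothed random displacement field (parameters `alpha` and `sigma` are illustrative), "bleaching" is simplified to a random global intensity loss, and noise is added independently per channel on the last axis. For segmentation, the mask must receive the same displacement field, so the same seed is reused with nearest-neighbour interpolation (`order=0`) to keep labels binary. Function names are hypothetical.

```python
import numpy as np
from scipy import ndimage


def elastic_deform(img, alpha=30.0, sigma=4.0, seed=None, order=1):
    """Warp a 2D image with a smooth random displacement field."""
    rng = np.random.default_rng(seed)
    dy = ndimage.gaussian_filter(rng.uniform(-1, 1, img.shape), sigma) * alpha
    dx = ndimage.gaussian_filter(rng.uniform(-1, 1, img.shape), sigma) * alpha
    yy, xx = np.meshgrid(np.arange(img.shape[0]), np.arange(img.shape[1]), indexing="ij")
    coords = np.array([yy + dy, xx + dx])
    return ndimage.map_coordinates(img, coords, order=order, mode="reflect")


def simulate_bleaching(img, rng, min_factor=0.6):
    """Simplified bleaching: random global intensity loss."""
    return img * rng.uniform(min_factor, 1.0)


def multichannel_noise(img, rng, sigma=0.02):
    """Independent Gaussian noise per channel (channels on the last axis)."""
    return img + rng.normal(0.0, sigma, img.shape)


rng = np.random.default_rng(0)
image = rng.random((64, 64))
mask = (image > 0.5).astype(float)
aug_img = elastic_deform(image, seed=1)             # same seed ->
aug_mask = elastic_deform(mask, seed=1, order=0)    # same displacement field
aug_img = simulate_bleaching(aug_img, rng)
stack = multichannel_noise(np.stack([aug_img, aug_img], axis=-1), rng)
```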

Protocol 3: Optimizing 3D Kernels for Volumetric Protein-Ligand Data Objective: Identify memory-efficient 3D convolutional architectures for binding affinity prediction. Method:

- Grid Generation: From protein-ligand PDB files, generate 3D voxel grids (1 Å resolution) centered on the binding site. Channels represent atom type, partial charge, and hydrophobicity.

- Architecture Search: Implement a lightweight 3D CNN with progressive pooling. Test configurations:

- Model A: Two layers (k=5, k=3).

- Model B: Three layers with dilated convolutions (k=3, dilation=2).

- Model C: Depthwise separable 3D convolutions (k=3).

- Memory Profiling: For each model, record peak GPU memory usage during a forward/backward pass with batch size=8.

- Performance Metric: Use Pearson's R between predicted and experimental pIC50 values. Select the model with the best R²/memory usage ratio.
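The grid-generation step can be sketched once atom coordinates and per-atom features have been extracted (parsing PDB files, e.g. with RDKit or Biopython, is assumed to have happened already). This sketch assigns each atom to its nearest voxel at 1 Å resolution inside a cubic box (24 Å is a hypothetical size), uses one channel per feature column, and drops atoms outside the box; many published pipelines instead spread each atom over neighbouring voxels with a Gaussian. `float32` halves the memory of each grid compared with NumPy's default `float64`.

```python
import numpy as np


def voxelize(coords, features, center, box_size=24.0, resolution=1.0):
    """Nearest-voxel grid of shape (n_channels, n, n, n).
    coords: (n_atoms, 3) in Å; features: (n_atoms, n_channels)."""
    n = int(round(box_size / resolution))
    grid = np.zeros((features.shape[1], n, n, n), dtype=np.float32)
    idx = np.floor((coords - center + box_size / 2) / resolution).astype(int)
    inside = np.all((idx >= 0) & (idx < n), axis=1)       # drop atoms outside the box
    for (i, j, k), f in zip(idx[inside], features[inside]):
        grid[:, i, j, k] += f
    return grid


# Hypothetical atoms; feature columns: is_carbon, partial charge, hydrophobicity.
center = np.zeros(3)
coords = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [50.0, 0.0, 0.0]])
features = np.array([[1.0, -0.2, 0.5], [0.0, 0.3, -0.1], [1.0, 0.0, 0.0]])
grid = voxelize(coords, features, center)
print(grid.shape, grid.nbytes / 1e3, "kB")
```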

Data Presentation

Table 1: Performance of 1D Kernel Sizes on Molecular Toxicity Prediction (Tox21 Dataset)

| Kernel Size | Validation Accuracy (%) | RFC (%) | Params (M) | Training Time/Epoch (s) |
|---|---|---|---|---|
| 3 | 78.2 | 2.1 | 1.05 | 42 |
| 5 | 81.7 | 3.5 | 1.07 | 43 |
| 7 | 83.4 | 4.9 | 1.09 | 45 |
| 11 | 82.9 | 7.7 | 1.13 | 48 |
| 15 | 81.1 | 10.5 | 1.17 | 52 |

Table 2: 2D Kernel Fine-tuning Results on Cell Nucleus Segmentation (BBBC039 Dataset)

| Backbone | Fine-tuning Strategy | IoU | Δ IoU (vs. Frozen) | GPU Hours |
|---|---|---|---|---|
| ResNet-34 | Frozen Encoder | 0.721 | Baseline | 1.5 |
| ResNet-34 | Last 2 Blocks Unfrozen | 0.815 | +0.094 | 3.8 |
| VGG-19 | Frozen Encoder | 0.698 | -0.023 | 2.1 |
| VGG-19 | Last 3 Blocks Unfrozen | 0.791 | +0.093 | 5.2 |

Table 3: 3D Kernel Architecture Benchmark on PDBbind Core Set

| Model Architecture | Pearson's R (pIC50) | RMSE | Peak GPU Memory (GB) | Inference Time (ms) |
|---|---|---|---|---|
| Standard Conv (k=5) | 0.63 | 1.42 | 4.8 | 22 |
| Dilated Conv | 0.65 | 1.38 | 2.7 | 35 |
| Separable Conv | 0.61 | 1.45 | 1.9 | 18 |


The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Supplier Example (Catalog #) | Function in Kernel Optimization Experiments |
|---|---|---|
| Tox21 Dataset | NIH/NCATS (Public) | Standardized benchmark for 1D molecular kernel testing across 12 toxicity assays. |
| BBBC039 (nuclei segmentation) | Broad Bioimage Benchmark Collection | Curated high-content microscopy images for 2D kernel validation and transfer learning. |
| PDBbind Core Set | PDBbind Consortium (v2020) | Curated protein-ligand complexes with binding affinities for 3D kernel training. |
| PyTorch Geometric | PyTorch Ecosystem | Library for easy implementation of graph and 3D convolutional kernels on molecular data. |
| MONAI (Medical Open Network AI) | Project MONAI | Domain-specific framework for 3D biomedical data augmentation and kernel-based networks. |
| Weights & Biases (W&B) | W&B Inc. | Experiment tracking for hyperparameter sweeps over kernel size, dilation, and depth. |
| NVIDIA Apex (AMP) | NVIDIA | Enables mixed-precision training, crucial for large 3D kernel models to reduce memory. |
| RDKit | Open-Source | Cheminformatics toolkit for SMILES tokenization and molecular feature grid generation. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After implementing kernel-aware bias correction, my Conv-LSTM model's validation loss diverges instead of converging. What could be the cause?

A: This is often caused by an incorrect scaling factor in the bias correction term, which makes the updates overshoot. Verify that the correction term C_k for each filter kernel size k is calculated as per Equation 7 in the thesis: C_k = (1 - β^k) / (1 - β), where β is the momentum of your optimizer. For adaptive optimizers like Adam, ensure you are using the corrected second moment estimate. A common mistake is applying the correction after the optimizer step instead of before it.
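A minimal sketch of the check described above, assuming gradients are held as plain Python lists keyed by parameter name (a hypothetical layout; in PyTorch the same division would be applied to each bias parameter's `.grad` before calling `optimizer.step()`). The function names are illustrative; the formula is the one stated in the answer, C_k = (1 - β^k) / (1 - β).

```python
def correction_factor(beta, k):
    """C_k = (1 - beta**k) / (1 - beta) for kernel size k and momentum beta."""
    if not 0.0 <= beta < 1.0:
        raise ValueError("momentum beta must lie in [0, 1)")
    return (1.0 - beta ** k) / (1.0 - beta)


def scale_bias_grads(bias_grads, kernel_sizes, beta):
    """Divide each bias gradient by its C_k; call this BEFORE the optimizer step.

    bias_grads:   dict parameter name -> list of gradient values (hypothetical)
    kernel_sizes: dict parameter name -> kernel size k of the owning conv layer
    """
    scaled = {}
    for name, grads in bias_grads.items():
        c_k = correction_factor(beta, kernel_sizes[name])
        scaled[name] = [g / c_k for g in grads]
    return scaled


print(round(correction_factor(0.9, 3), 4))  # 2.71
print(round(correction_factor(0.9, 5), 4))  # 4.0951
```

Because C_k grows with k toward 1 / (1 - β), larger kernels receive proportionally smaller bias updates; for β = 0.9 the divisor approaches but never exceeds 10.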

Q2: How do I choose the initial bias correction window when working with extremely long pharmacological time-series data? A: The initial window should be aligned with the dominant frequency of the biological signal, not the full sequence length. For cellular response data, start with a window covering 3-5 expected oscillation cycles. See Table 1 for empirical guidelines based on sampling rate. The correction can be applied recursively as the sequence unrolls.
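The 3-5 cycle rule converts to time steps as cycles × oscillation period × sampling rate. The sketch below assumes you already know the dominant oscillation period from your own assay; the helper names and the 1 s period in the example are illustrative assumptions, not values from the source.

```python
import math


def initial_window_steps(sampling_rate_hz, period_s, cycles):
    """Time steps spanning `cycles` oscillations of the dominant signal."""
    return math.ceil(cycles * period_s * sampling_rate_hz)


def window_range(sampling_rate_hz, period_s):
    """(low, high) window in time steps for the 3-5 cycle guideline."""
    return (initial_window_steps(sampling_rate_hz, period_s, 3),
            initial_window_steps(sampling_rate_hz, period_s, 5))


# Illustrative only: a 10 Hz recording with an assumed 1 s metabolic period.
print(window_range(10, 1.0))  # (30, 50)
```

With these assumed inputs the result coincides with the 30-50 step range listed for metabolic oscillation in Table 1.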

Q3: My hybrid model shows improved bias metrics but a significant drop in precision for rare event detection (e.g., sudden cytotoxic response). How can I mitigate this?

A: This indicates that the bias correction is smoothing out high-frequency, low-probability signals. Implement a gated correction mechanism: apply the full kernel-aware correction only to biases associated with convolutional filters for spatial features. For the LSTM cells governing temporal dynamics, use an attenuated correction factor (e.g., multiply C_k by 0.1-0.3) to preserve sensitivity to abrupt temporal shifts.
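One way to express the gated mechanism is to route each bias parameter by the module it belongs to. This sketch assumes parameter names follow PyTorch's convention, where `nn.LSTM` biases appear as `bias_ih_l0` and `bias_hh_l0` under the module's attribute name; the module name `lstm` and the default scale of 0.2 are assumptions.

```python
def gated_factor(param_name, c_k, lstm_scale=0.2):
    """Correction factor for one bias parameter under the gated scheme.

    Convolutional (spatial) biases receive the full C_k. LSTM (temporal) biases
    receive C_k multiplied by lstm_scale, kept in the 0.1-0.3 range above.
    Routing on the substring "lstm" is a hypothetical naming convention.
    """
    if not 0.1 <= lstm_scale <= 0.3:
        raise ValueError("lstm_scale should stay within 0.1-0.3")
    if "lstm" in param_name.lower():
        return c_k * lstm_scale
    return c_k


print(gated_factor("encoder.conv1.bias", 2.71))         # 2.71
print(round(gated_factor("lstm.bias_ih_l0", 2.71), 3))  # 0.542
```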

Q4: During transfer learning from a general image model to a specific histopathology dataset, should I re-calculate the bias correction from scratch? A: No. You must freeze the convolutional base's biases and their pre-calculated correction factors. Only recalculate bias correction for the newly initialized layers of the LSTM and any task-specific dense layers. Re-calculating for the entire network will reintroduce the original initialization bias that the pre-trained model has already moved beyond.
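The freeze-versus-recalculate split can be decided from parameter names alone. In this sketch the names would come from `model.named_parameters()` in PyTorch; the prefixes `lstm.` and `head.` for the newly initialized layers are hypothetical and should be replaced with your model's own module names.

```python
def split_bias_params(param_names, new_layer_prefixes):
    """Partition bias parameters for transfer learning.

    Returns (frozen, recalc): biases of the pre-trained convolutional base keep
    their stored correction factors; biases of newly initialized layers (LSTM,
    task-specific dense head) get their correction recalculated.
    """
    frozen, recalc = [], []
    for name in param_names:
        leaf = name.rsplit(".", 1)[-1]
        if not leaf.startswith("bias"):
            continue  # only bias parameters carry a correction factor
        if name.startswith(tuple(new_layer_prefixes)):
            recalc.append(name)
        else:
            frozen.append(name)
    return frozen, recalc


names = ["backbone.conv1.weight", "backbone.conv1.bias",
         "lstm.weight_ih_l0", "lstm.bias_ih_l0", "head.bias"]
print(split_bias_params(names, ["lstm.", "head."]))
# (['backbone.conv1.bias'], ['lstm.bias_ih_l0', 'head.bias'])
```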

Q5: The training becomes computationally prohibitive after adding per-kernel bias tracking. Any optimization tips? A: Instead of tracking a unique correction factor for every single filter kernel, group kernels by size and layer depth, for example by letting 3x3 kernels from convolutional layers at similar receptive-field depths (early vs. late stage) share one factor. In Table 2, cross-layer grouping by receptive-field equivalence reduces the training-time overhead from 15.2% to 5.5% (about a 64% reduction) while changing the bias metric by only +0.18%; grouping all kernels within a layer cuts the overhead further, to 4.3% (about 72%), at a cost of +0.51%.
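A grouping registry of the kind described can be a plain dictionary keyed by kernel size and depth stage. The two-stage early/late split and the `depth_boundary` parameter are simplifying assumptions for illustration, and the layer list is hypothetical.

```python
from collections import defaultdict


def group_kernels(layers, depth_boundary):
    """Map (kernel size, depth stage) -> layer names that share one C_k.

    layers: iterable of (layer_name, kernel_size, depth_index) tuples
    depth_boundary: first depth index treated as "late" (an assumption)
    """
    registry = defaultdict(list)
    for name, k, depth in layers:
        stage = "early" if depth < depth_boundary else "late"
        registry[(k, stage)].append(name)
    return dict(registry)


layers = [("conv1", 3, 0), ("conv2", 3, 1), ("conv3", 5, 2), ("conv4", 3, 5)]
groups = group_kernels(layers, depth_boundary=3)
print(len(groups))  # 3 correction factors to track instead of one per filter
```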

Data Presentation

Table 1: Recommended Initial Bias Correction Windows for Pharmacological Time-Series

| Sampling Rate (Hz) | Dominant Signal Type (e.g., Calcium Flux) | Initial Window Size (Time Steps) | Kernel Size Group (for Conv. Layers) |
|---|---|---|---|
| 1 | Slow Receptor Internalization | 5-10 | 3x3, 5x5 |
| 10 | Metabolic Oscillation | 30-50 | 3x3, 7x7 |
| 100 | Neural Spike Train (in tissue models) | 100-200 | 1x1, 3x3 |

Table 2: Computational Cost vs. Bias Metric Impact of Kernel Grouping Strategies

| Grouping Strategy | Avg. Training Time Overhead | Δ Bias Metric (MSE) | Recommended Use Case |
|---|---|---|---|
| Per-Filter (Baseline) | 15.2% | 0.0% | Small-scale models (< 1M params) |
| Per-Layer, by Kernel Size | 7.1% | +0.12% | Standard Hybrid Models |
| Per-Layer, All Kernels | 4.3% | +0.51% | Large-scale 3D Conv-LSTM for imaging |
| Cross-Layer, by Receptive Field Equivalence | 5.5% | +0.18% | Deep Transfer Learning Models |

Experimental Protocols

Protocol: Quantifying Kernel-Specific Bias in Pre-Trained Hybrid Models

- Isolate Bias Parameters: From a saved checkpoint, extract all bias vectors b from convolutional layers and LSTM cells.

- Forward Pass with Zeroed Input: Run a forward pass with a batch of zero-valued tensors matching the model's expected input shape. Record the activation a at each layer.

- Calculate Expected Bias Drift: For each filter kernel size k (e.g., 3x3, 5x5), compute the mean activation μ_k and variance σ_k² across all filters of that size and over the batch dimension.

- Compute Correction Factor: For each parameter group in your optimizer (grouped by kernel size according to the strategy chosen from Table 2), compute C_k = (1 - β^t) / (1 - β), where t is the training iteration at the checkpoint and β is the optimizer's momentum. For Adam's β2, use C_k^{v} = (1 - β2^t).

- Apply Correction & Re-evaluate: Update the model's bias parameters as b_corrected = b / C_k. Reload the corrected model and repeat the zeroed-input forward pass. Compare the new μ_k and σ_k² to the originals. A successful correction brings μ_k closer to zero and reduces σ_k² by more than 60%.
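The arithmetic in the last three steps can be sketched with the standard library, assuming the zero-input activations have already been exported as flat lists per kernel size (the export format and function names are hypothetical). Population variance is used for σ_k², which is an assumption since the protocol does not specify it.

```python
from statistics import fmean, pvariance


def checkpoint_factor(beta, t):
    """C_k = (1 - beta**t) / (1 - beta) at training iteration t (this protocol)."""
    return (1.0 - beta ** t) / (1.0 - beta)


def adam_v_factor(beta2, t):
    """C_k^v = 1 - beta2**t for Adam's second-moment estimate."""
    return 1.0 - beta2 ** t


def drift_stats(activations_by_k):
    """(mu_k, sigma_k^2) per kernel size from zero-input activations.

    activations_by_k: dict k -> flat list of activations pooled over every
    filter of that size and over the batch dimension.
    """
    return {k: (fmean(a), pvariance(a)) for k, a in activations_by_k.items()}


def correct_bias(bias, c_k):
    """b_corrected = b / C_k, element-wise."""
    return [b / c_k for b in bias]


def variance_reduced_enough(var_before, var_after, threshold=0.60):
    """True when sigma_k^2 dropped by more than the protocol's 60%."""
    return (var_before - var_after) / var_before > threshold
```

For example, `drift_stats({3: [1.0, 3.0]})` returns `{3: (2.0, 1.0)}`.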

Protocol: Integrating Bias Correction into Active Training Loop

- Initialization: At t = 0, define an empty register R to store C_k for each kernel-size parameter group.

- Pre-Optimizer Hook: Before each optimizer step t, for each parameter group g with kernel size k:
  - Compute C_k^t as defined in the answer to Q1.
  - Store C_k^t in register R[g].
  - Divide the gradients of the bias parameters in group g by R[g], i.e. ∇b ← ∇b / R[g].

- Optimizer Step: Proceed with the standard optimizer step (e.g., optimizer.step()).

- Logging: Log max(R) and min(R) each epoch to monitor correction magnitude.
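The hook can be sketched as a function that fills the register and rescales gradients in place. `factor_fn` is a parameter so that either form of C_k given in this section can be plugged in; the example passes the form from the answer to Q1. The data layout and function names are hypothetical.

```python
def pre_optimizer_hook(t, groups, register, factor_fn):
    """Update register R and rescale bias gradients before optimizer step t.

    groups:    dict group name g -> {"k": kernel size, "bias_grads": [floats]}
    register:  dict R, g -> current C_k^t (modified in place)
    factor_fn: callable (k, t) -> C_k^t
    """
    for g, group in groups.items():
        register[g] = factor_fn(group["k"], t)
        group["bias_grads"] = [v / register[g] for v in group["bias_grads"]]
    # A real training loop would call optimizer.step() right after this hook.


def log_register(register):
    """Return (max(R), min(R)) for per-epoch logging."""
    return max(register.values()), min(register.values())


beta = 0.9
R = {}
groups = {"k3": {"k": 3, "bias_grads": [2.71]}, "k5": {"k": 5, "bias_grads": [0.5]}}
pre_optimizer_hook(1, groups, R, lambda k, t: (1 - beta ** k) / (1 - beta))
print(log_register(R))
```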

Mandatory Visualization

Diagram 1: Kernel-Aware Bias Correction Workflow in Conv-LSTM Training

Diagram 2: Bias Signal Flow in a Hybrid Conv-LSTM Unit with Correction

The Scientist's Toolkit

Table: Key Research Reagent Solutions for Filter Kernel & Bias Experiments

| Item Name / Solution | Function in Experiment |
|---|---|
| Custom PyTorch/TF Gradient Hook | Intercepts bias gradients during backpropagation for real-time application of the kernel-aware correction factor C_k. |
| Kernel Size Grouping Registry (Software) | A lightweight in-memory database (e.g., Python dict) to map each model parameter to its filter kernel size k for efficient group-wise operations. |
| Zero-Input Activation Profiler | Script to run forward passes with null inputs, quantifying the inherent bias drift (μ_k, σ_k²) for each layer and kernel size group. |
| Optimizer State Checkpointing Tool | Saves not just model weights but also optimizer momentum states (β^t) per parameter group, essential for resuming training with consistent correction. |
| Bias Metric Dashboard (e.g., TensorBoard Plugin) | Visualizes the mean and variance of biases across different kernel-size groups over training time, highlighting correction impact. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During training of our multi-modal DDI predictor, we observe a significant performance disparity for drug pairs involving under-represented demographic groups. What is the first kernel-related parameter to investigate? A: The primary suspect is the filter kernel size in your initial convolutional layers. A kernel size that is too large may fail to capture localized, group-specific pharmacological patterns from the molecular graph or protein-binding pocket data, causing these signals to be averaged out. We recommend starting with an Adaptive Kernel Grid Search protocol (see below).

Q2: Our model uses SMILES strings and patient EHR data. After implementing a fairness-constrained loss, accuracy drops sharply. Is this expected? A: A sharp accuracy drop typically indicates a kernel optimization mismatch. The fairness penalty may be forcing the model to re-weight features it cannot resolve due to inappropriate receptive fields. You must co-optimize the kernel sizes with the fairness hyperparameter (λ). See the Co-optimization Workflow diagram.

Q3: How do we quantify "bias patterns" specifically for kernel size selection? A: Bias must be quantified per subpopulation. For each candidate kernel configuration, calculate the Subgroup Performance Discrepancy (SPD), defined here as the majority-group AUROC minus the AUROC of each subgroup, on a validation set stratified by demographic and pharmacological attributes.

Table 1: Subgroup Performance Discrepancy (SPD) for Kernel Size Candidates

| Kernel Size (SMILES/EHR) | AUROC (Majority Group) | AUROC (Minority Group A) | SPD (ΔAUROC) | Recommended for Fairness-Optimization? |
|---|---|---|---|---|
| 3 / 5 | 0.89 | 0.81 | 0.08 | No - High disparity |
| 5 / 5 | 0.87 | 0.79 | 0.08 | No - High disparity |
| 7 / 3 | 0.86 | 0.84 | 0.02 | Yes - Low disparity |
| 3 / 7 | 0.88 | 0.80 | 0.08 | No - High disparity |
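The SPD column can be recomputed directly from the two AUROC columns. The 0.05 threshold separating low from high disparity is an assumption chosen to reproduce the table's recommendations, not a value given in the source; with several subgroups you would compute one SPD per subgroup.

```python
def spd(auroc_majority, auroc_subgroup):
    """Subgroup Performance Discrepancy: majority AUROC minus subgroup AUROC."""
    return auroc_majority - auroc_subgroup


# (SMILES kernel, EHR kernel) -> (majority AUROC, minority group A AUROC), Table 1.
table1 = {(3, 5): (0.89, 0.81), (5, 5): (0.87, 0.79),
          (7, 3): (0.86, 0.84), (3, 7): (0.88, 0.80)}
SPD_THRESHOLD = 0.05  # hypothetical cut-off; not specified in the source

for kernels, (majority, minority) in table1.items():
    gap = spd(majority, minority)
    verdict = "Yes" if gap < SPD_THRESHOLD else "No"
    print(kernels, round(gap, 2), verdict)
```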

Q4: What is the detailed protocol for the Adaptive Kernel Grid Search? A:

- Stratified Data Partition: Split your multi-modal dataset (e.g., molecular graphs, phenotypic vectors) into training, validation, and test sets, ensuring representative proportions of all demographic subgroups of interest.

- Baseline Model Setup: Initialize your DDI prediction architecture (e.g., Graph CNN for drugs, 1D CNN for EHR).

- Kernel Configuration Matrix: Define a grid of kernel sizes for each modality's first convolutional layer (e.g., molecular kernel sizes: [3, 5, 7]; phenotypic kernel sizes: [3, 5]).

- Cross-Validation & Metric Calculation: For each kernel pair, train the model and evaluate on the stratified validation set. Record overall AUROC and calculate SPD for each subgroup.

- Pareto Frontier Selection: Identify kernel configurations that lie on the Pareto frontier of maximizing overall AUROC while minimizing SPD.

- Final Evaluation: Retrain the best candidate on the full training set and report final metrics on the held-out test set, including disaggregated performance.
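The Pareto frontier step can be sketched as a dominance check over (overall AUROC, SPD) pairs: a configuration is kept unless another one is at least as good on both criteria and strictly better on one. The AUROC/SPD values below are hypothetical placeholders, since Table 1 does not report overall AUROC.

```python
def pareto_front(configs):
    """Configurations not dominated on (higher overall AUROC, lower SPD).

    configs: dict kernel pair -> (overall_auroc, spd)
    """
    front = []
    for cfg, (auc, gap) in configs.items():
        dominated = any(
            a2 >= auc and g2 <= gap and (a2 > auc or g2 < gap)
            for other, (a2, g2) in configs.items()
            if other != cfg
        )
        if not dominated:
            front.append(cfg)
    return front


# Hypothetical (overall AUROC, SPD) results, one per kernel pair.
candidates = {(3, 5): (0.88, 0.08), (7, 3): (0.86, 0.02),
              (5, 5): (0.85, 0.09), (5, 3): (0.87, 0.05)}
print(pareto_front(candidates))  # [(3, 5), (7, 3), (5, 3)]
```

The final choice among frontier points still depends on how much overall AUROC you are willing to trade for a lower SPD.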

Q5: The kernel optimization improved fairness metrics but hurt overall performance on the test set. What went wrong? A: This suggests overfitting to the fairness metric on your validation stratification. Your test set may have a different covariance between demographic and biomolecular features. Implement a more robust Kernel-Specific Regularization protocol: for larger kernels, increase dropout; for smaller kernels, apply stronger L2 regularization to prevent overfitting to spurious subgroup correlations.

Experimental Protocols

Protocol 1: Co-optimization of Kernel Size and Fairness Constraint

- Input: Multi-modal dataset D, a set K of candidate kernel-size pairs (k1, k2), and a set Λ of fairness weights.

- For each kernel pair (k1, k2) in K:
  - For each λ in Λ:
    - Train the model with loss L = Lpred + λ * Lfairness (e.g., the demographic parity gap).
    - Compute performance metrics M_(k1,k2),λ on the stratified validation set.

- Output: Matrix M of metrics. Select the (k1, k2, λ) that maximizes the performance metric while satisfying the fairness constraint.
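The inner loop's loss and the final selection step can be sketched as follows. Soft predicted probabilities are used for the parity gap so the penalty would stay differentiable in a real framework; the function names, the result layout, and the `max_gap` constraint value are assumptions for illustration.

```python
def demographic_parity_gap(probs, groups):
    """Largest difference in mean predicted probability between subgroups."""
    rates = {}
    for g in set(groups):
        member_probs = [p for p, gg in zip(probs, groups) if gg == g]
        rates[g] = sum(member_probs) / len(member_probs)
    return max(rates.values()) - min(rates.values())


def total_loss(pred_loss, probs, groups, lam):
    """L = Lpred + lambda * Lfairness, with Lfairness = demographic parity gap."""
    return pred_loss + lam * demographic_parity_gap(probs, groups)


def select_config(results, max_gap):
    """Pick the (k1, k2, lambda) with the best metric among feasible runs.

    results: dict (k1, k2, lam) -> (validation_metric, fairness_gap)
    """
    feasible = {key: m for key, (m, gap) in results.items() if gap <= max_gap}
    return max(feasible, key=feasible.get) if feasible else None
```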

Protocol 2: Bias Pattern Attribution via Gradient-based Kernel Analysis

- Train your final fairness-optimized DDI predictor.

- For a given input sample, compute the gradient of the predicted DDI probability with respect to the input features of each modality.

- Aggregate these gradient magnitudes for each filter in the first convolutional layer.

- Correlate high-magnitude filters with specific input features (e.g., specific molecular substructures or ICD-10 codes) across subgroups.

- Interpretation: Kernels associated with filters that activate disproportionately on majority-group features may require further resizing or retraining.
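Steps 3-5 reduce to averaging gradient magnitudes per filter within each subgroup and comparing the averages. This sketch assumes the per-sample, per-filter gradient magnitudes have already been computed (for example with your framework's autograd); the 2.0 ratio threshold and the group labels are hypothetical.

```python
def filter_attribution(grad_mags, groups):
    """Mean |gradient| per first-layer filter, computed separately per subgroup.

    grad_mags: list of per-sample lists, one gradient magnitude per filter
    groups:    subgroup label for each sample
    """
    sums, counts = {}, {}
    for mags, g in zip(grad_mags, groups):
        acc = sums.setdefault(g, [0.0] * len(mags))
        for i, m in enumerate(mags):
            acc[i] += abs(m)
        counts[g] = counts.get(g, 0) + 1
    return {g: [s / counts[g] for s in acc] for g, acc in sums.items()}


def disproportionate_filters(attr, majority, minority, ratio=2.0):
    """Filter indices whose majority-group attribution exceeds the minority-group
    attribution by more than `ratio` (a hypothetical threshold)."""
    return [i for i, (a, b) in enumerate(zip(attr[majority], attr[minority]))
            if a > ratio * max(b, 1e-12)]


attr = filter_attribution([[1.0, 0.1], [3.0, 0.3], [0.5, 0.2], [0.5, 0.4]],
                          ["maj", "maj", "min", "min"])
print(disproportionate_filters(attr, "maj", "min"))  # [0]
```

Filters flagged this way are the candidates for the resizing or retraining described in the interpretation step.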

Mandatory Visualizations

Title: Co-optimization Workflow for Kernel Size & Fairness

Title: Gradient-based Kernel Analysis for Bias Attribution

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Fairness-Optimized DDI Research

| Item Name | Function in Experiment | Key Consideration for Fairness |
|---|---|---|
| Stratified DDI Benchmark Dataset (e.g., TWOSIDES+Demographics) | Provides ground truth interactions with linked demographic data for bias quantification. | Must have sufficient representation across subgroups; check for linkage quality. |
| Graph Convolutional Network (GCN) Library (e.g., PyTorch Geometric) | Implements molecular graph convolution; allows flexible kernel/receptive field definition. | Choose libraries that let you modify filter aggregation functions per node neighborhood. |
| Fairness Metric Library (e.g., Fairlearn, AIF360) | Provides standardized group-fairness metrics (e.g., demographic parity difference, equalized odds difference) and per-group metric breakdowns from which SPD can be computed. | Ensure compatibility with your deep learning framework and data loaders. |